On Nonparametric Guidance for Learning Autoencoder Representations

Unsupervised discovery of latent representations, in addition to being useful for density modeling, visualisation and exploratory data analysis, is also increasingly important for learning features relevant to discriminative tasks. Autoencoders, in p…

Authors: Jasper Snoek, Ryan Prescott Adams, Hugo Larochelle

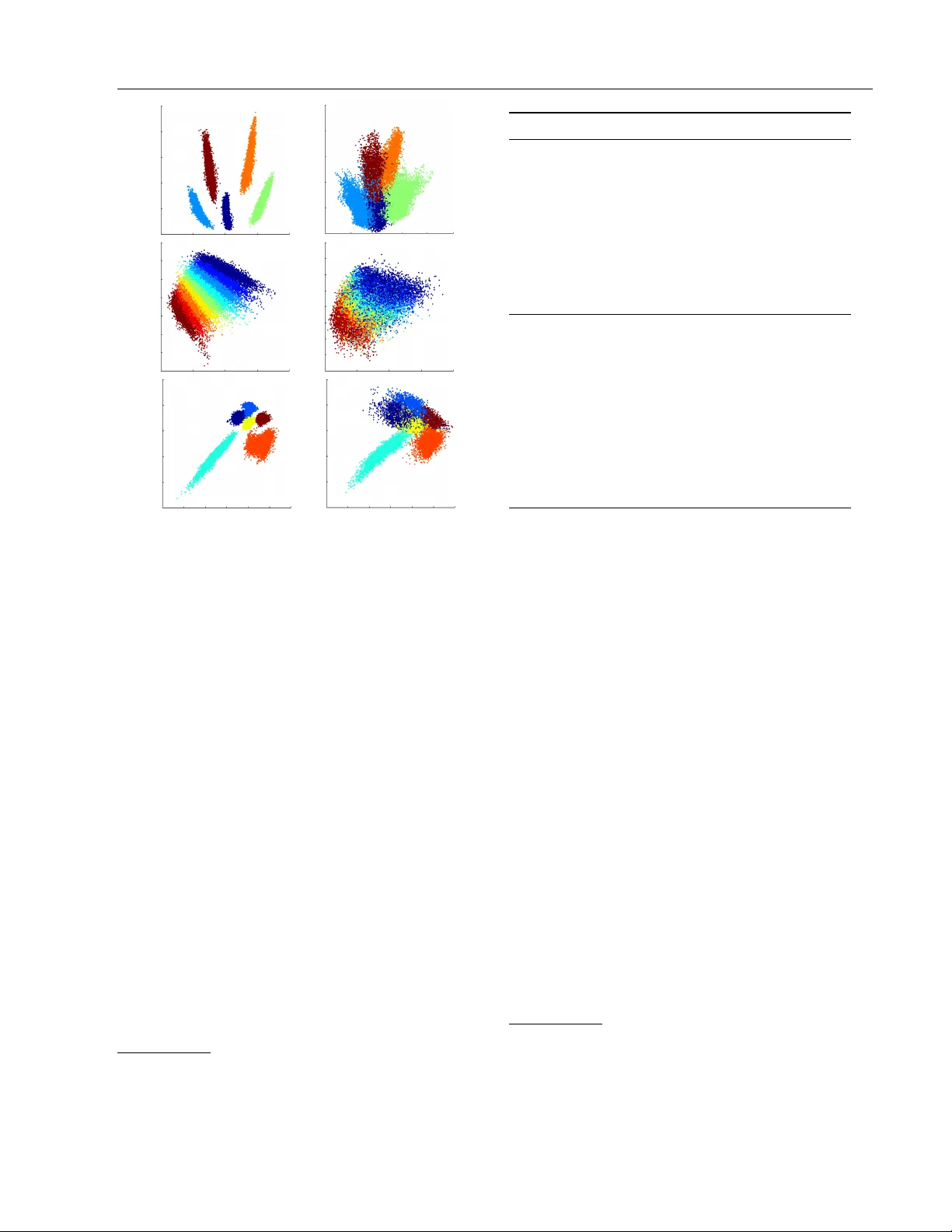

On Nonparametric Guidance for Learning Auto enco der Represen tations Jasp er Sno ek Ry an Prescott Adams Hugo Laro c helle Univ ersity of T oron to Harv ard Univ ersity Univ ersity of Sherbro ok e Abstract Unsup ervised discov ery of laten t representa- tions, in addition to being useful for den- sit y mo deling, visualisation and exploratory data analysis, is also increasingly imp ortan t for learning features relev ant to discrimina- tiv e tasks. Auto encoders, in particular, ha ve pro ven to b e an effective w ay to learn la- ten t co des that reflect meaningful v ariations in data. A contin uing chall enge, how ever, is guiding an auto encoder tow ard representa- tions that are useful for particular tasks. A complemen tary c hallenge is to find codes that are in v arian t to irrelev ant transformations of the data. The most common wa y of intro- ducing suc h problem-sp ecific guidance in au- to encoders has been through the incorp ora- tion of a parametric component that ties the laten t representation to the lab el informa- tion. In this work, we argue that a prefer- able approac h relies instead on a nonpara- metric guidance mechanism. Conceptually , it ensures that there exists a function that can predict the lab el information, without ex- plicitly instan tiating that function. The su- p eriorit y of this guidance mechanism is con- firmed on t wo datasets. In particular, this approac h is able to incorp orate in v ariance in- formation (lighting, elev ation, etc.) from the small NORB ob ject recognition dataset and yields state-of-the-art p erformance for a sin- gle lay er, non-conv olutional netw ork. 1 In tro duction The inference of constrained laten t representations pla ys a key role in machine learning and probabilis- tic mo deling. Broadly , the idea is that discov ering a c ompr esse d represen tation of the data will correspond to determining what is imp ortan t and unimp ortan t ab out the data. One can also view constrained la- ten t represen tations as pro viding fe atur es that can b e used to solv e other machine learning tasks. Of partic- ular importance are metho ds for latent representation that can efficiently construct co des for out-of-sample data, enabling rapid feature extraction. Neural net- w orks, for example, provide such feed forward feature extractors, and auto encoders, sp ecifically , ha ve found use in domains such as image classification (Vincent et al., 2008), sp eech recognition (Deng et al., 2010) and Ba yesian nonparametric mo dels (Adams et al., 2010). While the representations learned with auto enco ders are often useful for discriminative tasks, they require that the salien t v ariations in the data distribution b e relev ant for lab eling. This is not necessarily alw ays the case; as irrelev an t factors of v ariation gro w in im- p ortance and increasingly dominate the input distri- bution, the representation extracted b y auto enco ders tends to become less useful (Laro c helle et al., 2007). T o address this issue, Bengio et al. (2007) in tro duced mild sup ervised guidance into the auto encoder train- ing ob jectiv e, by adding connections from the hid- den lay er to output units predicting label information (those connections are equiv alent to the parameters of a logistic regression classifier). The same approach w as follow ed by Ranzato and Szummer (2008), to learn compact representations of do cumen ts. One downside of this approach is that it p oten tially complicates the task of learning the autoenco der rep- resen tation. Indeed, it now tries to solv e tw o addi- tional problems: find a hidden representation from whic h the lab el information can b e predicted and trac k the parametric v alue of that predictor (i.e. the logis- tic regression w eigh ts) throughout learning. How ever, w e are only in terested in the first problem (increased predictabilit y of the lab el). The actual parametric v alue of the lab el predictor is not imp ortan t. Once the auto encoder is trained, the lab el predictor can easily b e found by training a logistic regressor from scratc h, k eeping the hidden la yer fixed. W e might even wan t to use a classifier that is very differen t from the logis- tic regression classifier for which the hidden la yer has b een trained for. On Nonparametric Guidance for Learning Auto enco der Representations In this work, we inv estigate this issue and explore a differen t approach to in tro ducing sup ervised guidance. W e treat the latent space of the auto encoder as also b eing the latent space for a Gaussian pro cess latent v ariable mo del (GPL VM) (Lawrence, 2005). The dis- criminativ e labels are then taken to b elong to the visi- ble space of the GPL VM. The end result is a nonpara- metrically guided auto encoder whic h combines an ef- ficien t feed-forward parametric enco der/decoder with the Bay esian nonparametric inclusion of lab el informa- tion. W e discuss ho w this corresp onds to marginalizing out the parameters of a mapping from the laten t rep- resen tation to the lab el and show exp erimen tally ho w this approach is preferable to explicitly instantiating suc h a parametric mapping. Finally , we sho w how this h ybrid model also pro vides a w ay to guide the autoen- co der’s representation away from irrelev ant features to whic h the encoding should be in v ariant. 2 Unsup ervised Learning of Latent Represen tations W e first review the t wo differen t latent representation learning algorithms on whic h this w ork builds. W e then discuss a relationship b etw een the t wo that pro- vides part of the motiv ation for the proposed nonpara- metrically guided auto enco der. 2.1 Auto encoder Neural Netw orks Our starting point is the auto encoder (Cottrell et al., 1987), whic h is an artificial neural netw ork that is trained to reproduce (or reconstruct) the input at its output. Its computations are decomp osed into tw o parts: the enco der, which computes a latent (often lo wer-dimensional) representation of the input, and the deco der, which reconstructs the original input from its latent represen tation. W e denote the latent space b y X and the visible (data) space b y Y . W e assume that these spaces are real-v alued with dimension J and K , respectively , i.e., X = R J and Y = R K . W e de- note the encoder, then, as a function g ( y ; φ ) : Y → X and the deco der as f ( x ; ψ ) : X → Y . With a set of N examples D = { y ( n ) } N n =1 , y ( n ) ∈ Y , w e join tly optimize the enco der parameters φ and deco der parameters ψ for the least-squares reconstruction cost: φ ? , ψ ? = arg min φ,ψ N X n =1 K X k =1 ( y ( n ) k − f k ( g ( y ( n ) ; φ ); ψ )) 2 , (1) where f k ( · ) is the k th output dimension of f ( · ). Au- to encoders hav e b ecome popular as a mo dule for “greedy pre-training” of deep neural netw orks (Bengio et al., 2007). In particular, the denoising auto encoder of Vincent et al. (2008) is effectiv e at learning ov ercom- plete latent represen tations, i.e., co des of higher di- mensionalit y than the input. Overcomplete represen- tations are though t to b e ideal for discriminativ e tasks, but are difficult to learn due to trivial “identit y” so- lutions to the auto enco der ob jective. This problem is circum ven ted in the denoising autoenco der b y provid- ing as input a corrupted training example, while ev al- uating reconstruction on the noiseless original. With this ob jective, the autoenco der learns to leverage the statistical structure of the inputs to extract a richer laten t represen tation. 2.2 Gaussian Process Latent V ariable Models One alternativ e approach to the learning of laten t rep- resen tations is to consider a low er-dimensional mani- fold that reflects the statistical structure of the data. Suc h manifolds ma y b e difficult to directly define, how- ev er, and so man y approaches to laten t co ding frame the problem indirectly by sp ecifying distributions on functions b etw een the visible and latent spaces. The Gaussian process latent v ariable mo del (GPL VM) of La wrence (2005) takes a Bay esian probabilistic ap- proac h to this and constructs a distribution o ver map- ping functions using a Gaussian pro cess (GP) prior. The GPL VM results in a p o werful nonparametric mo del that analytically marginalizes ov er the infinite n umber of p ossible mappings from the latent to the visible space. While initially used for visualization of high dimensional data, GPL VMs hav e achiev ed state- of-the-art results for a num b er of tasks, including mod- eling h uman motion (W ang et al., 2008), classification (Urtasun and Darrell, 2007) and collaborative filtering (La wrence and Urtasun, 2009). As in the autoenco der, the GPL VM assumes that the N observ ed data D = { y ( n ) } N n =1 are the im- age of a homologous set { x ( n ) } N n =1 , arising from a vector-v alued “deco der” function f ( x ) : X → Y . Analogously to the squared-loss of the previous section, the GPL VM assumes that the observ ed data hav e b een corrupted by zero-mean Gaussian noise: y ( n ) = f ( x ( n ) ) + ε with ε ∼ N (0 , σ 2 I K ). The in- no v ation of the GPL VM is to place a Gaussian pro cess prior on the function f ( x ) and then optimize the la- ten t represen tation { x ( n ) } N n =1 , while marginalizing out the unknown f ( x ). 2.2.1 Gaussian Pro cess Priors The Gaussian pro cess provides a flexible distribution o ver random functions, the prop erties of which can b e specified via a positive definite cov ariance function, without having to c ho ose a particular finite basis. T yp- ically , Gaussian pro cesses are defined in terms of a dis- Jasp er Sno ek, Ryan Prescott Adams, Hugo Laro c helle tribution ov er scalar functions and in keeping with the con ven tion for the GPL VM, we shall assume that K in- dep enden t GPs are used to construct the v ector-v alued function f ( x ). W e denote each of these functions as f k ( x ) : X → R . The GP requires a co v ariance kernel function, which we denote as C ( x , x 0 ) : X × X → R . The defining characteristic of the GP is that for any finite set of N data in X there is a corresp onding N - dimensional Gaussian distribution ov er the function v alues, which in the GPL VM w e take to b e the com- p onen ts of Y . The N × N co v ariance matrix of this distribution is the matrix arising from the application of the co v ariance kernel to the N p oin ts in X . W e de- note an y additional parameters go v erning the b eha vior of the cov ariance function b y θ . Under the comp onent-wise indep endence assumptions of the GPL VM, the Gaussian pro cess prior allows one to analytically in tegrate out the K latent scalar func- tions from X to Y . Allo wing for each of the K Gaus- sian pro cesses to hav e unique hyperparameter θ k , we write the marginal likelihoo d, i.e., the probabilit y of the observed data given the h yp erparameters and the laten t represen tation, as p ( { y ( n ) } N n =1 | { x ( n ) } N n =1 , { θ k } K k =1 , σ 2 ) = K Y k =1 N ( y ( · ) k | 0 , Σ θ k + σ 2 I N ) , (2) where y ( · ) k refers to the v ector [ y (1) k , . . . , y ( N ) k ] and where Σ θ k is the matrix arising from { x n } N n =1 and θ k . In the basic GPL VM, the optimal x n are found by maximizing this marginal lik eliho od. 2.2.2 The Back-Constrained GPL VM Although the GPL VM enforces a smo oth mapping from the latent representation to the observed data, the conv erse is not true: neighbors in observed space need not b e neighbors in the latent represen tation. In man y applications this can b e an undesirable prop- ert y . F urthermore, enco ding nov el datap oin ts in to the laten t space is not straightforw ard in the GPL VM; one m ust optimize the laten t representations of out- of-sample data using, e.g., conjugate gradien t meth- o ds. With these considerations in mind, La wrence and Qui ˜ nonero-Candela (2006) reformulated the GPL VM with the constrain t that the hidden represen tation b e the result of a smo oth map from the observed space. P arameterized b y φ , this “enco der” function is denoted as g ( y ; φ ) : Y → X . The marginal likelihoo d ob jective of this b ack-c onstr aine d GPL VM can now b e formu- lated as finding the optimal φ under: φ ? = arg min φ K X k =1 ln | Σ θ k ,φ + σ 2 I N | + y ( · ) k T ( Σ θ k ,φ + σ 2 I N ) − 1 y ( · ) k , (3) where the k th cov ariance matrix Σ θ k ,φ no w dep ends not only on the k ernel hyperparameters θ k , but also on the parameters of g ( y ; φ ), i.e., [ Σ θ k ,φ ] n,n 0 = C ( g ( y ( n ) ; φ ) , g ( y ( n 0 ) ; φ ) ; θ k ) . (4) La wrence and Qui ˜ nonero-Candela (2006) explored m ultilay er p erceptrons and radial-basis-function net- w orks as possible smooth maps g ( y ; φ ). 2.3 GPL VM as an Infinite Auto encoder The relationship b et w een Gaussian pro cesses and arti- ficial neural net works is well-established. Neal (1996) sho wed that the prior o ver functions implied by man y parametric neural netw orks b ecomes a GP in the limit of an infinite num b er of hidden units, and Williams (1998) subsequen tly deriv ed a co v ariance function that corresp onds to suc h a netw ork under a particular ac- tiv ation function. One ov erlo oked consequence of this relationship is that it also connects auto encoders and the back-constrained Gaussian pro cess latent v ariable mo del. By apply- ing the co v ariance function of Williams (1998) to the GPL VM, the resulting mo del is a density netw ork (MacKa y, 1994) with an infinite num b er of hidden units in the single hidden la yer. Then, using a neu- ral netw ork for the GPL VM back constraints trans- forms the density netw ork into a semiparametric au- to encoder, where the enco der is a parametric neural net work and the deco der is a Gaussian pro cess. Alternativ ely , one can start from the autoenco der and notice that, for a linear deco der with a least-squares reconstruction cost and zero-mean Gaussian prior ov er its w eights, it is p ossible to integrate out the de- co der. Learning then corresp onds to the minimization of Eqn. (3) with a linear k ernel for Eqn. (4). An y non-degenerate p ositiv e definite kernel corresp onds to a deco der of infinite size, and also recov ers the general bac k-constrained GPL VM algorithm. Suc h an infinite auto enco der exhibits some desirable prop erties. The infinite deco der net work ob viates the need to explicitly sp ecify and learn a parametric form for the generally sup erfluous deco der netw ork and rather marginalises ov er all p ossible deco ders. This comes at the cost of having to in v ert as many matrices (the GP co v ariances) as there are input dimensions. Hence, for large input dimensionalit y , one could argue that the fully parametric autoenco der is preferable. On Nonparametric Guidance for Learning Auto enco der Representations 3 Sup ervised Guiding of Latent Represen tations As discussed earlier, when the salien t v ariations in the input are only weakly informative about a particular discriminativ e task, it can b e useful to incorporate la- b el information into unsup ervised learning. Bengio et al. (2007) sho wed, for example, that while a purely sup ervised signal can lead to ov erfitting, mild sup er- vised guidance can b e b eneficial when initializing a discriminativ e deep neural netw ork. F or that reason, Bengio et al. (2007) prop osed that laten t representa- tions also b e trained to predict the lab el information, b y adding a parametric mapping c ( x ; Λ) : X → Z from the latent represen tation’s space X to the lab el space Z and backpropagating error gradien ts from the output to the represen tation. Bengio et al. (2007) in- v estigated the use of a linear logistic regress ion clas- sifier for the parametric mapping. Such “partial su- p ervision” would encourage disco v ery of a laten t rep- resen tation that is useful to a sp ecific (but learned) parametrization of such a linear classifier. A similar approac h w as used b y Ranzato and Szummer (2008) to learn compact represen tations of do cuments. There are tw o disadv antages to this strategy . First, the assumption of a sp ecific parametric form for the mapping c ( x ; Λ) restricts the guidance to classifiers within that family of mappings. The second is that the learned represen tation is committed to one partic- ular setting of the parameters Λ. Consider the learn- ing dynamics of gradient descent optimization for this strategy . A t ev ery iteration t of descent (with current state φ t , ψ t , Λ t ), the gradient from sup ervised guid- ance encourages the laten t representation (currently parametrized b y φ t , ψ t ) to become more predictiv e of the lab els under the current lab el map c ( x ; Λ t ). Such b eha vior discourages mo ves in φ, ψ space that make the latent represen tation more predictive under some other label map c ( x ; Λ ? ) where Λ ? is potentially dis- tan t from Λ t . Hence, while the problem w ould seem to b e alleviated by the fact that Λ is learned jointly , this constan t pressure to wards representations that are im- mediately useful should increase the difficulty of rep- resen tation learning. 3.1 Nonparametrically Guided Auto encoder Rather than directly sp ecifying a particular discrimi- nativ e regressor for guiding the latent representation, it seems more desirable to simply ensure that such a function exists . That is, w e w ould prefer not to ha ve to c ho ose a laten t represen tation that is tied to a sp ecific map to labels, but instead find represen tations that are consisten t with man y suc h maps. One w ay to arrive at such a guidance mechanism is to marginalize out the parameters Λ of a label map c ( x ; Λ) under a dis- tribution that p ermits a wide family of functions. W e ha ve seen previously that this can be done for recon- structions of the input space with a deco der f ( x ; ψ ). W e follo w the same reasoning and do this instead for c ( x ; Λ). Integrating out the parameters of the lab el map yields a back-constrained GPL VM acting on the lab el space Z , where the back constraints are determined by the input space Y . The p ositiv e defi- nite k ernel sp ecifying the Gaussian process then deter- mines the properties of the distribution ov er mappings from the laten t represen tation to the lab els. The result is a h ybrid of the auto encoder and back-constrained GPL VM, where the enco der is shared across models. F or notation, w e will refer to this approach to guided laten t represen tation as a nonp ar ametric al ly guide d au- to enc o der , or NPGA. Let the lab el space Z be an M -dimensional real space 1 , i.e., Z = R M , and the n th training example has a lab el v ector z ( n ) ∈ Z . The co v ariance function that relates lab el v ectors in the NPGA is [ Σ θ m ,φ, Γ ] n,n 0 = C ( Γ · g ( y ( n ) ; φ ) , Γ · g ( y ( n 0 ) ; φ ) ; θ m ) , where Γ ∈ R H × J is an H -dimensional linear pro jection of the enco der output. F or H J , this pro jection impro ves efficiency and reduces ov erfitting. Learning in the NPGA is then formulated as finding the opti- mal φ, ψ , Γ under the com bined ob jective: φ ? , ψ ? , Γ ? = arg min φ,ψ , Γ (1 − α ) L auto ( φ, ψ ) + αL GP ( φ, Γ ) where α ∈ [0 , 1] linearly blends the t wo ob jectives L auto ( φ, ψ ) = 1 K N X n =1 K X k =1 ( y ( n ) k − f k ( g ( y ( n ) ; φ ); ψ )) 2 L GP ( φ, Γ ) = 1 M M X m =1 h ln | Σ θ m ,φ, Γ + σ 2 I N | + z ( · ) m T ( Σ θ m ,φ, Γ + σ 2 I N ) − 1 z ( · ) m i . W e use a linear decoder for f ( x ; ψ ), and the enco der g ( y ; φ ) is a linear transformation follow ed b y a fixed elemen t-wise nonlinearit y . As is common for auto enco ders and to reduce the n umber of free parameters in the mo del, the enco der and decoder weigh ts are tied. F or the larger NORB dataset, w e divide the training data into mini-batches of 350 training cases and p erform three iterations of conjugate gradient descent p er mini-batch. Finally , as prop osed in the denoising auto enco der v ariant of Vin- cen t et al. (2008), w e alw ays add noise to the encoder inputs in cost L auto ( φ, ψ ), keeping the noise fixed dur- ing each iteration. 1 F or discrete labels, we use a “one-hot” enco ding. Jasp er Sno ek, Ryan Prescott Adams, Hugo Laro c helle 3.2 Related Mo dels The combination of parametric unsupervised learning and nonparametric sup ervised learning has b een ex- amined previously . Salakhutdino v and Hinton (2007) prop osed merging auto encoder training with nonlin- ear neighborho o d comp onen t analysis, which encour- ages the enco der to ha ve similar outputs for similar inputs b elonging to the same class. Note that the bac kconstrained-GPL VM p erforms a similar role. Ex- amining Equation 3, one can see that the first term, the log determinan t of the kernel, regularizes the la- ten t space. It pulls all examples together as the de- terminan t is minimized when the cov ariance b et ween all pairs is maximized. The second term is a data fit term, pushing examples that are distant in lab el space apart in the latent space. In the case of a one-hot co ding, the lab els act as indicator v ariables including only indices of the concentration matrix that reflect in ter-class pairs in the loss. Thus the GPL VM enforces that examples close in the lab el space will b e closer in the laten t space than examples that are distant in lab el space. There are sev eral notable differences, ho wev er, b et ween this work and the NPGA. First, as the NPGA is a natural generalization of the back- constrained GPL VM, it can be in tuitively interpreted as a marginalization of lab el maps, as discussed in the previous section. Second, the NPGA enables the wide library of cov ariance functions from the Gaussian pro- cess literature to b e incorp orated into the framework of learning guided representation and naturally accomo- dates contin uous lab els. Finally , as will b e discussed in Section 4.2, the NPGA not only enables learning of unsup ervised features that capture discriminatively- relev ant information, but also allows representations that can ignor e irrelev ant information. Previous work has also hybridized Gaussian pro cesses and unsup ervised connectionist learning. In Salakhut- dino v and Hinton (2008), restricted Boltzmann ma- c hines w ere used to initialize a neural netw ork that w ould provide features to a Gaussian process regressor or classifier. Unlik e the NPGA, ho wev er, this approach do es not address the issue of guided unsupervised rep- resen tation. Indeed, in NPGA, Gaussian pro cesses are used only for representation learning, are applied only on small mini-batches and are not required at test time. This is important, since deplo ying a Gaussian pro cess on large datasets such as the NORB data p oses significan t practical problems. Because their metho d relies on a Gaussian pro cess at test time, a direct ap- plication of the approach prop osed b y Salakhutdino v and Hinton (2008) would be prohibitiv ely slo w. Although the GPL VM was originally prop osed as a laten t v ariable mo del conditioned on the data, there has b een work on adding discriminative lab el infor- mation and additional signals. The Discriminative GPL VM (DGPL VM) (Urtasun and Darrell, 2007) in- corp orates discriminativ e class lab els through a prior based on discriminant analysis that enforces separa- bilit y betw een classes in the laten t space. The DG- PL VM is, how ever, restricted to discrete lab els and requires a GP mapping to the data, w hic h is compu- tationally prohibitive for high dimensional data. Shon et al. (2005) introduced a Shared-GPL VM (SGPL VM) that used multiple GPs to map from a single shared laten t space to v arious related signals. W ang et al. (2007) demonstrate that a generalisation of m ultilinear mo dels arises as a GPL VM with pro duct k ernels, eac h mapping to differen t signals. This allows one to sepa- rate v arious signals in the data within the context of the GPL VM. Again, due to the Gaussian process map- ping to the data, the shared and m ultifactor GPL VM are not feasible on high dimensional data. Our mo del o vercomes the limitations of these through using a nat- ural parametric form of the GPL VM, the autoenco der, to map to the data. 4 Empirical Analyses W e now presen t exp erimen ts with NPGA on t wo dif- feren t classification datasets. Our implementation of NPGA is av ailable for download at http://removed. for.anonymity.org . In all experiments, the discrim- inativ e v alue of the learned representation is ev alu- ated by training a linear (logistic) classifier, a standard practice for ev aluating latent representations. 4.1 Oil Flow Data As an initial empirical analysis we consider a m ulti- phase oil flow classification problem (Bishop and James, 1993). The data are tw elve-dimensional, real- v alued measuremen ts of gamma densitometry mea- suremen ts from a simulation of multi-phase oil flo w. The classification task is to determine from which of three phase configurations each example originates. There are 1,000 training and 1,000 test examples. The relativ ely small size of these training data make them useful for empirical ev aluation of differen t mo dels and training pro cedures. W e use these data primarily to address tw o concerns: • T o what extent does the nonparametric guidance of an unsup ervised parametric auto encoder im- pro ve the learned feature represen tation with re- sp ect to the classification ob jectiv e? • What additional benefit is gained through using nonparametric guidance o ver simply incorp orat- ing a parametric mapping to the lab els? On Nonparametric Guidance for Learning Auto enco der Representations Beta Alpha 0 0.2 0.4 0.6 0.8 1 0 0.2 0.4 0.6 0.8 1 (a) 0 0.2 0.4 0.6 0.8 1 2 3 4 5 Alpha Percent Classification Error Fully Parametric Model NPGA − RBF Kernel NPGA − Linear Kernel (b) (c) (d) Figure 1: The effect of scaling the relative con tributions of the auto encoder, logistic regressor and GP costs in the h ybrid ob jectiv e b y modifying α and β . (a) Classification error on the test set on a linear scale from 6% (dark red) to 1% (dark blue) (b) Cross-sections of (a) at β = 0 (a fully parametric model) and β = 1 (NPGA). ( α = 0 . 5) (c & d) Laten t pro jections of the 1000 test cases within the laten t space of the GP for a NPGA ( α = 0 . 5) and a bac k-constrained GPL VM. T o address these questions, we construct a new ob jec- tiv e that linearly blends our proposed supervised guid- ance cost L GP ( φ, Γ ) with the one prop osed by Bengio et al. (2007), referred to as L LR ( φ, Γ ): L ( φ, ψ , Λ , Γ ; α, β ) = (1 − α ) L auto ( φ, ψ ) + α ((1 − β ) L LR ( φ, Λ) + β L GP ( φ, Γ )) , where β ∈ [0 , 1]. Λ are the parameters of a m ulti-class logistic regressor that maps to the lab els. Thus, α con trols the relative imp ortance of sup ervised guid- ance, while β controls the relative imp ortance of the parametric and nonparametric supervised guidance. A grid searc h o ver α and β was p erformed at in terv als of 0 . 1 to assess the b enefit of the nonparametric guid- ance. At each interv al a model w as trained for 100 iter- ations and classification p erformance was assessed via logistic regression on the hidden units of the enco der. Notice how the cost L LR ( φ, Λ) is sp ecifically tailored to this situation. The encoder used 250 noisy rectified linear (NRenLU (Nair and Hinton, 2010)) units, and zero-mean Gaussian noise with a standard deviation of 0.05 was added to the inputs of the autoenco der cost. A subset of 100 training samples was used to mak e the problem more challenging. Eac h exp erimen t was rep eated 20 times with random initializations. The GP lab el mapping used an RBF kernel and w ork ed on a pro jected space of dimension H = 2. Results are presented in Fig. 1. Fig. 1b demonstrates that p erformance impro ves b y integrating out the la- b el map, ev en when compared with direct optimiza- tion under the discriminativ e family that will be used at test time. Figs. 1c and 1d provide a visualisation of the latent representation learned b y NPGA and a stan- dard back-constrained GPL VM. W e see that the for- mer embeds muc h more class-relev ant structure than the latter. An interesting observ ation is that a simple linear ker- nel also tends to outp erform parametric guidance (see Fig. 1b). This doesn’t mean that an y kernel will work for any problem. How ever, this confirms that the ben- efit of our approach is achiev ed mainly through inte- grating out the lab el mapping, rather than having a more p o werful nonlinear mapping to the label. 4.2 Small NORB Image Data As a second empirical analysis, the NPGA is ev alu- ated on a c hallenging dataset with m ultiple discrete and real-v alued lab els. The small NORB data (LeCun et al., 2004) are stereo image pairs of fifty to ys b elong- ing to five generic categories. Each ob ject was imaged under six ligh ting conditions, nine elev ations and eigh- teen azimuths. The ob jects were divided ev enly into test and training sets yielding 24,300 examples eac h. The v ariations in the data resulting from the differen t imaging conditions imp ose significant n uisance struc- ture that will in v ariably b e learned by a standard au- to encoder. F ortunately , these v ariations are known a priori . In addition to the class lab els, there are tw o real-v alued vectors (elev ation and azimuth) and one discrete vector (lighting type) associated with each im- age. In our empirical analysis w e examine tw o ques- tions: • As the autoenco der attempts to coalesce the v ari- ous sources of structure in to its hidden lay er, can the NPGA guide the learning in suc h a w ay as to separate the class-inv ariant transformations of the data from the class-relev ant information? • Are the b enefits of nonparametric guidance still observ ed in a larger scale classification problem, when mini-batch training is used? Jasp er Sno ek, Ryan Prescott Adams, Hugo Laro c helle Classes Elev ation Ligh ting Figure 2: Visualisations of the NORB training (left) and test (right) data latent space representations in the NPGA, corresponding to class (first row), elev a- tion (second row), and lighting (third row). Colors corresp ond to class lab els. T o address this question, an NPGA w as emplo yed with GPs mapping to each of the four labels. Eac h GP was applied to a unique partition of the hidden units of an autoenco der with 2400 NReLU units. A GP map- ping to the class labels was applied to half of the hid- den units and operated on a H = 4 dimensional laten t space. The remaining 1200 units w ere divided ev enly among GPs mapping to the three auxiliary lab els. As the ligh ting lab els are discrete, they w ere treated sim- ilarly to the class lab els, with H = 2. The elev ation lab els are con tin uous, so the GP w as mapped directly to the lab els, with H = 2. Finally , as the azimuth is a p eriodic signal, a p eriodic kernel w as used for the azim uth GP , with H = 1. This elucidates a ma jor ad- v antage of our approac h, as the GP provides flexibilit y that w ould b e challenging with a parametric mapping. This configuration was compared to an auto enco der ( α = 0), an auto enco der with parametric logistic re- gression guidance and a similar NPGA where only a GP to classes was applied to all the hidden units. A bac k-constrained GPL VM and SGPL VM were also ap- plied to these data for comparison 2 . The results 3 are 2 The GPL VM and SGPL VM were applied to a 96 di- mensional PCA of the data for computional tractability , used a neural net cov ariance mapping to the data, and otherwise used the same bac k-constraints, k ernel configu- ration, and minibatch training as the NPGA. 3 A v alidation set of 4300 training cases w as withheld Mo del Accuracy Auto encoder + 4(Log)reg ( α = 0 . 5) 85.97% GPL VM 88.44% SGPL VM (4 GPs) 89.02% NPGA (4 GPs Lin – α = 0 . 5) 92.09% Auto encoder 92.75% Auto encoder + Logreg ( α = 0 . 5) 92.91% NPGA (1 GP NN – α = 0 . 5) 93.03% NPGA (1 GP Lin – α = 0 . 5) 93.12% NPGA (4 GPs Mix – α = 0 . 5) 94.28% K-Nearest Neighbors 83.4% (LeCun et al., 2004) Gaussian SVM 88.4% (Salakh utdinov and Laro c helle, 2010) 3 Lay er DBN 91.69% (Salakh utdinov and Laro c helle, 2010) DBM: MF-FULL 92.77% (Salakh utdinov and Laro c helle, 2010) Third Order RBM 93.5% (Nair and Hinton, 2009) T able 1: Exp erimen tal results on the small NORB data test set. Relev ant published results are sho wn for comparison. NN, Lin and Mix indicate neural net- w ork, linear and a combination of neural netw ork and p eriodic cov ariances resp ectively . rep orted in T able 1. A visualisation of the structure learned by the GPs is sho wn in Figure 2. The mo del with 4 GPs with nonlinear k ernels obtains an accuracy of 94.28% and significantly outp erforms all other models, ac hieving to our kno wledge the b est (non-con volutional) results for a shallo w mo del on this dataset. Applying nonparametric guidance to all four of the signals app ears to separate the class relev ant information from the irrelev ant transformations in the data. Indeed, a logistic regression classifier trained only on the 1200 hidden units on whic h the class GP w as applied achiev es a test error of 94.02%, implying that half of the latent representation can be discarded with virtually no discriminativ e p enalt y . One interesting observ ation is that, for linear k ernels, guidance with resp ect to all lab els decreases the p er- formance compared to using guidance only from the class lab el (from 93.03% down to 92.09%). An au- to encoder with parametric guidance to all four labels for parameter selection and early stopping. Neural net co- v ariances with fixed hyperparameters w ere used for eac h GP , except for the GP on the rotation lab el, which used a p eriodic kernel. The raw pixels w ere corrupted by setting the v alue of 20% of the pixels to zero for denoising au- to encoder training. Each image was lighting and contrast normalized. The error on the test set w as ev aluated using logistic regression on the hidden units of each mo del. On Nonparametric Guidance for Learning Auto enco der Representations w as tested as w ell, mimic king the configuration of the NPGA, with tw o logistic and t w o gaussian outputs op- erating on separate partitions of the hidden units. This mo del achiev ed only 86% accuracy . These observ ations highligh t the adv antage of the GP form ulation for su- p ervised guidance, whic h gives the flexibilit y of choos- ing an appropriate k ernel for differen t lab el mappings (e.g. a p erio dic k ernel for the rotation lab el). 5 Conclusion In this pap er we observ e that the back-constrained GPL VM can b e interpreted as the infinite limit of a particular kind of auto encoder. This relationship enables one to learn the enco der half of an auto en- co der while marginalizing ov er decoders. W e use this theoretical connection to marginalize o ver functional mappings from the latent space of the auto encoder to an y auxiliary lab el information. The resulting non- parametric guidanc e encourages the auto enco der to enco de a latent representation that captures salient structure within the input data that is harmonious with the lab els. Sp ecifically , it enforces the require- men t that a smooth mapping exists from the hidden units to the auxiliary lab els, without choosing a par- ticular parameterization. By applying the approach to tw o data sets, w e show that the resulting non- parametrically guided auto encoder improv es the latent represen tation of an auto enco der with resp ect to the discriminativ e task. Finally , we demonstrate on the NORB data that this mo del can also b e used to dis- c our age laten t represen tations that capture statistical structure that is known to b e irrelev ant through guid- ing the auto enco der to separate the v arious sources of v ariation. This ac hieves state-of-the-art performance for a shallow non-conv olutional model on NORB. References R. P . Adams, Z. Ghahramani, and M. I. Jordan. T ree- structured stick breaking for hierarchical data. In Neur al Information Pr o c essing Systems , 2010. Y. Bengio, P . Lam blin, D. Popovici, and H. Laro chelle. Greedy lay er-wise training of deep netw orks. In Neural Information Pr o c essing Systems , pages 153–160, 2007. C. M. Bishop and G. D. James. Analysis of multiphase flo ws using dual-energy gamma densitometry and neural net works. Nucle ar Instruments and Metho ds in Physics R e se ar ch , pages 580–593, 1993. G. W. Cottrell, P . Munro, and D. Zipser. Learning in ternal represen tations from gra y-scale images: An example of extensional programming. In Confer enc e of the Co gni- tive Scienc e So ciety , pages 462–473, 1987. L. Deng, M. Seltzer, D. Y u, A. Acero, A.-R. Mohamed, and G. E. Hin ton. Binary coding of speech sp ectrograms using a deep autoenco der. In Intersp e e ch , 2010. H. Laro c helle, D. Erhan, A. Courville, J. Bergstra, and Y. Bengio. An empirical ev aluation of deep architec- tures on problems with many factors of v ariation. In International Confer enc e on Machine L e arning , 2007. N. D. Lawrence. Probabilistic non-linear principal com- p onen t analysis with Gaussian pro cess latent v ariable mo dels. Journal of Machine L e arning R ese ar ch , 6:1783– 1816, 2005. N. D. Lawrence and J. Qui ˜ nonero-Candela. Local distance preserv ation in the GP-L VM through bac k constrain ts. In International Conferenc e on Machine Le arning , 2006. N. D. La wrence and R. Urtasun. Non-linear matrix factor- ization with Gaussian pro cesses. In International Con- fer enc e on Machine L earning , 2009. Y. LeCun, F. J. Huang, and L. Bottou. Learning methods for generic ob ject recognition with in v ariance to p ose and ligh ting. Computer Vision and Pattern R e co gnition , 2004. D. J. MacKay . Bay esian neural net works and density net- w orks. In Nucle ar Instruments and Metho ds in Physics R e se ar ch, A , pages 73–80, 1994. V. Nair and G. E. Hinton. 3d ob ject recognition with deep b elief nets. In Neur al Information Pr o c essing Systems , 2009. V. Nair and G. E. Hin ton. Rectified linear units impro ve restricted Boltzmann machines. In International Con- fer enc e on Machine L earning , 2010. R. Neal. Bay esian learning for neural netw orks. L e ctur e Notes in Statistics , 118, 1996. M. Ranzato and M. Szummer. Semi-supervised learning of compact document representations with deep net works. In International Conferenc e on Machine Le arning , 2008. R. Salakhutdino v and G. Hinton. Learning a nonlinear em b edding b y preserving class neigh b ourhoo d structure. In Artificial Intel ligenc e and Statistics , 2007. R. Salakhutdino v and G. Hinton. Using deep b elief nets to learn cov ariance k ernels for Gaussian pro cesses. In Neur al Information Pr o c essing Systems , 2008. R. Salakh utdinov and H. Laro c helle. Efficient learning of deep Boltzmann machines. In Artificial Intel ligenc e and Statistics , 2010. A. P . Shon, K. Gro cho w, A. Hertzmann, and R. P . N. Rao. Learning shared laten t structure for image syn thesis and rob otic imitation. In Neur al Information Pr o c essing Sys- tems , 2005. R. Urtasun and T. Darrell. Discriminativ e Gaussian pro- cess laten t v ariable mo del for classification. In Interna- tional Confer enc e on Machine L e arning , 2007. P . Vincent, H. Laro c helle, Y. Bengio, and P .-A. Manzagol. Extracting and composing robust features with denois- ing auto encoders. In International Confer enc e on Ma- chine L e arning , 2008. J. M. W ang, D. J. Fleet, and A. Hertzmann. Multifactor Gaussian process mo dels for st yle-conten t separation. In International Confer enc e on Machine L e arning , volume 227, 2007. J. M. W ang, D. J. Fleet, and A. Hertzmann. Gaussian pro- cess dynamical mo dels for h uman motion. IEEE P AMI , 30(2):283–298, 2008. C. K. I. Williams. Computation with infinite neural net- w orks. Neur al Computation , 10(5):1203–1216, 1998.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment