Robust Localization from Incomplete Local Information

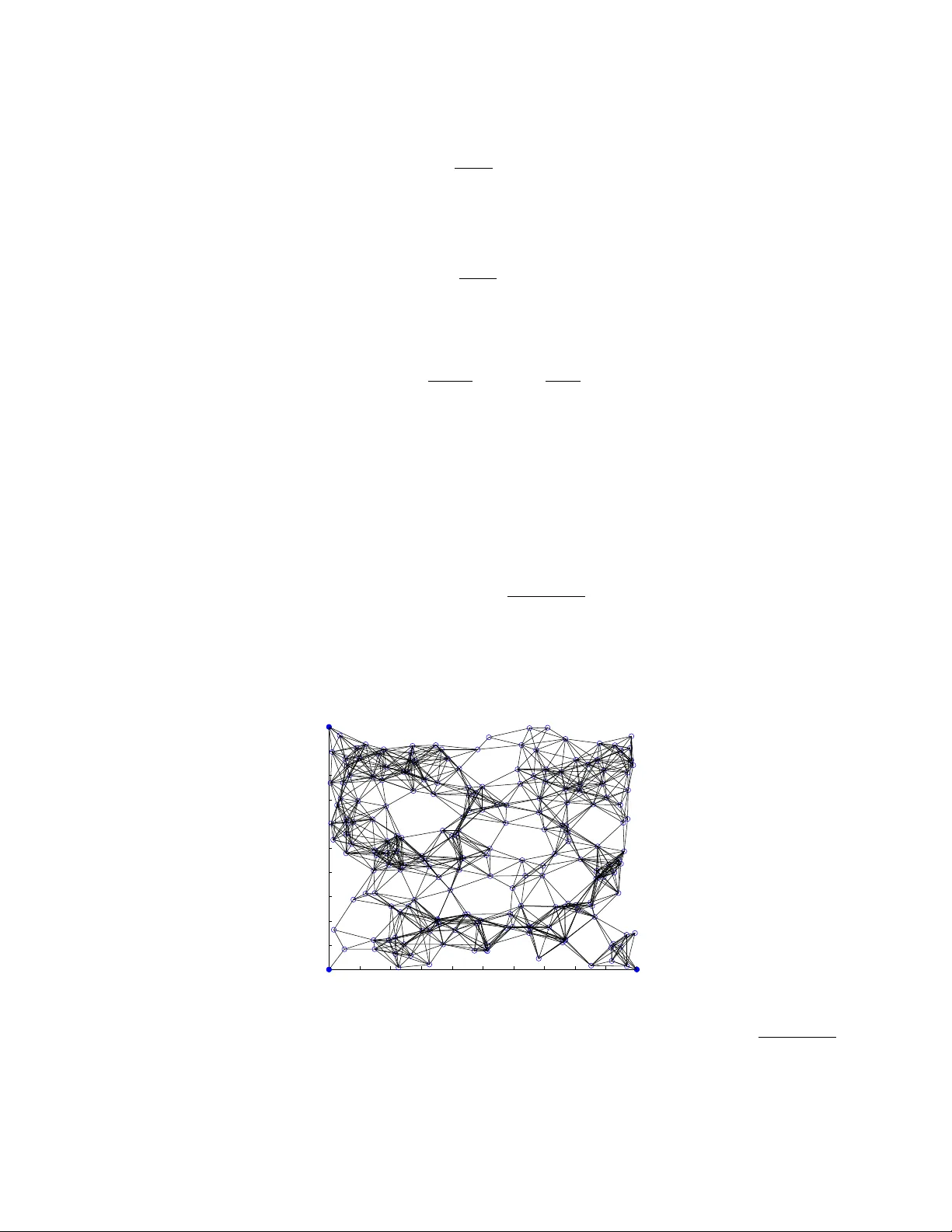

We consider the problem of localizing wireless devices in an ad-hoc network embedded in a d-dimensional Euclidean space. Obtaining a good estimate of where wireless devices are located is crucial in wireless network applications including environme…

Authors: Amin Karbasi, Sewoong Oh