Unsupervised K-Nearest Neighbor Regression

In many scientific disciplines structures in high-dimensional data have to be found, e.g., in stellar spectra, in genome data, or in face recognition tasks. In this work we present a novel approach to non-linear dimensionality reduction. It is based …

Authors: Oliver Kramer

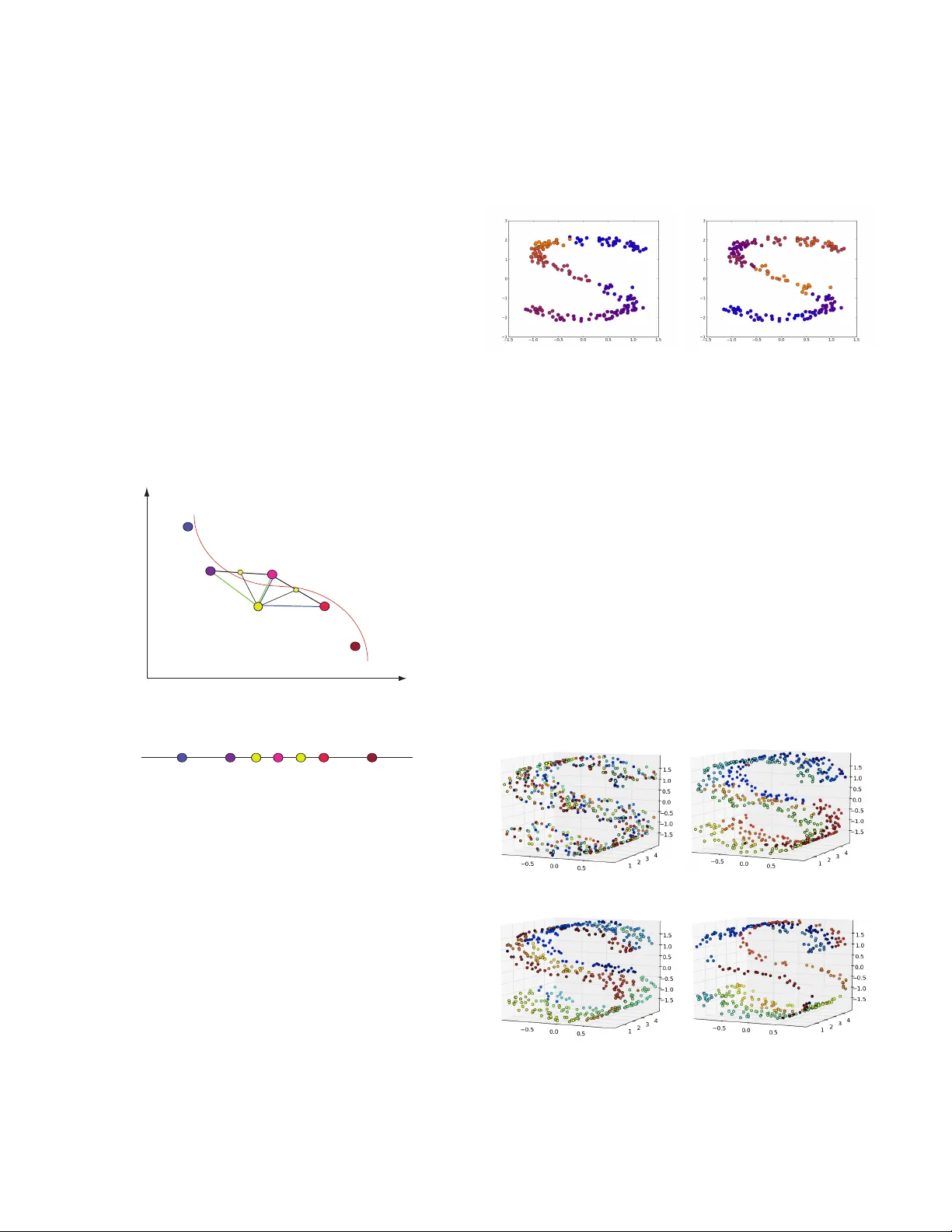

Unsupervised K-Nearest Neighbor Re gression Oli ver Kramer Fakult ¨ at II, Department f ¨ ur Informatik Carl von Ossietzk y Univ ersit ¨ at Oldenbur g 26111 Oldenbur g, Germany oliver .kramer@uni-oldenb ur g.de Abstract —In many scientific disciplines structur es in high- dimensional data hav e to be found, e.g ., in stellar spectra, in genome data, or in face recognition tasks. In this w ork we present a novel approach to non-linear dimensionality reduction. It is based on fitting K-nearest neighbor regression to the unsu- pervised regression framework for learning of low-dimensional manifolds. Similar to r elated approaches that are mostly based on kernel methods, unsupervised K-nearest neighbor (UNN) regres- sion optimizes latent variables w .r .t. the data space reconstruction error employing the K-nearest neighbor heuristic. The problem of optimizing latent neighborhoods is difficult to solve, but the UNN formulation allo ws the design of efficient strategies that iteratively embed latent points to fixed neighborhood topologies. UNN is well appropriate for sorting of high-dimensional data. The iterative variants are analyzed experimentally . I . I N T R O D U C TI O N Dimensionality reduction and manifold learning ha ve an im- portant part to play in the understanding of data. In this work we introduce two fast constructiv e heuristics for dimensional- ity reduction called unsupervised K-nearest neighbor regres- sion. Meinicke [8] proposed a general unsupervised regression framew ork for learning of lo w-dimensional manifolds. The idea is to reverse the regression formulation such that low- dimensional data samples in latent space optimally reconstruct high-dimensional output data. W e take this frame work as basis for an iterativ e approach that fits KNN to this unsupervised setting in a combinatorial variant. The manifold problem we consider is a mapping F : y → x corresponding to the dimensionality reduction for data points y ∈ Y ⊂ R d , and latent points x ∈ Y ⊂ R q with d > q . The problem is a hard optimization problem as the latent variables X are unknown. In Section II we will re vie w related dimensionality reduction approaches, and repeat KNN regression. Section III presents the concept of UNN regression, and two iterativ e strategies that are based on fixed latent space topologies. Conclusions are drawn in Section IV. I I . R E L A T E D W O R K Many dimensionality reduction methods have been pro- posed, a very famous one is principal component analysis (PCA), which assumes linearity of the manifold [5], [10]. An extension for learning of non-linear manifolds is kernel PCA [12] that projects the data into a Hilbert space. Further famous approaches for manifold learning are Isomap by T enenbaum, Silva, and Langford [15], locally linear embedding (LLE) by Roweis and Saul [11], and principal curves by Hastie and Stuetzle [3]. A. Unsupervised Regr ession The work on unsupervised regression for dimensionality reduction starts with Meinicke [8], who introduced the cor- responding algorithmic framew ork for the first time. In this line of research early work concentrated on non-parametric kernel density regression, i.e., the counterpart of the Nadaraya- W atson estimator [9] denoted as unsupervised kernel re- gression (UKR). Klanke and Ritter [6] introduced an op- timization scheme based on LLE, PCA, and leave-one-out cross-validation (LOO-CV) for UKR. Carreira-Perpi ˜ n ´ an and Lu [1] argue that training of non-parametric unsupervised regression approaches is quite expensi ve, i.e., O ( N 3 ) in time, and O ( N 2 ) in memory . Parametric methods can accelerate learning, e.g., unsupervised regression based on radial basis function networks (RBFs) [13], Gaussian processes [7], and neural networks [14]. B. KNN Regr ession In the following, we give a short introduction to K-nearest neighbor regression that is basis of the UNN approach. The problem in regression is to predict output values y ∈ R d to giv en input values x ∈ R q based on sets of N input-output examples (( x 1 , y 1 ) , . . . , ( x N , y N )) . The goal is to learn a function f : x → y known as regression function. W e assume that a data set consisting of observed pairs ( x i , y i ) ∈ X × Y is given. For a nov el pattern x 0 , KNN regression computes the mean of the function values of its K-nearest neighbors: f knn ( x 0 ) = 1 K X i ∈N K ( x 0 ) y i (1) with set N K ( x 0 ) containing the indices of the K -nearest neighbors of x 0 . The idea of KNN is based on the assumption of locality in data space: In local neighborhoods of x patterns are expected to have similar output values y (or class labels) to f ( x ) . Consequently , for an unknown x 0 the label must be similar to the labels of the closest patterns, which is modeled by the av erage of the output value of the K nearest samples. KNN has been prov en well in various applications, e.g., in detection of quasars in interstellar data sets [2]. I I I . U N S U P E RV I S E D K N N R E G R E S S I O N In this section we introduce two iterativ e strategies for UNN regression based on minimization of the data space reconstruction error (DSRE) [8]. A. Unsupervised Regr ession Let Y = ( y 1 , . . . y N ) with y ∈ R d be the matrix of high-dimensional patterns in data space. W e seek for a low- dimensional representation, i.e., a matrix of latent points X = ( x 1 , . . . x N ) , such that a regression function f applied to X ,,point-wise optimally reconstructs the pattern”, i.e., we search for an X that minimizes E ( X ) = 1 N k Y − f ( x ; X ) k 2 F . (2) E ( X ) is called data space reconstruction error (DSRE). Latent points X define the low-dimensional representation. The re- gression function applied to the latent points should optimally r econstruct the high-dimensional patterns. B. UNN An UNN regression manifold is defined by variables x ∈ X ⊂ R q with the unsupervised formulation of an UNN regression manifold f U N N ( x ; X ) = 1 K X i ∈N K ( x , X ) y i . (3) Matrix X contains the latent points x that define the manifold, i.e., the lo w-dimensional representation of data Y . Parameter x is the location where the function is ev aluated. An optimal UNN regression manifold minimizes the DSRE E ( X ) = 1 N k Y − f U N N ( x ; X ) k 2 F , (4) with Frobenius norm k A k 2 F = v u u t d X i =1 N X j =1 | a ij | 2 . (5) In other words: an optimal UNN manifold consists of low- dimensional points X that minimize the reconstruction of the data points Y w .r .t. KNN regression. Regularization in UNN regression may be not as important as regularization in other methods that fit into the unsupervised regression framework. For example, in UKR regularization means penalizing ex- tension in latent space with E ( X ) p = E ( X ) + λ k X k , and weight λ [6]. In KNN regression moving the lo w-dimensional data samples infinitely apart from each other does not have the same effect as long as we can still determine the K- nearest neighbors, but extension can be penalized to avoid redundant solutions. For practical purposes (limitation of size of numbers) it might be reasonable to restrict continuous KNN latents spaces, e.g., to x ∈ [0 , 1] q . In the following section fixed latent space topologies are used that do not require further regularization. C. Iterative Strate gy 1 For KNN not the absolute positions of data samples in latent space are relev ant, but the relativ e positions that define the neighborhood r elations . This perspective reduces the problem to a combinatorial search for neighborhoods N K ( x i , X ) with i = 1 , . . . , N that can be solved by testing all combinations of K -element subsets of N elements, i.e., all N K combinations. The problem is still difficult to solve, in particular for high dimensions. In the following, we introduce a combinatorial approach to UNN, and introduce two iterativ e local strategies. The idea of our first iterativ e strategy (UNN 1) is to itera- tiv ely assign the data samples to a position in an existing latent space topology that leads to the lowest DSRE. W e assume fixed neighborhood topologies with equidistant positions in latent space, and therefore restrict the optimization problem of Equation (3) to a search in a subset of latent space. x y y 1 2 latent space data space xx x x x 12 3 4 5 6 f(x ) 3 f(x ) 5 y Fig. 1. UNN 1: illustration of embedding of a low-dimensional point to a fixed latent space topology w .r .t. the DSRE testing all ˆ N + 1 positions. As a simple variant we consider the linear case of the latent variables arranged equidistantly on a line x ∈ R . In this simplified case only the order of the elements is important. The first iterativ e strategy works as follows: 1) Choose one element y ∈ Y , 2) test all ˆ N + 1 intermediate positions of the ˆ N embedded elements in latent space, 3) choose the latent position with min E ( X ) , and embed y , 4) remov e y from Y , and repeat from Step 1 until all elements hav e been embedded. Figure 1 illustrates the ˆ N + 1 possible embeddings of a data sample into an existing order of points in latent space (yellow/bright circles). For example, the position of element x 3 results in a lower DSRE with K = 2 than the position of x 5 , as the mean of the two nearest neighbors of x 3 is closer to y than the mean of the two nearest neighbors of x 5 . The complexity of UNN 1 can be described as follo ws. Each DSRE ev aluation takes K d computations. W e assume that the K nearest neighbors are saved in a list during the embedding for each latent point x , so that the search for indices N K ( x , X ) takes O (1) time. The DSRE has to be computed for N + 1 positions, which takes ( N + 1) · K d steps, i.e., O ( N ) time. D. Iterative Strate gy 2 The iterative approach introduced in the last section tests all intermediate positions of previously embedded latent points. W e propose a second iterative variant (UNN 2) that only tests the neighbored intermediate positions in latent space of the nearest embedded point y ∗ ∈ ˆ Y in data space. The second iterativ e strategy works as follows: 1) Choose one element y ∈ Y , 2) look for the nearest y ∗ ∈ ˆ Y that has already been em- bedded (w .r .t. distance measure like Euclidean distance), 3) choose the latent position next to y ∗ with min E ( X ) and embed y , 4) remov e y from Y , add y to ˆ Y , and repeat from Step 1 until all elements hav e been embedded. Figure 2 illustrates the embedding of a 2-dimensional point y (yellow) left or right of the nearest point y ∗ in data space. The position with the lowest DSRE is chosen. In comparison to UNN 1, ˆ N distance comparisons in data space have to be computed, but only 2 positions have to be tested w .r .t. the data space reconstruction error . UNN 2 computes the nearest y y 1 2 latent space data space x x 3 4 f(x ) 3 f(x ) 4 y y * x * Fig. 2. UNN 2: testing only the neighbored positions of the nearest point y ∗ in data space. embedded point y ∗ for each data point, which takes N d steps. Only for the two neighbors the DSRE has to be computed, resulting in an overall number of N d + 2 K d steps, i.e., it takes O ( N ) time. Because of the multiplicative constants, UNN 2 is faster in practice. For example, for N = 1 , 000 , K = 10 , and d = 100 , UNN 1 takes 1 , 001 , 000 steps, while UNN 2 takes 102 , 000 steps. T esting all combinations takes 1000 10 steps, which is not computable in reasonable time. The following experimental section will answer the question, if this speedup of UNN 2 has to be paid with worse DSREs. E. Experiments This section shows the behavior of the iterative strategies on three test problems. W e will compare the DSRE of both strategies to the initial DSRE at the end of this section. 1) 2D- S : First, we compare UNN 1 and UNN 2 on a simple 2-dimensional data set, i.e., the 2-dimensional noisy S with N = 200 (2D- S ). Figure 3 shows the experimental results with K = 5 nearest neighbors. Similar colors correspond to neighbored latent points. Part (a) shows an UNN 1 embedding (a) (b) Fig. 3. (a) UNN 1, and (b) UNN 2 embedding with K = 5 on 2D- S . of the 2D- S data set. Part (b) sho ws the embedding of the same data set with UNN 2. The colors of both embeddings show a satisfying topological sorting, although we can observe local optima. 2) 3D- S : In the following, we will test UNN regression on a 3-dimensional S data set (3D- S ). The variant without a hole consists of 500 data points, the variant with a hole in the middle consists of 400 points. Figure 4 (a) shows the order of elements of the 3D- S data set without a hole at the beginning. The corresponding embedding with UNN 1 and K = 10 is shown in Part (b) of the figure. Again, similar colors correspond to neighbored points in latent space. Part (c) of Figure 4 shows the UNN 2 embedding achieving similar results. Also on the UNN embedding of the S data set with hole, see Part (d) of the figure, a reasonable neighbored (a) (b) (c) (d) Fig. 4. Results of UNN on 3D- S : (a) the unsorted S at the beginning, (b) the embedded S with UNN 1 and K = 10 , (c) the embedded S with UNN 2 and K = 10 , and (d) a variant of S with a hole embedded with UNN 2. assignments can be observed. Quantitati ve results for the DSRE are reported in T able I. 3) USPS Digits: Last, we experimentally test UNN regres- sion on test problems from the USPS digits data set [4]. For this sake we take 100 data samples of 256-dimensional (16 x 16 pixels) pictures of handwritten digits of 2’ s and 5’ s. W e embed a one-dimensional manifold, and show the high- (a) (b) Fig. 5. UNN 2 embeddings of USPPS digits: (a) 2’ s, and (b) 5’s. Digits are shown that are assigned to every 14th embedded latent point. Similar digits are neighbored in latent space. dimensional data that is assigned to ev ery 14th latent point, i.e., neighbored digits in the plot are neighbored in latent space. Figure 5 shows the result of the UNN 2-embedding for 2’ s and 5’ s with K = 10 . W e can observe that neighbored digits are similar to each other , while digits that are dissimilar are further away from each other in latent space. 4) DSRE Comparison: Last, we compare the DSRE achiev ed by both strategies with the initial DSRE, and the DSRE achieved by LLE on all test problems. For the USPS digits data set we choose the number 7 . T able I shows the experimental results of three settings for the neighborhood size K . The lowest DSRE on each problem is highlighted with bold figures. After application of the iterative strategies the DSRE is significantly lower than initially . Increasing K results in higher DSREs. With exception of LLE with K = 10 on 2D- S , the UNN 1 strategy always achieves the best results. UNN 1 achiev es lo wer DSREs than UNN 2, with exception of 2D- S , and K = 10 . The win in accuracy has to be paid with T ABLE I C O MPA R IS O N O F D SR E F OR I NI T I A L DAT A SE T , A N D AF T E R E M B ED D I N G W I TH S T RATE G Y UN N 1 , A N D U N N 2. 2D- S 3D- S K 2 5 10 2 5 10 init 201.6 290.0 309.2 691.3 904.5 945.80 UNN 1 19.6 27.1 66.3 101.9 126.7 263.39 UNN 2 29.2 70.1 64.7 140.4 244.4 296.5 LLE 25.5 37.7 40.6 135.0 514.3 583.6 3D- S hole digits (7) K 2 5 10 2 5 10 init 577.0 727.6 810.7 196.6 248.2 265.2 UNN 1 80.7 108.1 216.4 139.0 179.3 216.6 UNN 2 101.8 204.4 346.8 145.3 195.4 222.1 LLE 94.9 198.9 387.4 147.8 198.1 217.8 a constant runtime factor that may play an important role in case of large data sets, or high data space dimensions. I V . C O N C L U S I O N S W ith UNN regression we hav e fitted a fast regression technique into the unsupervised setting for dimensionality re- duction. The two iterati v e UNN strate gies are efficient methods to embed high-dimensional data into fixed one-dimensional latent space taking O ( N ) time. The speedup is achiev ed by restricting the number of possible solutions (reduction of solution space), and applying fast iterativ e heuristics. Both methods turned out to be performant on test problems in first experimental analyses. UNN 1 achiev es lower DSREs, but UNN 2 is slightly faster because of the multiplicative constants of UNN 1. Our future work will concentrate on the analysis of local optima the UNN strategies approximate, and how the approach can be extended to guarantee global optimal solutions. Furthermore, the UNN strategies can be extended to latent topologies with higher dimensionality . For q = 2 the insertion of intermediate solutions into a grid is more difficult: it results in shifting rows and columns of the grid, and thus changes the latent topology in parts that may not be desired. A simple stochastic search strategy can be employed that randomly swaps positions of latent points in the grid. R E F E R E N C E S [1] M. ´ A. Carreira-Perpi ˜ n ´ an and Z. Lu. Parametric dimensionality reduction by unsupervised regression. In CVPR , pages 1895–1902, 2010. [2] F . Gieseke, K. L. Polsterer, A. Thom, P . Zinn, D. Bomanns, R.-J. Dettmar , O. Kramer, and J. V ahrenhold. Detecting quasars in large- scale astronomical surveys. In ICMLA , pages 352–357, 2010. [3] Y . Hastie and W . Stuetzle. Principal curves. Journal of the American Statistical Association , 85(406):502–516, 1989. [4] J. Hull. A database for handwritten text recognition research. IEEE P AMI , 5(16):550–554, 1994. [5] I. Jolliffe. Principal component analysis . Springer series in statistics. Springer , New Y ork u.a., 1986. [6] S. Klanke and H. Ritter . V ariants of unsupervised kernel regression: General cost functions. Neur ocomputing , 70(7-9):1289–1303, 2007. [7] N. D. Lawrence. Probabilistic non-linear principal component analysis with gaussian process latent variable models. J ournal of Machine Learning Research , 6:1783–1816, 2005. [8] P . Meinicke. Unsupervised Learning in a Generalized Regr ession F rame work . PhD thesis, Univ ersity of Bielefeld, 2000. [9] P . Meinicke, S. Klanke, R. Memise vic, and H. Ritter . Principal surfaces from unsupervised kernel regression. IEEE T rans. P attern Anal. Mach. Intell. , 27(9):1379–1391, 2005. [10] K. Pearson. On lines and planes of closest fit to systems of points in space. Philosophical Magazine , 2(6):559–572, 1901. [11] S. T . Roweis and L. K. Saul. Nonlinear dimensionality reduction by locally linear embedding. SCIENCE , 290:2323–2326, 2000. [12] B. Sch ¨ olkopf, A. Smola, and K.-R. Mller. Nonlinear component analysis as a kernel eigenv alue problem. Neural Computation , 10(5):1299–1319, 1998. [13] A. J. Smola, S. Mika, B. Sch ¨ olkopf, and R. C. W illiamson. Regularized principal manifolds. J. Mach. Learn. Res. , 1:179–209, 2001. [14] S. T an and M. Mavrov ouniotis. Reducing data dimensionality through optimizing neural network inputs. AIChE Journal , 41(6):1471–1479, 1995. [15] J. B. T enenbaum, V . D. Silva, and J. C. Langford. A global geometric framew ork for nonlinear dimensionality reduction. Science , 290:2319– 2323, 2000.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment