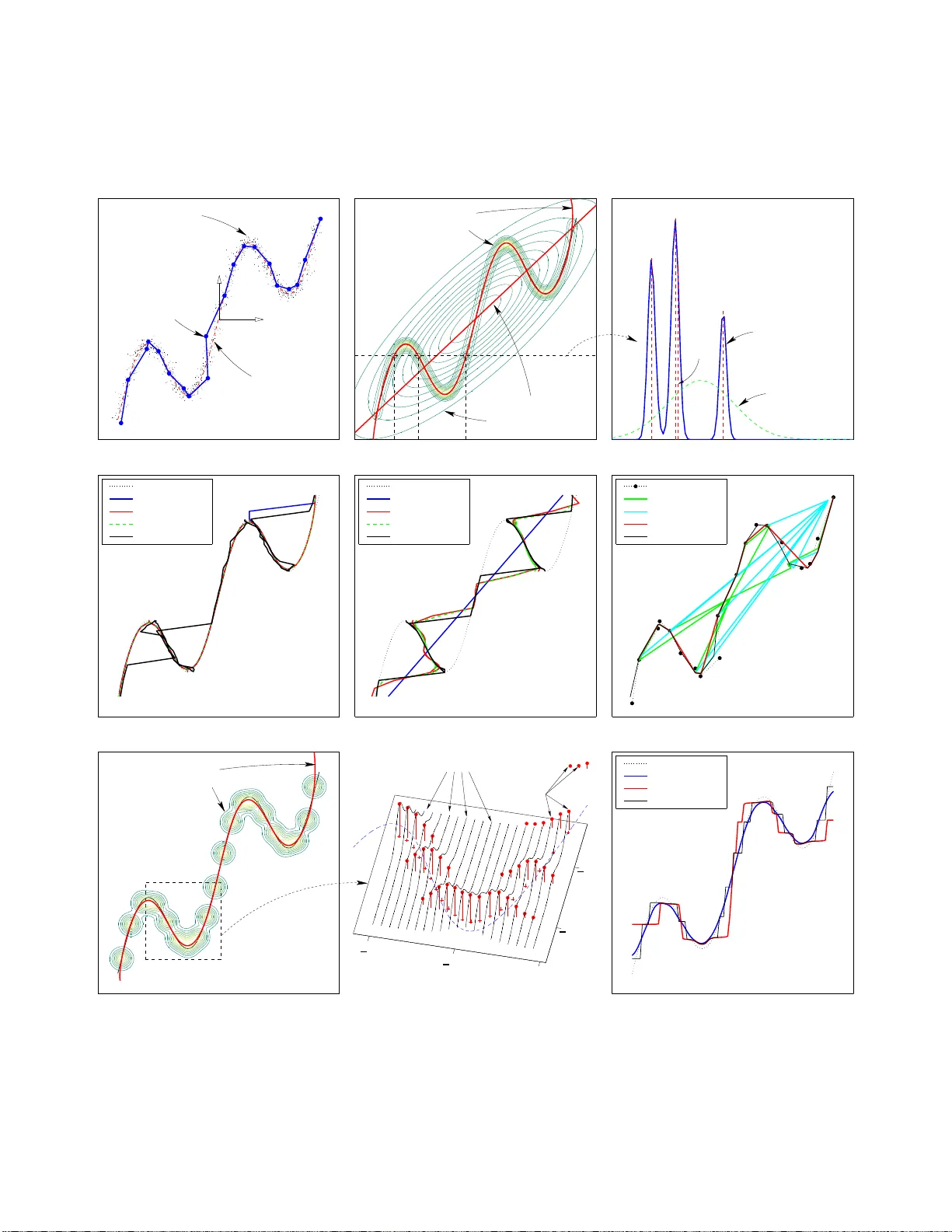

Reconstruction of sequential data with density models

We introduce the problem of reconstructing a sequence of multidimensional real vectors where some of the data are missing. This problem contains regression and mapping inversion as particular cases where the pattern of missing data is independent of …

Authors: Miguel A. Carreira-Perpiñán