MIS-Boost: Multiple Instance Selection Boosting

In this paper, we present a new multiple instance learning (MIL) method, called MIS-Boost, which learns discriminative instance prototypes by explicit instance selection in a boosting framework. Unlike previous instance selection based MIL methods, w…

Authors: Emre Akbas, Bernard Ghanem, Narendra Ahuja

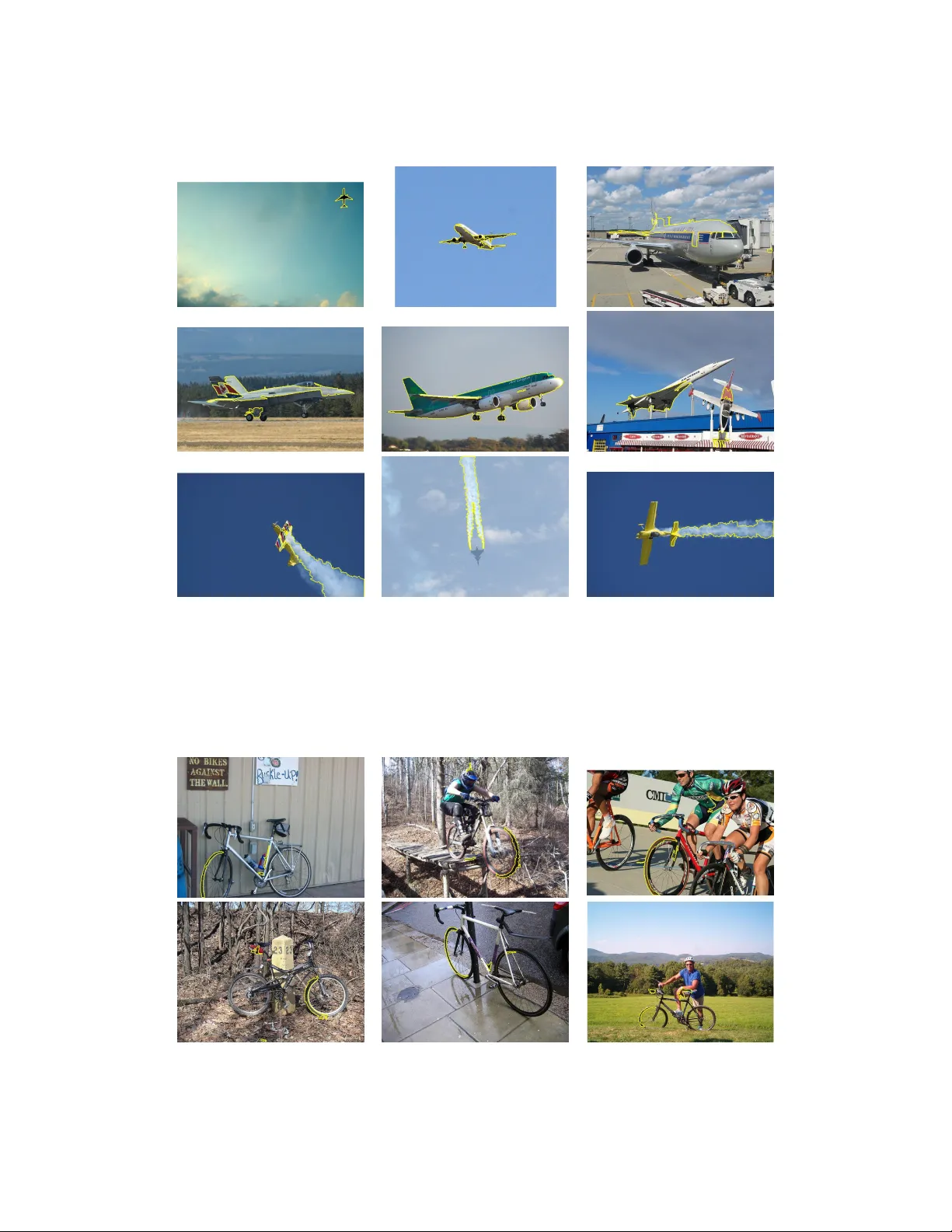

Department of Electrical and Computer Engineering, Computer Vision and Robotics Lab, Beckman Institute for Advanced Science and Technology, University of Illinois at Urbana-Champaign, IL, USA 61801
{eakbas,bghanem2,ahuja}@vision.ai.uiuc.edu

Abstract

In this paper, we present a new multiple instance learning (MIL) method, called MIS-Boost, which learns discriminative instance prototypes by explicit instance selection in a boosting framework. Unlike previous instance selection based MIL methods, we do not restrict the prototypes to a discrete set of training instances but allow them to take arbitrary values in the instance feature space. We also do not restrict the total number of prototypes and the number of selected-instances per bag; these quantities are completely data-driven. We show that MIS-Boost outperforms state-of-the-art MIL methods on a number of benchmark datasets. We also apply MIS-Boost to large-scale image classification, where we show that the automatically selected prototypes map to visually meaningful image regions.

1 Introduction

Traditionally, supervised learning algorithms require labeled training data, where each training instance is given a specific class label. The performance and learning capability of such algorithms are affected by the correctness of these instance labels, especially when they are obtained through human interaction. In some applications (e.g. object detection, semantic segmentation, and activity recognition), ambiguities in human labeling may arise (e.g. when detection bounding boxes are not accurately sized or positioned). Traditional supervised learning methods cannot easily resolve these label ambiguities, which are inherently handled by multiple instance learning methods. These latter methods are based on a significantly weaker assumption about the underlying labels of the training instances.
They do not assume the correctness of each individual instance label; instead, they assume label correctness at the level of groupings of training instances.

Multiple instance learning (MIL) can be viewed as a weakly supervised learning problem where the labels of sets of instances (known as bags) are given, while the labels of the instances in each bag are unknown. In a typical binary MIL setting, a negative bag contains instances that are all labeled negative, while a positive bag contains at least one instance labeled positive. Since the instance labels in positive bags are unknown, a MIL classifier seeks an optimal labeling scheme for the training instances so that the resulting labels of the training bags are correct.

Due to their ability to handle incomplete knowledge about instance labels of training data, MIL methods have gained significant attention in the machine learning and computer vision communities. The MIL framework appears in numerous applications that range from text categorization [1] and drug activity recognition [2] to many vision applications, where an image (or video) is represented as a bag of instances (interest points, patches, or image segments), only a subset of which are meaningful for the task in question (e.g. image classification). Recently, it has been shown that MIL methods can achieve state-of-the-art performance in a variety of vision applications including content-based image retrieval [3, 4, 5], image classification [6, 7, 8], activity recognition [9], object tracking [10, 11], object detection [12, 13], and image segmentation [14]. However, label ambiguity and incompleteness lead to significant challenges in the MIL framework, especially when positive bags are dominated by negative instances (e.g. an image of an airplane dominated by patches of sky).
It is not surprising to see empirical evidence that the use of traditional supervised learning methods in MIL problems often leads to reduced accuracy [15]. Consequently, much effort has been devoted to developing effective learning methods that exploit the structure of MIL problems. We give a brief overview of the most recent and popular MIL methods next.

Related Work

During the past two decades, many MIL methods have been proposed, with significant interest in MIL emerging in recent years, especially within the machine learning and vision communities. The work in [16] is one of the earliest papers to address the MIL problem, where it was cast in the framework of recognizing hand-written numerals. Over the next twenty years, the MIL literature has abounded with algorithms that differ in two main respects: (1) the level at which the labeling is determined, instance-level (bottom-up) or bag-level (top-down), and (2) the type of data modeling assumed, i.e. generative vs. discriminative.

(1) Bottom-up vs. top-down: While most MIL approaches address the problem of predicting the class of a bag directly without inferring the labels of the instances that belong to this bag, some approaches use max margin techniques to do this inference [1, 17, 18]. The latter approaches exploit the fact that the instances of negative bags have negative labels ($-1$) and at least one instance in each positive bag has a positive label ($+1$). For example, a MIL version of SVM is proposed in [1], where the traditional SVM optimization problem is transformed into a mixed-integer program and subsequently solved by alternating between solving a traditional SVM problem and heuristically choosing the positive instances for each positive bag.

(2) Generative vs. discriminative: Some MIL approaches are generative in nature, since they assume that the underlying instances conform to a certain structure.
An early generative approach is based on finding an optimal hyper-rectangle discriminant in the instance space [2]. Other prominent generative MIL approaches are based on the notion of diverse density (DD) [19]. These approaches seek a “concept” instance (see footnote 1) that is close to at least one instance of each positive bag and far away from all the instances in the negative bags. In other words, a concept instance is a vector in instance space that best describes the positive bags and discriminates them from the negative bags. The existence of such a concept assumes that positive instances are compactly clustered and well separated from negative instances. Such an assumption is strict and does not always hold in natural data, which tends to be multi-modal. DD-based MIL approaches compute this optimal concept by formulating the problem in a maximum likelihood framework using a noisy-OR model of the likelihood. Improvements on the original DD formulation have been made, where the EM algorithm is used to find the concept instance in [3] and multiple concepts are estimated in [20].

Moreover, standard supervised learning techniques, such as kNN, linear and kernel SVM, AdaBoost, and Random Forests, have been adapted to the MIL problem, leading to citation kNN [21], MI-kernel [22], MIGraph/miGraph [28], mi/MI/DD-SVM [1, 23], MI-Boost [24], MI-Winnow [4], MI logistic regression [25], and most recently MIForests [11].

Furthermore, some MIL approaches actively seek instances in the training set (denoted prototypes) that carry discriminative power between the positive and negative classes. In what follows, we refer to these as instance selection MIL methods. Such approaches transform the original feature space into another space defined by the selected prototypes (e.g. using bag-to-instance similarities) and subsequently apply standard supervised learning techniques in the new space.
In [7], all training instances are selected to be prototypes, while only one instance per bag is systematically initialized, greedily updated, and selected in [26, 6].

Our proposed instance selection method (dubbed Multiple Instance Selection Boosting, or MIS-Boost) is inspired by the instance selection MIL methods mentioned above (e.g. MILES [7, 8] and MILIS [6]) and the MI-Boost algorithm in [24]. As hinted earlier, instance selection MIL methods comprise two fundamental stages. (i) In the representation stage, the original training bags are represented in a new feature space determined by the selected prototypes. (ii) In the classification stage, a supervised learning technique is used to build a classifier in the new feature space to optimize a given classification cost. Most of these methods treat the two stages independently and sequentially, so that representation (i.e. prototype selection) is unaffected by the class label distribution. Here, MILIS is an exception, since it iteratively selects prototypes from the training set to minimize classification cost. This selection is still restricted, however, since only one prototype is selected per training bag. We consider this to be a restriction because we believe that the prototype selection process should be data dependent. For example, in the case of image classification, some “simple” object classes (e.g. airplane) may yield a smaller number of prototypes than other more “complex” classes (e.g. bicycle).

Footnote 1: This instance does not have to be one of the instances in the training set.

As compared to previous methods, prototypes selected by MIS-Boost do not necessarily belong to the given training set and the number of these prototypes is not predefined, since they are determined in a data-driven fashion (boosting). Since the search space for prototype instances is no longer limited, more discriminative and possibly fewer prototypes can be learned.
This learning process directly involves minimization of the final classification cost. As such, MIS-Boost learns a new representation based on the estimated prototypes, in a boosting framework. This leads to an iterative algorithm, which learns prototype-based base classifiers that are linearly combined. At each iteration of MIS-Boost, a prototype is learned so that it maximally discriminates between the positive and negative bags, which are themselves weighted according to how well they were discriminated in earlier iterations. The number of prototypes is determined in a data-driven way by cross-validation. Experiments on benchmark datasets show that MIS-Boost achieves state-of-the-art performance. When applied to image classification, MIS-Boost selects prototypes that map to meaningful image segments (e.g. class specific object parts).

In Section 2, we give a detailed description of the MIS-Boost algorithm including our proposed instance selection/learning method. We show that MIS-Boost achieves or improves upon state-of-the-art results on benchmark MIL datasets and popular image classification datasets in Section 3.

2 Proposed Algorithm

Given a training set $T = \{(B_1, y_1), (B_2, y_2), \ldots, (B_N, y_N)\}$, where $B_i$ represents the $i$th bag and $y_i \in \{-1, +1\}$ its label for all $i$, our goal is to learn a bag classifier $F : B \to \{-1, +1\}$. Each bag consists of an arbitrary number of instances. The number of instances in the $i$th bag is denoted by $n_i$, so we have $B_i = \{\vec{x}_{i1}, \vec{x}_{i2}, \ldots, \vec{x}_{in_i}\}$, where each instance $\vec{x}_{ij} \in \mathbb{R}^N$ for all $i, j$. We propose the following additive model as our bag classification function:

$$F(B) = \operatorname{sign}\left( \sum_{m=1}^{M} f_m(B) \right), \qquad (1)$$

where each $f_m(B)$, called a base classifier, is associated with a prototype instance $\vec{p}_m \in \mathbb{R}^N$.
The function $f_m : B \to [-1, 1]$ is a bag classifier, like $F$, and it returns a score between $-1$ and $1$, which quantifies the “existence” of the prototype instance $\vec{p}_m$ within bag $B$. The “existence” of $\vec{p}_m$ within bag $B_i$ is determined by the distance from $\vec{p}_m$ to the instance of $B_i$ that is closest to $\vec{p}_m$, that is:

$$D(\vec{p}_m, B_i) = \min_j d(\vec{p}_m, \vec{x}_{ij}), \qquad (2)$$

where $d(\cdot, \cdot)$ is a distance function between two instances, which we take to be Euclidean. We refer to $D(\cdot, \cdot)$ as the instance-to-bag distance function. Here, we note that this instance-to-bag distance is used in other instance selection MIL methods (e.g. MILES and MILIS); however, the prototype $\vec{p}_m$ in these methods is restricted to a discrete subset of the training samples. By removing this restriction on $\vec{p}_m$ and allowing it to take arbitrary values in $\mathbb{R}^N$, more discriminative and possibly fewer prototypes can be learned.

The function $f_m(\cdot)$ computes the instance-to-bag distances first, and classifies the bags using these distances. Although $f_m(\cdot)$ can take any suitable form, we opt to use the simple scaled and shifted sigmoid function, parameterized by $\beta_0$, $\beta_1$, and $\vec{p}_m$:

$$f_m(B) = \frac{2}{1 + e^{-(\beta_1 D(\vec{p}_m, B) + \beta_0)}} - 1. \qquad (3)$$

Since $F$ is an additive model, we use additive boosting to learn its base classifiers [27]. We call our algorithm multiple instance selection boosting, or MIS-Boost for short. One of the main reasons for choosing boosting for classification is its ability to select a suitable number of prototypes in a data-driven fashion. By performing cross-validation, not only does the classifier avoid overfitting on the training set, but it also automatically determines the number of base classifiers (i.e. the number of prototypes) needed to form $F$. Note that other instance selection methods predefine or fix the number of prototypes that are used.
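
As a rough illustration of Eqs. (1) to (3), the following Python sketch evaluates the instance-to-bag distance, a single base classifier, and the additive bag classifier. It is not the authors' implementation: the function names, the toy bags, and the parameter values ($\beta_0 = 2$, $\beta_1 = -4$) are hypothetical, and a zero total score is arbitrarily mapped to $+1$. The sigmoid of Eq. (3) is written as $\tanh(z/2)$, which is algebraically equal to $2/(1 + e^{-z}) - 1$ but avoids overflow for large $|z|$.

```python
import math


def euclidean(p, x):
    """Euclidean distance d(p, x) between two instances (sequences of floats)."""
    return math.sqrt(sum((pi - xi) ** 2 for pi, xi in zip(p, x)))


def instance_to_bag_distance(p, bag):
    """Eq. (2): distance from prototype p to the closest instance in the bag."""
    return min(euclidean(p, x) for x in bag)


def base_classifier(p, beta0, beta1, bag):
    """Eq. (3): scaled and shifted sigmoid of the instance-to-bag distance."""
    z = beta1 * instance_to_bag_distance(p, bag) + beta0
    # tanh(z / 2) == 2 / (1 + exp(-z)) - 1, but does not overflow for large |z|.
    return math.tanh(0.5 * z)


def bag_classifier(base_classifiers, bag):
    """Eq. (1): sign of the summed base classifier scores.

    `base_classifiers` is a list of (prototype, beta0, beta1) triples.
    A zero score is mapped to +1 (an arbitrary tie-breaking assumption).
    """
    score = sum(base_classifier(p, b0, b1, bag) for p, b0, b1 in base_classifiers)
    return 1 if score >= 0 else -1


# Hypothetical toy data: one prototype at the origin, two bags in R^2.
prototype = (0.0, 0.0)
near_bag = [(0.1, 0.0), (5.0, 5.0)]  # contains an instance close to the prototype
far_bag = [(3.0, 4.0), (6.0, 8.0)]   # closest instance is at distance 5
model = [(prototype, 2.0, -4.0)]     # beta1 < 0: a small distance gives a positive score

near_label = bag_classifier(model, near_bag)
far_label = bag_classifier(model, far_bag)
```

With a negative $\beta_1$, a bag that contains an instance near the prototype receives a positive score, which is one way to read the “existence” interpretation above; the sign and scale of $\beta_0$ and $\beta_1$ are learned in the actual method rather than fixed by hand.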
Among the many variants of boosting, we choose Gentle-AdaBoost for its numerical stability properties [27].

2.1 Learning base classifier $f_m$

At each iteration of Gentle-AdaBoost, a weighted least-squares problem must be solved (step 2(a), Algorithm 4 in [27]). In our formulation, the following error should be minimized:

$$\arg\min_{\vec{p}_m, \beta_0, \beta_1} \varepsilon_m \quad \text{where} \quad \varepsilon_m = \sum_{i=1}^{N} w_i \left( y_i - \frac{2}{1 + e^{-(\beta_1 D(\vec{p}_m, B_i) + \beta_0)}} + 1 \right)^2. \qquad (4)$$

Here, $w_i$ is the weight of the $i$th bag at the current iteration. The main difficulty in optimizing the cost function above is that the instance-to-bag distance term $D(\vec{p}_m, B_i)$ involves the non-differentiable “min” function. It is this same function that forces other instance selection methods (e.g. MILES and MILIS) to restrict the prototype search space to a subset of the training samples. For example, MILES considers all training samples as valid prototypes, which makes learning the classifier ($\ell_1$ SVM) computationally expensive. On the other hand, MILIS takes a brute-force approach to prototype selection by greedily choosing one instance from each training bag as a valid prototype. Although selection is done so that an overall classification cost is iteratively reduced, this selection strategy highly restricts the feasible prototype space.

To alleviate the problem of non-differentiability in our formulation, we replace “min” in $D(\vec{p}_m, B_i)$ with a differentiable approximation (known as “soft-min”) to form the soft instance-to-bag distance $\tilde{D}(\vec{p}_m, B_i)$. By setting $\alpha$ to a large positive constant, we have:

$$D(\vec{p}_m, B_i) \approx \tilde{D}(\vec{p}_m, B_i) = \sum_{j=1}^{n_i} \pi_j \, d(\vec{p}_m, \vec{x}_{ij}), \quad \text{where} \quad \pi_j = \frac{e^{-\alpha d(\vec{p}_m, \vec{x}_{ij})}}{\sum_{k=1}^{n_i} e^{-\alpha d(\vec{p}_m, \vec{x}_{ik})}}. \qquad (5)$$

Replacing $D(\vec{p}_m, B_i)$ with $\tilde{D}(\vec{p}_m, B_i)$ in Eq. (4) renders the cost function differentiable, allowing for gradient descent optimization.
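
To make the soft-min of Eq. (5) and the weighted error of Eq. (4) concrete, here is a small Python sketch. It is an illustration under stated assumptions, not the authors' code: the function names and the toy distances are hypothetical, the per-bag distances are assumed to be precomputed, and subtracting the smallest distance before exponentiating is a standard numerical-stability trick that leaves the weights $\pi_j$ unchanged.

```python
import math


def soft_min_distance(distances, alpha):
    """Eq. (5): weighted average of distances with pi_j proportional to exp(-alpha * d_j)."""
    d_min = min(distances)
    # Shifting by d_min does not change pi_j, but keeps the largest weight at 1
    # so the normalizer never underflows to zero for large alpha.
    weights = [math.exp(-alpha * (d - d_min)) for d in distances]
    total = sum(weights)
    return sum(w * d for w, d in zip(weights, distances)) / total


def weighted_error(bag_distances, labels, bag_weights, beta0, beta1):
    """Eq. (4), evaluated on given (hard or soft) instance-to-bag distances."""
    error = 0.0
    for dist, y, w in zip(bag_distances, labels, bag_weights):
        f = math.tanh(0.5 * (beta1 * dist + beta0))  # same value as Eq. (3)
        error += w * (y - f) ** 2
    return error


# Hypothetical distances from one prototype to the three instances of a bag.
toy_distances = [1.0, 2.0, 4.0]
mean_like = soft_min_distance(toy_distances, alpha=0.0)   # alpha = 0 gives the plain mean
min_like = soft_min_distance(toy_distances, alpha=50.0)   # large alpha approaches min = 1.0
```

Because the soft-min is a convex combination of the distances, it never falls below the true minimum; larger values of $\alpha$ tighten the approximation at the cost of sharper gradients.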
However, the resulting cost function is not convex, so there is a risk of settling into undesirable local minima. To alleviate this problem, we allow for multiple initializations of $\vec{p}_m$. Preferably, these initialization points should be sampled from the entire instance feature space. For this purpose, we cluster all the training instances using $k$-means, and use the cluster centers as initialization points for $\vec{p}_m$.

We minimize the cost in Eq. (4) using coordinate descent. We start by initializing $\vec{p}_m$ to a cluster center and optimize over the $(\beta_0, \beta_1)$ parameters. Then, we fix these parameters and optimize over $\vec{p}_m$. We iterate this procedure until convergence; that is, until the difference between successive errors becomes smaller than a given threshold. The overall algorithm used to learn a base classifier is summarized in Algorithm 1.

2.2 Determining the number of base classifiers

As the number of base classifiers increases, Gentle-AdaBoost tends to overfit on the training data. In order to prevent this and determine the number of base classifiers automatically, we perform 4-fold cross validation, whereby we randomly split the training data into 4 equal-size pieces and use 3 pieces for training and the rest for validation. We run the algorithm for a large number of base classifiers and pick the number which gives the least classification error on the validation set. We give the pseudo-code of MIS-Boost in Algorithm 2.

Algorithm 1 Learning $f_m$ (Pseudo-code for learning a base classifier; $\tilde{\varepsilon}_m$ denotes the error of Eq. (4) computed with the soft distance $\tilde{D}$)

Input: Training set $\{(B_1, y_1), (B_2, y_2), \ldots, (B_N, y_N)\}$, weights $w_i$, $i = 1, 2, \ldots, N$, cluster centers $\{c_1, c_2, \ldots, c_K\}$, error tolerance Tol.

Output: Base classifier $f_m(x)$

- for $\vec{p}^{\,0}_m = c_1, c_2, \ldots, c_K$ do (initialize $\vec{p}_m$ to each cluster center)
  - error$(-1) \leftarrow \infty$
  - $(\beta^0_0, \beta^0_1) \leftarrow \arg\min \tilde{\varepsilon}_m \,|\, (\vec{p}_m = \vec{p}^{\,0}_m)$ (fix $\vec{p}_m$ and minimize over the $\beta$'s)
  - error$(0) \leftarrow \tilde{\varepsilon}_m(\vec{p}^{\,0}_m, \beta^0_0, \beta^0_1)$
  - $t \leftarrow 0$
  - while $|\text{error}(t-1) - \text{error}(t)| \geq$ Tol do
    - $t \leftarrow t + 1$
    - $\vec{p}^{\,t}_m \leftarrow \arg\min_{\vec{p}_m} \tilde{\varepsilon}_m \,|\, (\beta_0 = \beta^{t-1}_0, \beta_1 = \beta^{t-1}_1)$ (fix $(\beta_0, \beta_1)$ and minimize over $\vec{p}_m$)
    - $(\beta^t_0, \beta^t_1) \leftarrow \arg\min \tilde{\varepsilon}_m \,|\, (\vec{p}_m = \vec{p}^{\,t}_m)$
    - error$(t) \leftarrow \tilde{\varepsilon}_m(\vec{p}^{\,t}_m, \beta^t_0, \beta^t_1)$
  - end while
  - Keep $(\vec{p}^{\,*}_m, \beta^*_0, \beta^*_1)$ with the least error so far
- end for
- Set $(\vec{p}_m, \beta_0, \beta_1) \leftarrow (\vec{p}^{\,*}_m, \beta^*_0, \beta^*_1)$, and output $f_m$

Algorithm 2 MIS-Boost (Pseudo-code for the MIS-Boost algorithm)

Input: Training set $\{(B_1, y_1), (B_2, y_2), \ldots, (B_N, y_N)\}$, maximum number of base classifiers $M$, number of clusters $K$

Output: Classifier $F(x)$

- Cluster all instances $x_{ij}$, $i = 1, 2, \ldots, N$; $j = 1, 2, \ldots, n_i$, into $K$ clusters. Cluster centers are $\{c_1, c_2, \ldots, c_K\}$.
- Split the training set into train-set and validation-set.
- Weights $w_i \leftarrow 1/N$ for $i = 1, 2, \ldots, N$, and $F(x) = 0$.
- for $m = 1, 2, \ldots, M$ do
  - Learn a base classifier $f_m$ using Algorithm 1.
  - Update $F(x) \leftarrow F(x) + f_m(x)$.
  - Update $w_i \leftarrow w_i e^{-y_i f_m(B_i)}$ and normalize the weights so that $\sum_i w_i = 1$.
  - Evaluate $F(x)$ on the validation-set, compute validation-error$(m)$.
- end for
- $M \leftarrow \arg\min_m$ validation-error$(m)$
- Output $F(x) = \operatorname{sign}\left( \sum_{m=1}^{M} f_m(x) \right)$

3 Experiments

In this section, we evaluate the performance of MIS-Boost on five different MIL benchmark datasets and two COREL image classification datasets. We compare our performance to those of the most recent and state-of-the-art MIL methods available for each dataset. In another experiment, we use MIS-Boost in a large-scale image classification task and visualize samples of the instances that are closest to the learned prototype(s).
The results of this experiment suggest that the learned prototypes are not only discriminative but also visually meaningful; that is, they are similar to the parts of the image that are relevant for classification.

3.1 Benchmark MIL datasets

The drug activity prediction datasets “Musk1” and “Musk2” described in [2], and the image datasets “Elephant”, “Fox”, and “Tiger” introduced in [1], have been widely used and have become standard benchmark datasets for MIL methods. For each dataset, we perform 10-fold cross validation and report the average per-fold test classification accuracy. This is the standard way of reporting results on these datasets. In all our experiments in this section and the two following sections, we set the number of clusters to $K = 100$, and the maximum number of base learners, or prototypes, to $M = 100$.

Table 1: Percent classification accuracies of MIL algorithms on benchmark MIL datasets. Best results are marked in bold.

| Method | Musk1 | Musk2 | Elephant | Fox | Tiger |
|---|---|---|---|---|---|
| MIS-Boost | **90.3** | **94.4** | **89.0** | **80.0** | 85.5 |
| MIForest [11] | 85 | 82 | 84 | 64 | 82 |
| MIGraph [28] | 90.0 | 90.0 | 85.1 | 61.2 | 81.9 |
| miGraph [28] | 88.9 | 90.3 | 86.8 | 61.6 | **86.0** |
| MILBoost [24] | 71 | 61 | 73 | 58 | 58 |
| EM-DD [3] | 84.8 | 84.9 | 78.3 | 56.1 | 72.1 |
| DD [23] | 88.0 | 84.0 | N/A | N/A | N/A |
| MI-SVM [1] | 77.9 | 84.3 | 81.4 | 59.4 | 84.0 |
| mi-SVM [1] | 87.4 | 83.6 | 82.0 | 58.2 | 78.9 |
| MILES [7] | 88 | 83 | 81 | 62 | 80 |
| MILIS [29] | 88 | 83 | 81 | 62 | 80 |
| MI-Kernel [22] | 88 | 89 | 84 | 60 | 84 |
| AW-SVM [30] | 86 | 84 | 82 | 64 | 83 |
| AL-SVM [30] | 86 | 83 | 79 | 63 | 78 |
| MissSVM [18] | 87.6 | 80.0 | N/A | N/A | N/A |

Table 2: Percent classification accuracies on the COREL-1000 and COREL-2000 datasets.

| Method | COREL-1000 | COREL-2000 |
|---|---|---|
| MIS-Boost | 84.2 | 70.8 |
| MILIS [29] | 83.8 | 70.1 |
| MILES [7] | 82.3 | 68.7 |
| MIForest [11] | 82 | 69 |

We report our results in Table 1, where we list the results of the most recent and state-of-the-art MIL methods. To the best of our knowledge, this table gives the most comprehensive comparison between MIL methods on the benchmark datasets. Clearly, MIS-Boost outperforms other methods in all datasets except the “Tiger” class.
There, we have the second best accuracy, only 0.5% below the top performing method, miGraph [28]. Among all the methods in Table 1, MIS-Boost is the most similar to MILES and MILIS, as they are also instance-selection-based methods. Except on “Musk1”, our algorithm significantly outperforms these two methods. We believe that this improvement is largely due to the fact that our method, in contrast to MILES and MILIS, does not restrict the prototypes to a subset of the training instances, as we discussed in Section 2.1.

3.2 COREL dataset

The COREL-2000 image classification dataset [7] contains 2000 images in 20 classes. COREL-1000 is a subset of this dataset, which contains the first 10 classes. We use the same features and experimental settings as in [7], and train one-vs-all MIS-Boost classifiers to deal with the multiclass case. The results of MIS-Boost and the three most recent state-of-the-art methods are given in Table 2. Our method outperforms the other methods on both datasets. To illustrate the data-driven nature of our algorithm, we give the number of prototypes learned per class in Figure 1.

3.3 PASCAL VOC 2007

The image categorization task of PASCAL VOC is inherently a MIL problem since the label of an image indicates the existence of at least one object of that label class within the image. However, to the best of our knowledge, no MIL results have been reported on this dataset. This is probably because of the large number of images ($10^4$) it contains. If we assume that each image has a few hundred instances, then the total number of instances is on the order of millions. Instance selection based methods like MILES would easily run into memory problems.

In this section, we evaluate the performance of MIS-Boost on this large-scale image classification dataset, and visualize the selected instances, i.e. those instances that are closest to the learned prototypes, to see if they overlap with the object(s) of interest.
To this end, we run MIS-Boost on three selected classes from the dataset: “aeroplane”, “bicycle”, and “tvmonitor”.

Figure 1: Number of base learners, or prototypes, per class as determined by MIS-Boost on the COREL-2000 dataset. (Plot: x-axis “classes”, 0 to 20; y-axis “number of prototypes automatically learned”, 0 to 100.)

To decide what type of instances (or features) to use, we did preliminary experiments with SIFT keypoints/descriptors [31] and regions obtained by the segmentation algorithm of [32]. Regions gave better results (0.55 average precision (AP); see footnote 2) than the SIFT descriptors (0.38 AP) on the “aeroplane” class, so we decided to use regions.

This dataset is not only huge but also unbalanced. The “aeroplane” class has 442 positive vs. 9518 negative images (bags); the “bicycle” class has 482 positive vs. 9458 negative; and “tvmonitor” has 485 positive vs. 9429 negative. This imbalance makes finding discriminative instances in the positive bags, among the clutter from the relatively large number of negative bags, quite challenging.

MIS-Boost yields an AP score of 0.55 for the “aeroplane” class. This score is significantly below the state-of-the-art (e.g. 0.76 in [33]) on that dataset. We believe that this discrepancy is largely due to the fact that MIL methods do not model the context (or the background), while [33] and other similar approaches do so. Although the MIL approach seems to be the most appropriate one for the PASCAL dataset (given how the ground-truth is formed), these results suggest that the context/background information is highly discriminative. Another reason for the discrepancy might be the instance features we use; namely, the segmentation might fail to capture the object or its parts. MIS-Boost yields an AP of 0.28 on “bicycle” (compared to 0.65 in [33]) and 0.36 on “tvmonitor” (compared to 0.52 in [33]).
Next, we visualize the selected instances in each image that are closest to the learned prototypes. Figure 2 gives examples of true positives from the “aeroplane” class. On each image, we show the top three instances, i.e. regions, that are closest to the top three prototypes learned by MIS-Boost. These regions make the highest contribution to the correct classification of their images. Similarly, Figure 3 and Figure 4 present the same for the “bicycle” and “tvmonitor” classes, respectively. As one can observe from the images, the most discriminative regions usually overlap with the object of interest. Occasionally, some wrong instances are selected, as shown in the lower-middle and lower-right images of Figure 4. Apparently, the “tvmonitor” classifier learned square-shaped or frame-shaped prototypes, and the window in the lower-middle image and the door in the lower-right image are good matches for this prototype.

We will make these challenging PASCAL datasets publicly available (online), in a MIL format, along with the code needed to visualize selected instances. We hope that these larger and more challenging datasets will be used to compare MIL methods in the future.

Footnote 2: This is the standard performance measure used in PASCAL VOC.

Figure 2: Example true positives from the “aeroplane” class (i.e. these images contain at least one instance of aeroplane). On each image, the three regions that are most similar to the top three prototypes learned by MIS-Boost are shown with yellow boundaries (best viewed in color).

Figure 3: Example true positives from the “bicycle” class. See the caption of Figure 2 for an explanation of the yellow boundaries (best viewed in color).

Figure 4: Example true positives from the “tvmonitor” class. See the caption of Figure 2 for an explanation of the yellow boundaries (best viewed in color).

4 Conclusion

We presented a new multiple instance learning (MIL) method that learns discriminative instance prototypes by explicit instance selection in a boosting framework. We argued that the following three design choices and/or assumptions restrict the capacity of a MIL method: (i) treating the prototype learning/choosing step and learning the final bag classifier independently, (ii) restricting the prototypes to a discrete set of instances from the training set, and (iii) restricting the number of selected-instances per bag. Our method, MIS-Boost, overcomes all three restrictions by learning prototype-based base classifiers that are linearly boosted. At each iteration of MIS-Boost, a prototype is learned so that it maximally discriminates between the positive and negative bags, which are themselves weighted according to how well they were discriminated in earlier iterations. The total number of prototypes and the number of selected-instances per bag are determined in a completely data-driven way. We showed that our method outperforms state-of-the-art MIL methods on a number of benchmark datasets. We also applied MIS-Boost to large-scale image classification, where we showed that the automatically selected prototypes map to visually meaningful image regions.

References

[1] S. Andrews, I. Tsochantaridis, and T. Hofmann, “Support vector machines for multiple-instance learning,” NIPS, pp. 561–568, 2003.
[2] T. G. Dietterich, R. H. Lathrop, and T. Lozano-Pérez, “Solving the Multiple-Instance Problem with Axis-Parallel Rectangles,” Artificial Intelligence, vol. 89, pp. 31–71, 1997.
[3] Q. Zhang and S. Goldman, “EM-DD: An improved multiple-instance learning technique,” NIPS, vol. 2, pp. 1073–1080, 2002.
[4] S. Cholleti, S. Goldman, and R. Rahmani, “MI-Winnow: A New Multiple-Instance Learning Algorithm,” International Conference on Tools with Artificial Intelligence, pp. 336–346, 2006.
[5] S. Vijayanarasimhan and K. Grauman, “Keywords to visual categories: Multiple-instance learning for weakly supervised object categorization,” CVPR, pp. 1–8, 2008.
[6] Z. Fu, A. Robles-Kelly, and J. Zhou, “MILIS: Multiple Instance Learning with Instance Selection,” IEEE TPAMI, vol. 33, no. 10, pp. 1–20, 2010.
[7] Y. Chen, J. Bi, and J. Z. Wang, “MILES: multiple-instance learning via embedded instance selection,” IEEE TPAMI, vol. 28, no. 12, pp. 1931–47, 2006.
[8] J. Foulds and E. Frank, “Revisiting multi-instance learning via embedded instance selection,” Lecture Notes in Computer Science, 2008.
[9] M. Stikic and B. Schiele, “Activity recognition from sparsely labeled data using multi-instance learning,” Location and Context Awareness, pp. 156–173, 2009.
[10] B. Babenko, M.-H. Yang, and S. Belongie, “Visual Tracking with Online Multiple Instance Learning,” IEEE TPAMI, pp. 983–990, 2010.
[11] C. Leistner, A. Saffari, and H. Bischof, “MIForests: Multiple-Instance Learning with Randomized Trees,” ECCV, pp. 29–42, 2010.
[12] P. Viola, J. C. Platt, and C. Zhang, “Multiple Instance Boosting for Object Detection,” in NIPS, 2007.
[13] C. Zhang and P. Viola, “Multiple-instance pruning for learning efficient cascade detectors,” in NIPS, 2008.
[14] A. Vezhnevets and J. Buhmann, “Towards weakly supervised semantic segmentation by means of multiple instance and multitask learning,” in CVPR, 2010, pp. 3249–3256.
[15] S. Ray and M. Craven, “Supervised versus multiple instance learning: An empirical comparison,” in International Conference on Machine Learning, 2005, pp. 697–704.
[16] J. Keeler, D. Rumelhart, and W. Leow, “Integrated segmentation and recognition of hand-printed numerals,” in NIPS, 1990, pp. 557–563.
[17] P.-M. Cheung and J. T. Kwok, “A regularization framework for multiple-instance learning,” International Conference on Machine Learning, no. 1, pp. 193–200, 2006.
[18] Z.-H. Zhou and J.-M. Xu, “On the relation between multi-instance learning and semi-supervised learning,” ICML, no. 1997, pp. 1167–1174, 2007.
[19] O. Maron and T. Lozano-Pérez, “A framework for multiple-instance learning,” in NIPS, 1998, pp. 570–576.
[20] R. Rahmani, S. A. Goldman, H. Zhang, S. R. Cholleti, and J. E. Fritts, “Localized content-based image retrieval,” IEEE TPAMI, vol. 30, no. 11, pp. 1902–12, 2008.
[21] J. Wang and J. Zucker, “Solving the multiple-instance problem: A lazy learning approach,” in ICML, no. 1994, 2000, pp. 1119–1126.
[22] T. Gartner, P. Flach, A. Kowalczyk, and A. Smola, “Multi-instance kernels,” in ICML, 2002, pp. 179–186.
[23] Y. Chen and J. Wang, “Image categorization by learning and reasoning with regions,” JMLR, vol. 5, pp. 913–939, 2004.
[24] P. Viola, J. C. Platt, and C. Zhang, “Multiple Instance Boosting for Object Detection,” in NIPS, 2007.
[25] Z. Fu, “Fast multiple instance learning via l1, 2 logistic regression,” ICPR, pp. 1–4, 2008.
[26] Z. Fu and A. Robles-Kelly, “An instance selection approach to Multiple Instance Learning,” in CVPR. IEEE Computer Society, 2009, pp. 911–918.
[27] J. Friedman, T. Hastie, and R. Tibshirani, “Additive Logistic Regression: A Statistical View of Boosting,” The Annals of Statistics, vol. 28, no. 2, pp. 337–407, 2000.
[28] Z.-H. Zhou, Y.-Y. Sun, and Y.-F. Li, “Multi-instance learning by treating instances as non-I.I.D. samples,” ICML, pp. 1–8, 2009.
[29] Z. Fu, A. Robles-Kelly, and J. Zhou, “MILIS: Multiple instance learning with instance selection,” IEEE TPAMI, pp. 958–977, Aug 2010.
[30] P. Gehler and O. Chapelle, “Deterministic annealing for multiple-instance learning,” in Proc. of the 11th Int. Conference on Artificial Intelligence and Statistics, M. Meila and X. Shen, Eds., March 2007, pp. 123–130.
[31] D. G. Lowe, “Distinctive image features from scale-invariant keypoints,” IJCV, no. 2, pp. 91–110, 2004.
[32] E. Akbas and N. Ahuja, “From Ramp Discontinuities to Segmentation Tree,” in Asian Conference on Computer Vision (ACCV'09). Xi'an, China: Springer, 2009, pp. 123–134.
[33] F. Perronnin, J. Sánchez, and T. Mensink, “Improving the Fisher Kernel for Large-Scale Image Classification,” in ECCV, vol. 6314. Springer Berlin / Heidelberg, 2010, pp. 143–156.
