The World is Either Algorithmic or Mostly Random

I will propose the notion that the universe is digital, not as a claim about what the universe is made of but rather about the way it unfolds. Central to the argument will be the concepts of symmetry breaking and algorithmic probability, which will b…

Authors: Hector Zenil

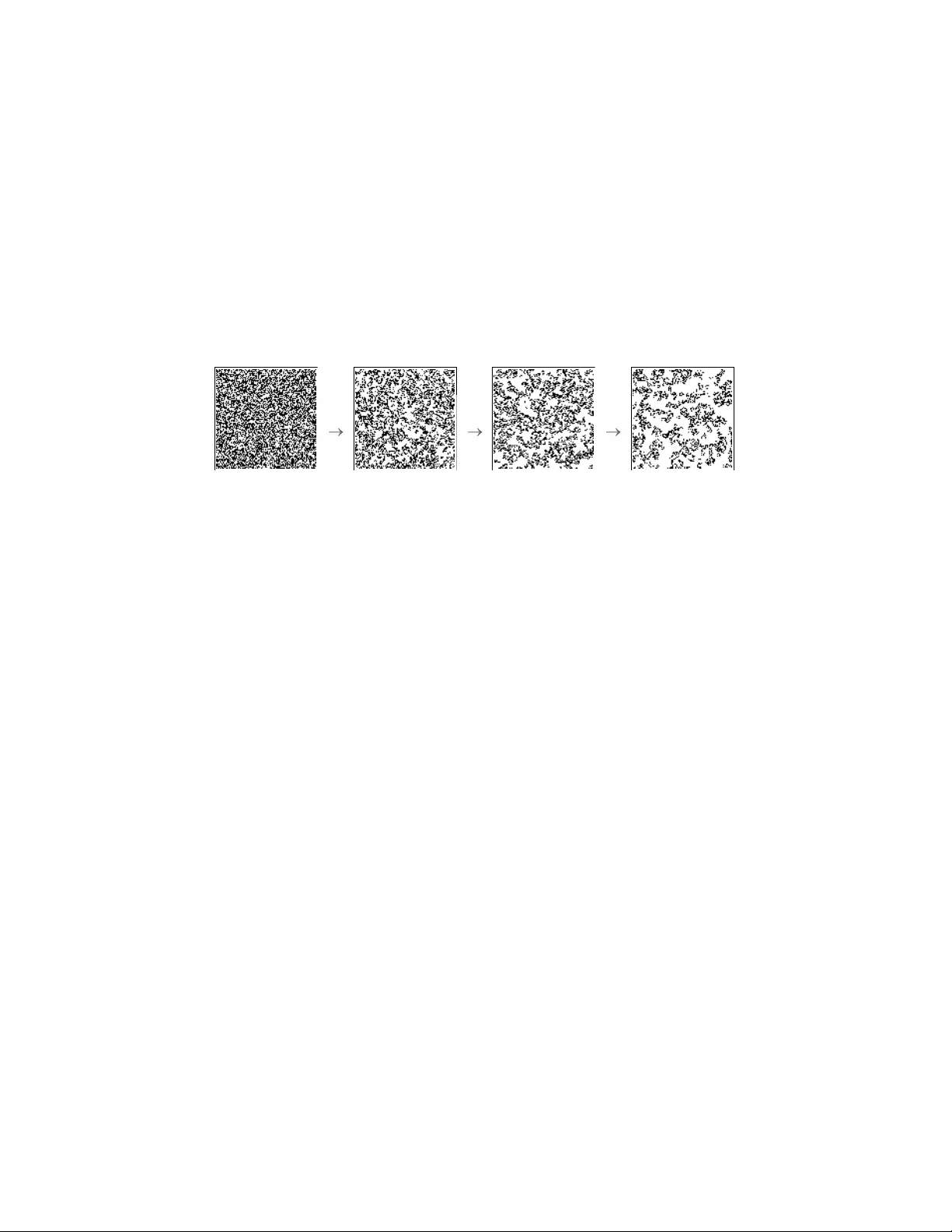

Third Prize Winning Essay, 2011 FQXi Contest "Is Reality Digital or Analog?"

Hector Zenil
IHPST, Université de Paris 1 – Panthéon-Sorbonne

Abstract

I will propose the notion that the universe is digital, not as a claim about what the universe is made of but rather about the way it unfolds. Central to the argument will be the concepts of symmetry breaking and algorithmic probability, which will be used as tools to compare the way patterns are distributed in our world to the way patterns are distributed in a simulated digital one. These concepts will provide a framework for a discussion of the informational nature of reality. I will argue that if the universe were analog, then the world would likely be random, making it largely incomprehensible. The digital model has, however, an inherent beauty in its imposition of an upper limit and in the convergence in computational power to a maximal level of sophistication. Even if deterministic, that it is digital doesn't mean that the world is trivial or predictable, but rather that it is built up from operations that at the lowest scale are very simple but that at a higher scale look complex and even random, though only in appearance.

1 Everything out of nothing

Among the simplest hypotheses compatible with the best account of the origin of the universe that we currently have, one can either choose to start from nothing, the state of the universe with all its matter and energy squeezed into an infinitely small point of no length, no width, and infinite density called a singularity; or else a fraction later, out of a state of complete disorder such that once particles formed they couldn't do anything except collide with each other in a completely disordered way.
In either case there had to have been a transition to the state in which we find ourselves today, in a universe with physical laws describing our reality, from the biggest to the tiniest, laws that are often simple enough to be easily comprehensible even if the phenomena they describe are complicated. The universe seems highly ordered and structured today, in contrast to the background noise left behind by the Big Bang, which is similar to what one would see on the screen of an old untuned analog TV (Fig. 1, left image) or hear on an untuned radio station. Given the random state physicists believe to have existed at the inception, embodying little to no information, how did we arrive at the structured world that we now inhabit?

Figure 1: From noise to highly organized structures. The cosmic background radiation (left), or what the universe looked like at all scales in every direction, and the kinds of structures (right) we find in our everyday life today.

Whether the universe began its existence as a single point, or whether its inception was a state of complete randomness, one can think of either the point or the state of randomness as quintessential states of perfect symmetry. Either no part had more or less information because there were no parts, or all parts carried no information, like white noise on the screen of an untuned TV. In such a state one would be unable to send a signal, simply because it would be destroyed immediately. But thermal equilibrium in an expanding space was unstable, so asymmetries started to arise and some regions now appeared cooler than others. The universe quickly expanded and began to produce the first structures.

When the universe had cooled to the point where the simplest atoms could form, white noise no longer dominated and matter took over, embarking on a process of structure formation. The first symmetry breaking led to more symmetry breaking.
This symmetry breaking can be found everywhere in the universe, from the great disparity between matter and antimatter (atoms with inverted charge particles) to the way planets rotate in a single direction around the sun; or in the form of what is today known as homochirality, groups of molecules that lack a configuration of non-superposable mirror images; and in living beings, all of whom share amino acids and sugars but genetically encoded so that each possesses one particular (arbitrary) molecular orientation rather than another. This symmetry breaking is the fabric of information. The laws of physics may have arisen in this way, not as agents shaping the universe but as a result of the unfolding of this dynamic of information processing from symmetry breaking.

1.1 Complexity from randomness

If you wished to produce the digits of the mathematical constant $\pi$ by throwing digits at random, you'd have to try again and again until you got a few consecutive numbers matching an initial segment of the decimal expansion of $\pi$. The probability of succeeding would be very small: $1/10$ raised to the power of the desired number of digits. For example, $(1/10)^{2400}$ for a segment of length 2400 digits of $\pi$. But if instead of throwing digits into the air, one were to throw bits of computer programs and execute them on a digital computer, things turn out to be very different. For example, the digits of $\pi$ would have a much greater chance of being produced by a randomly assembled computer program.
The following is an example of a program, written in ANSI C and only 158 characters long, that produces the first 2400 digits of $\pi$:

    int a=10000,b,c=8400,d,e,f[8401],g;main(){for(;b-c;)
    f[b++]=a/5;for(;d=0,g=c*2;c-=14,printf("%.4d",e+d/a),
    e=d%a)for(b=c;d+=f[b]*a,f[b]=d%--g,d/=g--,--b;d*=b);}

This program compresses the first 2400 digits of $\pi$, and there are many other formulae that can be implemented as short computer programs to generate any arbitrary number of digits of $\pi$.

Computer programs are like physical laws; they produce order by filtering out a portion of what one feeds them. Start with a random-looking string and run a randomly chosen program on it, and there's a good chance your random-looking string will be turned into a regular, often non-trivial, and highly organized one. In contrast, if you were to throw particles in a universe without physical laws, the chances that they'd group in the way they actually do would be so small that nothing would happen in our universe. Physical laws, like computer programs, make things happen.

Just as formulae producing the digits of $\pi$ are compressed versions of $\pi$, physical laws distill natural phenomena from a series of observations. These laws are valuable because thanks to them one can predict the outcome of a natural phenomenon without having to wait for it to unfold in real time. Solve the equations describing planetary motion and instead of having to wait two years to know the future positions of a planet, one can (almost¹) know them precisely and in a fraction of a second (the time it takes to compute the equation) two years in advance. Laws are always associated with calculations, and it is no coincidence that all these calculations turn out to be computable, whether the computations are carried out by humans or by computers. For all practical purposes physical laws are just like computer programs.
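
The contrast drawn in this section between throwing digits and throwing programs can be made concrete with a small calculation. The sketch below is only illustrative: it assumes digits are drawn uniformly from 0 to 9, and, as an assumption not made in the essay, that program characters are drawn uniformly from the 95 printable ASCII characters. The function names are hypothetical.

```python
import math

def log10_prob_random_digits(n_digits):
    """log10 of the chance that n uniformly random decimal digits match a given sequence."""
    return -n_digits * math.log10(10)

def log10_prob_random_program(n_chars, alphabet_size=95):
    """log10 of the chance of typing one specific program of n_chars characters.

    Assumption: characters are drawn uniformly from `alphabet_size` symbols
    (95 printable ASCII characters by default).
    """
    return -n_chars * math.log10(alphabet_size)

digits = log10_prob_random_digits(2400)    # hitting 2400 digits of pi directly
program = log10_prob_random_program(158)   # hitting the 158-character program

print(f"log10 P(2400 random digits match) = {digits:.1f}")
print(f"log10 P(158 random characters form the program) = {program:.1f}")
print(f"the program is about 10^{program - digits:.0f} times more likely")
```

Both probabilities are tiny, but under these assumptions the program route is favored by roughly two thousand orders of magnitude, and this counts only one of the many programs that print the same digits.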

¹ Although this is a whole subject unto itself, it may be pointed out here that the fact that our theories are approximate is due to the same symmetry breaking happening at all scales, making our predictions diverge in the long term. But it is this same phenomenon, one that may be associated with imperfection as opposed to perfect symmetry, that has continuously created information in the past and continues to do so still.

2 A bit-string Universe

When Leibniz started doing binary arithmetic, he thought that the world could have come into existence from nothing (zero) and one, because everything could be written in this language (the simplest possible language by number of symbols involved), the binary language. Out of this extremely simplified language, out of its beauty, the world came into being before his eyes.

If the world were digital at the lowest scale one would end up seeing nothing but strings of bits. They would have looked very random in the first seconds of existence of the universe, and they would suddenly have started displaying patterns equivalent to clusters of particles, as actually happened. Strings would have formed the equivalent of galaxies, bit-string galaxies, bit-string solar systems and bit-string planets, indeed all manner of bit-string things.

The existence of human-made digital computers in the universe is an obvious demonstration that the universe is capable of performing digital computation. The main questions therefore are whether this computation occurs naturally in the universe, how pervasive it is, and whether it is of the same kind as that performed by digital computers. In other words, how different is a bit-string universe from the universe in which we live? The bit-string version is of course an oversimplification, but the two may not, in the end, be that different.

For if all matter is made of the same basic particles, what makes one object different from another other than the fact that it occupies a different space? What makes a cup a cup and not a human being is quite simply the way its particles are configured. One could disassemble a cup (or several cups) and reassemble them as a human being. What makes a cup a cup and a human being a human being is information.

In an informational universe, the world would be computing itself, enabling things to remain themselves. Our digital computers would be reprogramming a part of the universe to make it compute what we want it to compute.

Figure 2: Patterns generated by a 2D 9-neighbor cellular automaton with Wolfram's rule number 40. Shown here are state space diagrams every 6 runtime steps, starting from a 100 × 100 array of (pseudo)random bits.

But at a microscopic level, the quantum world differs greatly from the macroscopic world. In the macroscopic world randomness is only apparent, according to this view (and ultimately according to classical mechanics), but it is fundamentally different under the standard interpretation of quantum mechanics. At the quantum scale, things seem disconnected, yet a compatible informational interpretation may be possible. Information can only exist in our world if it is carried by a process; every bit has to have a corresponding physical carrier. Even though this carrier is not matter, it takes the form of an interaction between components of matter, an atom interacting with another atom, or a particle interacting with another particle. But at the lowest level, the most elementary particles, just like single bits, carry no information (the Shannon entropy of a single bit is 0) when they are not interacting with other particles. They have no causal history because they are memoryless when isolated from external interaction.
When particles interact with other particles they link themselves to a causal network and look as if they were forced to define a value as a result of this interaction (e.g. a measurement). What surprises us about the quantum world is precisely its lack of the causality that we see everywhere else and are so used to. But it is the interaction and its causal history that carries all the memory of the system, with the new bit appearing to us as if it had been defined at random. Linking a bit to the causal network may also produce correlations of measurements between seemingly disconnected parts of space, but if space is informational at its deepest level, if information is even more fundamental than the matter of which it is made and the physical laws governing that matter, the question of whether these effects violate physical laws may be irrelevant.

As Charles Bennett[1] has pointed out, in a discrete universe information is never lost. If one throws a deck of cards into the air, they spread out as they fall, and the original order becomes unknowable once they are on the floor. But the information indicating the cards' exact original position and path to the floor is hidden in the air molecules that the cards displaced, the particles that the cards touched on their way to the floor, the particles touched by the particles touched by the cards, even the heat that all this friction between particles produced. Reversing every motion would restore the deck to its initial condition. If anything in the chain turns out to be random this basic principle collapses; how much the collapse would affect the world depends on how the world is connected at all scales. Whether there is a kind of garbage collector at the lowest level of the universe to erase this memory is yet to be discovered, but as Bennett points out, based on the principles of thermodynamics, this is not the case: information does not disappear.
If the world were analog, the way this information dissipates might allow information loss. But producing random bits in a discrete universe, where all events are the cause of other events, would actually be very expensive, because one would need to devise a way to break the causal network (assuming that this were possible to begin with), produce a random bit, and keep the rest of the causal network untouched (otherwise we would see nothing but randomness, which is not the case).

3 The algorithmic nature of the world

If one does not have any reason to choose a specific distribution and no prior information is available about a dataset, the uniform distribution is the one making no assumptions, according to the principle of indifference.

Consider an unknown operation generating a binary string of length $k$ bits. If the method is uniformly random, the probability of finding a particular string $s$ is exactly $2^{-k}$, the same as for any other string of length $k$, which is equivalent to the chances of picking the right digits of $\pi$. However, data (just like $\pi$, which is largely present in nature, for example in the form of common processes relating to curves) are usually produced not at random but by a process.

There is a measure which describes the expected pattern frequency distribution of an abstract machine running a random program. A process that produces a string $s$ with a program $p$ when executed on a universal Turing machine $T$ has probability $m(s)$[8] (identified as the miraculous universal distribution in [7]). For any given string $s$, there is an infinite number of programs that can produce $s$, but $m(s)$ is defined such that one can assign a probability to a string being produced by a random program (see the Appendix).

The distribution $m(s)$ has another interesting particularity: one can start out from almost anything and, as with most probability distributions, the distribution remains mostly unchanged.
It is the process that determines the shape of the distribution and not the initial conditions from which the programs may start. This is important because one does not make any assumption about the distribution of initial conditions, only about the distribution of programs. The programs run on a universal Turing machine should be uniformly distributed, which does not necessarily mean truly random. For example, to approach $m(s)$ from below, one can define a set of programs of a certain size and use any enumeration to systematically run each program one by one. This is important because it means one does not actually need true randomness, the kind of randomness assumed in quantum mechanics. So one does not really need quantum mechanics to explain the complexity of the world, or to underlie reality in order to explain it. One does require, however, computation, at least in this informational worldview. It is information that we think may explain some quantum phenomena, and not quantum mechanics that explains computation (nor the structures in the world and how it seems to unfold algorithmically), so we put computation at the lowest level underlying physical reality.

Just as strings can be produced by programs, we may ask after the probability of a certain outcome from a certain natural phenomenon, if the phenomenon, just like a computing machine, is a process rather than a random event. If no other information about the phenomenon is assumed, one can see whether $m(s)$ says anything about a distribution of possible outcomes in the real world. In a world of computable processes, $m(s)$ would indicate the probability that a natural phenomenon produces a particular outcome and how often a certain pattern would occur.
If you were going to bet against certain events in the real world, without having any other information, $m(s)$ would be a reasonable guide if the world were digital, just as $m(s)$ is for abstract (digital) machines.

Figure 3: Start out of nothing or out of randomness and one gets a structured world by iterated computation.

3.1 Unveiling the machinery

So if one wished to know whether the world were algorithmic in nature, one would first need to specify what an algorithmic world would look like. If the world is in any respect an algorithmic world, the structures in it should be alike, with their distributions of patterns resembling each other. To demonstrate this, we conceived and performed[16] a series of experiments to produce data by purely algorithmic means in order to compare it to sets of data produced by several physical sources.

On the one hand, samples from physical sources were taken. At the right level data can always be written in binary, because each physical observation leading to values (weight, location, etc.) can be enumerated independently as a discrete sequence. Each frequency distribution is the result of counting the number of occurrences of $k$-tuples (substrings of length $k$) extracted from the binary strings from both the empirical datasets and the digital datasets obtained from the output of digital machines. On the other hand, we produced an experimental version of $m(s)$ by running a large set of small Turing machines for which the halting times are known (thanks to the Busy Beaver problem).

The frequency distributions generated were then statistically compared (see [16]). A ranking correlation test was carried out and its significance measured to validate either the null hypothesis or the alternative (the latter being that the similarities among empirical data and the digital world are due to chance).
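
The comparison described above, counting $k$-tuples and then correlating the resulting rankings, can be sketched as follows. This is a minimal illustration and not the procedure of [16]: the sliding-window extraction, the lexicographic tie-breaking, and the choice of Spearman's coefficient as the ranking correlation are all assumptions, and the function names are hypothetical.

```python
from collections import Counter

def ktuple_counts(bits, k):
    """Count every length-k substring of a binary string (sliding window, an assumption)."""
    return Counter(bits[i:i + k] for i in range(len(bits) - k + 1))

def ranking(counts):
    """Order patterns from most to least frequent; ties are broken lexicographically."""
    return sorted(counts, key=lambda s: (-counts[s], s))

def spearman(rank_a, rank_b):
    """Spearman's rho between two rankings of the same set of items (no ties, n >= 2)."""
    n = len(rank_a)
    position_b = {s: i for i, s in enumerate(rank_b)}
    d_squared = sum((i - position_b[s]) ** 2 for i, s in enumerate(rank_a))
    return 1 - 6 * d_squared / (n * (n * n - 1))

# Two toy bit strings standing in for an "empirical" and a "digital" dataset.
empirical = "0101101001011010110100101101"
digital = "0101010101101010010101011010"

rank_e = ranking(ktuple_counts(empirical, 2))
rank_d = ranking(ktuple_counts(digital, 2))

# Spearman's rho needs both rankings to cover the same patterns.
if set(rank_e) == set(rank_d):
    print("rho =", spearman(rank_e, rank_d))
```

A value of rho near 1 means the two sources rank patterns in nearly the same order; a significance test on rho, omitted here, would then decide between the two hypotheses.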
Some similarities and differences between the physical world and the purely algorithmic world were found. To cite a typical example of a difference, in the study of the string symmetry group (see Appendix), strings of the type $(01)^n$ appear low-ranked in the empirical data distributions, unlike in the digital ones, where they appear better ranked. In the real world, just as processes don't start from a blank tape (to use a Turing machine as an analogy; see Appendix), strings are usually not delimited: one cannot really tell when a physical phenomenon has stopped, but rather when the measurement finished. In the real world, the probability of destroying highly symmetrical strings due to this lack of delimitation is higher than that of assembling a symmetrical string by changing bits at random. There is no way to tell when a process starts or ends in nature, and likewise there is no way to set measurements taking into account the "right" time lengths of empirical dataset streams.

In the simulated world things are different because machines have a halting configuration, such as a special halting state. And because in our simulated world machines have no interaction with any other machines, periodic and highly symmetric strings have a greater chance of making it intact, appearing better ranked in the ordering of strings from higher to lower frequency (hence lower to higher random complexity). To verify that this was actually the case we set up two other different experiments. One consisted in starting the machines from random initial conditions. Even if this does not fully simulate having processes interacting with other processes at every step, it is a setup closer to what happens in the real world, where computations usually start where other computations end.
The other experiment consisted of running non-self-delimited machines like one-dimensional cellular automata, which by definition do not have any halting configuration. Their computations could be halted at arbitrary times, resulting in strings of arbitrary length, just as would happen in most experiments in the real world, when one basically has to decide when to stop making a measurement in order to start a frequency analysis. What we found was what we were expecting to find: the highly structured $(01)^n$ string was ranked lower compared to the first simulation, and the longer the $n$ the less well ranked in comparison to the original experiment with halting machines, and closer to the rank of the distributions of empirical data.

What happens is that in the real world highly organized strings have little chance of making it if they interact with other systems. Changing a single bit destroys the perfect 2-period pattern of a $(01)^n$ string, and the longer the string, the greater the odds of it being destroyed by an interaction with another system or because it has been trimmed in the wrong place at the time of the measurement. In the case of halting Turing machines, however, strings are delimited by the code telling the machine to produce exactly $n$ alternations and halt.

We found that while the correlation between the real world dataset and the digital dataset was not significant enough to be conclusive, each distribution was correlated, with varying degrees of confidence, with at least one algorithmic distribution produced by a model of computation. In other words, distributions from empirical data disperse patterns in a similar way to the distributions generated by using digital computers (Turing machines, cellular automata and other abstract machines).

3.2 How different is our world from a simulated digital one?

To illustrate how natural processes may be algorithmic, and to specify what we really mean by this, the case of DNA could serve as a perfect example. Processes known to be involved in the replication and transmission of DNA are, among others, chromosomal translocation (a fragment of one chromosome is broken off and is then attached to another), reverse transcriptase, fragment code exchange and crossover, chemical annealing (pairing by hydrogen bonds to a complementary sequence) and DNA denaturation (separation into single-stranded lengths through the breaking of hydrogen bonding between the bases).

These are all relatively simple processes. A subset of purely digital operations can match such operations with computational ones. Operations such as joining, copying, partitioning, complementation, trimming, or replacing are equivalent to those that may be observed in DNA. Rules determining the way DNA replicates may also be of the same algorithmic type as those governing other types of physical phenomena, leading us to sometimes discover strong similarities in their tuple distributions. DNA construction, except perhaps for the mutation operation (the result of interaction with another system and not necessarily a true random operation), is the result of a long period of application of simple rules, with layer upon layer of the code of life built up over billions of years in a deep algorithmic process with its own characteristic rules, making things like protein unfolding appear highly complex to us.

The claim that empirical data can be treated as a whole as a distribution (i.e. the frequency of certain patterns against others), as if all empirical data were of the same nature, must of course be made with great circumspection. One would first need to show that there is a general joint distribution behind all sorts of empirical data. This was indeed something that we tested and reported[16].
The demonstration that most empirical data carries an algorithmic signal is that most data are comprehensible to some greater or lesser degree. Think of the diverse kinds of data that you store in your personal computer, whether music, images or text, all of them highly (losslessly) compressible.

One may wonder whether the lossless compressibility of data is in any sense an indication of the discreteness of the world. It is, and we have presented some material here that supports our answer. The relationship is actually strong; the chances of finding incompressible data in an analog world are much greater, simply because the possibilities for anything are much greater.

The essential difference between one world and the other is the introduction of actual infinity. An analog world means that one can divide space and/or time into an infinite number of pieces, and that matter and everything else may be capable of following any of these infinitely many paths and convoluted trajectories. It may seem possible to make a lot of sense out of it by way of the successful fields of differential calculus and mathematical analysis, yet one has to make a distinction between what symbols represent and what the actual calculations among the symbols are.

If our world is analog, it would have a greater chance of looking like a Chaitin $\Omega$, also called the halting probability, a number that is random by definition (see the Appendix) and that depends on the unpredictability of universal digital computers. The world may be a small, apparently ordered fragment of a globally random universe, as Calude and Meyerstein have suggested[3], in which case we should consider ourselves extremely lucky to live in a tiny, apparently ordered part.
Unlike some physicists who seem to think that a theory explaining the universe will ultimately be very complicated and mathematical (for example the views expressed by Steven Weinberg in a recent interview with Amir Aczel[11]), we think that the correlations found are due to the following reason: general physical processes are dominated by simple algorithmic rules, the same rules that digital computers are capable of carrying out. Our approach suggests that the information in the world is the result of processes resembling computer programs rather than of dynamics characteristic of a more random, or analog, world.

References

[1] Bennett, C.H. "Logical Depth and Physical Complexity" in Rolf Herken (ed.) The Universal Turing Machine: a Half-Century Survey. Oxford University Press, 227–257, 1988.

[2] Calude, C.S. Information and Randomness: An Algorithmic Perspective. (Texts in Theoretical Computer Science. An EATCS Series), Springer; 2nd edition, 2002.

[3] Calude, C.S. and Meyerstein, F.W. Is the universe lawful? Chaos, Solitons & Fractals 10, 6 (1999), 1075–1084.

[4] Chaitin, G.J. Algorithmic Information Theory. Cambridge University Press, 1987.

[5] Delahaye, J.-P. and Zenil, H. "On the Kolmogorov-Chaitin complexity for short sequences", in Calude, C.S. (ed.) Randomness and Complexity: from Leibniz to Chaitin. World Scientific, p. 343–358, 2007.

[6] Kolmogorov, A.N. Three approaches to the quantitative definition of information. Problems of Information and Transmission, 1(1): 1–7, 1965.

[7] Kirchherr, W. Li, M. The miraculous universal distribution. Mathematical Intelligencer, 1997.

[8] Levin, L. Laws of information conservation (non-growth) and aspects of the foundation of probability theory. Problems Inform. Transmission 10, 206–210, 1974.

[9] Lloyd, S. Programming the universe. Vintage, 2007.

[10] Rado, T. On noncomputable Functions. Bell System Technical J. 41, 877–884, May 1962.

[11] Pour La Science (French edition of Scientific American), No. 400, February 2011.

[12] Shannon, C.E. A Mathematical Theory of Communication. The Bell System Technical J. 27, 379–423 and 623–656, July and Oct. 1948.

[13] Solomonoff, R. A Preliminary Report on a General Theory of Inductive Inference. (Revision of Report V-131), Contract AF 49(639)-376, Report ZTB–138, Zator Co., Cambridge, Mass., Nov. 1960.

[14] Turing, A.M. On Computable Numbers, with an Application to the Entscheidungsproblem. Proceedings of the London Mathematical Society. 2, 42: 230–65, 1936, published in 1937.

[15] Wolfram, S. A New Kind of Science. Wolfram Media, 2002.

[16] Zenil, H. and Delahaye, J.-P. "On the Algorithmic Nature of the World" in Dodig-Crnkovic, G. and Burgin, M. (eds.) Information and Computation. World Scientific, 2010.

Appendix: Some basic theory

Turing machine

A Turing machine[14] is defined by a finite set of 5-tuples $\{s_i, k_i, s'_i, k'_i, d_i\}$, where $s_i$ is the tape symbol the machine's head is scanning at time $t$, $k_i$ the machine's current state (the instruction) at time $t$, $s'_i$ a unique symbol to write (the machine can overwrite a 1 on a 0, a 0 on a 1, a 1 on a 1, or a 0 on a 0) at time $t + 1$, $k'_i$ a state to transition into (which may be the same as the one it was already in) at time $t + 1$, and $d_i$ a direction to move in at time $t + 1$, either to the right ($R$) cell or to the left ($L$) cell, after writing. At a time $t$ the Turing machine produces an output described by the contiguous cells in the tape visited by the head. The machine halts if and when it reaches the special halt state 0.

A universal Turing machine is capable of reading the transition rules of any other machine and performing the same computation over any initial configuration of the tape.
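
The definition above, together with the idea of running every small machine to estimate output frequencies, can be sketched in a few lines. This is an illustrative toy and not the code used in [16]. It assumes a blank tape of 0s, start state 1, halt state 0, and that the cell the head lands on after its last move counts as visited. The step bound of 6 relies on the known Busy Beaver value $S(2) = 6$ for 2-state, 2-symbol machines; all function names are hypothetical.

```python
from collections import Counter
from itertools import product

HALT = 0  # the appendix reserves state 0 for halting

def run(rules, max_steps, start_state=1, blank=0):
    """Run a machine given as {(state, scanned): (write, next_state, move)}.

    Returns (output, steps) if the machine halts within max_steps, else None.
    The output is the contiguous run of cells visited by the head.
    """
    tape, pos, state, steps = {}, 0, start_state, 0
    lo = hi = 0
    while state != HALT and steps < max_steps:
        write, state, move = rules[(state, tape.get(pos, blank))]
        tape[pos] = write
        pos += 1 if move == "R" else -1
        lo, hi = min(lo, pos), max(hi, pos)
        steps += 1
    if state != HALT:
        return None
    return "".join(str(tape.get(i, blank)) for i in range(lo, hi + 1)), steps

def all_machines(n_states):
    """Enumerate every n-state 2-symbol machine (halt state not among the n)."""
    keys = [(k, s) for k in range(1, n_states + 1) for s in (0, 1)]
    actions = list(product((0, 1), range(0, n_states + 1), "LR"))
    for choice in product(actions, repeat=len(keys)):
        yield dict(zip(keys, choice))

def output_frequencies(n_states=2, max_steps=6):
    """Relative frequency of each output among the machines that halt."""
    counts = Counter()
    for rules in all_machines(n_states):
        result = run(rules, max_steps)
        if result is not None:
            counts[result[0]] += 1
    total = sum(counts.values())
    return {s: c / total for s, c in counts.items()}

# The 2-state busy beaver: writes four 1s and halts after six steps.
bb2 = {(1, 0): (1, 2, "R"), (1, 1): (1, 2, "L"),
       (2, 0): (1, 1, "L"), (2, 1): (1, 0, "R")}
print(run(bb2, max_steps=6))  # ('1111', 6)
```

Calling `output_frequencies()` runs all 20,736 two-state machines; sorting its result by frequency gives a small-scale analogue of the experimental distribution described in Section 3.1.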

The halting problem

One can ask whether there is a Turing machine $U$ which, given code($T$) and the input $s$, eventually stops and produces 1 if $T(s)$ halts, and 0 if $T(s)$ does not halt. Turing[14] proves that there is no such $U$.

The Busy Beaver game

We denote by $(n, 2)$ the class (or space) of all $n$-state 2-symbol Turing machines (with the halting state not included among the $n$ states). A busy beaver machine[10] is a Turing machine that writes more 1s on the tape than any other of the same size (number of states). If $\sigma_T$ is the number of 1s on the tape of a Turing machine $T$ upon halting, then:

$$\Sigma(n) = \max\{\sigma_T : T \in (n, 2),\ T(n) \text{ halts}\}.$$

If $t_T$ is the number of steps that a machine $T$ takes upon halting, then:

$$S(n) = \max\{t_T : T \in (n, 2),\ T(n) \text{ halts}\}.$$

$\Sigma(n)$ and $S(n)$ are noncomputable by reduction to the halting problem. Values are known for $(n, 2)$ with $n \le 4$.

Algorithmic complexity

The algorithmic complexity[13, 6, 8, 4] (also known as Kolmogorov-Chaitin complexity or program-size complexity) $C_U(s)$ of a string $s$ with respect to a universal Turing machine $U$, measured in bits, is defined as the length in bits of the shortest program $p$ that, when run on $U$, produces the string $s$ and halts. Formally,

$$C_U(s) = \min\{|p| : U(p) = s\}$$

where $|p|$ is the length of $p$ measured in bits.

Algorithmic complexity formalizes the concept of simplicity versus complexity. It opposes what is simple to what is complex or random.

Algorithmic probability

Levin's $m(s)$ is the probability of producing a string $s$ with a random program $p$ when running on a universal prefix-free Turing machine[8], that is, a machine for which a valid program is never the beginning of any other program, so that one can define a convergent probability the sum of which is at most 1. Formally,

$$m(s) = \sum_{p : U(p) = s} 2^{-|p|},$$

i.e. the sum over all the programs $p$ for which $U$ with $p$ outputs the string $s$ and halts.
$m(s)$ is the probability that the output of $U$ is $s$ when $U$ is provided with a sequence of fair coin flips as a program. $m$ is related to the concept of algorithmic complexity in that $m(s)$ is at least the maximum term in the summation over programs, which is $2^{-C(s)}$. Roughly speaking, algorithmic probability says that if there are many long descriptions of a certain string, then there is also a short description (low algorithmic complexity) and vice versa. As neither $C(s)$ nor $m(s)$ is computable, no program can exist which takes a string $s$ as input and produces $m(s)$ as output.

String symmetry group

The symmetry group of an object is the group of all isometries under which it is invariant. One can identify four symmetry-preserving transformations for bit strings: identity (id), reversion (re), complementation (co), and the composition of (re) and (co). A tool that makes it possible to count the number of discrete combinatorial objects of a given type as a function of their symmetrical cases is provided by Burnside's lemma, which gives the formula $(2^n + 2^{n/2} + 2^{n/2})/4$ for $n$ even, and $(2^n + 2^{(n+1)/2})/4$ otherwise.
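
The Burnside count can be checked by brute force for small $n$. The sketch below groups all bit strings of length $n$ into classes under reversal, complementation and their composition, then compares the number of classes with the closed form. It takes the three-term expression as the even-$n$ case and the two-term expression as the odd-$n$ case, which is the assignment the enumeration supports. Function names are hypothetical.

```python
from itertools import product

FLIP = str.maketrans("01", "10")

def orbit_count_brute_force(n):
    """Count classes of n-bit strings under reversal, complementation and both."""
    seen, orbits = set(), 0
    for bits in product("01", repeat=n):
        s = "".join(bits)
        if s in seen:
            continue
        orbits += 1
        comp = s.translate(FLIP)
        # Mark the whole orbit of s: identity, re, co, and re composed with co.
        seen.update({s, s[::-1], comp, comp[::-1]})
    return orbits

def orbit_count_burnside(n):
    """Closed form from Burnside's lemma for the same four-element group."""
    if n % 2 == 0:
        return (2**n + 2 * 2**(n // 2)) // 4
    return (2**n + 2**((n + 1) // 2)) // 4

for n in range(1, 9):
    print(n, orbit_count_brute_force(n), orbit_count_burnside(n))
```

For $n = 2$, for instance, the four strings fall into two classes, {00, 11} and {01, 10}, matching $(4 + 2 + 2)/4 = 2$.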
