Tech Report A Variational HEM Algorithm for Clustering Hidden Markov Models

The hidden Markov model (HMM) is a generative model that treats sequential data under the assumption that each observation is conditioned on the state of a discrete hidden variable that evolves in time as a Markov chain. In this paper, we derive a no…

Authors: Emanuele Coviello, Antoni B. Chan, Gert R.G. Lanckriet

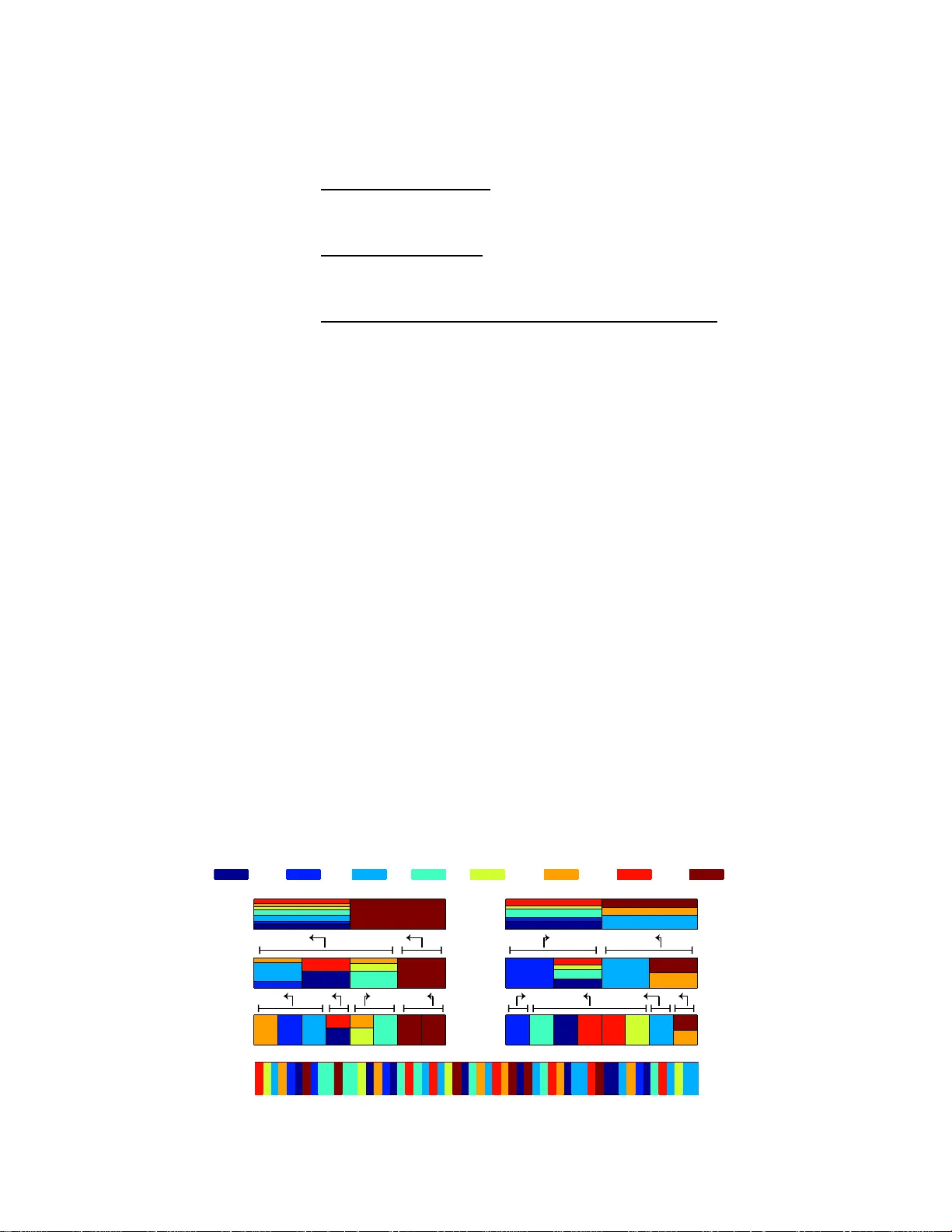

T ech Report A V ariational HEM Algorithm f or Cluste ring Hidden Markov Models Emanuele Coviello ∗ Departmen t of Electrical and Compute r Engineering University of California, San Diego 9500 Gilman Drive La Jolla, CA 92093 ecoviell@ucs d.edu Anotni B. Chan Departmen t of C omputer Science City University of Hong K on g T at Chee A venue, K owloon T ong, Hong K o ng abchan@cityu .edu.hk Gert R.G. Lanckriet Departmen t of Electrical and Compute r Engineering University of California, San Diego 9500 Gilman Drive La Jolla, CA 92093 gert@ece.ucs d.edu Abstract The hidden Markov model (HMM) is a g enerative model that treats sequential d ata under the assumption that each obser vation is conditioned o n the state of a discrete hidden variable th at ev olves in time as a Markov chain. In this paper, we derive a n ovel algorithm to cluster HMMs throug h their pro bability distributions. W e propo se a hierarchical EM algorithm that i) clusters a giv en collection of HMMs into groups of HMMs that are similar , in t erms of the distrib utions they represent, and ii) char acterizes each grou p by a “cluster center”, i.e., a n ovel HMM that is representative for the gro up. W e present several empirical studies that illustrate the benefits of the proposed algorithm. 1 Intr oduction The hidd en Markov m odel (HMM ) [1] is a probab ilistic model that assumes a sig nal is gen erated by a d ouble embedded stochastic proce ss. A h idden state pro cess, which e volves over discrete time instants as a Ma rkov chain, encodes the dynamics of the signal, and an observation p rocess, at each time co nditioned on th e curren t state, enco des the appeara nce of the signal. HMMs h av e been successfully applied to a variety of ap plications, including speech recognition [1], music analysis [2], on-line hand-wr iting recognition [3], analysis of biolog ical sequences [4]. The f ocus o f this paper is an algorithm for clustering HMMs. More p recisely , w e d esign a n algo rithm that, g i ven a collection of HMMs, partitio ns them into K clusters of “similar” HMMs, while also learning a rep resentative HMM “clu ster center” that sum marizes each cluster appropriately . This is ∗ http://acsweb .ucsd.edu/ ∼ ecoviell/ 1 similar to standard k-means clustering, e x cept that the data points are HMMs no w instead of vectors in R d . Such HMM clustering algorithm has v ar ious potential applications, ranging from hierarchical clustering of sequential data (e.g ., spee ch or motion sequences), over hier archical ind exing for fast retriev al, to re ducing the comp utational co mplexity of e stimating mixtur es of HMMs from large datasets via hierarch ical modelin g (e.g., to learn semantic annotation models for music and video). One possible approac h is to group HMMs in parameter space. Howe ver , as HMM parameter s lie on a non-lin ear manifold, they cannot be clustered by a simple application of the k-me ans algorithm, which ass umes real vectors in a Euclidean space . One solution, proposed in [5], fir st constructs an approp riate similarity matrix between a ll HMMs th at are to be clu stered (e.g. , based on the Bhat- tacharya affinity , which depen ds on the HMM parameters [6]), and then applies spectral clu stering. While this approach has pr oven successful to group HMMs into similar clusters [5], it d oes not allo w to generate novel HMMs as cluster centers. I nstead, one is limited to representing each cluster by one of the gi ven HMMs, e.g., the HMM which the spectral clustering procedu re maps the closest to each spectral clustering center . This may be suboptim al for v ar ious applications of HMM clustering. An alternative to clustering the HMMs in parameter space is to cluster them dir ectly with re spect to the pr obab ility distr ibutions they re present. T o cluster Gaussian probability distributions, V ascon- celos and Lipm ann [7] prop osed a hierar chical expectation -maximizatio n (HEM) alg orithm. This algorithm starts fr om a Gaussian mixtur e model (GMM) with K ( b ) compon ents and re duces it to another GMM with fe wer compon ents, where each of th e mixture components of the reduced GMM represents, i.e., cluster s , a group of the original Gaussian mixture components. More recently , Chan et al. [8] deriv ed an HEM algorithm to cluster dynamic textur e (DT) mo dels (i.e ., line ar dyn amical systems, LDSs) through their probability distributions. HEM has been ap plied successfully to con- struct GMM hier archies f or ef ficient image in dexing [9], to cluster vid eo r epresented by DTs [10], and to estimate GMMs or DT mixtur es (DTMs,i.e., LDS mixtures) from large datasets for semantic annotation of images [11], video [10] and music [12, 13]. T o extend the HEM framework for GMMs to mix tures of HM Ms (H3Ms), addition al margin aliza- tion of the h idden-state processes is requir ed, as for DTMs. Ho wever , wh ile Gaussians a nd DTs allow trac table inf erence in th e E-step of HEM, this is no longer the case for HMMs. Th erefore , in this work , we derive a variational formu lation of the HEM algorith m (VHEM) to clu ster HMMs throug h their probability distributions, based on a variational approx imation der iv ed by Her shey [14]. The resulting algorithm not o nly allows to cluster HMMs, it a lso learns novel HMMs th at are r epresentative centers of each cluster, in a way that is co nsistent with the und erlying gen erative probab ilistic model of the HMM. The resulting VHEM algorithm can be generalized to hand le o ther classes of gr aphical mo dels, fo r whic h stand ard HEM would other wise be intr actable, by leverag- ing similar variational approx imations. The efficacy of the VHEM-H3M algorithm is demo nstrated for various ap plications, includin g h ierarchical motion clusterin g, sem antic music ann otation, an d online hand-writin g recog nition. 2 The hidden Markov model A hidden Markov model ( HMM) M assumes a sequenc e of τ o bservations y 1: τ is g enerated b y a double e mbedde d stochastic p rocess, wh ere eac h ob servation y t at tim e t depend s on the state of a discrete hidd en variable x t and where the sequen ce of hid den states x 1: τ ev olves as a first ord er Markov pr ocess. The discrete variables can take o ne of N values, and the ev olution o f the hidd en process is encoded in a tra nsition matrix A = [ a β ,γ ] β ,γ =1 ,...,N whose entries are the state transition probab ilities a β ,γ = P ( x t +1 = γ | x t = β ) , an d an initial state distribution π = [ π 1 , . . . , π N ] , where π β = P ( x 1 = β ) . Each state gener ates ob servation accord ingly to an emission p robab ility density function , p ( y t | x t = β , M ) , which her e we assume to be a Gaussian mixture model: p ( y | x = β ) = M X m =1 c β ,m N ( y ; µ β ,m , Σ β ,m ) (1) where M is the nu mber of Gau ssian co mponen ts an d c β ,m are the mix ing weig hts. In the fo l- lowing, when r eferring to a sequen ces of length τ , we will use the notation π x 1: τ P ( x 1: τ ) = π x 1 Q τ t =2 a x t − 1 ,x t to represent the probability that the HMM gen erates the state sequ ence x 1: τ . The HMM is specified by the param eters M = { π , A, c β ,m , µ β ,m , Σ β ,m } which can be efficiently learned with the forward-b ackward algorithm [1], which is based on maximum likelihood. 2 A hidden Markov mixture model (H3M) [15] models a set observation sequences as samples from a group of K hidd en Markov models, which rep resent dif ferent sub-behaviors. For a gi ven sequence, an assignm ent variable z ∼ multin omial( ω 1 , · · · ω K ) selects the p arameters of one of the K HMMs, where the k − th H MM is selected with probability ω k . Each mixture componen t is parametrized by M z = { π z , A z , c z β ,m , µ z β ,m , Σ z β ,m } and the H3M is parame trized b y M = { ω z , M z } K z =1 . Given a collectio n S = { y 1 1: τ , . . . , y |S | 1: τ } of relevant observation sequen ces, the p arameters of M can be learned with recourse to the EM algorithm [15]. T o redu ce clutter , here we assume that all the HMMs have the same numb er of states N an d that all emission probab ilities ha ve M mixture compo nents, though a more general case could be deri ved. 3 V ariational hierar chical EM al gorithm f or H3Ms The hierar chical expectation maxim ization algor ithm (HEM) [ 7] was in itially propo sed to clu ster Gaussian distributions, by redu cing a GMM with a large nu mber of compon ents to a new GMM with fewer components, and then extended to dynamic te xture models [8]. In this section we d erive a variational formu lation of the HEM algorithm (VHEM) to cluster HMMs. 3.1 Formulation Let M ( b ) be a base hidde n Markov mixture m odel with K ( b ) compon ents. Th e g oal o f the VHEM algorithm is to find a r educed mixtur e M ( r ) with K ( r ) < K ( b ) compon ents that represent M ( b ) . The likelihood of a random sequence y 1: τ ∼ M ( b ) is giv en by p ( y 1: τ |M ( b ) ) = K ( b ) X i =1 ω ( b ) i p ( y 1: τ | z ( b ) = i, M ( b ) ) , (2) where z ( b ) ∼ multinomial( ω ( b ) 1 , · · · ω ( b ) K ( b ) ) is the hid den variable that indexes the mixtur e comp o- nents. p ( y 1: τ | z = i , M ( b ) ) is the likeli hood of y 1: τ under the i th mixture compon ent, and ω ( b ) i is the prior weight for the ith compon ent. The likelihood of the random sequence y 1: τ ∼ M ( r ) is p ( y 1: τ |M ( r ) ) = K ( r ) X j =1 ω ( r ) j p ( y 1: τ | z ( r ) = j, M ( r ) ) , (3) where z ( r ) ∼ m ultinomia l( ω ( r ) 1 , · · · , ω ( r ) K ( r ) ) is the hidden variable for indexing compo nents in M ( r ) . Note that we will always u se i and j to index the comp onents of th e b ase m odel, M ( b ) , and the reduc ed mo del, M ( r ) , respectively . I n ad dition, we will always use β and γ to ind ex th e hidden states of M ( b ) i and ρ and σ for M ( r ) j . T o reduce clutter we will denote p ( y 1: τ | z ( b ) = i, M ( b ) ) = p ( y 1: τ |M ( b ) i ) , and E y 1: τ |M ( b ) ,z ( b ) = i [ · ] = E M ( b ) i [ · ] . In a ddition, we will use short- hands M ( b ) i,β 1: τ and M ( r ) i,ρ 1: τ when conditionin g over spec ific state sequen ces. F o r exam ple, we denote p ( y 1: τ | x 1: τ = β 1: τ , M ( b ) i ) = p ( y 1: τ |M ( b ) i,β 1: τ ) , and E y 1: τ |M ( b ) i ,x 1: τ = β 1: τ [ · ] = E M ( b ) i,β 1: τ [ · ] . Finally , we will use m and ℓ for indexing the g aussian mixtu re compon ents of the emission pro babilities of the base respectiv ely reduced mixture, which we will denote as M ( b ) ,i β ,m and M ( r ) ,j ρ,ℓ . 3.2 Parameter estimation - a variational f ormulat ion T o obtain th e red uced mo del, we co nsider a set of N virtual samples drawn from the b ase mod el M ( b ) , such that N i = N ω ( b ) i samples are drawn fro m th e i th compo nent. W e deno te the set of N i virtual samples f or the i th com ponen t as Y i = { y ( i,m ) 1: τ } N i m =1 , where y ( i,m ) 1: τ ∼ M ( b ) i , and the entire set of N samples as Y = { Y i } K ( b ) i =1 . Note that, in this formulatio n, we are not generating virtual samples { x ( i,m ) 1: τ , y ( i,m ) 1: τ } acc ording to th e join t distribution of the base comp onent, p ( x 1: τ , y 1: τ |M ( b ) i ) . The reason is that the h idden state spaces o f each base mixtur e com ponen t M ( b ) i may have a different 3 representatio n, (e. g., , the num bering of the h idden states ma y be permu ted b etween th e com po- nents). T his ba sis mismatch will cause prob lems when the paramete rs of M ( r ) j are co mputed fr om virtual samples of the hidd en states of { M ( b ) i } . Instead, we must treat X i = { x ( i,m ) 1: τ } as “m issing” informa tion, as in the standard EM formu lation. The likelihood of the virtual samples is J ( M ( r ) ) = log p ( Y |M ( r ) ) = K ( b ) X i =1 log p ( Y i |M ( r ) ) = K ( b ) X i =1 log K ( r ) X j =1 ω ( r ) j p ( Y i |M ( r ) j ) . (4) In particu lar , f or a giv en M ( r ) , the co mputation of log p ( Y i |M ( r ) ) can be carried out solvin g the optimization prob lems [16, 17]: log p ( Y i |M ( r ) ) = max P i ( z i ) log p ( Y i |M ( r ) ) − D ( P i ( z i ) || P ( z i = j | Y i , M ( r ) )) (5) = max P i ( z i ) X j P i ( z i = j ) h log ω ( r ) j + log p ( Y i |M ( r ) j ) − log P i ( z i = j ) i (6) for i = 1 , . . . , K ( b ) , where P i ( z i ) ar e variational d istributions and D ( p k q ) = R p ( y ) log p ( y ) q ( y ) dy is the Kullback-L eibler (KL) divergence between two distributions, p and q . In orde r to ob tain a consistent clusterin g [7], we assum e the whole sample Y i is assigned to the same comp onent of the reduced model, i.e., P i ( z i = j ) = z ij , with P K ( r ) j =1 z ij = 1 , ∀ i and z ij ≥ 0 ∀ i, j , and (6) becomes: log p ( Y i |M ( r ) ) = max z ij X j z ij h log ω ( r ) j + log p ( Y i |M ( r ) j ) − log z ij i (7) Considering that virtu al samples Y i are independ ent for dif ferent v alues of i , we ca n solve (6) inde- penden tly for each i , using the result in Section 6.2, and find ˆ z ij = ω ( r ) j exp { N i E M ( b ) i h log p ( Y i |M ( r ) j ) i } P K ( r ) j ′ =1 ω ( r ) j ′ exp { N i E M ( b ) i h log p ( Y i |M ( r ) j ′ ) i } . (8) For the likelihood of the virtual samples p ( Y i |M ( r ) j ) we use log p ( Y i |M ( r ) j ) = N i X m =1 log p ( y ( i,m ) 1: τ |M ( r ) j ) = N i " 1 N i N i X m =1 log p ( y ( i,m ) 1: τ |M ( r ) j ) # (9) ≈ N i E M ( b ) i h log p ( y 1: τ |M ( r ) j ) i (10) where (10) follows from the law of large number s [7 ] (as N i → ∞ ). Substituting (10) in (8) we g et the formula for z ij derived in [7]: ˆ z ij = ω ( r ) j exp { N i E M ( b ) i h log p ( y 1: τ |M ( r ) j ) i } P K ( r ) j ′ =1 ω ( r ) j ′ exp { N i E M ( b ) i h log p ( y 1: τ |M ( r ) j ′ ) i } . (11) W e fo llow a similar appro ach to com pute the expected log -likelihoods E M ( b ) i h log p ( y 1: τ |M ( r ) j ) i . W e introdu ce variational d istributions P i,j β 1: τ to approximate P ( x 1: τ | y 1: τ , M ( r ) j ) for ob servations y 1: τ ∼ M ( b ) i emitted by state sequence β 1: τ , and solve the maximization problem E M ( b ) i h log p ( y 1: τ |M ( r ) j ) i = (12) = max P i,j X β 1: τ π ( b ) ,i x 1: τ E M ( b ) i,β 1: τ h log p ( y 1: τ |M ( r ) j ) − D ( P i,j β 1: τ || P ( x 1: τ | y 1: τ , M ( r ) j )) i (13) = max P i,j X β 1: τ π ( b ) ,i x 1: τ E M ( b ) i,β 1: τ " X ρ 1: τ P i,j β 1: τ ( x 1: τ = ρ 1: τ ) log π ( r ) ,j ρ 1: τ p ( y 1: τ |M ( r ) j,ρ 1: τ ) P i,j β 1: τ ( x 1: τ = ρ 1: τ ) # (14) 4 where in (13) we have used the law o f total pro bability to con dition the expectation over each state sequence β 1: τ of M ( b ) i In general, maximizing (14) exactly sets the v ariational distribution t o the true posterior and reduces (together with (11)) to the E-step of the HEM algorithm for hidden state models derived in [10]: E M ( b ) i h log p ( y 1: τ |M ( r ) j ) i = E M ( b ) i h E x 1: τ | ˆ M ( r ) j h log p ( x 1: τ , y 1: τ |M ( r ) j ) ii + ˜ H (15) where the inner exp ectation is taken with respe ct to the curr ent estimate ˆ M ( r ) j of M ( r ) j , and ˜ H is some term that does not depend on M ( r ) j . 3.3 The variational HEM f or HMMs The ma ximization of (14) cann ot be carried o ut in a efficient way , as it in volves computin g the expected log- likelihood of a m ixture. T o make it tractable we follow a variational app roximation propo sed by Hershey [14], and restrict the maximiza tion to facto red d istribution in th e form of a Markov chain, i.e., P i,j β 1: τ ( x 1: τ = ρ 1: τ ) = φ i,j ρ 1: τ | β 1: τ = φ i,j 1 ( ρ 1 , β 1 ) τ Y t =2 φ i,j t ( ρ t − 1 , ρ t , β t ) (16) where P N ρ =1 φ i,j 1 ( ρ 1 , β 1 ) = 1 ∀ β 1 and P N ρ =1 φ i,j t ( ρ t − 1 , ρ t , β t ) = 1 ∀ β t , ρ t − 1 . Substituting (16) into (14) we get a lower boun d to the e xpected log-likelihood (14), i.e., E M ( b ) i h log p ( y 1: τ |M ( r ) j ) i ≥ J i,j ( M ( r ) j , φ i,j ) ∀ φ i,j (17) where we have defined J i,j ( M ( r ) j , φ i,j ) = X β 1: τ π ( b ) ,i β 1: τ X ρ 1: τ φ i,j ρ 1: τ | β 1: τ log π ( r ) ,j ρ 1: τ exp E M ( b ) i,β 1: τ [log p ( y 1: τ |M ( r ) j,ρ 1: τ , )] φ i,j ρ 1: τ | β 1: τ . (18) Using th e pr operty of HMM with memory o ne th at ob servations at different time instants are ind e- penden t given the cor respond ing states, we ca n break th e expectation ter m in equatio n (1 8) in the following summation E M ( b ) i,β 1: τ [log p ( y 1: τ |M ( r ) j,ρ 1: τ )] = τ X t =1 L ( M ( b ) i,β t ||M ( r ) j,ρ t ) (19 ) where L ( M ( b ) i,β t ||M ( r ) j,ρ t ) = E M ( b ) i,β t [log p ( y t |M ( r ) j,ρ t )] . As th e emission pr obabilities are GMMs, th e computatio n (19) cannot be carried ou t efficiently . Hence, we use a variational approximation [18], and in troducte variational par ameters η ( i,β ) , ( j,ρ ) ℓ | m for ℓ , m = 1 , . . . , M , with P M ℓ =1 η ( i,β ) , ( j,ρ ) ℓ | m = 1 ∀ m , and η ( i,β ) , ( j,ρ ) ℓ | m ≥ 0 ∀ ℓ,m . I ntuitively , η ( i,β ) , ( j,ρ ) is the responsibility matrix between gau s- sian ob servation compon ents f or state β in M ( b ) i and state ρ in M ( r ) j , where η ( i,β ) , ( j,ρ ) ℓ | m means th e probab ility tha t an o bservation fr om c ompon ent m of M ( b ) i,β correspo nds to compo nent ℓ of M ( r ) j,ρ . Again, we obtain a lo wer bound: L ( M ( b ) i,β t ||M ( r ) j,ρ t ) ≥ L ( M ( b ) i,β t ||M ( r ) j,ρ t ) ∀ η ( i,β ) , ( j,ρ ) (20) where we have defined: L ( M ( b ) i,β t ||M ( r ) j,ρ t ) = M X m =1 c ( b ) ,i β ,m M X ℓ =1 η ( i,β ) , ( j,ρ ) ℓ | m h log c ( r ) ,j ρ,ℓ + L G ( M ( b ) ,i β ,m ||M ( r ) ,j ρ,ℓ ) − log η ( i,β ) , ( j,ρ ) ℓ | m i (21) where L G ( M ( b ) ,i β ,m ||M ( r ) ,j ρ,ℓ ) = E y |M ( b ) ,i β ,m [log P ( y |M ( r ) ,j ρ,ℓ )] can be computed exactly for Gaussians L G ( M ( b ) ,i β ,m ||M ( r ) ,j ρ,ℓ ) = − 1 2 d log 2 π + lo g Σ ( r ) j,ρ − 1 2 tr Σ ( r ) j,ρ − 1 Σ ( b ) i,β (22) − 1 2 ( µ ( r ) j,ρ − µ ( b ) i,β ) T Σ ( r ) j,ρ − 1 ( µ ( r ) j,ρ − µ ( b ) i,β ) . (23) 5 Plugging (21) into (19) and (18) we get the lower bou nd to the e xpected log-likelihood : E M ( b ) i h log p ( y 1: τ |M ( r ) j ) i ≥ J i,j ( M ( r ) , φ i,j , η ) ∀ φ i,j , η (24) where we have defined: J i,j ( M ( r ) j , φ i,j , η ) = X β 1: τ π ( b ) ,i β 1: τ X ρ 1: τ φ i,j ρ 1: τ | β 1: τ [log π ( r ) ,j ρ 1: τ + τ X t =1 L ( M ( b ) i,β t ||M ( r ) j,ρ t ) − log φ i,j ρ 1: τ | β 1: τ ] . (25 ) Maximizing the right h and side of (2 4) with respect to φ η finds the most accurate approx imation to the real posterior within the restricted class, i.e., the one that achiev es the tightest lo wer b ound. Finally , plugging (24) into (4), we obtain a lo wer bound on the log-likelihood of the virtual sample: J ( M ( r ) ) ≥ J ( M ( r ) , z , φ , η ) ∀ z , φ , η (26) where we have defined: J ( M ( r ) , z , φ , η ) = K ( b ) X i =1 K ( r ) X j =1 z ij log ω ( r ) j − K ( b ) X i =1 K ( r ) X j =1 z ij log z ij + K ( b ) X i =1 K ( r ) X j =1 z ij N i S X β =1 π ( b ) ,i β 1: τ S X ρ =1 φ i,j ρ | β log π ( r ) ,j ρ 1: τ + K ( b ) X i =1 K ( r ) X j =1 z ij N i S X β =1 π ( b ) ,i β 1: τ S X ρ =1 φ i,j ρ 1: τ | β 1: τ τ X t =1 L ( M ( b ) i,β t ||M ( r ) j,ρ t ) − K ( b ) X i =1 K ( r ) X j =1 z ij N i S X β =1 π ( b ) ,i β 1: τ S X ρ =1 φ i,j ρ 1: τ | β 1: τ log φ i,j ρ 1: τ | β 1: τ (27) T o find the tightest possible lo wer bound to the log-likelihood of the virtual sample we need to solve J ( M ( r ) ) ≥ max z , φ , η J ( M ( r ) , z , φ , η ) . (28) Starting from an initial guess fo r M ( r ) , the parameter s can be estimated by maximizing (28) irtera- ti vely with respect to ( E-step ) η , φ , z an d ( M-step ) M ( r ) 3.4 E-step The E-steps first considers the maximization of (28) with respect to η for fixed M ( r ) z a nd φ , i.e., ˆ η = arg max η J ( M ( r ) , z , φ , η ) (29) It can be e asily verified that the m aximization (29) does n ot d epend on z an d φ can b e carried out indepen dently for each tuple ( i, j, β , ρ, m ) using the resu lt in Section 6.2 [18], which giv es: ˆ η ( i,β ) , ( j,ρ ) ℓ | m = c ( r ) ,j ρ,ℓ exp n L G ( M ( b ) ,i β ,m ||M ( r ) ,j ρ,ℓ ) o P M ℓ ′ =1 c ( r ) ,j ρ,ℓ ′ exp n L G ( M ( b ) ,i β ,m ||M ( r ) ,j ρ ′ ,ℓ ) o (30) and that the terms in (21) can then be compu ted for each ( i, j, β , ρ ) as: L ( M ( b ) i,β ||M ( r ) j,ρ ) = M X m =1 c ( b ) ,i β ,m log M X ℓ =1 c ( r ) ,j ρ,ℓ exp n L G ( M ( b ) ,i β ,m ||M ( r ) ,j ρ,ℓ ) o . (31) Next, (28) is maximized with respect to φ fo r fixed ( M ( r ) z and η ) , i.e. , ˆ φ = arg ma x φ J ( M ( r ) , z , φ , η ) (32) 6 The maxim ization does no t depend s on z and can be carr ied out in depend ently for each pair ( i, j ) with a backward recursion recursion [14] that computes ˆ φ i,j t ( ρ t − 1 , ρ t , β t ) = a ( r ) ,j ρ t − 1 ,ρ t exp n L ( M ( b ) i,β t ||M ( r ) j,ρ t ) + L i,j t +1 ( β t , ρ t ) o P ρ a ( r ) ,j ρ t − 1 ,ρ exp n L ( M ( b ) i,β t ||M ( r ) j,ρ ) + L i,j t +1 ( β t , ρ ) o (33) L i,j t ( ρ t − 1 , β t − 1 ) = N X β =1 a ( b ) ,i β t − 1 ,β log N X ρ =1 a ( r ) ,j ρ t − 1 ,ρ exp n L ( M ( b ) i,β ||M ( r ) j,ρ ) + L i,j t +1 ( β , ρ ) o (34) for T = τ , . . . , 2 , where it is und erstood t hat L i,j τ +1 ( β t , ρ t ) = 0 , and terminates with ˆ φ i,j 1 ( ρ 1 , β 1 ) = π ( r ) ,j ρ 1 exp n L ( M ( b ) i,β 1 ||M ( r ) j,ρ 1 ) + L i,j 2 ( β 1 , ρ 1 ) o P ρ π ( r ) ,j ρ exp n L ( M ( b ) i,β 1 ||M ( r ) j,ρ ) + L i,j 2 ( β 1 , ρ ) o (35) J i,j ( M ( r ) j , ˆ φ i,j , η ) = N X β =1 π ( b ) ,i β log N X ρ =1 π ( r ) ,j ρ exp n L ( M ( b ) i,β ||M ( r ) j,ρ ) + L i,j 2 ( β , ρ ) o . (36) Next, the maximization of (28) with respect to z f or fixed M ( r ) φ and η , i.e., ˆ z = argma x z J ( M ( r ) , z , φ , η ) ( 37) reduces to compu te the ˆ z ij as in (11) using (36) to appro ximate (10). Finally , we compute the following summary statistics: ν i,j 1 ( σ , γ ) = π ( b ) ,i γ ˆ φ i,j 1 ( σ , γ ) (38) ξ i,j t ( ρ, σ , γ ) = N X β =1 ν i,j t − 1 ( ρ, β ) a ( b ) ,i β ,γ ˆ φ i,j t ( ρ, σ , γ ) for t = 2 , . . . , τ (39) ν i,j t ( σ , γ ) = N X ρ =1 ξ i,j t ( ρ, σ , γ ) for t = 2 , . . . , τ (40) and the aggregates ˆ ν i,j 1 ( σ ) = N X γ =1 ν i,j 1 ( σ , γ ) (41) ˆ ν i,j ( σ , γ ) = τ X t =1 ν i,j t ( σ , γ ) (42) ˆ ξ i,j ( ρ, σ ) = τ X t =2 N X γ =1 ξ i,j t ( ρ, σ , γ ) . (43) The quan tity ν i,j t ( σ , γ ) is the responsibility between state γ o f the HMM M ( b ) i and state σ of th e HMM M ( r ) j at time t , when m odeling a sequen ce generated by M ( b ) i . Similarly , the q uantity ξ i,j t ( ρ, σ , γ ) is th e r esponsibility be tween a transition fro m state ρ to state σ (reached at time t ) for the HMM M ( r ) j and state γ (a t time t ) of the HMM M ( b ) i , when mod eling a sequence genera ted by M ( b ) i . Consequen tly , the statistic ˆ ν i,j 1 ( σ ) is the expected nu mber of times that the HM M M ( r ) j starts fr om state σ , when mo deling sequenc es g enerated b y M ( b ) i . The quan tity ˆ ν i,j ( σ , γ ) is the expected numbe r of times th at the HMM M ( b ) i is state γ wh en the HMM M ( r ) j is in state σ , w hen both modelin g sequences genera ted by M ( b ) i . Finally , the quantity ˆ ξ i,j ( ρ, σ ) is the expected number of tr ansitions from state ρ to state σ o f the HM M M ( r ) j , when modeling sequences genera ted by M ( b ) i . 7 3.5 M-step The M-steps in volv es maximizing (28) with respec t to M ( r ) for fixed z φ and η , i.e. , ˆ M ( r ) = arg ma x M ( r ) J ( M ( r ) , z , φ , η ) . (44) In the following, we detail the update ru les for the parameters of the reduced model M ( r ) . 3.5.1 HMMs mixture weights The re-estimation of the mixtur e weights, given the con straint P K ( r ) j =1 ω ( r ) j = 1 , is so lved using the result in Section (6.1): ω ( r ) j ∗ = P K ( b ) i =1 ˆ z i,j K ( b ) . (45) 3.5.2 Initial state probabilities The cost fu nction (28) factors in depend ently for ea ch { π ( r ) ,j σ } N σ =1 ( j is fixed) and reduc es to terms in the form: J ( M ( r ) , z , φ , η ) = N X σ =1 K ( b ) X i =1 ˆ z i,j N i ˆ ν i,j 1 ( σ ) log π ( r ) ,j σ . (46) Considering the constraint P N σ =1 π ( r ) ,j σ = 1 , the results in Section 6.1 gives the update formulas π ( r ) ,j σ ∗ = P K ( b ) i =1 ˆ z i,j N i P N γ =1 ˆ ν i,j 1 ( σ ) P N σ ′ =1 P K ( b ) i =1 ˆ z i,j N i P N γ =1 ˆ ν i,j 1 ( σ ′ ) . (47) 3.5.3 State transition probabilities Similarly , the co st function (2 8) factors indepe ndently for each { a ( r ) ,j ρ,σ } N σ =1 ( j and ρ are fixed) and reduces to terms in the form: J ( { a ( r ) ,j ρ,σ } N σ =1 , z , φ , η ) = N X σ =1 K ( b ) X i =1 ˆ z i,j N i ˆ ξ i,j ( ρ, σ ) log a ( r ) ,j ρ,σ (48) Considering the constraint P N σ =1 a ( r ) ,j ρ,σ = 1 , the results in Section 6.1 gives the update form ula a ( r ) ,j ρ,σ ∗ = P K ( b ) i =1 ˆ z i,j N i ˆ ξ i,j ( ρ, σ ) P N σ ′ =1 P K ( b ) i =1 ˆ z i,j N i P τ t =2 ˆ ξ i,j ( ρ, σ ′ ) (49) 3.5.4 Eission probability density functions In gene ral, in the cost functio n (28) factors ind ependen tly f or e ach j, ρ, ℓ , and red uces to ter ms in the form: J ( M ( r ) ,j ρ,ℓ , z , φ , η ) = K ( b ) X i =1 ˆ z i,j N i N X γ =1 ˆ ν i,j ( ρ, γ ) M X m =1 c ( b ) ,i β ,m ˆ η ( i,β ) , ( j,ρ ) ℓ | m log c ( r ) ,j ρ,ℓ + L G ( M ( b ) ,i β ,m ||M ( r ) ,j ρ,ℓ ) (50) Using basic matrix calculus, and defining a weighted sum operator Ω j,ρ,ℓ ( x ( i, β , m )) = X i ˆ z i,j N i X β ˆ ν i,j t ( ρ, β ) M X m =1 c ( b ) ,i β ,m x ( i, β , m ) (51) 8 the parameters M ( r ) are updated accordin gly to: c ( r ) ,j ρ,ℓ ∗ = Ω j,ρ,ℓ ˆ η ( i,β ) , ( j,ρ ) ℓ | m P M ℓ ′ =1 Ω j,ρ,ℓ ′ ˆ η ( i,β ) , ( j,ρ ) ℓ ′ | m (52) µ ( r ) ,j ρ,ℓ ∗ = Ω j,ρ,ℓ η ( i,β ) , ( j,ρ ) ℓ | m µ ( b ) ,i β ,m Ω j,ρ,ℓ ˆ η ( i,β ) , ( j,ρ ) ℓ | m (53) Σ ( r ) ,j ρ,ℓ ∗ = Ω j,ρ,ℓ ˆ η ( i,β ) , ( j,ρ ) ℓ | m h Σ ( b ) ,i β ,m + ( µ ( b ) ,i β ,m − µ ( r ) ,j ρ,ℓ )( µ ( b ) ,i β ,m − µ ( r ) ,j ρ,ℓ ) t i Ω j,ρ,ℓ ˆ η ( i,β ) , ( j,ρ ) ℓ | m (54) Equation s (52-54) are all weighted av erages over all base models, model states, and Gaussian com- ponen ts. 4 Experiments In th is section, we pr esent th ree em pirical studies of the VHEM-H 3M algor ithm. Each ap plication exploits some of th e ben efits of VHE M. First of all, instead of clusterin g HM Ms on the pa rameter manifold , VHEM-H3M clu sters HMMs direc tly throug h the distributions they represent. Gi ven a collection of input HMMs, VHEM estimates a smaller mixtur e of novel HMMs that consistently models the distribution r epresented by th e input HMMs. This is achieved by maximizing the log- likelihood of “v irtual” samples gen erated from th e inpu t H MMs. As a r esult, the VHEM cluster centers are consistent with the underlyin g gener ativ e proba bilistic frame work. Second, VHEM allows to estimate models from large-scale data sets, by break ing the learning prob- lem into smaller pieces. First, a data set is sp lit into small non-overlapping portion s and intermediate models are learned fr om each p ortion. Th en, the final m odel is estima ted from the in termediate mod- els using the VHEM -H3M algorithm . While based on the same max imum-likelihoo d principles as direct EM estimation o n the full data set, this VHEM estimation pr ocedur e has sign ificantly lower memory req uirements, since it is no lon ger requ ired to store the en tire data set du ring paramete r estimation. I n ad dition, since the interm ediate mod els are estimated indep endently of each other, this estimation task can easily be parallelized. Lastly , the “virtual” samples (i.e., sequences) VHEM implicitly generates for maximum-likeliho od estimation need not be of the same length as the actual input data for estimating the intermediate models. Making the virtual sequence s relativ ely short will positively impact the run time of each VHEM iteration. Th is may be achieved without loss of mod- eling accuracy , as VHEM a llows to comp ensate for shor ter virtua l training sequences b y imp licitly integrating ov er a virtually unlimited number of them. 4.1 Hierarchical motion clustering 1 2 Level 4 Level 3 Level 2 1 2 Level 4 1 2 3 4 1 2 3 4 Level 3 1 2 3 4 5 6 7 8 1 2 3 4 5 6 7 8 Level 2 5 10 15 20 25 30 35 40 45 50 55 Level 1 walk 1 jump run jog basket soccer walk 2 sit VHEM algorithm PPK-SC [2] Figure 1: Hierarch ical clustering of the MoCap dataset, with VHEM and SC-PPK. 9 In this experiment we tested the VHEM alg orithm on hierarch ical motion clustering, using the Mo- tion Capture da taset (http://mocap .cs.cmu.e du/ ), which is a co llection of time- series data rep resent- ing human motions. In particular, we start from K 1 = 56 motion e xamples form 8 different classes, and learn a HMM for each of them, form ing the first le vel of the hierarchy . A tree-structure is formed by successiv ely cluster ing HMMs with the VHEM algo rithm, an d u sing th e learn ed cluster ce nters as th e representa ti ve HMMs at the new level. The seco nd, third and fo urth levels o f th e hierarchy correspo nd to, respecti vely , K 2 = 8 , K 3 = 4 and K 4 = 2 . The hierar chical clustering ob tained with VHEM is illu strated in Fig ure 1 (lef t). In the fir st level, each vertical b ar rep resents a motion seq uence, with different colors indicating d ifferent gro und- truth classes. I n th e second level, the 8 HMM clusters ar e shown with vertical b ars, with the co lors indicating the p ropor tions of th e mo tion classes in the cluster . Almost all clu sters are p opulated b y examples fr om a single m otion class (e.g., “ run”, “jo g”, “jump”), which dem onstrates that VHE M can gro up similar motio ns tog ether . W e n ote an error of the VHEM in clustering a portion of the “soccer” examples with “basket”. Movin g up the hier archy , th e VHEM algorith m clusters similar motions classes together (as indicated by the arrows), and at the last (Le vel 4) it creates a dichotom y between the “sit” and the rest of the motion classes. This is a desirable beha vior as the a the kinetics of the “sit” sequences (i.e., sitting on a stoo l and going down) are co nsiderably different fo rm th e rest. On the right o f Figure 1, th e same exper iment is r epeated usin g spec tral clusterin g in tand em with PPK similarity (SC-PPK) [5]. The SC-PPK clusters motions sequences properly , howe ver it in- correctly aggregates th e “sit” and “soccer”, and produces a last le vel (Level 4 ) not well interpretab le. While VHEM has lower R and-ind ex than SC-PPK at Le vel 2 ( 0 . 940 vs. 0 . 973 ) , it has higher Rand- index at Level 3 ( 0 . 775 vs. 0 . 73 7 ) and L ev el 4 ( 0 . 5 91 vs. 0 . 568 ). T his su ggests that the novel HMMs cluster centers learned by VHEM retain more inform ation that t he spectral cluster centers. annotation retrie val P R F MAP AR OC P@10 HEM-H3M 0.470 0.210 0.258 0.438 0.700 0.450 EM-H3M 0.415 0.214 0.248 0.423 0.704 0.422 HEM-DTM 0.430 0.202 0.252 0.439 0.701 0.453 T able 1: Ann otation and retriev al perfo rmance on CAL500, for VHEM-H3M , EM-H3M and HEM-DTM[ 13] classification VHEM-H3M EM-H3M τ = 5 0.569 0.349 τ = 10 0.570 0.389 τ = 15 0.573 0.343 T able 2: Online hand- writing clas- sification accuracy (20 characters) 4.2 A utoma tic music tagging In this exper iment we ev alua ted VHEM-H3M on au tomatic music tagg ing. W e con sidered the CAL500 collection f orm Barrington et al. [12], wh ich co nsists in 5 02 so ngs an d pr ovides binary annotation s with respect to a vocabulary V of 149 tags, rangin g from ge nre and instrumen tation, to mood and usage . T o represen t the acoustic co ntent of a son g we extrac t a time series of au dio fea- tures Y = { y 1 , . . . , y T } , by computin g the first 1 3 Mel freque ncy cepstral coefficients (MFCC) [1] over half-overlapp ing windows of 92 ms of audio signal, au gmented with first and seco nd d eriv a ti ves. Automatic music tag ging is fo rmulated as a supervised multi-class labeling p roblem [1 1], where each class is a tag f rom V . W e mo del tags with H3M pro bability distributions over the sp ace of audio f ragments (e.g. , sequences of τ = 12 5 audio fe atures, which appro ximately correspon ds to 6 seconds of a udio). Each tag mod el is learned from audio- fragmen ts extracted from relevant song s in the database, u sing th e VHEM-H3M. The database is first pro cessed at th e song level, using the EM algorith m to lea rn a H 3M for ea ch song f rom a dense sampling of audio fragmen ts. For each tag, the song-lev el H3Ms that are rele vant to the tag are pooled together to form a big H3M, and the VHEM algorithm is used to learn the final tag-model. In table 1 we present a comp arison of the VHEM-H3M algorithm with the standard EM-H3M algo- rithm and a state-of- the-art auto-tagge r (HEM-DT M) [13], which uses the dy namic texture mixture model and an ef ficient HEM algorithm, on both annotation an retrieval o n the CAL500 dataset. An- notation is measur ed with precision (P), recall (R), f-sco re (F), and retrieval is measured with mean av erage p recision (MA P), area u nder the oper ating char acteristic cur ve ( AR OC), an d pr ecision at the first 10 retrieved objects (P@10). All re ported metrics are a verages ov er the 98 tags that ha ve at least 30 examples in CAL500, and are result of 5 fold-c ross v alida tion. VHEM-H3M achie ves better 10 perfor mance over EM-H3 M (except o n p recision and AR OC which are compa rable) an d stron gly improves the top of the ran king list, as de monstrated b y th e h igher P@10 score. Perform ance of VHEM-H3M and HEM-DTM are close on all metrics with only slight variations, e xcept on annota- tion precision where VHEM-H3M registers a significantly higher score. 4.3 Online hand-writing recognition In this experim ent we in vestigated the per forman ce of the VHEM-H 3M algor ithm on classification of o n-line h and-writin g. W e considere d the Chara cter Trajectories Data Set [19], wh ich c onsists in 2858 examples of characters from the s ame writer , and used half of the data for training and half for testing. An HMM (with N = 2 and M = 1 ) was first learn ed fro m eac h o f the training seq uences using the E M algorithm . For each letter, all the relevant HMMs were c lustered with the VHEM to form a H3M with K ( r ) = 2 comp onents. W e r epeated the sam e experiment usin g the E M-H3M algorithm directly on a ll the relevant sequ ences in the train data. For each letter, we allowed the EM algorithm up to three times the total runn ing time of the VHEM (includ ing the estimation of the correspo nding intermediate HMMs). T a ble 2 lists classification accuracy on the test set, for VHEM- H3M, using dif ferent v alue s of τ , and for the correspond ing runs of EM-H3M . A small τ suffices to provide a regular estimate, and sim ultaneously deter mines shor ter running times for VHEM (un der 2 minutes f or a ll 20 letter s). On the other ha nd, the EM algo rithm needs to evaluate the likelihoo d of a ll the origin al sequ ences at e ach iter ation, which de termines slower iterations, and prevents the EM from con verging to ef fectiv e estimates in the t ime allowed. 5 Conclusion In this pap er , we present a variational HEM (VHEM) algo rithm for clustering HMMs th rough their distributions. Moreover , VHEM summ arizes each cluster by estimating a new HMM as c luster center . W e demonstrate the efficacy of this algorithm for various ap plications, includ ing hierarchical motion clustering, semantic music annotation , and onlin e hand-writing recognition. 6 Ap pendix on useful optimization prob lems 6.1 The optimization problem max α ℓ L X ℓ =1 β ℓ log α ℓ (55) s.t. L X ℓ =1 α ℓ = 1 α ℓ ≥ 0 , ∀ ℓ is optimized by α ∗ ℓ = β ℓ P L ℓ ′ =1 β ′ ℓ . (56) This can be easily compute d with the optim ization { α ∗ ℓ } = arg ma x α ℓ L X ℓ =1 β ℓ log α ℓ + λ L X ℓ =1 α ℓ − 1 ! where the second term is a Lagran gian term for the weights to sum to 1 , and noticing that the positivity constraints are autom atically satisfied by (56). 11 6.2 The optimization problem max α ℓ L X ℓ =1 α ℓ ( β ℓ − log α ℓ ) (57) s.t. L X ℓ =1 α ℓ = 1 α ℓ ≥ 0 , ∀ ℓ is optimized by α ∗ ℓ = exp β ℓ P L ℓ ′ =1 exp β ′ ℓ . (58) This can be easilly computed with the optimization { α ∗ ℓ } = arg ma x α ℓ L X ℓ =1 α ℓ ( β ℓ − lo g α ℓ ) + λ L X ℓ =1 α ℓ − 1 ! where the second term is a Lagran gian term for the w eights to sum to 1 , and noticing that the po si- ti vity constraints are automatica lly satisfied by (58). Refer ences [1] L. Rab iner and B. H. Juang , Fundamen tals of Speech Recognition . Upper Sadd le Riv er (NJ, USA): Prentice Hall, 1993 . [2] Y . Qi, J. Paisley , and L. Carin, “Music analy sis using h idden markov mixtur e models, ” Sign al Pr ocessing, IEEE T ransactions on , v o l. 55, no. 11, pp. 5209–5 224, 2007. [3] R. Nag, K. W ong, and F . F allside, “Script recognition using hidden markov models, ” in Acous- tics, Spee ch, a nd Signa l Pr ocessing, IEEE In ternational Conference on ICAS SP’86. , vol. 11. IEEE, 1986, pp. 2071–2 074. [4] A. Krogh, M. Brown, I. M ian, K. Sjolander, and D. Haussler , “Hidd en mar kov models in computatio nal biolo gy . app lications to protein mo deling, ” Journal of Molecu lar Biology , vol. 235, no. 5, pp. 1501– 1531, 1994 . [5] T . Jebara, Y . Son g, an d K. Thadan i, “Spectral clusterin g a nd embe dding w ith hid den markov models, ” Machine Learning: ECML 200 7 , pp. 164–175, 2007. [6] T . Jebara, R. Kondor, and A. How ard, “Probab ility prod uct kernels, ” The Journal o f Machine Learning Resear ch , vol. 5, pp. 819–844 , 2004. [7] N. V asconcelos and A. Lippman , “Learning mixture hierarchies, ” in Advances in Neural Infor- mation Pr ocessing Systems , 19 98. [8] A. B. Chan, E. Coviello, and G. Lan ckriet, “Deriv a tion of the hier archical EM alg orithm fo r dynamic textures, ” City University of Hong K ong, T ec h. Rep., 2010. [9] N. V ascon celos, “Imag e ind exing with mixtu re h ierarchies, ” in I EEE Conf. Computer V ision and P attern Recognitio n , 200 1. [10] A. Chan, E. C oviello, and G. Lanckriet, “Clustering dynamic textures wi th the hierarch ical em algorithm , ” in Intl. Confer ence on Comp uter V ision and P attern Recognition , 2010. [11] G. Car neiro, A. Chan , P . Mor eno, and N. V asco ncelos, “Superv ised learnin g of semantic classes fo r image an notation and r etriev al, ” I EEE T ransaction s o n P a ttern An alysis and Ma- chine Intelligence , v ol. 29 , no. 3, pp. 394–410, 2007. [12] D. Turnb ull, L. Barr ington, D. T orres, and G. Lanc kriet, “Sem antic an notation and r etriev al of m usic and sound effects, ” I EEE T ransaction s on Audio, Speech and La nguage Pr o cessing , vol. 16, no. 2, pp . 467–476, February 2008. [13] E. Coviello, L. Barringto n, A. Ch an, and G. L anckriet, “ Au tomatic m usic tagg ing with time series models, ” in Pr oceedings ISMIR , 2010 . 12 [14] J. Hershey , P . Olsen, an d S. Ren nie, “V ariation al Kullback -Leibler div ergence for h idden Markov mode ls, ” in Automatic Sp eech Recogn ition & Un derstanding, 2 007. ASRU . IEEE W o rkshop on . IEEE, 200 8, pp. 323–328. [15] P . Smyth, “Clustering sequences with hidden markov models, ” in Advances in neural informa- tion pr ocessing systems , 19 97. [16] M. Jordan, Z. Gh ahraman i, T . Jaakkola, and L . Saul, “ A n intr oduction to variational meth ods for graphical models, ” Mac hine learning , v ol. 37, no. 2, pp. 183– 233, 1999. [17] T . S. Jaakkola, “T utorial on V ariation al Approxima tion Methods, ” in In Ad vanced Mean F ield Methods: Theory and Practice . MIT Press, 2000, pp. 129–1 59. [18] J. Her shey and P . Olsen, “ Ap proxim ating the Kullback Leibler divergence between Gaussian mixture mod els, ” in Acoustics, Speech and S ignal Pr oc essing, 200 7. I CASSP 20 07. IEEE In - ternational Confer ence on , v ol. 4. Ieee, 2007 . [19] B. W illiam s, M. T oussaint, and A. Storkey , “Ex tracting motion prim itiv es from natu ral h and- writing data, ” Artificial Neur al Networks–ICANN 2006 , pp. 634–6 43, 2006 . 13

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment