Human Heuristics for Autonomous Agents

We investigate the problem of autonomous agents processing pieces of information that may be corrupted (tainted). Agents have the option of contacting a central database for a reliable check of the status of the message, but this procedure is costly …

Authors: Franco Bagnoli, Andrea Guazzini, Pietro Lio

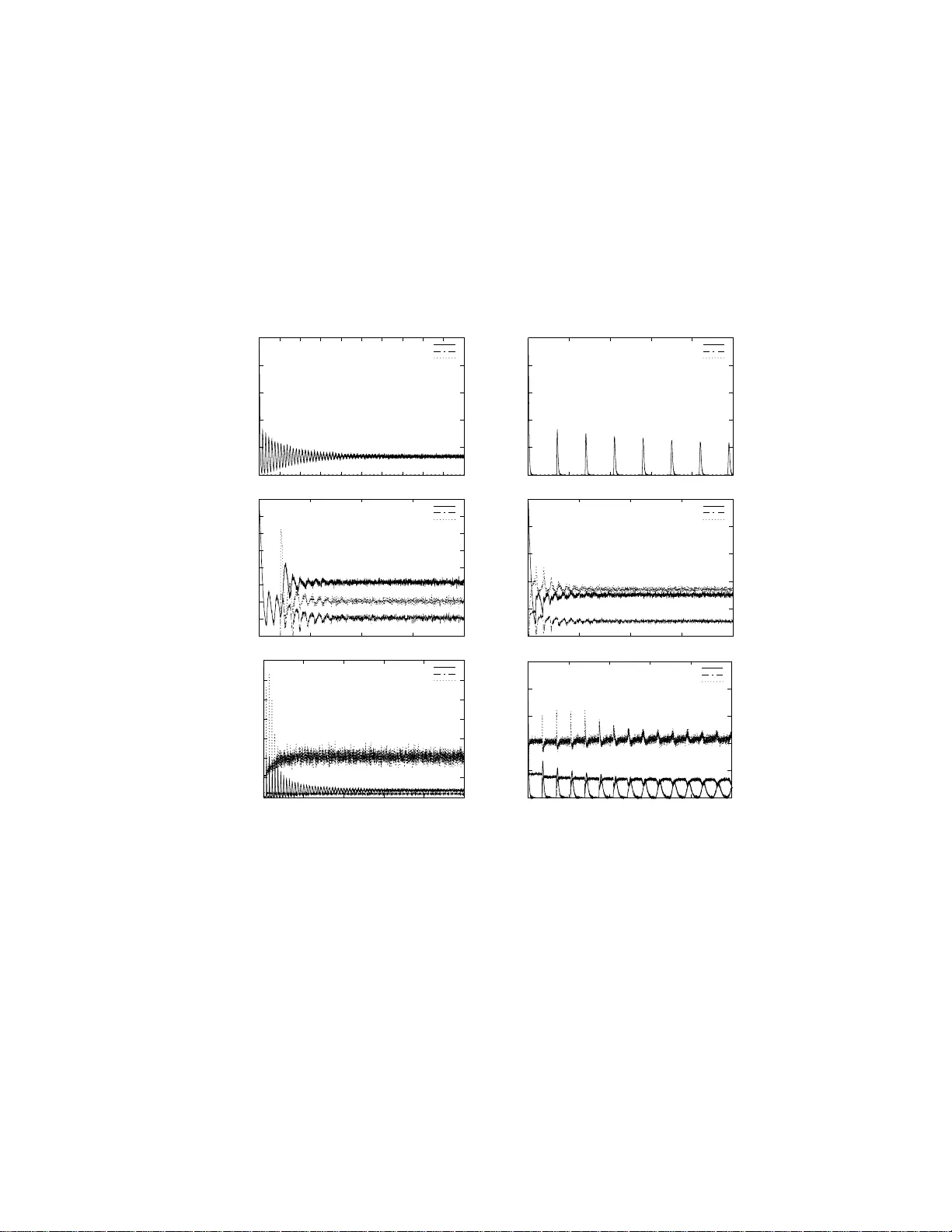

Franco Bagnoli (1, ⋆), Andrea Guazzini (1) and Pietro Liò (2)

(1) Department of Energy, University of Florence, Via S. Marta 3, 50139 Firenze, Italy. Also CSDC and INFN, sez. Firenze
(2) Computer Laboratory, University of Cambridge, 15 J.J. Thompson Avenue, Cambridge, CB3 0FD, UK

Abstract. We investigate the problem of autonomous agents processing pieces of information that may be corrupted (tainted). Agents have the option of contacting a central database for a reliable check of the status of the message, but this procedure is costly and therefore should be used with parsimony. Agents have to evaluate the risk of being infected, and decide if and when communicating partners are reliable. Trustability is implemented as a personal (one-to-one) record of past contacts among agents, and as a mean-field monitoring of the level of message corruption. Moreover, this information is slowly forgotten in time, so that at the end everybody is checked against the database. We explore the behavior of a homogeneous system in the case of a fixed pool of spreaders of corrupted messages, and in the case of spontaneous appearance of corrupted messages.

1 Introduction

One of the most promising areas in computer science is the design of algorithms and computer architectures closely based on our reasoning process and on how the brain works. Human neural circuits receive, encode and analyze the “available information” from the environment in a fast, reliable and economical way. The evolution of human cognition could be viewed as the result of a continuous improvement of neural structures which drive the decision-making processes from the inputs to the final behaviors, cognitions and emotions.
Heuristics are simple, efficient rules, hard-coded by evolutionary processes or learned, which have been proposed to explain how people make decisions, come to judgments, and solve problems, typically when facing complex problems or incomplete information. It is common experience that much of human reasoning and decision making can be modeled by fast and frugal heuristics that make inferences with limited time and knowledge. Darwin's deliberation over whether to marry, for instance, provides an interesting example of such a heuristic process [1,2].

⋆ To whom correspondence should be addressed.

Let us quickly review some widely accepted hypotheses about heuristics. In the early 1970s, Daniel Kahneman and Amos Tversky (K&T) produced a series of important papers about decisions under uncertainty [3,4,5,6,7]. Their basic claim was that in assessing probabilities, “people rely on a limited number of heuristic principles which reduce the complex tasks of assessing probabilities and predicting values to simpler judgmental operations”. Although K&T claimed that, as a general rule, heuristics are quite valuable, in some cases their use leads “to severe and systematic errors”. One of the most striking features of their argument was that the errors follow certain statistics and, therefore, they could be described and even predicted. The resulting arguments have proved highly influential in many fields, including computer science (particularly in the human-machine interaction area), where the influence has stemmed from the effort to connect algorithmic accuracy to speed of elaboration and, equally important, to the algorithmic understanding of human logic [7].
If human beings use identifiable heuristics, and if they are prone to systematic errors, we might be able to design computer architectures and algorithms to improve human-computer interaction (and also to study human behavior).

K&T described three general-purpose heuristics: representativeness, availability and anchoring.

People use the availability heuristic when they answer a question of probability by relying upon knowledge that is readily available rather than examining other alternatives or procedures. There are situations in which people assess the frequency of a class or the probability of an event by the ease with which instances or occurrences can be brought to mind. For example, one may assess the risk of heart attack among middle-aged people by recalling such occurrences among one's acquaintances. Availability is a useful clue for assessing frequency or probability, because instances of large classes are usually recalled better and faster than instances of less frequent classes. However, availability is affected by factors other than frequency and probability, such as the familiarity of the instances. For people without statistical knowledge, it is far from irrational to use the availability heuristic; the problem is that this heuristic can lead to serious errors of fact, in the form of excessive fear of small risks and neglect of large ones.

The representativeness heuristic is involved when people make an assessment of the degree of correspondence between a sample and a population, an instance and a category, an act and an actor or, more generally, between an outcome and a model.
This heuristic can be thought of as the reflexive tendency to assess the similarity of characteristics on relatively salient and even superficial features, and then to use these assessments of similarity as a basis of judgment. Representativeness is composed of categorization and generalization: in order to forecast the behavior of an (unknown) subject, we first identify the group to which it belongs (categorization) and then we associate the “typical” behavior of the group to the item (generalization). Suppose, for example, that the question is whether some person, Paul, is a computer scientist or a clerk employed in the public administration. If Paul is described as shy and withdrawn, and as having a passion for detail, most people will think that he is likely to be a computer scientist and ignore the “base-rate”, that is, the fact that there are far more clerks employed in public administration than computer scientists. It should be readily apparent that the representativeness heuristic will produce problems whenever people ignore base-rates, as they are prone to do.

K&T also suggested that estimates are often made from an initial value, or anchor, which is then adjusted to produce a final answer (anchoring). The initial value seems to have undue influence. In one study, K&T asked subjects to estimate a percentage (in the original experiment, the percentage of African countries in the United Nations) after a wheel of fortune had been spun in their presence; subjects first had to say whether the number that emerged from the wheel was higher or lower than the relevant percentage. It turned out that the starting point, though clearly random, greatly affected people's answers. If the starting point was 65, the median estimate was 45%; if the starting point was 10, the median estimate was 25%.

Several recent contributions on heuristics have drawn attention to the “dual-process” approach to human thinking [8,9,10,11,12]. According to this hypothesis, people have two systems for making decisions.
One of them is rapid, intuitive, but sometimes error-prone; the other is slower, reflective, and more statistical. One of the pervasive themes in these contributions is that heuristics and biases can be connected with the intuitive system and that the slower, more reflective system might be able to make corrections. The dual-process idea has some links with the experimental evidence of the presence of areas for emotions in the brain, for instance of the fear type. These “emotional” areas may be triggered before the cognitive areas become involved.

We shall try to consider some of these concepts to model autonomous agents that have the task of processing messages from sources that are not always trustable. The agent is a direct abstraction of a human being, easily understandable by psychologists and biologists, with the advantage of following a stochastic dynamics that can be combined with other approaches like ODEs [13,14,15,16,17]. Here we make the analogy between the diffusion of hoaxes, gossip, etc., and that of computer viruses or worms. The incoming information may be corrupted for many reasons: some agents may be infected by malware, and particularly viruses, some of them may be programmed to provide false information, or they may just be malfunctioning. Let us suppose that the processing of corrupted information will infect the elaborated message, so that the corruption “percolates and propagates” into the connection network, unless stopped. We assume that an agent may contact a central database to inquire about the reliability of a message, but this check is costly, at least in terms of the time required for processing the information.
Therefore, an agent is confronted with two options: either trust the sender, accept the message together with the risk of passing on false information, and process it in a short time; or contact the central database and be sure of the correctness of the message, but also spend more time (or other resources such as bandwidth) in elaborating it. This is analogous to the passport check when crossing a border: customs officers may either trust the identity card and let people pass quickly, or check them against a database, slowing down the queue.

This paper, which is motivated by the fact that human heuristics may be used to improve the efficiency of artificial systems of autonomous decision-making agents, is structured as follows. In Section 2, we introduce a model where the above-mentioned heuristics are implemented. Section 3 focuses on equilibrium and asymptotic conditions in the absence of infection. In Section 4, we describe the different scenarios which are considered (no infection, quenched infection and annealed infection); numerical results for different values of the control parameters under infection are reported in Section 5. A discussion of the psychological implications of the model and the conclusions are presented in Section 6.

2 Model

Let us consider a scenario with $N$ agents, identified by the index $i = 1, \dots, N$. Each agent interacts with $K$ other randomly chosen agents. The connections indicate messages transferred. In principle, an agent can have input connections with itself (meaning further processing of a given piece of information) and multiple connections with a given partner (more information transferred). An agent receives information from its input connections, elaborates it and sends the result to its output links. Let us assume for simplicity that this occurs in a synchronous way and at discrete time steps $t$.
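As an illustration of the connectivity just described, the following minimal Python sketch builds the list of input connections of each agent. The function name `build_inputs` and the `seed` argument are hypothetical, and drawing the senders uniformly with replacement is an assumption (the text only says that partners are randomly chosen and that self-connections and repeated partners are allowed in principle).

```python
import random

def build_inputs(n_agents, k_inputs, seed=0):
    """Return inputs[i], the list of K sender indices feeding agent i.

    Assumption: senders are drawn uniformly with replacement, so that
    self-connections and repeated partners can occur.
    """
    rng = random.Random(seed)
    return [[rng.randrange(n_agents) for _ in range(k_inputs)]
            for _ in range(n_agents)]

# Example with the population size and connectivity used later in the paper.
inputs = build_inputs(n_agents=500, k_inputs=5)
```

In a synchronous update, every agent would read the previous-step outputs of the senders listed in `inputs[i]` before any output is overwritten.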
The information, however, can be tainted (corrupted), either maliciously (virus, sabotage, attack) or because it is based on incorrect data. If a piece of information is tainted and it is accepted for processing, it contaminates the output. All agents have the possibility of checking the correctness of the incoming messages against a central database, but this operation is costly (say, in terms of time), and therefore heuristics are used to balance the cost against the risk of being infected.

An agent $i$ has a dynamical memory for the reliability of its partners $j$, $-1 \le \alpha_{ij} \le 1$; this memory is used to decide whether a message is acceptable or not. The greater $\alpha_{ij} > 0$, the more the partner is considered reliable; the reverse holds for $\alpha_{ij} < 0$. However, the trust in an individual is not an absolute value: it has to be compared with the perception of the level of the infection. Let us denote by $0 \le A_i \le 1$ the perception of the risk, i.e., the perceived probability of message contamination, of individual $i$.

A simple yet meaningful way of combining risk perception with uncertainty is to assume that each individual $i$ decides according to its previous knowledge ($\alpha_{ij}$) if $|\alpha_{ij}| > A_i$, and checks against the database (i.e., gets to know the truth) otherwise. If $A_i$ is large, the agent $i$ will be suspicious and check many messages against the database; the reverse holds for small values of $A_i$.

After checking the database, one knows the truth about one's partner. This information can be used to increase or decrease $\alpha_{ij}$ and also to compute $A_i$. In particular, if the check is positive (negative), $\alpha_{ij}$ increases (decreases) by a given amount $v_\alpha$. Finally, $A_i$ is increased by a quantity $v_A n_i / c_i$, where $c_i$ is the cost (total number of checks for a given time step) and $n_i$ is the number of infections discovered.
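The decision rule and the two increments can be sketched in a few lines of Python. This is only one possible reading of the rules above, under stated assumptions: a “positive” check is taken to mean that the message was found clean; a message judged from memory is accepted when $\alpha_{ij} > 0$ and refused otherwise; a checked message is accepted only if clean; values are clamped to the stated ranges; and $A_i$ is left unchanged when no check was made. The function names are hypothetical.

```python
def clamp(x, lo, hi):
    """Keep x inside the interval [lo, hi]."""
    return max(lo, min(hi, x))

def handle_message(alpha, risk, tainted, v_alpha):
    """Process one message; return (new_alpha, accepted, checked).

    alpha   : current trust alpha_ij in the sender, in [-1, 1]
    risk    : perceived risk A_i of the receiver, in [0, 1]
    tainted : true status of the message (only read when checking)
    """
    if abs(alpha) > risk:
        # Memory is decisive: no database query (assumed: accept iff alpha > 0).
        return alpha, alpha > 0, False
    # Otherwise query the database and learn the truth.
    step = -v_alpha if tainted else v_alpha
    return clamp(alpha + step, -1.0, 1.0), not tainted, True

def update_risk(risk, n_infected, n_checks, v_a):
    """Raise A_i by v_A * n_i / c_i (assumed: unchanged if c_i = 0)."""
    if n_checks == 0:
        return risk
    return clamp(risk + v_a * n_infected / n_checks, 0.0, 1.0)
```

With this reading, an error is recorded whenever `accepted` disagrees with the true status of the message, which can only happen when `checked` is false.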
The idea is that $A_i$ represents the perceived “average” level of infection, corresponding to the “risk perception” of being infected. We shall limit ourselves here to fixed and homogeneous responses; in a more realistic case, different classes of agents or individuals will react differently, according to their “programming” and their past experiences, to a given perception of the infection level.

Some of these quantities change smoothly in time. There is an oblivion mechanism on $\alpha_{ij}$ and $A_i$, implemented with the parameters $r_\alpha$ and $r_A$, respectively, such that the information stored $\tau$ time steps before the present time has weight $(1 - r)^\tau$. New information is stored with weight $r$. This mechanism emulates a finite memory of the agent, without the need of managing a list.

The observable quantities are the total number of infected individuals, $I$, the cost of querying the database, $C$, and the number of errors, $E$, which is given by the number of tainted messages accepted plus the number of non-tainted messages refused.

In this model, we are only interested in the correctness of the message, not in its content. Actually, a real message should be considered as a set of “atomic” parts, each of which can be analyzed, possibly together with their relations, in order to judge the reliability of the message itself. For instance, spam detection mechanisms are often based on a score assigned to patterns (e.g., MONEY, SEX, LOTTERY) appearing in the message. Therefore, a more accurate model should represent messages as vectors or lists of items. We deal here with a simple scalar approximation.

We try to include the human heuristics in this simple model by means of $A$ (availability) and $\alpha_{ij}$ (representativeness). The oblivion mechanism can moreover be considered the parameter corresponding to the “anchoring” experiences.
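The oblivion mechanism has the structure of an exponential moving average, which is why no list of past events is needed. The Python sketch below illustrates this equivalence on a hypothetical sequence of per-step signals; the function names are illustrative, and how exactly the increments $v_\alpha$ and $v_A$ are combined with the decay is not spelled out in the text, so the update shown is an assumption.

```python
def forget_and_store(memory, new_value, r):
    """One oblivion step: old content decays by (1 - r), new enters with weight r."""
    return (1.0 - r) * memory + r * new_value

def explicit_weighted_sum(values, r):
    """Same quantity written out with the full history:
    the value stored tau steps ago carries weight r * (1 - r)**tau."""
    latest = len(values) - 1
    return sum(r * (1.0 - r) ** (latest - t) * x for t, x in enumerate(values))

observations = [1.0, 0.0, 0.0, 1.0, 1.0]  # hypothetical per-step signals
r = 0.1
memory = 0.0
for x in observations:
    memory = forget_and_store(memory, x, r)
```

The running value `memory` coincides with `explicit_weighted_sum(observations, r)` up to rounding, so a single number per partner reproduces the effect of a weighted list.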
In our present model, there is only one variable connected to reliability (from completely trustable to completely not trustable), and the categorization procedure consists essentially in trying to assess the placement of an individual on this axis. The trustability of an individual ($\alpha_{ij}$) depends on the past interactions. Since $A$ represents the average level of infectivity, the trustability of an individual is evaluated against it, in order to save the cost (or the time) of the check against the central database.

3 Relaxation to equilibrium and asymptotic state without infection

Fig. 1. The asymptotic distribution $P(\alpha)$ (branches $P_1$ and $P_2$, plotted against $\alpha$) for $a < 2r$ ($a = 0.006$ and $r = 0.01$) (a); $a = 2r$ ($a = 0.01$ and $r = 0.005$) (b); $a > 2r$ ($a = 0.02$ and $r = 0.005$) (c).

In order to highlight the emerging features of our model, let us first study the case without infection. Without “stimulation”, the threshold $A_i$ is fixed, and takes the value $v_A$ for all individuals. The only dynamical variables are the $\alpha_{ij}$. Starting from a peaked (single-valued) distribution of $\alpha_{ij}$, the model exhibits oscillatory patterns and long transients towards an equilibrium distribution (Fig. 1). We found that by increasing the connectivity $K$, the peaks become thinner and higher, following a linear relationship. The affinities $\alpha_{ij}$ in the asymptotic state have a nontrivial distribution, ranging from 0 to $2v$. Let us call $P(\alpha)$ the probability distribution of $\alpha$. From numerical simulations (see Fig.
1), one can see that $P(\alpha)$ can be divided into two branches, $P_1(\alpha)$ for $0 \le \alpha \le v$ and $P_2(\alpha)$ for $v \le \alpha \le 2v$. The evolution of $P(\alpha)$ is given by the combination of two phases: control against the database, which in the mean-field approach occurs with probability $a = K/N$ for all $\alpha \le v$ (and therefore for $P_1$), and the oblivion mechanism, which multiplies all $\alpha$ by $(1 - r_\alpha)$. Combining the two effects, one finds for the asymptotic state

$$P_1(\alpha) = \frac{1-a}{1-r_\alpha}\, P_1\!\left(\frac{\alpha}{1-r_\alpha}\right), \tag{1}$$

$$P_2(\alpha) = \frac{a}{1-r_\alpha}\, P_1\!\left(\frac{\alpha}{1-r_\alpha} - v\right) + \frac{1}{1-r_\alpha}\, P_2\!\left(\frac{\alpha}{1-r_\alpha}\right). \tag{2}$$

From Eq. (1), one easily gets that $P_1(\alpha) \propto \alpha^x$, with

$$x = \frac{\ln(1-a)}{\ln(1-r_\alpha)} - 1 \simeq \frac{a}{r_\alpha} - 1.$$

In particular, the value $x = 1$ (Fig. 1-b) corresponds to $a = 2 r_\alpha$. We were not able to express the asymptotic distribution $P_2(\alpha)$ in terms of known functions.

Fig. 2. Relaxation to equilibrium for the cost $C$ (plotted against time $t$) for $N = 500$, $r_\alpha = 0.005$ and $K = 5$ ($a = 0.01$) (left); $K = 50$ ($a = 0.1$) (right).

The process of relaxation to the equilibrium is in general given by oscillations, whose period is related to $r_\alpha$. A rough estimate can be obtained by considering that a pulse of agents with the same value of $\alpha = 2v$ will experience the oblivion at an exponential rate $(1 - r_\alpha)^T$, until $\alpha = v$, after which a fraction $a$ of the pulse is re-injected again to the value $\alpha = 2v$. The condition for the pseudo-periodicity (for the fraction $a$ of agents) is $2v(1 - r_\alpha)^T = v$, from which the period $T$ can be estimated as

$$T \simeq -\frac{\ln(2)}{\ln(1-r_\alpha)} \simeq \frac{\ln(2)}{r_\alpha}$$

in the limit of small $r_\alpha$.
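The two closed-form estimates above are easy to check numerically. The short Python sketch below evaluates the exact and approximate expressions for the exponent $x$ and for the period $T$, using the parameter pairs quoted in the captions of Figs. 1 and 2; the function names are illustrative only.

```python
import math

def power_law_exponent(a, r_alpha):
    """Exponent x of P_1 ~ alpha^x from Eq. (1): (exact, small-parameter approximation)."""
    exact = math.log(1.0 - a) / math.log(1.0 - r_alpha) - 1.0
    approx = a / r_alpha - 1.0
    return exact, approx

def oscillation_period(r_alpha):
    """Period T from 2v(1 - r)^T = v: (exact, small-r approximation)."""
    exact = -math.log(2.0) / math.log(1.0 - r_alpha)
    approx = math.log(2.0) / r_alpha
    return exact, approx

# Parameter pairs (a, r) quoted in the caption of Fig. 1.
for a, r in [(0.006, 0.01), (0.01, 0.005), (0.02, 0.005)]:
    x_exact, x_approx = power_law_exponent(a, r)
    print(f"a={a}, r={r}: x = {x_exact:.3f} (approx. {x_approx:.3f})")

# Oblivion parameter quoted in the caption of Fig. 2.
t_exact, t_approx = oscillation_period(0.005)
print(f"r=0.005: T = {t_exact:.1f} (approx. {t_approx:.1f})")
```

For $r_\alpha = 0.005$ both expressions give a period of roughly 140 time steps, and the three parameter pairs of Fig. 1 give $x < 1$, $x \simeq 1$ and $x > 1$, respectively.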
Since the re-injected fraction is given by $a$, the larger its value, the larger the oscillations and the slower the relaxation to the asymptotic distribution, as shown in Fig. 2. One can notice that the period is roughly the same (same value of $r_\alpha$), but the amplitude of the oscillations is much larger in the plot to the right (larger $a$).

Since $a = K/N$, these large oscillations make it difficult to perform measurements on the asymptotic state for small populations, but large values of $N$ require longer simulations. One may say that the model is intrinsically complex.

The asymptotic cost is given by

$$C_\infty = a \int_0^{v_\alpha} P_1(\alpha)\, d\alpha \propto a\, v_\alpha^{a/r_\alpha}.$$

As one can see from Fig. 1, there is a cost even in the absence of infection, since the agents have to monitor the level of infection against the database. The lower values of the cost are associated with values of $r_\alpha$ smaller than $a$.

Fig. 3. Temporal behavior of the cost $C$, infection $I$ and error level $E$ for $K = 5$, $N = 500$ ($a = 0.01$), $r = 0.005$. The pulse is at time 500.

4 Infectivity scenarios

The source of infection may be quenched, i.e., a fraction $p$ of the population always emits tainted messages, or annealed, in which case the fraction $p$ of spreaders is changed at each time step.

Let us first study the case of a pulse of infection (with $p = 1$) in the asymptotic state, with a duration $\Delta t = 20$. For large values of the asymptotic cost, the infection is removed in just a few time steps, as shown in Fig. 3.

Fig. 4. Temporal behavior of the cost $C$, infection $I$ and error level $E$ for $K = 2$, $N = 500$ ($a = 0.004$), $r = 0.001$. Left: $v_A = 10^{-3}$ (eradication). Right: $v_A = 10^{-4}$ (endemic infection). The pulse occurs at time 5000.

For smaller values of the cost, the fate of the infection is related to the scenario (quenched or annealed infectors). If the infection level is small, and the infectors are quenched, the rise of the corresponding $\alpha_{ij}$ efficiently isolates the contagion. In the case of a “pulse” of infection, or for annealed infectors, the fate of the contagion is mainly ruled by the quantity $A_i$. If $A_i$ grows rapidly ($v_A$ sufficiently large), a temporary increase of the cost is enough to eradicate the epidemic, see Fig. 4. In the opposite case, the infection becomes endemic even for non-persistent infectors: it is maintained by the spreading mechanism. The increment used in the following investigations is small enough that we can observe the persistence of the infection. If the infectors are persistently renewed, the contagion cannot be eradicated but only kept under control.

The role of the two heuristics is different in the two cases. The representativeness heuristic ($\alpha_{ij}$) is the optimal strategy to detect agents which are constantly less reliable than the others (quenched case), but it is completely useless in the annealed case. The availability heuristic ($A_i$), considering at each time step the average infection of the system, is able to control the spread of infection in the annealed case.

The oblivion mechanism, related to the anchoring heuristic, is the key parameter governing the speed of adaptation to variable external conditions. It controls the oscillations of the cost (Fig. 2) and it is fundamental to minimize the computational load of the control process. The oblivion of $\alpha_{ij}$ (representativeness parameter) controls the computational cost at equilibrium in both cases.
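The difference between the quenched and the annealed source of infection lies only in when the set of spreaders is drawn, as the following Python sketch illustrates. It assumes that each agent becomes a spreader independently with probability $p$ (the text speaks both of a fraction and of a probability, so this choice is an assumption), and the function names are hypothetical.

```python
import random

def draw_spreaders(n_agents, p, rng):
    """Each agent is a spreader independently with probability p (assumption)."""
    return frozenset(i for i in range(n_agents) if rng.random() < p)

def spreaders_over_time(n_agents, p, n_steps, quenched, seed=0):
    """Yield the set of spreaders for each time step.

    quenched=True : one set is drawn once and reused for the whole run.
    quenched=False: annealed case, a new set is drawn at every step.
    """
    rng = random.Random(seed)
    fixed = draw_spreaders(n_agents, p, rng) if quenched else None
    for _ in range(n_steps):
        yield fixed if quenched else draw_spreaders(n_agents, p, rng)

quenched_run = list(spreaders_over_time(500, 0.01, 3, quenched=True))
annealed_run = list(spreaders_over_time(500, 0.01, 3, quenched=False))
```

In the quenched run the same agents reappear at every step, so a per-partner memory such as $\alpha_{ij}$ can learn who they are; in the annealed run the past identity of the spreaders carries no information about the next step.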
High values of $r_\alpha$ correspond to a conservative behavior of the system: in this case, a large computational cost and a correspondingly low number of infected individuals and errors characterize the equilibrium. Low values of $r_\alpha$ correspond to a dissipative behavior, for which the system minimizes the computational cost but allows large fluctuations in the number of infected individuals and high values of errors.

5 Dynamical behavior

We ran extensive numerical simulations and recorded the asymptotic cost $C$, the number of infected individuals $I$, and the errors $E$ as functions of the oblivion parameters ($r_\alpha$ and $r_A$), the probability and the pulse of infection ($p$), and the density of contacts ($K$). In these simulations we kept $v_A = 10^{-6}$ in order to stay in the endemic phase, and therefore $r_A$ did not play any role.

Fig. 5 shows the effect of infection with different values of $r_\alpha$ and contact density, for $K = 5$ and $N = 500$. Plots (a) and (b) show the oscillatory patterns without infection ($p = 0$) for $r_\alpha = 10^{-3}$ (a) and $r_\alpha = 10^{-4}$ (b). The oblivion $r_\alpha$ (in the presence of infection) changes both the oscillation frequency (as studied in the previous section) and the oscillatory delay before convergence to a basic fluctuation pattern. Note that increasing $r_\alpha$ increases the frequency of the oscillations: when $r_\alpha = 10^{-4}$ (b), the period is $T \simeq \ln(2) \cdot 10^{4} \approx 7000$; for $r_\alpha = 10^{-3}$ (a), $T \approx 700$. By adding infection (annealed version), we obtain a quicker convergence to the basal fluctuation equilibrium (c). We found that the time to reach the basic fluctuation equilibrium does not depend on the infection probability, and the level of the fluctuations remains unchanged even for long runs (d).
Plots (e) and (f) show that, with the same value of the infection probability, increasing the density of contacts produces larger fluctuations and a quicker convergence of the cost ($K = 30$ for plot (e), compared with $K = 5$ for all the others). The two scenarios have different oblivion ($r_\alpha = 10^{-3}$ (e), $r_\alpha = 10^{-4}$ (f)). Then, increasing $p$, the frequency of the oscillations remains the same but the peaks broaden.

6 Discussion and Conclusions

In this paper we have modeled the cognitive mechanisms known as availability and representativeness heuristics. The role of the first one in the human decision-making process seems to be to produce a probability estimate of an event based on the relative observed (registered) frequency distribution. The second heuristic, representativeness, acts by inferring certain attributes from others that are easier to detect. Both heuristics are fallible or “noise affected”, but they surely represent a very fast way to analyze environmental data using a small amount of memory and time. The most interesting aspect, not underlined enough in the literature, is the role of the cooperation between heuristics. The co-occurrence of their activities could be coordinated also in human cognition, but of course it is very interesting from a computational point of view.
We supposed that the availability heuristic corresponds to a mean-field estimation of the “risk”, while representativeness partially maintains the memory of the previous interactions with the others. In the quenched and annealed scenarios we can capture the effect of the coordination of the heuristics. The quenched scenario considers the case of “systematic spreaders”, where the same agents emit a tainted message at each time step. In this case the availability heuristic would fail to minimize cost and infection if representativeness were absent.

Fig. 5. Cost $C$, infection level $I$ and errors $E$ vs. time $t$ for two different values of the parameter $r_\alpha$: $r_\alpha = 10^{-3}$ (a,c,d,e); $r_\alpha = 10^{-4}$ (b,f); different values of $p$: $p = 0$ (a,b); $p = 10^{-2}$ (d,e,f); $p = 10^{-6}$ (c); and for some values of the connectivity ($K = 5$ for plots (a,b,c,d,f), $K = 30$ for plot (e)) and population $N = 500$.

On the contrary, in the annealed scenario the spreaders are chosen completely at random at each time step. In this extreme case there is no information contained in the previous history of the system, and the representativeness heuristic becomes completely useless. In this situation the only available information is the rate of infection, and the availability heuristic is the most efficient way to minimize both cost and risk of infection.
The oblivion mechanism associated with the two heuristics determines both the cost of the control process and its reactivity. On average the cost, which represents the number of operations/computations needed to cope with the task, is proportional to the oblivion value, while the numbers of infected individuals and errors are inversely proportional to the oblivion. If the cost, as happens in the biological domain, is considered as a quantity which the system has to minimize, there will exist an optimal value of both oblivion parameters for each possible condition. The reactivity of the control process could be defined as the time needed by the system to reduce a new infection to zero. In our model the oblivion of both heuristics appears to control also the size of the “cost oscillations”. We found that the larger the oblivion level, the lower the oscillations and the time needed to reach the asymptotic equilibrium. Our simulations show that under infection, the cost reaches its asymptotic value much earlier than without infection. This suggests that a low infection level may even provide some advantages, through the quick damping of the oscillatory behavior, resulting in an improved cost predictability.

The investigation of heuristics exploits a major overlap between artificial intelligence (AI), cognitive science and psychology. The interest in heuristics is based on the assumption that humans process information in ways that computers can emulate, and heuristics may provide the basic bricks for bridging from brains to computers. Our modeling framework is quite general and offers some points of reflection on how the study of complex systems may help in developing new areas of AI.
In past years the AI community has debated whether the mind is best viewed as a network of neurons (connectionism), or as a collection of higher-level structures such as symbols, schemata, heuristics, and rules, i.e., emphasizing the role of symbolic computation. Nowadays the use of symbolic representations to produce general intelligence is in slight decline, but the “neuron ensemble” paradigm has also shifted towards more complex models, particularly taking into account and combining findings from both fNMR and cognitive psychology [19,20]. Here we show that the incorporation of simple heuristics in a small network of agents leads to a rich and complex dynamics. Our model does not take into account mutation and natural selection, which are of key importance for the emergence of complex behavior in animal societies and in brain development (see for example Pinker and the follow-up debate [21]).

A multi-agent model, where each agent represents a message-modifying person/neuron, can serve as a very natural abstraction of communication networks, and hence can be easily used by psychologists as well as computer scientists. Such a model also allows the tracking of single-agent fates, so that communities with low member numbers are easily dealt with, and these models also provide for much more detailed analysis compared to average-population approaches like continuous differential equations.

Heuristics may have even greater value in case of environmental challenges: when organisms need to adapt quickly to environmental fluctuations, for example starvation and high competition, they must be able to make inferences that are fast, frugal, and accurate. These real-world requirements lead to a new conception of what proper reasoning is: ecological rationality.
Fast and frugal heuristics that are matched to particular environmental structures allow organisms to be ecologically rational. The study of ecological rationality thus involves analyzing the structure of environments, the structure of heuristics, and the match between them.

References

1. E. Healey, Emma Darwin: The Inspirational Wife of a Genius (Headline Publishing Group, London UK 2001).
2. H. Litchfield, Emma Darwin, a century of family letters, 1792-1896 (D. Appleton and Company, London UK 1940).
3. A. Tversky and D. Kahneman, Availability: A heuristic for judging frequency and probability, Cognitive Psychology 5, 207 (1973).
4. A. Tversky and D. Kahneman, Judgment under uncertainty: Heuristics and biases, Science 185, 1124 (1974).
5. D. Kahneman and A. Tversky, Prospect theory: An analysis of decision under risk, Econometrica 47, 263 (1979).
6. A. Tversky and D. Kahneman, The framing of decisions and the psychology of choice, Science 211, 453 (1981).
7. D. Kahneman, P. Slovic and A. Tversky (Eds.), Judgment under uncertainty: heuristics and biases (Cambridge University Press, New York 1982).
8. B.J. McNeil, S.G. Pauker, H.C. Sox and A. Tversky, On the elicitation of preferences for alternative therapies, New England Journal of Medicine 306, 1259 (1982).
9. E. Shelley Taylor, The Availability Bias in Social Perception and Interaction, in Judgment Under Uncertainty: Heuristics and Biases, D. Kahneman, P. Slovic and A. Tversky (Eds.) (Cambridge University Press, New York 1982).
10. P. Slovic, B. Fischoff and S. Lichtenstein, Facts versus Fears: Understanding Perceived Risk, in Judgment Under Uncertainty: Heuristics and Biases, D. Kahneman, P. Slovic and A. Tversky (Eds.) (Cambridge University Press, New York 1982).
11. G. Gigerenzer, P.M. Todd and the ABC Research Group, Simple Heuristics That Make Us Smart (Oxford University Press, Oxford UK 1999).
12. C. Christensen and A.S. Abbott, Team Medical Decision Making, in Decision Making in Health Care: Theory, Psychology, and Applications, G.B. Chapman and F.A. Sonnenberg (Eds.) (Cambridge University Press, New York 2000).
13. R. Sun (Ed.), Cognition and multi agent interaction: from cognitive modeling to social simulation (Cambridge University Press, New York 2006).
14. G. Weiss, Multiagent Systems: A Modern Approach to Distributed Artificial Intelligence (The MIT Press, Cambridge Mass. 2000).
15. M. Wooldridge, Introduction to MultiAgent Systems (Wiley, New York 2002).
16. M. d'Inverno and M. Luck, Understanding Agent Systems (Springer Series on Agent Technology, Springer, Berlin 2003).
17. E. Merelli et al., Agents in bioinformatics, computational and systems biology, Brief Bioinform. 8, 45 (2007).
18. H. Dreyfus, What Computers Still Can't Do (MIT Press, Cambridge Mass. 1992).
19. M.S. Gazzaniga, Cognitive Neuroscience (MIT Press, Cambridge Mass. 1995).
20. M.R. Rosenzweig, S.M. Breedlove, N.V. Watson, Biological Psychology: An Introduction to Behavioral and Cognitive Neuroscience (Sinauer Associates, Sunderland Mass. 2004).
21. S. Pinker, How the Mind Works (Norton, New York 1997).
