On the Performance of MPI-OpenMP on a 12 nodes Multi-core Cluster

With the increasing number of Quad-Core-based clusters and the introduction of compute nodes designed with large memory capacity shared by multiple cores, new problems related to scalability arise. In this paper, we analyze the overall performance of…

Authors: Abdelgadir Tageldin Abdelgadir, Al-Sakib Khan Pathan, Mohiuddin Ahmed

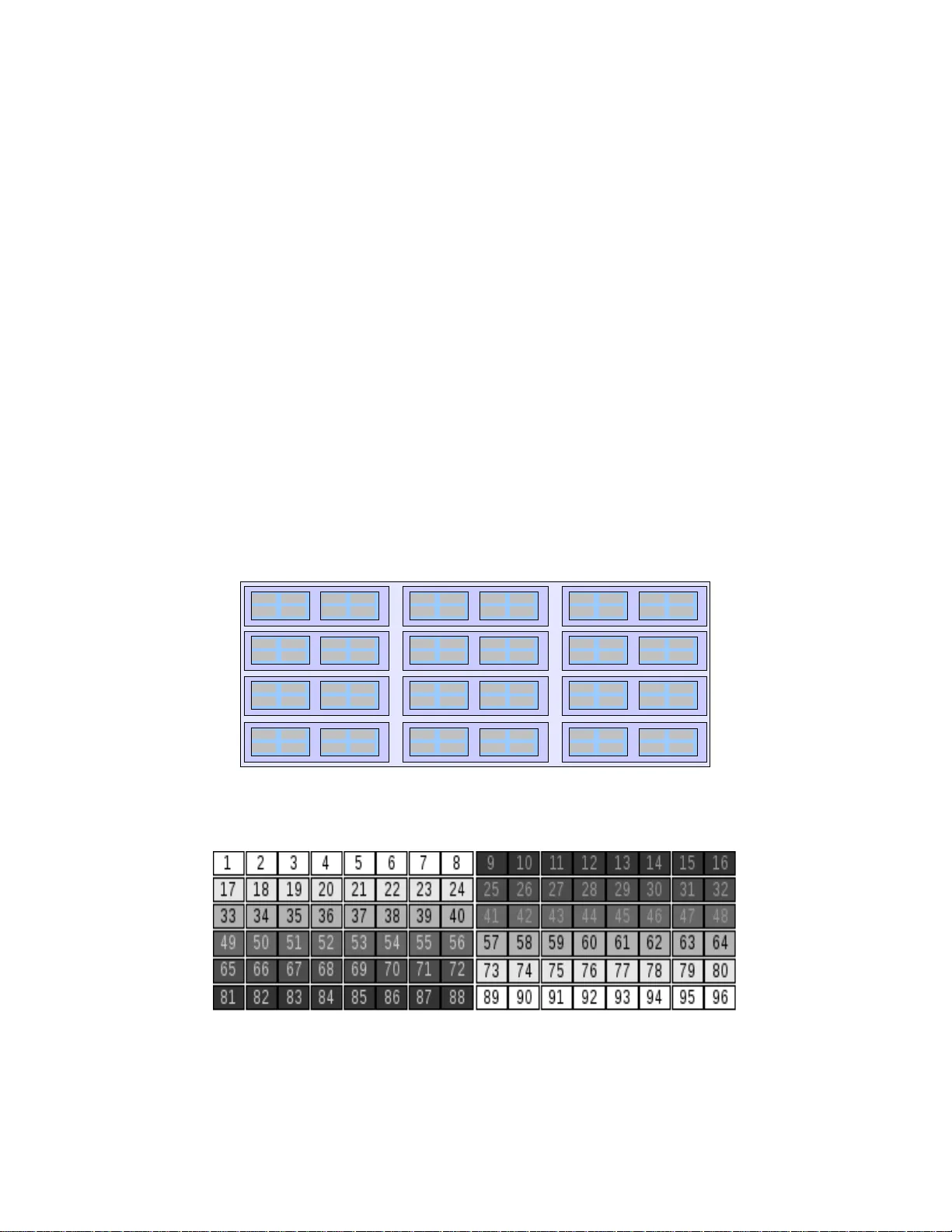

On the Performance of MPI-OpenMP on a 12 nodes Multi-core Cluster Abdelgadir Tag eldin Abdelgad ir 1 , Al-Sakib Khan Patha n 1 ∗ , Mohiuddin Ahmed 2 1 Department of Computer Science, Inte rnational Islamic Universit y Malaysia, Gombak 53100, Kuala Lumpur, Malaysia 2 Department of Computer Netw ork, Jazan University, Saudi Arabia abddu1@gmail.com , sakib@iium.edu.m y , ahmed255555@yahoo.com Abstract. With the increasing number of Quad-Core-base d clusters and the introduction of compute nodes designed with large memor y capacity shared by m ultiple cores, new problems rel ated to scalability arise. In this paper, we analyze the overall p erfo rmance of a cluster built with nodes having a dual Quad-Core Processor o n each node. Some benchmark r esults are presented and some observations are mentioned when handling such processors on a benchmark test. A Quad -Core-based cluster's complexity ar ises from the fact t hat both local communication and network communications between the running processes need to be addressed. The poten tials of an MPI-OpenMP approach are pinpointed because of its reduced communication overhead . At the end, we come to a conclusion that an MPI-OpenMP solution shoul d be consider ed in such clusters since optimizing network communications between nodes is as important as optimizing local communica tions between processors in a multi-core c luster. Keywords: MPI-OpenMP, hybrid, Mu lti-Core, Cluster. 1 Introduction The integration of two or more processors within a sing le chip is an advanced tech nology for tackling th e disadvantages exp osed by a singl e core when it com es to in creasing t he speed, as more heat is generated and m ore power is c onsumed by t hose si ngle cores. T he word c ore refe rs as well to a processor in this new context and can be used interchan geably. Som e of the famous and com mon ex am ples of these processors are the Intel Qua d Core; which is the processor our research cluste r is based on, and t he AMD Opt eron or Phe nom Quad-c ore. This aggregation of classical cores in to a single “Processor” has in troduced the d ivision of work load among the multipl e processing cor es as if the exe cution was t o happen on a fast single processor, this also in troduced the ne ed of parallel and multi-threaded approaches in so lving most kinds of pr oblems. When Quad-cores processors are deployed in a cluster, 3 type s of communicat ion links m ust be considered: (i ) between the two p rocessors on the sa me chip, (ii) between the chips in a sam e node, and (iii) between different processors in different nodes. All these ∗ This work was supported by IIUM research incentive funds. Abde lgadir Tageldin Abdelgadir also has been working with MIMOS Berhad research institute. communicat ions methods need to be c onsidered on such clust er in order t o deal with t he associated chall enges [1], [2], [3 ]. The rest of the pap er is orga nized as follo ws: in Section 2, we briefly introduce MPI and O penMP and di scuss performance measurement with High Perfo rmance Linpac k (HPL), Secti on 3 prese nts the architecture of our cluster, Section 4 desc ribes the resea rch methodol ogies used , Section 5 records our findings and future e xpectations and Section 6 co ncludes t he paper. 2 Basic Terminologies and Background 2.1 MPI And OpenMP The Message passin g models provi de a method of comm unication am ongst sequenti al processes in a paralle l environm ent. These processes execut e on the diffe rent nodes i n a cluster but interact by “passing me ssages”, hence the name. There can be m ore than a single process t hre ad in each proce ssor. The Message Passing Interface (MPI) [8] approach sim ply focuses on t he process comm unication ha ppening across the n etwork, while the OpenMP targets inter-process co mmunications between processors. W ith th is in mind, it will make more sense to employ OpenMP paral lelizati on for inter-pr ocess comm unications within the node and MP I for message passi ng and network communication betw een nodes. It is also possible to use MPI fo r each core as a separate entity with its own address space; this will force us to deal with the cluster di fferently though. With t his simple definitions of M PI and OpenMP, a question arises whether it will be advantageous to employ a hybrid mode wh ere more than one OpenMP and MPI process with multiple th reads on a node so that there is at least s ome explicit intra-node co mmunications [2], [3 ]. 2.2 Performance Meas urement with HPL High Performance Linp ack (HPL) is a well -known benchm ark su itable for parallel workloads th at are core-limited and memory i ntensive. Li npack is a floati ng-point be nchm ark that solves a den se system of linear equatio ns in parallel. The result of th e test is a metric called GigaFlops that translates to billions of flo ating point operations per second. Linpack performs an operation called LU Factori zation. This is a highly p arallel process, utilizing the processor's cache up to the maximum li mit possible, though the HPL benchmark itself may not be considered as a memory int ensive benchm ark. The processor operatio ns it does perfo rm are predomi nantly 64-bit floating- point vector operati ons and u ses SSE instruct ions. This benchm ark is used to determine t he world’s t op-500 fastest computers. In this work, HPL is used to measures th e pe rformance of a si ngle node an d conseque ntly, a clust er of nodes throug h a simulat ed replication of sci entific an d mathem atical applicati ons by solving a dense system of linear equations. In the HPL benchm ark, there are a num ber of metrics used to rate a system. One of t hese important measure s is Rmax , measured in Gigaflops that represents the ma ximum pe rform ance achievabl e by a system . In addition, the re is also Rpeak , which is the theoretical peak performance for a specific system [4]; this is obtained from: [N proc * Clock freq * FP/clock] (1) Where N proc is the number of processors availabl e, FP/clock is the floating-point operation per clock cy cle, Clock freq is the frequency of a processor in MHz or GHz. 3 The Architecture of our Cluster Our cluster consists of 12 Comput e Nodes and a Head Node as depicted in Figure 1. Fig. 1. Cluster physical archit ecture 3.1 Machine Specifications The cluster consi sted of two node types: a Hea d Node and Compute Nodes . The tests were run on the compute nodes only as the Head node was different in both capacity and sp eed. Its addition will increase the complexity of the tests. The Compute Node specific ations sh own in Tabl e 1 are sam e. Each node ha s an Inte l Xeon Dual Q uad Core Processor runni ng at 3.00 GHz. N ote that the system had eight of the m entioned pr ocessors and t hat the sufficient size of cache reduces the latencies in accessing instructi ons a nd data; this generally im proves performa nce for applications w orking on lar ge amount of data set s. The H ead Node s pecificati on (Table 2) was sim ilar and had a n Intel Quad Xe on Quad Core Processor r unning at 2.9 GHz. Table 1. Compute Node processing specifications. Elemen t Featur es processor 0 (Upto 7) cpu family 6 model name Intel(R) Xeon(R) CPU E5450 @ 3.00GHz stepping 6 cpu MHz 2992.508 cache size 6144 KB cpu cores 4 fpu yes flags fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush dts acpi mmx fxsr sse sse2 ss ht tm syscall nx lm constant_tsc pni monitor ds_cpl vmx est tm 2 cx16 xtpr lahf_lm bogomips 6050.72 clflush size 64 cache_alignment 64 address sizes 38 bits physical, 48 bits virtual RAM 16GB 3.2 Cluster Configurati on The cluster was built using Ro cks 5.1 64-bits Cluster Su ite, Rocks [9] is a Linux Distribution b ased on CentOS [10], it is intended for High Performance Computing systems. Intel 10.1 compiler suite was used; the Intel MPI implementation and the In tel Math Kernel Library were uti lized as well. The cluster wa s connected to t wo networks, one used for MPI-based operations and the other for normal data transfer. As a side-note relevant to practition ers, a failed attempt was done with an HPCC version of the Linpack benchmark that utilized an OpenMPI library implem entation; the res ults were unex pectedly low. Test s based on the Ope nMPI config uration and s ubsequent planned test-runs were aborted. Table 2. Head Node specifications. Elemen t Featur es processor 0 ( Upto 16) cpu family 6 model name Intel(R) Xeon(R) CPU X7350 @ 2.93GHz stepping 11 cpu MHz 2925.874 cache size 4096 KB cpu cores 4 fpu yes flags fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush dts acpi mmx fxsr sse sse2 ss ht tm syscall nx lm constant_tsc pni monitor ds_cpl vmx est tm 2 cx16 xtpr lahf_lm bogomips 5855.95 clflush size 64 cache_alignment 64 address sizes 40 bits physical, 48 bits virtual 4 Research Methodology Tests were done in two main iterations, the first iteration was a single no de performance measurement followed by an extended iteration that include d all the 12 nodes. T hes e tests consumed a lot of time; the cluster was not fully dedicated for pu re research pur poses as it was used as a productio n cluster as well, t ime was lim ited for test -runs. Our main research focused on exam ining to what extent, the cluster would scale, as it was the first Quad-core to be deployed at th e site. In thi s paper, we f ocus on the m uch more successful test-run o f the hybri d implem entation of HPL by Intel for Xeon Pr ocessors. In e ach of the iterations, different configurations and set-ups were implemented; these included changing the grid topol ogy used by HPL according to different settings. This was needed since the cluster contai ned both a n internal grid – between proce ssors – and a n external grid com posed of the n odes themselves. In each test trial, a configuration was set and performance was m easured using HPL. An analysis of the factors affecting performa nce is recorded fo r each trial an d graphs were generated t o clarify pr ocess-distributi on in the grid of processes. 4.1 Single Node Test The test for a singl e node was done fo r all nodes. This i s a precautio nary measure to c heck whether all nodes are performing as expected since the cluster's pe rformance i n an HPL test- run is lim ited by the slowest of nodes. Ta ble 3 shows the results from different nodes, the avera ge is approxim ately 75.6 G flops. This num ber can be cal culated using Equati on 2. In eac h node, t here are Dual Xeon Q uad Processors , ma king the theoret ical peak performa nce equal to: R peak = 8*3*4 = 96Gflop s/node. (2) But the maximum performance obtained was at an approximate average of 75. 6 Gflops/node, t his is the Rmax Value obtainable for a single node. The efficiency is calculated at 78.8%. Table 3. Performance of Cluster Nodes Node 1: 7.517e+01 Gflops , Node 2: 7.559e+01 Gflops , Node 3: 7.560e+01 Gflops , Node 4: 7.552e+01 Gflops , Node 5: 7.558e+01 Gflops , Node 6: 7.559e+01 Gflops , Node 7: 7.557e+01 Gflops , Node 8: 7.560e+01 Gflops , Node 9: 7.537e+01 Gflops , Node 10: 7.561e+01 Gflops Node 11: 7.557e+01 Gflops Node 12: 7.562e+01 Gflops Table 4 shows the param eters used for the single node test . 4.2 Multiple Nodes Test The Multiple node test required many iterations to scale well an d reach an optimal perfo rmance in the limited ti me the researcher had. The first thing put in to consideration wa s the grid topo logy to be used in order to achieve good results. Severa l grids were pro posed depe nding on the knowledge ga thered from previous e xperiences; it is considered that in a cluster-wide t est, attainm ent of high perform ance is dependent on the num ber of cores and th e frequency o f the processor be ing used on each n ode. Dist ribution of processes is cruci al; a balanced dist ribution of processes will basically result in better performance. Table 4. HPL configuration for Single Node test. Choice Parameters 6 device out (6=stdout,7=stderr,file) 1 # of problems sizes (N) 40000 Ns 1 # of NBs 192 NBs 0 PMAP process mapping (0=Row-,1=Column-major) 1 # of process grids (P x Q) 1 Ps 8 Qs 16.0 threshold 1 # of panel fact 0 1 2 PFACTs (0=left, 1=Crout, 2=Right) 1 # of recursive stopping criterium 4 2 NBMINs (>= 1) 1 # of panels in recursion 2 NDIVs 1 # of recursive panel fact. 1 0 2 RFACTs (0=left, 1=Crout, 2=Right) 1 # of broadcast 0 BCASTs (0=1rg,1=1rM,2 =2rg,3=2rM,4 =Lng,5=LnM) 1 # of lookahead depth 0 DEPTHs (>=0) 2 SWAP (0=bin-exch,1=long,2=mi x) 256 swapping threshold 1 L1 in (0=transposed,1=no-transposed) form 1 U in (0=transposed,1=no-transposed) form 0 Equilibration (0=no,1=yes) 8 memory alignment in double (> 0) Generally, HPL is controlled by two main parameters th at describe how processes are distributed across the cluster's nodes; these values P and Q are both critical benchmark-tuning param ete rs when producing good performance is required. P a nd Q should be as close t o equal as possible, but when they are not equal; P should be less than Q. That is because when P is m u ltiplied by Q, it actually gives the numbe r of MPI processes to be used and how they are distributed across the nodes. In this cluster, ther e are several choices, such as 1x96, 2x48, 3x32, 4x24, 6x1 6, 8x12 . However, the network can affect performance, an d in our case, the intr oduction of Multi-Cores within a single node; so different t rials are needed to achieve best perfor mance. Another param eter needed is N, whi ch is the size of the problem to be fed to HPL. W e have used the following formula as in [4] to estimate the prob lem size: () [] 8 / ) 1000000000 * ( MB M sizes ∑ (3) This will give a value th at will approximate ly be N, for example: N = sqrt(12*16*1 000000000) ~= 15 4919 However, it is preferred not t o take the whole result, we chose 140000 as N, giving m ore than 25% to other l ocal system processes; this is to av oid the use of the virtual me mory which will render the whole test-run u seless. An overloaded system will u se the swap area, and this will ne gativ ely affect th e results of the bench mark. It is advisable to make full use of m ain memory, b ut at the sam e time avoid using t he virtual memory. The optimal performance was achieved with HPL input parameters as in Table 5: Table 5. HPL configuration for 12 Nodes test. Parameter Value N 140000 NB 192 PMAP Row-major process m apping P 6 Q 16 RFACT Crout BCAST 1ring SWAP Mix (threshold = 256) L1 no-transposed form U no-transposed form EQUIL no ALIGN 8 double precision words A first expectation was 3x32 or 4x24 will produce the optimal performance, b ut a 6x16 grid (Figure 2 and Figure 3) obtained the best performance at 662. 2 Gflops, Perform ance increase is linear to some extent, but will not equal the overall absolute sum of 12 n odes that is 907 Gflops. Thi s is acceptable, as a clust er's performance does not scale linearly in reality [1 ], thus th e efficiency of th e cluster is calculated at approximately 60%, which is sat isfactory for a Gigabit-based cluster. 5 Observations, Discussions, and Future Expectations By looking at the general topolo gical structure of t his cluster, we notice that different cores will be completing the same process in parallel, this leads to hig h network c ommunicat ion between the different nodes in suc h clusters. Moreover, processing spee d tends to be fast er than the Gig abit networ k's communi cation link s peed availa ble for the cluster. This will be translated in to waiting time in which some cores may become idle. In preliminary test-runs, we opted to use an M PI-only appr oach based on o ur previous experiences with clusters, the results were disappointing, reaching a maximum of approxim ately 205Gflops. An option was propose d to run the Linpa ck benc hmark test using Intel's MPI library in its hybrid mode, this ve rsion feat ured an MPI-OpenMP implementation of HPL, it uses MPI for the network communi cation while utilizin g OpenMP for local commu nication between co res. This approach seemed more appropriate for a Multi-Node cluster and the re sults previously presen ted in this paper are based on a Hybrid impl ementation of HPL benc hmark. The direct effect of this was a fully saturated network as well as fully utilized processors. [5 ], [6]. Core Core Core Core Cor e Core Cor e Core Core Core Core Core Cor e Core Cor e Core Core Core Core Core Cor e Core Cor e Core Core Core Core Core Cor e Core Cor e Core Core Core Core Core Core Co r e Core Co r e Core Core Core Core Core Co r e Core Co r e Core Core Core Core Core Co r e Core Co r e Core Core Core Core Core Co r e Core Co r e Core Core Core Core Cor e Core Cor e Core Core Core Core Core Cor e Core Cor e Core Core Core Core Core Cor e Core Cor e Core Core Core Core Core Cor e Core Cor e Core Fig. 2. Physical view of 6x16 cores grid Fig. 3. Abstract view of MPI pro cess distribution on the 6x16 grid Another factor obse rved whi ch affected the perf ormance of this cl uster is the netwo rk, the current set -up of the cluster includes two netw orks, one is used solely for MPI traffi c, which is the networ k that obtained the hig hest possible result. From these findings, it is recom mended that mu lt i-core clusters deployed fo r MPI jobs should have a dedicated network to run t hose types of jobs. It was n oti ced in the test-run phases th at MPI processes in general generate huge data, whi ch in turn requires lots of network bandwi dth. This is mainly caused by the higher s peed of Multi-processing in each node in relation to the current speed av ailable in the test cluster. The main advantage of usi ng Intel' s MPI implem entation in this wo rk is the abil ity to define networ k or device fabrics, or in other words, defining a clusters phy sical connec tivity. In this cluster, the fabric can be defined as a TCP network with shared-m emory cores, which i s synonym ous to an Ethernet-based SMP cl uster. When running wit hout explicitly defining the underlyi ng fabric of our cluster, overall perform ance degradation wa s noticeable as the cluster's overall benchmark result was merely 240 Gfl ops, a 40 Gflops more than the p reviously m entioned failed attempts with an MPI only appro ach but still a low number when co nsidering the ov erall expected performance. This was caused by having the MPI processes starte d without previous knowledge of multip le-core nodes, in this scenario, each core will be treated as a single component with no comm unicative relation with its neighboring cores within the same node, resulting in co mmunication rather than processin g which leads to more idle time for that specific c ore. To solve the problem of the l ow overall results obtained, a new para meter to define the underly ing mechanism for t he running MPI library was introduced in next test-runs, and as e xpected, the results obtained reached a m aximum of 662.6 Gflops. This w as the expected result at the beginning of t he test, but was not achievable wi th our prelim inary runs since it additionally needed a defi nition of the underl ying fabric for In tel's MPI to use in order t o achieve such performance. The additi on of the option l ead to an execution aware o f both com munication types avai lable for this cluster which are the Gigabi t communication bet ween the nodes and the shared m emory comm unication withi n a node's cores. This essenti ally leads to the better perf ormance achieved. Another aspect of these tests was how the cluster was viewed or perceived ph ysically and how that differed from the way we should look at it. Wh en dealing with Mul ti-core processors, an abstract view is needed as well, and the best method was to use diagram s, such as in figure 1 and 2. These fi gures depict how a 6x16 t opology was chosen and how processes are distri buted am ong nodes. It can be noticed from the figures that proce sses are passed in a round- robin way across different cores and not n odes. In this cluster, each node has 8 processors, so it can be viewed as 8 different single-core p rocessor nodes. This di stributi on of processes affects the overall performance as well. Unexpectedly, the 6x16 grid performed we ll as a result of having more related processors on a single node, as well as less communication is needed between the processes across the grid. In this configur ation, each of the running processes can heavily utilize the shared cache and local communication bri dges to accomplish some of the tasks. On the other hand, netwo rk communicatio n happens while p rocessing cores are being utilized for processin g. Table 6 summarizes the best as well as unexpected results obtained from several test-runs. From Table 6, we can noti ce the drastic performance obt ained by changing the way we deal wi th modern day com puter clusters. A hi gh increase in performance was the result of an experience we attained when dealing with this new typ es of clusters. Table 6. General summary of trials. Option Types Gflops Obtained PxQ Problem size N Commen ts OpenMPI, MPI 207 Gflops 8x12 140000 Low results, tested with different topologies and ma pping schemes. Intel MPI, fabric-less 204 Gflops 8x12 140000 Another low result, although expectations were high, using non-MPI-only network. Intel MPI, fabric-less 224.6 Gflops 6x16 140000 Good indication of the 6x16 topology which lead us to choose it in later phases. Intel MPI, TCP+Shared Mem. 662.6 Gflops 6x16 140000 The hybrid mode reaches a new peak, 60% overall efficiency. In general, we can summ arize the main obser vations gather ed from a modern day cl uster in the followin g points: 1. The network s ignificantl y affects the Cluster’s perform ance. Thus, separ ating the MPI net work from the normal network may result in better overall performance of the cluster. 2. The cluster's com pute processors and the architecture, of which the proce ssors inherit their features from, should be stu died as di fferent pr ocessors per form di fferently. 3. The MPI impl ementat ion in use must be considered si nce not all pr ovide the same feat ures and per form similarly as shown in Table 6. Although all libraries can run MPI jo bs, as well as the different approac hes available for cluster users. An example of MPI libra ries available are the Open MPI library and the Intel MPI library implementation. 4. Both the physical and abstract aspects are i mportant, de tails of how MPI applications process data must at least be known by a cluster administrator, as these details will determine how a cluster perfor ms. 5. Multi Node cluster scalability is still deb atable; scaling a cluster without upgrad ing network bandwidth may not achieve i ts goal of per formance im provemen t as we found out in this wor k. Perform ance degradation cau sed by scaling up was relatively hi gh; we assume a faster network will yield better performance in relation to scalability for these types of clusters. 6 Conclusion From the obtained result s, we can observe the difference b etween the MPI-OpenMP hy brid implem entations and an MPI-only implementation. Moreov er, how this may heavily affect a benchmark test when done on Multi-core clusters. The numbers obt ained are the results of test-runs e xecuted on a 12 node cluster wi th 96 cores. It was done for the purpose of knowi ng how scalable the cluster was and ho w good was it t o perform, while t hat happened, ma ny more observations were recorded th at we hope will bene fit the research ers and practitioners wo rking with such clusters. References 1. Buyya, R. (ed.): “High Performance Cluster Com puting: Archit ectures and S ystems”, Volume 1, Pr entice Hall PTR, NJ, US A (1999) 2. Dongarra, J.J., Luszczek, P., Petitet, A.: The LINPACK Benchmark: Pa st, Present, and Future, Concurrency and Computation: Practice and Experience, Vol. 15, pp. 1-18 (2003) 3. Gepner, P., Fraser, D.L., Kowalik, M.F.: Seco nd Generation Quad-Core Intel Xeon Processors Bring 45 nm Technology and a New Level of Performance to HPC Applications. M. Bubak et al. (Eds.): ICC S 2008, Part I, Lecture Notes in Computer Science, LNCS 5101, Springe r-Verlag, pp. 417- 426 (2008) 4. Pase, D.M.: Linpack HPL Performance on IBM eServer 326 and xSeries 336 Servers. IBM, July 2005. availabl e at: ftp://ftp.softw are.ibm.com/eser ver/benchmarks/wp_Linpack_072905.pdf 5. Saini, S., Ciotti, R., Gunney, B.T.N ., Spelce, T.E., Koniges, A., Dossa, D., Adamid is, P., Rabenseifner, R., Ti yyagura, S.R. , Mueller, M.,: Performance Eva luation of Supercomputers using HPCC and IMB Be nchmark s. Journal of Computer and System Sciences, Volume 74 Issue 6, DOI: 10.1016/j.jcss.2007.07.002 (2007) 6. Rane, A., Stanzione, D.: Experiences in Tunin g Performance of Hybrid MPI/OpenMP Applications on Quad-core Systems. In Proceedings of the 10th LCI International Conference on High-Performance Clustered Computing (2009) 7. Tools for the Classic HPC Develope r. Whitep aper published b y The Portland Group, v 2.0 September, available at: http://www.pgroup.com/lit/pgi _whitepaper_tools4hpc.pdf (2008) 8. Wu, X., Tayl or, V.: Performance Ch arac teristics of Hybrid MPI/OpenMP Implem entations of NAS Parallel Benchmarks SP and BT on Large-Scale Multicore Clusters. The C omputer Journal (to be published) (2011) 9. http://www.rocksclusters.org/ 10. http://www.centos.org/

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment