A theory of multiclass boosting

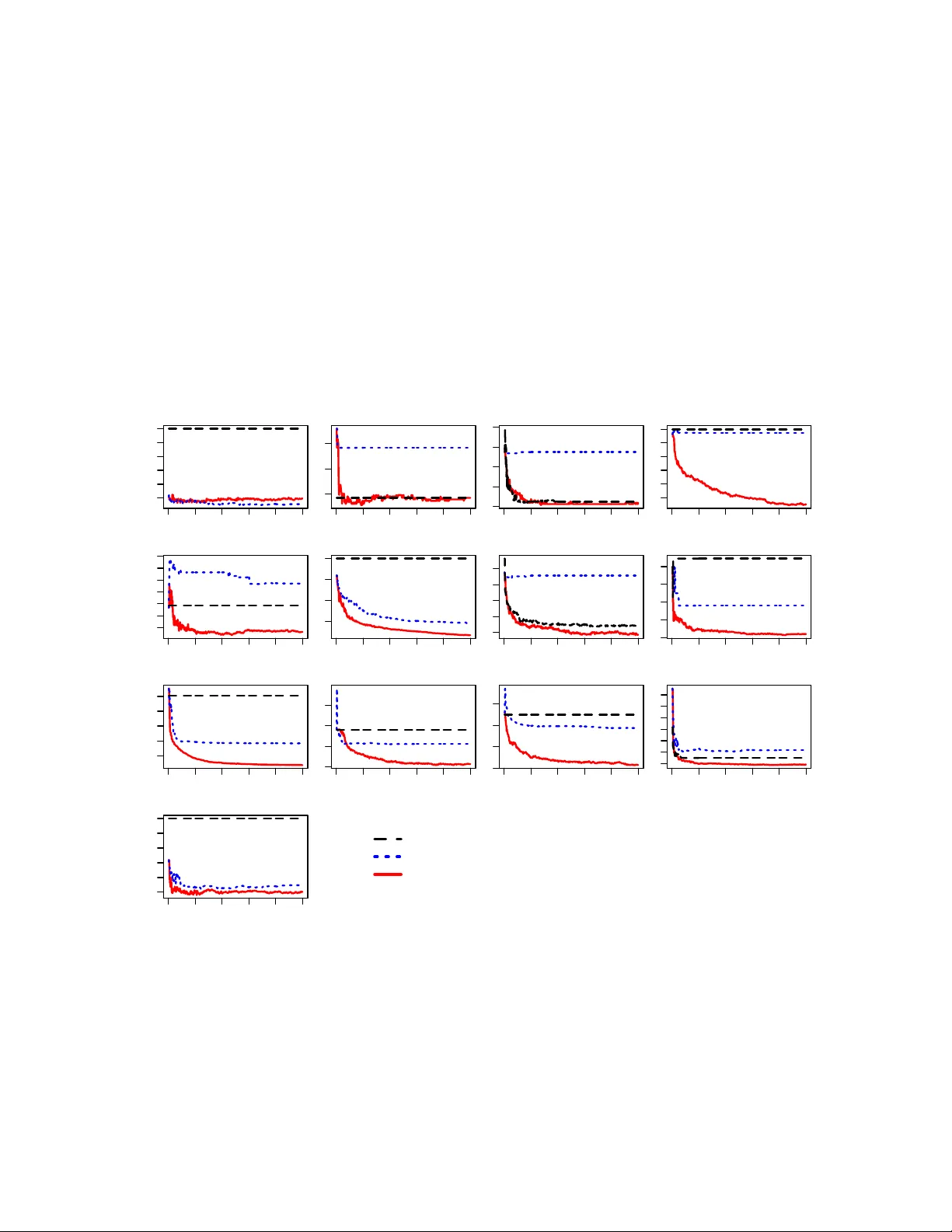

Boosting combines weak classifiers to form highly accurate predictors. Although the case of binary classification is well understood, in the multiclass setting, the "correct" requirements on the weak classifier, or the notion of the most efficient bo…

Authors: Indraneel Mukherjee, Robert E. Schapire