Generalised elastic nets

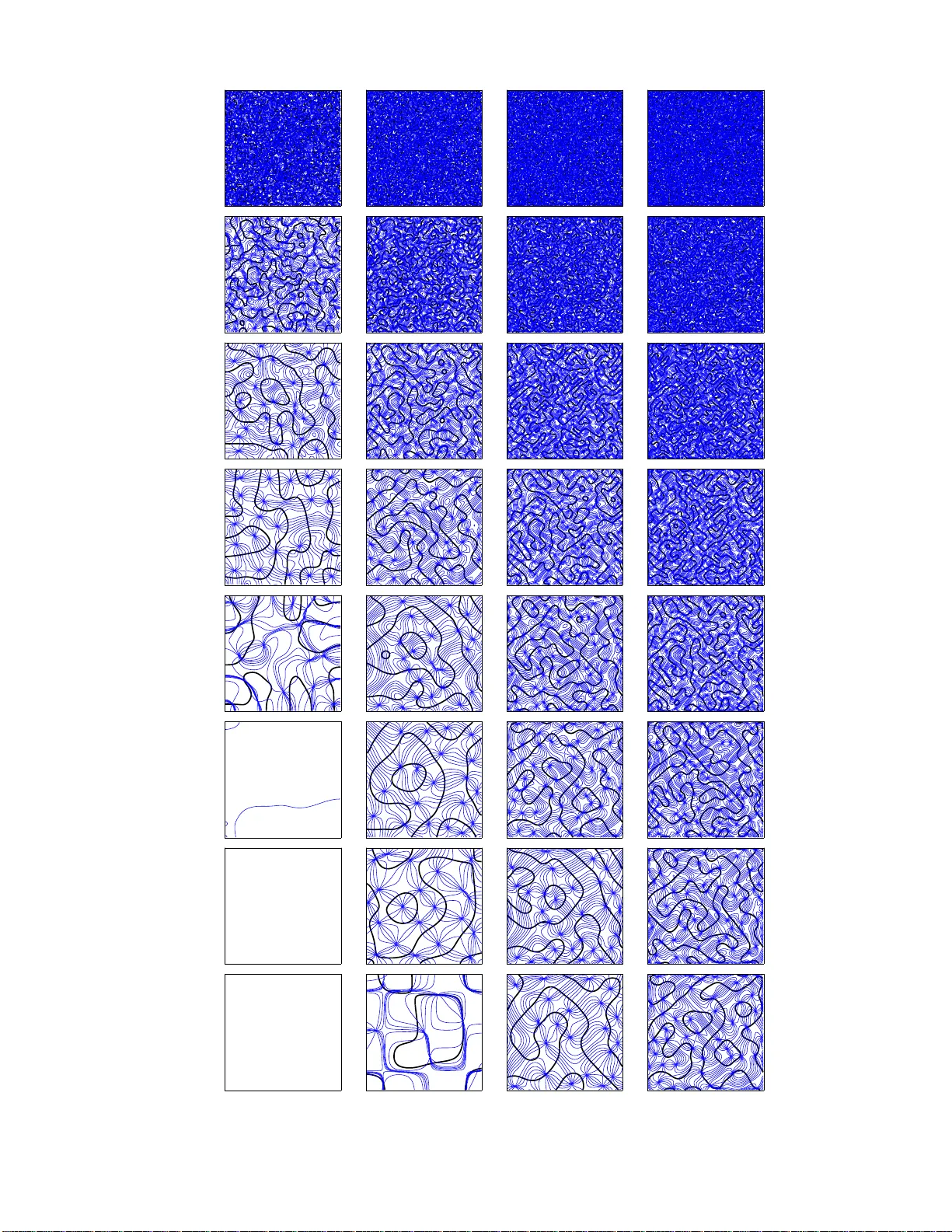

The elastic net was introduced as a heuristic algorithm for combinatorial optimisation and has been applied, among other problems, to biological modelling. It has an energy function which trades off a fitness term against a tension term. In the origi…

Authors: Miguel A. Carreira-Perpiñán, Geoffrey J. Goodhill