G-NetMon: A GPU-accelerated Network Performance Monitoring System for Large Scale Scientific Collaborations

Network traffic is difficult to monitor and analyze, especially in high-bandwidth networks. Performance analysis, in particular, presents extreme complexity and scalability challenges. GPU (Graphics Processing Unit) technology has been utilized recen…

Authors: Wenji Wu, Phil DeMar, Don Holmgren

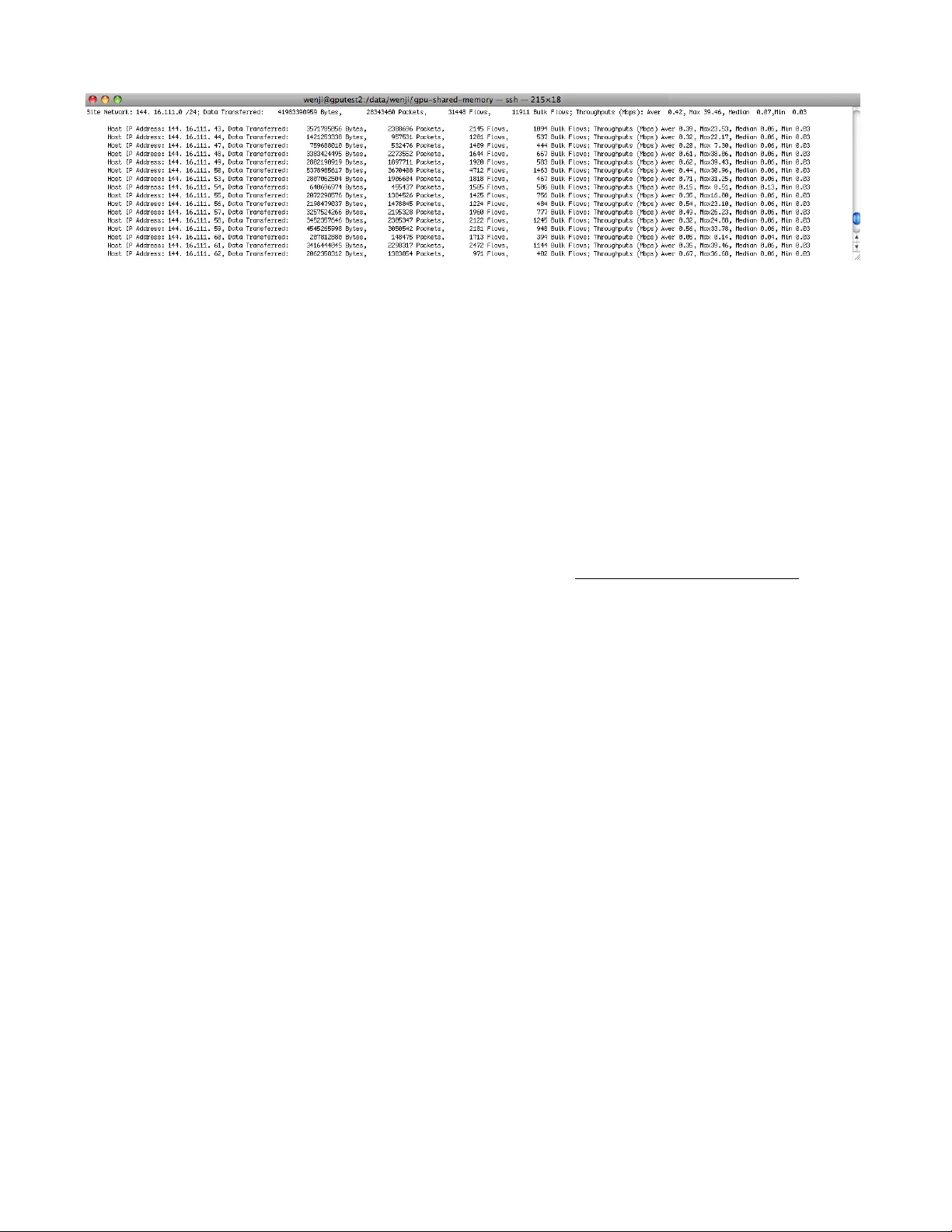

Wenji Wu, Phil DeMar, Don Holmgren, Amitoj Singh, Ruth Pordes
Computing Division, Fermilab, Batavia, IL 60510, USA
E-mail: {wenji, demar, djholm, amitoj, ruth}@fnal.gov

Abstract: Network traffic is difficult to monitor and analyze, especially in high-bandwidth networks. Performance analysis, in particular, presents extreme complexity and scalability challenges. GPU (Graphics Processing Unit) technology has recently been used to accelerate general-purpose scientific and engineering computing. GPUs offer extreme thread-level parallelism with hundreds of simple cores. Their data-parallel execution model can rapidly solve large problems with inherent data parallelism. At Fermilab, we have prototyped a GPU-accelerated network performance monitoring system, called G-NetMon, to support large-scale scientific collaborations. In this work, we explore new opportunities in network traffic monitoring and analysis with GPUs. Our system exploits the data parallelism that exists within network flow data to provide fast analysis of bulk data movement between Fermilab and collaboration sites.
Experiments demonstrate that G-NetMon can rapidly detect sub-optimal bulk data movements.

Keywords: GPU, Flow Analysis, Network Performance Monitoring, High-speed networks.

I. INTRODUCTION

Large-scale research efforts such as Large Hadron Collider experiments and climate modeling are built upon large, globally distributed collaborations. The datasets associated with these projects commonly reach petabytes or tens of petabytes per year. Efficiently retrieving, storing, analyzing, and redistributing the datasets generated by these projects is extremely challenging. Such projects depend on predictable and efficient data transfers between collaboration sites. However, achieving and sustaining efficient data movement over high-speed networks with TCP remains an ongoing challenge. Obstacles to efficient and sustainable data movement arise from many causes and can create major impediments to the success of large-scale science collaborations. In practice, most sub-optimal data movement problems go unnoticed. Ironically, although various performance debugging tools and services are available to assist in identifying and locating performance bottlenecks, these tools cannot be applied until a problem is detected. In many cases, no effective measures are taken to fix a performance problem simply because the problem is either not detected at all or not detected in a timely manner. It is therefore extremely beneficial to have tools or services that can quickly detect sub-optimal data movement for large-scale scientific collaborations.

Generally speaking, network traffic is difficult to monitor and analyze. Existing tools like Ping, Traceroute, OWAMP [1] and SNMP provide only coarse-grained monitoring and diagnosis data about network status [2][3].
It is very difficult to use these tools to detect sub-optimal data movement. For example, SNMP-based monitoring systems typically provide 1-minute or 5-minute averages of the network performance data of interest, and these averages may obscure the instantaneous network status. At the other extreme, packet trace analysis [4][5] scrutinizes traffic on a per-packet basis and requires high-performance computation and large-volume storage. It faces extreme scalability challenges in high-speed networks, especially as network technology evolves towards 100 Gbps. Flow-based data analysis, using router-generated flow data such as Cisco's NetFlow [6], lies between the two extremes. It produces a finer-grained analysis than SNMP, yet is much less complex and voluminous than packet trace analysis. In this paper, we use flow-based analysis to detect sub-optimal data movements for large-scale scientific collaborations.

[Figure 1: Number of Flow Records Generated at Fermilab Border Routers]

To quickly detect sub-optimal data movements, it is necessary to calculate transfer rates between collaboration sites on an ongoing basis. Sub-optimal bulk data movement is detected if the associated transfer rate falls below some standard that is either predefined or provided by other network services. To this end, we use network flow data to calculate transfer rates between Fermilab and collaboration sites. Our flow-based analysis requires traffic scrutiny on a per-flow-record basis. In high-bandwidth networks, hundreds of thousands of flow records are generated each minute.
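The per-flow rate calculation that this detection relies on is simple enough to sketch in a few lines. This is an illustrative Python sketch, not G-NetMon's code: the function names, the millisecond timestamps, and the 1 Mbps example standard are assumptions chosen for illustration.

```python
def flow_rate_bps(octets, first_ms, last_ms):
    """Average rate of one flow record in bits per second.

    Hypothetical helper: octets is the byte count of the flow; first_ms and
    last_ms are the timestamps of its first and last packet in milliseconds.
    """
    duration_s = (last_ms - first_ms) / 1000.0
    if duration_s <= 0:
        return None  # zero-duration flow: no meaningful rate
    return octets * 8 / duration_s


def is_suboptimal(rate_bps, standard_bps):
    """Flag a transfer whose rate falls below the (assumed) standard."""
    return rate_bps is not None and rate_bps < standard_bps


# Example: 125 MB moved in 10 s is 100 Mbps, well above a 1 Mbps standard.
rate = flow_rate_bps(125_000_000, first_ms=0, last_ms=10_000)
print(rate, is_suboptimal(rate, standard_bps=1_000_000))
```

Returning `None` for zero-duration flows keeps single-packet records from producing division errors or meaningless rates.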
Fermilab is the Tier-1 Center for the Large Hadron Collider's (LHC) Compact Muon Solenoid (CMS) experiment, as well as the central data center for several other large-scale research collaborations. Scientific data (e.g., CMS) dominates off-site traffic volumes in both the inbound and outbound directions. Every hour, millions of flow records are generated at Fermilab border routers (Figure 1). Processing that much flow data in near real time requires both enormous raw compute power and high I/O throughput. Recently, GPU technology has been employed to accelerate general-purpose scientific and engineering computing. GPUs offer extreme thread-level parallelism with hundreds of simple cores. The massive array of GPU cores offers an order of magnitude more raw computation power than a conventional CPU. The GPU's data-parallel execution model and ample memory bandwidth effectively hide memory access latency and can boost I/O-intensive applications with inherent data parallelism.

At Fermilab, we have prototyped a GPU-accelerated network performance monitoring system (G-NetMon) for our large-scale scientific collaborations. In this work, we explore new opportunities in network traffic monitoring and analysis with GPUs. G-NetMon exploits the inherent data parallelism that exists within network flow data and uses a GPU to rapidly calculate transfer rates between Fermilab and collaboration sites in near real time. Experiments demonstrate that a GPU can accelerate network flow data processing by a factor of 5 or more, and that G-NetMon can rapidly detect sub-optimal bulk data movement.

The rest of the paper is organized as follows. In Section 2, we discuss background and related work. In Section 3, we introduce the G-NetMon design. In Section 4, we discuss the experiments we used to evaluate how a GPU can accelerate network flow data processing in high-speed network environments.
We also evaluate how effectively our system detects sub-optimal data transfers between Fermilab and collaboration sites. Finally, Section 5 concludes the paper.

II. BACKGROUND & RELATED WORK

The rapidly growing popularity of GPUs makes them a natural choice for high-performance computing. Our GPU-accelerated network performance monitoring system is based on NVIDIA's Tesla C2070, featuring NVIDIA's latest Fermi GPU architecture. In the following sections, we give a brief introduction to NVIDIA's CUDA programming model and the Fermi GPU architecture.

A. CUDA and the Fermi GPU Architecture

CUDA is the hardware and software architecture that enables NVIDIA GPUs to execute programs written in C, C++, and other languages. It provides a simple programming model that allows application developers to easily program GPUs and explicitly express parallelism. A CUDA program consists of parts that are executed on the host (CPU) and parts that are executed on the GPU. The parts that exhibit little or no data parallelism are implemented as sequential CPU threads. The parts that exhibit a rich amount of data parallelism are implemented as GPU kernels. The GPU instantiates a kernel program on a grid of parallel thread blocks. Each thread within a thread block executes an instance of the kernel and has a per-thread ID, program counter, registers, and private memory. Threads within a thread block can cooperate among themselves through barrier synchronization and shared memory. Thread blocks are grouped into grids, each of which executes a unique kernel. Each thread block has a unique block ID, and a thread indexes its data with its respective thread ID and block ID.

NVIDIA's Fermi GPU architecture consists of multiple streaming multiprocessors (SMs), each consisting of 32 CUDA cores. A CUDA core executes one floating-point or integer instruction per clock for a thread.
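The thread and block indexing described above can be emulated on the host to show how a one-dimensional launch maps one thread to each flow record. This is a minimal Python sketch, not CUDA code: in a real kernel the two loops disappear and each thread computes `i = blockIdx.x * blockDim.x + threadIdx.x` once. The function name and the block size of 256 are illustrative assumptions.

```python
import math


def emulate_1d_launch(n_records, block_dim):
    """Emulate a 1-D CUDA grid in which each thread handles one flow record.

    Hypothetical helper: returns the number of blocks launched and the record
    indices touched, in the order this sequential emulation visits them.
    """
    grid_dim = math.ceil(n_records / block_dim)  # enough blocks to cover every record
    touched = []
    for block_idx in range(grid_dim):            # plays the role of blockIdx.x
        for thread_idx in range(block_dim):      # plays the role of threadIdx.x
            i = block_idx * block_dim + thread_idx  # global thread index
            if i < n_records:                    # the last block may be only partly used
                touched.append(i)
    return grid_dim, touched


grid_dim, touched = emulate_1d_launch(n_records=1000, block_dim=256)
print(grid_dim, len(touched))  # 4 1000
```

The bounds check matters because the grid is rounded up to whole blocks; without it, the surplus threads in the last block would read past the end of the record array.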
Each SM has 16 load/store units, allowing source and destination addresses to be calculated for sixteen threads per clock, and 4 special function units (SFUs) to execute transcendental instructions. The SM schedules threads in groups of 32 parallel threads called warps. Each SM features two warp schedulers and two instruction dispatch units, allowing two warps to be issued and executed concurrently. The execution resources in an SM include registers, thread block slots, and thread slots. These resources are dynamically partitioned and assigned to threads to support their execution. We list these resource limits per SM in Table 1. In addition, the Fermi GPU has six 64-bit memory partitions, for a 384-bit memory interface, supporting up to a total of 6 GB of GDDR5 DRAM memory. A host interface connects the GPU to the CPU via PCI-Express. The GigaThread global scheduler distributes thread blocks to the SM thread schedulers.

Table 1. Physical Limits per SM for Fermi GPU
Maximum Warps: 48
Maximum Threads: 1536
Maximum Blocks: 8
Shared Memory: 48K
Register Count: 32K

B. GPU in Network Related Applications

GPUs offer extreme thread-level parallelism with hundreds of simple cores. The massive array of GPU cores offers an order of magnitude more raw computation power than a conventional CPU. The GPU's data-parallel execution model and ample memory bandwidth fit nicely with most networking applications, which have inherent data parallelism at either the packet level or the network flow level. Recently, GPUs have delivered substantial performance boosts to many network applications, including GPU-accelerated software routers [7], pattern matching [8][9][10], network coding [11], IP table lookup [8], and cryptography [12]. So far, the application of GPUs in networking has mainly focused on the packet level.
In this work, we use a GPU to accelerate network flow data analysis.

C. Flow-based Analysis

Flow-based analysis is widely used in traffic engineering [13][14], anomaly detection [15][16], traffic classification [17][18], performance analysis, and security [19][20][21]. For example, Internet2 uses flow data to generate traffic summary information by breaking the data down in a number of ways, including by IP protocol, by well-known service or application, by IP prefixes associated with "local" networks, or by the AS pairs between which the traffic was exchanged. In [15], the subspace method is applied to flow traffic to detect network-wide anomalies.

III. G-NETMON SYSTEM DESIGN

To quickly detect sub-optimal data movements, G-NetMon uses network flow data to calculate transfer rates between Fermilab and collaboration sites on an ongoing basis. A sub-optimal bulk data movement is detected if the associated transfer rates fall below some standard that is either predefined or provided by other network services. Our GPU-accelerated network performance monitoring system is deployed as shown in Figure 2. It receives flow data from site border routers as well as internal LAN routers. The routers export NetFlow V5 records. The flow data is complete, not sampled.

A. System Hardware Configuration

Our flow-based analysis requires traffic scrutiny on a per-flow-record basis. Fermilab is the US-CMS Tier-1 Center and the main data center for a few other large-scale research collaborations. Every hour, millions of flow records are generated at Fermilab border routers (Figure 1). Considering the increasing volume of scientific data created every year, coupled with the evolution towards 100 GigE network technologies, we anticipate that our network flow data analysis requirements will increase accordingly.
Therefore, G-NetMon must not only handle current network conditions but also be able to accommodate the large growth in traffic expected in the near future. For now, Fermilab border routers generate fewer than 5,000,000 flow records every hour. Our target is for G-NetMon to handle 50,000,000 flow records per hour.

[Figure 2: G-NetMon Deployment. Components shown: R&E networks, FNAL site border routers, hub, flow data feed, and the network performance monitoring system (CPUs, RAM, NIC, Tesla C2070 GPU).]

G-NetMon is implemented on a system that consists of two 8-core 2.4 GHz AMD Opteron 6136 processors, two 1 Gbps Ethernet interfaces, 32 GB of system memory, and one Tesla C2070 GPU. The Tesla C2070 GPU features the Fermi GPU architecture. Its key features are listed in Table 2.

Table 2. Tesla C2070 Key Features
SMs: 14
Cores: 448
Core Freq.: 1.15 GHz
Global Memory Size: 6 GB GDDR5
Memory Bandwidth: 144 GB/s
System Interface: PCIe x16 Gen2
Double Precision Peak Performance: 515 GFlops

B. System Architecture

The G-NetMon architecture is shown in Figure 3. The system consists of several parts that are executed on either the host (CPU) or the GPU. Following the CUDA design principle, the parts that exhibit little or no data parallelism are implemented as sequential CPU threads; the parts that exhibit a rich amount of data parallelism are implemented as GPU kernels.

[Figure 3: G-NetMon Architecture. CPU domain: Flow Data Receiver (NetFlow v5 over UDP), Site Registration, Flow Data Store, Site Catalog, NetPerf Monitoring (performance warnings). GPU domain: copies of Flow Data Store and Site Catalog, Catalog Kernel, GPU Site Catalog, TransRate Kernel, TransRate Statistics.]

B.1 CPU Domain

Three CPU threads are implemented in the CPU domain.
Site Registration Thread: registers scientific subnets with our network performance monitoring system. The registered subnets are stored in the Site Catalog (a data buffer in host memory), which helps to identify scientific data transfers between Fermilab and collaboration sites. Large-scale research efforts like LHC CMS are built upon large, globally distributed collaborations. However, the computing and networking resources available at different collaboration sites vary greatly. Larger sites, such as Fermilab, have data centers comprising thousands of computation nodes that function as massively scaled, highly distributed cluster-computing platforms. These sites are usually well connected to the outside world with high-bandwidth links of 10 Gbps or more. On the other hand, some small collaboration sites have limited computing resources and significantly lower-bandwidth network connectivity. Therefore, scientific data transfers between collaboration sites can vary greatly in performance and scale, and it is difficult to design machine-learning algorithms that automatically identify scientific data transfers from traffic patterns or characteristics. However, for a large-scale scientific application, the collaboration relationships between research institutions tend to be relatively static. In addition, the systems and networks assigned to a scientific application at a site are relatively fixed. Large-scale scientific data movement usually occurs between specific subnets at each site. Therefore, by registering these subnets with our system, we can easily monitor data transfers between Fermilab and its collaboration sites through flow analysis of traffic between those subnets.

Flow Data Receiver Thread: a UDP daemon that receives NetFlow V5 packets from border routers.
The received flow records are stored in Flow Data Store (a data buffer in host memory). In the current implementation, Flow Data Store is designed to hold 50,000,000 flow records. Since a NetFlow V5 flow record is less than 50 bytes, these 50,000,000 flow records require approximately 2.5 GB of memory. Processed flow records in Flow Data Store are periodically cleaned out and stored to disk to make space for subsequent network flow data.

NetPerf Monitoring Thread: the main thread of our network performance monitoring system. Periodically (each hour), it copies Site Catalog and Flow Data Store to GPU memory and launches the corresponding GPU kernels to calculate the transfer rates between Fermilab and its collaboration sites. When the GPU computation is completed, the NetPerf Monitoring Thread synthesizes the final results. A sub-optimal bulk data movement is detected if the associated transfer rates are below some predefined standard. Considering that TCP traffic is elastic, we use transfer rate medians as our evaluation criterion. For a given site, network performance warnings are issued if the associated median is less than 1 Mbps for two consecutive hours.

B.2 GPU Domain

1) GPU Kernels

In the GPU domain, we have implemented two GPU kernels, Catalog Kernel and TransRate Kernel.

Catalog Kernel: builds GPU Site Catalog, a hash table of the registered scientific subnets in GPU memory, from Site Catalog. TransRate Kernel uses GPU Site Catalog to rapidly assign flow records to their respective subnets by examining their source or destination IP addresses. To make the hash table easy to implement and fast to search, all registered networks are transformed into /24 subnets and then entered in GPU Site Catalog. For the purpose of identifying scientific data transfers, a /24 subnet is large enough for most collaboration sites.
Any network larger than /24 is divided into multiple entries in the hash table. Since GPU Site Catalog is mainly used for lookup operations and is rarely updated, there is no need to implement locks to protect against unsynchronized write accesses. If an update is necessary, the table is rebuilt from scratch.

TransRate Kernel: calculates the transfer rates between Fermilab and its collaboration sites. TransRate Kernel exploits the inherent data parallelism that exists within network flow data. When the GPU instantiates TransRate Kernel on a grid of parallel threads, each thread handles a separate flow record. On a C2070 GPU, thousands of flow records can be processed simultaneously. To handle a flow record, a TransRate thread first attempts to assign the flow record to its respective site and then calculates the corresponding transfer rates. Using a hash of the /24 subnet of the flow record's source or destination IP address, TransRate Kernel looks up the site to which the flow record belongs in GPU Site Catalog. Because each flow record includes data such as the number of packets and bytes in the flow and the timestamps of the first and last packets, calculating the transfer rate is simple. However, two additional factors must be considered. First, because a TCP connection is bidirectional, it generates two flow records, one in each direction. In practice, a bulk data movement is usually unidirectional, and only the flow records in the forward direction reflect the true data transfer activity. The flow records in the other direction simply record the pure ACKs of the reverse path and should be excluded from transfer rate calculations. These flow records can easily be filtered out by their average packet size, which is usually small. Second, a bulk data movement usually involves frequent administrative message exchanges between the two endpoints.
A significant number of flow records are generated by these activities. These records usually contain a small number of packets with short durations; their calculated transfer rates are generally of low accuracy and high variability. These flow records are also excluded from our transfer rate calculation.

We calculate transfer rates (maximum, minimum, average, median) for each registered site and for each host in a registered site. To calculate the median statistics, we create an array of buckets for each host to count transfer rate frequencies. Each bucket represents a 10 kbps interval. To save space, all transfer rates greater than 100 Mbps are counted in the last bucket. Therefore, for each host, we maintain a bucket array of size 10001. Bucket n (0 ≤ n < 10000) counts the flow rates that fall within the interval [n × 10 kbps, (n+1) × 10 kbps), and bucket 10000 counts all rates of 100 Mbps and above. From the resulting bucket counts, we determine the host and site medians. We use atomic CUDA operations to calculate and store all transfer rates in order to prevent unsynchronized data accesses by the threads.

2) GPU Kernel Optimization

The Catalog Kernel is relatively simple, with few opportunities for optimization. In fact, its functionality could be included in the TransRate Kernel. However, because the overhead of launching a kernel is negligible [7], we have chosen to implement it as an independent kernel to preserve a modular design. Our TransRate Kernel is optimized using several approaches:
• Register Spilling Optimization. Without this optimization, a TransRate thread uses 47 registers. These registers hold compiler-generated variables. Because registers are on-chip memories that can be accessed rapidly, a single thread's performance increases if registers are readily available.
However, when we used the CUDA Occupancy Calculator to measure SM occupancy with varying block sizes, we were surprised to find that the occupancy rates were unacceptably low (Table 3). At such low SM occupancy, overall GPU performance is greatly degraded: the improvement in each individual thread cannot make up for the loss in overall thread parallelism. To raise GPU occupancy, we limit the maximum number of registers used by TransRate to 20 by compiling this kernel with the "-maxrregcount 20" option. As shown in Table 3, this register spilling optimization is effective: the best GPU occupancy achieved as the number of threads per block is varied is now 100%.
• Shared Memory. Shared memories are on-chip memories that can be accessed at very high speed in a highly parallel manner. The TransRate Kernel uses shared memory as much as possible to accelerate flow data processing.
• Non-caching Load. On the Fermi architecture, global memory supports two types of loads, caching and non-caching. The caching load is the default mode. It first attempts to load from the L1 cache, then from the L2 cache, and finally from global memory; its load granularity is 128 bytes. The non-caching load first attempts to hit in L2, and then global memory; its load granularity is 32 bytes. Our experiments show that the non-caching load can boost performance by at least 10%, so the optimized TransRate Kernel uses non-caching loads to access Flow Data Store.

Table 3. SM Occupancy Rates at Different Kernel Block Sizes

| Threads per Block | 64 | 128 | 256 | 512 |
|---|---|---|---|---|
| SM Occupancy @ 47 Registers/Thread | 33% | 42% | 33% | 33% |
| SM Occupancy @ 20 Registers/Thread | 33% | 67% | 100% | 100% |

IV. EXPERIMENTAL EVALUATION

In this section, we present the results of our experimental evaluation of G-NetMon.
First, we evaluate the performance of G-NetMon and study how a GPU can accelerate network flow data processing in high-volume network environments. Second, we deploy G-NetMon in Fermilab's production environment and evaluate how effectively it detects sub-optimal data transfers between Fermilab and its collaboration sites.

A. Performance Evaluation

At present, Fermilab border routers produce fewer than 5,000,000 flow records per hour. However, G-NetMon is designed to handle a maximum load of 50,000,000 flow records per hour. To evaluate the capabilities and performance of our system at such a network load, we collected more than a day's worth of flow records from the border routers and fed G-NetMon 50,000,000 flow records. The Flow Data Receiver thread receives these flow records and stores them in Flow Data Store. We also selected the top 100 /24 scientific subnets, by traffic volume, that transfer to and from Fermilab, and registered them with Site Catalog.

A.1 GPU Performance & Optimization

To evaluate GPU performance and the effects of the various GPU kernel optimization approaches, we implemented several G-NetMon variants with different optimizations enabled. Our objective is to compare the following:
• Shared-Memory vs. Non-Shared-Memory. In the Non-Shared-Memory variants, the TransRate Kernel does not use shared memory, and all operations are executed on GPU global memory.
• Caching-Load vs. Non-Caching-Load.
• Hash-Table-Search vs. Non-Hash-Table-Search (sequential search). To calculate transfer rates between Fermilab and collaboration sites, flow records must first be assigned to their respective sites. G-NetMon implements a hash table to perform this function. We have also implemented a Non-Hash-Table method (i.e., sequential search) in which all of the registered scientific subnets are maintained in a sequential list.
To categorize a flow record, the TransRate Kernel searches the list one entry at a time until a matching site, or none, is found.

We list all the G-NetMon variants and their enabled optimizations in Table 4. In the table, "Y" indicates that the corresponding optimization is enabled, while "N" indicates that it is not used. We enabled the register spilling optimization when compiling all of these GPU variants, so the TransRate Kernel is always launched with 100% occupancy. We ran experiments to measure the rates at which these G-NetMon variants process network flow data and compared them with the performance of the fully optimized G-NetMon.

Table 4. GPU Variants with Different Features

| GPU Variant | Hash Table | Shared Memory | Caching Load |
|---|---|---|---|
| G-NetMon | Y | Y | N |
| NH-S-C-GPU | N | Y | Y |
| NH-NS-C-GPU | N | N | Y |
| NH-NS-NC-GPU | N | N | N |
| H-NS-C-GPU | Y | N | Y |
| H-NS-NC-GPU | Y | N | N |
| H-S-C-GPU | Y | Y | Y |
| NH-S-NC-GPU | N | Y | N |

[Figure 4: GPU Processing Time]

The NetPerf Monitoring thread copies Site Catalog and Flow Data Store to GPU memory and launches the corresponding GPU kernels to calculate the transfer rates. We evaluate how fast the GPU can process these data. The experimental results are shown in Figure 4. To handle 50,000,000 flow records, G-NetMon takes approximately 900 milliseconds. Figure 4 also shows the effects of the various GPU kernel optimizations. For example, in combination with the hash table and non-caching load, shared memory accelerates flow record processing by as much as 9.51%. As discussed above, shared memories are on-chip memories that can be accessed at very high speed in a highly parallel manner. The experimental results also show that the hash table mechanism significantly boosts G-NetMon performance, by 11% to 20%. We used NVIDIA's Compute Visual Profiler to profile the TransRate Kernel execution. Figure 5 gives the "Instruction Issued" comparison of Hash-Table vs.
Non-Hash-Table for all GPU code variants. These experiments show that the hash table mechanism can significantly reduce flow record categorization overhead.

[Figure 5: Hash-Table (in red) vs. Non-Hash-Table (in blue)]

To our surprise, Figure 4 shows that the non-caching load boosts performance significantly, by more than 10%. We speculate that the non-caching-load mode better fits G-NetMon's memory access pattern: with the caching load, the performance gained from the L1 cache does not compensate for the performance lost to the larger load granularity. Table 5 compares the caching load and the non-caching load across several memory access metrics. The caching load causes higher memory traffic, degrading overall performance.

Table 5. Caching-Load vs. Non-Caching-Load

| Metric | G-NetMon (non-caching load) | H-S-C-GPU (caching load) |
|---|---|---|
| L2 Read Requests | 1.61835e+08 | 2.98727e+08 |
| L2 Write Requests | 1.15432e+07 | 1.61657e+07 |
| Global Memory Read Requests | 2.17803e+08 | 2.48466e+08 |
| Global Memory Write Requests | 3.01409e+07 | 3.22455e+07 |

A.2 GPU vs. CPU

To evaluate how a GPU can accelerate network flow data processing in high-bandwidth network environments, we compare G-NetMon with corresponding CPU implementations. We implemented two CPU variants, termed H-CPU and NH-CPU. Like G-NetMon, H-CPU uses a hash table to rapidly assign flow records to their respective sites and then calculates the corresponding transfer rates. In contrast, NH-CPU implements the same Non-Hash-Table method (sequential search) as NH-S-NC-GPU, in which all of the registered scientific subnets are maintained in a sequential list. To assign a flow record, the CPU searches the list one entry at a time until a matching site, or none, is found. We ran H-CPU and NH-CPU each on a single 2.4 GHz AMD Opteron 6136 core, with the same data set as used above.
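The two site-assignment strategies compared here, a /24-keyed hash table (as built by the Catalog Kernel) versus a sequential list search, can be sketched on the host in Python. This is a hedged illustration, not the authors' implementation: a Python `dict` stands in for the GPU hash table, and the function names, the source-before-destination lookup order, and the documentation-range example prefixes are all assumptions.

```python
import ipaddress


def build_site_catalog(registered):
    """Hash-table variant (hypothetical): map each /24 key (address >> 8) to a site.

    A network larger than /24 expands into one entry per /24; a network
    smaller than /24 is widened to its enclosing /24.
    """
    catalog = {}
    for site, prefix in registered:
        net = ipaddress.ip_network(prefix)
        first_key = int(net.network_address) >> 8
        last_key = int(net.broadcast_address) >> 8
        for key in range(first_key, last_key + 1):
            catalog[key] = site
    return catalog


def hash_lookup(catalog, src, dst):
    """O(1) per endpoint: site of the first endpoint whose /24 is registered."""
    for addr in (src, dst):
        site = catalog.get(int(ipaddress.ip_address(addr)) >> 8)
        if site is not None:
            return site
    return None


def sequential_lookup(networks, src, dst):
    """Non-hash variant (hypothetical): scan the registered networks one by one."""
    for addr in (src, dst):
        ip = ipaddress.ip_address(addr)
        for site, net in networks:
            if ip in net:
                return site
    return None


registered = [("site-A", "192.0.2.0/24"), ("site-B", "10.20.0.0/16")]
catalog = build_site_catalog(registered)
networks = [(site, ipaddress.ip_network(p)) for site, p in registered]
print(len(catalog), hash_lookup(catalog, "198.51.100.7", "10.20.33.4"))  # 257 site-B
```

Both lookups return the same site for non-overlapping prefixes of /24 or larger; they can diverge for prefixes narrower than /24 (which the hash table widens) or for overlapping prefixes. The cost difference is the point of the comparison: the hash lookup does constant work per endpoint, while the sequential scan grows with the number of registered networks.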
We compare G-NetMon vs. H-CPU, and NH-S-NC-GPU vs. NH-CPU. The results are shown in Figure 6. H-CPU takes 4916.67 ms to handle 50,000,000 flow records; in contrast, G-NetMon requires approximately 900 ms. For the non-hash-table variants, NH-CPU and NH-S-NC-GPU take 36336.67 ms and 1098.23 ms, respectively. These comparisons clearly show that the GPU can significantly accelerate flow data processing, by a factor of 5.38 (G-NetMon vs. H-CPU) or by a factor of 33.08 (NH-S-NC-GPU vs. NH-CPU). We present the GPU vs. CPU comparison for the non-hash-table implementations because many network applications share the sequential search pattern of our non-hash-table implementations. For example, a network security application needs to examine each packet or flow against a set of security rules one by one. The experimental results show that a GPU can significantly accelerate such processing.

[Figure 6: G-NetMon vs. CPU]

A.3 Receiving Flow Records

G-NetMon receives NetFlow V5 packets from border routers via UDP. The received flow records are stored in Flow Data Store. A NetFlow V5 flow record is 48 bytes. A 1500-byte UDP packet, the largest allowed by standard Ethernet on the network, can carry at most 30 flow records. G-NetMon is designed to handle a maximum load of 50,000,000 flow records per hour. Therefore, the Flow Data Receiver thread needs to handle at least 463 packets per second, which amounts to an average traffic load of 5.56 Mbps. G-NetMon can easily handle such a traffic load. However, because the flow records are transmitted via UDP, if the CPU is busy with other tasks and the Flow Data Receiver thread is not scheduled to handle the NetFlow traffic in time, incoming packets can be dropped once the UDP receive buffer is full. We have run experiments to verify this scenario.
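The receive-load figures above follow from the NetFlow v5 wire format: a 24-byte header followed by up to 30 flow records of 48 bytes each. The Python sketch below reproduces the 30-record, 463 packets/s and 5.56 Mbps numbers and shows how a receiver might unpack a v5 datagram with `struct`. It assumes a 20-byte IPv4 header without options plus an 8-byte UDP header; the function names and the returned dictionary keys are illustrative assumptions, not G-NetMon's code.

```python
import math
import socket
import struct

# NetFlow v5 header: version, count, sysUptime, unix_secs, unix_nsecs,
# flow_sequence, engine_type, engine_id, sampling_interval (24 bytes).
V5_HEADER = struct.Struct("!HHIIIIBBH")
# One NetFlow v5 flow record (48 bytes).
V5_RECORD = struct.Struct("!4s4s4sHHIIIIHHBBBBHHBBH")
IP_UDP_OVERHEAD = 20 + 8  # assumed: IPv4 header without options + UDP header


def max_records_per_packet(mtu=1500):
    """Flow records that fit in one UDP datagram of the given IP MTU."""
    return (mtu - IP_UDP_OVERHEAD - V5_HEADER.size) // V5_RECORD.size


def receive_load(records_per_hour, mtu=1500):
    """Packets per second and average Mbps for an hourly record load (hypothetical helper)."""
    packets_per_s = math.ceil(records_per_hour / 3600 / max_records_per_packet(mtu))
    return packets_per_s, packets_per_s * mtu * 8 / 1e6


def parse_v5(packet):
    """Unpack a NetFlow v5 UDP payload into simple per-flow dicts (illustrative keys)."""
    version, count = V5_HEADER.unpack_from(packet, 0)[:2]
    if version != 5:
        raise ValueError("not a NetFlow v5 packet")
    flows = []
    for k in range(count):
        f = V5_RECORD.unpack_from(packet, V5_HEADER.size + k * V5_RECORD.size)
        flows.append({
            "src": socket.inet_ntoa(f[0]), "dst": socket.inet_ntoa(f[1]),
            "packets": f[5], "octets": f[6],
            "first_ms": f[7], "last_ms": f[8],  # router sysUptime, in ms
        })
    return flows


print(V5_HEADER.size, V5_RECORD.size, max_records_per_packet())  # 24 48 30
print(receive_load(50_000_000))
```

With these assumptions, 1500 − 28 − 24 = 1448 bytes remain for records, which holds 30 (not 31) records of 48 bytes. 50,000,000 records per hour is about 13,889 records per second, or 463 full packets per second.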
In the experiments, the FlowData Receiver thread was assigned to share a core with a CPU-intensive application, and the UDP receive buffer size was set to 4 MB. We then sent it UDP traffic at rates ranging from 100 Mbps to 1 Gbps, for 0.5 seconds. When the UDP traffic rate reached 500 Mbps or above, serious packet loss occurred. We repeated the experiments with the FlowData Receiver thread assigned a dedicated core, and no packet loss was detected. Therefore, to avoid NetFlow packets being dropped, G-NetMon assigns a dedicated core to the FlowData Receiver thread to handle NetFlow traffic.

B. Network Performance Monitoring

We have registered in G-NetMon 100 /24 scientific subnets that transfer data to and from Fermilab. G-NetMon monitors the status of bulk data movement between Fermilab and these subnets by calculating the corresponding data transfer statistics every hour. G-NetMon calculates the transfer rates (maximum, minimum, average, median) for each registered site and for each host in a registered site. Figure 7 gives the data transfer rates in an hour between Fermilab and a collaboration site. A sub-optimal bulk data movement is detected if the associated transfer rate falls below a predefined standard. Considering that TCP traffic is elastic and network conditions are volatile, we use the transfer rate median as our evaluation criterion. For a given site, a network performance warning is issued if the associated median is less than 1 Mbps for two consecutive hours. To evaluate the effectiveness of G-NetMon in detecting sub-optimal bulk data movements, we investigated the G-NetMon warnings over a period of two weeks. During this period, G-NetMon issued performance warnings for 7 sites in total (some sites received multiple warnings).
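The median-based warning rule above can be sketched as follows. This is a minimal Python illustration, assuming per-flow transfer rates (in Mbps) have already been computed and grouped by site for each hourly interval; how G-NetMon derives each rate from a flow record is not shown here. The `SiteMonitor` class, and the choice to leave a site's counter unchanged for an hour with no traffic, are hypothetical.

```python
import statistics
from typing import Dict, List

THRESHOLD_MBPS = 1.0   # warning threshold stated in the text
CONSECUTIVE_HOURS = 2  # hours the median must stay below the threshold

def hourly_stats(rates_mbps: List[float]) -> Dict[str, float]:
    """Maximum, minimum, average and median of one site's rates for one hour."""
    return {
        "max": max(rates_mbps),
        "min": min(rates_mbps),
        "avg": statistics.fmean(rates_mbps),
        "median": statistics.median(rates_mbps),
    }

class SiteMonitor:
    """Counts consecutive low-median hours for each registered site."""

    def __init__(self) -> None:
        self.low_hours: Dict[str, int] = {}

    def end_of_hour(self, site: str, rates_mbps: List[float]) -> bool:
        """Update the site's counter; return True if a warning should be issued."""
        if not rates_mbps:
            return False  # hypothetical policy: no traffic, no verdict, counter unchanged
        median = hourly_stats(rates_mbps)["median"]
        if median < THRESHOLD_MBPS:
            self.low_hours[site] = self.low_hours.get(site, 0) + 1
        else:
            self.low_hours[site] = 0  # a healthy hour resets the streak
        return self.low_hours[site] >= CONSECUTIVE_HOURS
```

For example, hourly medians of 0.8 Mbps and then 0.9 Mbps make the second `end_of_hour` call return `True`, so a persistently slow site is flagged after two hourly evaluations; a following hour with a median of 3 Mbps resets the counter. Because the warning keeps firing while the median stays low, the same site can receive repeated warnings.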
For the sites that G-NetMon issued warnings about, we contacted their network administrators to conduct end-to-end performance analysis. Five sites responded to our requests. The end-to-end performance analysis indicated poor network conditions between these sites and Fermilab. To our surprise, one site in Greece was connected to the outside world by only a 100 Mbps link. The investigation of these warnings demonstrated that G-NetMon can effectively detect sub-optimal bulk data movements in a timely manner: G-NetMon can detect a sub-optimal bulk data movement within two hours.

V. CONCLUSION & DISCUSSION

At Fermilab, we have prototyped a GPU-accelerated network performance monitoring system for large-scale scientific collaborations, called G-NetMon. Our system exploits the inherent data parallelism that exists within network flow data and can rapidly analyze bulk data movements between Fermilab and its collaboration sites. Experiments demonstrate that G-NetMon can detect sub-optimal bulk data movement in a timely manner. Considering that TCP traffic is elastic and network conditions are volatile, G-NetMon applies a very conservative approach to issuing performance warnings. We chose to have G-NetMon perform transfer rate analysis every hour. Running G-NetMon at shorter intervals could detect sub-optimal bulk data movement faster. However, it would also generate more ephemeral warnings and ultimately degrade our system's effectiveness.

The main purpose of this work is to explore new opportunities in network traffic monitoring and analysis with GPUs. The experiment results show that the GPU can significantly accelerate flow data processing, by a factor of 5.38 (G-NetMon vs. H-CPU) or by a factor of 33.08 (NH-S-NC-GPU vs. NH-CPU). At present, G-NetMon is designed to detect sub-optimal bulk data movements. In the future, we will enhance it with security features.
To implement security features, G-NetMon needs to examine flow records against security rules one by one in real time or near real time, which requires more computational capability. The computation pattern of examining flow records against security rules one by one is similar to that of the non-hash-table implementations discussed in this paper, for which the GPU can significantly accelerate flow data processing.

REFERENCES

[1] OWAMP website, http://www.internet2.edu/performance/owamp/

[2] K. Papagiannaki, R. Cruz, C. Diot, "Network performance monitoring at small time scales," Proceedings of the 3rd ACM SIGCOMM Conference on Internet Measurement, Miami Beach, FL, USA, 2003.

[3] T. Benson, A. Anand, A. Akella, M. Zhang, "Understanding Data Center Traffic Characteristics," In Proceedings of ACM WREN, 2009.

[4] V. Paxson, "Automated packet trace analysis of TCP implementations," In Proceedings of SIGCOMM'97, 1997.

[5] V. Paxson, "End-to-End Internet packet dynamics," In Proceedings of SIGCOMM'97, 1997.

[6] NetFlow website, http://www.cisco.com/

[7] S. Han, K. Jang, K. Park, S. Moon, "PacketShader, a GPU-Accelerated Software Router," In Proceedings of SIGCOMM'10, New Delhi, India.

[8] S. Mu, X. Zhang, N. Zhang, J. Lu, Y.S. Deng, and S. Zhang, "IP Routing Processing with Graphic Processors," In Design, Automation & Test in Europe Conference & Exhibition, 2010.

[9] R. Smith, N. Goyal, J. Ormont, C. Estan, and K. Sankaralingam, "Evaluating GPUs for Network Packet Signature Matching," In IEEE ISPASS, 2009.

[10] G. Vasiliadis, S. Antonatos, M. Polychronakis, E. P. Markatos, and S. Ioannidis, "Gnort: High performance network intrusion detection using graphics processors," In Proceedings of Recent Advances in Intrusion Detection (RAID), 2008.

[11] H. Shojania, B. Li, and X. Wang, "Nuclei: GPU-accelerated many-core network coding," In IEEE INFOCOM, 2009.

[12] O. Harrison and J. Waldron, "Practical Symmetric Key Cryptography on Modern Graphics Hardware," In USENIX Security, 2008.

[13] A. Lakhina, K. Papagiannaki, M. Crovella, C. Diot, E. D. Kolaczyk, and N. Taft, "Structural Analysis of Network Traffic Flows," In ACM SIGMETRICS'04, New York, June 2004.

[14] A. Kalafut, J. Merwe, M. Gupta, "Communities of interest for internet traffic prioritization," In Proceedings of 28th IEEE International Conference on Computer Communications Workshops, 2009.

[15] A. Lakhina, M. Crovella, and C. Diot, "Diagnosing Network-Wide Traffic Anomalies," In Proceedings of ACM SIGCOMM'04, 2004.

[16] A. Lakhina, M. Crovella, C. Diot, "Characterization of Network-Wide Anomalies in Traffic Flows," In Proceedings of IMC'04, 2004.

[17] J. Wallerich, H. Dreger, A. Feldmann, B. Krishnamurthy, W. Willinger, "A methodology for studying persistency aspects of internet flows," SIGCOMM Comput. Commun. Rev. 35, 2 (2005).

[18] Internet2 NetFlow, http://netflow.internet2.edu/weekly/

[19] R. Sommer and A. Feldmann, "NetFlow: Information loss or win?," In Proc. ACM Internet Measurement Workshop, 2002.

[20] C. Gates, M. Collins, M. Duggan, A. Kompanek, M. Thomas, "More netflow tools: for performance and security," In Proceedings of LISA'04, 2004.

[21] V. Krmicek, J. Vykopal, R. Krejci, "Netflow based system for NAT detection," In Proceedings of 5th International Student Workshop on Emerging Networking Experiments and Technologies, 2009.

Figure 7 Transfer rates between Fermilab and a Collaboration Site
