Use of Devolved Controllers in Data Center Networks

In data center networks, for example, it is common to use controllers to manage resources in a centralized manner. Centralized control, however, poses a scalability problem. In this paper, we investigate the use of multiple independent cont…

Authors: Adrian S.-W. Tam, Kang Xi, H. Jonathan Chao

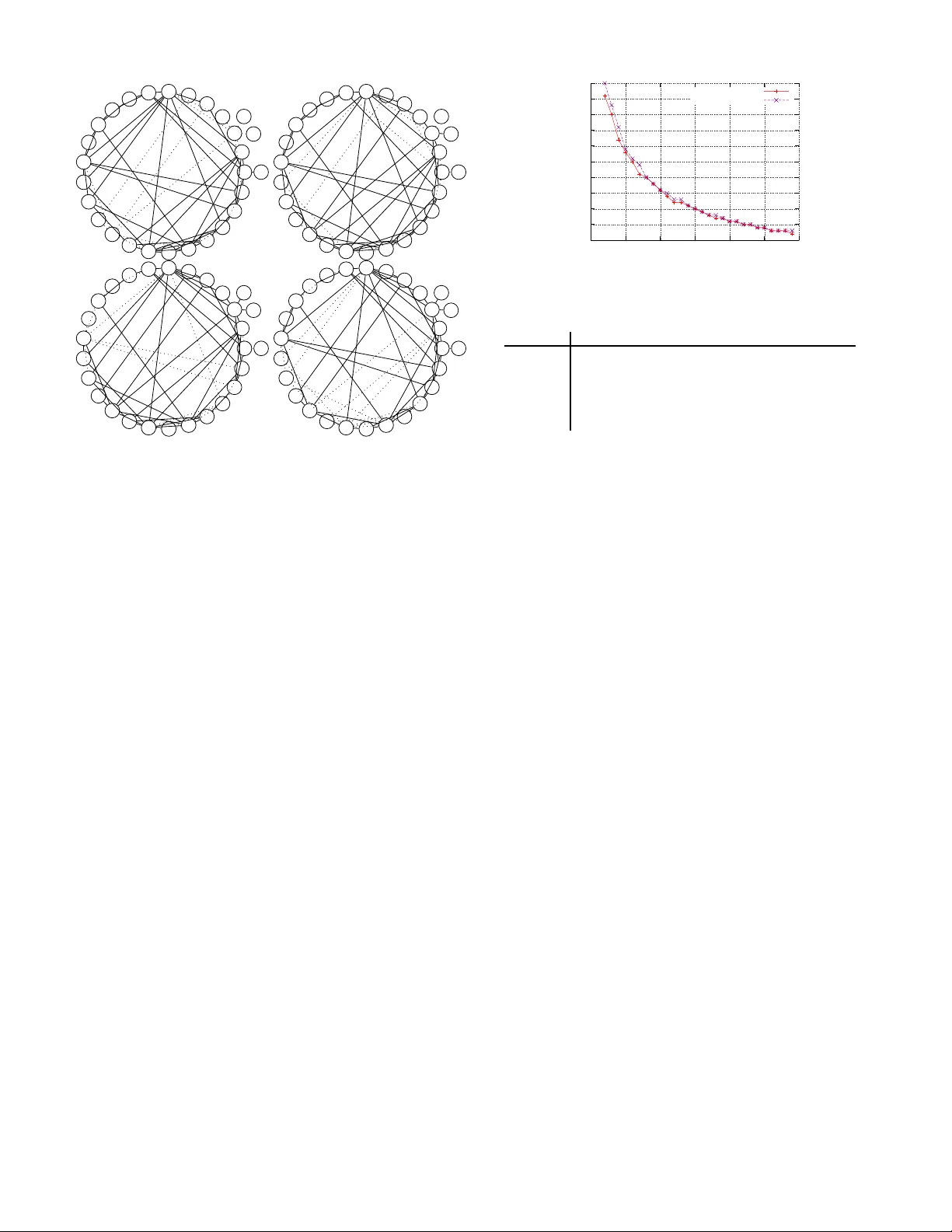

Department of Electrical and Computer Engineering, Polytechnic Institute of New York University
Email: adrian@antioch.poly.edu, kxi@poly.edu, chao@poly.edu

Abstract: In data center networks, for example, it is common to use controllers to manage resources in a centralized manner. Centralized control, however, poses a scalability problem. In this paper, we investigate the use of multiple independent controllers instead of a single omniscient controller to manage resources. Each controller looks after only a portion of the network, but together they cover the whole network. This solves the scalability problem. We use flow allocation as an example to show how this approach can manage bandwidth use in a distributed manner. The focus is on how to assign components of a network to the controllers so that (1) each controller only needs to look after a small part of the network, but (2) there is at least one controller that can answer any request. We outline a way to configure the controllers to fulfill these requirements as a proof that the use of devolved controllers is possible. We also discuss several issues related to such an implementation.

I. INTRODUCTION

In recent years' literature on data center networking, using a centralized controller for coordination or resource management is a common practice [1], [2], [3], [4], [5], [6], [7]. In [5], for example, a master server is used to hold the metadata for a distributed file system. In another example [1], a flow scheduling server is responsible for computing a new route for a rerouted flow in real time. In [3], a controller is also used to enforce a route for a packet so that its use of the network complies with the policies. Using a centralized controller not only makes the design simpler, but is also sufficient.
In [5], the authors claim that a single controller is enough to drive a fairly large network, and that the single-point-of-failure problem can be mitigated by replication. Nevertheless, a centralized controller is subject to scalability constraints. Scalability problems are usually solved by load balancing. For example, replicating the whole database to multiple servers is a common way to load-balance MySQL servers [8]. However, if the scalability problem is caused by so much data stored in a controller that its response time degrades, balancing the load across identical controllers cannot solve the problem. As data center networks grow ever larger, we can expect such problems in the near future. Therefore, it is worth studying an alternative to a single centralized controller.

We study the use of devolved controllers in this paper. Together they function as a single logically centralized controller, but none of them has complete information about the whole data center network. This is beneficial, for example, when the controllers must provide real-time computations and too much data would slow the computation down. We take the following flow route assignment as an example of how devolved controllers can be used. Whenever a flow, identified by a source and a destination node in the network, is to be established, the sending node queries the controllers for the route it should use to avoid congestion. The controllers are therefore responsible for monitoring the network to assist route selection. If the network topology were too large, the response time would be too long to be useful. Thus, instead of a single omniscient controller covering the whole network, we use multiple 'smaller' controllers, each of which covers only a partial topology.
When a controller is asked for a route, it responds using the topology data it has. Note that this paper is not about route optimality or routing protocols; rather, it aims to show that an omniscient controller is not the only solution. The novelty of this paper lies in the concept of devolved controllers, which eliminates the scalability problem of a traditional omniscient controller.

Our work concerns the control aspect of data center networks. In recent years, many works have focused on control plane design in networks; examples are OpenFlow [9], NOX [10] and Ethane [3]. To address the scalability issue of the controllers in these designs, their developers proposed [11] to partition the controllers horizontally (i.e., replication of controllers) and vertically (i.e., each controller serves a part of the network). While horizontal partitioning is trivial, this paper explores ways of vertical partitioning. In the rest of this paper, we describe an example of controller use in Section II and provide heuristic algorithms for configuring the controllers in Section III. Evaluation is provided in Section IV, and a discussion of the use of devolved controllers in Section V.

II. PROBLEM STATEMENT

On a network represented by a connected graph G = (V, E), a flow is identified by the ordered pair (s, t), where s, t ∈ V. On such a network, there are q controllers, each managing a portion of the network represented by a subgraph of G. We say that a controller managing G′ = (V′, E′) covers a node v ∈ V or a link e ∈ E if v ∈ V′ or e ∈ E′, respectively. When a flow is about to be established, the source node s queries the controllers for a route to the destination t. Among the q controllers, at least one responds with a path p that connects s to t, which becomes the route for this flow.

Fig. 1. A network managed by three controllers (regions A, B, C; controllers a, b, c).
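To make the coverage relation concrete, the following Python sketch models a controller as the subgraph G′ = (V′, E′) spanned by the routes installed in it, and checks whether every ordered pair (s, t) can be answered by some controller. The names Controller, install, answer and all_pairs_routable are hypothetical, and deriving G′ from the installed routes is an assumption of this sketch rather than part of the problem definition.

```python
from dataclasses import dataclass, field
from itertools import permutations


@dataclass
class Controller:
    """Hypothetical model of one devolved controller managing G' = (V', E')."""
    name: str
    nodes: set = field(default_factory=set)     # V'
    links: set = field(default_factory=set)     # E', undirected links as frozensets
    routes: dict = field(default_factory=dict)  # (s, t) -> path as a list of nodes

    def covers_node(self, v) -> bool:
        return v in self.nodes

    def covers_link(self, u, v) -> bool:
        return frozenset((u, v)) in self.links

    def install(self, s, t, path):
        """Install a route for (s, t); the controller now covers its nodes and links."""
        self.routes[(s, t)] = path
        self.nodes.update(path)
        self.links.update(frozenset(e) for e in zip(path, path[1:]))


def answer(controllers, s, t):
    """Return (controller name, path) from the first controller holding a route for (s, t)."""
    for c in controllers:
        if (s, t) in c.routes:
            return c.name, c.routes[(s, t)]
    return None  # no controller can answer this request


def all_pairs_routable(controllers, nodes):
    """Requirement of Section II: every ordered pair (s, t), s != t, has a responder."""
    return all(answer(controllers, s, t) is not None for s, t in permutations(nodes, 2))
```

A controller holding only the route [0, 1, 2] for (0, 2) covers link {1, 2} but not a direct link {0, 2}, and cannot answer (2, 0); a second controller is needed to make every pair routable.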
TABLE I summarizes the terms used in this paper. The controllers are supposed to respond to a flow route query within a very short time. Therefore, computationally intensive path-finding algorithms are not viable. Furthermore, we have to ensure that at least one of the q controllers can provide a route for any source-destination pair (s, t). One way is to pre-compute all routes. Assume that for any ordered pair (s, t), we compute k different paths p_1, ..., p_k that join s to t. We call the set M = {p_1, ..., p_k} a k-multipath. We then install the multipath into a controller. When a query is issued, the controller returns the least congested of the k paths.

Fig. 1 gives an example of a network with three controllers. The part of the network that a controller covers is illustrated by a dotted ellipse. Precisely, one controller covers all the routes between nodes in regions A and B as well as the routes within those regions; another controller covers those of regions A and C, and yet another covers regions B and C. In this way, none of the controllers monitors every spot in the network, but together they can respond to any request from any node. For instance, the route illustrated in Fig. 1 lies entirely within the jurisdiction of controller a, so a is the one that provides this route upon request. When the network grows, we can install more controllers so that no controller needs to manage too large a region.

Assuming all the paths are pre-computed, the immediate questions are:

1) How should the multipaths be optimally allocated to the q controllers? We define optimality as the smallest number of unique links to be monitored by a controller. In other words, we prefer each controller to cover as small a portion of the network as possible.
2) If no controller can monitor more than N links on the network, how many controllers are needed?

TABLE I. Definition of terms used in this paper

| Term | Definition |
|---|---|
| (s, t) | Source-destination pair |
| flow | A data stream from a source node to a destination node |
| path/route | A path that connects two nodes |
| multipath | A set of paths, each of which connects the same pair of nodes |

III. APPROXIMATE SOLUTION

We first consider the problem of optimally allocating the multipaths to q controllers. For n = |V| nodes on the network, there are n(n − 1) different source-destination pairs. This is also the number of multipaths to be pre-computed, as mentioned in Section II. Finding the optimal allocation to q controllers is an NP-hard problem.¹ The size of the solution space for allocating n(n − 1) multipaths to q controllers is given by the Stirling number of the second kind [13]:

$$
S\bigl(n(n-1), q\bigr) = \frac{1}{q!} \sum_{j=0}^{q} (-1)^{j} \binom{q}{j} (q-j)^{n(n-1)}
$$

This number becomes intractable quickly for a moderately large network.

A. Path-partition approach

Instead of looking for a globally optimal solution, we developed a heuristic algorithm to obtain an approximate solution. The algorithm has two parts. First, it enumerates the k-multipaths for all source-destination pairs (s, t). Then, it allocates each multipath to one of the controllers according to a cost function. The multipaths are pre-computed with no knowledge of which controllers they will be allocated to.
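To see how large the exhaustive search avoided here would be, the Stirling number given at the start of this section can be evaluated exactly with integer arithmetic. This is a minimal Python sketch; the function names stirling2 and solution_space are illustrative, and n = 28, q = 4 are simply the values used for the topology of Fig. 2 in Section IV.

```python
from math import comb, factorial


def stirling2(m: int, q: int) -> int:
    """Stirling number of the second kind S(m, q) via the explicit
    inclusion-exclusion formula: the number of ways to split m labelled
    items into q non-empty, unlabelled groups."""
    total = sum((-1) ** j * comb(q, j) * (q - j) ** m for j in range(q + 1))
    return total // factorial(q)  # the sum is always an exact multiple of q!


def solution_space(n: int, q: int) -> int:
    """Number of ways to allocate the n(n - 1) multipaths of an n-node
    network to q controllers, as counted in Section III."""
    return stirling2(n * (n - 1), q)


if __name__ == "__main__":
    size = solution_space(28, 4)  # 28-node topology of Fig. 2 with q = 4
    print(f"{len(str(size))} decimal digits")
```

Small cases such as S(4, 2) = 7 can be checked by hand, while the 28-node network with q = 4 already yields a count with more than 450 decimal digits.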
We call this two-part procedure the path-partition approach:

Algorithm 1: Path-partition heuristic algorithm
Data: Network G = (V, E), q = number of controllers
1  foreach s, t ∈ V in random order do
       /* Construct a k-multipath from s to t */
2      M := k paths joining s to t
       /* Allocate into a controller */
3      for i := 1 to q do
4          c_i := cost of adding multipath M to controller i
5      end
6      Allocate multipath M to the controller j = arg min_j c_j
7  end

We implemented the multipath enumeration in Algorithm 1 (line 2) following [14]. We find a path from s to t using Dijkstra's algorithm with unit link weight for each link in E. Then, the links used in this path have their link weights increased by an amount ω. Dijkstra's algorithm and the link weight modification are repeated until all k paths are found. This is a straightforward way to find k distinct paths from s to t by running Dijkstra's algorithm iteratively with modified link weights. The link weight increase suggested in [14] is ω = |E|, which favors as much link-disjointness between paths as possible. When short routes are preferred, however, ω should be set to a small value. We use the latter approach. Other methods to compute the multipaths in Algorithm 1 are available, such as [15] or [16]. The way the multipaths are found does not affect the discussion hereinafter.

¹ The problem in question is an extension of graph partitioning. It is well known in algorithmic graph theory that graph partitioning is NP-hard. Using heuristic algorithms such as Kernighan-Lin [12] is the standard way to solve graph partitioning problems.

The essential part of the heuristic algorithm is lines 3–6. It allocates the multipaths to controllers one by one according to a cost function. The goal of the cost function is to allocate the multipaths to controllers such that the maximum number of links to be monitored by any controller is minimized.
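The multipath enumeration in line 2 of Algorithm 1 (Dijkstra's algorithm with unit link weights, after which every link on the path found has its weight increased by ω) can be sketched in Python as below. The graph is assumed to be an adjacency dict of an undirected graph with comparable node labels. The names dijkstra, k_multipath and max_rounds are hypothetical; the round limit is an added safeguard for graphs with fewer than k distinct s-t paths, not part of the described procedure.

```python
import heapq


def dijkstra(adj, weight, s, t):
    """Shortest s-t path (list of nodes) under the given link weights, or None."""
    dist, prev, done = {s: 0}, {}, set()
    heap = [(0, s)]
    while heap:
        d, u = heapq.heappop(heap)
        if u in done:
            continue  # stale heap entry
        done.add(u)
        if u == t:
            break
        for v in adj[u]:
            nd = d + weight[frozenset((u, v))]
            if v not in dist or nd < dist[v]:
                dist[v], prev[v] = nd, u
                heapq.heappush(heap, (nd, v))
    if t not in done:
        return None
    path = [t]
    while path[-1] != s:
        path.append(prev[path[-1]])
    return path[::-1]


def k_multipath(adj, s, t, k, omega, max_rounds=None):
    """Up to k distinct s-t paths: start from unit link weights and, after each
    Dijkstra run, add omega to the weight of every link on the path found."""
    weight = {frozenset((u, v)): 1 for u in adj for v in adj[u]}
    max_rounds = max_rounds or 10 * k  # hypothetical safeguard against looping forever
    paths = []
    for _ in range(max_rounds):
        if len(paths) == k:
            break
        p = dijkstra(adj, weight, s, t)
        if p is None:
            break  # t is unreachable from s
        if p not in paths:
            paths.append(p)
        for e in zip(p, p[1:]):
            weight[frozenset(e)] += omega
    return paths


if __name__ == "__main__":
    # A 4-node ring 0-1-2-3-0: exactly two simple paths join 0 and 2.
    ring = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
    print(k_multipath(ring, 0, 2, k=2, omega=1))
```

Passing omega=len(E) mimics the link-disjointness preference suggested in [14]; a small omega keeps routes short, which is the choice described above. The exact small value is not stated, so omega=1 here is only an example.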
To minimize the maximum number of links monitored by any controller, we established the following heuristics:

1) A multipath should be allocated to a controller if that controller already monitors most of the links used by the multipath; and
2) In an optimal allocation, the total number of links monitored by each controller should be roughly the same.

Therefore, we define the cost function in line 4 as

$$
c_i = \alpha\, \nu_i(M) + \mu_i
$$

where μ_i is the number of links already monitored by controller i at the moment and ν_i(M) is the number of links in the multipath M that are not yet monitored by that controller; i.e., if M is allocated to controller i, the total number of links monitored by controller i becomes μ_i + ν_i(M). The parameter α adjusts their relative weight in the cost function. When α ≈ 0, we ignore the benefit of reusing links already in a controller. When α is very large, however, we do not require the controllers to be balanced. This usually yields a result in which almost all multipaths are allocated to the same controller, which can be explained by the Matthew effect in the allocation process of lines 3–6. We empirically found that α between 4 and 8 gives good results. We set α = 4 in our experiments, but the wide range of appropriate values suggests that the result is not very sensitive to α.

B. Partition-path approach

Another way to allocate multipaths to controllers is the partition-path approach. Its idea is that, if a controller is already monitoring certain links, we can find a k-multipath between the source-destination pair (s, t) that uses those links as much as possible. Therefore, in this approach, we first partition the links among the q controllers as their preferred links. Then the multipath connecting s to t is computed individually in each controller, with preference given to its preferred links. Algorithm 2 illustrates the idea.
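The partition-path idea, together with the cost function c_i = α ν_i(M) + μ_i, can be sketched in Python as follows, tracking the steps of Algorithm 2 below (random preliminary partition, weight 1 for preferred links and ψ for all others, arg-min allocation, growth of the winner's preferred set). For brevity this sketch assumes k = 1 (a single path per controller instead of a k-multipath) and a connected graph. All function names, and the values psi=3 and seed=0, are hypothetical, since no value of ψ is given; alpha=4 follows the text.

```python
import heapq
import random


def shortest_path(adj, w, s, t):
    """Dijkstra's algorithm; w(u, v) returns the weight of link {u, v}."""
    dist, prev, done = {s: 0}, {}, set()
    heap = [(0, s)]
    while heap:
        d, u = heapq.heappop(heap)
        if u in done:
            continue
        done.add(u)
        if u == t:
            break
        for v in adj[u]:
            nd = d + w(u, v)
            if v not in dist or nd < dist[v]:
                dist[v], prev[v] = nd, u
                heapq.heappush(heap, (nd, v))
    path = [t]  # assumes G is connected, so t was reached
    while path[-1] != s:
        path.append(prev[path[-1]])
    return path[::-1]


def links_of(path):
    return {frozenset(e) for e in zip(path, path[1:])}


def cost(new_links, monitored, alpha):
    """c_i = alpha * nu_i(M) + mu_i."""
    nu = len(new_links - monitored)  # links of M not yet monitored by controller i
    mu = len(monitored)              # links controller i already monitors
    return alpha * nu + mu


def partition_path(adj, q, alpha=4, psi=3, seed=0):
    rng = random.Random(seed)
    links = sorted({frozenset((u, v)) for u in adj for v in adj[u]}, key=sorted)
    preferred = [set() for _ in range(q)]  # E_i
    for e in links:                        # lines 1-5: random preliminary partition
        preferred[rng.randrange(q)].add(e)
    monitored = [set() for _ in range(q)]  # links actually covered by controller i
    routes = {}
    pairs = [(s, t) for s in adj for t in adj if s != t]
    rng.shuffle(pairs)                     # line 6: random order
    for s, t in pairs:
        candidates = []
        for i in range(q):                 # lines 7-11
            def w(u, v, pref=preferred[i]):
                return 1 if frozenset((u, v)) in pref else psi
            path = shortest_path(adj, w, s, t)
            candidates.append((cost(links_of(path), monitored[i], alpha), i, path))
        _, j, path = min(candidates)       # line 12: arg min over c_j
        routes[(s, t)] = (j, path)
        monitored[j] |= links_of(path)
        preferred[j] |= links_of(path)     # line 13
    return routes, monitored


if __name__ == "__main__":
    # Hypothetical example: a 6-node ring with one chord (0-3).
    adj = {0: [1, 5, 3], 1: [0, 2], 2: [1, 3], 3: [2, 4, 0], 4: [3, 5], 5: [4, 0]}
    routes, monitored = partition_path(adj, q=2)
    print([len(m) for m in monitored])
```

As stated for Algorithm 2, the preferred sets E_i only change the link weights; the cost is computed from the separately tracked monitored links.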
Algorithm 2: Partition-path heuristic algorithm
Data: Network G = (V, E), q = number of controllers
   /* Partition links to controllers preliminarily */
1  foreach i := 1 to q do E_i := ∅
2  foreach e ∈ E do
3      i := random integer in {1, ..., q}
4      E_i := E_i ∪ {e}
5  end
   /* Enumerate multipaths and allocate into controllers */
6  foreach s, t ∈ V in random order do
7      foreach i := 1 to q do
8          Set link weight w(e) := 1 for all e ∈ E_i, and w(e) := ψ for all other e ∈ E
9          M_i := k paths joining s to t
10         c_i := cost of adding multipath M_i to controller i
11     end
12     Allocate multipath M_j to controller j = arg min_j c_j
13     E_j := E_j ∪ {e : e is a link in M_j}
14 end

Algorithm 2 begins with a procedure that randomly partitions the set of links E among the q controllers, so that each controller i preliminarily covers a subset E_i ⊂ E (lines 1–5). Then, for each source-destination pair (s, t), it finds a k-multipath in each controller i with a preference for links in E_i. The same path-finding algorithm is used in Algorithm 2 as in Algorithm 1. Note that the links in E_i affect the path-finding algorithm only by changing the initial link weights; they are not involved in other parts of the algorithm, such as the cost function in line 10. The same cost function as in Section III-A is used here.

IV. PERFORMANCE

We applied the heuristic algorithms to different topologies from the Rocketfuel project [17] to evaluate their performance. We use Rocketfuel topologies because no detailed data center topologies are publicly available. We also evaluate the algorithms with a fat tree topology, which is likely to be used in data center networks, in Section IV-D.

Fig. 2. Topology of an irregular network with 28 nodes and 66 links.

A. Size of controllers

We use q = 4 controllers on a network of 28 nodes and 66 links.
The topology is illustrated in Fig. 2. One configuration of the four controllers computed by Algorithm 1 is depicted in Fig. 3, with each controller monitoring 45–47 links. From the figure, we found quite significant overlap in the nodes and links monitored by the controllers. Some links appear in all controllers, as they are critical for the connectivity of the network. Other links are less important and appear in only one controller. A large amount of overlap is unavoidable when devolved controllers are used. In fact:

Theorem 1: When devolved controllers are used, either there is a controller that covers all nodes, or every node is covered by more than one controller.

Proof: For any node v, if v is covered by only one controller, then for every flow (v, u) to be routable, node u must also be covered by that controller. Therefore that controller must cover all nodes in the network.

Fig. 3. Links monitored by each of the four controllers as suggested by Algorithm 1. Critical links are more likely to be included in multiple controllers, whereas less important links appear in only one controller.

In other words, there must be significant overlap if we want to reduce the scope of the network that each controller monitors. We can further reduce the number of links monitored by each controller by using Algorithm 2. Applied to the same topology in Fig. 2, each controller monitors only 29–31 links, which is significantly fewer. This better result, however, comes at the price of longer routes from Algorithm 2. The average hop count of a path (the mean number of hops over all k·n(n − 1) paths computed) is 3.5 with Algorithm 2, whereas that with Algorithm 1 is 2.6. The lengthened routes may not be favorable in data center networks, however.
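The quantities reported in this subsection (links monitored per controller and the average hop count over all computed paths), together with the condition of Theorem 1, can be computed from a given allocation as in the following Python sketch. The input format, a dict mapping each ordered pair (s, t) to a controller id and its list of paths, and the function names are hypothetical; a controller is assumed to cover exactly the nodes and links on the paths allocated to it.

```python
from collections import defaultdict


def controller_metrics(routes):
    """routes: (s, t) -> (controller id, list of paths, each a list of nodes).
    Returns ({controller: number of links monitored}, mean hop count over all paths)."""
    links = defaultdict(set)
    hops = []
    for controller, paths in routes.values():
        for p in paths:
            links[controller].update(frozenset(e) for e in zip(p, p[1:]))
            hops.append(len(p) - 1)
    return {c: len(ls) for c, ls in links.items()}, sum(hops) / len(hops)


def satisfies_theorem1(routes, nodes):
    """Theorem 1: some controller covers every node, or every node is
    covered by more than one controller."""
    covering = defaultdict(set)  # node -> controllers that cover it
    for controller, paths in routes.values():
        for p in paths:
            for v in p:
                covering[v].add(controller)
    controllers = set().union(*covering.values())
    every_node_shared = all(len(covering[v]) > 1 for v in nodes)
    one_covers_all = any(all(c in covering[v] for v in nodes) for c in controllers)
    return every_node_shared or one_covers_all
```

By the proof above, the check can only fail for an allocation that leaves some pair (s, t) without a route, such as two controllers that each route a single pair of a three-node line.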
To benchmark the heuristic algorithms, we computed a configuration with the same set of multipaths using a time-consuming simulated annealing process.² The result, presented in Fig. 4, turns out to be no better than that obtained by the heuristic algorithms, despite the longer time it took. Indeed, the heuristic algorithms often give a slightly better solution than simulated annealing. We also applied the algorithm to several other Rocketfuel topologies with different numbers of nodes and links. Due to space limitations, we do not show their topologies here, but TABLE II shows the maximum number of links covered by a controller resulting from the heuristic algorithm compared to that from simulated annealing. It confirms that the controllers cover around 60–80% of the links in the network when q = 4, and that the result provided by Algorithm 1 is at least as good as that obtained by simulated annealing.

² The simulated annealing process replaces only the loop in lines 3–6 of Algorithm 1. The multipaths M in the comparison are exactly the same, for a fair contrast. It may seem counter-intuitive that the well-established simulated annealing technique does not produce a better solution. Partly this can be attributed to the choice of parameters, such as the cooling function used. More importantly, however, it is because our algorithm works 'smarter' than simulated annealing: our cost function guides the search toward optimality, whereas the latter is simply a brute-force search.

Fig. 4. Number of links covered per controller vs. number of controllers in the topology of Fig. 2, comparing Algorithm 1 and simulated annealing.

TABLE II. Comparing the size of controllers in different topologies, with the time taken by each solver shown in brackets.

| Topology | # nodes | # links | Algo. 1 | Sim. Annealing |
|---|---|---|---|---|
| 1 (Fig. 2) | 28 | 66 | 47 (0.1s) | 51 (69.9s) |
| 2 | 108 | 141 | 114 (24.4s) | 140 (1387.4s) |
| 3 | 53 | 456 | 204 (1.1s) | 226 (301.6s) |
| 4 | 44 | 106 | 77 (0.5s) | 92 (186.6s) |
| 5 | 51 | 129 | 97 (0.9s) | 112 (239.9s) |
| 6 (Fig. 7) | 45 | 108 | 83 (0.03s) | 83 (46.3s) |

B. Number of controllers and the effect on the size of coverage

An intuitive way to reduce the number of links covered by any controller is to use more controllers. We applied Algorithm 1 with various q to three different irregular topologies. Fig. 5 plots the result. With q = 1, the single controller naturally covers all links in the network. In Fig. 5, the curves show a general decreasing trend. In fact, the curves decrease geometrically. This suggests that although we can reduce the size of a controller, there is an overhead: as q increases, the average number of controllers monitoring a link also increases. This means the monitoring traffic, although small, also increases with q. This is the trade-off that we have to consider when devolved controllers are used in place of a single centralized controller.

C. The effect on the number of paths

While the parameter q affects the number of links covered by each controller, the parameter k, i.e., the number of paths to find between a source-destination pair, does not have a significant effect on it. This is shown in Fig. 6, which plots the number of links covered by a controller against the number of controllers for the topology of Fig. 2, with the parameter k varied. We examined k in the range 1 to 10 and also some larger values. The different values of k do not produce significantly different results.
This is explained by the fact that each link in the network is reused a fairly large number of times by different flows (s, t). When we pick a multipath from a controller, it is likely that every link on this multipath is also used by another multipath in the same controller. In this sense, if we increase the multiplicity k, the additional paths are also likely to use links already covered by the controller.

Fig. 5. Number of links covered per controller vs. number of controllers in three different topologies from Rocketfuel (Topologies 1, 2 and 3).

Fig. 6. Number of links covered per controller vs. number of controllers in the topology of Fig. 2 with different values of k (k = 1, 3, 5).

D. Using the partition-path approach on regular networks

As mentioned in Section IV-A, Algorithm 2 produces a better result because its path-finding algorithm fits paths into the controller, at the expense of longer routes. This weakness of the partition-path algorithm can be removed on regular topologies such as the fat tree, which, according to [18], has been suggested for use in data center networks. This is because in a regular topology we know a priori the length of an optimal route, and the topology also provides enough distinct paths of that length between a source-destination pair. To illustrate the idea, Fig. 7 shows a 3-layer fat tree network built with 6-port switches. It is easy to see that, given a pair of hosts in different subtrees, there are (6/2)² = 9 distinct paths between them (each passing through a distinct core switch) that pass through 5 switches. In such a network, no path that traverses more than 5 switches is optimal.
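The path count can be checked mechanically. The Python sketch below builds the switch-level graph of a 3-layer fat tree from p-port switches, assuming the standard pod layout of [18] (p pods, each with p/2 edge and p/2 aggregation switches, plus (p/2)² core switches, hosts omitted), and counts shortest paths between two edge switches by breadth-first search. The layout details and function names are assumptions of this sketch. For p = 6 the graph has 45 switches and 108 links, which appears to match the Fig. 7 row of TABLE II.

```python
from collections import defaultdict, deque


def fat_tree_switches(p):
    """Adjacency dict of a 3-layer fat tree built from p-port switches (p even).
    Switches are tuples: ("edge", pod, i), ("agg", pod, i), ("core", i, j)."""
    h = p // 2
    adj = defaultdict(set)
    for pod in range(p):
        for a in range(h):
            agg = ("agg", pod, a)
            for e in range(h):  # every edge switch links to every agg switch in its pod
                edge = ("edge", pod, e)
                adj[agg].add(edge)
                adj[edge].add(agg)
            for j in range(h):  # agg switch a links to core switches ("core", a, *)
                core = ("core", a, j)
                adj[agg].add(core)
                adj[core].add(agg)
    return adj


def count_shortest_paths(adj, s, t):
    """BFS from s; returns (hop distance to t, number of distinct shortest s-t paths)."""
    dist, count = {s: 0}, {s: 1}
    queue = deque([s])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v], count[v] = dist[u] + 1, count[u]
                queue.append(v)
            elif dist[v] == dist[u] + 1:
                count[v] += count[u]
    return dist[t], count[t]


if __name__ == "__main__":
    adj = fat_tree_switches(6)
    n_links = sum(len(nbrs) for nbrs in adj.values()) // 2
    print(len(adj), "switches,", n_links, "links")
    # Edge switches in different pods (subtrees): 4 hops, i.e. 5 switches per path.
    print(count_shortest_paths(adj, ("edge", 0, 0), ("edge", 1, 0)))
```

Counting host-to-host paths instead only adds one host link at each end and leaves the count of 9 unchanged, since each host attaches to a single edge switch.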
Knowing the optimal route length in advance, we can modify the path-finding algorithm used in Algorithm 2 (line 9) to enforce a fixed-length path. Note that such a modification only works on regular topologies like fat tree or Clos networks. In fact, the modified path-finding algorithm can be used in place of that in line 2 of Algorithm 1 as well. We also modified line 2 of Algorithm 2 slightly so that only the links connecting core and aggregation switches are partitioned into the E_i. This is a reasonable modification considering that in a fat tree network (see Fig. 7), the whole path between two nodes is fixed once we fix the links it uses to connect the core and aggregation switches. Applying the path-partition and partition-path algorithms to the network in Fig. 7 with q = 4, we find the coverage per controller to be 83 and 56 links, respectively, out of a total of 108 links. Both Algorithm 1 and Algorithm 2 yield an average hop count of 3.8, due to the modified path-finding algorithm.

V. DISCUSSIONS

A. The communication between a server and controllers

According to the algorithms above, the multipath for each (s, t) is installed in only one controller. Therefore, only one among the q controllers can reply to a route request for (s, t). When node s initiates a flow to t, it has to determine which controller can answer its route request. There are two ways to solve this problem. First, node s can send its request to all q controllers and let the one that owns the data reply. This solution obviously incurs additional network traffic. The second solution is to keep a mapping at node s: for each destination t, a table in s tells which controller contains the route for (s, t). This is a viable solution because the total number of nodes |V| (and hence the number of destinations t for any node s) is usually limited.
Moreover, when we configure the controllers, storing the mapping information for {(s, t) : t ∈ V} at s takes just one more step using the existing information.

B. Path-partition vs. partition-path

Section IV-A mentions that the path-partition algorithm is inferior to the partition-path algorithm in terms of the size of the controllers produced. However, only the path-partition algorithm can guarantee shortest-path routes, because its path-finding algorithm is not affected by the configuration of the controllers. When regular topologies such as fat tree networks are used, we can have a modified path-finding algorithm that ensures shortest-path routes are found. This makes the partition-path algorithm favorable. It is therefore interesting that the path-partition algorithm is suitable for irregular networks, while the partition-path algorithm is good for regular networks.

C. Precomputed routes

Using pre-computed routes in this paper is intentional. Suppose each controller covers only part of the network and a flow's route is computed dynamically when the request arrives. It is then hard to guarantee that, among the q controllers, there is always one that can fulfill any route request. The pre-computed multipaths therefore act as a verifier, guaranteeing that some controller is responsible for every possible flow.

D. Link failures

While we do not address the actual operation of devolved controllers in a network, it is expected that whenever there is a link failure, i.e., a topology change, something has to be done in the controllers to reflect this change. This could be disabling certain paths (among the k paths of the same source-destination pair) or reconfiguring the network. This overhead could be large and intensive. To provide a prompt reaction, therefore, it is essential to keep the number of links managed by a controller small. This justifies our optimization objective.

Fig. 7. A fat tree topology built with 6-port switches (labels: Host, Edge, Aggr., Core, Subtree).

Fig. 8. Number of links covered per controller vs. number of controllers in the topology of Fig. 2 with different values of r (r = 1, 2, 3, 4).

E. Redundancy

Although more than one controller may be able to respond to a route request, the algorithms in Section III do not guarantee this. One way to ensure redundancy is to modify line 6 of Algorithm 1 or line 12 of Algorithm 2 so that a path is added to r > 1 controllers. Usually r = 2 is sufficient for resilience. In Fig. 8, we plot the number of links covered by each controller for different values of r in the topology of Fig. 2, using the modified path-partition algorithm. As expected, as the degree of redundancy r increases, the number of links covered by a controller increases. The increment, however, is moderate due to the overlap of link coverage between controllers. In other words, we can have redundancy at only a small price. More details on the redundancy design, its overhead, and an example showing its mechanism will be given in a future paper.

VI. CONCLUSION

The focus of this paper is to examine the possibility of using multiple small independent controllers instead of a single centralized omniscient controller to manage resources. We use flow routing as an example to show how multiple controllers can assign routes to flows based on dynamic network status. The main reason to avoid a single controller is scalability. Therefore, we do not allow our controllers to hold complete network topology information at run time, and we introduced the concept of devolved controllers.
Furthermore, we proposed algorithms that aim to limit the network topology information stored in the controllers. Our results show that devolved controllers are possible. The two heuristic algorithms we proposed limit the size of each controller. Although they do not seek a globally optimal solution, their results are as good as those of simulated annealing solvers, and they are much faster. The heuristic algorithms, path-partition and partition-path, are found to be suitable for irregular and regular networks, respectively. This difference is due to the fact that, in regular networks, we can easily estimate the length of a route a priori.

In computer networks such as data centers or compute clouds, controllers are often used, for example, for security policy control, resource allocation, billing, and so on. This paper is a precursor to a new design direction in the use of controllers, such that they can scale out.

REFERENCES

[1] M. Al-Fares, S. Radhakrishnan, B. Raghavan, N. Huang, and A. Vahdat, "Hedera: Dynamic flow scheduling for data center networks," in Proc. NSDI, 2010.
[2] F. Chang et al., "Bigtable: A distributed storage system for structured data," in Proc. OSDI, 2006.
[3] M. Casado, M. Freedman, J. Pettit, N. McKeown, and S. Shenker, "Ethane: Taking control of the enterprise," in Proc. SIGCOMM, 2007.
[4] J. Dean and S. Ghemawat, "MapReduce: Simplified data processing on large clusters," in Proc. 6th OSDI, San Francisco, CA, Dec. 2004.
[5] S. Ghemawat, H. Gobioff, and S.-T. Leung, "The Google file system," in Proc. ACM SOSP, Bolton Landing, New York, USA, Oct. 2003.
[6] A. Greenberg, N. Jain, S. Kandula et al., "VL2: A scalable and flexible data center network," in Proc. SIGCOMM, 2009.
[7] R. N. Mysore et al., "PortLand: A scalable fault-tolerant layer 2 data center network fabric," in Proc. SIGCOMM, 2009.
[8] B. Schwartz et al., High Performance MySQL, 2nd ed. Sebastopol, CA: O'Reilly Media, 2008.
[9] N. McKeown, T. Anderson, H. Balakrishnan, G. Parulkar, L. Peterson, J. Rexford, S. Shenker, and J. Turner, "OpenFlow: Enabling innovation in campus networks," ACM SIGCOMM CCR, vol. 38, no. 2, pp. 69–74, Apr. 2008.
[10] N. Gude, T. Koponen, J. Pettit, B. Pfaff, M. Casado, N. McKeown, and S. Shenker, "NOX: Towards an operating system for networks," ACM SIGCOMM CCR, vol. 38, no. 3, pp. 105–110, Jul. 2008.
[11] N. McKeown, Personal communication, 2010.
[12] B. W. Kernighan and S. Lin, "An efficient heuristic procedure for partitioning graphs," Bell Sys. Tech. Journal, vol. 49, pp. 291–307, 1970.
[13] N. L. Johnson and S. Kotz, Urn Models and Their Application. New York: John Wiley & Sons, 1977.
[14] J. Mudigonda et al., "SPAIN: Design and algorithms for constructing large data-center ethernets from commodity switches," HP, Tech. Rep. 2009-241, 2009.
[15] E. Minieka, Optimization Algorithms for Networks and Graphs. New York: Marcel Dekker, 1978.
[16] R. Guérin and A. Orda, "Computing shortest paths for any number of hops," Trans. on Networking, vol. 10, no. 5, pp. 613–620, Oct. 2002.
[17] N. Spring, R. Mahajan, and D. Wetherall, "Measuring ISP topologies with Rocketfuel," in Proc. SIGCOMM, 2002.
[18] M. Al-Fares, A. Loukissas, and A. Vahdat, "A scalable, commodity data center network architecture," in Proc. SIGCOMM, 2008.
