Average-Consensus Algorithms in a Deterministic Framework

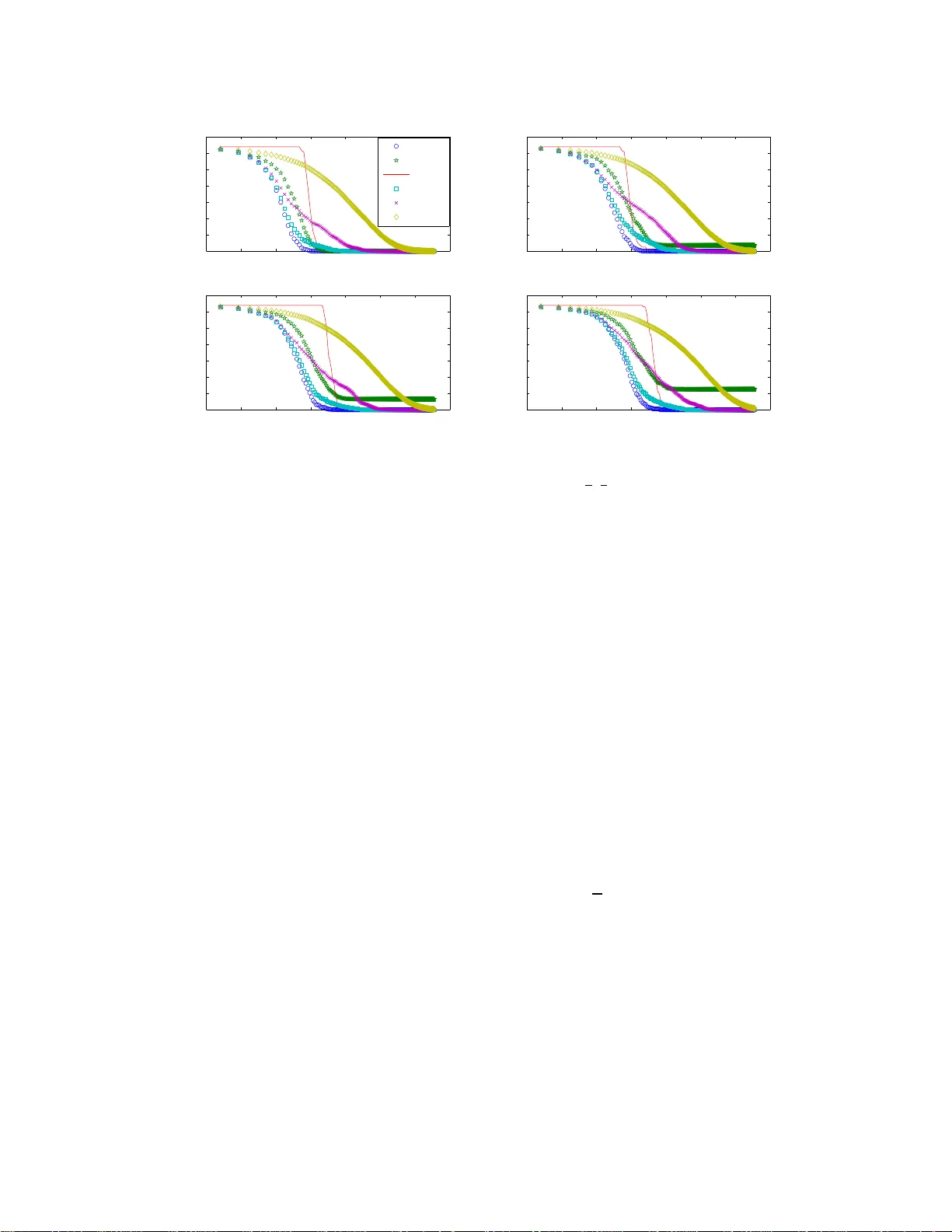

We consider the average-consensus problem in a multi-node network of finite size. Communication between nodes is modeled by a sequence of directed signals with arbitrary communication delays. Four distributed algorithms that achieve average-consensus…

Authors: Kevin Topley, Vikram Krishnamurthy