Hashing Algorithms for Large-Scale Learning

In this paper, we first demonstrate that b-bit minwise hashing, whose estimators are positive definite kernels, can be naturally integrated with learning algorithms such as SVM and logistic regression. We adopt a simple scheme to transform the nonlin…

Authors: Ping Li, Anshumali Shrivastava, Joshua Moore, Arnd Christian König

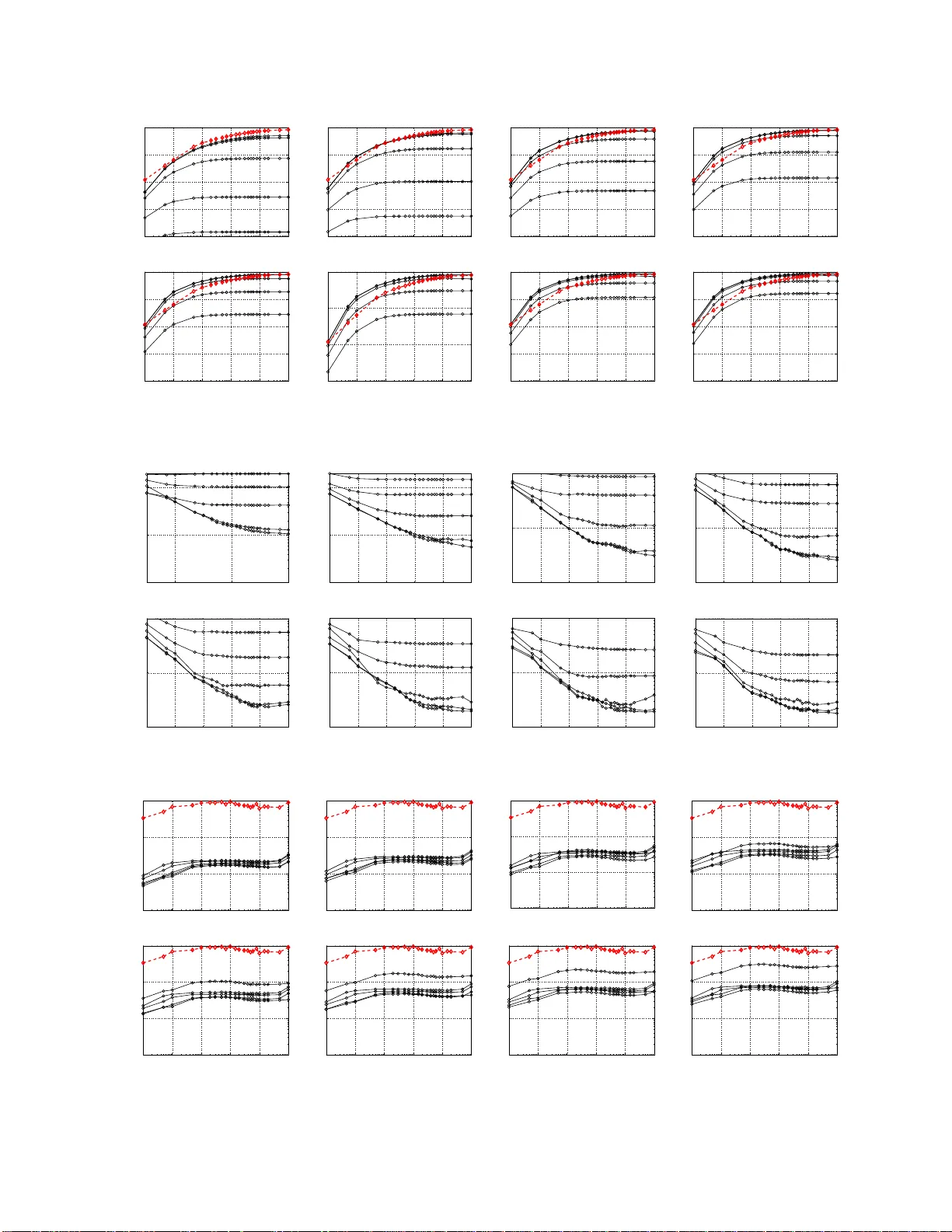

Ping Li, Department of Statistical Science, Cornell University, Ithaca, NY 14853, pingli@cornell.edu
Anshumali Shrivastava, Department of Computer Science, Cornell University, Ithaca, NY 14853, anshu@cs.cornell.edu
Joshua Moore, Department of Computer Science, Cornell University, Ithaca, NY 14853, jlmo@cs.cornell.edu
Arnd Christian König, Microsoft Research, Microsoft Corporation, Redmond, WA 98052, chrisko@microsoft.com

Abstract

In this paper, we first demonstrate that $b$-bit minwise hashing, whose estimators are positive definite kernels, can be naturally integrated with learning algorithms such as SVM and logistic regression. We adopt a simple scheme to transform the nonlinear (resemblance) kernel into a linear (inner product) kernel; hence large-scale problems can be solved extremely efficiently. Our method provides a simple, effective solution to large-scale learning in massive and extremely high-dimensional datasets, especially when data do not fit in memory. We then compare $b$-bit minwise hashing with the Vowpal Wabbit (VW) algorithm (which is related to the Count-Min (CM) sketch). Interestingly, VW has the same variances as random projections. Our theoretical and empirical comparisons illustrate that usually $b$-bit minwise hashing is significantly more accurate (at the same storage) than VW (and random projections) in binary data. Furthermore, $b$-bit minwise hashing can be combined with VW to achieve further improvements in terms of training speed, especially when $b$ is large.

1 Introduction

With the advent of the Internet, many machine learning applications are faced with very large and inherently high-dimensional datasets, resulting in challenges in scaling up training algorithms and storing the data. Especially in the context of search and machine translation, corpus sizes used in industrial practice have long exceeded the main memory capacity of a single machine.
For example, [34] experimented with a dataset with potentially 16 trillion ($1.6 \times 10^{13}$) unique features. [32] discusses training sets with (on average) $10^{11}$ items and $10^{9}$ distinct features, requiring novel algorithmic approaches and architectures. As a consequence, there has been a renewed emphasis on scaling up machine learning techniques by using massively parallel architectures; however, methods relying solely on parallelism can be expensive (both with regard to hardware requirements and energy costs) and often induce significant additional communication and data distribution overhead.

This work approaches the challenges posed by large datasets by leveraging techniques from the area of similarity search [2], where a similar increase in dataset sizes has made the storage and computational requirements for computing exact distances prohibitive, thus making data representations that allow compact storage and efficient approximate distance computation necessary.

The method of $b$-bit minwise hashing [26, 27, 25] is a recent advance for efficiently (in both time and space) computing resemblances among extremely high-dimensional (e.g., $2^{64}$) binary vectors. In this paper, we show that $b$-bit minwise hashing can be seamlessly integrated with linear Support Vector Machine (SVM) [22, 30, 14, 19, 35] and logistic regression solvers. In [35], the authors addressed the critically important problem of training linear SVM when the data cannot fit in memory. Our work addresses the same problem using a very different approach.

1.1 Ultra High-Dimensional Large Datasets and Memory Bottleneck

In the context of search, a standard procedure to represent documents (e.g., Web pages) is to use $w$-shingles (i.e., $w$ contiguous words), where $w$ can be as large as 5 (or 7) in several studies [6, 7, 15]. This procedure can generate datasets of extremely high dimensions.
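As a rough illustration of the $w$-shingle representation, the following sketch turns a document into a set of shingles and computes how many bits would be needed to index every possible shingle over a given vocabulary. The helper names `shingles` and `dictionary_bits`, the whitespace tokenizer, and the sample sentence are assumptions made for this example; they do not come from the paper.

```python
import math

def shingles(text, w):
    """Return the set of w-shingles (tuples of w contiguous words) in text.

    Whitespace tokenization is an assumption of this sketch. Only presence
    is kept (a set), which mirrors the 0/1 representation used for shingles.
    """
    words = text.split()
    return {tuple(words[i:i + w]) for i in range(len(words) - w + 1)}

def dictionary_bits(vocab_size, w):
    """log2 of the number of possible w-shingles over a vocabulary."""
    return w * math.log2(vocab_size)

doc = "the quick brown fox jumps over the lazy dog"
print(len(shingles(doc, 3)))             # distinct 3-shingles in this sentence
print(round(dictionary_bits(10**5, 5)))  # bits to index all 5-shingles of 10^5 words
```

With a vocabulary of $10^5$ words and $w = 5$, the second call reports roughly 83 bits, which is why the dictionary size grows out of reach so quickly.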
For example, suppose we only consider $10^5$ common English words. Using $w = 5$ may require the size of dictionary $\Omega$ to be $D = |\Omega| = 10^{25} \approx 2^{83}$. In practice, $D = 2^{64}$ often suffices, as the number of available documents may not be large enough to exhaust the dictionary. For $w$-shingle data, normally only absence/presence (0/1) information is used, as it is known that word frequency distributions within documents approximately follow a power law [3], meaning that most single terms occur rarely, thereby making a $w$-shingle unlikely to occur more than once in a document. Interestingly, even when the data are not too high-dimensional, empirical studies [9, 18, 20] achieved good performance with binary-quantized data.

When the data can fit in memory, linear SVM is often extremely efficient after the data are loaded into memory. It is however often the case that, for very large datasets, the data loading time dominates the computing time for training the SVM [35]. A much more severe problem arises when the data cannot fit in memory. This situation can be very common in practice. The publicly available webspam dataset needs about 24GB of disk space (in LIBSVM input data format), which exceeds the memory capacity of many desktop PCs. Note that webspam, which contains only 350,000 documents represented by 3-shingles, is still a small dataset compared to industry applications [32].

1.2 A Brief Introduction of Our Proposal

We propose a solution which leverages $b$-bit minwise hashing. Our approach assumes the data vectors are binary, very high-dimensional, and relatively sparse, which is generally true of text documents represented via shingles. We apply $b$-bit minwise hashing to obtain a compact representation of the original data.
In order to use the technique for efficient learning, we have to address several issues:

• We need to prove that the matrices generated by $b$-bit minwise hashing are indeed positive definite, which will provide the solid foundation for our proposed solution.
• If we use $b$-bit minwise hashing to estimate the resemblance, which is nonlinear, how can we effectively convert this nonlinear problem into a linear problem?
• Compared to other hashing techniques such as random projections, Count-Min (CM) sketch [12], or Vowpal Wabbit (VW) [34], does our approach exhibit advantages?

It turns out that our proof in the next section that $b$-bit hashing matrices are positive definite naturally provides the construction for converting the otherwise nonlinear SVM problem into linear SVM.

[35] proposed solving the memory bottleneck by partitioning the data into blocks, which are repeatedly loaded into memory as their approach updates the model coefficients. However, the computational bottleneck is still at the memory because loading the data blocks for many iterations consumes a large number of disk I/Os. Our method is not really a competitor of the approach in [35]. In fact, both approaches may work together to solve extremely large problems.

2 Review Minwise Hashing and b-Bit Minwise Hashing

Minwise hashing [6, 7] has been successfully applied to a very wide range of real-world problems, especially in the context of search [6, 7, 4, 16, 10, 8, 33, 21, 13, 11, 17, 23, 29], for efficiently computing set similarities. Minwise hashing mainly works well with binary data, which can be viewed either as 0/1 vectors or as sets. Given two sets, $S_1, S_2 \subseteq \Omega = \{0, 1, 2, ..., D-1\}$, a widely used (normalized) measure of similarity is the resemblance $R$:

$$R = \frac{|S_1 \cap S_2|}{|S_1 \cup S_2|} = \frac{a}{f_1 + f_2 - a}, \quad \text{where } f_1 = |S_1|,\ f_2 = |S_2|,\ a = |S_1 \cap S_2|.$$
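The resemblance formula can be checked on a toy pair of sets. This is a minimal sketch; the function name `resemblance` and the two example sets are assumptions chosen for illustration.

```python
def resemblance(s1, s2):
    """R = a / (f1 + f2 - a), with a = |S1 ∩ S2|, f1 = |S1|, f2 = |S2|.

    Assumes at least one of the two sets is non-empty.
    """
    a = len(s1 & s2)
    f1, f2 = len(s1), len(s2)
    return a / (f1 + f2 - a)

S1 = {0, 2, 3, 7}
S2 = {2, 3, 5, 7, 9}
print(resemblance(S1, S2))  # 3 shared elements, 6 in the union -> 0.5
```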
In minwise hashing, one applies a random permutation $\pi: \Omega \to \Omega$ on $S_1$ and $S_2$. The collision probability is simply

$$\Pr\left(\min(\pi(S_1)) = \min(\pi(S_2))\right) = \frac{|S_1 \cap S_2|}{|S_1 \cup S_2|} = R. \tag{1}$$

One can repeat the permutation $k$ times, $\pi_1, \pi_2, ..., \pi_k$, to estimate $R$ without bias, as

$$\hat{R}_M = \frac{1}{k} \sum_{j=1}^{k} 1\{\min(\pi_j(S_1)) = \min(\pi_j(S_2))\}, \tag{2}$$

$$\mathrm{Var}\left(\hat{R}_M\right) = \frac{1}{k} R (1 - R). \tag{3}$$

The common practice of minwise hashing is to store each hashed value, e.g., $\min(\pi(S_1))$ and $\min(\pi(S_2))$, using 64 bits [15]. The storage (and computational) cost will be prohibitive in truly large-scale (industry) applications [28].

$b$-bit minwise hashing [26, 27, 25] provides a strikingly simple solution to this (storage and computational) problem by storing only the lowest $b$ bits (instead of 64 bits) of each hashed value. For convenience, we define the minimum values under $\pi$: $z_1 = \min(\pi(S_1))$ and $z_2 = \min(\pi(S_2))$, and define $e_{1,i}$ = $i$-th lowest bit of $z_1$, and $e_{2,i}$ = $i$-th lowest bit of $z_2$.

Theorem 1 [26] Assume $D$ is large. Then

$$P_b = \Pr\left(\prod_{i=1}^{b} 1\{e_{1,i} = e_{2,i}\} = 1\right) = C_{1,b} + (1 - C_{2,b}) R, \tag{4}$$

where

$$r_1 = \frac{f_1}{D}, \quad r_2 = \frac{f_2}{D}, \quad f_1 = |S_1|, \quad f_2 = |S_2|,$$

$$C_{1,b} = A_{1,b} \frac{r_2}{r_1 + r_2} + A_{2,b} \frac{r_1}{r_1 + r_2}, \quad C_{2,b} = A_{1,b} \frac{r_1}{r_1 + r_2} + A_{2,b} \frac{r_2}{r_1 + r_2},$$

$$A_{1,b} = \frac{r_1 [1 - r_1]^{2^b - 1}}{1 - [1 - r_1]^{2^b}}, \quad A_{2,b} = \frac{r_2 [1 - r_2]^{2^b - 1}}{1 - [1 - r_2]^{2^b}}.$$

This (approximate) formula (4) is remarkably accurate, even for very small $D$. Some numerical comparisons with the exact probabilities are provided in Appendix A.
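The estimator (2) and the constants of Theorem 1 can be exercised on a small synthetic example. The sketch below is only illustrative: it uses explicit random permutations of a small universe ($D = 1000$), which is feasible only for toy sizes, and the function names, the sets, $k$, and the seed are all assumptions. The $b$-bit estimate is obtained by inverting (4), i.e. $(\hat{P}_b - C_{1,b}) / (1 - C_{2,b})$.

```python
import numpy as np

def minhash(S, perms):
    """z_j = min(pi_j(S)) for each permutation pi_j (one per row of perms)."""
    idx = np.fromiter(S, dtype=np.int64)
    return perms[:, idx].min(axis=1)

def estimate_R(z1, z2):
    """Eq. (2): fraction of permutations whose minima collide."""
    return float(np.mean(z1 == z2))

def theorem1_constants(r1, r2, b):
    """C_{1,b} and C_{2,b} as defined in Theorem 1."""
    A1 = r1 * (1 - r1) ** (2**b - 1) / (1 - (1 - r1) ** (2**b))
    A2 = r2 * (1 - r2) ** (2**b - 1) / (1 - (1 - r2) ** (2**b))
    C1 = A1 * r2 / (r1 + r2) + A2 * r1 / (r1 + r2)
    C2 = A1 * r1 / (r1 + r2) + A2 * r2 / (r1 + r2)
    return C1, C2

def estimate_R_b(z1, z2, b, f1, f2, D):
    """Estimate R from only the lowest b bits of each minimum, via (4)."""
    mask = (1 << b) - 1
    P_hat = float(np.mean((z1 & mask) == (z2 & mask)))
    C1, C2 = theorem1_constants(f1 / D, f2 / D, b)
    return (P_hat - C1) / (1 - C2)

rng = np.random.default_rng(0)  # seed chosen arbitrarily
D, k = 1000, 2000
perms = np.stack([rng.permutation(D) for _ in range(k)])
S1, S2 = set(range(0, 60)), set(range(30, 90))  # true R = 30 / 90
z1, z2 = minhash(S1, perms), minhash(S2, perms)
print(estimate_R(z1, z2))
print(estimate_R_b(z1, z2, 2, len(S1), len(S2), D))
```

Both printed values should land near $1/3$; the 2-bit estimate is noisier because each stored value carries less information.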
We can then estimate $P_b$ (and $R$) from $k$ independent permutations $\pi_1, \pi_2, ..., \pi_k$:

$$\hat{R}_b = \frac{\hat{P}_b - C_{1,b}}{1 - C_{2,b}}, \quad \hat{P}_b = \frac{1}{k} \sum_{j=1}^{k} \left\{ \prod_{i=1}^{b} 1\{e_{1,i,\pi_j} = e_{2,i,\pi_j}\} \right\}, \tag{5}$$

$$\mathrm{Var}\left(\hat{R}_b\right) = \frac{\mathrm{Var}\left(\hat{P}_b\right)}{[1 - C_{2,b}]^2} = \frac{1}{k} \frac{[C_{1,b} + (1 - C_{2,b}) R]\,[1 - C_{1,b} - (1 - C_{2,b}) R]}{[1 - C_{2,b}]^2}. \tag{6}$$

We will show that we can apply $b$-bit hashing for learning without explicitly estimating $R$ from (4).

3 Kernels from Minwise Hashing and b-Bit Minwise Hashing

This section proves some theoretical properties of matrices generated by resemblance, minwise hashing, or $b$-bit minwise hashing, which are all positive definite matrices. Our proof not only provides a solid theoretical foundation for using $b$-bit hashing in learning, but also illustrates our idea behind the construction for integrating $b$-bit hashing with linear learning algorithms.

Definition: A symmetric $n \times n$ matrix $K$ satisfying $\sum_{ij} c_i c_j K_{ij} \geq 0$ for all real vectors $c$ is called positive definite (PD). Note that here we do not differentiate PD from nonnegative definite.

Theorem 2 Consider $n$ sets $S_1, S_2, ..., S_n \subseteq \Omega = \{0, 1, ..., D-1\}$. Apply one permutation $\pi$ to each set and define $z_i = \min\{\pi(S_i)\}$. The following three matrices are all PD.

1. The resemblance matrix $R \in \mathbb{R}^{n \times n}$, whose $(i, j)$-th entry is the resemblance between set $S_i$ and set $S_j$: $R_{ij} = \frac{|S_i \cap S_j|}{|S_i \cup S_j|} = \frac{|S_i \cap S_j|}{|S_i| + |S_j| - |S_i \cap S_j|}$.
2. The minwise hashing matrix $M \in \mathbb{R}^{n \times n}$: $M_{ij} = 1\{z_i = z_j\}$.
3. The $b$-bit minwise hashing matrix $M^{(b)} \in \mathbb{R}^{n \times n}$: $M^{(b)}_{ij} = \prod_{t=1}^{b} 1\{e_{i,t} = e_{j,t}\}$, where $e_{i,t}$ is the $t$-th lowest bit of $z_i$.

Consequently, consider $k$ independent permutations and denote by $M^{(b)}_{(s)}$ the $b$-bit minwise hashing matrix generated by the $s$-th permutation. Then the summation $\sum_{s=1}^{k} M^{(b)}_{(s)}$ is also PD.

Proof: A matrix $A$ is PD if it can be written as an inner product $B^T B$.
Because

$$M_{ij} = 1\{z_i = z_j\} = \sum_{t=0}^{D-1} 1\{z_i = t\} \times 1\{z_j = t\}, \tag{7}$$

$M_{ij}$ is the inner product of two $D$-dimensional vectors. Thus, $M$ is PD. Similarly, the $b$-bit minwise hashing matrix $M^{(b)}$ is PD because

$$M^{(b)}_{ij} = \sum_{t=0}^{2^b - 1} 1\{z_i = t\} \times 1\{z_j = t\}, \tag{8}$$

where, with a slight abuse of notation, $z_i$ in (8) denotes the value of its lowest $b$ bits. The resemblance matrix $R$ is PD because $R_{ij} = \Pr\{M_{ij} = 1\} = E(M_{ij})$ and $M_{ij}$ is the $(i, j)$-th element of the PD matrix $M$. Note that the expectation is a linear operation.

Our proof that the $b$-bit minwise hashing matrix $M^{(b)}$ is PD provides us with a simple strategy to expand a nonlinear (resemblance) kernel into a linear (inner product) kernel. After concatenating the $k$ vectors resulting from (8), the new (binary) data vector after the expansion will be of dimension $2^b \times k$ with exactly $k$ ones.

4 Integrating b-Bit Minwise Hashing with (Linear) Learning Algorithms

Linear algorithms such as linear SVM and logistic regression have become very powerful and extremely popular. Representative software packages include SVM$^{\text{perf}}$ [22], Pegasos [30], Bottou's SGD SVM [5], and LIBLINEAR [14].

Given a dataset $\{(x_i, y_i)\}_{i=1}^{n}$, $x_i \in \mathbb{R}^D$, $y_i \in \{-1, 1\}$, the $L_2$-regularized linear SVM solves the following optimization problem:

$$\min_{w} \ \frac{1}{2} w^T w + C \sum_{i=1}^{n} \max\left\{1 - y_i w^T x_i,\ 0\right\}, \tag{9}$$

and the $L_2$-regularized logistic regression solves a similar problem:

$$\min_{w} \ \frac{1}{2} w^T w + C \sum_{i=1}^{n} \log\left(1 + e^{-y_i w^T x_i}\right). \tag{10}$$

Here $C > 0$ is an important penalty parameter. Since our purpose is to demonstrate the effectiveness of our proposed scheme using $b$-bit hashing, we simply provide results for a wide range of $C$ values and assume that the best performance is achievable if we conduct cross-validations.

In our approach, we apply $k$ independent random permutations on each feature vector $x_i$ and store the lowest $b$ bits of each hashed value. This way, we obtain a new dataset which can be stored using merely $nbk$ bits.
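The expansion suggested by (8) can be sketched in a few lines: each stored $b$-bit value becomes a one-hot block of length $2^b$, and the $k$ blocks are concatenated. The function names, the sample hashed values, and the within-block position (value $v$ sets entry $2^b - 1 - v$) are assumptions of this sketch; any fixed one-to-one assignment of values to positions gives the same inner products. The storage figure uses $n = 350{,}000$, $k = 200$, $b = 8$, the setting reported for webspam in Section 5.

```python
import numpy as np

def expand(hashed, b):
    """Map k hashed values to a 0/1 vector of length 2^b * k with exactly k ones."""
    width = 1 << b
    x = np.zeros(width * len(hashed), dtype=np.int32)
    for j, z in enumerate(hashed):
        low = z & (width - 1)                 # lowest b bits of the j-th hashed value
        x[j * width + (width - 1 - low)] = 1  # one-hot position inside block j
    return x

def storage_bytes(n, b, k):
    """Size of the stored b-bit values: n * b * k bits, reported in bytes."""
    return n * b * k / 8

x = expand([12013, 25964, 20191], b=2)
y = expand([12013, 25965, 20191], b=2)  # second hashed value differs in its low bits
print(x.tolist())
print(int(x @ y))                        # number of blocks whose b-bit values match
print(storage_bytes(350_000, 8, 200) / 1e6, "MB")
```

The inner product `x @ y` counts the permutations on which the two $b$-bit values agree, which is $k \hat{P}_b$; this is why a linear solver on the expanded vectors works with the $b$-bit kernel directly.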
At run-time, we expand each new data point into a vector of length $2^b \times k$.

For example, suppose $k = 3$ and the hashed values are originally $\{12013, 25964, 20191\}$, whose binary digits are $\{010111011101101, 110010101101100, 100111011011111\}$. Consider $b = 2$. Then the binary digits are stored as $\{01, 00, 11\}$ (which corresponds to $\{1, 0, 3\}$ in decimals). At run-time, we need to expand them into a vector of length $2^b k = 12$, to be $\{0, 0, 1, 0, 0, 0, 0, 1, 1, 0, 0, 0\}$, which will be the new feature vector fed to a solver:

Original hashed values ($k = 3$): 12013, 25964, 20191
Original binary representations: 010111011101101, 110010101101100, 100111011011111
Lowest $b = 2$ binary digits: 01, 00, 11
Expanded $2^b = 4$ binary digits: 0010, 0001, 1000
New feature vector fed to a solver: {0, 0, 1, 0, 0, 0, 0, 1, 1, 0, 0, 0}

Clearly, this expansion is directly inspired by the proof that the $b$-bit minwise hashing matrix is PD in Theorem 2. Note that the total storage cost is still just $nbk$ bits and each new data vector (of length $2^b \times k$) has exactly $k$ 1's. Also, note that in this procedure we actually do not explicitly estimate the resemblance $R$ using (4).

5 Experimental Results on Webspam Dataset

Our experimental settings follow the work in [35] very closely. The authors of [35] conducted experiments on three datasets, of which the webspam dataset is public and reasonably high-dimensional ($n = 350000$, $D = 16609143$). Therefore, our experiments focus on webspam. Following [35], we randomly selected 20% of samples for testing and used the remaining 80% of samples for training.

We chose LIBLINEAR as the tool to demonstrate the effectiveness of our algorithm. All experiments were conducted on workstations with Xeon(R) CPU (W5590@3.33GHz) and 48GB RAM, under Windows 7. Thus, in our case, the original data (about 24GB in LIBSVM format) fit in memory.
In applications for which the data do not fit in memory, we expect that $b$-bit hashing will be even more substantially advantageous, because the hashed data are relatively very small. In fact, our experimental results will show that for this dataset, using $k = 200$ and $b = 8$ can achieve the same testing accuracy as using the original data. The effective storage for the reduced dataset (with 350K examples, using $k = 200$ and $b = 8$) would be merely about 70MB.

5.1 Experimental Results on Nonlinear (Kernel) SVM

We implemented a new resemblance kernel function and tried to use LIBSVM to train the webspam dataset. We waited for over one week (see footnote 1) but LIBSVM still had not output any results. Fortunately, using $b$-bit minwise hashing to estimate the resemblance kernels, we were able to obtain some results. For example, with $C = 1$ and $b = 8$, the training time of LIBSVM ranged from 1938 seconds ($k = 30$) to 13253 seconds ($k = 500$). In particular, when $k \geq 200$, the test accuracies essentially matched the best test results given by LIBLINEAR on the original webspam data.

Therefore, there is a significant benefit of data reduction provided by $b$-bit minwise hashing for training nonlinear SVM. This experiment also demonstrates that it is very important (and fortunate) that we are able to transform this nonlinear problem into a linear problem.

5.2 Experimental Results on Linear SVM

Since there is an important tuning parameter $C$ in linear SVM and logistic regression, we conducted our extensive experiments for a wide range of $C$ values (from $10^{-3}$ to $10^{2}$) with fine spacings in $[0.1, 10]$.

We mainly experimented with $k = 30$ to $k = 500$, and $b = 1$, 2, 4, 8, and 16. Figures 1 (average) and 2 (std, standard deviation) provide the test accuracies. Figure 1 demonstrates that using $b \geq 8$ and $k \geq 150$ achieves about the same test accuracies as using the original data.
Since our method is randomized, we repeated every experiment 50 times. We report both the mean and std values. Figure 2 illustrates that the stds are very small, especially with $b \geq 4$. In other words, our algorithm produces stable predictions. For this dataset, the best performances were usually achieved when $C \geq 1$.

Footnote 1: We will let the program run unless it is accidentally terminated (e.g., due to power outage).

[Figure 1 plots: eight panels for linear SVM on webspam ($k$ = 30, 50, 100, 150, 200, 300, 400, 500), each showing test accuracy (%) against $C$ from $10^{-3}$ to $10^{2}$, with curves for $b$ = 1, 2, 4, 8, 16.]

Figure 1: Linear SVM test accuracy (averaged over 50 repetitions). With $k \geq 100$ and $b \geq 8$, $b$-bit hashing (solid) achieves very similar accuracies as using the original data (dashed, red if color is available). Note that after $k \geq 150$, the curves for $b = 16$ overlap the curves for $b = 8$.
[Figure 2 plots: eight panels for linear SVM on webspam ($k$ = 30, 50, 100, 150, 200, 300, 400, 500), each showing the standard deviation of test accuracy (%) against $C$, with curves for $b$ = 1, 2, 4, 8, 16.]

Figure 2: Linear SVM test accuracy (std). The standard deviations are computed from 50 repetitions. When $b \geq 8$, the standard deviations become extremely small (e.g., 0.02%).

Compared with the original training time (about 100 seconds), we can see from Figure 3 that our method only needs about 3 to 7 seconds near $C = 1$ (about 3 seconds for $b = 8$). Note that here the training time did not include the data loading time. Loading the original data took about 12 minutes while loading the hashed data took only about 10 seconds. Of course, there is a cost for processing (hashing) the data, which we find is efficient, confirming prior studies [6]. In fact, data processing can be conducted during data collection, as is the standard practice in search.
In other words, prior to conducting the learning procedure, the data may be already processed and stored by ($b$-bit) minwise hashing, which can be used for multiple tasks including learning, clustering, duplicate detection, near-neighbor search, etc.

Compared with the original testing time (about 100 to 200 seconds), we can see from Figure 4 that the testing time of our method is merely about 1 or 2 seconds. Note that the testing time includes both the data loading time and computing time, as designed by LIBLINEAR. The efficiency of testing may be very important in practice, for example, when the classifier is deployed in a user-facing application (such as search), while the cost of training or pre-processing (such as hashing) may be less critical and can often be conducted off-line.

[Figure 3 plots: eight panels for linear SVM on webspam ($k$ = 30, 50, 100, 150, 200, 300, 400, 500), each showing training time (sec) against $C$, with curves for $b$ = 1, 2, 4, 8, 16.]

Figure 3: Linear SVM training time. Compared with the training time of using the original data (dashed, red if color is available), we can see that our method with $b$-bit hashing only needs a very small fraction of the original cost.

[Figure 4 plots: eight panels for linear SVM on webspam ($k$ = 30, 50, 100, 150, 200, 300, 400, 500), each showing testing time (sec) against $C$.]

Figure 4: Linear SVM testing time. The original costs are plotted using dashed (red, if color is available) curves.

5.3 Experimental Results on Logistic Regression

Figure 5 presents the test accuracies and Figure 7 presents the training time using logistic regression. Again, with $k \geq 150$ (or even $k \geq 100$) and $b \geq 8$, $b$-bit minwise hashing can achieve the same test accuracies as using the original data. Figure 6 presents the standard deviations, which again verify that our algorithm produces stable predictions for logistic regression.

From Figure 7, we can see that the training time is substantially reduced, from about 1000 seconds to about 30 to 50 seconds only (unless $b = 16$ and $k$ is large).

In summary, it appears $b$-bit hashing is highly effective in reducing the data size and speeding up the training (and testing), for both (nonlinear and linear) SVM and logistic regression.
We notice that when using $b = 16$, the training time can be much larger than using $b \leq 8$. Interestingly, we find that $b$-bit hashing can be combined with Vowpal Wabbit (VW) [34] to further reduce the training time, especially when $b$ is large.

[Figure 5 plots: eight panels for logistic regression on webspam ($k$ = 30, 50, 100, 150, 200, 300, 400, 500), each showing test accuracy (%) against $C$, with curves for $b$ = 1, 2, 4, 8, 16.]

Figure 5: Logistic regression test accuracy. The dashed (red if color is available) curves represent the results using the original data.
[Figure 6 plots: eight panels for logistic regression on webspam ($k$ = 30, 50, 100, 150, 200, 300, 400, 500), each showing the standard deviation of test accuracy (%) against $C$, with curves for $b$ = 1, 2, 4, 8, 16.]

Figure 6: Logistic regression test accuracy (std). The standard deviations are computed from 50 repetitions.
[Figure 7 plots: eight panels for logistic regression on webspam ($k$ = 30, 50, 100, 150, 200, 300, 400, 500), each showing training time (sec) against $C$, with curves for $b$ = 1, 2, 4, 8, 16.]

Figure 7: Logistic regression training time. The dashed (red if color is available) curves represent the results using the original data.

6 Random Projections and Vowpal Wabbit (VW)

The two methods, random projections [1, 24] and Vowpal Wabbit (VW) [34, 31], are not limited to binary data (although for the ultra high-dimensional data used in the context of search, the data are often binary). The VW algorithm is also related to the Count-Min sketch [12]. In this paper, we use "VW" particularly for the algorithm in [34].

For convenience, we denote two $D$-dimensional data vectors by $u_1, u_2 \in \mathbb{R}^D$. Again, the task is to estimate the inner product $a = \sum_{i=1}^{D} u_{1,i} u_{2,i}$.

6.1 Random Projections

The general idea is to multiply the data vectors, e.g., $u_1$ and $u_2$, by a random matrix $\{r_{ij}\} \in \mathbb{R}^{D \times k}$, where $r_{ij}$ is sampled i.i.d.
from the following generic distribution [24]:

$$E(r_{ij}) = 0, \quad \mathrm{Var}(r_{ij}) = 1, \quad E(r_{ij}^3) = 0, \quad E(r_{ij}^4) = s, \quad s \geq 1. \tag{11}$$

We must have $s \geq 1$ because $\mathrm{Var}(r_{ij}^2) = E(r_{ij}^4) - E^2(r_{ij}^2) = s - 1 \geq 0$.

This generates two $k$-dimensional vectors, $v_1$ and $v_2$:

$$v_{1,j} = \sum_{i=1}^{D} u_{1,i} r_{ij}, \quad v_{2,j} = \sum_{i=1}^{D} u_{2,i} r_{ij}, \quad j = 1, 2, ..., k.$$

The general distributions which satisfy (11) include the standard normal distribution (in this case, $s = 3$) and the "sparse projection" distribution specified as

$$r_{ij} = \sqrt{s} \times \begin{cases} 1 & \text{with prob. } \frac{1}{2s} \\ 0 & \text{with prob. } 1 - \frac{1}{s} \\ -1 & \text{with prob. } \frac{1}{2s} \end{cases} \tag{12}$$

[24] provided the following unbiased estimator $\hat{a}_{rp,s}$ of $a$ and the general variance formula:

$$\hat{a}_{rp,s} = \frac{1}{k} \sum_{j=1}^{k} v_{1,j} v_{2,j}, \quad E(\hat{a}_{rp,s}) = a = \sum_{i=1}^{D} u_{1,i} u_{2,i}, \tag{13}$$

$$\mathrm{Var}(\hat{a}_{rp,s}) = \frac{1}{k} \left[ \sum_{i=1}^{D} u_{1,i}^2 \sum_{i=1}^{D} u_{2,i}^2 + \left( \sum_{i=1}^{D} u_{1,i} u_{2,i} \right)^2 + (s - 3) \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 \right], \tag{14}$$

which means $s = 1$ achieves the smallest variance. The only elementary distribution we know that satisfies (11) with $s = 1$ is the two-point distribution in $\{-1, 1\}$ with equal probabilities, i.e., (12) with $s = 1$.

6.2 Vowpal Wabbit (VW)

Again, in this paper, "VW" always refers to the particular algorithm in [34]. VW may be viewed as a "bias-corrected" version of the Count-Min (CM) sketch algorithm [12]. In the original CM algorithm, the key step is to independently and uniformly hash elements of the data vectors to buckets $\in \{1, 2, 3, ..., k\}$, and the hashed value is the sum of the elements in the bucket. That is, $h(i) = j$ with probability $\frac{1}{k}$, where $j \in \{1, 2, ..., k\}$. For convenience, we introduce an indicator function:

$$I_{ij} = \begin{cases} 1 & \text{if } h(i) = j \\ 0 & \text{otherwise} \end{cases}$$

which allows us to write the hashed data as

$$w_{1,j} = \sum_{i=1}^{D} u_{1,i} I_{ij}, \quad w_{2,j} = \sum_{i=1}^{D} u_{2,i} I_{ij}.$$

The estimate $\hat{a}_{cm} = \sum_{j=1}^{k} w_{1,j} w_{2,j}$ is (severely) biased for the task of estimating the inner products.
The original paper [12] suggested a "count-min" step for positive data, by generating multiple independent estimates $\hat{a}_{cm}$ and taking the minimum as the final estimate. That step cannot remove the bias and makes the analysis (such as variance) very difficult. Here we should mention that the bias of CM may not be a major issue in other tasks such as sparse recovery (or "heavy-hitter", or "elephant detection", by various communities).

[34] proposed a creative method for bias-correction, which consists of pre-multiplying (element-wise) the original data vectors with a random vector whose entries are sampled i.i.d. from the two-point distribution in $\{-1, 1\}$ with equal probabilities, which corresponds to $s = 1$ in (12).

Here, we consider a more general situation by considering any $s \geq 1$. After applying multiplication and hashing on $u_1$ and $u_2$ as in [34], the resultant vectors $g_1$ and $g_2$ are

$$g_{1,j} = \sum_{i=1}^{D} u_{1,i} r_i I_{ij}, \quad g_{2,j} = \sum_{i=1}^{D} u_{2,i} r_i I_{ij}, \quad j = 1, 2, ..., k, \tag{15}$$

where $r_i$ is defined as in (11), i.e., $E(r_i) = 0$, $E(r_i^2) = 1$, $E(r_i^3) = 0$, $E(r_i^4) = s$. We have the following lemma.

Lemma 1

$$\hat{a}_{vw,s} = \sum_{j=1}^{k} g_{1,j} g_{2,j}, \quad E(\hat{a}_{vw,s}) = \sum_{i=1}^{D} u_{1,i} u_{2,i} = a, \tag{16}$$

$$\mathrm{Var}(\hat{a}_{vw,s}) = (s - 1) \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 + \frac{1}{k} \left[ \sum_{i=1}^{D} u_{1,i}^2 \sum_{i=1}^{D} u_{2,i}^2 + \left( \sum_{i=1}^{D} u_{1,i} u_{2,i} \right)^2 - 2 \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 \right]. \tag{17}$$

Proof: See Appendix B.

Interestingly, the variance (17) says we do need $s = 1$, otherwise the additional term $(s - 1) \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2$ will not vanish even as the sample size $k \to \infty$. In other words, the choice of random distribution in VW is essentially the only option if we want to remove the bias by pre-multiplying the data vectors (element-wise) with a vector of random variables. Of course, once we let $s = 1$, the variance (17) becomes identical to the variance of random projections (14).
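To make the comparison between (14) and (17) concrete, the sketch below implements the hashing step (15) with the two-point distribution ($s = 1$) and evaluates both variance formulas. It is a toy simulation under assumed inputs: the function names, the two binary vectors, $k = 8$, the number of draws, and the seed are all chosen for illustration only.

```python
import numpy as np

def vw_hash(u, bucket, sign, k):
    """Eq. (15): g_j = sum_i u_i * r_i * I_ij, with I_ij = 1 iff bucket[i] == j."""
    return np.bincount(bucket, weights=u * sign, minlength=k)

def vw_estimate(u1, u2, k, rng):
    """One draw of the VW inner-product estimate (16) with s = 1."""
    D = u1.shape[0]
    bucket = rng.integers(0, k, size=D)     # h(i): uniform over k buckets
    sign = rng.choice([-1.0, 1.0], size=D)  # r_i: two-point distribution (s = 1)
    g1 = vw_hash(u1, bucket, sign, k)
    g2 = vw_hash(u2, bucket, sign, k)
    return float(g1 @ g2)

def var_rp(u1, u2, k, s):
    """Variance formula (14) for random projections."""
    q = np.sum(u1**2 * u2**2)
    return (np.sum(u1**2) * np.sum(u2**2) + np.dot(u1, u2) ** 2 + (s - 3) * q) / k

def var_vw(u1, u2, k, s):
    """Variance formula (17) for VW."""
    q = np.sum(u1**2 * u2**2)
    return (s - 1) * q + (np.sum(u1**2) * np.sum(u2**2) + np.dot(u1, u2) ** 2 - 2 * q) / k

rng = np.random.default_rng(0)  # seed chosen arbitrarily
u1 = np.zeros(50)
u1[:20] = 1.0
u2 = np.zeros(50)
u2[10:30] = 1.0                 # true inner product a = 10
k = 8
draws = np.array([vw_estimate(u1, u2, k, rng) for _ in range(20000)])
print(draws.mean(), draws.var())                     # empirical mean and variance
print(var_vw(u1, u2, k, 1), var_rp(u1, u2, k, 1))    # the two formulas agree at s = 1
print(var_vw(u1, u2, k, 3) - var_rp(u1, u2, k, 3))   # gap that does not vanish for s > 1
```

For these vectors both formulas give 60 at $s = 1$, and the empirical mean of the draws should sit close to $a = 10$, consistent with the unbiasedness stated in Lemma 1.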
7 Comparing b-Bit Minwise Hashing with VW

We implemented VW (which, in this paper, always refers to the algorithm developed in [34]) and tested it on the same webspam dataset. Figure 8 shows that $b$-bit minwise hashing is substantially more accurate (at the same sample size $k$) and requires significantly less training time (to achieve the same accuracy). For example, 8-bit minwise hashing with $k = 200$ achieves about the same test accuracy as VW with $k = 10^6$. Note that we only stored the non-zeros of the hashed data generated by VW.

[Figure 8: four panels on the webspam dataset comparing VW with 8-bit hashing, for SVM and logistic regression; test accuracy (%) and training time (sec) are plotted against $k$ (from $10^1$ to $10^6$).]

Figure 8: The dashed (red if color is available) curves represent $b$-bit minwise hashing results (only for $k \le 500$) while solid curves represent VW. We display results for $C = 0.01, 0.1, 1, 10, 100$.

This empirical finding is not surprising, because the variance of $b$-bit hashing is usually substantially smaller than the variance of VW (and random projections). In Appendix C, we show that, at the same storage cost, $b$-bit hashing usually improves VW by 10- to 100-fold, by assuming each sample of VW requires 32 bits of storage. Of course, even if VW only stores each sample using 16 bits, an improvement of 5- to 50-fold would still be very substantial.
Note that this comparison makes sense for the purpose of data reduction, i.e., the sample size $k$ is substantially smaller than the number of non-zeros in the original (massive) data.

There is one interesting issue here. Unlike random projections (and minwise hashing), VW is a sparsity-preserving algorithm, meaning that in the resultant sample vector of length $k$, the number of non-zeros will not exceed the number of non-zeros in the original vector. In fact, it is easy to see that the fraction of zeros in the resultant vector would be (at least) $\left(1 - \frac{1}{k}\right)^c \approx \exp\left(-\frac{c}{k}\right)$, where $c$ is the number of non-zeros in the original data vector. When $\frac{c}{k} \ge 5$, then $\exp\left(-\frac{c}{k}\right) \approx 0$. In other words, if our goal is data reduction (i.e., $k \ll c$), then the hashed data by VW are dense.

In this paper, we mainly focus on data reduction. As discussed in the introduction, for many industry applications, the relatively sparse datasets are often massive in the absolute scale and we assume we cannot store all the non-zeros. In fact, this is also one of the basic motivations for developing minwise hashing.

However, the case of $c \ll k$ can also be interesting and useful in our work. This is because VW is an excellent tool for achieving compact indexing due to the sparsity-preserving property. Basically, we can let $k$ be very large (like $2^{26}$ in [34]). As the original dictionary size $D$ is extremely large (e.g., $2^{64}$), even $k = 2^{26}$ will be a meaningful reduction of the indexing. Of course, using a very large $k$ will not be useful for the purpose of data reduction.

8 Combining b-Bit Minwise Hashing with VW

In our algorithm, we reduce the original massive data to $nbk$ bits only, where $n$ is the number of data points. With (e.g.,) $k = 200$ and $b = 8$, our technique achieves a huge data reduction. At run-time, we need to expand each data point into a binary vector of length $2^b k$ with exactly $k$ 1's.
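The run-time expansion can be illustrated with a short Python sketch. It assumes each data point is given as a list of $k$ hashed values, each an integer in $[0, 2^b)$, and it stores only the positions of the 1's. The function names and the position convention inside each block of $2^b$ entries are hypothetical choices made for this illustration; any fixed convention yields the same inner products.

```python
def expand_indices(hashed, b):
    """Positions of the k ones in the expanded binary vector of length (2**b) * k.

    The j-th hashed value v (0 <= v < 2**b) sets position j * 2**b + v.
    """
    width = 1 << b
    for v in hashed:
        if not 0 <= v < width:
            raise ValueError("hashed value does not fit in b bits")
    return [j * width + v for j, v in enumerate(hashed)]


def sparse_inner_product(idx1, idx2):
    """Inner product of two expanded binary vectors, given the positions of their ones."""
    return len(set(idx1) & set(idx2))


# Two data points with k = 3 samples of b = 2 bits each.
x = expand_indices([3, 0, 2], b=2)   # [3, 4, 10]
y = expand_indices([3, 1, 2], b=2)   # [3, 5, 10]
matches = sparse_inner_product(x, y)  # 2: the first and third samples agree
```

The inner product of two expanded vectors equals the number of matching $b$-bit samples, which is why a linear learner on the expanded data works with the same quantity that the resemblance estimator counts.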
If $b$ is large like 16, the new binary vectors will be highly sparse. In fact, in Figure 3 and Figure 7, we can see that when using $b = 16$, the training time becomes substantially larger than using $b \le 8$ (especially when $k$ is large).

On the other hand, once we have expanded the vectors, the task is merely computing inner products, for which we can actually use VW. Therefore, at run-time, after we have generated the sparse binary vectors of length $2^b k$, we hash them using VW with sample size $m$ (to differentiate from $k$). How large should $m$ be? Lemma 2 may provide some insights.

Recall that Section 2 provides the estimator, denoted by $\hat{R}_b$, of the resemblance $R$, using $b$-bit minwise hashing. Now, suppose we first apply VW hashing with size $m$ on the binary vector of length $2^b k$ before estimating $R$, which will introduce some additional randomness (on top of $b$-bit hashing). We denote the new estimator by $\hat{R}_{b,vw}$. Lemma 2 provides its theoretical variance.

Lemma 2

$$E\left(\hat{R}_{b,vw}\right) = R \tag{18}$$

$$\mathrm{Var}\left(\hat{R}_{b,vw}\right) = \mathrm{Var}\left(\hat{R}_b\right) + \frac{1}{m} \frac{1}{[1 - C_{2,b}]^2} \left( 1 + P_b^2 - \frac{P_b (1 + P_b)}{k} \right) \tag{19}$$

$$= \frac{1}{k} \frac{P_b (1 - P_b)}{[1 - C_{2,b}]^2} + \frac{1}{m} \frac{1 + P_b^2}{[1 - C_{2,b}]^2} - \frac{1}{mk} \frac{P_b (1 + P_b)}{[1 - C_{2,b}]^2}$$

where $\mathrm{Var}\left(\hat{R}_b\right) = \frac{1}{k} \frac{P_b (1 - P_b)}{[1 - C_{2,b}]^2}$ is given by (6) and $C_{2,b}$ is the constant defined in Theorem 1.

Proof: The proof is quite straightforward, by following the conditional expectation formula $E(X) = E(E(X|Y))$ and the conditional variance formula $\mathrm{Var}(X) = E(\mathrm{Var}(X|Y)) + \mathrm{Var}(E(X|Y))$.

Recall that originally we estimate the resemblance by $\hat{R}_b = \frac{\hat{P}_b - C_{1,b}}{1 - C_{2,b}}$, where $\hat{P}_b = \frac{T}{k}$ and $T$ is the number of matches in the two hashed data vectors of length $k$ generated by $b$-bit hashing. $E(T) = k P_b$ and $\mathrm{Var}(T) = k P_b (1 - P_b)$.

Now, we apply VW (of size $m$ and $s = 1$) on the hashed data vectors to estimate $T$ (instead of counting it exactly).
We denote this estimate by $\hat{T}$ and let $\hat{P}_{b,vw} = \frac{\hat{T}}{k}$. Because we know the VW estimate is unbiased, we have

$$E\left(\hat{P}_{b,vw}\right) = E\left(\frac{\hat{T}}{k}\right) = \frac{E(T)}{k} = \frac{k P_b}{k} = P_b.$$

Using the conditional variance formula and the variance of VW (17) (with $s = 1$), we obtain

$$\mathrm{Var}\left(\hat{P}_{b,vw}\right) = \frac{1}{k^2} \left[ E\left( \frac{1}{m} \left( k^2 + T^2 - 2T \right) \right) + \mathrm{Var}(T) \right]$$

$$= \frac{1}{k^2} \left[ \frac{1}{m} \left( k^2 + k P_b (1 - P_b) + k^2 P_b^2 - 2 k P_b \right) + k P_b (1 - P_b) \right]$$

$$= \frac{P_b (1 - P_b)}{k} + \frac{1}{m} \left( 1 + P_b^2 - \frac{P_b (1 + P_b)}{k} \right)$$

This completes the proof.

Compared to the original variance $\mathrm{Var}\left(\hat{R}_b\right) = \frac{1}{k} \frac{P_b (1 - P_b)}{[1 - C_{2,b}]^2}$, the additional term $\frac{1}{m} \frac{1 + P_b^2}{[1 - C_{2,b}]^2}$ in (19) can be relatively large if $m$ is not large enough. Therefore, we should choose $m \gg k$ (to reduce the additional variance) and $m \ll 2^b k$ (otherwise there is no need to apply this VW step). If $b = 16$, then $m = 2^8 k$ may be a good trade-off, because $k \ll 2^8 k \ll 2^{16} k$.

Figure 9 provides an empirical study to verify this intuition. Basically, with $m = 2^8 k$, using VW on top of 16-bit hashing achieves the same accuracies as using 16-bit hashing directly and reduces the training time quite noticeably.

[Figure 9: four panels on the webspam dataset for 16-bit hashing + VW with $k = 200$, for SVM and logistic regression; test accuracy (%) and training time (sec) are plotted against $C$ (from $10^{-3}$ to $10^2$).]

Figure 9: We apply VW hashing on top of the binary vectors (of length $2^b k$) generated by $b$-bit hashing, with size $m = 2^0 k, 2^1 k, 2^2 k, 2^3 k, 2^8 k$, for $k = 200$ and $b = 16$. The numbers on the solid curves (0, 1, 2, 3, 8) are the exponents.
The dashed (red if color is available) curves are the results from only using $b$-bit hashing. When $m = 2^8 k$, this method achieves the same test accuracies (left two panels) while considerably reducing the training time (right two panels), if we focus on $C \approx 1$.

We also experimented with combining 8-bit hashing with VW. We found that we need $m = 2^8 k$ to achieve similar accuracies, i.e., the additional VW step did not bring more improvement (without hurting accuracies) in terms of training speed when $b = 8$. This is understandable from the analysis of the variance in Lemma 2.

9 Practical Considerations

Minwise hashing has been widely used in the (search) industry, and $b$-bit minwise hashing requires only very minimal (if any) modifications (by doing less work). Thus, we expect $b$-bit minwise hashing will be adopted in practice. It is also well understood in practice that we can use (good) hashing functions to very efficiently simulate permutations.

In many real-world scenarios, the preprocessing step is not critical because it requires only one scan of the data, which can be conducted off-line (or at the data-collection stage, or at the same time as n-grams are generated), and it is trivially parallelizable. In fact, because $b$-bit minwise hashing can substantially reduce the memory consumption, it may now be affordable to store considerably more examples in memory (after $b$-bit hashing) than before, to avoid (or minimize) disk IOs. Once the hashed data have been generated, they can be used and re-used for many tasks such as supervised learning, clustering, duplicate detection, near-neighbor search, etc. For example, a learning task may need to re-use the same (hashed) dataset to perform many cross-validations and parameter tuning (e.g., for experimenting with many $C$ values in SVM).

Nevertheless, there might be situations in which the preprocessing time can be an issue.
For example, when a new unprocessed document (i.e., n-grams are not yet available) arrives and a particular application requires an immediate response from the learning algorithm, then the preprocessing cost might (or might not) be an issue. Firstly, generating n-grams will take some time. Secondly, if during the session a disk IO occurs, then the IO cost will typically mask the cost of preprocessing for $b$-bit minwise hashing.

Note that the preprocessing cost for the VW algorithm can be substantially lower. Thus, if the time for preprocessing is indeed a concern (while the storage cost or test accuracies are not as much), one may want to consider using VW (or very sparse random projections [24]) for those applications.

10 Conclusion

As data sizes continue to grow faster than memory and computational power, machine-learning tasks in industrial practice are increasingly faced with training datasets that exceed the resources of a single server. A number of approaches have been proposed that address this by either scaling out the training process or partitioning the data, but both solutions can be expensive. In this paper, we propose a compact representation of sparse, binary datasets based on $b$-bit minwise hashing. We show that the $b$-bit minwise hashing estimators are positive definite kernels and can be naturally integrated with learning algorithms such as SVM and logistic regression, leading to dramatic improvements in training time and/or resource requirements. We also compare $b$-bit minwise hashing with the Vowpal Wabbit (VW) algorithm, which has the same variances as random projections. Our theoretical and empirical comparisons illustrate that usually $b$-bit minwise hashing is significantly more accurate (at the same storage) than VW for binary data.
Interestingly, $b$-bit minwise hashing can be combined with VW to achieve further improvements in terms of training speed when $b$ is large (e.g., $b \ge 16$).

References

[1] Dimitris Achlioptas. Database-friendly random projections: Johnson-Lindenstrauss with binary coins. Journal of Computer and System Sciences, 66(4):671–687, 2003.
[2] Alexandr Andoni and Piotr Indyk. Near-optimal hashing algorithms for approximate nearest neighbor in high dimensions. In Commun. ACM, volume 51, pages 117–122, 2008.
[3] Harald Baayen. Word Frequency Distributions, volume 18 of Text, Speech and Language Technology. Kluwer Academic Publishers, 2001.
[4] Michael Bendersky and W. Bruce Croft. Finding text reuse on the web. In WSDM, pages 262–271, Barcelona, Spain, 2009.
[5] Leon Bottou. Available at http://leon.bottou.org/projects/sgd.
[6] Andrei Z. Broder. On the resemblance and containment of documents. In the Compression and Complexity of Sequences, pages 21–29, Positano, Italy, 1997.
[7] Andrei Z. Broder, Steven C. Glassman, Mark S. Manasse, and Geoffrey Zweig. Syntactic clustering of the web. In WWW, pages 1157–1166, Santa Clara, CA, 1997.
[8] Gregory Buehrer and Kumar Chellapilla. A scalable pattern mining approach to web graph compression with communities. In WSDM, pages 95–106, Stanford, CA, 2008.
[9] Olivier Chapelle, Patrick Haffner, and Vladimir N. Vapnik. Support vector machines for histogram-based image classification. IEEE Trans. Neural Networks, 10(5):1055–1064, 1999.
[10] Ludmila Cherkasova, Kave Eshghi, Charles B. Morrey III, Joseph Tucek, and Alistair C. Veitch. Applying syntactic similarity algorithms for enterprise information management. In KDD, pages 1087–1096, Paris, France, 2009.
[11] Flavio Chierichetti, Ravi Kumar, Silvio Lattanzi, Michael Mitzenmacher, Alessandro Panconesi, and Prabhakar Raghavan. On compressing social networks. In KDD, pages 219–228, Paris, France, 2009.
[12] Graham Cormode and S. Muthukrishnan. An improved data stream summary: the count-min sketch and its applications. Journal of Algorithms, 55(1):58–75, 2005.
[13] Yon Dourisboure, Filippo Geraci, and Marco Pellegrini. Extraction and classification of dense implicit communities in the web graph. ACM Trans. Web, 3(2):1–36, 2009.
[14] Rong-En Fan, Kai-Wei Chang, Cho-Jui Hsieh, Xiang-Rui Wang, and Chih-Jen Lin. Liblinear: A library for large linear classification. Journal of Machine Learning Research, 9:1871–1874, 2008.
[15] Dennis Fetterly, Mark Manasse, Marc Najork, and Janet L. Wiener. A large-scale study of the evolution of web pages. In WWW, pages 669–678, Budapest, Hungary, 2003.
[16] George Forman, Kave Eshghi, and Jaap Suermondt. Efficient detection of large-scale redundancy in enterprise file systems. SIGOPS Oper. Syst. Rev., 43(1):84–91, 2009.
[17] Sreenivas Gollapudi and Aneesh Sharma. An axiomatic approach for result diversification. In WWW, pages 381–390, Madrid, Spain, 2009.
[18] Matthias Hein and Olivier Bousquet. Hilbertian metrics and positive definite kernels on probability measures. In AISTATS, pages 136–143, Barbados, 2005.
[19] Cho-Jui Hsieh, Kai-Wei Chang, Chih-Jen Lin, S. Sathiya Keerthi, and S. Sundararajan. A dual coordinate descent method for large-scale linear svm. In Proceedings of the 25th International Conference on Machine Learning, ICML, pages 408–415, 2008.
[20] Yugang Jiang, Chongwah Ngo, and Jun Yang. Towards optimal bag-of-features for object categorization and semantic video retrieval. In CIVR, pages 494–501, Amsterdam, Netherlands, 2007.
[21] Nitin Jindal and Bing Liu. Opinion spam and analysis. In WSDM, pages 219–230, Palo Alto, California, USA, 2008.
[22] Thorsten Joachims. Training linear svms in linear time. In KDD, pages 217–226, Pittsburgh, PA, 2006.
[23] Konstantinos Kalpakis and Shilang Tang.
Collaborative data gathering in wireless sensor networks using measurement co-occurrence. Computer Communications, 31(10):1979–1992, 2008.
[24] Ping Li, Trevor J. Hastie, and Kenneth W. Church. Very sparse random projections. In KDD, pages 287–296, Philadelphia, PA, 2006.
[25] Ping Li and Arnd Christian König. Theory and applications of b-bit minwise hashing. In Commun. ACM, To Appear.
[26] Ping Li and Arnd Christian König. b-bit minwise hashing. In WWW, pages 671–680, Raleigh, NC, 2010.
[27] Ping Li, Arnd Christian König, and Wenhao Gui. b-bit minwise hashing for estimating three-way similarities. In NIPS, Vancouver, BC, 2010.
[28] Gurmeet Singh Manku, Arvind Jain, and Anish Das Sarma. Detecting Near-Duplicates for Web-Crawling. In WWW, Banff, Alberta, Canada, 2007.
[29] Marc Najork, Sreenivas Gollapudi, and Rina Panigrahy. Less is more: sampling the neighborhood graph makes salsa better and faster. In WSDM, pages 242–251, Barcelona, Spain, 2009.
[30] Shai Shalev-Shwartz, Yoram Singer, and Nathan Srebro. Pegasos: Primal estimated sub-gradient solver for svm. In ICML, pages 807–814, Corvallis, Oregon, 2007.
[31] Qinfeng Shi, James Petterson, Gideon Dror, John Langford, Alex Smola, and S.V.N. Vishwanathan. Hash kernels for structured data. Journal of Machine Learning Research, 10:2615–2637, 2009.
[32] Simon Tong. Lessons learned developing a practical large scale machine learning system. Available at http://googleresearch.blogspot.com/2010/04/lessons-learned-developing-practical.html, 2008.
[33] Tanguy Urvoy, Emmanuel Chauveau, Pascal Filoche, and Thomas Lavergne. Tracking web spam with html style similarities. ACM Trans. Web, 2(1):1–28, 2008.
[34] Kilian Weinberger, Anirban Dasgupta, John Langford, Alex Smola, and Josh Attenberg. Feature hashing for large scale multitask learning. In ICML, pages 1113–1120, 2009.
[35] Hsiang-Fu Yu, Cho-Jui Hsieh, Kai-Wei Chang, and Chih-Jen Lin. Large linear classification when data cannot fit in memory. In KDD, pages 833–842, 2010.

A Approximation Errors of the Basic Probability Formula

Note that the only assumption needed in the proof of Theorem 1 is that $D$ is large, which is virtually always satisfied in practice. Interestingly, (4) is remarkably accurate even for very small $D$. Figure 10 shows that when $D = 20 / 200 / 500$, the absolute error caused by using (4) is $< 0.01 / 0.001 / 0.0004$. The exact probability, which has no closed form, can be computed by exhaustive enumeration for small $D$.

[Figure 10: twelve panels plotting the approximation error against $a / f_2$. For $b = 1$: $D = 20$ with $f_1 = 4, 10, 16$; $D = 200$ with $f_1 = 10, 100, 190$; $D = 500$ with $f_1 = 50, 250, 450$. For $b = 4$: $D = 20, f_1 = 16$; $D = 200, f_1 = 190$; $D = 500, f_1 = 450$.]

Figure 10: The absolute errors (approximate − exact) by using (4) are very small even for $D = 20$ (left panels), $D = 200$ (middle panels), and $D = 500$ (right panels). The exact probability can be numerically computed for small $D$ (from a probability matrix of size $D \times D$). For each $D$, we selected three $f_1$ values. We always let $f_2 = 2, 3, ..., f_1$ and $a = 0, 1, 2, ..., f_2$.

B Proof of Lemma 1

The VW algorithm [34] provides a bias-corrected version of the Count-Min (CM) sketch algorithm [12].

B.1 The Analysis of the CM Algorithm

The key step in CM is to independently and uniformly hash elements of the data vectors to $\{1, 2, 3, ..., k\}$. That is, $h(i) = q$ with equal probabilities, where $q \in \{1, 2, ..., k\}$. For convenience, we introduce the following indicator function:

$$I_{iq} = \begin{cases} 1 & \text{if } h(i) = q \\ 0 & \text{otherwise} \end{cases}$$

Thus, we can denote the CM "samples" after the hashing step by

$$w_{1,q} = \sum_{i=1}^{D} u_{1,i} I_{iq}, \quad w_{2,q} = \sum_{i=1}^{D} u_{2,i} I_{iq}$$

and estimate the inner product by $\hat{a}_{cm} = \sum_{q=1}^{k} w_{1,q} w_{2,q}$, whose expectation and variance can be shown to be

$$E(\hat{a}_{cm}) = \sum_{i=1}^{D} u_{1,i} u_{2,i} + \frac{1}{k} \sum_{i \ne j} u_{1,i} u_{2,j} = a + \frac{1}{k} \sum_{i=1}^{D} u_{1,i} \sum_{i=1}^{D} u_{2,i} - \frac{1}{k} a \tag{20}$$

$$\mathrm{Var}(\hat{a}_{cm}) = \frac{1}{k} \left( 1 - \frac{1}{k} \right) \left[ \sum_{i=1}^{D} u_{1,i}^2 \sum_{i=1}^{D} u_{2,i}^2 + \left( \sum_{i=1}^{D} u_{1,i} u_{2,i} \right)^2 - 2 \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 \right] \tag{21}$$

From the definition of $I_{iq}$, we can easily infer its moments, for example,

$$E(I_{iq}^n) = \frac{1}{k}, \quad E(I_{iq} I_{iq'}) = 0 \text{ if } q \ne q', \quad E(I_{iq} I_{i'q'}) = \frac{1}{k^2} \text{ if } i \ne i'$$

The proof of the mean (20) is simple:

$$E(\hat{a}_{cm}) = \sum_{q=1}^{k} E\left[ \sum_{i=1}^{D} u_{1,i} I_{iq} \sum_{i=1}^{D} u_{2,i} I_{iq} \right] = \sum_{q=1}^{k} \left[ \sum_{i=1}^{D} u_{1,i} u_{2,i} E\left(I_{iq}^2\right) + \sum_{i \ne j} u_{1,i} u_{2,j} E(I_{iq} I_{jq}) \right] = \sum_{i=1}^{D} u_{1,i} u_{2,i} + \frac{1}{k} \sum_{i \ne j} u_{1,i} u_{2,j}.$$
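For very small $D$ and $k$, the mean (20) and the variance (21) can be checked exactly by enumerating all $k^D$ equally likely hash maps. The following Python sketch does this with exact rational arithmetic. It is a sanity check under stated assumptions rather than part of the proof: the function names are hypothetical, buckets are numbered from 0, and the inputs are assumed to be integers.

```python
from fractions import Fraction
from itertools import product


def cm_exact_moments(u1, u2, k):
    """Exact mean and variance of a_cm, averaging over all k**D hash maps."""
    D = len(u1)
    total = Fraction(0)
    total_sq = Fraction(0)
    count = 0
    for h in product(range(k), repeat=D):
        w1 = [0] * k
        w2 = [0] * k
        for i in range(D):
            w1[h[i]] += u1[i]
            w2[h[i]] += u2[i]
        est = sum(x * y for x, y in zip(w1, w2))
        total += est
        total_sq += est * est
        count += 1
    mean = total / count
    return mean, total_sq / count - mean * mean


def cm_mean_formula(u1, u2, k):
    """Right-hand side of (20)."""
    a = sum(x * y for x, y in zip(u1, u2))
    return a + Fraction(sum(u1) * sum(u2) - a, k)


def cm_variance_formula(u1, u2, k):
    """Right-hand side of (21)."""
    a = sum(x * y for x, y in zip(u1, u2))
    n1 = sum(x * x for x in u1)
    n2 = sum(y * y for y in u2)
    sq = sum(x * x * y * y for x, y in zip(u1, u2))
    return Fraction(1, k) * (1 - Fraction(1, k)) * (n1 * n2 + a * a - 2 * sq)
```

For instance, `cm_exact_moments([1, 2], [3, 1], 2)` enumerates four hash maps; the true inner product is 5, while the exact mean of the CM estimate is 17/2, which illustrates the bias term in (20).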
The variance (21) is more complicated:

$$\mathrm{Var}(\hat{a}_{cm}) = E(\hat{a}_{cm}^2) - E^2(\hat{a}_{cm}) = \sum_{q=1}^{k} E\left(w_{1,q}^2 w_{2,q}^2\right) + \sum_{q \ne q'} E\left(w_{1,q} w_{2,q} w_{1,q'} w_{2,q'}\right) - \left( \sum_{i=1}^{D} u_{1,i} u_{2,i} + \frac{1}{k} \sum_{i \ne j} u_{1,i} u_{2,j} \right)^2$$

The following expansions are helpful:

$$\left[ \sum_{i=1}^{D} a_i \sum_{i=1}^{D} b_i \right]^2 = \sum_{i=1}^{D} a_i^2 b_i^2 + \sum_{i \ne j} \left( a_i^2 b_j^2 + 2 a_i^2 b_i b_j + 2 b_i^2 a_i a_j + 2 a_i b_i a_j b_j \right) + \sum_{i \ne j \ne c} \left( a_i^2 b_j b_c + b_i^2 a_j a_c + 4 a_i b_i a_j b_c \right) + \sum_{i \ne j \ne c \ne t} a_i b_j a_c b_t$$

$$\left[ \sum_{i \ne j} a_i b_j \right]^2 = \sum_{i \ne j} \left( a_i^2 b_j^2 + a_i b_i a_j b_j \right) + \sum_{i \ne j \ne c} \left( a_i^2 b_j b_c + b_i^2 a_j a_c + 2 a_i b_i a_j b_c \right) + \sum_{i \ne j \ne c \ne t} a_i b_j a_c b_t$$

$$\sum_{i=1}^{D} a_i b_i \sum_{i \ne j} a_i b_j = \sum_{i \ne j} \left( a_i^2 b_i b_j + b_i^2 a_i a_j \right) + \sum_{i \ne j \ne c} a_i b_i a_j b_c$$

which, combined with the moments of $I_{iq}$, yield

$$\sum_{q=1}^{k} E\left(w_{1,q}^2 w_{2,q}^2\right) = \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 + \frac{1}{k} \sum_{i \ne j} \left( u_{1,i}^2 u_{2,j}^2 + 2 u_{1,i}^2 u_{2,i} u_{2,j} + 2 u_{2,i}^2 u_{1,i} u_{1,j} + 2 u_{1,i} u_{2,i} u_{1,j} u_{2,j} \right) + \frac{1}{k^2} \sum_{i \ne j \ne c} \left( u_{1,i}^2 u_{2,j} u_{2,c} + u_{2,i}^2 u_{1,j} u_{1,c} + 4 u_{1,i} u_{2,i} u_{1,j} u_{2,c} \right) + \frac{1}{k^3} \sum_{i \ne j \ne c \ne t} u_{1,i} u_{2,j} u_{1,c} u_{2,t}$$

$$\sum_{q \ne q'} E\left(w_{1,q} w_{2,q} w_{1,q'} w_{2,q'}\right) = k(k-1) \left[ \frac{1}{k^2} \sum_{i \ne j} u_{1,i} u_{2,i} u_{1,j} u_{2,j} + \frac{2}{k^3} \sum_{i \ne j \ne c} u_{1,i} u_{2,i} u_{1,j} u_{2,c} + \frac{1}{k^4} \sum_{i \ne j \ne c \ne t} u_{1,i} u_{2,j} u_{1,c} u_{2,t} \right]$$

$$= (k-1) \left[ \frac{1}{k} \sum_{i \ne j} u_{1,i} u_{2,i} u_{1,j} u_{2,j} + \frac{2}{k^2} \sum_{i \ne j \ne c} u_{1,i} u_{2,i} u_{1,j} u_{2,c} + \frac{1}{k^3} \sum_{i \ne j \ne c \ne t} u_{1,i} u_{2,j} u_{1,c} u_{2,t} \right]$$

Adding the two parts gives

$$E(\hat{a}_{cm}^2) = \sum_{q=1}^{k} E\left(w_{1,q}^2 w_{2,q}^2\right) + \sum_{q \ne q'} E\left(w_{1,q} w_{2,q} w_{1,q'} w_{2,q'}\right)$$

$$= \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 + \sum_{i \ne j} u_{1,i} u_{2,i} u_{1,j} u_{2,j} + \frac{1}{k} \sum_{i \ne j} \left( u_{1,i}^2 u_{2,j}^2 + 2 u_{1,i}^2 u_{2,i} u_{2,j} + 2 u_{2,i}^2 u_{1,i} u_{1,j} + u_{1,i} u_{2,i} u_{1,j} u_{2,j} \right) + \frac{2}{k} \sum_{i \ne j \ne c} u_{1,i} u_{2,i} u_{1,j} u_{2,c} + \frac{1}{k^2} \sum_{i \ne j \ne c} \left( u_{1,i}^2 u_{2,j} u_{2,c} + u_{2,i}^2 u_{1,j} u_{1,c} + 2 u_{1,i} u_{2,i} u_{1,j} u_{2,c} \right) + \frac{1}{k^2} \sum_{i \ne j \ne c \ne t} u_{1,i} u_{2,j} u_{1,c} u_{2,t}$$

$$= \left( \sum_{i=1}^{D} u_{1,i} u_{2,i} \right)^2 + \left( \frac{1}{k} - \frac{1}{k^2} \right) \sum_{i \ne j} \left( u_{1,i}^2 u_{2,j}^2 + u_{1,i} u_{2,i} u_{1,j} u_{2,j} \right) + \frac{2}{k} \sum_{i=1}^{D} u_{1,i} u_{2,i} \sum_{i \ne j} u_{1,i} u_{2,j} + \frac{1}{k^2} \left( \sum_{i \ne j} u_{1,i} u_{2,j} \right)^2$$

Therefore,

$$\mathrm{Var}(\hat{a}_{cm}) = E(\hat{a}_{cm}^2) - \left( \sum_{i=1}^{D} u_{1,i} u_{2,i} + \frac{1}{k} \sum_{i \ne j} u_{1,i} u_{2,j} \right)^2 = \left( \frac{1}{k} - \frac{1}{k^2} \right) \sum_{i \ne j} \left( u_{1,i}^2 u_{2,j}^2 + u_{1,i} u_{2,i} u_{1,j} u_{2,j} \right)$$

$$= \frac{1}{k} \left( 1 - \frac{1}{k} \right) \left[ \sum_{i=1}^{D} u_{1,i}^2 \sum_{i=1}^{D} u_{2,i}^2 + \left( \sum_{i=1}^{D} u_{1,i} u_{2,i} \right)^2 - 2 \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 \right]$$

B.2 The Analysis of the More General Version of the VW Algorithm

The nice approach proposed in the VW paper [34] is to multiply the data vectors element-wise by a random vector $r$ before the hashing operation. We denote the two resultant vectors (samples) by $g_1$ and $g_2$ respectively:

$$g_{1,q} = \sum_{i=1}^{D} u_{1,i} r_i I_{iq}, \quad g_{2,q} = \sum_{i=1}^{D} u_{2,i} r_i I_{iq}$$

where $r_i \in \{-1, 1\}$ with equal probabilities. Here, we provide a more general scheme by sampling $r_i$ from a sub-Gaussian distribution with parameter $s$ and

$$E(r_i) = 0, \quad E(r_i^2) = 1, \quad E(r_i^3) = 0, \quad E(r_i^4) = s$$

which includes the normal distribution (i.e., $s = 3$) and the distribution on $\{-1, 1\}$ with equal probabilities (i.e., $s = 1$) as special cases.

Let $\hat{a}_{vw,s} = \sum_{q=1}^{k} g_{1,q} g_{2,q}$. The goal is to show

$$E(\hat{a}_{vw,s}) = \sum_{i=1}^{D} u_{1,i} u_{2,i}$$

$$\mathrm{Var}(\hat{a}_{vw,s}) = (s - 1) \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 + \frac{1}{k} \left[ \sum_{i=1}^{D} u_{1,i}^2 \sum_{i=1}^{D} u_{2,i}^2 + \left( \sum_{i=1}^{D} u_{1,i} u_{2,i} \right)^2 - 2 \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 \right]$$

We can use the previous results and the conditional expectation and variance formulas $E(X) = E(E(X|Y))$ and $\mathrm{Var}(X) = E(\mathrm{Var}(X|Y)) + \mathrm{Var}(E(X|Y))$, together with the moments $E(r_i) = 0$, $E(r_i^2) = 1$, $E(r_i^3) = 0$, $E(r_i^4) = s$.
$$E(\hat{a}_{vw,s}) = E(E(\hat{a}_{vw,s} | r)) = E\left( \sum_{i=1}^{D} u_{1,i} u_{2,i} r_i^2 + \frac{1}{k} \sum_{i \ne j} u_{1,i} u_{2,j} r_i r_j \right) = \sum_{i=1}^{D} u_{1,i} u_{2,i}$$

$$E(\mathrm{Var}(\hat{a}_{vw,s} | r)) = \left( \frac{1}{k} - \frac{1}{k^2} \right) E\left[ \sum_{i \ne j} \left( u_{1,i}^2 u_{2,j}^2 r_i^2 r_j^2 + u_{1,i} u_{2,i} u_{1,j} u_{2,j} r_i^2 r_j^2 \right) \right] = \left( \frac{1}{k} - \frac{1}{k^2} \right) \sum_{i \ne j} \left( u_{1,i}^2 u_{2,j}^2 + u_{1,i} u_{2,i} u_{1,j} u_{2,j} \right)$$

As $\mathrm{Var}(E(\hat{a}_{vw,s} | r)) = E(E^2(\hat{a}_{vw,s} | r)) - E^2(E(\hat{a}_{vw,s} | r))$, we need to compute

$$E(E^2(\hat{a}_{vw,s} | r)) = E\left( \sum_{i=1}^{D} u_{1,i} u_{2,i} r_i^2 + \frac{1}{k} \sum_{i \ne j} u_{1,i} u_{2,j} r_i r_j \right)^2 = s \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 + \sum_{i \ne j} u_{1,i} u_{2,i} u_{1,j} u_{2,j} + \frac{1}{k^2} \sum_{i \ne j} \left( u_{1,i}^2 u_{2,j}^2 + u_{1,i} u_{2,i} u_{1,j} u_{2,j} \right)$$

Thus,

$$\mathrm{Var}(E(\hat{a}_{vw,s} | r)) = (s - 1) \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 + \frac{1}{k^2} \sum_{i \ne j} \left( u_{1,i}^2 u_{2,j}^2 + u_{1,i} u_{2,i} u_{1,j} u_{2,j} \right)$$

$$\mathrm{Var}(\hat{a}_{vw,s}) = (s - 1) \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 + \frac{1}{k} \sum_{i \ne j} \left( u_{1,i}^2 u_{2,j}^2 + u_{1,i} u_{2,i} u_{1,j} u_{2,j} \right)$$

B.3 Another Simple Scheme for Bias-Correction

By examining the expectation (20), the bias of CM can be easily removed, because

$$\hat{a}_{cm,nb} = \frac{k}{k - 1} \left[ \hat{a}_{cm} - \frac{1}{k} \sum_{i=1}^{D} u_{1,i} \sum_{i=1}^{D} u_{2,i} \right] \tag{22}$$

is unbiased with variance

$$\mathrm{Var}(\hat{a}_{cm,nb}) = \frac{1}{k - 1} \left[ \sum_{i=1}^{D} u_{1,i}^2 \sum_{i=1}^{D} u_{2,i}^2 + \left( \sum_{i=1}^{D} u_{1,i} u_{2,i} \right)^2 - 2 \sum_{i=1}^{D} u_{1,i}^2 u_{2,i}^2 \right], \tag{23}$$

which is essentially the same as the variance of VW.

C Comparing b-Bit Minwise Hashing with VW (and Random Projections)

We compare VW (and random projections) with $b$-bit minwise hashing for the task of estimating inner products on binary data. With binary data, i.e., $u_{1,i}, u_{2,i} \in \{0, 1\}$, we have $f_1 = \sum_{i=1}^{D} u_{1,i}$, $f_2 = \sum_{i=1}^{D} u_{2,i}$, $a = \sum_{i=1}^{D} u_{1,i} u_{2,i}$. The variance (17) (by using $s = 1$) becomes

$$\mathrm{Var}(\hat{a}_{vw,s=1}) = \frac{f_1 f_2 + a^2 - 2a}{k}$$

We can compare this variance with the variance of $b$-bit minwise hashing.
Because the variance (6) is for estimating the resemblance, we need to convert it into the variance for estimating the inner product $a$ using the relation:

$$a = \frac{R}{1 + R} (f_1 + f_2)$$

We can estimate $a$ from the estimated $R$,

$$\hat{a}_b = \frac{\hat{R}_b}{1 + \hat{R}_b} (f_1 + f_2), \quad \mathrm{Var}(\hat{a}_b) = \left[ \frac{1}{(1 + R)^2} (f_1 + f_2) \right]^2 \mathrm{Var}(\hat{R}_b)$$

For $b$-bit minwise hashing, each sample is stored using only $b$ bits. For VW (and random projections), we assume each sample is stored using 32 bits (instead of 64 bits) for two reasons: (i) for binary data, it would be very unlikely for the hashed value to be close to $2^{64}$, even when $D = 2^{64}$; (ii) unlike $b$-bit minwise hashing, which requires exact bit-matching in the estimation stage, random projections only need to compute the inner products, for which it would suffice to store hashed values as (double precision) real numbers.

Thus, we define the following ratio to compare the two methods.

$$G_{vw} = \frac{\mathrm{Var}(\hat{a}_{vw,s=1}) \times 32}{\mathrm{Var}(\hat{a}_b) \times b} \tag{24}$$

If $G_{vw} > 1$, then $b$-bit minwise hashing is more accurate than binary random projections. Equivalently, when $G_{vw} > 1$, in order to achieve the same level of accuracy (variance), $b$-bit minwise hashing needs smaller storage space than random projections. There are two issues we need to elaborate on:

1. Here, we assume the purpose of using VW is for data reduction. That is, $k$ is small compared to the number of non-zeros (i.e., $f_1$, $f_2$). We do not consider the case when $k$ is taken to be extremely large for the benefits of compact indexing without achieving data reduction.

2. Because we assume $k$ is small, we need to represent the sample with enough precision. That is why we assume each sample of VW is stored using 32 bits. In fact, since the ratio $G_{vw}$ is usually very large (e.g., 10 ∼ 100) by using 32 bits for each VW sample, it will remain very large (e.g., 5 ∼ 50) even if we only need to store each VW sample using 16 bits.
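The ratio (24) can be evaluated with a short Python sketch. The values of $P_b$ and $C_{2,b}$ come from Theorem 1, which is not restated in this appendix, so the sketch simply takes them as inputs. The function names are hypothetical, and the numbers in the usage note below are placeholders chosen for arithmetic convenience, not values from the paper.

```python
def vw_variance_binary(f1, f2, a, k):
    """Variance of the VW estimate on binary data with s = 1: (f1*f2 + a^2 - 2a) / k."""
    return (f1 * f2 + a * a - 2 * a) / k


def bbit_inner_product_variance(f1, f2, a, k, P_b, C_2b):
    """Variance of a_hat_b, converted from Var(R_hat_b) in (6) as in Appendix C."""
    R = a / (f1 + f2 - a)  # resemblance of two sets with sizes f1, f2 and overlap a
    var_R = P_b * (1.0 - P_b) / (k * (1.0 - C_2b) ** 2)
    return ((f1 + f2) / (1.0 + R) ** 2) ** 2 * var_R


def storage_ratio(f1, f2, a, b, P_b, C_2b, k=1, vw_bits=32):
    """The ratio G_vw in (24); k cancels because both variances scale as 1/k."""
    numerator = vw_variance_binary(f1, f2, a, k) * vw_bits
    denominator = bbit_inner_product_variance(f1, f2, a, k, P_b, C_2b) * b
    return numerator / denominator
```

For example, `storage_ratio(100, 100, 50, 8, P_b=0.5, C_2b=0.0)` evaluates to about 15.7 for these placeholder inputs, and passing `vw_bits=16` halves the result, which is the 32-bit versus 16-bit relationship noted in item 2 above.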
Without loss of generality, we can assume $f_2 \le f_1$ (hence $a \le f_2 \le f_1$). Figures 11 to 14 display the ratios (24) for $b = 8, 4, 2, 1$, respectively. In order to achieve high learning accuracies, $b$-bit minwise hashing requires $b = 4$ (or even 8). In each figure, we plot $G_{vw}$ for $f_1 / D = 0.0001, 0.1, 0.5, 0.9$ and full ranges of $f_2$ and $a$. We can see that $G_{vw}$ is much larger than one (usually 10 to 100), indicating the very substantial advantage of $b$-bit minwise hashing over random projections.

Note that the comparisons are essentially independent of $D$. This is because in the variance of binary random projections used in (24), the $-2a$ term is negligible compared to $a^2$ in binary data as $D$ is very large. To generate the plots, we used $D = 10^6$ (although practically $D$ should be much larger).

Conclusion: Our theoretical analysis has illustrated the substantial improvements of $b$-bit minwise hashing over the VW algorithm and random projections in binary data, often by 10- to 100-fold. We feel such a large performance difference should be noted by researchers and practitioners in large-scale machine learning.

[Figure 11: four panels for $b = 8$ with $f_1 = 10^{-4} \times D$, $0.1 \times D$, $0.5 \times D$, $0.9 \times D$; the ratio (log scale, $10^0$ to $10^4$) is plotted against $a / f_2$, with one curve per $f_2$ from $0.1 \times f_1$ to $f_1$.]

Figure 11: $G_{vw}$ as defined in (24), for $b = 8$. $G_{vw} > 1$ means $b$-bit minwise hashing is more accurate than random projections at the same storage cost. We consider four $f_1$ values. For each $f_1$, we let $f_2 = 0.1 f_1, 0.2 f_1, ..., f_1$ and $a = 0$ to $f_2$.
[Figure 12: four panels for $b = 4$ with $f_1 = 10^{-4} \times D$, $0.1 \times D$, $0.5 \times D$, $0.9 \times D$; the ratio (log scale) is plotted against $a / f_2$, with one curve per $f_2$ from $0.1 \times f_1$ to $f_1$.]

Figure 12: $G_{vw}$ as defined in (24), for $b = 4$. They again indicate the very substantial improvement of $b$-bit minwise hashing over random projections.

[Figure 13: four panels for $b = 2$ with $f_1 = 10^{-4} \times D$, $0.1 \times D$, $0.5 \times D$, $0.9 \times D$; the ratio (log scale) is plotted against $a / f_2$, with one curve per $f_2$ from $0.1 \times f_1$ to $f_1$.]

Figure 13: $G_{vw}$ as defined in (24), for $b = 2$.

[Figure 14: four panels for $b = 1$ with $f_1 = 10^{-4} \times D$, $0.1 \times D$, $0.5 \times D$, $0.9 \times D$; the ratio (log scale) is plotted against $a / f_2$, with one curve per $f_2$ from $0.1 \times f_1$ to $f_1$.]

Figure 14: $G_{vw}$ as defined in (24), for $b = 1$.
