Accelerating Reinforcement Learning through Implicit Imitation

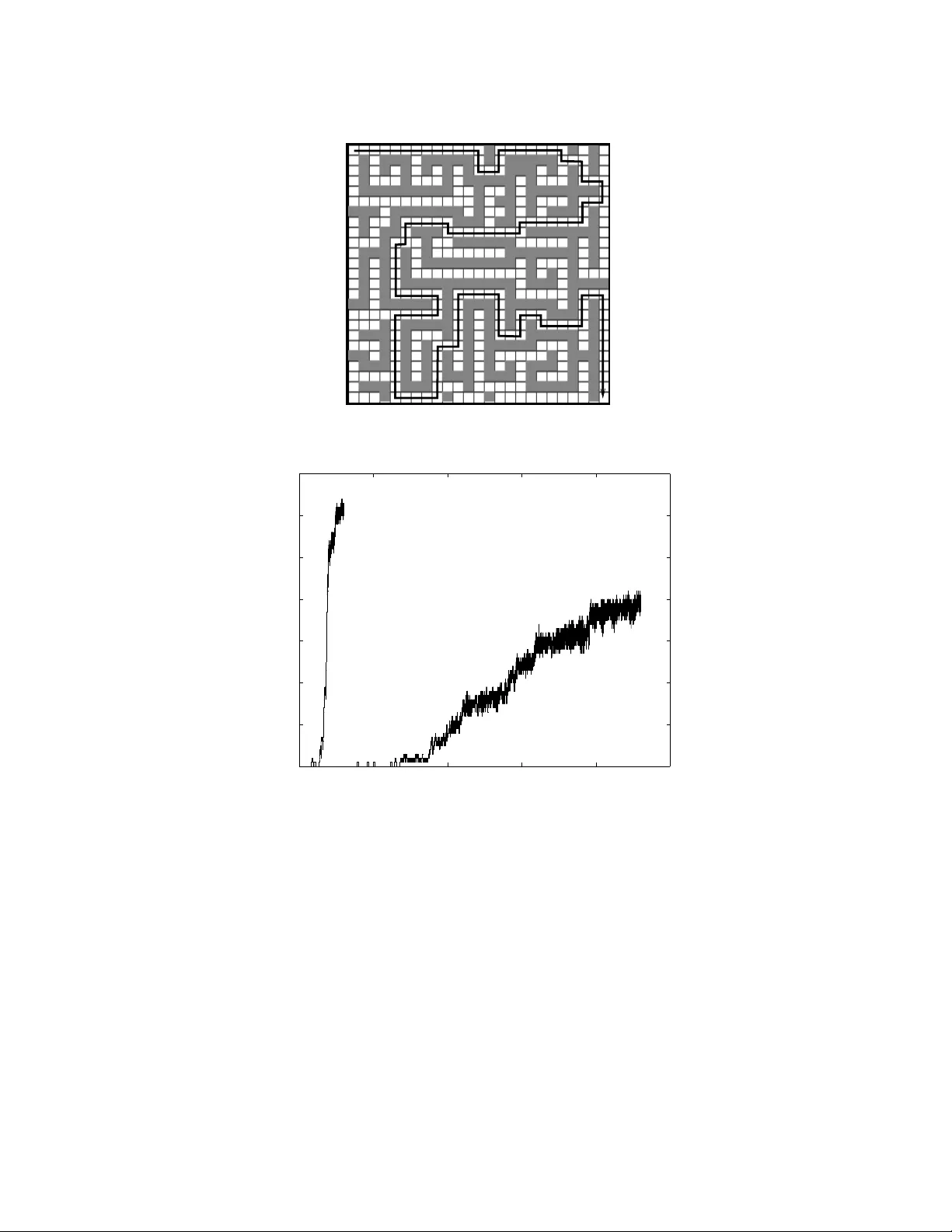

Imitation can be viewed as a means of enhancing learning in multiagent environments. It augments an agent's ability to learn useful behaviors by making intelligent use of the knowledge implicit in behaviors demonstrated by cooperative teachers or oth…

Authors: **Bob Price**, Department of Computer Science, University of British Columbia, Vancouver