Efficient sampling of high-dimensional Gaussian fields: the non-stationary / non-sparse case

This paper is devoted to the problem of sampling Gaussian fields in high dimension. Solutions exist for two specific structures of inverse covariance : sparse and circulant. The proposed approach is valid in a more general case and especially as it e…

Authors: F. Orieux, O. Feron, J.-F. Giovannelli

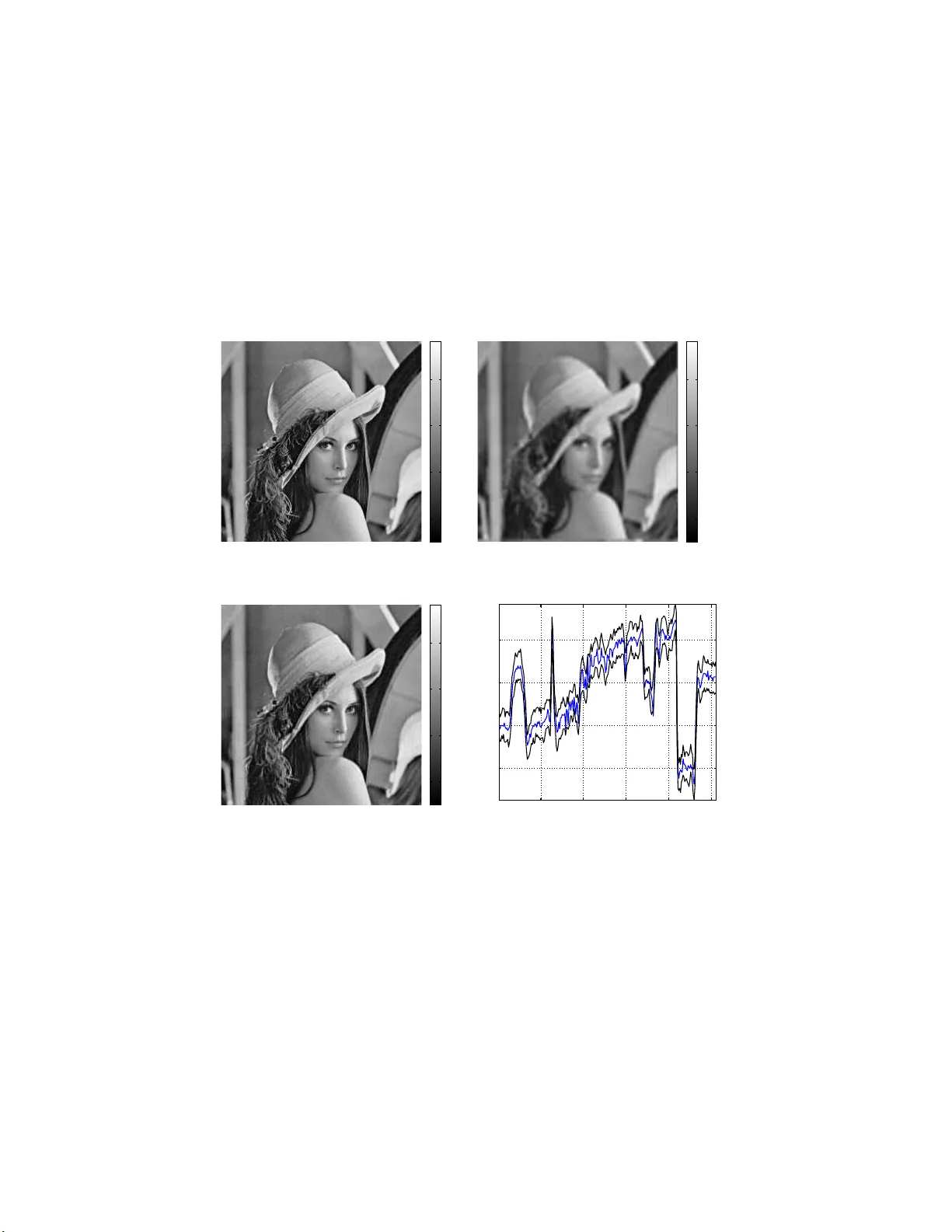

Efficien t sampling of hig h-dimensional Gaussian fields: the non-stat ionary / non-sparse case F. Orieux ∗ , O. Féron and J.-F. Gio v annelli ∗ June 8, 2018 Abstract This pap er is dev oted to the problem of sampling Gaussian fields in high dimension. Solutions exist for tw o sp ecific str uctur es of in verse cov ariance : sparse a nd circula nt. The prop os ed approach is v alid in a more g eneral cas e and es pec ia lly as it emerg e s in inv ers e problems. It relies o n a pe r turbation-optimization principle: adequate sto ch a stic per turbation of a criterion and optimization of the perturb ed crite- rion. It is shown that the criterion minimizer is a sample of the ta r - get density . The motiv ation in inv erse pr oblems is related to g eneral (non-conv olutive) linear observ ation mo dels and their res olution in a Bay esian framework implemen ted throug h sampling a lgorithms when existing sa mplers a re not feasible. It finds a direc t application in m y- opic and/or unsup ervised inv er s ion as well as in s ome non-Ga ussian in version. An illustration fo cused o n hyperpara meter estimation for supe r -resolution problems assesse s the effectiveness of the prop osed approach. 1 In tro duction This w ork deals with s im ulation of high-dim ensional Gaussian and condi- tional Gaussian fields. The problem difficult y is directly related to handling high-dimension al cov ariances R and precision matrices Q = R − 1 . Inv ersion and factorization of these matrices can b e very costly in terms of time and ∗ F. O rieux is with Pas teur Institute, 25 rue du Dr Roux, 75015 Paris , F rance, orieux@pas teur.fr . O. Féron is w ith EDF Researc h & Devel opments, Dpt OSIRIS, 92140 Clamart, F rance, olivier-2.feron@ edf.fr . J.-F. Giov annelli is with th e Lab oratoire de l’Intég ration du Matériau au Système, 33405 T alence, F rance, Giova@IMS-Bord eaux.fr . 1 memory , if not imp ossible. General to ols [1, 2] pro vide pixel-b y-pixel sequen- tial Gibbs or Hastings-Metrop olis algorithms but they are n ot practicable in high dimension. This problem is old and solutions exist in tw o cases. • When Q is sparse, t w o strategies ha ve b een prop osed. The first one [3, c hap. 8], relies on a parallel Gibbs sampler based on a c hessb oard- lik e decomp osition. It takes adv an tage of the sparsity of Q to allo w large blocks of v ariables to b e sim ultaneously up dated. The second strategy [4, 5 ] relies on a Cholesky decomposition Q = L t L : a sample x can b e obtained b y solving the linear system Lx = ε , where ε is a zero-mean white Gaussian vector. The sparsit y of L ensures feasible n umerical resolution of the linear system. • In [6, 7] the authors p oin ted out an efficien t solution for the case of circulan t matrix Q , even non-sparse. In this case, the co v ariance is diagonal in the F ourier domain: the s ampling is based on inde p enden t sampling of the F ourier co efficien ts. Finally , the sampling is efficien tly computed b y FFT and it has b een used in [8–10]. T o our kno wledge there is no solution for sampling more general high-dim ensional Gaussian fields. In this pap er w e prop ose an efficien t algorithm for a more general case where Q is non-sparse, non-circulan t and very large. The pro- p osed approac h is applicable to any precision matrix of the form Q = K X k =1 M t k R − 1 k M k (1) for whic h, to the b est of our knowled ge, no practical s olution exists. A recen t pap er [11] briefly describ es a similar algorithm for a compress sens- ing problem in signal pro cessing. Our pap er deep ens and generalizes this con tribution. The problem of sampling such fields is c ommonly encoun tered in Ba yesian approac hes for in v erse problems and esp ecially in high dimension lik e in image reconstructi on. Indeed, let us consider the general linear forwa rd mo del y = H x + n , (2) where y , n and x denote the observ ations, the noise and the unknow n image and H is a linear op erator. Consider, again, tw o prior densities f or n and x that are Gaussian conditionally to a set of parameters θ and fo cus on the join t estimation of x and θ from the p osterior densit y p ( x , θ | y ) . This framew ork is ver y general and can b e used in man y applications. I n image 2 reconstruction, it co vers a ma jorit y of curren t problems such as unsup ervised [9] or m yopic [10] inv ersion, since acquisition (or instrumen t) parameters and h yp erparameters can b e included in θ . The framew ork also co vers some non- Gaussian priors in volving auxiliary/hidden v ariables [8, 9, 12–14] (lo cation mixture or scale mixture of G auss ian), b y including these v ariables in θ . The join t estimation of x and θ from the p osterior densit y p ( x , θ | y ) commonly requires the handling of the p osterior conditional probabilit y p ( x | θ , y ) . Under the ab ov e assumption, this densit y is Gaussian with pre- cision matrix Q of the form (1), as sho wn in section 2.2. The capabilit y to sample from this density mak es it p ossible to prop ose, for instance, sto ch as - tic optimization [12] or Gibbs sampler [10, 13]. In the general case of inv erse problems, Q is neither s parse nor circulan t so existing sampling method s fail whereas the prop osed s ampling method is effectiv e. Subsequen tly , section 2 presents the prop osed algorithm an d its direct application to general in verse problems. Section 3 illustrates the algorithm through an academic inv erse problem in sup er-resolution imaging. Section 4 concludes and presen ts some p ersp ectiv es. 2 P erturbation-optimizati on algo rithm 2.1 Description Here we fo cus on the problem of sampling f rom a target Gaussia n densit y N (0 , Q − 1 ) where Q is in the f orm (1). When Q is neither sparse nor cir- culan t, existing metho ds fail in high dimension and w e prop ose an efficien t solution based on the Per turbation-Optimization (PO) algorithm describ ed b y Algorithm 1 and Prop osition 1. Algorithm 1 : P erturbation-Optimi zation algorithm. 1: Step P (P ertur bation) : Generate independent Gaussian v ariables η k , k = 1 , . . . , K follo wing η k ∼ N (0 , R k ) , ∀ k = 1 , . . . K (3) 2: Step O (Optimization) : Compute b x as the minimize r of the criterio n J ( x | η 1 , . . . , η K ) = K X k ( η k − M k x ) t R − 1 k ( η k − M k x ) (4) 3 Prop osition 1 The minimiz er b x of criterion (4) r esulting fr om A lgorithm 1 is Gaussian b x ∼ N (0 , Q − 1 ) . (5) Pro of – The minimizer b x of criterion (4) has an analytical expression: b x = " K X k =1 M t k R − 1 k M k # − 1 K X k =1 M t k R − 1 k η k ! = Q − 1 K X k =1 M t k R − 1 k η k ! . (6) It is clearly a zero-mean G auss ian vector as a linear com bination of K zero- mean Gaussian vecto rs . The co v ariance is calculated b elow using elemen tary algebra: from (3) and (6), w e hav e V [ b x ] = Q − 1 h K X k ,k ′ =1 M t k R − 1 k E η k η t k ′ R − 1 k ′ M k ′ i Q − 1 = Q − 1 h K X k =1 M t k R − 1 k E η k η t k R − 1 k M k i Q − 1 = Q − 1 h K X k =1 M t k R − 1 k M k i Q − 1 = Q − 1 that completes the pro of. The criterion J ( x | η 1 , . . . , η K ) b eing quadratic, w e ha ve access to the whole av ailable literature on efficien t n umerical optimization to ols, e.g. iter- ativ e techni q ues such as gradien t based ones (standard, corrected, conjugate, optimal s tep size. . . ). W e ha v e to highligh t that in theory the s ample of the target densit y is the exact optim um of the p erturb ed criter ion. T herefore the optimization step may require as m uch descen t steps as the dimension of the problem. Ho w ever, the optimization pro cedure can b e s topp ed more rapidly without practical loss of efficiency . Ob viously , the efficiency of the algorithm dep ends on the capabilit y to easily sample from Gaussian densities N (0 , R k ) . This will b e actually the case in in verse problem applications as s ho wn in section 2.2 . Moreo v er, we can actually extend Propos ition 1 and Algorithm 1 when the mean of the target Gaussian density is not zero, b y prop osing A lgorithm 2 ab o ve and Corrolary 1 b elow. 4 Algorithm 2 : P erturbation-Optimi zation algorithm. 1: Step P (P ertur bation) : Generate independent Gaussian v ariables ζ k , k = 1 , . . . , K follo wing ζ k ∼ N ( m k , R k ) , ∀ k = 1 , . . . K (7) 2: Step O (Optimization) : Compute e x as the minimize r of the criterio n J ( x | ζ 1 , . . . , ζ K ) = K X k =1 ( ζ k − M k x ) t R − 1 k ( ζ k − M k x ) Corrolary 1 The solution e x r esulting fr om Alg ori thm 2 is Gaussian e x ∼ N Q − 1 K X k =1 M t k R − 1 k m k ! , Q − 1 ! . (8) Pro of – Consider η k = ζ k − m k , k = 1 , . . . , K , and the minimizer b x of the criterion (4). Hence it is trivial to s ho w that e x = b x + Q − 1 P K k =1 M t k R − 1 k m k . Using the results of Prop osition 1 on b x , w e can sho w E [ e x ] = Q − 1 K X k =1 M t k R − 1 k m k ! V [ e x ] = V [ b x ] = Q − 1 and that completes the pro of . 2.2 Application to in verse problems The purpose is to solv e an inv erse problem, stated b y the forward mo del (2), in a Ba yesian framew ork based on the follow ing mo dels: • H describ es an observ ation s ystem that can dep end on unkno wn ac- quisition parameters, • prior densities f or the observ ation noise and for the ob ject are Gaus- sian n ∼ N ( m n , R n ) and x ∼ N ( m x , R x ) , conditionally on a set of auxiliary v ariables. 5 In a general s tatemen t, θ collec ts acquisition parameters, hyperparameters and auxiliary v ariables. This framew ork co vers m y opic (semi-blind) and unsup ervised inv ersion, non-stationary or inhomogeneous Gaussian priors and non-Gaussian priors inv olving auxiliary v ariables. The general in ve rs ion problem then consists in estimating x and θ through the densit y p ( x , θ | y ) . The p osterior mean can b e appro ximated using a Gibbs sampler. It is an iterativ e algorithm whic h alternately samples from p ( θ | x , y ) and p ( x | θ , y ) . The conditional p osterior p ( x | y , θ ) is a correlated Gaussian field: x ∼ N ( m post x , R post x ) with R post x = H t R − 1 n H + R − 1 x − 1 m post x = R post x H t R − 1 n [ y − m n ] + R − 1 x m x where θ is em b edded in H , R n and R x for simpler notations. If H has no particular prop erties then the precision matrix Q = ( R post x ) − 1 is neither sparse nor circulan t, and existing sampling metho ds are not ap- plicable. The P erturbation-Optimiza tion algorit hm mak es it p ossible to ef- ficien tly s ample from N ( m post x , R post x ) . In particular, applying Algorithm 2 with K = 2 , M 1 = H , M 2 = I , R 1 = R n , R 2 = R x , m 1 = m n and m 2 = m x , directly gives a sample from this densit y . Then, this algorithm ensures that correct p osterior mean and co v ariance are obtained, at the s ame time. This increases the usefulness of this method for in verse problems. 3 Illustration The prop osed PO algorithm is an effectiv e to ol for high dimensional in- v erse problems, e.g. image reconstruction. In this context, it op ens up the p ossibilit y to resort to s to c hastic sampling algorithms (MCMC, Gibbs, Metrop olis-Hastings,. . . ) pro viding t w o main adv antage s : • the capabili ty to join tly estimate sev eral unkno wns when the global mo delization is more natural through conditional distributions (hier- arc hical s tructure), • in addition, the access to the en tire distribution of the unkno wns pro- viding uncertain ties (s tandard deviations, confidence in terv als,. . . ). 3.1 T w o examples: electr omagnetics and fluorescen t microscop y F or example, the prop osed PO algorithm has b een applied to an electro- magnetic in verse scatterin g problem b y one of the authors [13]. In a do- main in tegral represen tation, the forward mo del expresses observed data as 6 a bi-linear f unction of unknow n ob ject and unkno wn induced curren t. The bi-linear structure leads to a mo delization with conditional Gaussians: the prior for the induced curren t is Gaussian giv en the ob ject, and the prior for the ob ject is Gauss ian given the induced curren t. The join t estimation of the ob ject and the curren t is tac kled in a Ba yesian f ramew ork and compute d b y means of a Gibbs sampler in which the sampling of the current is made p ossible thanks to the prop os ed PO algorithm. In [15] it has b een applied b y another one of the authors to pro cess data in biology imaging to ac hiev e sup er-resolution in fluorescen t microscop y trough Structured Illumination. The problem is also tackled in a Bay esian framew ork and implemen ted b y means of a Gibbs sampler. The density for the ob ject given the other v ariables is Gaussian with non-in v arian t co v ariance (due to non-in v ariant illumination of the biological s ample) making the use of existing tec hniques imp ossible. Again, the prop osed PO alg orithm ov ercomes this difficul ty and results in the capabilit y to estimate h yp erparameters and acquisition parameters, while also pro v iding uncertain ties. 3.2 Unsupervised sup er-resolution In the followi ng, we detail an application of the prop osed PO algorithm to the sup er-resolution (SR) academic problem: s ev eral blurred and do wn-sampled (lo w resolution) images of a scene are av ailable in order to retriev e the original (high resolution) scene [16, 17]. It is shown that the crucial no velt y , enabled b y the prop osed PO algorithm, is to allo w the use of sampling algorithms in SR metho ds and to provide joint image and h yp erparam eters estimation including uncertain ties. The usual forw ard mo del writes y = H x + n = P C x + n , where y ∈ R M collects the lo w resolution images (5 images of 128 × 128 pixels), x ∈ R N is the original image ( 256 × 256 pixels), n is the noise, C and P are circulan t con v olution and decimation matrices. The prior densit y for n is N ( 0 , γ − 1 n I ) and the one for x is N ( 0 , γ − 1 x D t D ) where D is the Laplacian op erator. The h yp erparameters γ n and γ x are unknown and their prior la w are Jeffreys’. The p osterior density is then p ( x , γ n , γ x | y ) ∝ γ M / 2 − 1 n γ ( N − 1) / 2 − 1 x exp h − γ n 2 k y − P C x k 2 − γ x 2 k D x k 2 i . (9) It is explored b y a Gibbs sampler: iterativ ely sampling γ n , γ x and x under 7 0 50 100 150 200 0 2 4 6 8 10 (a) γ n c h ain 7 7.5 8 8.5 9 0 0.05 0.1 0.15 0.2 (b) γ n histogram 0 50 100 150 200 0 0.2 0.4 0.6 0.8 1 (c) γ x c h ain 1.5 1.6 1.7 1.8 1.9 x 10 −3 0 0.05 0.1 0.15 0.2 (d) γ x histogram Figure 1: Chains and histograms of hyperparameters γ n and γ x . 8 50 100 150 200 (a) T rue 50 100 150 200 (b) Data 50 100 150 200 (c) Estimate 50 100 150 200 250 50 100 150 200 (d) Uncertaint y Figure 2: Image reconstruction: true image 2(a), one of the l o w resolution images 2(b) and the prop osed estimate 2(c). The plot 2(d) is a true image slice inside the 99% confidence in terv al around the estimate. 9 their resp ectiv e conditional probabilities p ( γ ( k ) n | x , γ x , y ) = G 1 + M / 2 , 2 / y − P C x ( k − 1) 2 p ( γ ( k ) x | x , γ n , y ) = G 1 + ( N − 1) / 2 , 2 / D x ( k − 1) 2 p ( x ( k ) | γ x , γ n , y ) = N ( m post x , R post x ) with R post x = γ ( k ) n C t P t P C + γ ( k ) x D t D − 1 m post x = γ ( k ) n R post x P t C t y . The conditional p osteriors for the hyperparameters are Gamma la ws and consequen tly , easy to sample. The conditional posterior f or x is Gaussian, but exis ting sampling ap- proac hes are not op erational due to the structure of the co v ariance R post x , as explained in Section 2.2 with H = P C : H is non-circulan t due to the decimation and H is not s parse esp ecially in the case of large supp ort. In this case, the PO algorithm 2 directly pro vides a desired sample (with b oth correct mean and correct cov ariance). It is important to k eep in mind that the prop osed PO algorithm does not impro v e image qualit y itself (w.r.t. other SR metho ds) but the crucial no ve lty is to allo w for h yp erparameter estimation. In this sense, Fig. 1 shows the h yp erparam eter iterates (that illustrate the op eration and con vergen ce) and histograms (that appro ximate marginal p osteriors) ; the p osterior means are b γ n ≈ 8 and b γ x ≈ 2 × 10 − 3 . Concerning the images themselv es, results are sho wn in Fig. 2: estimated image in 2(c) clearly sho ws a better reso- lution than data in Fig. 2(b) and it is visually close to the original image of 2(a). It is then clear that the approac h produces correct h yp erparam e- ters i.e. correct balance b etw een data and prior. Moreo ver, uncertain ties are deriv ed f rom the s amples through the p osterior standard deviation. It is illustrated in Fig. 2(d) whic h s ho ws that the true image is inside the 99% confidence in terv al around the estimate. As a conclusion, the prop osed PO algorithm mak es it p ossible to includ e sampling algorithms in SR met ho d whereas it w as not p ossible before. It enabl es to provide joi n t image and h yp erparameters estimation as w ell as uncertain ties computations. 10 4 Conclusion This pap er presen ts a no vel approac h for sampling high-dimensional Gaus- sian fields when usual approac hes are ineffectiv e. A sample of the target densit y is pro duced as the minimizer of a precisely designed quadratic crite- rion. It relies on a p erturbation-optimiz ation principle: adequate sto c hastic p erturbation of a criterion and optimization of the p erturb ed criterion. It is sho wn that the criterion minimizer is a sample of the target densit y . The approac h is applicable as so on as a particular f actorization of the precision matrix is a v ailable, and it is usually the case in in verse problems. There is a wide class of applications, in particular an y data pro ces sing problem based on a linear forw ard mo del and conditional Gaussian prior for noise and ob ject. The effectiv eness of the prop osed algorithm has b een illustrated in [13, 15] and in this paper on a more acade mic sup er-resolution imag ing problem allo wing automatic tuning of hyperparameters. 5 A c kno wle dgmen t The authors wou ld lik e to thank Jérôme I dier (IRCyN) for inspiration of this w ork [18], Thomas R odet and Ali M ohammad–Djaf ari (L2S), for f ruitful discussions, and Cornelia V a car (IMS) for carefully reading the pap er. References [1] C. P . Rob ert and G. Casella, Monte-Carlo Statisti c al Metho ds , s er. Springer T exts in Statistics. New Y ork, ny : Springer, 2000. [2] W . R. G ilks , S. Richard s on, and D. J. Spiegelhalter, Markov Chain Monte Carlo in pr actic e . Bo ca R aton: Chapman & Hall/CR C, 1996. [3] G. Winkler, Im age Analysis, R andom Fields and Markov Chain Monte Carlo Metho ds . Springer V erlag, BerlinGerman y , 2003. [4] H . Rue, “F ast sampling of Gaussian Mark ov random fields,” J. R. Statis t. So c B , v ol. 63, no. 2, 2001. [5] P . Lalanne, D. Prév ost, and P . Cha vel, “ Sto chastic artificial retinas: algorithm, opto electronic circuits, and implemen tation,” Appl. Opt , v ol. 40, 2001. 11 [6] R . Chellappa and S. Chatterjee, “Classification of textures using Gaus- sian Mark ov random fields,” IEEE T r ans. Ac oust. Sp e e ch, Signal Pr o- c essing , v ol. ASSP-33, pp. 959–963, August 1985. [7] R . Chellappa and A. Jain, Markov R andom Fields: The ory and Appli- c ation . Acade mic Press Inc, 1992. [8] D. G eman and C. Y ang, “Nonlinear image reco v ery with half-quadratic regularizatio n,” IEEE Tr ans. Im. Pr o c. , vo l. 4, no. 7, July 1995. [9] J.-F. Gio v annelli, “U ns up ervised Ba yesian con v ex decon volut ion based on a field with an explicit partition function,” IEEE Tr ans. Im. Pr o c. , v ol. 17, no. 1, Jan uary 2008. [10] F. Orieux, J.-F. Gio v annelli, and T. Ro det, “Bay esian es timation of regularizatio n and p oin t spread function parameters for wiener–h unt decon v olution,” J. Opt. So c. A m. A , vo l. 27, no. 7, pp. 1593–1607, 2010. [11] X. T an, J. Li, and P . Stoica, “Efficien t sparse Ba ye s ian lea rning via Gibbs sampling,” in A c oustics S p e e ch and Signal Pr o c essing (ICAS SP), 2010 IEEE International Confer enc e on , M arch 2010, pp. 3634 –3637. [12] S. Geman and D. Geman, “Sto c hastic relaxation, Gibbs distribution, and the Bay esian restoration of images,” IEEE T r ans. Pattern Anal. Mach. Intel l. , v ol. 6, no. 6, 1984. [13] O. Féron, B. Duchêne , and A. Mohammad-Djafari, “Micro wa v e imaging of piecewise constan t ob jects in a 2D-TE configuration,” Intern. J ourn. App. Ele ctr. Me c. , vol. 26, no. 3-4, 2007. [14] H. A y asso and A. Mohammad-Djafari, “Join t NDT image restoration and segmen tation using Gauss-Mark ov-P otts prior mo dels and v aria- tional Ba yesian computation,” IEEE T r ans. Image Pr o c essing , vol. 19, no. 9, pp. 2265–2277, 2010. [15] F. Orieux, E. Sepu lveda, V . Lor iette, B. Dub ertret, and J.-C. Oliv o- Marin, “Ba yesian estimation for optimized structured illumination mi- croscop y ,” IEEE Tr ans. Image Pr o c essing , 2011, in revision. [16] S. C. Pa rk, M. K. P ark, and M. G. Kang, “Sup er-resolution image re- construction: a tec hnical o verview, ” IEEE Sig. Pr o c. M ag. , May 2003. 12 [17] G. Ro c hefort, F. Champagnat, G. L. Besnerais, and J.-F. G io v annelli, “An impro v ed observ ation mo del for sup er-resolution under affine mo- tion,” IEEE Tr ans. Image Pr o c essi ng , vol. 15, no. 11, pp. 3325–3337, No vem b er 2006. [18] J. Idier, informal discussion, 2009. 13

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment