Particle Filtering for Large Dimensional State Spaces with Multimodal Observation Likelihoods

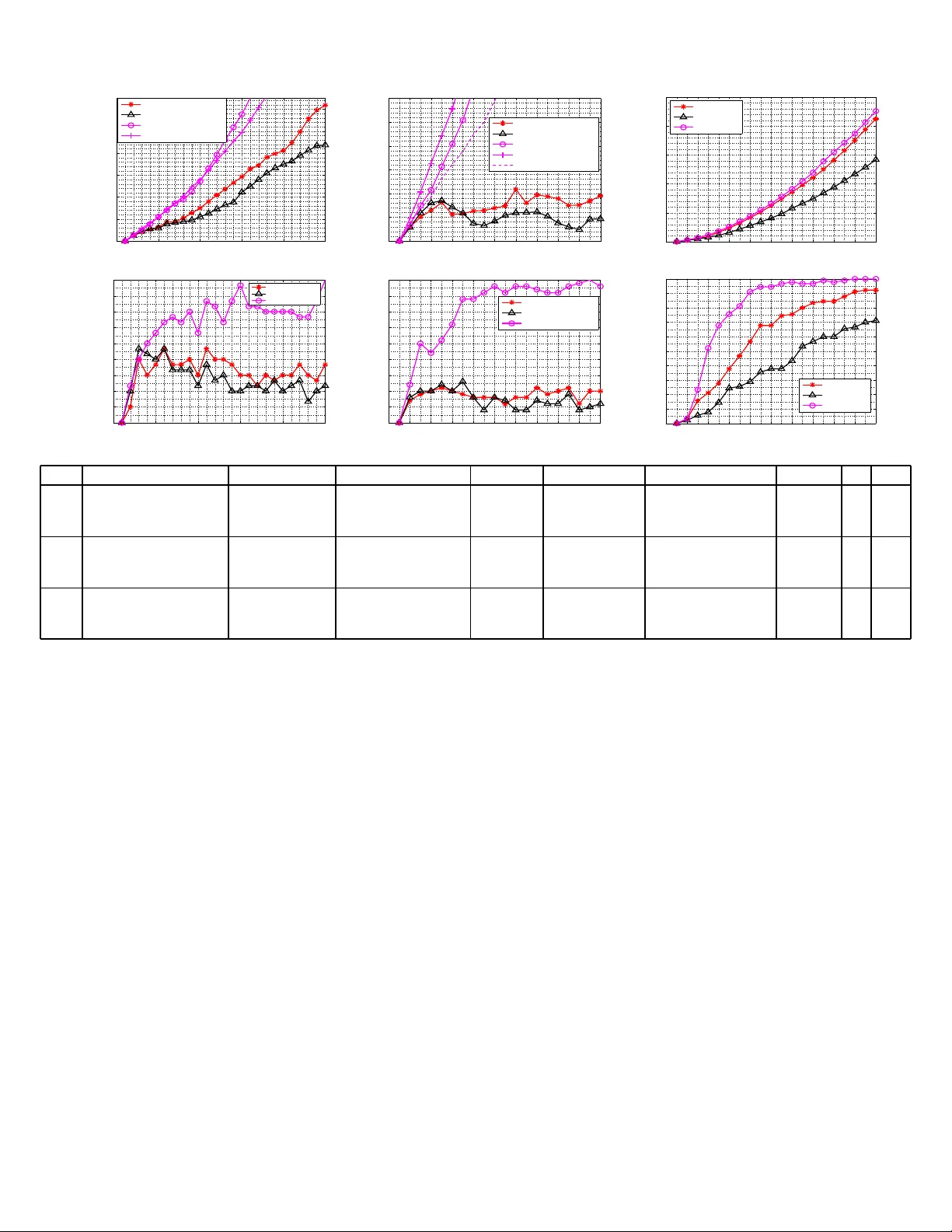

We study efficient importance sampling techniques for particle filtering (PF) when either (a) the observation likelihood (OL) is frequently multimodal or heavy-tailed, or (b) the state space dimension is large, or both. When the OL is multimodal, but …

Authors: **Namrata Vaswani**