Efficient First Order Methods for Linear Composite Regularizers

A wide class of regularization problems in machine learning and statistics employ a regularization term which is obtained by composing a simple convex function \omega with a linear transformation. This setting includes Group Lasso methods, the Fused …

Authors: Andreas Argyriou, Charles A. Micchelli, Massimiliano Pontil

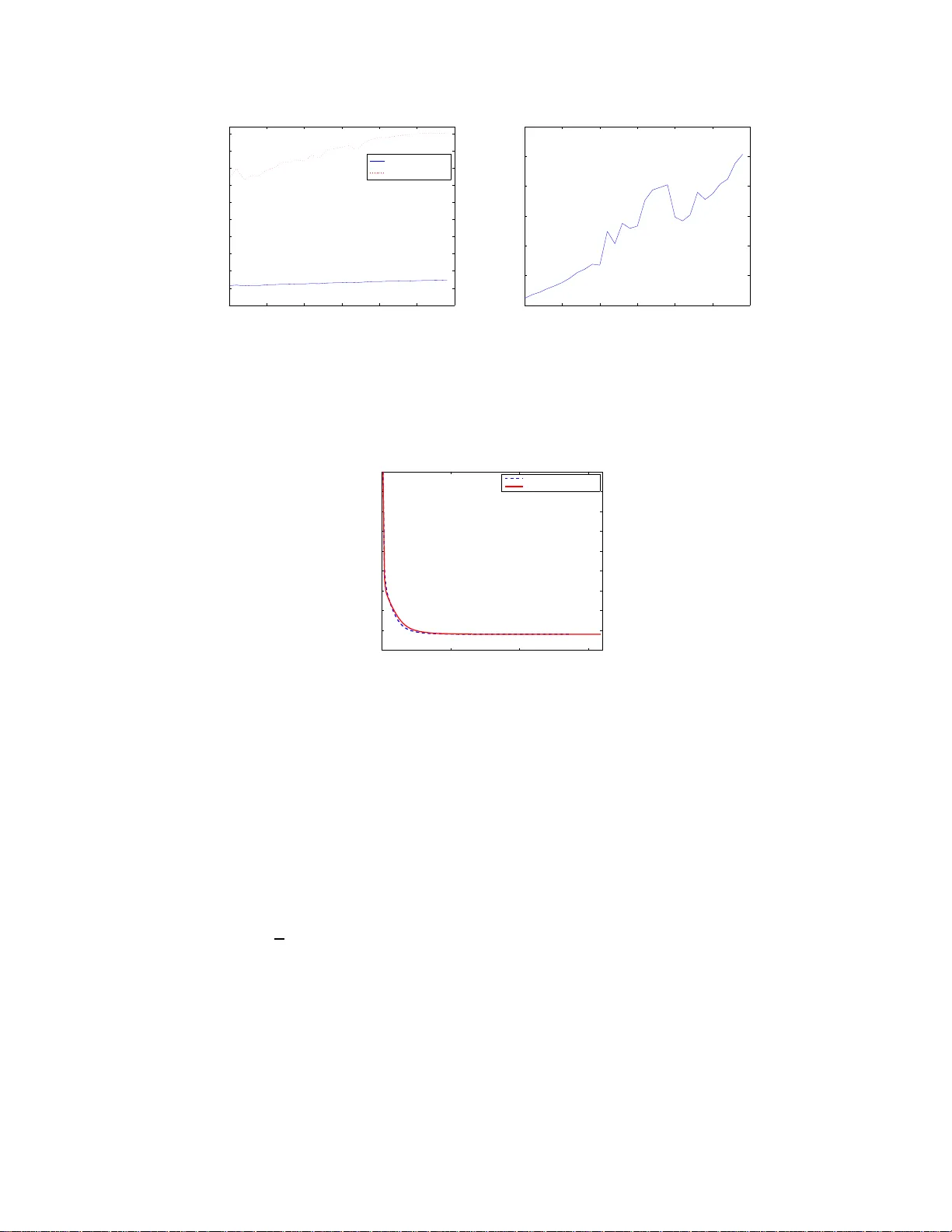

Andreas Argyriou
Toyota Technological Institute at Chicago, University of Chicago
6045 S. Kenwood Ave., Chicago, Illinois 60637, USA

Charles A. Micchelli∗
Department of Mathematics, City University of Hong Kong
83 Tat Chee Avenue, Kowloon Tong, Hong Kong

Massimiliano Pontil
Department of Computer Science, University College London
Malet Place, London WC1E 6BT, UK

Lixin Shen
Department of Mathematics, Syracuse University
215 Carnegie Hall, Syracuse, NY 13244-1150, USA

Yuesheng Xu
Department of Mathematics, Syracuse University
215 Carnegie Hall, Syracuse, NY 13244-1150, USA

∗ Also with Department of Mathematics and Statistics, University at Albany, Earth Science 110, Albany, NY 12222, USA.

October 26, 2018

Abstract

A wide class of regularization problems in machine learning and statistics employ a regularization term which is obtained by composing a simple convex function $\omega$ with a linear transformation. This setting includes Group Lasso methods, the Fused Lasso and other total variation methods, multi-task learning methods and many more. In this paper, we present a general approach for computing the proximity operator of this class of regularizers, under the assumption that the proximity operator of the function $\omega$ is known in advance. Our approach builds on a recent line of research on optimal first order optimization methods and uses fixed point iterations for numerically computing the proximity operator. It is more general than current approaches and, as we show with numerical simulations, computationally more efficient than available first order methods which do not achieve the optimal rate. In particular, our method outperforms state of the art $O(1/T)$ methods for overlapping Group Lasso and matches optimal $O(1/T^2)$ methods for the Fused Lasso and tree structured Group Lasso.

1 Introduction

In this paper, we study supervised learning methods which are based on the optimization problem

$$\min_{x \in \mathbb{R}^d} f(x) + g(x) \tag{1.1}$$

where the function $f$ measures the fit of a vector $x$ to available training data and $g$ is a penalty term or regularizer which encourages certain types of solutions. More precisely, we let $f(x) = E(y, Ax)$, where $E : \mathbb{R}^s \times \mathbb{R}^s \to [0, \infty)$ is an error function, $y \in \mathbb{R}^s$ is a vector of measurements and $A \in \mathbb{R}^{s \times d}$ a matrix whose rows are the input vectors. This class of regularization methods arises in machine learning, signal processing and statistics and has a wide range of applications.

Different choices of the error function and the penalty function correspond to specific methods. In this paper, we are interested in solving problem (1.1) when $f$ is a strongly smooth convex function (such as the square error $E(y, Ax) = \|y - Ax\|_2^2$) and the penalty function $g$ is obtained as the composition of a "simple" function with a linear transformation $B$, that is,

$$g(x) = \omega(Bx) \tag{1.2}$$

where $B$ is a prescribed $m \times d$ matrix and $\omega$ is a nondifferentiable convex function on $\mathbb{R}^m$. The class of regularizers (1.2) includes a plethora of methods, depending on the choice of the function $\omega$ and of the matrix $B$. Our motivation for studying this class of penalty functions arises from sparsity-inducing regularization methods which consider $\omega$ to be either the $\ell_1$ norm or a mixed $\ell_1$-$\ell_p$ norm. When $B$ is the identity matrix and $p = 2$, the latter case corresponds to the well-known Group Lasso method [36], for which well studied optimization techniques are available. Other choices of the matrix $B$ give rise to different kinds of Group Lasso with overlapping groups [12, 38], which have proved to be effective in modeling structured sparse regression problems. Further examples can be obtained by considering composition with the $\ell_1$ norm (this includes, for example, the Fused Lasso penalty function [32] and other total variation methods [21]) as well as composition with orthogonally invariant norms, which are relevant, for example, in the context of multi-task learning [2].

A common approach to solving many optimization problems of the general form (1.1) is via proximal methods. These are first-order iterative methods, whose computational cost per iteration is comparable to gradient descent. In some problems in which $g$ has a simple enough form, they can be combined with acceleration techniques [3, 26, 28, 33, 34] to yield significant gains in the number of iterations required to reach a certain approximation accuracy of the minimal value. The essential step of proximal methods requires the computation of the proximity operator of the function $g$ (see Definition 2.1 below). In certain cases of practical importance, this operator admits a closed form, which makes proximal methods appealing to use. However, in the general case (1.2) the proximity operator may not be easily computable. We are aware of techniques to compute this operator for only some specific choices of the function $\omega$ and the matrix $B$. Most related to our work are recent papers for Group Lasso with overlap [17] and Fused Lasso [19]. See also [1, 3, 14, 20, 24] for other optimization methods for structured sparsity.

The main contribution of this paper is a general technique to compute the proximity operator of the composite regularizer (1.2) from the solution of a certain fixed point problem, which depends on the proximity operator of the function $\omega$ and the matrix $B$. This fixed point problem can be solved by a simple and efficient iterative scheme when the proximity operator of $\omega$ has a closed form or can be computed in a finite number of steps.
When $f$ is a strongly smooth function, the above result can be used together with Nesterov's accelerated method [26, 28] to provide an efficient first-order method for solving the optimization problem (1.1). Thus, our technique allows for the application of proximal methods on a much wider class of optimization problems than is currently possible. Our technique is both more general than current approaches and also, as we argue with numerical simulations, computationally efficient. In particular, we will demonstrate that our method outperforms state of the art $O(1/T)$ methods for overlapping Group Lasso and matches optimal $O(1/T^2)$ methods for the Fused Lasso and tree structured Group Lasso.

The paper is organized as follows. In Section 2, we review the notion of proximity operator and useful facts from fixed point theory. In Section 3, we discuss some examples of composite functions of the form (1.2) which are valuable in applications. In Section 4, we present our technique to compute the proximity operator for a composite regularizer of the form (1.2) and then an algorithm to solve the associated optimization problem (1.1). In Section 5, we report our numerical experience with this method.

2 Background

We denote by $\langle \cdot, \cdot \rangle$ the Euclidean inner product on $\mathbb{R}^d$ and let $\|\cdot\|_2$ be the induced norm. If $v : \mathbb{R} \to \mathbb{R}$, for every $x \in \mathbb{R}^d$ we denote by $v(x)$ the vector $(v(x_i) : i \in \mathbb{N}_d)$, where, for every integer $d$, we use $\mathbb{N}_d$ as a shorthand for the set $\{1, \dots, d\}$. For every $p \geq 1$, we define the $\ell_p$ norm of $x$ as $\|x\|_p = \left( \sum_{i \in \mathbb{N}_d} |x_i|^p \right)^{1/p}$.

The proximity operator on a Hilbert space was introduced by Moreau in [22, 23].

Definition 2.1. Let $\omega$ be a real valued convex function on $\mathbb{R}^d$. The proximity operator of $\omega$ is defined, for every $x \in \mathbb{R}^d$, by

$$\operatorname{prox}_\omega(x) := \operatorname*{argmin}_{y \in \mathbb{R}^d} \left\{ \frac{1}{2} \|y - x\|_2^2 + \omega(y) \right\}. \tag{2.1}$$

The proximity operator is well defined, because the above minimum exists and is unique.

Recall that the subdifferential of a convex function $\omega$ at $x$ is defined as

$$\partial \omega(x) = \{ u : u \in \mathbb{R}^d, \ \langle y - x, u \rangle + \omega(x) \leq \omega(y), \ y \in \mathbb{R}^d \}.$$

The subdifferential is a nonempty compact and convex set. Moreover, if $\omega$ is differentiable at $x$ then its subdifferential at $x$ consists only of the gradient of $\omega$ at $x$. The next proposition establishes a relationship between the proximity operator and the subdifferential of $\omega$; see, for example, [21, Prop. 2.6] for a proof.

Proposition 2.1. If $\omega$ is a convex function on $\mathbb{R}^d$ and $y \in \mathbb{R}^d$ then $x \in \partial \omega(y)$ if and only if $y = \operatorname{prox}_\omega(x + y)$.

We proceed to discuss some examples of functions $\omega$ and the corresponding proximity operators. If $\omega(x) = \lambda \|x\|_p^p$, where $\lambda$ is a positive parameter, we have that

$$\operatorname{prox}_\omega(x) = h^{-1}(|x|) \operatorname{sign}(x) \tag{2.2}$$

where the function $h : [0, \infty) \to [0, \infty)$ is defined, for every $t \geq 0$, as $h(t) = \lambda p t^{p-1} + t$. This fact follows immediately from the optimality condition of the optimization problem (2.1). Using the above equation, we may also compute the proximity map of a multiple of the $\ell_p$ norm, namely the case that $\omega = \gamma \|\cdot\|_p$, where $\gamma > 0$. Indeed, for every $x \in \mathbb{R}^d$, there exists a value of $\lambda$, depending only on $\gamma$ and $x$, such that the solution of the optimization problem (2.1) for $\omega = \gamma \|\cdot\|_p$ equals the solution of the same problem for $\omega = \lambda \|\cdot\|_p^p$. Hence the proximity map of the $\ell_p$ norm can be computed by (2.2) together with a simple line search.

The cases that $p \in \{1, 2\}$ are simpler, see e.g. [7]. For $p = 1$ we obtain the well-known soft-thresholding operator, namely

$$\operatorname{prox}_{\lambda \|\cdot\|_1}(x) = (|x| - \lambda)_+ \operatorname{sign}(x), \tag{2.3}$$

where, for every $t \in \mathbb{R}$, we define $(t)_+ = t$ if $t \geq 0$ and zero otherwise; when $p = 2$ we have that

$$\operatorname{prox}_{\lambda \|\cdot\|_2}(x) = \begin{cases} (\|x\|_2 - \lambda)_+ \dfrac{x}{\|x\|_2} & \text{if } x \neq 0 \\ 0 & \text{if } x = 0. \end{cases} \tag{2.4}$$

In our last example, we consider the $\ell_\infty$ norm, which is defined, for every $x \in \mathbb{R}^d$, as $\|x\|_\infty = \max\{ |x_i| : i \in \mathbb{N}_d \}$. We have that

$$\operatorname{prox}_{\lambda \|\cdot\|_\infty}(x) = \min\left\{ |x|, \ \frac{1}{k} \left( \sum_{|x_i| > s_k} |x_i| - \lambda \right) \right\} \operatorname{sign}(x)$$

where $s_k$ is the $k$-th largest value of the components of the vector $|x|$ and $k$ is the largest integer such that $\sum_{|x_i| > s_k} (|x_i| - s_k) < \lambda$. For a proof of the above formula, see, for example, [9, Sec. 5.4].

Finally, we recall some basic facts about fixed point theory which are useful for our study. For more information on the material presented here, we refer the reader to [37].

A mapping $\varphi : \mathbb{R}^d \to \mathbb{R}^d$ is called strictly nonexpansive (or contractive) if there exists $\beta \in [0, 1)$ such that, for every $x, y \in \mathbb{R}^d$, $\|\varphi(x) - \varphi(y)\|_2 \leq \beta \|x - y\|_2$. If the above inequality holds for $\beta = 1$, the mapping is called nonexpansive. As noted in [7, Lemma 2.4], both $\operatorname{prox}_\omega$ and $I - \operatorname{prox}_\omega$ are nonexpansive.

We say that $x$ is a fixed point of a mapping $\varphi$ if $x = \varphi(x)$. The Picard iterates $x_n$, $n \in \mathbb{N}$, starting at $x_0 \in \mathbb{R}^d$, are defined by the recursive equation $x_n = \varphi(x_{n-1})$. It is a well-known fact that, if $\varphi$ is strictly nonexpansive, then $\varphi$ has a unique fixed point $x$ and $\lim_{n \to \infty} x_n = x$. However, this result may fail if $\varphi$ is merely nonexpansive. We end this section by stating the main tool which we use to find a fixed point of a nonexpansive mapping $\varphi$.

Theorem 2.1. (Opial $\kappa$-average theorem [30]) Let $\varphi : \mathbb{R}^d \to \mathbb{R}^d$ be a nonexpansive mapping which has at least one fixed point, and let $\varphi_\kappa := \kappa I + (1 - \kappa) \varphi$. Then, for every $\kappa \in (0, 1)$, the Picard iterates of $\varphi_\kappa$ converge to a fixed point of $\varphi$.

3 Examples of Composite Functions

In this section, we show that several examples of penalty functions which have appeared in the literature fall within the class of linear composite functions (1.2).
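
The examples in this section are built from a few closed-form proximity operators recalled in Section 2, in particular (2.3) and (2.4). As a reference point, these two operators can be sketched in a few lines of Python. This is a minimal illustration and not the authors' Matlab implementation; the function names `prox_l1` and `prox_l2` are hypothetical.

```python
import math

def prox_l1(x, lam):
    """Soft-thresholding (2.3): componentwise (|x_i| - lam)_+ * sign(x_i)."""
    return [math.copysign(max(abs(xi) - lam, 0.0), xi) for xi in x]

def prox_l2(x, lam):
    """Prox of lam * ||.||_2, eq. (2.4): shrink the whole vector toward zero."""
    norm = math.sqrt(sum(xi * xi for xi in x))
    if norm == 0.0:
        return [0.0 for _ in x]
    scale = max(norm - lam, 0.0) / norm
    return [scale * xi for xi in x]
```

Note that `prox_l1` zeroes individual components, whereas `prox_l2` either zeroes the whole vector (when its norm is at most `lam`) or rescales it, which is the mechanism behind group sparsity.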
We define, for every $d \in \mathbb{N}$, $x \in \mathbb{R}^d$ and $J \subseteq \mathbb{N}_d$, the restriction of the vector $x$ to the index set $J$ as $x|_J = (x_i : i \in J)$. Our first example considers the Group Lasso penalty function, which is defined as

$$\omega_{GL}(x) = \sum_{\ell \in \mathbb{N}_k} \| x|_{J_\ell} \|_2 \tag{3.1}$$

where $J_\ell$ are prescribed subsets of $\mathbb{N}_d$ (also called the "groups") such that $\cup_{\ell=1}^k J_\ell = \mathbb{N}_d$. The standard Group Lasso penalty (see e.g. [36]) corresponds to the case that the collection of groups $\{ J_\ell : \ell \in \mathbb{N}_k \}$ forms a partition of the index set $\mathbb{N}_d$, that is, the groups do not overlap. In this case, the optimization problem (2.1) for $\omega = \omega_{GL}$ decomposes as the sum of separate problems and the proximity operator is readily obtained by applying the formula (2.4) to each group separately. In many cases of interest, however, the groups overlap and the proximity operator cannot be easily computed.

Note that the function (3.1) is of the form (1.2). We let $d_\ell = |J_\ell|$, $m = \sum_{\ell \in \mathbb{N}_k} d_\ell$ and define, for every $z \in \mathbb{R}^m$, $\omega(z) = \sum_{\ell \in \mathbb{N}_k} \|z_\ell\|_2$, where, for every $\ell \in \mathbb{N}_k$, we let $z_\ell = \left( z_i : \sum_{j \in \mathbb{N}_{\ell-1}} d_j < i \leq \sum_{j \in \mathbb{N}_\ell} d_j \right)$. Moreover, we choose $B = [B_1^\top, \dots, B_k^\top]^\top$, where $B_\ell$ is a $d_\ell \times d$ matrix defined as

$$(B_\ell)_{ij} = \begin{cases} 1 & \text{if } j = J_\ell[i] \\ 0 & \text{otherwise} \end{cases}$$

where, for every $J \subseteq \mathbb{N}_d$ and $i \in \mathbb{N}_{|J|}$, we denote by $J[i]$ the $i$-th largest integer in $J$.

The second example concerns the Fused Lasso [32], which considers the penalty function $x \mapsto g(x) = \sum_{i \in \mathbb{N}_{d-1}} |x_i - x_{i+1}|$. It immediately follows that this function falls into the class (1.2) if we choose $\omega$ to be the $\ell_1$ norm and $B$ the first order divided difference matrix

$$B = \begin{bmatrix} 1 & -1 & 0 & \cdots & \cdots \\ 0 & 1 & -1 & 0 & \cdots \\ \vdots & \ddots & \ddots & \ddots & \vdots \end{bmatrix}. \tag{3.2}$$

The intuition behind the Fused Lasso is that it favors vectors which do not vary much across contiguous components.
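
The two constructions of $B$ above can be made concrete with a small sketch. It is written in plain Python with lists of rows, the helper names `group_matrix`, `difference_matrix` and `matvec` are hypothetical, and group members are listed in ascending order (any fixed ordering within a group yields the same penalty value).

```python
def group_matrix(groups, d):
    """Stack the selection matrices B_l: one row per (group, member) pair."""
    rows = []
    for group in groups:
        for j in sorted(group):          # 1-based feature indices, as in the text
            row = [0] * d
            row[j - 1] = 1
            rows.append(row)
    return rows

def difference_matrix(d):
    """First order difference matrix (3.2): row i encodes x_i - x_{i+1}."""
    return [[1 if j == i else -1 if j == i + 1 else 0 for j in range(d)]
            for i in range(d - 1)]

def matvec(B, x):
    """Plain matrix-vector product B x."""
    return [sum(b * xi for b, xi in zip(row, x)) for row in B]
```

For instance, with the overlapping groups $\{1, 2, 3\}$ and $\{3, 4\}$ in dimension $d = 4$, the product $Bx$ simply copies $x_3$ twice, once per group, so that $\omega(Bx)$ reproduces (3.1); with `difference_matrix(4)` the vector $(1, 1, 3, 3)$ is mapped to $(0, -2, 0)$, whose $\ell_1$ norm is the Fused Lasso penalty.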
Further extensions of this case may be obtained by choosing $B$ to be the incidence matrix of a graph, a setting which is relevant, for example, in online learning over graphs [11]. Other related examples include the anisotropic total variation, see, for example, [21].

The next example considers composition with orthogonally invariant (OI) norms. Specifically, we choose a symmetric gauge function $h$, that is, a norm $h$ which is both absolute and invariant under permutations [35], and define the function $\omega : \mathbb{R}^{d \times n} \to [0, \infty)$ at $X$ by the formula $\omega(X) = h(\sigma(X))$, where $\sigma(X) \in [0, \infty)^r$, $r = \min(d, n)$, is the vector formed by the singular values of the matrix $X$, in non-increasing order. Examples of OI-norms are the Schatten $p$-norms, which correspond to the case that $h$ is the $\ell_p$ norm. The next proposition provides a formula for the proximity operator of an OI-norm.

Proposition 3.1. With the above notation, it holds that

$$\operatorname{prox}_{h \circ \sigma}(X) = U \operatorname{diag}\left( \operatorname{prox}_h(\sigma(X)) \right) V^\top$$

where $X = U \operatorname{diag}(\sigma(X)) V^\top$ and $U$ and $V$ are the matrices formed by the left and right singular vectors of $X$, respectively.

Proof. The proof is based on an inequality by von Neumann [35], sometimes called von Neumann's trace theorem or Ky Fan's inequality. It states that $\langle X, Y \rangle \leq \langle \sigma(X), \sigma(Y) \rangle$, with equality if and only if $X$ and $Y$ share the same ordered system of singular vectors. Note that

$$\|X - Y\|_2^2 = \|X\|_2^2 + \|Y\|_2^2 - 2 \langle X, Y \rangle \geq \|\sigma(X)\|_2^2 + \|\sigma(Y)\|_2^2 - 2 \langle \sigma(X), \sigma(Y) \rangle = \|\sigma(X) - \sigma(Y)\|_2^2$$

and the equality holds if and only if $Y = U \operatorname{diag}(\sigma(Y)) V^\top$. Consequently, we have that

$$\frac{1}{2} \|X - Y\|_2^2 + \omega(Y) \geq \frac{1}{2} \|\sigma(X) - \operatorname{prox}_h(\sigma(X))\|_2^2 + h(\operatorname{prox}_h(\sigma(X))).$$
To conclude the proof we need to show that $\gamma := \operatorname{prox}_h(\sigma(X))$ has the same ordering as $\sigma := \sigma(X)$, that is, $\gamma$ is non-increasing. Suppose on the contrary that there exist $i, j \in \mathbb{N}_d$, $i < j$, such that $\gamma_i < \gamma_j$. Let $\tilde{\gamma}$ be the vector obtained by flipping the $i$-th and $j$-th components of $\gamma$. Since $h$ is invariant under permutations, a direct computation gives

$$\frac{1}{2} \|\sigma - \gamma\|_2^2 + h(\gamma) - \frac{1}{2} \|\sigma - \tilde{\gamma}\|_2^2 - h(\tilde{\gamma}) = (\sigma_i - \sigma_j)(\gamma_j - \gamma_i).$$

Since $\sigma_i \geq \sigma_j$ and $\gamma_i < \gamma_j$, the right hand side is nonnegative, so $\tilde{\gamma} \neq \gamma$ attains an objective value no larger than that of $\gamma$. This contradicts the uniqueness of the minimizer in (2.1).

We can compose an OI-norm with a linear transformation $B$, this time between two spaces of matrices, obtaining yet another subclass of penalty functions of the form (1.2). This setting is relevant in the context of multi-task learning. For example, [10] chooses $h$ to be the trace or nuclear norm and considers a specific linear transformation which models task relatedness, namely $g(X) = \left\| \sigma\left( X \left( I - \frac{1}{n} e e^\top \right) \right) \right\|_1$, where $e \in \mathbb{R}^n$ is the vector all of whose components are equal to one.

4 Fixed Point Algorithms Based on Proximity Operators

We now propose optimization approaches which use fixed point algorithms for nonsmooth problems. We shall focus on problem (1.1) under the assumption (1.2). We assume that $f$ is a strongly smooth convex function, that is, $\nabla f$ is Lipschitz continuous with constant $L$, and $\omega$ is a nondifferentiable convex function. A typical class of such problems occurs in regularization methods where $f$ corresponds to a data error term with, say, the square loss. Our approach builds on proximal methods and uses fixed point (also known as Picard) iterations for numerically computing the proximity operator.

4.1 Computation of a Generalized Proximity Operator with a Fixed Point Method

As the basic building block of our methods, we consider the optimization problem (1.1) in the special case when $f$ is a quadratic function, that is,

$$\min \left\{ \frac{1}{2} y^\top Q y - x^\top y + \omega(By) : y \in \mathbb{R}^d \right\} \tag{4.1}$$

where $x$ is a given vector in $\mathbb{R}^d$ and $Q$ a positive definite $d \times d$ matrix.

Recall the proximity operator in Definition 2.1. Under the assumption that we can compute the proximity operator of $\omega$ explicitly or in a finite number of steps, our aim is to develop an algorithm for evaluating a minimizer of problem (4.1). We describe the algorithm for a generic Hessian $Q$, as it can be applied in various contexts. For example, it could lead to a second-order method for solving (1.1), which will be the topic of future work. In this paper, we will apply the technique to the task of evaluating $\operatorname{prox}_{\omega \circ B}$.

First, we observe that the minimizer of (4.1) exists and is unique. Let us call this minimizer $\hat{y}$. Similar to Proposition 2.1, we have the following proposition.

Proposition 4.1. If $\omega$ is a convex function on $\mathbb{R}^m$, $Q$ a $d \times d$ positive definite matrix and $x \in \mathbb{R}^d$ then $\hat{y}$ is the solution of problem (4.1) if and only if

$$Q \hat{y} \in x - \partial(\omega \circ B)(\hat{y}). \tag{4.2}$$

The subdifferential $\partial(\omega \circ B)$ appearing in the inclusion (4.2) can be expressed with the chain rule (see, e.g., [6]), which gives the formula

$$\partial(\omega \circ B) = B^\top \circ (\partial \omega) \circ B. \tag{4.3}$$

Combining equations (4.2) and (4.3) yields the fact that

$$Q \hat{y} \in x - B^\top \partial \omega(B \hat{y}). \tag{4.4}$$

This inclusion along with Proposition 2.1 allows us to express $\hat{y}$ in terms of the proximity operator of $\omega$. To formulate our observation we introduce the affine transformation $A : \mathbb{R}^m \to \mathbb{R}^m$ defined, for fixed $x \in \mathbb{R}^d$, $\lambda > 0$, at $z \in \mathbb{R}^m$ by

$$Az := (I - \lambda B Q^{-1} B^\top) z + B Q^{-1} x$$

and the operator $H : \mathbb{R}^m \to \mathbb{R}^m$

$$H := \left( I - \operatorname{prox}_{\frac{\omega}{\lambda}} \right) \circ A. \tag{4.5}$$

Theorem 4.1. If $\omega$ is a convex function on $\mathbb{R}^m$, $B \in \mathbb{R}^{m \times d}$, $x \in \mathbb{R}^d$, $\lambda$ is a positive number and $\hat{y}$ is the minimizer of (4.1) then $\hat{y} = Q^{-1}(x - \lambda B^\top v)$ if and only if $v \in \mathbb{R}^m$ is a fixed point of $H$.

Proof. From (4.4) we conclude that $\hat{y}$ is characterized by the fact that $\hat{y} = Q^{-1}(x - \lambda B^\top v)$, where $v$ is a vector in the set $\partial \frac{\omega}{\lambda}(B \hat{y})$.
Thus it follows that $v \in \partial \frac{\omega}{\lambda}\left( B Q^{-1}(x - \lambda B^\top v) \right)$. Using Proposition 2.1 we conclude that

$$B Q^{-1}(x - \lambda B^\top v) = \operatorname{prox}_{\frac{\omega}{\lambda}}(Av). \tag{4.6}$$

Since the left hand side equals $Av - v$, rearranging the terms shows that $v = Av - \operatorname{prox}_{\frac{\omega}{\lambda}}(Av)$, that is, $v$ is a fixed point of $H$.

Conversely, if $v$ is a fixed point of $H$, then equation (4.6) holds. Using again Proposition 2.1 and the chain rule (4.3), we conclude that

$$\lambda B^\top v \in \partial(\omega \circ B)\left( Q^{-1}(x - \lambda B^\top v) \right).$$

Proposition 4.1 together with the above inclusion now implies that $Q^{-1}(x - \lambda B^\top v)$ is the minimizer of (4.1).

Since the operator $I - \operatorname{prox}_{\frac{\omega}{\lambda}}$ is nonexpansive [7, Lemma 2.1], we have that

$$\|H(v) - H(w)\|_2 \leq \|Av - Aw\|_2 \leq \|I - \lambda B Q^{-1} B^\top\| \, \|v - w\|_2.$$

We conclude that the mapping $H$ is nonexpansive if the spectral norm of the matrix $I - \lambda B Q^{-1} B^\top$ is not greater than one. Let us denote by $\lambda_j$, $j \in \mathbb{N}_m$, the eigenvalues of the matrix $B Q^{-1} B^\top$. We see that $H$ is nonexpansive provided that $|1 - \lambda \lambda_j| \leq 1$ for every $j$, that is, if $0 \leq \lambda \leq 2 / \lambda_{\max}$, where $\lambda_{\max}$ is the spectral norm of $B Q^{-1} B^\top$. In this case we can appeal to Opial's Theorem 2.1 to find a fixed point of $H$.

Note that if, for every $j \in \mathbb{N}_m$, $\lambda_j > 0$, that is, the matrix $B Q^{-1} B^\top$ is invertible, then the mapping $H$ is strictly nonexpansive when $0 < \lambda < 2 / \lambda_{\max}$. In this case, the Picard iterates of $H$ converge to the unique fixed point of $H$, without the need to use Opial's Theorem.

We end this section by noting that, when $Q = I$, the above theorem provides an algorithm for computing the proximity operator of $\omega \circ B$.

Corollary 4.1. Let $\omega$ be a convex function on $\mathbb{R}^m$, $B \in \mathbb{R}^{m \times d}$, $x \in \mathbb{R}^d$, $\lambda$ a positive number and define the mapping $H : v \mapsto \left( I - \operatorname{prox}_{\frac{\omega}{\lambda}} \right)\left( (I - \lambda B B^\top) v + Bx \right)$. Then $\operatorname{prox}_{\omega \circ B}(x) = x - \lambda B^\top v$ if and only if $v$ is a fixed point of $H$.

Thus, a fixed point iterative scheme like the above one can be used as part of any proximal method when the regularizer has the form (1.2).
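
To illustrate Corollary 4.1, the following sketch computes the proximity operator of the Fused Lasso penalty $\mu \sum_i |y_i - y_{i+1}|$, that is, $\omega = \mu \|\cdot\|_1$ with $B$ as in (3.2), by Opial-averaged Picard iterations. It is a minimal illustration under stated assumptions rather than the authors' implementation: the function name `fused_prox`, the fixed iteration count and the default $\lambda = 0.5$ are choices made here (the eigenvalues of $BB^\top$ for the difference matrix lie in $(0, 4)$, so $\lambda = 0.5$ satisfies $\lambda \leq 2/\lambda_{\max}$). It also uses the fact that, for the $\ell_1$ norm, $I - \operatorname{prox}_{\omega/\lambda}$ is a componentwise clip to $[-\mu/\lambda, \mu/\lambda]$.

```python
def fused_prox(x, mu, lam=0.5, kappa=0.2, iters=200):
    """Approximate prox of mu * sum_i |y_i - y_{i+1}| at x via Corollary 4.1."""
    d = len(x)

    def B(y):                      # (By)_i = y_i - y_{i+1}, length d - 1
        return [y[i] - y[i + 1] for i in range(d - 1)]

    def Bt(v):                     # transpose of B, length d
        return [(v[j] if j < d - 1 else 0.0) - (v[j - 1] if j > 0 else 0.0)
                for j in range(d)]

    tau = mu / lam                 # threshold of prox_{omega/lam} for omega = mu * l1
    Bx = B(x)
    v = [0.0] * (d - 1)
    for _ in range(iters):
        # affine map: A v = (I - lam B B^T) v + B x
        BBtv = B(Bt(v))
        u = [vi - lam * wi + bi for vi, wi, bi in zip(v, BBtv, Bx)]
        # (I - soft-threshold)(u) is a clip of u to [-tau, tau]
        Hv = [min(max(ui, -tau), tau) for ui in u]
        # Opial average: kappa * I + (1 - kappa) * H
        v = [kappa * vi + (1.0 - kappa) * hi for vi, hi in zip(v, Hv)]
    Btv = Bt(v)
    return [xi - lam * bi for xi, bi in zip(x, Btv)]
```

For $x = (0, 0, 3)$ and $\mu = 1$ the iterates approach $(0.5, 0.5, 2)$: the first two components are fused and the jump to the third is reduced, as the optimality conditions of (2.1) confirm.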

4.2 Accelerated First-Order Methods

Corollary 4.1 motivates a general proximal numerical approach to solving problem (1.1) (Algorithm 1). Recall that $L$ is the Lipschitz constant of $\nabla f$. The idea behind proximal methods (see [7, 4, 28, 33, 34] and references therein) is to update the current estimate of the solution $x_t$ using the proximity operator. This is equivalent to replacing $f$ with its linear approximation around a point $\alpha_t$ specific to iteration $t$. The point $\alpha_t$ may depend on the current and previous estimates of the solution $x_t, x_{t-1}, \dots$, the simplest and most common update rule being $\alpha_t = x_t$.

Algorithm 1 Proximal & fixed point algorithm.
  $x_1, \alpha_1 \leftarrow 0$
  for $t = 1, 2, \dots$ do
    Compute $x_{t+1} \leftarrow \operatorname{prox}_{\frac{\omega}{L} \circ B}\left( \alpha_t - \frac{1}{L} \nabla f(\alpha_t) \right)$ by the Picard-Opial process
    Update $\alpha_{t+1}$ as a function of $x_{t+1}, x_t, \dots$
  end for

In particular, in this paper we focus on combining Picard iterations with accelerated first-order methods proposed by Nesterov [27, 28]. These methods use an $\alpha$ update of a specific type, which requires two levels of memory of $x$. Such a scheme has the property of a quadratic decay in terms of the iteration count, that is, the distance of the objective from the minimal value is $O(1/T^2)$ after $T$ iterations. This rate of convergence is optimal for a first order method in the sense of the algorithmic model of [25].

It is important to note that other methods may achieve faster rates, at least under certain conditions. For example, interior point methods [29] or iterated reweighted least squares [8, 31, 1] have been applied successfully to nonsmooth convex problems. However, the former require the Hessian and typically have high cost per iteration. The latter require solving linear systems at each iteration. Accelerated methods, on the other hand, have a lower cost per iteration and scale to larger problem sizes.
Moreover, in applications where some type of thresholding operator is involved (for example, the Lasso (2.3)) the zeros in the solution are exact, which may be desirable.

Since their introduction, accelerated methods have quickly become popular in various areas of applications, including machine learning; see, for example, [24, 15, 17, 13] and references therein. However, their applicability has been restricted by the fact that they require exact computation of the proximity operator. Only then is the quadratic convergence rate known to hold, and thus methods using numerical computation of the proximity operator are not guaranteed to exhibit this rate. What we show here is how to further extend the scope of accelerated methods and that, empirically at least, these new methods outperform current $O(1/T)$ methods while matching the performance of optimal $O(1/T^2)$ methods.

In Algorithm 2 we describe a version of accelerated methods influenced by [33, 34]. Nesterov's insight was that an appropriate update of $\alpha_t$ which uses two levels of memory achieves the $O(1/T^2)$ rate. Specifically, the optimal update is

$$\alpha_{t+1} \leftarrow x_{t+1} + \theta_{t+1} \left( \frac{1}{\theta_t} - 1 \right) (x_{t+1} - x_t)$$

where the sequence $\theta_t$ is defined by $\theta_1 = 1$ and the recursive equation

$$\frac{1 - \theta_{t+1}}{\theta_{t+1}^2} = \frac{1}{\theta_t^2}.$$

We have adapted [33, Algorithm 2] (equivalent to FISTA [4]) by computing the proximity operator of $\frac{\omega}{L} \circ B$ using the Picard-Opial process described in Section 4.1. We rephrased the algorithm using the sequence $\rho_t := 1 - \theta_t + \sqrt{1 - \theta_t} = 1 - \theta_t + \frac{\theta_t}{\theta_{t-1}}$ for numerical stability. At each iteration, the map $A_t$ is defined by

$$A_t z := \left( I - \frac{\lambda}{L} B B^\top \right) z - \frac{1}{L} B \left( \nabla f(\alpha_t) - L \alpha_t \right)$$

and $H_t$ as in (4.5). By Theorem 4.1, the fixed point process combined with the $x$ update is equivalent to $x_{t+1} \leftarrow \operatorname{prox}_{\frac{\omega}{L} \circ B}\left( \alpha_t - \frac{1}{L} \nabla f(\alpha_t) \right)$.

Algorithm 2 Accelerated & fixed point algorithm.
  $x_1, \alpha_1 \leftarrow 0$
  for $t = 1, 2, \dots$ do
    Compute a fixed point $v$ of $H_t$ by Picard-Opial
    $x_{t+1} \leftarrow \alpha_t - \frac{1}{L} \nabla f(\alpha_t) - \frac{\lambda}{L} B^\top v$
    $\alpha_{t+1} \leftarrow \rho_{t+1} x_{t+1} - (\rho_{t+1} - 1) x_t$
  end for

5 Numerical Simulations

We have evaluated the efficiency of our method with simulations on different nonsmooth learning problems. One important aim of the experiments is to demonstrate improvement over a state of the art suite of methods (SLEP) [16] in the cases when the proximity operator is not exactly computable.

An example of such cases, which we consider in Section 5.1, is the Group Lasso with overlapping groups. An algorithm for computation of the proximity operator in a finite number of steps is known only in the special case of hierarchy-induced groups [13]. In other cases, such as groups induced by directed acyclic graphs [38] or more complicated sets of groups, the best known theoretical rate for a first-order method is $O(1/T)$. We demonstrate that such a method can be improved.

Moreover, in Section 5.2 we report efficient convergence in the case of a composite $\ell_1$ penalty used for graph prediction [11]. In this case, the matrix $B$ is the incidence matrix of a graph and the penalty is $\sum_{(i,j) \in E} \|x_i - x_j\|_1$, where $E$ is the set of edges. Most work we are aware of for the composite $\ell_1$ penalty applies to the special cases of total variation [3] or Fused Lasso [19], in which $B$ has a simple structure. A recent method for the general case [5], which builds on Nesterov's $O(1/T)$ smoothing technique [27], does not have publicly available software yet.

Another advantage of Algorithm 2 which we highlight is the high efficiency of Picard iterations for computing different proximity operators. This requires only a small number of iterations regardless of the size of the problem. We also report a roughly linear scalability with respect to the dimensionality of the problem, which shows that our methodology can be applied to large scale problems.
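
The outer loop of Algorithm 2 in Section 4.2 can be sketched as follows. This is a hedged illustration, not the authors' Matlab code: the function name `accelerated_prox` and its signature are hypothetical, and the inner Picard-Opial computation of a fixed point of $H_t$ is abstracted into a callable `prox_g(z, c)` that is assumed to return (an approximation of) the proximity operator of $c \cdot \omega \circ B$ at $z$. The sequence $\theta_t$ is obtained by solving its defining quadratic equation for the positive root.

```python
import math

def accelerated_prox(grad_f, L, prox_g, d, T):
    """Sketch of Algorithm 2; prox_g(z, c) should return prox of c * g at z."""
    x = [0.0] * d          # x_1
    alpha = [0.0] * d      # alpha_1
    theta = 1.0            # theta_1
    for _ in range(T):
        g = grad_f(alpha)
        z = [a - gi / L for a, gi in zip(alpha, g)]
        x_new = prox_g(z, 1.0 / L)   # in the paper: Picard-Opial on H_t
        # theta_{t+1} is the positive root of (1 - theta) / theta^2 = 1 / theta_t^2
        theta_new = (math.sqrt(theta ** 4 + 4.0 * theta ** 2) - theta ** 2) / 2.0
        rho = 1.0 - theta_new + theta_new / theta
        # alpha_{t+1} = rho_{t+1} x_{t+1} - (rho_{t+1} - 1) x_t
        alpha = [rho * xn - (rho - 1.0) * xo for xn, xo in zip(x_new, x)]
        x, theta = x_new, theta_new
    return x
```

A simple sanity check is a separable problem with $B = I$, where `prox_g` is soft-thresholding and the minimizer is known in closed form; for a composite penalty one would instead pass a routine that runs the fixed point iteration of Section 4.1.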

In the following simulations, we have chosen the parameter from Opial's theorem $\kappa = 0.2$. The parameter $\lambda$ was set equal to $\frac{2L}{\lambda_{\max} + \lambda_{\min}}$, where $\lambda_{\max}$ and $\lambda_{\min}$ are the largest and smallest eigenvalues, respectively, of $\frac{1}{L} B B^\top$. We have focused exclusively on the case of the square loss and we have computed $L$ using singular value decomposition (if this were not possible, a Frobenius estimate could be used). Finally, the implementation ran on a 16GB memory dual core Intel machine. The Matlab code is available at http://ttic.uchicago.edu/~argyriou/code/index.html.

Figure 1: Objective function vs. iteration count for the overlapping groups data ($d = 3500$), comparing Picard-Nesterov and SLEP. Note that Picard-Nesterov terminates earlier within $\varepsilon$.

Figure 2: $\ell_2$ difference of successive Picard iterates vs. Picard iteration count for the overlapping groups data ($d = 3500$).

5.1 Overlapping Groups

In the first simulation we considered a synthetic data set which involves a fairly simple group topology which, however, cannot be embedded as a hierarchy. We generated data $A \in \mathbb{R}^{s \times d}$, with $s = [0.7d]$, from a uniform distribution and normalized the matrix. The target vector $x^*$ was also generated randomly so that only 21 of its components are nonzero. The groups used in the regularizer $\omega_{GL}$ (see eq. (3.1)) are: $\{1, \dots, 5\}$, $\{5, \dots, 9\}$, $\{9, \dots, 13\}$, $\{13, \dots, 17\}$, $\{17, \dots, 21\}$, $\{4, 22, \dots, 30\}$, $\{8, 31, \dots, 40\}$, $\{12, 41, \dots, 50\}$, $\{16, 51, \dots, 60\}$, $\{20, 61, \dots, 70\}$, $\{71, \dots, 80\}$, $\dots$, $\{d - 9, \dots, d\}$. That is, the first 5 groups form a chain, the next 5 groups have a common element with one of the first groups and the rest have no overlaps.
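
The group structure listed above can be generated programmatically. The following sketch, with the hypothetical helper name `overlapping_groups`, returns the groups as sets of 1-based indices; it assumes $d \geq 80$ and $d$ divisible by 10, which holds for the values of $d$ used in this section.

```python
def overlapping_groups(d):
    """Groups of Section 5.1 (1-based indices); assumes d >= 80 and d % 10 == 0."""
    # chain: {1..5}, {5..9}, {9..13}, {13..17}, {17..21}
    groups = [set(range(4 * l + 1, 4 * l + 6)) for l in range(5)]
    # five groups sharing one element (4, 8, 12, 16, 20) with the chain
    starts = [22, 31, 41, 51, 61]
    ends = [30, 40, 50, 60, 70]
    for anchor, lo, hi in zip([4, 8, 12, 16, 20], starts, ends):
        groups.append({anchor} | set(range(lo, hi + 1)))
    # remaining disjoint blocks {71..80}, ..., {d-9..d}
    for lo in range(71, d, 10):
        groups.append(set(range(lo, lo + 10)))
    return groups
```

The number of rows of the corresponding matrix $B$ is the sum of the group sizes, $m = \sum_\ell d_\ell$, which exceeds $d$ only by the handful of shared indices.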
An issue with overlapping group norms is the coefficients assigned to each group (see [12] for a discussion). We chose to use a coefficient of 1 for every group and compensate by normalizing each component of $x^*$ according to the number of groups in which it appears (this of course can only be done in a synthetic setting like this).

Figure 3: Average measures vs. dimensionality for the overlapping groups data. Top: number of iterations (Picard-Nesterov and SLEP). Bottom: CPU time. Note that this time can be reduced to a fraction with a C implementation.

Figure 4: Objective function vs. iteration count for the hierarchical overlapping groups (Picard-Nesterov and SLEP).

The outputs were then generated as $y = Ax^* + \text{noise}$ with zero mean Gaussian noise of standard deviation $0.001$. We used a regularization parameter equal to $10^{-5}$. We ran the algorithm for $d = 1000, 1100, \dots, 4000$, with 10 random data sets for each value of $d$, and compared its efficiency with SLEP. The solutions found recover the correct pattern without exact zeros due to the regularization. Figure 1 shows the number of iterations $T$ in Algorithm 2 needed for convergence in objective value within $\varepsilon = 10^{-8}$. SLEP was run until the same objective value was reached. We conclude that we outperform SLEP's $O(1/T)$ method. Figure 2 demonstrates the efficiency of the inner computation of the proximity map at one iteration $t$ of the algorithm. Just a few Picard iterations are required for convergence. The plots for different $t$ are indistinguishable.
Similar conclusions can be drawn from the plots in Figure 3, where average counts of iterations and CPU time are shown for each value of $d$. We see that the number of iterations depends almost linearly on dimensionality and that SLEP requires an order of magnitude more iterations, which grow at a higher rate. Note also that the cost per iteration is comparable between the two methods. We also observed that computation of the proximity map is insensitive to the size of the problem (it only requires 7 to 8 iterations for all $d$). Finally, we report that CPU time grows linearly with dimensionality. To remove various overheads, this estimate was obtained from Matlab's profiling statistics for the low-level functions called. A comparison with SLEP is meaningless since the latter is a C implementation.

Besides outperforming the $O(1/T)$ method, we also show that the Picard-Nesterov approach matches SLEP's $O(1/T^2)$ method for the tree structured Group Lasso [18]. To this end, we have imitated an experiment from [13, Sec. 4.1] using the Berkeley segmentation data set¹. We have extracted a random dictionary of 71 patches of size $16 \times 16$ from these images, which we have placed on a balanced tree with branching factors 10, 2, 2 (top to bottom). Here the groups correspond to all subtrees of this tree. We have then learned the decomposition of new test patches in the dictionary basis by Group Lasso regularization (3.1). As Figure 4 shows, our method and SLEP are practically indistinguishable.

5.2 Graph Prediction

The second simulation is on the graph prediction of [11] in the limit of $p = 1$ (composite $\ell_1$). We constructed a synthetic graph of $d$ vertices, $d = 100, 120, \dots, 360$, with two clusters of equal size. The edges in each cluster were selected from a uniform draw with probability $\frac{1}{2}$ and we explicitly connected $d/25$ pairs of vertices between the clusters.
The labeled data $y$ were the cluster labels of $s = 10$ randomly drawn vertices. Note that the effective dimensionality of this problem is $O(d^2)$. At the time of the paper's writing, there is no accelerated method with software available online which handles a generic graph.

First, we observed that the solution found recovered the clustering perfectly. Next, we studied the decay of the objective function for different problem sizes (Figure 5). We noted a striking difference from the case of overlapping groups in that convergence now is not monotone$^2$. The nature of decay also differs from graph to graph, with some cases making fast progress very close to the optimal value but long before eventual convergence. This observation suggests future modifications of the algorithm which can accelerate convergence by a factor. As an indication, the distance from the optimum was just $2.2 \cdot 10^{-6}$, $5.4 \cdot 10^{-5}$, $1.5 \cdot 10^{-5}$ at iteration 611, 821, 418 for $d = 100, 120, 140$, respectively. We verified in this data as well that Picard iterations converge very fast (Figure 6). Finally, in Table 1 we report average iteration numbers and running times. These prove the feasibility of solving problems with large matrices $B$ even using a "quick and dirty" Matlab implementation.

In addition to a random incidence matrix, one may consider the special case of Fused Lasso or Total Variation in which $B$ has the simple form (3.2). It has been shown how to achieve the optimal $O(1/T^2)$ rate for this problem in [3]. We applied Fused Lasso (without Lasso regularization) to the same clustering data as before and compared SLEP with the Picard-Nesterov approach. As Figure 7 shows, the two trajectories are identical. This provides even more evidence in favor of optimality of our method.
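The synthetic graph used in this section can be illustrated with a short sketch. The code below is not the Matlab code used in the experiments; it is a minimal Python illustration under stated assumptions: the helper name `two_cluster_incidence`, the $\pm 1$ encoding of the cluster labels, the sign convention of the incidence matrix, the random seed, and the way the $d/25$ cross-cluster pairs are sampled are all hypothetical choices. It builds an edge-by-vertex incidence matrix $B$ and checks that the cluster labelling has a small composite $\ell_1$ norm $\|Bx\|_1$, since only the cross-cluster edges contribute.

```python
import numpy as np


def two_cluster_incidence(d, p_in=0.5, seed=0):
    """Hypothetical helper: edge-vertex incidence matrix of a two-cluster graph."""
    rng = np.random.default_rng(seed)
    half = d // 2
    edges = []
    # Within each cluster, keep every vertex pair independently with probability p_in.
    for lo, hi in [(0, half), (half, d)]:
        for i in range(lo, hi):
            for j in range(i + 1, hi):
                if rng.random() < p_in:
                    edges.append((i, j))
    # Explicitly connect d/25 distinct pairs of vertices across the two clusters.
    cross = set()
    while len(cross) < d // 25:
        cross.add((int(rng.integers(0, half)), int(rng.integers(half, d))))
    edges.extend(sorted(cross))
    # One row per edge: +1 at one endpoint, -1 at the other (assumed sign convention).
    B = np.zeros((len(edges), d))
    for row, (i, j) in enumerate(edges):
        B[row, i] = 1.0
        B[row, j] = -1.0
    labels = np.where(np.arange(d) < half, 1.0, -1.0)  # assumed +1 / -1 cluster labels
    return B, labels


B, labels = two_cluster_incidence(100)
# Only the d/25 cross-cluster edges contribute to ||B x||_1 for the cluster labelling.
print(B.shape[0], "edges;", "||B labels||_1 =", np.abs(B @ labels).sum())
```

The number of rows of $B$ grows quadratically with $d$, which is consistent with the remark above that the effective dimensionality of the problem is $O(d^2)$.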
$^1$ http://www.eecs.berkeley.edu/Research/Projects/CS/vision/bsds/
$^2$ There is no monotonicity guarantee for Nesterov's accelerated method.

Figure 5: Objective function vs. iteration for the graph data (curves: $d = 100$, $d = 120$, $d = 140$). Note the progress in the early stages in some cases.

Figure 6: $\ell_2$ difference of successive Picard iterates vs. Picard iteration for the graph data ($d = 100$; curves: $t = 1$, $t = 1000$).

6 Conclusion

We presented an efficient first order method for solving a class of nonsmooth optimization problems, whose objective function is given by the sum of a smooth term and a nonsmooth term, which is obtained by linear function composition. The prototypical example covered by this setting is a linear regression regularization method, in which the smooth term is an error term and the nonsmooth term is a regularizer which favors certain desired parameter vectors. An important feature of our approach is that it can deal with richer classes of regularizers than current approaches and at the same time is at least as computationally efficient as specific existing approaches for structured sparsity. In particular, our numerical simulations demonstrate that the proposed method matches optimal $O(1/T^2)$ methods on specific problems (Fused Lasso and tree structured Group Lasso) while improving over available $O(1/T)$ methods for the overlapping Group Lasso. In addition, it can handle generic linear composite regularization problems, for many of which accelerated methods do not yet exist. In the future, we wish to study theoretically whether the rate of convergence is $O(1/T^2)$, as suggested by our numerical simulations. There is also much room for further acceleration of the method in the more challenging cases by using practical heuristics.
At the same time, it will be valuable to study further applications of our method. These could include machine learning problems ranging from multi-task learning, to multiple kernel learning and to dictionary learning, all of which can be formulated as linearly composite regularization problems.

d      no. iterations   CPU time (secs.)
100    2599.6           21.461
120    3680.0           54.745
140    4351.8           118.61
160    3124.8           164.21
180    2845.8           241.69
200    3476.2           359.75
220    4490.0           911.67
240    4490.0           911.67
260    3639.2           930.8

Table 1: Graph data. Note that the effective $d$ is $O(d^2)$. CPU time can be reduced to a fraction with a C implementation.

Figure 7: Objective function vs. iteration for the Fused Lasso ($d = 100$; curves: SLEP, Picard-Nesterov). The two trajectories are identical.

Acknowledgements

We wish to thank Luca Baldassarre and Silvia Villa for useful discussions. This work was supported by Air Force Grant AFOSR-FA9550, EPSRC Grants EP/D071542/1 and EP/H027203/1, NSF Grant ITR-0312113, Royal Society International Joint Project Grant 2012/R2, as well as by the IST Programme of the European Community, under the PASCAL Network of Excellence, IST-2002-506778.

References

[1] A. Argyriou, T. Evgeniou, and M. Pontil. Convex multi-task feature learning. Machine Learning, 73(3):243–272, 2008.
[2] A. Argyriou, C.A. Micchelli, and M. Pontil. On spectral learning. The Journal of Machine Learning Research, 11:935–953, 2010.
[3] A. Beck and M. Teboulle. Fast gradient-based algorithms for constrained total variation image denoising and deblurring problems. Image Processing, IEEE Transactions on, 18(11):2419–2434, 2009.
[4] A. Beck and M. Teboulle. A fast iterative shrinkage-thresholding algorithm for linear inverse problems. SIAM Journal of Imaging Sciences, 2(1):183–202, 2009.
[5] S. Becker, E. J. Candès, and M. Grant. Templates for convex cone problems with applications to sparse signal recovery. Preprint, 2010.
[6] J. M. Borwein and A. S. Lewis. Convex Analysis and Nonlinear Optimization: Theory and Examples. CMS Books in Mathematics. Springer, 2005.
[7] P.L. Combettes and V.R. Wajs. Signal recovery by proximal forward-backward splitting. Multiscale Modeling and Simulation, 4(4):1168–1200, 2006.
[8] I. Daubechies, R. DeVore, M. Fornasier, and C.S. Güntürk. Iteratively reweighted least squares minimization for sparse recovery. Communications on Pure and Applied Mathematics, 63(1):1–38, 2010.
[9] J. Duchi and Y. Singer. Efficient online and batch learning using forward backward splitting. The Journal of Machine Learning Research, 10:2899–2934, 2009.
[10] T. Evgeniou, M. Pontil, and O. Toubia. A convex optimization approach to modeling consumer heterogeneity in conjoint estimation. Forthcoming at Marketing Science, 2007.
[11] M. Herbster and G. Lever. Predicting the labelling of a graph via minimum p-seminorm interpolation. In Proceedings of the 22nd Conference on Learning Theory (COLT), 2009.
[12] R. Jenatton, J.-Y. Audibert, and F. Bach. Structured variable selection with sparsity-inducing norms. arXiv:0904.3523v2, 2009.
[13] R. Jenatton, J. Mairal, G. Obozinski, and F. Bach. Proximal methods for sparse hierarchical dictionary learning. In International Conference on Machine Learning, pages 487–494, 2010.
[14] D. Kim, S. Sra, and I. S. Dhillon. A scalable trust-region algorithm with application to mixed-norm regression. In International Conference on Machine Learning, 2010.
[15] Q. Lin. A Smoothing Stochastic Gradient Method for Composite Optimization. Arxiv preprint arXiv:1008.5204, 2010.
[16] J. Liu, S. Ji, and J. Ye. SLEP: Sparse Learning with Efficient Projections. Arizona State University, 2009.
[17] J. Liu and J. Ye. Fast Overlapping Group Lasso. Arxiv preprint arXiv:1009.0306, 2010.
[18] J. Liu and J. Ye. Moreau-Yosida regularization for grouped tree structure learning. In Advances in Neural Information Processing Systems, 2010.
[19] J. Liu, L. Yuan, and J. Ye. An efficient algorithm for a class of fused lasso problems. In Proceedings of the 16th ACM SIGKDD international conference on Knowledge discovery and data mining, pages 323–332, 2010.
[20] J. Mairal, R. Jenatton, G. Obozinski, and F. Bach. Network flow algorithms for structured sparsity. CoRR, abs/1008.5209, 2010.
[21] C.A. Micchelli, L. Shen, and Y. Xu. Proximity algorithms for image models: denoising. Preprint, September 2010.
[22] J.J. Moreau. Fonctions convexes duales et points proximaux dans un espace hilbertien. Acad. Sci. Paris Sér. A Math., 255:2897–2899, 1962.
[23] J.J. Moreau. Proximité et dualité dans un espace hilbertien. Bull. Soc. Math. France, 93(2):273–299, 1965.
[24] S. Mosci, L. Rosasco, M. Santoro, A. Verri, and S. Villa. Solving Structured Sparsity Regularization with Proximal Methods. In Proc. European Conf. Machine Learning and Knowledge Discovery in Databases, pages 418–433, 2010.
[25] A. S. Nemirovsky and D. B. Yudin. Problem complexity and method efficiency in optimization. Wiley, 1983.
[26] Y. Nesterov. A method of solving a convex programming problem with convergence rate $O(1/k^2)$. Soviet Mathematics Doklady, 27(2):372–376, 1983.
[27] Y. Nesterov. Smooth minimization of non-smooth functions. Mathematical Programming, 103(1):127–152, 2005.
[28] Y. Nesterov. Gradient methods for minimizing composite objective function. CORE, 2007.
[29] Y. Nesterov and A. Nemirovskii. Interior-point polynomial algorithms in convex programming. Number 13. Society for Industrial Mathematics, 1987.
[30] Z. Opial. Weak convergence of the subsequence of successive approximations for nonexpansive operators. Bulletin American Mathematical Society, 73:591–597, 1967.
[31] M.R. Osborne. Finite algorithms in optimization and data analysis. John Wiley & Sons, Inc. New York, NY, USA, 1985.
[32] R. Tibshirani, M. Saunders, S. Rosset, J. Zhu, and K. Knight. Sparsity and smoothness via the fused lasso. Journal of the Royal Statistical Society: Series B (Statistical Methodology), 67(1):91–108, 2005.
[33] P. Tseng. On accelerated proximal gradient methods for convex-concave optimization. Preprint, 2008.
[34] P. Tseng. Approximation accuracy, gradient methods, and error bound for structured convex optimization. Mathematical Programming, 125(2):263–295, 2010.
[35] J. Von Neumann. Some matrix-inequalities and metrization of matric-space. Mitt. Forsch.-Inst. Math. Mech. Univ. Tomsk, 1:286–299, 1937.
[36] M. Yuan and Y. Lin. Model selection and estimation in regression with grouped variables. Journal of the Royal Statistical Society, Series B, 68(1):49–67, 2006.
[37] C. Zălinescu. Convex Analysis in General Vector Spaces. World Scientific, 2002.
[38] P. Zhao, G. Rocha, and B. Yu. Grouped and hierarchical model selection through composite absolute penalties. Annals of Statistics, 37(6A):3468–3497, 2009.

7 Appendix

In this appendix, we collect some basic facts about fixed point theory which are useful for our study. For more information on the material presented here, we refer the reader to [37].

Let $X$ be a closed subset of $\mathbb{R}^d$. A mapping $\varphi : X \to X$ is called strictly nonexpansive (or contractive) if there exists $\lambda \in [0, 1)$ such that, for every $x, y \in X$, $\|\varphi(x) - \varphi(y)\| \le \lambda \|x - y\|$. If the above inequality holds for $\lambda = 1$, the mapping is called nonexpansive. We say that $x$ is a fixed point of $\varphi$ if $x = \varphi(x)$. The Picard iterates $x_n$, $n \in \mathbb{N}$, starting at $x_0 \in X$ are defined by the recursive equation $x_n = \varphi(x_{n-1})$.
It is a well-known fact that, if $\varphi$ is strictly nonexpansive, then $\varphi$ has a unique fixed point $x$ and $\lim_{n \to \infty} x_n = x$. However, this result fails if $\varphi$ is merely nonexpansive. For example, the map $\varphi(x) = x + 1$ does not have a fixed point. On the other hand, the identity map has infinitely many fixed points.

Definition 7.1. Let $X$ be a closed subset of $\mathbb{R}^d$. A map $\varphi : X \to X$ is called asymptotically regular provided that $\lim_{n \to \infty} \|x_{n+1} - x_n\| = 0$ for the Picard iterates of $\varphi$ started at any $x_0 \in X$.

Proposition 7.1. Let $X$ be a closed subset of $\mathbb{R}^d$ and $\varphi : X \to X$ such that
1. $\varphi$ is nonexpansive;
2. $\varphi$ has at least one fixed point;
3. $\varphi$ is asymptotically regular.
Then the sequence $\{x_n : n \in \mathbb{N}\}$ converges to a fixed point of $\varphi$.

Proof. We divide the proof in three steps.
Step 1: The Picard iterates are bounded. Indeed, let $x$ be a fixed point of $\varphi$. We have that $\|x_{n+1} - x\| = \|\varphi(x_n) - \varphi(x)\| \le \|x_n - x\| \le \cdots \le \|x_0 - x\|$.
Step 2: By Step 1, there exists a convergent subsequence $\{x_{n_k} : k \in \mathbb{N}\}$, whose limit we denote by $y$; since $X$ is closed, $y \in X$. We will show that $y$ is a fixed point of $\varphi$. Since $\varphi$ is continuous, we have that $\lim_{k \to \infty} (x_{n_k} - \varphi(x_{n_k})) = y - \varphi(y)$. Since $\varphi(x_{n_k}) = x_{n_k + 1}$ and $\varphi$ is asymptotically regular, this limit is zero, that is, $y - \varphi(y) = 0$.
Step 3: The whole sequence converges. Indeed, following the same reasoning as in the proof of Step 1, we conclude that the sequence $\{\|x_n - y\| : n \in \mathbb{N}\}$ is non-increasing. Let $\alpha = \lim_{n \to \infty} \|x_n - y\|$. Since $\lim_{k \to \infty} \|x_{n_k} - y\| = 0$, we conclude that $\alpha = 0$ and, so, $\lim_{n \to \infty} x_n = y$.

We note that in general, without the asymptotic regularity assumption, the Picard iterates do not converge. For example, consider $\varphi(x) = -x$. Its only fixed point is $x = 0$; if we start from $x_0 \ne 0$ the Picard iterates will oscillate. Moreover, if $\varphi(x) = x + 1$, which is nonexpansive, the Picard iterates diverge.

We now discuss the main tool which we use to find a fixed point of a nonexpansive mapping $\varphi$.

Theorem 7.1. (Opial $\kappa$-average theorem [30]) Let $X$ be a closed convex subset of $\mathbb{R}^d$, $\varphi : X \to X$ a nonexpansive mapping which has at least one fixed point, and let $\varphi_\kappa := \kappa I + (1 - \kappa)\varphi$. Then, for every $\kappa \in (0, 1)$, the Picard iterates of $\varphi_\kappa$ converge to a fixed point of $\varphi$.

We prepare for the proof with two useful lemmas.

Lemma 7.1. If $\kappa \in (0, 1)$, $u, w \in \mathbb{R}^d$, $\|u\| \le \|w\|$, then
$\kappa(1 - \kappa)\|w - u\|^2 \le \|w\|^2 - \|\kappa w + (1 - \kappa)u\|^2$.

Proof. The assertion follows from $\ell_2$ strong convexity,
$\kappa(1 - \kappa)\|w - u\|^2 + \|\kappa w + (1 - \kappa)u\|^2 = \kappa\|w\|^2 + (1 - \kappa)\|u\|^2 \le \|w\|^2$.

Lemma 7.2. If $\{u_n : n \in \mathbb{N}\}$ and $\{w_n : n \in \mathbb{N}\}$ are sequences in $\mathbb{R}^d$ such that $\lim_{n \to \infty} \|w_n\| = 1$, $\|u_n\| \le \|w_n\|$ and $\lim_{n \to \infty} \|\kappa w_n + (1 - \kappa)u_n\| = 1$, then $\lim_{n \to \infty} (w_n - u_n) = 0$.

Proof. Apply Lemma 7.1 to note that
$\kappa(1 - \kappa)\|w_n - u_n\|^2 \le \|w_n\|^2 - \|\kappa w_n + (1 - \kappa)u_n\|^2$.
By hypothesis the right hand side tends to zero as $n$ tends to infinity and the result follows.

Proof of Theorem 7.1. Let $\{x_n : n \in \mathbb{N}\}$ be the iterates of $\varphi_\kappa$, that is, $x_{n+1} = \kappa x_n + (1 - \kappa)\varphi(x_n)$. We will show that $\varphi_\kappa$ is asymptotically regular. The result will then follow by Proposition 7.1 and the fact that $\varphi_\kappa$ and $\varphi$ have the same set of fixed points. Note that, if $u$ is a fixed point of $\varphi_\kappa$, then $\|x_{n+1} - u\| \le \|x_n - u\| \le \cdots \le \|x_0 - u\|$. Let $d := \lim_{n \to \infty} \|x_n - u\|$. If $d = 0$, then $x_n \to u$ and the result is proved. Otherwise $d > 0$ and, for every $n \in \mathbb{N}$, we define $w_n = d^{-1}(x_n - u)$ and $u_n = d^{-1}(\varphi(x_n) - u)$. The sequences $\{w_n : n \in \mathbb{N}\}$ and $\{u_n : n \in \mathbb{N}\}$ satisfy the hypotheses of Lemma 7.2: since $u$ is also a fixed point of $\varphi$, nonexpansiveness gives $\|u_n\| \le \|w_n\|$, while $\|w_n\| \to 1$ and $\|\kappa w_n + (1 - \kappa)u_n\| = d^{-1}\|x_{n+1} - u\| \to 1$. Thus, we have that $\lim_{n \to \infty} (x_n - \varphi(x_n)) = 0$. Consequently $x_{n+1} - x_n = (1 - \kappa)(\varphi(x_n) - x_n) \to 0$, showing that $\{x_n : n \in \mathbb{N}\}$ is asymptotically regular.
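As a small numerical illustration of Theorem 7.1, the sketch below applies plain and $\kappa$-averaged Picard iterations to the map $\varphi(x) = -x$ discussed above. The helper names `picard` and `averaged`, the starting point, the iteration counts and the choice $\kappa = 0.25$ are assumptions made only for this example; with this $\kappa$ the averaged map is $\varphi_\kappa(x) = -0.5\,x$, so its iterates shrink geometrically towards the fixed point $0$, whereas the plain iterates oscillate between $x_0$ and $-x_0$.

```python
import numpy as np


def picard(phi, x0, n_iter):
    """Return the n_iter-th Picard iterate x_n = phi(x_{n-1}) starting from x0."""
    x = np.asarray(x0, dtype=float)
    for _ in range(n_iter):
        x = phi(x)
    return x


def averaged(phi, kappa):
    """Return the averaged map phi_kappa = kappa * I + (1 - kappa) * phi."""
    return lambda x: kappa * x + (1.0 - kappa) * phi(x)


phi = lambda x: -x                 # nonexpansive, unique fixed point 0
x0 = np.array([1.0, -2.0])

plain_even = picard(phi, x0, 10)   # back at x0 after an even number of steps
plain_odd = picard(phi, x0, 11)    # at -x0 after an odd number: no convergence
avg = picard(averaged(phi, 0.25), x0, 60)  # iterates of phi_kappa(x) = -0.5 x

print(plain_even, plain_odd, np.linalg.norm(avg))
```

The same two helpers can be reused with any other nonexpansive map `phi` on $\mathbb{R}^d$ that has a fixed point; only the map and the starting point need to change.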
