Anytime Reliable Codes for Stabilizing Plants over Erasure Channels

The problem of stabilizing an unstable plant over a noisy communication link is an increasingly important one that arises in problems of distributed control and networked control systems. Although the work of Schulman and Sahai over the past two deca…

Authors: Ravi Teja Sukhavasi, Babak Hassibi

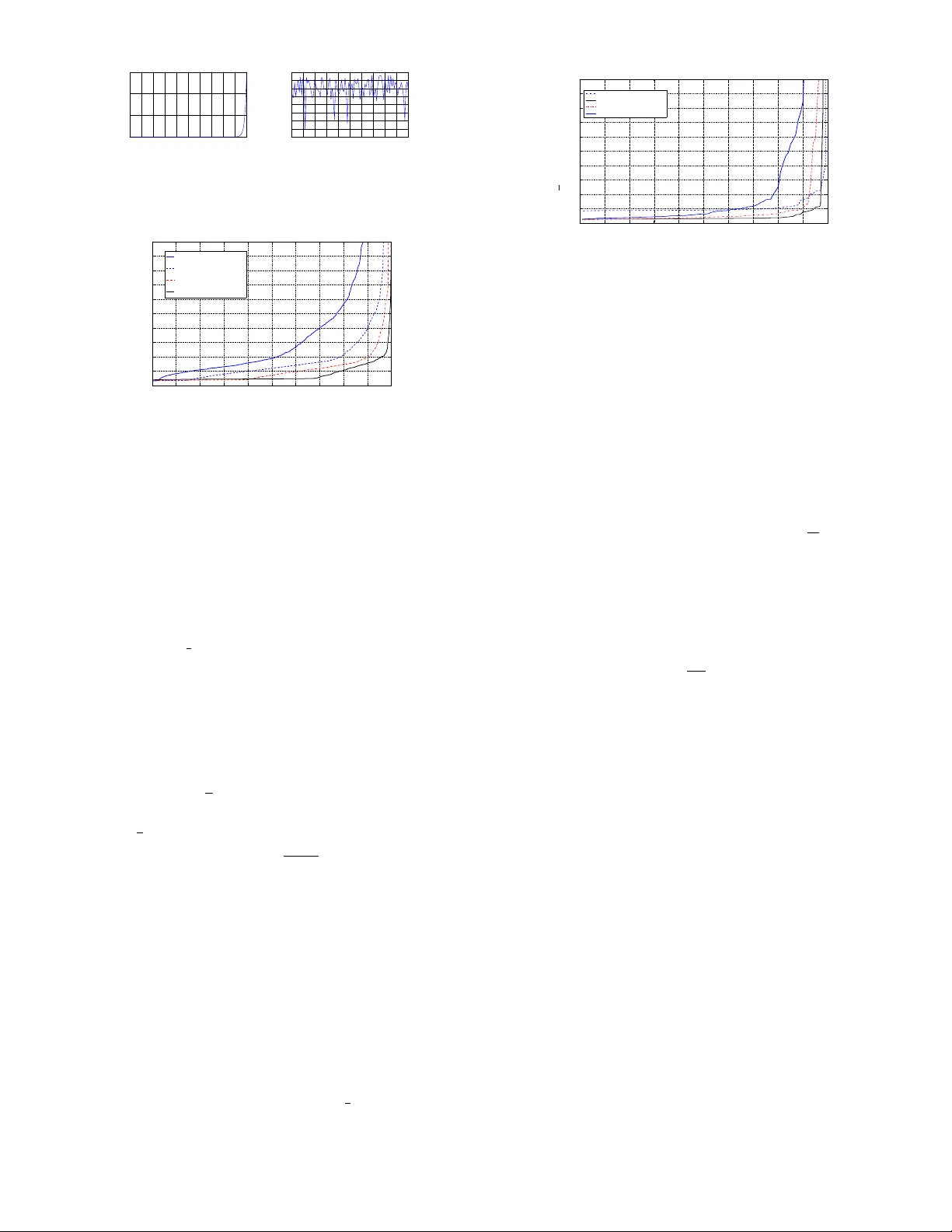

Abstract: The problem of stabilizing an unstable plant over a noisy communication link is an increasingly important one that arises in problems of distributed control and networked control systems. Although the work of Schulman and Sahai over the past two decades, and their development of the notions of "tree codes" and "anytime capacity", provides the theoretical framework for studying such problems, there has been scant practical progress in this area because explicit constructions of tree codes with efficient encoding and decoding did not exist. To stabilize an unstable plant driven by bounded noise over a noisy channel, one needs real-time encoding and real-time decoding and a reliability which increases exponentially with delay, which is what tree codes guarantee. We prove the existence of linear tree codes with high probability and, for erasure channels, give an explicit construction with an expected encoding and decoding complexity that is constant per time instant. We give sufficient conditions on the rate and reliability required of the tree codes to stabilize vector plants and argue that they are asymptotically tight. This work takes a major step towards controlling plants over noisy channels, and we demonstrate the efficacy of the method through several examples.

I. INTRODUCTION

In control theory, the output of a dynamical system is observed and a controller is designed to regulate its behavior. The controller needs to react and generate control signals in real time. In most traditional control systems, the controller and the plant are colocated and hence there is no measurement loss. There are increasingly many applications, such as networked control systems [1] and distributed computing [2], where systems are remotely controlled and where measurement and control signals are transmitted across noisy channels.
This makes it necessary to communicate the measurement and control signals reliably by correcting the errors introduced by the channels. Although Shannon's information theory is concerned with reliable transmission of a message from one point to another over a noisy channel, the reliability is achieved at the price of large delays, which may lead to instability when they occur in the feedback loop of a control system. Hence, we need practical real-time encoding and decoding schemes with appropriate reliability for controlling systems over lossy networks.

Consider a control system with a single observer that communicates with the controller over a lossy communication channel and where the feedback link from the controller to the plant is noiseless. When the channel is rate-limited and deterministic, significant progress has been made (see, e.g., [3], [4]) in understanding the bandwidth requirements for stabilizing open-loop unstable systems. When the communication channel is stochastic, [5] provides a necessary and sufficient condition on the communication reliability needed over such a channel to stabilize an unstable scalar linear process, and proposes the notion of feedback anytime capacity as the appropriate figure of merit for such channels. In essence, the encoder is causal and the probability of error in decoding a source symbol that was transmitted $d$ time instants ago should decay exponentially in the decoding delay $d$.

Although the connection between communication reliability and control is clear, very little is known about error-correcting codes that can achieve such reliabilities. Prior to the work of [5], and in a different context, [2] proved the existence of codes which under maximum likelihood decoding achieve such reliabilities and referred to them as tree codes. Note that any real-time error-correcting code is causal and, since it encodes the entire trajectory of a process, it has a natural tree structure.
[2] proves the existence of nonlinear tree codes yet gives neither explicit constructions nor efficient decoding algorithms. Much more recently, [6] proposed efficient error-correcting codes for unstable systems where the state grows only polynomially large with time. So, for linear unstable systems that have an exponential growth rate, all that is known in the way of error correction is the existence of tree codes which are, in general, nonlinear. Moreover, the existence results are not with "high probability". When the state of an unstable scalar linear process is available at the encoder, [7] and [8] develop encoding-decoding schemes that can stabilize such a process over the binary symmetric channel and the binary erasure channel, respectively. But little is known in the way of stabilizing partially observed vector-valued processes over a stochastic communication channel.

The subject of error-correcting codes for control is in its relative infancy, much as the subject of block coding was after Shannon's seminal work in [9]. So, a first step towards realizing practical encoder-decoder pairs with anytime reliabilities is to explore linear encoding schemes. We consider rate $R = k/n$ causal linear codes which map a sequence of $k$-dimensional binary vectors $\{b_\tau\}_{\tau=0}^{\infty}$ to a sequence of $n$-dimensional binary vectors $\{c_\tau\}_{\tau=0}^{\infty}$, where $c_t$ is only a function of $\{b_\tau\}_{\tau=0}^{t}$. Such a code is anytime reliable if there exist constants $\beta > 0$, $\eta > 0$ and a delay $d_o > 0$ such that, at all times $t$ and for all delays $d \ge d_o$,
$$P\left(\hat{b}_{t-d|t} \neq b_{t-d}\right) \le \eta\, 2^{-\beta n d}.$$

The contributions of this paper are as follows:
1. We show that linear tree codes exist and, further, that they exist with high probability.
2. For the binary erasure channel, we propose a maximum likelihood decoder whose average decoding complexity is constant per time iteration and for which the probability that the complexity at a given time $t$ exceeds $KC^3$ decays exponentially in $C$.
3. We also prove asymptotically tight sufficient conditions on the rate $R$ and exponent $\beta$ needed to stabilize vector-valued processes over a noisy channel.

As a consequence, we can efficiently stabilize a partially observed unstable linear process over a binary erasure channel without any channel feedback.

[Fig. 1. Causal encoding and decoding. The encoder maps $b_1, b_2, \ldots, b_t$ to $c_1 = f_1(b_1)$, $c_2 = f_2(b_1, b_2)$, ..., $c_t = f_t(b_1, \ldots, b_t)$; the channel outputs are $z_1, z_2, \ldots, z_t$; the decoder produces $\hat{b}_{1|1}$, then $\hat{b}_{1|2}, \hat{b}_{2|2}$, ..., then $\hat{b}_{1|t}, \ldots, \hat{b}_{t|t}$.]

In Section II, we introduce the notation and set up the problem. In Section III, we introduce the ensemble of time-invariant codes and show that they are anytime reliable with high probability. In Section IV, we present a simple decoding algorithm for the BEC and, in Section V, we derive sufficient conditions for stabilizing unstable linear systems over noisy channels. We present some simulations in Section VIII to demonstrate the efficacy of the decoding algorithm.

II. PROBLEM SETUP

We begin by introducing some notation.
1) For any matrix $F$, $\bar{F} \triangleq \mathrm{abs}(F)$, i.e., $\bar{F}_{i,j} = |F_{i,j}|$ for all $i, j$.
2) $\lambda(F) \triangleq$ largest eigenvalue of $F$ in magnitude.
3) For a vector $x$, $x^{(i)}$ denotes the $i$th component of $x$.
4) $1_m \triangleq [1, \ldots, 1]^T$, i.e., a column with $m$ ones.
5) For $w, v \in \mathbb{R}^m$, $w \gtrless v$ denotes component-wise inequality.

Consider the following $m$-dimensional unstable linear system with scalar measurements. Assuming that the system is observable, without loss of generality, it can be cast in the following canonical form:
$$x_{t+1} = F x_t + B u_t + w_t, \qquad y_t = H x_t + v_t \tag{1}$$
where
$$F = \begin{bmatrix} -a_1 & 1 & 0 & \cdots & 0 \\ -a_2 & 0 & 1 & \ddots & \vdots \\ \vdots & \vdots & \ddots & \ddots & 0 \\ -a_{m-1} & 0 & \cdots & 0 & 1 \\ -a_m & 0 & \cdots & \cdots & 0 \end{bmatrix}, \qquad H = [1, 0, \ldots, 0],$$
$\lambda(F) > 1$, $u_t$ is the control input, and $w_t$ and $v_t$ are bounded process and measurement noise variables, i.e., $\|w_t\|_\infty < W/2$ and $\|v_t\|_\infty < V/2$.
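As a concrete illustration of the canonical form (1), the following minimal sketch builds $F$ and $H$ from the coefficients $a_1, \ldots, a_m$ and advances the uncontrolled state by one step. The function names `canonical_form` and `step` and the sample coefficients are assumptions made for illustration only; they do not come from the paper.

```python
def canonical_form(a):
    """Build F (m x m) and H (length m) of equation (1) from a = [a_1, ..., a_m]."""
    m = len(a)
    F = [[0.0] * m for _ in range(m)]
    for i in range(m):
        F[i][0] = -a[i]            # first column holds -a_1, ..., -a_m
        if i + 1 < m:
            F[i][i + 1] = 1.0      # ones on the superdiagonal
    H = [1.0] + [0.0] * (m - 1)    # scalar measurement of the first state component
    return F, H


def step(F, x, w):
    """One uncontrolled step x_{t+1} = F x_t + w_t (u_t = 0)."""
    m = len(x)
    return [sum(F[i][j] * x[j] for j in range(m)) + w[i] for i in range(m)]


# Hypothetical coefficients, chosen only to show the layout of F.
F, H = canonical_form([-2.0, 0.5, 0.25])
x_next = step(F, [1.0, 0.0, 0.0], [0.0, 0.0, 0.0])
```

Only the first column of $F$ depends on the plant, which is why the measurement $y_t = x_t^{(1)} + v_t$ is enough to observe the full state.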
Note that the characteristic polynomial of $F$ is $z^m + a_1 z^{m-1} + \ldots + a_m$. The measurements $\{y_t\}$ are made by an observer while the control inputs $\{u_t\}$ are applied by a remote controller that is connected to the observer by a noisy communication channel. Naturally, the measurements $y_{0:t-1}$ will need to be encoded by the observer to provide protection from the noisy channel, while the controller will need to decode the channel outputs to estimate the state $x_t$ and apply a suitable control input $u_t$. This can be accomplished by employing a channel encoder at the observer and a decoder at the controller. For simplicity, we will assume that the channel input alphabet is binary. Suppose one time step of system evolution in (1) corresponds to $n$ channel uses$^1$. Then, at each time instant $t$, the operations performed by the observer, the channel encoder, the channel decoder and the controller can be described as follows. The observer generates a $k$-bit message, $b_t \in \{0,1\}^k$, that is a causal function of the measurements, i.e., it depends only on $y_{0:t}$. Then the channel encoder causally encodes $b_{0:t} \in \{0,1\}^{kt}$ to generate the $n$ channel inputs $c_t \in \{0,1\}^n$. Note that the rate of the channel encoder is $R = k/n$. Denote the $n$ channel outputs corresponding to $c_t$ by $z_t \in \mathcal{Z}^n$, where $\mathcal{Z}$ denotes the channel output alphabet. Using the channel outputs received so far, i.e., $z_{0:t} \in \mathcal{Z}^{nt}$, the channel decoder generates estimates $\{\hat{b}_{\tau|t}\}_{\tau \le t}$ of $\{b_\tau\}_{\tau \le t}$, which, in turn, the controller uses to generate the control input $u_{t+1}$. This is illustrated in Fig. 1. Note that we do not assume any channel feedback. Now, define
$$P^e_{t,d} = P\left(\min\{\tau : \hat{b}_{\tau|t} \neq b_\tau\} = t - d + 1\right).$$
Thus, $P^e_{t,d}$ is the probability that the earliest error is $d$ steps in the past.
Definition 1 (Anytime reliability): We say that an encoder-decoder pair is $(R, \beta, d_o)$-anytime reliable if
$$P^e_{t,d} \le \eta\, 2^{-n\beta d}, \quad \forall\, t,\ d \ge d_o. \tag{2}$$
In some cases, we write that a code is $(R, \beta)$-anytime reliable. This means that there exists a fixed $d_o > 0$ such that the code is $(R, \beta, d_o)$-anytime reliable.

We will show in Section V (Theorem 5.1) that $(R, \beta)$-anytime reliability is a sufficient condition to stabilize (1) in the mean squared sense$^2$. In what follows, we will demonstrate causal linear codes which under maximum likelihood decoding achieve such exponential reliabilities.

Footnote 1: In practice, the system evolution in (1) is obtained by discretizing a continuous-time differential equation. So, the interval of discretization could be adjusted to correspond to an integer number of channel uses, provided the channel use instances are close enough.
Footnote 2: This can be easily extended to any other norm.

III. LINEAR ANYTIME CODES

As discussed earlier, a first step towards developing practical encoding and decoding schemes for automatic control is to study the existence of linear codes with anytime reliability. We begin by defining a causal linear code.

Definition 2 (Causal Linear Code): A causal linear code is a sequence of linear maps $f_\tau : \{0,1\}^{k\tau} \mapsto \{0,1\}^n$, $\tau \ge 0$, and hence can be represented as
$$f_\tau(b_{1:\tau}) = G_{\tau 1} b_1 + G_{\tau 2} b_2 + \ldots + G_{\tau\tau} b_\tau \tag{3}$$
where $G_{ij} \in \{0,1\}^{n \times k}$. We denote $c_\tau \triangleq f_\tau(b_{1:\tau})$. Note that a tree code is a more general construction where $f_\tau$ need not be linear. Also note that the associated code rate is $R = k/n$. The above encoding is equivalent to using a semi-infinite block lower triangular generator matrix, $G_{n,R}$, whose entries are clear from (3), or equivalently a semi-infinite block lower triangular parity check matrix, $H_{n,R}$ (the parity check matrix satisfies $H_{n,R} G_{n,R} = 0$):
$$H_{n,R} = \begin{bmatrix} H_{11} & 0 & \cdots & \cdots & \cdots \\ H_{21} & H_{22} & 0 & \cdots & \cdots \\ \vdots & \vdots & \ddots & \ddots & \vdots \\ H_{\tau 1} & H_{\tau 2} & \cdots & H_{\tau\tau} & 0 \\ \vdots & \vdots & \vdots & \vdots & \ddots \end{bmatrix} \tag{4}$$
where$^3$ $H_{ij} \in \{0,1\}^{\bar{n} \times n}$ and $\bar{n} = n(1-R)$. In order to ensure that the code rate is equal to the design rate $R = k/n$, $H^t_{n,R}$ needs to be full rank for every $t$, where $H^t_{n,R}$ is the $\bar{n}t \times nt$ leading principal minor of $H_{n,R}$. This will happen if $H_{ii}$ is full rank for all $i$. The existence results that follow imply the existence of anytime reliable $H_{n,R}$ whose code rate is the same as the design rate.

We present all our results for binary-input output-symmetric channels$^4$. The Bhattacharyya parameter $\zeta$ for such channels is defined as
$$\zeta = \int_{-\infty}^{\infty} \sqrt{p(z|X=1)\, p(z|X=0)}\, dz$$
where $z$ and $X$ denote the channel output and input, respectively. We begin by proving the existence of such codes that are $(R, \beta)$-anytime reliable over a finite time horizon, $T$, i.e., under ML decoding $P^e_{t,d} \le \eta\, 2^{-n\beta d}$ for all $t \le T$. We then prove their existence for all time. Due to space limitations, proofs for all the results in this section are presented in a companion paper, [10].

A. Finite Time Horizon

Over a finite time horizon, $T$, a causal linear code is represented by a block lower triangular parity check matrix $H_{n,R,T} \in \{0,1\}^{\bar{n}T \times nT}$. The following theorem guarantees the existence of an $H_{n,R,T}$ that is $(R, \beta)$-anytime reliable.

Theorem 3.1: For each time $T > 0$, rate $R$ and exponent $\beta$ such that
$$R < 1 - \log_2(1 + \zeta), \quad \text{and} \quad \beta < H^{-1}(1-R)\left(\log_2\frac{1}{\zeta} + \log_2\left(2^{1-R} - 1\right)\right)$$
there exists a causal linear code $H_{n,R,T}$ that is $(R, \beta)$-anytime reliable. Here $H^{-1}(1-R)$ is the smaller root of the equation $H(x) = 1-R$, where $H(\cdot)$ is the binary entropy function.

Theorem 3.1 proves the existence of finite-dimensional causal linear codes, $H_{n,R,T}$, that are anytime reliable for decoding instants up to time $T$.
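The two thresholds in Theorem 3.1 are easy to evaluate numerically. The sketch below is a minimal illustration, not code from the paper: the helper names (`binary_entropy`, `inverse_entropy`, `rate_threshold`, `exponent_bound`) are hypothetical, and the inverse entropy is found by plain bisection on $[0, 1/2]$. The sample values $\zeta = 0.3$ and $n = 15$ are the erasure probability and block length used in the simulation section.

```python
import math


def binary_entropy(x):
    """Binary entropy H(x) in bits, with H(0) = H(1) = 0."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * math.log2(x) - (1.0 - x) * math.log2(1.0 - x)


def inverse_entropy(y, tol=1e-12):
    """Smaller root of H(x) = y, found by bisection on [0, 1/2]."""
    lo, hi = 0.0, 0.5
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if binary_entropy(mid) < y:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def rate_threshold(zeta):
    """Rate threshold of Theorem 3.1: R < 1 - log2(1 + zeta)."""
    return 1.0 - math.log2(1.0 + zeta)


def exponent_bound(R, zeta):
    """Upper limit on beta in Theorem 3.1 for a rate R below the threshold."""
    if R >= rate_threshold(zeta):
        raise ValueError("rate is not below 1 - log2(1 + zeta)")
    return inverse_entropy(1.0 - R) * (
        math.log2(1.0 / zeta) + math.log2(2.0 ** (1.0 - R) - 1.0)
    )


# BEC with erasure probability 0.3 (zeta = 0.3), n = 15 channel uses per step.
n = 15
n_beta_k6 = n * exponent_bound(6 / n, 0.3)
n_beta_k3 = n * exponent_bound(3 / n, 0.3)
print(round(n_beta_k6, 2), round(n_beta_k3, 2))
```

The rate condition is exactly what makes the bracket positive: $R < 1 - \log_2(1+\zeta)$ is equivalent to $2^{1-R} - 1 > \zeta$, so the bound on $\beta$ is strictly positive for every admissible rate.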
In the following subsection, we demonstrate the existence of semi-infinite causal linear codes, $H_{n,R}$, that are anytime reliable for all decoding instants. We also show that such codes drawn from an appropriate ensemble are anytime reliable with high probability. The key is to impose a Toeplitz structure on the parity check matrix.

Footnote 3: While the parity check matrix is not unique for a given generator matrix, when $G_{n,R}$ is block lower triangular, it is easy to see that $H_{n,R}$ can also be chosen to be block lower triangular.
Footnote 4: The results can be easily extended to more general memoryless channels.

B. Time Invariant Codes

Consider causal linear codes with the following Toeplitz structure:
$$H^{TZ}_{n,R} = \begin{bmatrix} H_1 & 0 & \cdots & \cdots & \cdots \\ H_2 & H_1 & 0 & \cdots & \cdots \\ \vdots & \vdots & \ddots & \ddots & \vdots \\ H_\tau & H_{\tau-1} & \cdots & H_1 & 0 \\ \vdots & \vdots & \vdots & \vdots & \ddots \end{bmatrix}$$
The superscript $TZ$ in $H^{TZ}_{n,R}$ denotes 'Toeplitz'. $H^{TZ}_{n,R}$ is obtained from $H_{n,R}$ in (4) by setting $H_{ij} = H_{i-j+1}$ for $i \ge j$. Due to the Toeplitz structure, we have the following invariance: $P^e_{t,d} = P^e_{t',d}$ for all $t, t'$. The notion of time invariance is analogous to the convolutional structure used to show the existence of infinite tree codes in [2]. The code $H^{TZ}_{n,R}$ will be referred to as a time-invariant code. This time invariance obviates the need to union bound over all time $t$ and hence allows us to prove that such anytime reliable codes are abundant.

Definition 3 (The ensemble $\mathrm{TZ}_p$): The ensemble $\mathrm{TZ}_p$ of time-invariant codes, $H^{TZ}_{n,R}$, is obtained as follows: $H_1$ is any full rank binary matrix and, for $\tau \ge 2$, the entries of $H_\tau$ are chosen i.i.d. according to Bernoulli($p$), i.e., each entry is 1 with probability $p$ and 0 otherwise.

Note that $H_1$ being full rank implies that $H^t_{n,R}$ is full rank for every $t$.
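Drawing a code from $\mathrm{TZ}_p$ and assembling its leading block rows can be sketched as follows. This is an illustration under stated assumptions rather than the authors' implementation: the function names are hypothetical, and $H_1$ is chosen as an identity block followed by random columns, which is just one convenient way to guarantee full row rank (Definition 3 allows any full rank $H_1$).

```python
import random


def sample_tz_blocks(n, k, t, p, seed=0):
    """Draw H_1, ..., H_t, each of size (n - k) x n, for a code from TZ_p."""
    rng = random.Random(seed)
    nbar = n - k
    # Assumption: H_1 = [I | B] with B random, so H_1 has full row rank by construction.
    h1 = [
        [int(c == r) for c in range(nbar)] + [int(rng.random() < p) for _ in range(k)]
        for r in range(nbar)
    ]
    blocks = [h1]
    for _ in range(1, t):
        # H_tau for tau >= 2: i.i.d. Bernoulli(p) entries.
        blocks.append([[int(rng.random() < p) for _ in range(n)] for _ in range(nbar)])
    return blocks


def assemble_toeplitz(blocks, n):
    """Leading (nbar*t) x (n*t) part of the block lower triangular Toeplitz matrix."""
    t = len(blocks)
    nbar = len(blocks[0])
    H = [[0] * (n * t) for _ in range(nbar * t)]
    for i in range(t):
        for j in range(i + 1):
            blk = blocks[i - j]  # block (i, j) is H_{i-j+1} in the paper's 1-based indexing
            for r in range(nbar):
                H[i * nbar + r][j * n:(j + 1) * n] = blk[r]
    return H


blocks = sample_tz_blocks(n=6, k=2, t=3, p=0.5)
H = assemble_toeplitz(blocks, n=6)
```

Because every block row reuses the same $H_1, H_2, \ldots$, only $t$ blocks need to be stored for a horizon of $t$ steps, instead of the $t(t+1)/2$ blocks of a general causal code.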
For the ensemble $\mathrm{TZ}_p$, we have the following result.

Theorem 3.2 (Abundance of time-invariant codes): For any rate $R$ and exponent $\beta$ such that
$$R < 1 - \frac{\log_2(1+\zeta)}{\log_2(1/(1-p))}, \quad \text{and} \quad \beta < H^{-1}(1-R)\left(\log_2\frac{1}{\zeta} + \log_2\left[(1-p)^{-(1-R)} - 1\right]\right)$$
if $H^{TZ}_{n,R}$ is chosen from $\mathrm{TZ}_p$, then
$$P\left(H^{TZ}_{n,R} \text{ is } (R, \beta, d_o)\text{-anytime reliable}\right) \ge 1 - 2^{-\Omega(n d_o)}.$$

Note that by choosing $p$ small, we can trade off better rates and exponents against sparser parity check matrices. Note that for BEC($\epsilon$), $\zeta = \epsilon$ and for BSC($\epsilon$), $\zeta = 2\sqrt{\epsilon(1-\epsilon)}$. For the Binary Symmetric Channel (BSC) with bit flip probability $\epsilon$ and for $p = \frac{1}{2}$, the threshold for rate in Theorem 3.2 becomes $R < 1 - 2\log_2\left(\sqrt{\epsilon} + \sqrt{1-\epsilon}\right)$. It turns out that this can be strengthened as follows.

Theorem 3.3 (Tighter bounds for BSC($\epsilon$)): For any rate $R$ and exponent $\beta$ such that
$$R < 1 - H(\epsilon), \qquad \beta < KL\left(H^{-1}(1-R)\, \|\, \min\{\epsilon, 1-\epsilon\}\right)$$
if $H^{TZ}_{n,R}$ is chosen from $\mathrm{TZ}_{\frac{1}{2}}$, then
$$P\left(H^{TZ}_{n,R} \text{ is } (R, \beta, d_o)\text{-anytime reliable}\right) \ge 1 - 2^{-\Omega(n d_o)}.$$

IV. DECODING OVER THE BEC

Owing to the simplicity of the erasure channel, it is possible to perform maximum likelihood decoding efficiently at each time step. We will show that the average complexity of the decoding operation at any time $t$ is constant and that the probability of it being larger than $KC^3$ decays exponentially in $C$. Consider an arbitrary decoding instant $t$, let $c = [c_1^T, \ldots, c_t^T]^T$ be the transmitted codeword and let $z = [z_1^T, \ldots, z_t^T]^T$ denote the corresponding channel outputs. Recall that $H^t_{n,R}$ denotes the $\bar{n}t \times nt$ leading principal minor of $H_{n,R}$. Let $z_e$ denote the erasures in $z$ and let $H_e$ denote the columns of $H^t_{n,R}$ that correspond to the positions of the erasures. Also, let $\tilde{z}_e$ denote the unerased entries of $z$ and let $\tilde{H}_e$ denote the columns of $H^t_{n,R}$ excluding $H_e$. So, we have the following parity check condition on $z_e$: $H_e z_e = \tilde{H}_e \tilde{z}_e$.
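The parity check condition above is a linear system over GF(2) in the erased bits, so a small solver can report which erasures it pins down. The sketch below uses plain Gauss-Jordan elimination and returns only those erased positions that take the same value in every solution. It is a generic illustration with a hypothetical function name (`solve_erasures`) and a toy parity check matrix invented for the example; it assumes the received word is consistent with some codeword, which holds on an erasure channel because unerased bits are never flipped.

```python
def solve_erasures(H, z):
    """Return {position: bit} for erased positions determined by H c = 0 over GF(2).

    H is a list of rows of 0/1 entries; z is the received word with None for erasures.
    """
    erased = [j for j, bit in enumerate(z) if bit is None]
    # Augmented rows [H_e | s], where s is the syndrome of the unerased bits (mod 2).
    rows = []
    for h in H:
        s = sum(h[j] * z[j] for j in range(len(z)) if z[j] is not None) % 2
        rows.append([h[j] for j in erased] + [s])
    pivots = []  # (row index, column index) of each pivot
    r = 0
    for c in range(len(erased)):
        pr = next((i for i in range(r, len(rows)) if rows[i][c]), None)
        if pr is None:
            continue  # no pivot in this column: the variable is free
        rows[r], rows[pr] = rows[pr], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][c]:
                rows[i] = [x ^ y for x, y in zip(rows[i], rows[r])]
        pivots.append((r, c))
        r += 1
    solved = {}
    for pr, pc in pivots:
        if sum(rows[pr][:-1]) == 1:  # no free variable appears in this pivot row
            solved[erased[pc]] = rows[pr][-1]
    return solved


# Toy Toeplitz code with n = 2, nbar = 1: H_1 = [1 1], H_2 = [1 0], H_3 = [0 1].
H_toy = [
    [1, 1, 0, 0, 0, 0],
    [1, 0, 1, 1, 0, 0],
    [0, 1, 1, 0, 1, 1],
]
# Codeword [1, 1, 1, 0, 1, 1] with positions 0, 4 and 5 erased.
recovered = solve_erasures(H_toy, [None, 1, 1, 0, None, None])
print(recovered)  # {0: 1}
```

In the toy run the earliest erased bit is recovered while the two most recent ones remain ambiguous, which mirrors the behavior an anytime code is designed for: reliability improves with delay.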
Since $\tilde{z}_e$ is known at the decoder, $s \triangleq \tilde{H}_e \tilde{z}_e$ is known. Maximum likelihood decoding boils down to solving the linear equation $H_e z_e = s$. Due to the lower triangular nature of $H_e$, unlike in the case of traditional block coding, this equation will typically not have a unique solution, since $H_e$ will typically not have full column rank. This is acceptable because we are not interested in decoding the entire $z_e$ correctly; we only care about decoding the earlier entries accurately. If $z_e = [z_{e,1}^T, z_{e,2}^T]^T$, then $z_{e,1}$ corresponds to the earlier time instants while $z_{e,2}$ corresponds to the later time instants. The desired reliability requires one to recover $z_{e,1}$ with an exponentially smaller error probability than $z_{e,2}$. Since $H_e$ is block lower triangular, we can write $H_e z_e = s$ as
$$\begin{bmatrix} H_{e,11} & 0 \\ H_{e,21} & H_{e,22} \end{bmatrix} \begin{bmatrix} z_{e,1} \\ z_{e,2} \end{bmatrix} = \begin{bmatrix} s_1 \\ s_2 \end{bmatrix} \tag{6}$$
Let $H^{\perp}_{e,22}$ denote the orthogonal complement of $H_{e,22}$, i.e., $H^{\perp}_{e,22} H_{e,22} = 0$. Then, multiplying both sides of (6) by $\mathrm{diag}(I, H^{\perp}_{e,22})$, we get
$$\begin{bmatrix} H_{e,11} \\ H^{\perp}_{e,22} H_{e,21} \end{bmatrix} z_{e,1} = \begin{bmatrix} s_1 \\ H^{\perp}_{e,22} s_2 \end{bmatrix} \tag{7}$$
If $[H_{e,11}^T \ (H^{\perp}_{e,22} H_{e,21})^T]^T$ has full column rank, then $z_{e,1}$ can be recovered exactly. The decoding algorithm now suggests itself: find the smallest possible $H_{e,22}$ such that $[H_{e,11}^T \ (H^{\perp}_{e,22} H_{e,21})^T]^T$ has full column rank. It is outlined in Algorithm 1.

Algorithm 1 Decoder for the BEC
1) Suppose that, at time $t$, the earliest uncorrected error is at a delay $d$. Identify $z_e$ and $H_e$ as defined above.
2) For $d' = 1, 2, \ldots, d$, partition $z_e = [z_{e,1}^T \ z_{e,2}^T]^T$ and $H_e = \begin{bmatrix} H_{e,11} & 0 \\ H_{e,21} & H_{e,22} \end{bmatrix}$, where $z_{e,2}$ corresponds to the erased positions up to delay $d'$.
3) Check whether the matrix $\begin{bmatrix} H_{e,11} \\ H^{\perp}_{e,22} H_{e,21} \end{bmatrix}$ has full column rank.
4) If so, solve for $z_{e,1}$ in the system of equations (7).
5) Increment $t = t + 1$ and continue.

A. Complexity

Suppose the earliest uncorrected error is at time $t - d + 1$. Then steps 2), 3) and 4) in Algorithm 1 can be accomplished by just reducing $H_e$ to the appropriate row echelon form, which has complexity $O(d^3)$. That the earliest entry in $z_e$ is at time $t - d + 1$ implies that it was not corrected at time $t - 1$, the probability of which is $P^e_{t-1,d-1} \le \eta\, 2^{-n\beta(d-1)}$. Hence, the average decoding complexity is at most $K \sum_{d > 0} d^3\, 2^{-n\beta d}$, which is bounded and independent of $t$. In particular, the probability of the decoding complexity being $Kd^3$ is at most $\eta\, 2^{-n\beta d}$. The decoder is easy to implement and its performance is simulated in Section VIII. Note that the encoding complexity per time iteration increases linearly with time. This can also be made constant on average if the decoder can send periodic acks back to the encoder with the time index of the last correctly decoded source bit.

V. SUFFICIENT CONDITIONS FOR STABILIZABILITY

Consider an unstable $m$-dimensional linear system whose state space equations in canonical form are given by (1), i.e., $\lambda(F) > 1$, and recall that the characteristic polynomial of $F$ is $z^m + a_1 z^{m-1} + \ldots + a_m$. Suppose the observer does not have any feedback from the controller; in particular, it does not have access to the control inputs. Then we can stabilize such a system in the mean squared sense over a noisy channel provided that the rate $R$ and exponent $\beta$ of the $(R, \beta)$-anytime reliable code used to encode the measurements satisfy the following sufficient condition.

Theorem 5.1 (No Feedback to the Observer): It is possible to stabilize (1) in the mean squared sense with an $(R, \beta)$-anytime code provided $(F, B)$ is controllable and
$$R > R_n = \frac{1}{n} \log_2 \sum_{i=1}^{m} |a_i|, \qquad \beta > \beta_n = \frac{2}{n} \log_2 \lambda(\bar{F}) \tag{8}$$
If the observer knows the control inputs, it turns out that one can make do with lower rates.
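The thresholds in (8) can be evaluated without an eigenvalue routine. The sketch below relies on a standard fact that is not stated in the paper: $\bar{F}$ is a nonnegative companion-type matrix with characteristic polynomial $z^m - \sum_i |a_i| z^{m-i}$, so $\lambda(\bar{F})$ is the unique positive root of that polynomial and can be found by bisection. The function names are hypothetical, and the sample plant is the scalar one from the simulation section ($m = 1$, $a_1 = -2$, $n = 15$).

```python
import math


def perron_root(abs_a, tol=1e-12):
    """Unique positive root of z^m = sum_i |a_i| z^(m-i), i.e. lambda(F-bar).

    Assumes at least one coefficient is nonzero.
    """
    def g(z):
        # g is strictly decreasing for z > 0 and the root satisfies g(z) = 1.
        return sum(c / z ** (i + 1) for i, c in enumerate(abs_a))

    lo, hi = 0.0, 1.0 + max(abs_a)  # Cauchy bound: the root lies below 1 + max|a_i|
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if g(mid) > 1.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def theorem_5_1_thresholds(a, n):
    """Return (R_n, beta_n) from (8) for coefficients a = [a_1, ..., a_m]."""
    abs_a = [abs(c) for c in a]
    rate = math.log2(sum(abs_a)) / n
    exponent = 2.0 * math.log2(perron_root(abs_a)) / n
    return rate, exponent


# Scalar plant with -a_1 = 2 and n = 15 channel uses per time step.
R_n, beta_n = theorem_5_1_thresholds([-2.0], 15)
```

For this scalar plant the sketch gives $R_n = 1/15$ and $\beta_n = 2/15$, i.e., one bit per time step and a mean squared exponent that is twice the first-moment value $1/n$ quoted in Example 1.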
The case where the observer knows the control inputs is stated as the following theorem.

Theorem 5.2 (Observer Knows the Control Inputs): When the observer has access to the control inputs, it is possible to stabilize (1) in the mean squared sense with an $(R, \beta)$-anytime code provided $(F, B)$ is controllable and
$$R > R^f_n = \operatorname{argmin}_r \left\{\lambda(\bar{F} D_{nr}) < 1\right\} \tag{9a}$$
$$\beta > \beta^f_n = \frac{2}{n} \log_2 \lambda(\bar{F}) \tag{9b}$$
where $D_{nr} = \mathrm{diag}(2^{-nr}, 1, \ldots, 1)$. Moreover,
$$R^f_n \le \frac{1}{n} \log_2 \max\left\{|a_m|\, 2^{m-1},\ \max_{1 \le i \le m-1} |a_i|\, 2^i\right\} \tag{10}$$
The superscript $f$ in $R^f_n$ denotes 'feedback' to emphasize the fact that the observer has access to the control inputs.

Before proceeding further, we give a brief outline of the proofs of Theorems 5.1 and 5.2 (details are in Section VII). At each time $t$, using the channel outputs received until $t$, we bound the set of all possible states that are consistent with the estimates of the quantized measurements using a hypercuboid, i.e., a region of the form $\{x_t \in \mathbb{R}^m \mid x_{\min,t|t} \le x_t \le x_{\max,t|t}\}$, where $x_{\min,t|t}, x_{\max,t|t} \in \mathbb{R}^m$ and the inequalities are component-wise. If $\Delta_{t|t} = x_{\max,t|t} - x_{\min,t|t}$, then from Lemma 7.1, $\Delta_{t+1|t} = \bar{F} \Delta_{t|t} + W 1_m$. The anytime exponent is determined by the growth of $\Delta_t$ in the absence of measurements, hence the bound $\beta_n = \beta^f_n = \frac{2}{n} \log_2 \lambda(\bar{F})$. The bound on the rate is determined by how fine the quantization needs to be for $\Delta_t$ to be bounded asymptotically.

A. The Limiting Case

The sufficient conditions derived above are for the case when the measurements are encoded every time step. Alternatively, one can encode the measurements every, say, $\ell$ time steps, and consider the asymptotic rate and exponent needed as $\ell$ grows. Note that this amounts to working with the system matrix $F^\ell$. So, one can calculate this limiting rate and exponent by writing the eigenvalues of $F$, $\{\lambda_i\}_{i=1}^m$, as $\lambda_i = \mu_i^n$ and letting $n$ scale.
The following asymptotic result allows us to compare the sufficient conditions above with those in the literature (e.g., see [3], [5], [11]).

Theorem 5.3 (The Limiting Case): Write the eigenvalues of $F$, $\{\lambda_i\}_{i=1}^m$, in the form $\lambda_i = \mu_i^n$. Letting $n$ scale, $R_n$ and $R^f_n$ converge to $R^*$, and $\beta_n$ and $\beta^f_n$ converge to $\beta^*$, where
$$R^* = \sum_{i : |\mu_i| > 1} \log_2 |\mu_i|, \qquad \beta^* = 2 \log_2 \max_i |\mu_i| \tag{11}$$
In addition, the upper bound on $R^f_n$ in (10) also converges to $R^*$.

Proof: See Section C of the Appendix.

For stabilizing plants over deterministic rate-limited channels, [3] showed that a rate $R > R^*$, where $R^*$ is as in (11), is necessary and sufficient. So, asymptotically, the sufficient conditions for the rate $R$ in Theorems 5.1 and 5.2 are tight. Though the above limiting case allows one to obtain a tight and intuitively pleasing characterization of the rate and exponent needed, it should be noted that this may not be operationally practical. For, if one encodes the measurements every $\ell$ time steps, even though Theorem 5.3 guarantees stability, the performance of the closed loop system (the LQR cost, say) may be unacceptably large because of the delay incurred. This is what motivated us to present the sufficient conditions in the form that we did above.

B. A Comment on the Trade-off Between Rate and Exponent

Once a set of rate-exponent pairs $(R, \beta)$ that can stabilize a plant is available, one would want to identify the pair that optimizes a given cost function. Higher rates provide finer resolution of the measurements while larger exponents ensure that the controller's estimate of the plant does not drift away; however, we cannot have both. One can either coarsely quantize the measurements and protect the bits heavily, or quantize them moderately finely and not protect the bits as much. One can easily construct examples using an LQR cost function with the balance going either way.
Studying this trade-off is integral to making the results practically applicable.

VI. TIGHTER BOUNDS ON THE ANYTIME EXPONENT

From Theorem 5.1, using the technique outlined in the previous section, one needs an exponent $n\beta \ge 2 \log_2 \lambda(\bar{F})$. It turns out that a smaller exponent of $2 \log_2 \lambda(F)$ suffices. The idea is to instead bound the set of all possible states that are consistent with the estimates of the quantized measurements using an ellipsoid $E(P, c) \triangleq \{x \in \mathbb{R}^m \mid \langle x - c, P^{-1}(x - c) \rangle \le 1\}$. This can be seen as an extension of the technique proposed in [12] to filtering using quantized measurements. If $m = 1$, $\lambda(F) = \lambda(\bar{F})$. So, let $m \ge 2$.

In view of the duality between estimation and control, we can focus on the problem of tracking (1) over a noisy communication channel. For, if (1) can be tracked with an asymptotically finite mean squared error and if $(F, B)$ is stabilizable, then it is a simple exercise to see that there exists a control law $\{u_t\}$ that will stabilize the plant in the mean squared sense, i.e., $\limsup_t E\|x_t\|^2 < \infty$. In particular, if the control gain $K$ is chosen such that $\sqrt{2} F + BK$ is stable, then $u_t = K \hat{x}_{t|t}$ will stabilize the plant, where $\hat{x}_{t|t}$ is the estimate of $x_t$ using channel outputs up to time $t$. Hence, in the rest of the analysis, we will focus on tracking (1). The control input $u_t$ is therefore assumed to be absent, i.e., $u_t = 0$.

We will first present a recursive state estimation algorithm using the channel outputs and then state the sufficient conditions needed for the estimation error to be appropriately bounded using such a filter. Recall that the channel outputs corresponding to the coded bits $c_t \in GF_2^n$ are $z_t \in \mathcal{Z}^n$. Let $x_0 \in E(P_0, 0)$ and suppose that, using $\{z_\tau\}_{\tau \le t-1}$, we have $x_t \in E(P_{t|t-1}, \hat{x}_{t|t-1})$. Note that, since $H = [1, 0, \ldots, 0]$, the measurement update provides information of the form $x^{(1)}_{\min,t|t} \le x^{(1)}_t \le x^{(1)}_{\max,t|t}$, which one may call a slab. $E(P_{t|t}, \hat{x}_{t|t})$ would then be an ellipsoid that contains the intersection of the above slab with $E(P_{t|t-1}, \hat{x}_{t|t-1})$; in particular, one can set it to be the minimum volume ellipsoid covering this intersection. Lemma A.1 gives a formula for the minimum volume ellipsoid covering the intersection of an ellipsoid and a slab. Note that the width of the slab above tends to be smaller if the observer has access to the control inputs than when it does not. For the time update, it is easy to see that for any $\epsilon > 0$ and $P_{t+1} = (1 + \epsilon) F P_{t|t} F^T + \frac{W^2}{4} 1_m$, $E(P_{t+1}, F \hat{x}_{t|t})$ contains the state $x_{t+1}$ whenever $E(P_{t|t}, \hat{x}_{t|t})$ contains $x_t$. This leads to the following lemma. For convenience, we write $P_t$ for $P_{t|t-1}$.

Lemma 6.1 (The Ellipsoidal Filter): Whenever $E(P_0, 0)$ contains $x_0$, for each $\epsilon > 0$, the following filtering equations give a sequence of ellipsoids $E(P_{t|t}, \hat{x}_{t|t})$ that, at each time $t$, contain $x_t$:
$$P_{t+1} = (1 + \epsilon) F P_{t|t} F^T + \frac{W^2}{4} 1_m, \qquad \hat{x}_{t+1} = F \hat{x}_{t|t} \tag{12a}$$
$$P_{t|t} = b_t P_t - (b_t - a_t) \frac{P_t e_1 e_1^T P_t}{e_1^T P_t e_1}, \qquad \hat{x}_{t|t} = \xi_t \frac{P_t e_1}{\sqrt{e_1^T P_t e_1}} \tag{12b}$$
where $a_t$, $b_t$ and $\xi_t$ can be calculated in closed form using Lemma A.1.

Using this approach, we get the following set of sufficient conditions. The proofs are similar to the proofs of Theorems 5.1 and 5.2, and hence are skipped due to space limitations.
Theorem 6.2 (No Feedback to the Observer): It is possible to stabilize (1) for $m \ge 2$ in the mean squared sense with an $(R, \beta)$-anytime code provided $(F, B)$ is controllable and
$$R > R_{e,n} = \frac{1}{n} \log_2 \left[\frac{\sqrt{m}}{2} \sum_{i=1}^{m} |a_i|\, \theta^{i-1}\right] \tag{13a}$$
$$\beta > \beta_{e,n} = \frac{2}{n} \log_2 \lambda(F) \tag{13b}$$
where $\theta = \frac{m}{m-1}$.

Theorem 6.3 (Observer Knows the Control Inputs): When the observer has access to the control inputs, it is possible to stabilize (1) in the mean squared sense with an $(R, \beta)$-anytime code provided $(F, B)$ is controllable and
$$R > R^f_{e,n} = \operatorname{argmin}_r \left\{\lambda(F D_{m,nr}) < 1\right\} \tag{14a}$$
$$\beta > \beta^f_{e,n} = \frac{2}{n} \log_2 \lambda(F) \tag{14b}$$
where $D_{m,nr} = \mathrm{diag}\left(\sqrt{m}\, 2^{-nr}, \sqrt{\theta}, \ldots, \sqrt{\theta}\right)$ and $\theta = \frac{m}{m-1}$. Moreover,
$$R^f_{e,n} \le \frac{1}{2n} \log_2 m + \frac{1}{n} \log_2 \max\left\{|a_m| (2\theta)^{m-1},\ \max_{1 \le i \le m-1} 2 |a_i| (2\theta)^{i-1}\right\} \tag{15}$$
In the same limiting sense as described in Section V, $R^f_{e,n}$ and $R_{e,n}$ converge to $R^*$ while $\beta^f_{e,n}$ and $\beta_{e,n}$ converge to $\beta^*$, where $R^*$ and $\beta^*$ are as in Theorem 5.3. The proof is in Section C of the Appendix.

VII. PROOFS OF THEOREMS 5.1 AND 5.2

The analysis proceeds in two steps. We first determine a sufficient condition on the number of bits per measurement, $nR$, that are required to track (1) when these bits are available error-free. We then determine the anytime exponent $n\beta$ needed in decoding these source bits when they are communicated over a noisy channel.

At each time, we bound the set of all possible states that are consistent with the quantized measurements using a hypercuboid, i.e., a region of the form $\{x \in \mathbb{R}^m \mid x_{\min} \le x \le x_{\max}\}$, where $x_{\min}, x_{\max} \in \mathbb{R}^m$ and the inequalities are component-wise. In what follows, we call $\Delta_{t|\tau} \triangleq x_{\max,t|\tau} - x_{\min,t|\tau}$ the uncertainty in $x_t$ using $\{b_{\tau'}\}_{\tau' \le \tau}$, i.e., the quantized measurements up to time $\tau$. For convenience, let $\Delta_t \equiv \Delta_{t|t-1}$. Then, the time update is given by the following lemma.
Lemma 7.1 (Time Update): The time update relating $\Delta_{t+1}$ and $\Delta_{t|t}$ is given by $\Delta_{t+1} = \bar{F} \Delta_{t|t} + W 1_m$.

Proof: From the system dynamics in (1), the following is immediate:
$$\Delta^{(i)}_{t+1} = W + \max\left\{\pm a_i \Delta^{(1)}_{t|t} + \Delta^{(i+1)}_{t|t},\ \Delta^{(i+1)}_{t|t},\ a_i \Delta^{(1)}_{t|t}\right\} = |a_i| \Delta^{(1)}_{t|t} + \Delta^{(i+1)}_{t|t} + W, \quad i \le m-1$$
$$\Delta^{(m)}_{t+1} = |a_m| \Delta^{(1)}_{t|t} + W$$
In short, the above equations amount to $\Delta_{t+1} = \bar{F} \Delta_{t|t} + W 1_m$.

The measurement update depends on whether or not the observer has access to the control inputs.

A. Observer does not know the control inputs

In this case, the observer simply quantizes the measurements $y_t$ according to a $2^{nR}$-regular lattice quantizer with bin width $\delta$, i.e., the quantizer is defined by $Q : \mathbb{R} \mapsto \{0, 1, \ldots, 2^{nR} - 1\}$, where $Q(x) = \lfloor x / \delta \rfloor \bmod 2^{nR}$. Assuming that the rate $R$ is large enough, we first find the steady state value of the recursion for $\Delta_t$, which we then use to determine $R$. At each time $t$, the observer can communicate the measurement $y_t$ to within an uncertainty of $\delta$, i.e., the estimator knows that the measurement lies in an interval of width $\delta$. Adding to this the effect of the observation noise, $-\frac{V}{2} \le v_t \le \frac{V}{2}$, the estimator knows $x^{(1)}_t$ to within an uncertainty of $\Delta^{(1)}_{t|t} = \delta + V$. Note that $\Delta^{(i)}_{t|t} = \Delta^{(i)}_t$ for $i \neq 1$. Combining this observation with Lemma 7.1, the following is fairly straightforward.

Lemma 7.2 (Steady state value of $\Delta_t$ without feedback): If $\lim_{t \to \infty} \Delta_t = \Delta_\infty$, then $\Delta_\infty = (\delta + V) L_u a + W L_u 1_m$, where $a = [|a_1|, \ldots, |a_m|]^T$ and $L_u = [\ell_{ij}]_{1 \le i,j \le m}$ with $\ell_{ij} = I_{i \le j}$.

Now, we need to go back and calculate $R$. Observe that $\Delta_\infty$ does not depend on the starting value $\Delta_0$. So we just need $\delta\, 2^{nR} \ge \max\left\{\Delta^{(1)}_\infty, \Delta^{(1)}_0\right\} + V$. From the above lemma, $\Delta^{(1)}_\infty = \delta \sum_{i=1}^m |a_i| + V \sum_{i=1}^m |a_i| + mW$.
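Lemmas 7.1 and 7.2 can be checked against each other numerically. The sketch below iterates the width recursion for the no-feedback case (measurement update $\Delta^{(1)}_{t|t} = \delta + V$, then the time update of Lemma 7.1) and compares the result with the closed form of Lemma 7.2. The function names and the sample values of $|a_i|$, $\delta$, $V$ and $W$ are assumptions chosen for illustration.

```python
def time_update(delta_post, abs_a, W):
    """Lemma 7.1: Delta_{t+1} = F-bar Delta_{t|t} + W 1_m for the canonical form (1)."""
    m = len(abs_a)
    nxt = []
    for i in range(m):
        carry = delta_post[i + 1] if i + 1 < m else 0.0  # superdiagonal term
        nxt.append(abs_a[i] * delta_post[0] + carry + W)
    return nxt


def steady_state(abs_a, delta, V, W):
    """Lemma 7.2: Delta_inf = (delta + V) L_u a + W L_u 1_m, with L_u upper triangular ones."""
    m = len(abs_a)
    return [(delta + V) * sum(abs_a[i:]) + W * (m - i) for i in range(m)]


def iterate(abs_a, delta, V, W, steps):
    """Alternate measurement and time updates, starting from zero uncertainty."""
    width = [0.0] * len(abs_a)
    for _ in range(steps):
        post = [delta + V] + width[1:]  # measurement update resets the first component
        width = time_update(post, abs_a, W)
    return width


# Hypothetical plant and noise parameters.
abs_a, delta, V, W = [2.0, 0.5, 0.25], 4.0, 2.0, 1.0
limit = steady_state(abs_a, delta, V, W)
after_ten = iterate(abs_a, delta, V, W, steps=10)
print(limit, after_ten)
```

Because the measurement update overwrites the first component at every step, the recursion settles after $m$ iterations regardless of the starting widths, which is why $\Delta_\infty$ does not depend on $\Delta_0$.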
So, we need

$$2^{nR} > \max\left\{\sum_{i=1}^m |a_i| + \frac{V + V\sum_{i=1}^m |a_i| + mW}{\delta},\ \frac{\Delta^{(1)}_0}{\delta}\right\}$$

The minimum required rate is obtained by letting $\delta \to \infty$, in which case we need $R > \frac{1}{n}\log_2\sum_{i=1}^m |a_i|$, and this gives $R_n$ in Theorem 5.1.

B. Observer knows the control inputs

In this case, the observer can infer that the uncertainty in $y_t$ at the estimator side is $\Delta^{(1)}_t + V$. So, it can use the $nR$ bits to shrink this to $2^{-nR}(\Delta^{(1)}_t + V)$. Taking into account the observation noise, the uncertainty in $x_t$ after the measurement update will be given by $\Delta^{(1)}_{t|t} = V + 2^{-nR}(\Delta^{(1)}_t + V)$ and $\Delta^{(i)}_{t|t} = \Delta^{(i)}_t$ for $i \ne 1$. Combining this with Lemma 7.1, the overall recursion for $\Delta_t$ is given by $\Delta_{t+1} = \bar F D_{nR}\Delta_t + W_{c,nR}$, where

$$D_{nR} = \mathrm{diag}\{2^{-nR}, 1, \ldots, 1\}, \qquad W_{c,nR} = [V(1 + 2^{-nR}) + W, W, \ldots, W]^T \tag{16}$$

Noting that $V(1 + 2^{-nR}) \le 2V$, the above recursion is bounded if and only if $\bar F D_{nR}$ is stable. It follows that $\bar F D_{nR}$ is stable for all $R > R^f_n$, where $R^f_n = \frac{1}{n}\operatorname{argmin}_r\left\{\lambda\left(\bar F D_{nr}\right) < 1\right\}$.

Now consider tracking (1) over a noisy channel. Intuitively, the desired anytime exponent is determined only by the growth of the tracking error in the absence of measurements, which by Lemma 7.1 is governed by $\bar F$. This is independent of whether or not the control input is available at the observer. This explains the value of $\beta_n = \beta^f_n = \frac{2}{n}\log_2\lambda(\bar F)$ in Theorems 5.1 and 5.2.

Fig. 2. (a) Open loop trajectory. (b) Trajectory after closing the loop. (Axes: $x_t$ versus time $t$.)

Fig. 3. The control performance of the code ensemble improves as the rate decreases. (Horizontal axis: fraction of codes; vertical axis: $\sup_{t<100} E|x_t|$; curves for $n = 15$: $k = 6$, $n\beta = 1.7$; $k = 5$, $n\beta = 2.5$; $k = 4$, $n\beta = 3.5$; $k = 3$, $n\beta = 4.8$.)
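The boundedness condition on the recursion (16) can be illustrated with a short numerical sketch. It iterates $\Delta_{t+1} = \bar F D_{k}\Delta_t + W_{c,k}$ for a given number of bits $k = nR$ and finds the smallest integer $k$ for which the largest eigenvalue of $\bar F D_k$ is below 1. Here $\bar F$ is taken to be $F$ with entries replaced by absolute values, as in the proof of Lemma 7.1; the function names and the values $V = W = 5$ are assumptions made for illustration, and the coefficients are those of Example 2.

```python
def recursion_step(widths, a_abs, k, V, W):
    """One step of Delta_{t+1} = Fbar D_k Delta_t + W_c, following (16)."""
    m = len(a_abs)
    shrink = 2.0 ** (-k)
    first = shrink * widths[0]  # D_k scales only the first width
    nxt = []
    for i in range(m):
        carry = widths[i + 1] if i + 1 < m else 0.0
        noise = W + (V * (1.0 + shrink) if i == 0 else 0.0)  # W_c as written in (16)
        nxt.append(a_abs[i] * first + carry + noise)
    return nxt


def run(a_abs, k, V, W, steps=200):
    """Iterate the recursion from an arbitrary starting width vector."""
    widths = [1.0] * len(a_abs)
    for _ in range(steps):
        widths = recursion_step(widths, a_abs, k, V, W)
    return widths


def largest_eigenvalue(a_abs, k, tol=1e-12):
    """Positive root of x^m - 2^(-k) * sum_i |a_i| x^(m-i), found by bisection."""
    c = [2.0 ** (-k) * a for a in a_abs]
    m = len(c)

    def g(x):
        return x ** m - sum(c[i] * x ** (m - 1 - i) for i in range(m))

    lo, hi = 0.0, 1.0 + max(c)  # g(lo) <= 0 and g(hi) > 0
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if g(mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


def min_bits(a_abs, k_max=64):
    """Smallest integer k with largest eigenvalue of Fbar D_k below 1, or None."""
    for k in range(k_max + 1):
        if largest_eigenvalue(a_abs, k) < 1.0:
            return k
    return None


a_abs = [2.0, 0.25, 0.5]               # |a_i| from Example 2
print(min_bits(a_abs))                 # smallest k that keeps the widths bounded
print(run(a_abs, k=2, V=5.0, W=5.0))   # settles to a fixed point
print(run(a_abs, k=1, V=5.0, W=5.0))   # grows without bound
```

For a nonnegative matrix of this companion form the largest eigenvalue is the unique positive root of the characteristic polynomial, which is why a scalar bisection suffices here.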
Making the argument for the anytime exponent rigorous is simple and has not been presented here due to space limitations.

VIII. SIMULATIONS

We present two examples, one scalar and one vector, and stabilize them over a binary erasure channel with erasure probability 0.3. The number of channel uses per measurement is fixed to $n = 15$. In both cases, time invariant codes $H_{15,R} \in \mathbb{TZ}_{\frac{1}{2}}$, for an appropriate rate $R$, were randomly generated and decoded using Algorithm 1.

A. Example 1

Consider stabilizing the scalar unstable process obtained by setting $m = 1$, $-a_1 = 2$ in (1) with $w_t$ and $v_t$ being uniform on $[-30, 30]$ and $[-1, 1]$ respectively. Using Theorem 5.1, in order to stabilize $x_t$ in the first moment sense, one needs a code with exponent $\beta \ge \frac{1}{n} = 0.0667$ and $k = nR \ge 1$. Using Theorem 3.2, causal linear codes exist for

$$\beta < \beta^* = H^{-1}(1 - R)\left(\log_2\frac{1}{\zeta} + \log_2\left(2^{1-R} - 1\right)\right)$$

A quick calculation shows that for $k = 6$, $n = 15$, $\beta^* = \frac{1.1413}{n} = 0.0761 > 0.0667$. The observer does not have access to the control inputs, so a $2^k$-regular lattice quantizer with bin width $\delta$ was used to quantize the measurements. The control input is just $u_t = -\hat{x}_{t|t-1}$. The four curves in Fig. 3 correspond to the following sets of values: ($k = 3$, $\delta = 16$), ($k = 4$, $\delta = 8$), ($k = 5$, $\delta = 4$) and ($k = 6$, $\delta = 2$). Fig. 2 shows a sample path of the above process with $k = 3$, $\delta = 16$, $L = 2^3$ before and after closing the loop; it is clear that the plant has been stabilized. By easing up on the rate $R$, i.e., by performing coarser quantization but better error correction, the control performance of the code ensemble improves. This is demonstrated in Fig. 3. For each value of $k$ from 3 to 6, 1000 time invariant codes were generated at random from $\mathbb{TZ}_{\frac{1}{2}}$.
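The exponent $\beta^*$ from Theorem 3.2 can be evaluated with a few lines of code. The sketch below rests on two assumptions that are not stated explicitly in this section: that $H^{-1}(1-R)$ multiplies the sum of both logarithms, and that $\zeta$ equals the erasure probability 0.3. Under these assumptions the computed values of $n\beta^*$ for $k = 3, 4, 5$ agree with the $n\beta$ values listed in the legend of Fig. 4. The function names are illustrative.

```python
import math


def binary_entropy(p):
    """Binary entropy H(p) in bits."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def inverse_entropy(h, tol=1e-12):
    """Solution p in [0, 1/2] of H(p) = h, found by bisection (H is increasing there)."""
    lo, hi = 0.0, 0.5
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if binary_entropy(mid) < h:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


def beta_star(R, zeta):
    """Assumed grouping: H^{-1}(1 - R) * (log2(1/zeta) + log2(2^(1-R) - 1))."""
    return inverse_entropy(1.0 - R) * (
        math.log2(1.0 / zeta) + math.log2(2.0 ** (1.0 - R) - 1.0)
    )


n = 15
zeta = 0.3  # assumption: zeta is the erasure probability of the channel
for k in (3, 4, 5):
    print(k, round(n * beta_star(k / n, zeta), 4))
```

The exponent decreases as $k$ grows, which is the rate versus reliability tradeoff that the simulations explore.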
Each such code was used to control the process above over a time horizon of $T = 100$. The $x$-axis denotes the proportion of codes for which $\sup_{t<100} E|x_t|$ is below a prescribed value, e.g., with $k = 6$, $n = 15$, $\sup_{t<100} E|x_t|$ was less than 200 for 50% of the codes while with $k = 3$, $n = 15$, this fraction increases to more than 95%. The $y$-axis has been capped at 1000 for clarity. This shows that one can trade off utilization of communication resources against control performance.

Fig. 4. The CDF of the LQR costs for different realizations of the codes. (Horizontal axis: fraction of codes; vertical axis: $\frac{1}{2T}\sum_{t=0}^{T} E\left(\|x_t\|^2 + \|u_t\|^2\right)$, $T = 100$; curves for $n = 15$: $k = 2$, $n\beta = 6.3162$; $k = 3$, $n\beta = 4.7593$; $k = 4$, $n\beta = 3.5329$; $k = 5$, $n\beta = 2.5279$.)

B. Example 2

Consider a 3-dimensional unstable system (1) with $a_1 = -2$, $a_2 = -0.25$, $a_3 = 0.5$ and $B = I_3$. Each component of $w_t$ and $v_t$ is generated i.i.d. $\mathcal{N}(0, 1)$ and truncated to $[-2.5, 2.5]$. The eigenvalues of $F$ are $\{2, -0.5, 0.5\}$ while $\lambda(\bar F) = 2.215$. The observer has access to the control inputs and we use the hypercuboidal filter outlined in Section VII. Using Theorem 5.2, the minimum required bits and exponent are given by $k = nR \ge 2$ and $n\beta \ge 2\log_2 2.215 = 2.29$. The control input is $u_t = -\hat{x}_{t|t-1}$. For $k \le 5$, $n\beta \ge 2.53$, so this requirement is met. The competition between the rate and the exponent in determining the LQR cost is evident when we look at the LQR cost $\frac{1}{200}\sum_{t=1}^{100} E\left(\|x_t\|^2 + \|u_t\|^2\right)$ in Fig. 4. When $k = 2$, the error exponent $n\beta = 6.3$ is large. So, at any time $t$, the decoder decodes all the source bits $\{b_\tau\}_{\tau \le t-1}$ with high probability. Hence, the limiting factor on the LQR cost is the resolution that the source bits $b_t$ provide on the measurements. When $k = 5$, on the other hand, the measurements are available almost losslessly but the decoder makes errors in decoding the source bits.
Fig. 4 suggests that the best choice of rate is $R = 3/15 = 0.2$.

IX. CONCLUSION

We presented an explicit construction of anytime reliable tree codes with efficient encoding and decoding over erasure channels. We also gave several sufficient conditions on the rate and reliability required of the tree code to guarantee stability, and argued that they are asymptotically tight. Although the work described here is a major step towards controlling plants over noisy channels, there are many issues to study and resolve. The tradeoff between rate and reliability (how finely to quantize the measurements vs. how much error protection to use) to optimize system performance (such as an LQR cost) remains to be studied, as well as how best to quantize and generate control signals. Furthermore, the problem of constructing efficiently decodable tree codes for other classes of channels, such as the BSC and the AWGNC, remains open.

REFERENCES

[1] J. Baillieul and P. J. Antsaklis, "Control and communication challenges in networked real-time systems," Proceedings of the IEEE, vol. 95, no. 1, pp. 9-28, Jan 2007.
[2] L. J. Schulman, "Coding for interactive communication," Information Theory, IEEE Transactions on, vol. 42, no. 6, pp. 1745-1756, 1996.
[3] G. N. Nair and R. J. Evans, "Stabilizability of stochastic linear systems with finite feedback data rates," SIAM Journal on Control and Optimization, vol. 43, no. 2, pp. 413-436, 2005.
[4] Alexey S. Matveev and Andrey V. Savkin, Estimation and Control over Communication Networks (Control Engineering), Birkhauser, 2007.
[5] Anant Sahai and Sanjoy Mitter, "The necessity and sufficiency of anytime capacity for stabilization of a linear system over a noisy communication link - part I: Scalar systems," Information Theory, IEEE Transactions on, vol. 52, no. 8, pp. 3369-3395, 2006.
[6] R. Ostrovsky, Y. Rabani, and L. J. Schulman, "Error-correcting codes for automatic control," Information Theory, IEEE Transactions on, vol. 55, no. 7, pp. 2931-2941, 2009.
[7] Serdar Yuksel, "A random time stochastic drift result and application to stochastic stabilization over noisy channels," Communication, Control, and Computing, 2009. Allerton 2009. 47th Annual Allerton Conference on, pp. 628-635, 2009.
[8] T. Simsek, R. Jain, and P. Varaiya, "Scalar estimation and control with noisy binary observations," Automatic Control, IEEE Transactions on, vol. 49, no. 9, pp. 1598-1603, 2004.
[9] C. E. Shannon, "A mathematical theory of communication," Bell System Technical Journal, vol. 27, pp. 379-423 and 623-656, July and Oct 1948.
[10] Ravi Teja Sukhavasi and Babak Hassibi, "Linear error correcting codes with anytime reliability," http://arxiv.org/abs/1102.3526, 2011.
[11] P. Minero, M. Franceschetti, S. Dey, and G. N. Nair, "Data rate theorem for stabilization over time-varying feedback channels," Automatic Control, IEEE Transactions on, vol. 54, no. 2, 2009.
[12] F. Schweppe, "Recursive state estimation: Unknown but bounded errors and system inputs," Automatic Control, IEEE Transactions on, vol. 13, no. 1, Feb. 1968.
[13] Osman Güler and Filiz Gürtuna, "The extremal volume ellipsoids of convex bodies, their symmetry properties, and their determination in some special cases," arXiv, vol. math.MG, Sep 2007.
[14] A. Sluis, "Upperbounds for roots of polynomials," Numerische Mathematik, vol. 15, no. 3, pp. 250-262, 1970.

APPENDIX

A.
The Minimum Volume Ellipsoid

Lemma A.1 (Theorem 6.1 [13]): The minimum volume ellipsoid $E(\hat{P}, c)$ covering

$$\left\{x \in \mathbb{R}^m \;\middle|\; x \in E(P, 0),\ \gamma\sqrt{h^T P h} \le \langle h, x\rangle \le \delta\sqrt{h^T P h}\right\}$$

where $|\delta| \ge |\gamma|$, is given by

$$\hat{P} = bP - (b - a)\frac{P h h^T P}{h^T P h}, \qquad c = \xi\frac{P h}{\sqrt{h^T P h}} \tag{17}$$

where

1) If $\gamma\delta < -\frac{1}{m}$, then $\xi = 0$, $a = b = 1$.
2) If $\gamma + \delta = 0$ and $\gamma\delta > -\frac{1}{m}$, then $\xi = 0$, $a = m\delta^2$, $b = \frac{m(1 - \delta^2)}{m - 1}$.
3) If $\gamma + \delta \ne 0$ and $\gamma\delta > -\frac{1}{m}$, then

$$\xi = \frac{m(\gamma + \delta)^2 + 2(1 + \gamma\delta) - \sqrt{D}}{2(m + 1)(\gamma + \delta)}, \qquad a = m(\xi - \gamma)(\xi - \delta), \qquad b = \frac{a - a\gamma^2}{a - (\xi - \gamma)^2}$$

where $D = m^2(\delta^2 - \gamma^2)^2 + 4(1 - \gamma^2)(1 - \delta^2)$.

If $|\delta| < |\gamma|$, change $x$ to $-x$ and apply the above result.

B. Upper bounds on $R^f_n$ and $R^f_{e,n}$

There are several bounds in the mathematics literature on the roots of a polynomial in terms of its coefficients, a standard and near-optimal one being Fujiwara's bound, which we state below.

Lemma A.2 (Fujiwara's Bound): Consider the monic polynomial with complex coefficients $f(x) = x^m + c_1 x^{m-1} + \ldots + c_m$ and let $\lambda(f)$ denote the largest root in magnitude. Then

$$\lambda(f) \le K(f) = 2\max\left\{|c_1|, |c_2|^{\frac{1}{2}}, \ldots, |c_{m-1}|^{\frac{1}{m-1}}, \left|\frac{c_m}{2}\right|^{\frac{1}{m}}\right\}$$

The upper bounds on $R^f_n$ and $R^f_{e,n}$ can now be proved as follows. The characteristic polynomial of $\bar F D_{nr}$ is given by $f_{c,nr}(x) = x^m - 2^{-nr}\sum_{i=1}^m |a_i| x^{m-i}$. Applying Lemma A.2, if the rate $R$ is larger than the smallest value of $r$ that makes $K(f_{c,nr}) < 1$, then $\lambda(\bar F D_{nR}) \le K(f_{c,nR}) < 1$, making $\bar F D_{nR}$ stable. The bound for $R^f_n$ is then immediate, while the bound for $R^f_{e,n}$ follows by noting that the characteristic polynomial of $\bar F D_{m,nr}$ is $x^m - \sqrt{m}\,2^{-nr}\sum_{i=1}^m \theta^{i-1}|a_i| x^{m-i}$.

C. The Limiting Case

Let $F$ be any $m$-dimensional square matrix and let $f(x)$ denote its characteristic polynomial.
Then the following bounds hold (for details see [14]):

$$\lambda(F) \le \lambda(\bar F) \le \frac{\lambda(F)}{\sqrt[m]{2} - 1}, \qquad K(f) \le 2\lambda(F) \tag{18}$$

The proof for $\lim_{n\to\infty}\beta^f_n = \beta^*$ follows easily from the first bound in (18). To emphasize the fact that $F$ depends on $n$, we write it as $F_n$ and $a_i$ as $a_{i,n}$. By the hypothesis of the Lemma, the eigenvalues of $F_n$ are of the form $\{\mu_i^n\}_{i=1}^m$. Recall that the characteristic polynomial of $F_n$ is given by $f_n(x) = x^m + a_{1,n}x^{m-1} + \ldots + a_{m,n}$. Let $\mathcal{I}_u \triangleq \{i \mid |\mu_i| \ge 1\}$; then the following is easy to prove:

$$\lim_{n\to\infty}\frac{|a_{i,n}|}{\left|a_{|\mathcal{I}_u|,n}\right|} = 0,\ i \ne |\mathcal{I}_u|, \qquad \lim_{n\to\infty}\frac{1}{n}\log_2\left|a_{|\mathcal{I}_u|,n}\right| = \sum_{i\in\mathcal{I}_u}\log_2|\mu_i| \tag{19}$$

We will prove that $R_n$ and $R^f_n$ converge to $R^*$; the proof for $R_{e,n}$ and $R^f_{e,n}$ is similar. From (19), it is obvious that $\lim_{n\to\infty}R_n = \sum_{i\in\mathcal{I}_u}\log_2|\mu_i|$. It remains to show that the limit holds for $R^f_n$. The characteristic polynomial of $\bar F D_{nr}$ is given by $f_{c,nr}(x) = x^m - 2^{-nr}\sum_{i=1}^m |a_i| x^{m-i}$. From (18) and Lemma A.2, we have $\frac{1}{2}K(f_{c,nr}) \le \lambda(\bar F D_{nr}) \le K(f_{c,nr})$. Define $R^f_{n,1} \triangleq \operatorname{argmin}_r\left\{\frac{1}{2}K(f_{c,nr}) \le 1\right\}$ and $R^f_{n,2} \triangleq \operatorname{argmin}_r\left\{K(f_{c,nr}) \le 1\right\}$. Then, it is obvious that $R^f_{n,1} \le R^f_n \le R^f_{n,2}$. Using Lemma A.2, some simple algebra and taking the limit $n \to \infty$, we get

$$\lim_{n\to\infty}R^f_n = \lim_{n\to\infty}\frac{1}{n}\log_2\max\left\{\frac{|a_{m,n}|}{2},\ \max_{1\le i\le m-1}|a_{i,n}|\right\}$$

Combining this with (19), we get the desired result, i.e., $\lim_{n\to\infty}R^f_n = \sum_{i\in\mathcal{I}_u}\log_2|\mu_i|$.
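The root bounds used in this appendix can be illustrated on the polynomial of Example 2, $f(x) = x^3 - 2x^2 - 0.25x + 0.5$, whose roots are $\{2, -0.5, 0.5\}$. The sketch below evaluates Fujiwara's bound $K(f)$ from Lemma A.2 and the positive root of $x^m - \sum_i |c_i| x^{m-i}$. Identifying the latter with the middle quantity in the first bound of (18) is an assumption, motivated by the fact that it reproduces the value 2.215 quoted in Example 2; the function names are illustrative.

```python
def poly_value(c, x):
    """Evaluate f(x) = x^m + c[0] x^(m-1) + ... + c[m-1]."""
    m = len(c)
    return x ** m + sum(c[i] * x ** (m - 1 - i) for i in range(m))


def fujiwara_bound(c):
    """K(f) = 2 max{|c_1|, |c_2|^(1/2), ..., |c_{m-1}|^(1/(m-1)), |c_m / 2|^(1/m)}."""
    m = len(c)
    terms = [abs(c[i]) ** (1.0 / (i + 1)) for i in range(m - 1)]
    terms.append(abs(c[m - 1] / 2.0) ** (1.0 / m))
    return 2.0 * max(terms)


def abs_coefficient_root(c, tol=1e-12):
    """Unique positive root of x^m - sum_i |c_i| x^(m-i), found by bisection."""
    neg_abs = [-abs(ci) for ci in c]
    lo, hi = 0.0, 1.0 + max(abs(ci) for ci in c)
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if poly_value(neg_abs, mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


c = [-2.0, -0.25, 0.5]   # coefficients a_1, a_2, a_3 of Example 2
roots = [2.0, -0.5, 0.5]  # eigenvalues of F as stated in Example 2
lam = max(abs(r) for r in roots)
lam_bar = abs_coefficient_root(c)
K = fujiwara_bound(c)

print(all(abs(poly_value(c, r)) < 1e-12 for r in roots))
print(lam, round(lam_bar, 3), K)
print(lam <= lam_bar <= lam / (2.0 ** (1.0 / len(c)) - 1.0))
print(lam <= K <= 2.0 * lam)
```

For this polynomial the upper bound $K(f) \le 2\lambda(F)$ holds with equality, so it cannot be tightened in general.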
