Improved RIP Analysis of Orthogonal Matching Pursuit

Orthogonal Matching Pursuit (OMP) has long been considered a powerful heuristic for attacking compressive sensing problems; however, its theoretical development is, unfortunately, somewhat lacking. This paper presents an improved Restricted Isometry …

Authors: Ray Maleh

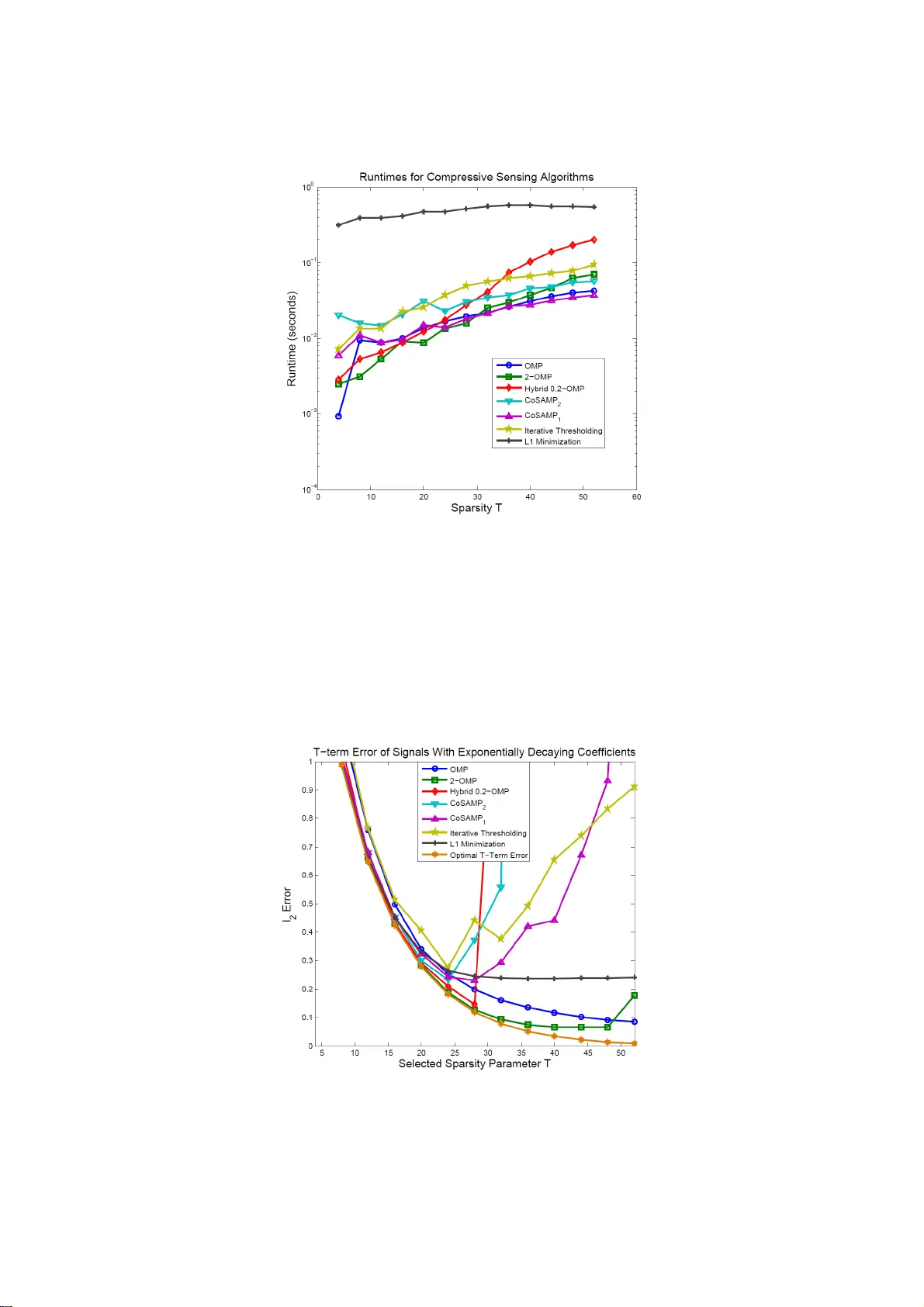

Ray Maleh^a
^a L-3 Communications Mission Integration Division, Greenville, TX
10001 Jack Finney Blvd., Greenville, TX 75402
Phone Number: 903-408-2605
Fax Number: 903-408-9036
Email: Ray.Maleh@L-3com.com

Abstract: Orthogonal Matching Pursuit (OMP) has long been considered a powerful heuristic for attacking compressive sensing problems; however, its theoretical development is, unfortunately, somewhat lacking. This paper presents an improved Restricted Isometry Property (RIP) based performance guarantee for $T$-sparse signal reconstruction that asymptotically approaches the conjectured lower bound given in Davenport et al. We also further extend the state-of-the-art by deriving reconstruction error bounds for the case of general non-sparse signals subjected to measurement noise. We then generalize our results to the case of K-fold Orthogonal Matching Pursuit (KOMP). We finish by presenting an empirical analysis suggesting that OMP and KOMP outperform other compressive sensing algorithms in average case scenarios. This turns out to be quite surprising since RIP analysis (i.e. worst case scenario) suggests that these matching pursuits should perform roughly $T^{0.5}$ times worse than convex optimization, CoSAMP, and Iterative Thresholding.

Keywords: compressive sensing, sparse approximation, orthogonal matching pursuit, restricted isometry property, greedy algorithms, error bounds.

1. Introduction

During the last decade, Orthogonal Matching Pursuit (OMP) has become an important component of the toolbox of any mathematician or engineer working in the field of compressive sensing (CS). The algorithm originated as a statistical method for projecting multi-dimensional data onto interesting lower dimensional spaces [14].
It was then introduced to the sparse approximation world in its non-orthogonal form, Matching Pursuit, by Mallat et al. in [22]. Today, OMP is a highly celebrated algorithm with applications in medical imaging [18],[21], synthetic aperture radar (SAR) [2], wireless multi-path channel estimation [1], and others.

For the unfamiliar reader, OMP is a greedy alternative to convex optimization (see [6] and [10]) that solves the under-determined linear equation:

$$\Phi x = y \qquad (1.1)$$

where the vector $x$ is a sparse (or highly compressible) signal, the matrix $\Phi$ is a short, fat measurement matrix, and the vector $y$ is a small set of linear measurements of the signal. While being a powerful heuristic, OMP suffers from the lack of a decent theoretical analysis. Up until recently, only sparse approximation performance guarantees have been derived for OMP [15],[27]. These results depend on the coherence (or cumulative coherence) of the matrix $\Phi$. Furthermore, these results only bound the error in approximating the measurements by an estimate, produced by OMP, which has a sparse representation in the column span of $\Phi$. In compressive sensing, we are primarily concerned with bounds on the error in the recovered signal itself, which do not trivially follow from bounds on the measurement error.

As stated earlier, OMP is an alternative to convex optimization that solves the basic CS problem $\Phi x = y$. While convex programs tend to be slow, their solutions enjoy powerful error bounds based on a restricted isometry property (RIP) [7]. Needell and Tropp [23] later showed that CoSAMP, an algorithm that is inherently similar to OMP, does possess similar RIP-based guarantees. The high-level reasoning for this is that, like convex programming, CoSAMP works globally by simultaneously trying to identify all the correct non-zero entries of a sparse vector at each iteration. On the other hand, OMP works locally by attempting to select one non-zero entry of $x$ per iteration.
In [11], an RIP-based condition is derived that guarantees OMP's ability to recover a $T$-sparse vector. Also, a lower bound on the best possible RIP for OMP is suggested without proof. The main result of [11] is slightly tightened in [18]. The paper [19] offers an asymptotic improvement over [18], but this comes at the expense of additional coherency assumptions and the incorporation of large scaling constants. This work will expand and improve the results in [11] and [18] without the additional conditions imposed in [19]. In Section 3, we first derive a strong RIP-based result for strictly $T$-sparse signals that asymptotically approaches the lower bound conjectured in [11]. Then we deduce an error bound that describes OMP's ability to estimate non-sparse signals in the presence of measurement noise. In Section 4, we generalize these results to the case of K-fold Orthogonal Matching Pursuit (KOMP), where $K$ entries of $x$ are recovered at every iteration. Section 5 features an empirical comparison of OMP, KOMP, and other popular CS algorithms. We will show that, despite the fact that the RIP implies that OMP performs $\sqrt{T}$ times worse than convex optimization, OMP and KOMP still outperform other methods in average case scenarios.

2. Preliminaries

Throughout this paper, we will employ the following notational conventions. We let $x$ denote a signal of interest. We say that $x$ is $T$-sparse if it consists of at most $T$ non-zero components. More formally, we can write that $\|x\|_0 \le T$, where $\|\cdot\|_0$ denotes the quasi-norm that counts the number of non-zero entries in its argument. We typically let denote the support of $x$. We define the norm of a signal as follows: (2.1)

It is assumed that we do not have direct access to the signal $x$. Instead, we have access to a set of linear measurements of $x$ that take the form:

$$y = \Phi x \qquad (2.2)$$

where $\Phi$ is a measurement matrix and the signal $y$ contains the actual measurements.
Our goal is to solve for $x$ given knowledge of only $y$ and $\Phi$. In practical applications, $\Phi$ is often either a random sub-Gaussian matrix or a sub-matrix of a discrete Fourier transform matrix [25]; however, in theory, $\Phi$ can be any matrix provided it satisfies a restricted isometry property, which is defined as follows:

Definition 1: A measurement matrix $\Phi$ satisfies a restricted isometry property (RIP) of order $T$ if there exists a constant $\delta_T$ such that (2.3) for all signals $x$ that are $T$-sparse. The constant $\delta_T$ is called the restricted isometry number of order $T$.

An equivalent formulation of the RIP is that, for every indexing set of size $T$, the sub-matrix generated by selecting the corresponding columns of $\Phi$ satisfies: (2.4)

It is immediately clear that, for a given measurement matrix $\Phi$, the restricted isometry numbers form an increasing sequence. Furthermore, Needell et al. [23] show that the growth of these numbers must be sub-linear, i.e. (2.5)

The kernel of the measurement matrix $\Phi$ induces a quotient space consisting of the cosets of that kernel. It is a straightforward exercise to show that if $\delta_{2T} < 1$, then each coset can contain at most one $T$-sparse signal. Thus, the generally underdetermined system (2.2) is well-defined if $x$ is $T$-sparse and $\delta_{2T} < 1$.

Naïve methods for solving (2.2) involve slow combinatorial searches over all possible submatrices of $\Phi$. This becomes an intractable problem for large problem sizes. Candes [7] showed that under the tighter restricted isometry condition $\delta_{2T} < \sqrt{2} - 1$, the system (2.2) can be solved via the convex optimization problem:

$$\min_{z} \|z\|_1 \quad \text{subject to} \quad \Phi z = y \qquad (2.6)$$

If $x$ is not $T$-sparse or $y$ is corrupted by noise, then the constraint in (2.6) can be formulated as $\|\Phi z - y\|_2 \le \epsilon$ for some parameter $\epsilon$. In this case it can be shown that the estimate satisfies an error bound of the form: (2.7)

The downside of the minimization problem shown in (2.6) is that it runs only in polynomial time, which can be slow in practice.
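To make Definition 1 and its sub-matrix formulation concrete, the following is a minimal brute-force sketch that computes a restricted isometry number for a tiny matrix. It assumes the common squared-norm form of the RIP inequality, $(1-\delta_T)\|x\|_2^2 \le \|\Phi x\|_2^2 \le (1+\delta_T)\|x\|_2^2$, which may differ in detail from (2.3); the function name `restricted_isometry_constant` is hypothetical. The search is combinatorial, so it is only usable for very small examples.

```python
from itertools import combinations

import numpy as np


def restricted_isometry_constant(Phi, T):
    """Brute-force restricted isometry number of order T.

    Assumes the squared-norm RIP form: for every set of T columns, the
    eigenvalues of the Gram matrix of those columns lie in
    [1 - delta, 1 + delta]. Returns the smallest such delta.
    """
    n = Phi.shape[1]
    delta = 0.0
    for support in combinations(range(n), T):
        sub = Phi[:, list(support)]
        # eigvalsh returns the eigenvalues of a symmetric matrix in ascending order
        eig = np.linalg.eigvalsh(sub.T @ sub)
        delta = max(delta, abs(eig[0] - 1.0), abs(eig[-1] - 1.0))
    return delta


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Illustrative 12 x 16 Gaussian matrix with columns of unit expected norm
    Phi = rng.standard_normal((12, 16)) / np.sqrt(12)
    for order in (1, 2, 3):
        print(order, restricted_isometry_constant(Phi, order))
```

Because every set of $T$ columns is contained in some set of $T+1$ columns, the values printed for increasing orders are non-decreasing, which matches the remark above that the restricted isometry numbers form an increasing sequence.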
There are faster algorithms that solve the same problem. One classical greedy algorithm that solves the compressive sensing problem is Orthogonal Matching Pursuit (OMP). The OMP algorithm, which is also known as Forward Stepwise Regression in the data mining and statistical learning communities [17], is shown below.

TABLE I
ORTHOGONAL MATCHING PURSUIT

INPUTS:
- Observations $y$
- Measurement matrix $\Phi$
- Number of iterations $T$ (typically equal to the sparsity level)

OUTPUTS:
- $T$-sparse estimate of $x$
- Residual signal
- Set of selected support indices

PROCEDURE:
- Initialize the residual to the observations $y$ and the indexing set to the empty set.
- For each iteration from 1 to $T$ {
  - Find the column of $\Phi$ that maximizes the correlation with the residual.
  - Add the index of that column to the indexing set.
  - Compute the projection of the observations onto the columns of $\Phi$ indexed by the indexing set.
  - Define the new residual as the difference between the observations and this projection.
  }
- Generate the estimate of $x$ from the coefficients of the final projection.

A popular generalization of Orthogonal Matching Pursuit is K-fold Orthogonal Matching Pursuit (KOMP) [15]. This procedure is essentially identical to OMP except for the fact that $K$ columns of $\Phi$ are selected per iteration. Thus, at every step, KOMP increments its indexing set by adding the indices corresponding to the $K$ largest correlations between the columns of $\Phi$ and the residual. Observe that OMP is a special case of KOMP when $K = 1$. While KOMP is clearly faster than OMP, a surprising result of this paper is that KOMP can sometimes be more accurate than OMP as well.

Up until recently, the theoretical development of Orthogonal Matching Pursuit has been limited. In [16], a non-uniform "per signal" performance guarantee, which assumes a Gaussian random measurement ensemble, is derived. Other works, such as [15],[27], etc., develop results based on dictionary coherence and/or cumulative coherence.
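The OMP procedure of Table I can be sketched in a few lines of NumPy. This is a minimal illustration, not the author's implementation: the names `omp`, `Phi`, `y`, and `T` are assumptions, the projection is computed with a generic least-squares solve, and the demonstration uses an orthonormal matrix only so that the outcome is easy to check by hand.

```python
import numpy as np


def omp(Phi, y, T):
    """Sketch of Orthogonal Matching Pursuit (one atom per iteration).

    Returns the T-sparse estimate, the final residual, and the list of
    selected column indices.
    """
    n = Phi.shape[1]
    residual = np.asarray(y, dtype=float).copy()
    support = []
    coef = np.zeros(0)
    for _ in range(T):
        # Correlate every column with the current residual
        correlations = np.abs(Phi.T @ residual)
        if support:
            correlations[support] = -1.0  # never re-select a chosen column
        support.append(int(np.argmax(correlations)))
        # Least-squares projection of y onto the selected columns
        coef, *_ = np.linalg.lstsq(Phi[:, support], y, rcond=None)
        residual = y - Phi[:, support] @ coef
    x_hat = np.zeros(n)
    x_hat[support] = coef
    return x_hat, residual, support


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Orthonormal columns: correlations equal the remaining coefficients
    Phi, _ = np.linalg.qr(rng.standard_normal((16, 16)))
    x = np.zeros(16)
    x[[2, 7, 11]] = [3.0, -2.0, 1.5]
    x_hat, residual, support = omp(Phi, Phi @ x, T=3)
    print(sorted(support), np.linalg.norm(x - x_hat))
```

Re-solving the least-squares problem over all selected columns at every iteration is what distinguishes OMP from plain Matching Pursuit: the residual stays orthogonal to every atom chosen so far.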
The seminal papers [3] and [23] demonstrate that the restricted isometry property can be used in the analysis of the related greedy algorithms Iterative Thresholding and CoSAMP (Compressive Sensing Matching Pursuit). These works motivated the theoretical thrust to determine whether OMP enjoys an RIP-based performance guarantee as well. In [11], Davenport et al. demonstrate that OMP can successfully recover any $T$-sparse signal provided that the measurement matrix satisfies the RIP: (2.8) The authors further allude to the unachievable lower bound . In [18], Liu et al. improve this result slightly and obtain: (2.9) Along these same lines, Lipshitz [19] shows that, with additional stringent dictionary coherence constraints, the result can be asymptotically improved to: (2.10) for some very large constant and some very small constant . Unfortunately, because of the magnitudes of these constants, the benefit of this result will be very difficult to realize in practical compressive sensing problems.

In Section 3, we improve upon the results (2.8) and (2.9) and show, without additional coherence assumptions, that OMP can recover any $T$-sparse signal provided (2.11) which is a near-optimal figure. In addition, we show that for a signal $x$ that is not $T$-sparse and/or has measurements that are corrupted by noise, OMP will obtain an estimate of $x$ satisfying: (2.12) where the bound is expressed in terms of the measurement noise and the optimal $T$-sparse estimate of $x$, i.e. $x$ truncated to its $T$ strongest entries. In Section 4, we extend these results to the case of KOMP and compare the theoretical performance of OMP and KOMP. In particular, we illustrate situations in which KOMP performs better than OMP. In Section 5, we augment this discussion by using empirical methods to compare OMP, KOMP, and the various other popular CS algorithms of the day.

3. Regular Orthogonal Matching Pursuit

We begin by citing two lemmas that will be used repeatedly throughout this analysis:

Lemma 1: Let $x$ be a $T$-sparse signal with support set . Let be any subset of such that . Let $\Phi$ be a measurement matrix with restricted isometry numbers . Then the following two properties are true: (3.1) and (3.2)

Lemma 2: Let $\Phi$ be a measurement matrix with restricted isometry number . Let $x$ be any signal. Then (3.3)

Both lemmas are proved in [23]. The first lemma is an immediate consequence of the fact that $\Phi$ is nearly unitary with respect to $T$-sparse signals. The second lemma extends the restricted isometry energy bounds to non-sparse signals. With these lemmas in mind, we are now prepared to develop a sufficient condition under which Orthogonal Matching Pursuit will recover any $T$-sparse signal from its measurements:

Theorem 1: Suppose that $\Phi$ is a measurement matrix whose RIP constant satisfies (3.4) Then, OMP will recover any $T$-sparse signal $x$ from its measurements $y = \Phi x$.

Proof. Let $x$ be any $T$-sparse signal with support . At iteration t, suppose that OMP has only selected correct atoms. Let be the current residual. Then where is also supported on . Now observe that, by Lemma 1 and the fact that the RIP constants are increasing in , (3.5) This implies that (3.6) Now let be any element not in . Then observe that (3.7) This implies that (3.8) OMP will recover the next atom correctly if (3.9) which will happen if (3.10) This is equivalent to (3.4). □

This sufficient condition is significantly stronger than the one demonstrated in [11]. In fact, it is very close to the unachievable bound suggested in the same work. Thus, our result is near-optimal.

We next extend this result to more general signals. Certainly, if $x$ is not $T$-sparse, then it is impossible for OMP to recover $x$ perfectly in $T$ iterations.
However, we are interested in determining how close OMP's $T$-term approximation error is to that of the optimal $T$-term representation of $x$. The following theorem answers this precise question:

Theorem 2: Let $\Phi$ be a measurement matrix that satisfies the RIP shown in (3.4). Let $x$ be any signal with optimal $T$-term approximation . Let and let . Suppose OMP has noisy measurements of the form where . Then, after $T$ iterations, OMP will recover an estimate of $x$ that satisfies: (3.11) where, for reasonable RIP numbers, grows asymptotically like .

Proof. First suppose that at iteration t, OMP has selected only atoms indexed in . At iteration t + 1, OMP will select another atom from provided the greedy selection condition (3.12) is satisfied. Now rewrite the residual as . Here, is the coefficient vector of the projection of onto the currently selected atoms. Then one can bound the numerator of (3.12) by: (3.13) (3.14) (3.15) On the other hand, the denominator can be bounded from below by: (3.16) (3.17) (3.18) (3.19) A sufficient condition for the next atom to be selected from is that the numerator is less than the denominator. This is guaranteed if (3.20) We can rearrange terms to obtain: (3.21) Now let denote the first iteration where (3.21) does not hold. By definition of OMP, . We have: (3.22) (3.23) where which has cardinality at most . It is possible to further bound the left hand side by: (3.24) (3.25) (3.26) (3.27) where the second inequality comes from the fact that in OMP, the residual is always decreasing in magnitude regardless of whether the selected atoms are from or not. Since (3.21) does not hold for , it follows that: (3.28) where (3.29) We further bound by: (3.30) (3.31) (3.32) Finally, let to obtain that (3.33) (3.34) as was to be shown.
□

The fact that grows asymptotically like should be no surprise: it is already well known [27] that, under mild coherence constraints, the signal observations obey the bound: (3.35) where , , and is the optimal $T$-term representation of $y$ using the columns of $\Phi$. We note that it is not necessarily the case that . The novelty of our result is that we have derived an error bound on the signal itself, not simply on its measurements. In a sparse approximation problem, where $y$ is the signal that we are trying to estimate using the columns of $\Phi$, a bound such as (3.35) is sufficient. However, in compressive sensing, if , then all that we may conclude is that where . If the sparsity is not sufficiently small, then may be a non-zero vector and our estimate may be quite inaccurate. Thus, we claim that the result in Theorem 2 is more powerful than the sparse approximation results of the past.

4. K-fold Orthogonal Matching Pursuit

A popular extension of Orthogonal Matching Pursuit is K-fold Orthogonal Matching Pursuit (KOMP). KOMP is almost identical to OMP except for the fact that $K$ atoms are selected per iteration instead of 1. KOMP has two main advantages over OMP, which are somewhat mutually exclusive. The first one is speed: given a $T$-sparse signal, one may use KOMP to recover the signal in $T/K$ iterations versus the usual $T$ iterations. This yields a significant reduction in run-time, especially since the number of least-squares projections has been cut down by a factor of $K$. Unfortunately, for accuracy, this method requires that all $K$ atoms selected per iteration be correct. Very few measurement matrices have low enough coherence to allow for the correct selection of so many atoms without some sort of re-orthogonalization.
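The K-atoms-per-iteration selection rule described above can be sketched as a small change to an OMP loop. This is a minimal illustration under stated assumptions: the names `komp`, `K`, and `iterations` are hypothetical, the projection uses a generic least-squares solve, and the caller is responsible for keeping `K * iterations` no larger than the number of measurements so that the least-squares problem stays well posed.

```python
import numpy as np


def komp(Phi, y, K, iterations):
    """Sketch of K-fold OMP: select the K best-correlated atoms per iteration."""
    n = Phi.shape[1]
    residual = np.asarray(y, dtype=float).copy()
    support = []
    coef = np.zeros(0)
    for _ in range(iterations):
        correlations = np.abs(Phi.T @ residual)
        if support:
            correlations[support] = -1.0  # exclude atoms already selected
        # Indices of the K largest correlations with the residual
        new_atoms = np.argsort(correlations)[-K:]
        support.extend(int(j) for j in new_atoms)
        # One least-squares projection per iteration, shared by all K atoms
        coef, *_ = np.linalg.lstsq(Phi[:, support], y, rcond=None)
        residual = y - Phi[:, support] @ coef
    x_hat = np.zeros(n)
    x_hat[support] = coef
    return x_hat, residual, support


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    Phi, _ = np.linalg.qr(rng.standard_normal((16, 16)))  # orthonormal demo matrix
    x = np.zeros(16)
    x[[1, 5, 9, 14]] = [3.0, -2.0, 1.5, 1.0]
    # T = 4 and K = 2, so T / K = 2 iterations suffice in this easy case
    x_hat, _, support = komp(Phi, Phi @ x, K=2, iterations=2)
    print(sorted(support), np.linalg.norm(x - x_hat))
```

With `K = 1` the function reduces to ordinary OMP. The speed advantage comes from performing one projection per batch of `K` atoms rather than one per atom.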
As a result, we choose to exploit the second mutually exclusive advantage of KOMP over OMP: running $T$ iterations of KOMP will select a set of $KT$ indices which, with good probability, contains our signal's support set. Thus, we effectively use KOMP to narrow down all the possible signal indices to the top $KT$ candidates. Then, assuming $KT$ is not too large, we can perform a least-squares projection of our measurements onto the span of the selected columns of $\Phi$ in order to identify the exact support set and recover the signal. All of this can be done in runtime commensurate to that achievable with $T$ iterations of OMP. To analyze the performance of KOMP, we first need to define the top-K norm:

Definition 2: Let be a signal with sorted entries where . Then the top-K norm is defined to be: (4.1)

In essence, the top-K norm of a vector is the norm of its top $K$ entries. It is not difficult to show that this is a well-defined norm on . We begin by examining KOMP's ability to correctly select all the correct non-zero entries of a $T$-sparse signal in addition to at most incorrect entries:

Theorem 3: Let $\Phi$ be a measurement matrix satisfying (4.2) for each iteration . Assuming also that , then KOMP will recover any $T$-sparse signal $x$ from its measurements $y = \Phi x$.

Proof. Observe first that KOMP will select all the correct entries in $T$ iterations if it selects at least one correct entry per iteration. Assume that after iterations, KOMP has selected at least correct indices specified by the set and no more than incorrect indices specified by the set . We define the set , which has no more than elements. Defining as in Theorem 1, we see that . As a result, a sufficient condition to ensure that KOMP selects at least one correct index at iteration is that (4.3) We can find an upper bound on the left hand side as follows: (4.4)
(4.5) We next calculate a lower bound on the right hand side: (4.6) (4.7) (4.8) From these bounds, one can use simple algebra to show that (4.2) is a sufficient condition for (4.3). Since , a simple least squares projection onto the selected columns of $\Phi$ will recover the desired signal exactly. □

For the special case , (4.2) takes the form: (4.9) For relatively large , and, therefore, we see that 2-OMP enjoys a significantly stronger performance guarantee than regular OMP. This should make intuitive sense since we are allowing for the selection of up to incorrect atoms. As long as (which is guaranteed by (4.9) for ), the least squares projection of the measurements onto the space of selected columns will yield zeros for all incorrectly chosen entries. In general, the performance of KOMP will improve for increasing $K$ until the final least squares projection becomes unstable. At this point, performance will degrade rapidly.

We can extend the KOMP result to general signals via the following theorem:

Theorem 4: Let $\Phi$ be a measurement matrix that satisfies the RIP shown in (4.2). Let $x$ be any signal with optimal $T$-term approximation . Let and let . Suppose KOMP has noisy measurements of the form where . Then, after $T$ iterations, KOMP will recover a $KT$-sparse estimate of $x$ that satisfies: (4.10) where, for reasonable RIP numbers, grows asymptotically like .

Proof. The argument is very similar in nature to that in Theorem 2. We retain the notation used in the proofs of Theorem 2 and Theorem 3. As before, we suppose that up to iteration t, KOMP has selected at least one atom indexed by per iteration. At iteration t + 1, KOMP will select at least one atom from provided the greedy selection condition (4.11) is satisfied where has no more than elements. Now rewrite the residual as . We bound the numerator from below as follows: (4.12) (4.13) (4.14) where .
Next, we derive a lower bound for the denominator: (4.15) (4.16) (4.17) Our bounds imply that a sufficient condition for (4.11) is that (4.18) Now let denote the first iteration where this bound does not hold. By definition of KOMP, . We have: (4.19) (4.20) where which has cardinality at most . It is possible to further bound the left hand side by: (4.21) (4.22) (4.23) (4.24) (4.25) where . The second inequality comes from the fact that in KOMP, as in OMP, the residual is always decreasing in magnitude regardless of which atoms are selected. Now let (4.26) Since , which follows from (4.18), we have that (4.27) where (4.28) We use our previous bound (4.29) and the definition to obtain: (4.30) (4.31) as was to be shown. □

We observe that the constants form a decreasing sequence with respect to $K$, which suggests that the errors decrease as we let $K$ increase. Of course, one may argue that since, for each $K$, the estimate is $KT$-sparse, it is unfair to compare reconstructions using different values of $K$. As a result, we will let denote the truncation of the estimate to its top $T$ values. It is fairly straightforward to show the bound (4.32) which implies the following corollary.

Corollary 1: Let $\Phi$ be a measurement matrix that satisfies the RIP shown in (4.2). Then, for any signal $x$, KOMP will return a $KT$-sparse estimate whose $T$-sparse truncation satisfies: (4.33)

We can now make a comparison of OMP and KOMP by comparing the constants against . Assume for the moment that the restricted isometry numbers obey for some . For sparsity level , , and , we calculated the above constants and plotted them in Figure 1.

Figure 1: Comparison of OMP and KOMP constants and .

In the case of and , we see that KOMP achieves better results than OMP when and respectively. Eventually, when the RIP constants for sparsity level become too large (as in the case ), the constant begins to increase rapidly.
In this latter case, KOMP does not achieve a stronger error bound than OMP regardless of the choice of $K$. As we can see, selecting an appropriate $K$ can be challenging. If $K$ is selected too small, then KOMP's performance will be suboptimal when compared against OMP and KOMP with larger $K$. However, if $K$ is selected too large, then instability may arise due to the fact that the underlying RIP constants are becoming increasingly large as well. Selecting the right value of $K$ is, thus, somewhat of an art form: intuition derived from copious experimentation is extremely helpful.

[Figure 1 plot: "Theoretical Comparison of OMP and KOMP"; horizontal axis: K Value; vertical axis: Constant C_K(T); curves: 2C_K+2 and C_1, each for β = .3, .8, and .95.]

5. Experimental Results

An observation that one will quickly make regarding compressive sensing algorithms is that, in practice, they all work better than predicted by their respective theoretical guarantees. In other words, the restricted isometry property only affords relatively weak sufficient conditions specifying when some algorithm can exactly recover any signal with a given number of non-zero entries. The reason for this is that RIPs provide worst case estimates that may not appear often in practice. In order to address this issue, much work has been done in performing "average-case" analyses of compressive sensing algorithms (see [26], [12], etc.). In these works, theoretical results are obtained regarding the various algorithms' performance in recovering commonplace sparse signals, e.g. with Gaussian or binary coefficients. For our purposes, we will empirically perform a similar analysis by designing several experiments, which are shown below.
In the first experiment, for every sparsity level $T$ from 4 to 52 in increments of 4, the following test was repeated 100 times: a $T$-sparse Gaussian signal $x$ of length 256 was generated and measurements of the form $y = \Phi x$ were collected, where $\Phi$ is a Gaussian random matrix (selected differently each time). Then the following algorithms were used to recover $x$: OMP, 2-OMP, Hybrid 0.2-OMP^1, CoSAMP 2 [22], CoSAMP 1^2, Iterative Thresholding [3],[8],[13], and Basis Pursuit [5],[7],[9]. Both versions of CoSAMP were run with 10 iterations. For Iterative Thresholding, the Hard Thresholding routine in the Sparsify MATLAB package [4] was used, with all parameters being selected optimally by the software. We used the L1-Magic package [24] for Basis Pursuit with the default settings. The two performance criteria evaluated were the probability of exact reconstruction (within a 1% tolerance for relative error) and the runtime. Plots of the results are shown below in Figure 2 and Figure 3.

In terms of exact reconstruction probability, Basis Pursuit did slightly better than OMP. However, the modifications proposed in Section 2.2 came in quite handy because 2-OMP and Hybrid 0.2-OMP both outperformed Basis Pursuit. Thus, the suggestion of allowing multiple atoms to be selected per iteration was exactly what was needed to give OMP the extra boost to put it on top. CoSAMP 1 performed better than CoSAMP 2, and Iterative Thresholding fell roughly in between in this particular experimental setup. With respect to runtime, all of the algorithms were very fast with the exception of convex optimization. These algorithms took no more than a tenth of a second to run, whereas L1-minimization took about half a second. The overall conclusion of this experiment is that 2-OMP was the best overall performer.
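The structure of this first experiment can be sketched for plain OMP as follows. This is an illustrative reconstruction, not the code used for the figures: the number of measurements is not stated in the text above, so `m=100` is purely an assumption, as are the function names, the column scaling of the Gaussian matrix, and the seed. Only the signal length (256), the sparsity sweep (4 to 52 in steps of 4), and the 1% relative-error tolerance come from the description; the demonstration uses fewer trials than the paper's 100 to keep it quick.

```python
import numpy as np


def omp(Phi, y, T):
    """Compact OMP used only for this experiment sketch; returns the estimate."""
    support, coef = [], np.zeros(0)
    residual = y.copy()
    for _ in range(T):
        correlations = np.abs(Phi.T @ residual)
        if support:
            correlations[support] = -1.0
        support.append(int(np.argmax(correlations)))
        coef, *_ = np.linalg.lstsq(Phi[:, support], y, rcond=None)
        residual = y - Phi[:, support] @ coef
    x_hat = np.zeros(Phi.shape[1])
    x_hat[support] = coef
    return x_hat


def exact_recovery_rate(T, n=256, m=100, trials=100, tol=0.01, seed=0):
    """Fraction of trials with relative error at most tol (m is an assumed value)."""
    rng = np.random.default_rng(seed)
    hits = 0
    for _ in range(trials):
        # T-sparse signal with Gaussian coefficients at random locations
        x = np.zeros(n)
        x[rng.choice(n, size=T, replace=False)] = rng.standard_normal(T)
        # Fresh Gaussian measurement matrix for every trial
        Phi = rng.standard_normal((m, n)) / np.sqrt(m)
        x_hat = omp(Phi, Phi @ x, T)
        if np.linalg.norm(x - x_hat) <= tol * np.linalg.norm(x):
            hits += 1
    return hits / trials


if __name__ == "__main__":
    for T in range(4, 53, 4):
        print(T, exact_recovery_rate(T, trials=20))
```

Swapping `omp` for another recovery routine with the same signature would give the corresponding curve for that algorithm under the same assumed setup.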
Figure 2: Probability of exact reconstruction of T-sparse signals using various compressive sensing algorithms.

1 Hybrid -OMP is a variation of KOMP where at iteration , the top atoms are selected. Thus, it selects more atoms during earlier iterations and fewer atoms in subsequent iterations.
2 CoSAMP 1 is a variation of regular CoSAMP 2 (see [22]) where $T$ atoms are selected per iteration as opposed to the standard $2T$.

Figure 3: Runtimes of various compressive sensing algorithms when recovering T-sparse signals.

Of course, the above experiment only compares the various compressive sensing algorithms with respect to their abilities to recover sparse signals. In the next experiment, the objective signals were not allowed to be strictly sparse. Here, 20 instances of signals of length 256 were generated with exponentially decaying coefficients in random locations. The decay rate was given by . The signals were reconstructed using the same algorithms and sparsity parameters varying from to in increments of four. Figure 4 shows the various average reconstruction errors produced by these algorithms.

Figure 4: Average T-term reconstruction errors in recovering signals with exponentially decaying coefficients generated by the various compressive sensing algorithms as a function of the sparsity parameter T.

In this experiment, OMP and its variants outperformed the other algorithms. In fact, the $T$-term error produced by 2-OMP is nearly identical to the optimal $T$-term error up until around . The L1-minimization error converges to around 0.24, whereas the true optimal error should converge to zero. An interesting point to note is that all of the above greedy algorithms ultimately experience a sudden and significant breakdown in performance when $T$ is taken too large.
This is because of the instability that arises from computing projections when the underlying restricted isometry numbers approach unity. In other words, the more vectors that are being processed at any particular iteration, the greater the instability. This makes algorithms such as Iterative Thresholding and CoSAMP, which process a large set of atoms right from the start, highly susceptible to breakdown if care is not taken in choosing an appropriate sparsity level $T$. In these cases, $T$ becomes a highly sensitive parameter that can corrupt the output very suddenly and swiftly. On the other hand, OMP and its variants are more robust with respect to tolerating a large value of $T$. This is because these algorithms select no more than a few atoms per iteration. Thus, any instability that may result from a poor choice of $T$ will defer itself to later iterations. The first several selected atoms will remain correct. As a result, if one observes instability beginning to develop in the matching pursuit, then one can backtrack a few iterations and simply decide to stop there. This is not an option with Iterative Thresholding and CoSAMP. Ultimately, all of the greedy algorithms will experience a breakdown in performance; however, OMP and its variants are structured so that they can be stopped before the resulting error grows out of control.

Overall, we see that OMP is an extremely powerful, efficient, and robust algorithm that receives much less credit than it deserves. It is significantly faster than convex optimization techniques and is less sensitive to errors in sparsity level estimates.

6. Conclusion

Convex optimization has long been considered the gold standard compressive sensing recovery algorithm.
Throughout the years, it has enjoyed significant theoretical development, putting it ahead of other faster algorithms, which, up until recently, have been labeled as mere heuristics. The discovery of RIP-based performance guarantees for globalized matching pursuits such as CoSAMP and Iterative Thresholding has prompted a landslide of theoretical research into this class of algorithms. This paper presented near-optimal RIP-based guarantees for the more localized Orthogonal Matching Pursuit algorithm and the related method K-fold Orthogonal Matching Pursuit. In addition to deriving improved sufficient conditions guaranteeing the recoverability of strictly sparse signals, we also proved reconstruction error bounds for general signals possibly corrupted by measurement noise.

While making significant contributions to OMP's theoretical development, we have failed to rigorously prove that OMP performs better than convex optimization, CoSAMP, Iterative Thresholding, etc., which successfully avoid the $\sqrt{T}$ blow-up factor that appears in the OMP bounds. Thus, one may be led to believe that OMP is an inferior algorithm. Of course, the empirical evidence of Section 5 suggests otherwise. In practice, OMP and KOMP often outperform other algorithms in terms of accuracy, convergence, and stability. A possible explanation for this seemingly paradoxical behavior is that RIP analysis considers worst case scenarios. In other words, it is possible to construct "bad" signals that convex optimization would recover more successfully than OMP. However, if an average case metric is used to theoretically evaluate the wide suite of compressive sensing algorithms, we are quite confident that OMP would rank very well.
Acknowledgements

The author would like to thank Anna Gilbert and Martin Strauss from the University of Michigan for reviewing this work and providing comments and suggestions for improvement.

References:

[1] C. R. Berger, S. Zhou, and P. Willett. “Sparse channel estimation for OFDM: Over-complete dictionaries and super-resolution methods,” 2009.
[2] S. Bhattacharya, T. Blumensath, B. Mulgrew, and M. E. Davies. “Fast encoding of synthetic aperture radar raw data using compressive sensing,” IEEE Workshop on Statistical Signal Processing, Aug. 2007.
[3] T. Blumensath and M. E. Davies. “Iterative hard thresholding for compressed sensing,” Applied and Computational Harmonic Analysis, 2009.
[4] T. Blumensath. “Sparsify 0.4.”
[5] E. Candes, J. Romberg, and T. Tao. “Robust uncertainty principles: Exact signal reconstruction from highly incomplete frequency information,” IEEE Trans. Info. Theory, Feb. 2006.
[6] E. Candes, J. Romberg, and T. Tao. “Stable signal recovery from incomplete and inaccurate measurements,” Comm. Pure Appl. Math, 59:1207-1223, 2006.
[7] E. Candes. “The restricted isometry property and its implications for compressed sensing,” Comptes Rendus de l'Academie des Sciences, Paris, Serie I, 346, 589-592.
[8] I. Daubechies, M. Defrise, and C. De Mol. “An iterative thresholding algorithm for linear inverse problems with a sparsity constraint,” Communications on Pure and Applied Mathematics, vol. LVII, pp. 1413-1457, 2004.
[9] D. L. Donoho. “Compressed Sensing,” IEEE Trans. on Info. Theory, Apr. 2006.
[10] D. L. Donoho. “For most large underdetermined systems of linear equations the minimal l1-norm solution is also the sparsest solution,” 2004.
[11] M. Davenport and M. Wakin. “Analysis of orthogonal matching pursuit using the restricted isometry property,” Preprint, Aug. 2009.
[12] Y. Eldar and H. Rauhut. “Average case analysis of multichannel basis pursuit,” Proc. SampTA09, 2009.
[13] M. Figueiredo and R. Nowak. “An EM algorithm for wavelet-based image restoration,” IEEE Transactions on Image Processing, vol. 12, pp. 906-916, 2003.
[14] J. H. Friedman and J. W. Tukey. “A projection pursuit algorithm for exploratory data analysis,” IEEE Trans. Computers, vol. C-23(9), pp. 881-890, Sep. 1974.
[15] A. C. Gilbert, S. Muthukrishnan, and M. J. Strauss. “Approximation of functions over redundant dictionaries using coherence,” SODA, pp. 243-253, 2003.
[16] A. C. Gilbert and J. A. Tropp. “Signal recovery from partial information via orthogonal matching pursuit,” Submitted.
[17] T. Hastie, R. Tibshirani, and J. Friedman. “The elements of statistical learning: data mining, inference, and prediction,” Second Edition, Springer, 2009.
[18] E. Liu and V. N. Temlyakov. “Orthogonal super greedy algorithm and applications in compressed sensing,” Preprint, 2010.
[19] E. Livshitz. “On efficiency of orthogonal matching pursuit,” Preprint, 2010.
[20] R. Maleh, A. C. Gilbert, and M. J. Strauss. “Sparse gradient image reconstruction done faster,” ICIP Proc., 2007.
[21] R. Maleh, D. Yoon, and A. C. Gilbert. “Fast algorithm for sparse signal approximation using multiple additive dictionaries,” SPARS Proceedings, 2009.
[22] S. G. Mallat and Z. Zhang. “Matching pursuits with time-frequency dictionaries,” IEEE Trans. Signal Processing, vol. 41(12), Dec. 1993.
[23] D. Needell and J. A. Tropp. “Cosamp: Iterative signal recovery from incomplete and inaccurate samples,” Appl. Comp. Harmonic Anal., vol. 26, pp. 301-321, 2008.
[24] J. Romberg. “L1-Magic,” www.acm.caltech.edu/l1magic/.
[25] M. Rudelson and R. Vershynin. “On sparse reconstruction from Fourier and Gaussian measurements,” Communications on Pure and Applied Mathematics, 61, 1025-1045, 2008.
[26] J. A. Tropp. “Average-case analysis of greedy pursuit,” SPIE Wavelets XI, pp. 590401.01-11, Aug. 2005.
[27] J. A. Tropp. “Greed is good: algorithmic results for sparse approximation,” IEEE Trans. Info. Theory, vol. 50(10), pp. 2231-2242, Oct. 2004.
