Vector Diffusion Maps and the Connection Laplacian

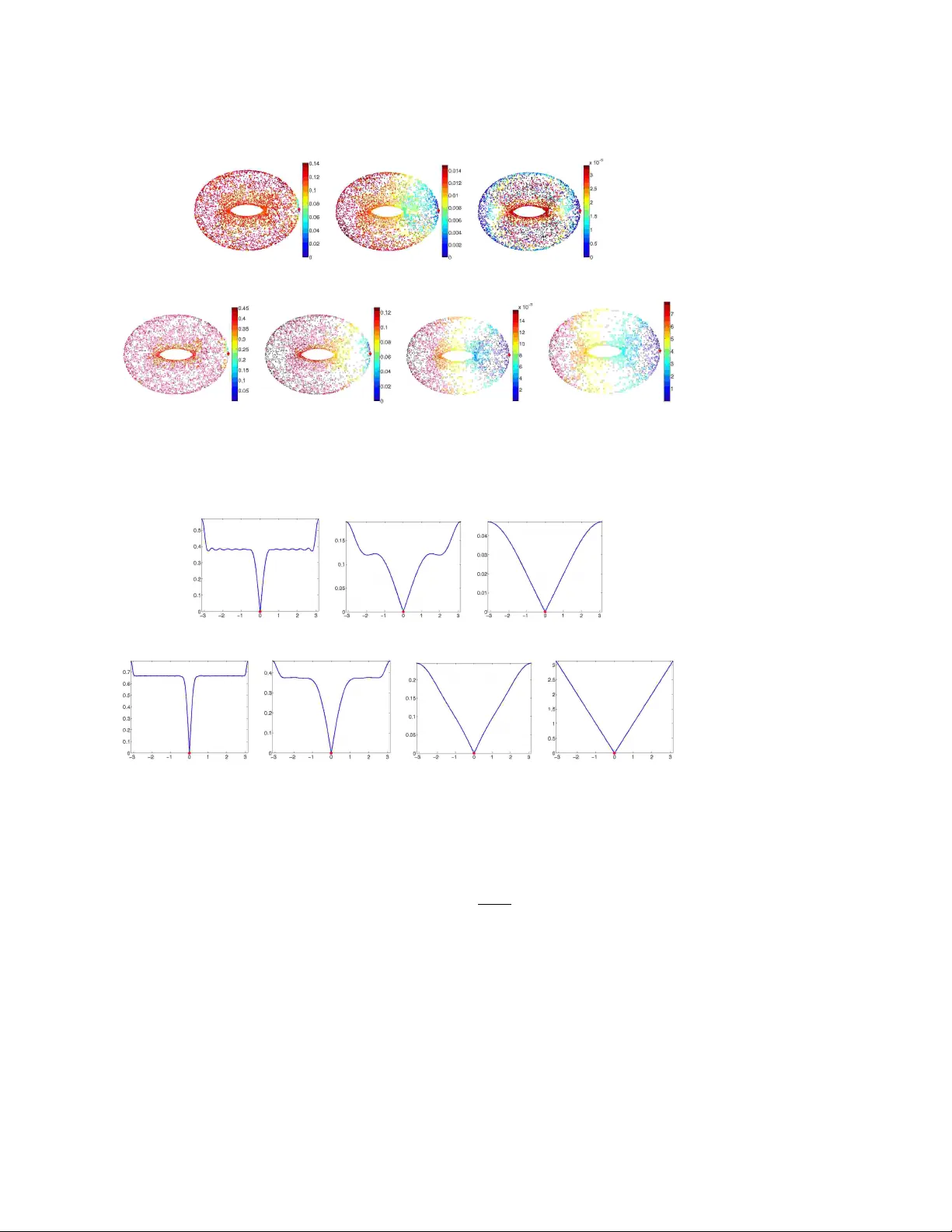

We introduce *vector diffusion maps* (VDM), a new mathematical framework for organizing and analyzing massive high-dimensional data sets, images, and shapes. VDM is a mathematical and algorithmic generalization of diffusion maps and other non-line…

Authors: Amit Singer, Hau-tieng Wu