The semantic mapping of words and co-words in contexts

Meaning can be generated when information is related at a systemic level. Such a system can be an observer, but also a discourse, for example, operationalized as a set of documents. The measurement of semantics as similarity in patterns (correlations…

Authors: Loet Leydesdorff, Kasper Welbers

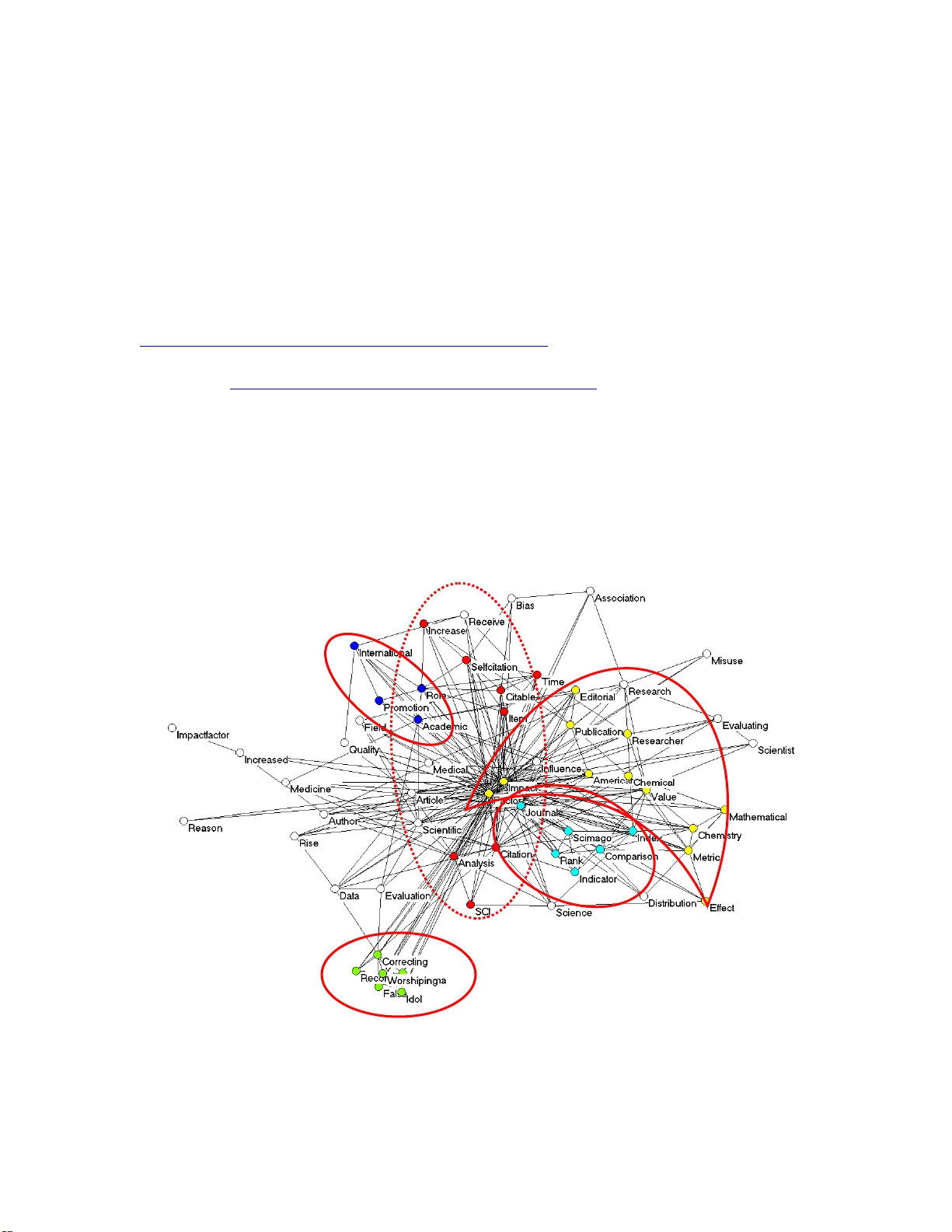

The semantic mapping of words and co-words in contexts Journal of Informetrics (2011; in press); available at http://arxiv.org/abs/1011.5209 Loet Leydesdorff & Kasper Welbers Amsterdam School of Comm unication Research (ASCoR), University of Amsterdam Kloveniersburgwal 48, 1012 CX Amsterdam, The Netherlands Abstract Meaning can be generated when information is related at a system ic level. Such a system can be an observer, but also a discourse, for example, operationa lized as a set of documents. The measurem ent of semantics as similarity in p atterns (correlations) and latent variables (fac tor analysis) has been enhanced by com puter techniques and the use of statistics; for example, in “Latent S ema ntic Analysis.” This communication provides an introduction, an example, pointers to rele vant software, and summarizes the choices that can be made by the analyst. Visualiza tion (“semantic m apping”) is thus made more accessible. Keywords: semantic, map, docum ent, text, word, latent, meaning 1 Introduction In response to the developm ent of co-citation m aps during the 1970s by Small (1973; Small & Griffith, 1974), Callon et a l. (1983) proposed developi ng co-word maps as an alternative to the study of se mantic relations in scientif ic and technology literatures (Callon et al ., 1986; Leydesdorff, 1989). Ever since, these techniques for “co-word mapping” have been further developed, for ex ample, into “Latent Sem antic Analysis” (e.g., Landauer et al ., 1998; Leydesdorff, 1997). These methods operate on a word- document matrix in which the documents can be considered as pr oviding the cases (e.g., rows) to which the words are attributed as variables (columns). Factor-analytic techniques allow for clusteri ng the words in terms of the documents, or similarly, the documents in term s of the semantic structures of the words ( Q -factor analysis). Singular value decomposition com bines these two options, but is not so easily available in standard software packages su ch as SPSS. In this brief communication, we provide an overview and summary for schol ars and students who wish to use these techniques as an instrument, for example, in content analysis (D anowski, 2009). A more extensive manual can be found at http://www.leydesdorff.net/indicators where the corresponding software is also made avai lable. In this communication, we provide arguments for choices that were made when developing the software. Our aim is to keep the free software up-to-date, and to keep th e applications as vers atile and universally applicable as possible. 2 The word-document matrix The basic matrix for the analysis repr esents the occurrences of words in documents. Documents are considered as the units of analys is. These documents can vary in size from large documents to single sentences, such as, for exam ple, document titles. The documents contain words which can be or ganized into sentences, paragraphs, and sections. The semantic structures in the re lations among the words can be very different at these various levels of aggregation (Le ydesdorff, 1991, 1995). Thus, the researcher has first to decide what will be considere d as relevant units of analysis. Secondly, which words should be included in the analysis? An obvious candidate for the selection is frequency of word occurrences (after correction for stopwords). Salton & McGill (1983), however, suggested that the m ost frequently and least frequently occurring words can be less significant than words with a moderate frequency. For this purpose, these authors proposed a measure: the so-called “ter m frequency-inverse document frequency,” that is, a weight which increases with the frequency of the term i , but decreases as the term occurs in more docum ents ( k ) in the set (of n docum ents). The tf-idf can be formalized as follows: Tf-Idf ik = FREQ ik * [log 2 ( n / DOCFREQ k )] (1) The function assigns a high degree of importa nce to terms occurring more frequently in only a few documents of a collection, and is commonly used in inform ation retrieval 3 (Spark Jones, 1972). Given its background in practice, however, the measure has not been further developed into a statistics fo r distinguishing the relative sig nificance of terms. The proper statistics to compare the rows or columns of a m atrix is provided by χ 2 or— using the Latin alphabet—“chi-square” (e.g., Mogoutov et al ., 2008; Van Atteveldt, 2005). Chi-square is defined as follows: (2) i i i i Expected Expected Observed / ) ( 2 2 The chi-square is summed over the cells of the m atrix by comparing for each cell the observed value with the expectation—calcu lated in terms of the margin totals of the matrix. The resulting sum values can then be tested against a standard table. Both the relevant routines and the chi-square table are now widely availabl e on the Internet; for example, at http://people.ku.edu/~preacher/chisq/chisq.htm . (If the observed values are smaller than five, one should apply the so -called Yates correction; the corresponding statistics is available, for example, at http://www.fon.hum.uva.nl/Service /Statistics/EqualDistribX 2.html .) Our programs—to be discussed below in more detail and available from http://www.leydesdorff.net/indicators —provide the user with the chi-square values for each word as a variable (by summing the chi-sq uare values for cells over a column of the matrix) and additionally a file “expected.dbf” which contains the expected values in the 4 same format as the observed values in the file “matrix.dbf.” The user can thus easily compute the chi-square values using Excel. 1 Furthermore, the comparison between observed and expected values allows for a third measure which is easy to understand, albeit not based on a statistics, namel y, the value of observed over expected ( obs/exp ). As in the case of chi-square, observed/expected values can be computed for each cell. However, these values can also be summed, for example, over the columns in order to enable the analyst to assess to which extent a word (as a column variable) occurs above or below expectation. In summary, one can use four criteria for sele cting the list of words to be included in the analysis: ( i ) word frequency, ( ii ) the value of tf-idf, ( iii ) the contribution of the column to the chi-square of the matrix, and ( iv ) the margin totals of observed/expected for each word. In case studies, we found this last m easure most convenient. However, all four measures are made available. The analysis The asymmetrical word-document matrix—in social network analysis also called a 2- mode matrix—can be transformed into a symm etrical co-occurrence matrix (1-mode) using matrix algebra. This can be done in bot h (orthogonal) directions, that is, in terms of co-words or co-occurring documents. 2 The resulting matrix is called an af filiations matrix in social n etwork analysis, and is st andardly available in software for social 1 Chi-square is not a vailable for matrices in SPSS b eca use SPSS pres umes that the two variables have first to be cross-t abled. 2 Technically, one multiplies the matrix ( A ) with its transposed as either AA T or A T A. 5 network analysis (such as Pajek and UCINet ). This network is relational and allows, among other things, for the analysis of path ways. Pathways have been identified as indicators of inventions a nd innovations (e.g., Bailón-Moreno et al ., 2005 and 2007; Stegmann & Grohmann, 2003). The word-document matrix can also be analyzed in terms of its latent dimensions using factor analysis, m ulti-dimensional scaling (MD S) or singular value dec omposition (SVD), etc. Note that factor analysis and SVD opera te in the vector-space that is generated b y first transforming the m atrix using the Pearson correlation coefficients between the variables. In the vector space, however, si m ilarity is no longer defined in terms of relations, but correlations among the distributions (vectors). Since the distributions of words in texts ar e skewed (Ijiri & Sim on, 1977), the use of the Pearson correlation—implying regression to the m ean—is debatable (Ahlgren et al ., 2003). Salton’s cosine has the advantage of not using the mean, but otherwise its formulation is completely analogous. Cosine -normalization of the variables therefore provides an attractive alternative, but one loses the advantage of orthogonal rotation possible with factor analysis and statis tical testing (Bensm an, 2003; White, 2003 and 2004). However, one can use the factor-analytic results to color the semantic m aps based on cosine-normalized variables (Egghe & Leydesdorff, 2009). Note that it is preferable to factor analyze not the (1-m ode) co-occurren ce matrix, but the 2-mode word-docum ent matrix if available as a result of the data collection (Leydesdorff & Vaughan, 2006). 6 In other words, the vector space can be a pproximated by cons tructing a semantic m ap on the basis of cosine-normalized variable patter ns or by using factor analysis (or SVD), but these two representations will not be precis ely similar because the facto r analysis is based on the Pearson correlation matrix. No te that the transition to the ve ctor space changes the perspective from a network pe rspective (as pred ominant in social network or co-word analysis) to a systemic perspective. The word s are provided with m eaning in terms of the semantic structures in the sets, and theref or e one can legitimately u se concepts such as “latent semantic analysis ” and “ semantic mapping.” The results can also be considered as a quant itative form of content analysis (Danowski, 2009; Carley & Kaufer, 1993; Leydesdorff & Hellsten, 2005). Unlike content analysis, however, the semantic structure (for example, the grouping of words as variables in terms of clusters or factors) is induced from the data and not provided on the basis of an a priori scheme. Thus, one can potentially reduce the so-called “indexe r effect” (e.g., Law & Whittaker, 1992). Alternatively, one can use th is techn ique for sets which are too large for qualitative content analysis or for the validation of content analysis using samples. In summary, the developm ent of statistical techniques has enabled us to move from Osgood et al. ’s (1957) initial attempts to measure meaning using 7-point (Likert) scales to automated content analysis which provides us with semantic maps of the intrinsic meaning contained in document sets. Relevant software and techniques for these m apping efforts are available on the Internet. 7 An empirical example As an empirical example, we searched th e W eb-of-Science (WoS) of Thomson Reuters with the search string ‘ti= “impact fact or” and py = (2008 or 2009)’ on Novemb er 12, 2010. This search resulted in 195 documents ; these documents contain 59 words which occur more than twice (after correction for stop words; for example, at http://www.lextek.com/manuals/onix/stopwords1.htm l ). Using the routine “ti. exe”— available at http://www.leydesdorff.net/software/ti/index.htm —one can perform factor analysis of the matrix and/or normalize the co lumn variables using the cosine for the visualization. In Figure 1, th e rotated factor matrix was us ed to colour the nodes in the map based on the cosine-normalized word occurrences over the docum ents. Factor 4 Factor 3 Factor 2 Factor 5 Factor 1 Figure 1 : Five factors in 59 word s as variables occurring m ore than twice in 195 documents with “impact factor” in the title and published in 2008 or 2009 (Kamada & Kawai, 1989; Factor loadings and co sine values < 0.1 are suppressed). 8 The results as shown in Figure 1 are not completely satisfactory; words which load on Factor 3 are displayed on both sides of the search terms “Im pact” and “Factor”. These two words which were also the original search s trings are positioned in the center be cause they have a cosine similarity with all ot her words above the threshold level (cosine ≥ 0.1). By changing to the observed/expected ratios , Figure 2 can be ge nerated analogously. In this case, we do not use the word-document ma trix with word frequency values, but the corresponding matrix of observed/expected ratios in each cell. Using this matrix as in put to the factor analysis, the search terms “Impact” and “Factor” no longer load positively on any of the five factors, but exhibit in terfactorial complexity. The (consequently different!) factor structu re can in this cas e be penciled continge ntly on top of this semantic map. Note that many words do not exhi bit factor loadings on any of the factors above the level of 0.1 (and ar e therefore left white). 9 Factor 1 Factor 4 Factor 2 Factor 5 Factor 3 Figure 2 : Five factors as in Figure 1, but now with observed/expected values as input instead of observed values. In the above figures, only positive factor load ings were used for the colo ring. Another visualization which includes also neg ative fa ctor loadings can be generated by feeding the rotated component matrix directly into th e visualization program as an asymmetrical (2-mode) matrix. This leads in th is case to Figure 3. The factor lo adings are by definition equal to the Pearson correlation coefficients among the variables (vectors) and latent dimensions (eigenvectors). The two constr ucts—vectors and eigenve ctors—can thus be projected onto a single vector sp ace (Leydesdorff & Probst, 2009). 10 Figure 3 : Visualization of the rotated factor m atr ix; dotted lines repres ent negativ e factor loadings (Fruchterman & Reingold, 1991). Fact or loadings between -0.1 and +0.1 were suppressed. One possible advantage of this representation is facilitation of the factor designation. For example, Factor 1 is otherwise isolated a nd indicates a set of words (a “frame”; cf. Hellsten et al ., 2010; Scheufele, 1999) critical to the use of impact factors in research evaluation. Factor 5 seems most connected to the other three groupings; it shows the conceptual origins of “impact factor.” This F actor 5 is most interre la ted to Factor 3 which provides the frame of “citation analysis.” Th e words “Factor,” “Value,” and “Metric” 11 provide articulation points between these two star-shaped graphs. “Value” and “Metric” also relate to Factor 2, which indicates m ore recent ranking efforts. Figures for different years can also be anim ated using a version of the network program Visone specially designed fo r this purpose (at http://www.leydesdorff.net/visone ; Leydesdorff & Schank, 2008). In addition to stat istical packages, the various output files (in the Pajek format) can also be import ed into other network programs such as VosViewer available at http://www.vosviewer.org (Van Eck & Waltman, 2010) or the Social Networks Image Animator (S oNIA) at http://www.stanford.edu/group/sonia/ (Bender-de Moll & Mc Farland, 2006; Moody et al ., 2005). In addition to these possibilities for seman tic m apping, the word-document m atrix can, as noted, also be multiplied with its tran sposed in order to generate the word co-occurrence matrix. This matrix is made available by the pro grams in the Pajek form at as the file coocc.dat. Figure 4 provides the resulting co-word map using all co-occu rrences with a threshold of values larger than unity. 12 Factor 1 Figure 4 : Co-word map of 59 words occurring mo re than twice in 195 docum ents with “impact factor” in the title and publis hed in 2008 or 2008 (Kam ada & Kawai, 1989; single co-occurrences of words are suppressed; k -core algorithm used for coloring the nodes). In this map (Figure 4), the words “Factor” a nd “Impact” are v ery central because they co- occur in all items of the set. Other words with frequent co-occurrenc es such as “Journal” and “Indicator” occur in the vici nity of these two search terms. In this representation, Factor 1 distinguished above is still clearly visible at the top of the figure because these words—used exclusively in papers of critics of using impact factors—co-occur more in a grouping than others. Although the words attri buted to Factor 2 can still be found as a separate group—colored in grey around th e center—the distincti on of structures upon 13 visual inspection becomes increasingly diffi cult when one would attempt to retrieve factors which explain less of the common variance. The star-shaped center-periphery structure dom inates the graph in this network space and tends to overshadow the semantic structures of the vector space that provide the relations with meaning at the systems level. I n the relational paradigm, however, relations are considered performatively, that is, as the potential construction of new m eaning which crosses hitherto shaped divi des and thus can function as indicators of invention and innovation (Bailón-Moreno et al ., 2005 and 2007; Stegmann & Grohmann, 2003; cf. Leydesdorff, 1992). Conclusions and summary Despite the emphasis in the wording on semantic s (as in “la tent semantic analysis,” “ the semantic web” or “semantic m apping”), the measurem ent of the dynamics of meaning is still in its infancy. Meaning is generated when different bits of information are r elated at the systems level, and thus positioned in a vector space. Perh aps, one can define “knowledge” recursively as th e positioning of different m eanings in relation to one another. Note that in the above, semantics is cons idered as a property of language, whereas meaning is often defined in terms of use (W ittgenstein, 1953), that is, at th e level of agency. Ever since the exploration of inters ubjective “meaning” in different philosophies 14 (e.g., Husserl, 1929; Mead, 1932), the focus in the measurement of meaning has gradually shifted to the intrinsic meaning of te xtual elem ents in discourses and texts, that is, to a more objective and s upra-individual level. The prag matic aspects of meaning can be measured using Osgood et al .’s (1957) Likert-scales a nd by asking respondents. Modeling the dynamics of meaning requires a nother elaboration (cf. Leydesdorff, 2010). References: Ahlgren, P., Jarneving, B., & Rousseau, R. (2003). Requirement for a Cocitation Similarity Measure, with Special Refere nce to Pearson’s C orrelation Coefficient. Journal of the American Society fo r Information Science and Technology, 54 (6), 550-560. Bailón-Moreno, R., Jurado-Almeda, E., Ruiz-B años, R., & Courtial, J. P. (2005). The unified scientometric model: Fr actality and transf ractality, Scientometrics, 65 (2), 231-257. Bailón-Moreno, R., Jurado-Almeda, E., Ruiz-B años, R., Courtial, J. P., & Jiménez- Contreras, E. (2007). The pulsing structur e of science: Ortega y Gasset, Saint Matthew and transfractality, Scientome trics, 71 (1), 3-24. Bender-deMoll, S., & McFarland, D. A. (2006) . The art and science of dynamic network visualization. Journal of Social Structure, 7 (2), http://www.cmu.edu/joss/content/articles/volume7/deMollMcFarland/ . Bensman, S. J. (2004). Pearson’s r and Au thor Cocitation Analysis: A Commentary on the Controversy. Journal of the American Society for Information Science and Technology, 55 (10), 935-936. Callon, M., Courtial, J.-P., Turner, W. A., & Bauin, S. (1983). From Translations to Problematic Networks: An Intr oduction to Co-word Analysis. Social Scie nce Information 22 (2), 191-235. Callon, M., Law, J., & Rip, A. (Eds.). (1986). Mapping the Dynamics of Science and Technology . London: Macmillan. Carley, K. M., & Kaufer, D. S. (1993). Seman tic connectivity: An approach for analyzing symbols in semantic networks. Communication Theory, 3 (3), 183-213. Danowski, J. A. (2009). Inferences from word networks in messages. In K. Krippendorff & M. A. Bock (Eds.), The content analysis read er (pp. 421-429). Los Angeles, etc.: Sage. Fruchterman, T., & Reingold, E. (1991). Gra ph drawing by force-directed replacem ent. Software--Practice and Experience, 21 , 1129-1166. Hellsten, I., Dawson, J., & Leydesdorff, L. (2010). Implicit media fram es: Automated analysis of public debate on artificial sweeteners. Public Understanding of Science, 19 (5), 590-608. 15 Husserl, E. (1929). Cartesianische Meditationen und Pariser Vorträge [Cartesian meditations and the Paris lectures] . The Hague: Marti nus Nijhoff, 1973. Ijiri, Y., & Simon, H. A. (1977). Skew Distributions and the Sizes of Business Firms Amsterdam, etc.: North Holland). Kamada, T., & Kawai, S. (1989). An algorithm for drawing general undirected graphs. Information Processing Letters, 31 (1), 7-15. Landauer, T. K., Foltz, P. W., & Laham , D. (1998). An introduction to latent semantic analysis. Discourse processes, 25 (2), 259-284. Law, J., & Whittaker, J. (1992). Mapping acidi fication research: A te st of the co-word method. Scientometrics, 23 (3), 417-461. Leydesdorff, L. (1989). Words and Co-Words as Indicators of Intellectual Organization. Research Policy, 18 (4), 209-223. Leydesdorff, L. (1991). In Search of Epistemic Networks. Social Studies of Science, 21 , 75-110. Leydesdorff, L. (1992). A Valid ation Study of “LEXIMAPPE.” Scientometrics, 25, 295- 312. Leydesdorff, L. (1995). The Challenge of Scientometrics: The development, measurement, and self-organization of sc ientific communications . Leiden: DSWO Press, Leiden University; at http://www.universal- publishers.com/book.php?method=ISBN&book=1581126816 . Leydesdorff, L. (1997). Why Words and Co-W ords Cannot Map the Development of the Sciences. Journal of the American Society for Information Science, 48 (5), 418- 427. Leydesdorff, L. (2010). The Communication of Meaning and the Structuration of Expectations: Giddens’ “Structura tion Theory” and Luhmann’s “Self- Organization”. Journal of the American Society for Information Science and Technology, 61 (10), 2138-2150. Leydesdorff, L., & Hellsten, I. (2005). Metaphors and Diaphors in Science Communication: Mapping the Case of ‘Stem-Cell Research’. Science Communication, 27 (1), 64-99. Leydesdorff, L., & Probst, C. (2009). The Delinea tion of an Interdisci plinary Specialty in terms of a Journal Set: The Ca se of Communication Studies. Journal of the American Society for Information Science and Technology, 60 (8), 1709-1718. Leydesdorff, L., & Vaughan, L. (2006). Co-occurr ence Matrices and thei r Applications in Information Science: Extending ACA to the Web Environm ent. Journal of the American Society for Information Science and Technology, 57 (12), 1616-1628. Mead, G. H. (1934). The Point of View of So cial Behaviourism. In C. H. Morris (Ed.), Mind, Self, & Society fr om the Standpoint of a Social Behaviourist. Works of G. H . Mead (Vol. 1, pp. 1-41). Chicago and London: University of Chicago Press. Mogoutov, A., Cambrosio, A., Keating, P., & Mu star, P. (2008). Bi omedical innovation at the laboratory, clinical and commerci al interface: A new method for mapping research projects, publica tions and patents in th e field of microarrays. Journal of Informetrics, 2 (4), 341-353. Moody, J., McFarland, D., & Bender-deMoll, S. (2005). Dynamic Netw ork Visualization. American Journal of Sociology, 110 (4), 1206-1241. 16 17 Osgood, C. E., Suci, G., & Tannenbaum, P. (1957). The measurement of meaning . Urbana: University of Illinois Press. Salton, G., & McGill, M. J. (1983). Introduction to Modern Information Retrieval . Auckland, etc.: McGraw-Hill. Scheufele, D. A. (1999). Framing as a theory of media effects. The Journal of Communication, 49 (1), 103-122. Small, H. (1973). Co-citation in the Scient ific Literature: A New m easure of the Relationship between Two Documents. Journal of the American Society for Information Science, 24 (4), 265-269. Small, H., & Griffith, B. (1974). The St ructure of Scientif ic Literature I. Science Studies 4 , 17-40. Spark Jones, K. (1972). A sta tistical interpretati on of term importance in automatic indexing. Journal of Documentation, 28 (1), 11-21. Stegmann, J., & Grohmann, G. (2003), H ypothesis generation guided by co-word clustering, Scientometrics, 56 (1), 111-135. Van Atteveldt, W. (2005). Automatic codebook acquisition. Paper presented at the workhop Methods and Techniques Innovations and Applications in Political Science Politicologenetmaal 2005 Antw erp, 19-20 May 2005; available at http://www.vanatteveldt .com/pub/pe05WvA.pdf . Van Eck, N. J., & Waltman, L. (2010). Software survey: V OSviewer, a computer program for bibliometric m apping. Scientometrics, 84 (2), 523-538. White, H. D. (2003). Author Coci tation Analysis and Pearson’s r . Journal of the American Society for Information Science and Technology, 54 (13), 1250-1259. White, H. D. (2004). Reply to Bensman. Journal of the American Society for In formation Science and Technology, 55 (9), 843-844. Wittgenstein, L. (1953). Philosophical investigations . New York: Macmillan.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment