MM Algorithms for Minimizing Nonsmoothly Penalized Objective Functions

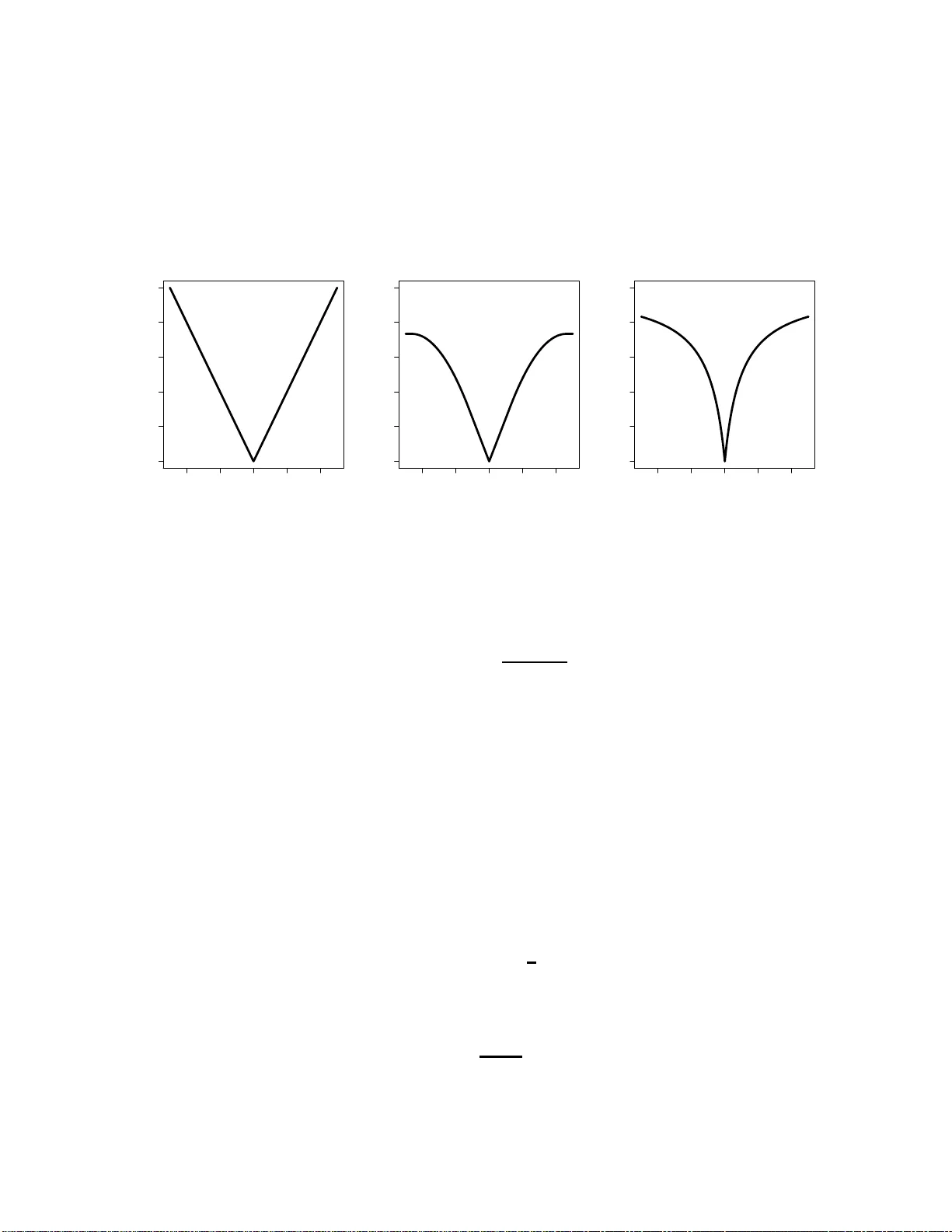

In this paper, we propose a general class of algorithms for optimizing an extensive variety of nonsmoothly penalized objective functions that satisfy certain regularity conditions. The proposed framework utilizes the majorization-minimization (MM) al…

Authors: Elizabeth D. Schifano, Robert L. Strawderman, Martin T. Wells
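
To make the majorization-minimization idea mentioned in the abstract concrete, here is a minimal sketch of one standard MM instance: iterative soft thresholding for the lasso objective 0.5 * ||y - X b||^2 + lam * ||b||_1. It is an illustration under stated assumptions, not necessarily the algorithm the authors propose, since the abstract shown here is truncated. The function names (`soft_threshold`, `objective`, `mm_lasso`), the choice of penalty, and the curvature bound `L` are all assumptions made for this example.

```python
def soft_threshold(z, t):
    """Minimizer of 0.5 * (b - z)**2 + t * |b| over b, for t >= 0."""
    if z > t:
        return z - t
    if z < -t:
        return z + t
    return 0.0


def objective(X, y, beta, lam):
    """Lasso objective: 0.5 * ||y - X beta||^2 + lam * ||beta||_1."""
    resid = [yi - sum(x * b for x, b in zip(row, beta)) for row, yi in zip(X, y)]
    return 0.5 * sum(r * r for r in resid) + lam * sum(abs(b) for b in beta)


def mm_lasso(X, y, lam, n_iter=200):
    """Hypothetical MM sketch: majorize the smooth least-squares term by a
    quadratic with curvature L, then minimize the surrogate in closed form."""
    n, p = len(X), len(X[0])
    # Assumption: X is not all zeros. The squared Frobenius norm equals
    # trace(X^T X), which upper-bounds the largest eigenvalue of X^T X,
    # so the quadratic surrogate lies above the objective everywhere.
    L = sum(x * x for row in X for x in row)
    beta = [0.0] * p
    history = [objective(X, y, beta, lam)]
    for _ in range(n_iter):
        resid = [y[i] - sum(X[i][j] * beta[j] for j in range(p)) for i in range(n)]
        # Gradient of the smooth part at the current iterate: -X^T (y - X beta).
        grad = [-sum(X[i][j] * resid[i] for i in range(n)) for j in range(p)]
        # Minimizing the surrogate separates by coordinate into soft thresholding.
        beta = [soft_threshold(b - g / L, lam / L) for b, g in zip(beta, grad)]
        history.append(objective(X, y, beta, lam))
    return beta, history
```

Because each surrogate touches the objective at the current iterate and lies above it elsewhere, the values recorded in `history` never increase, which is the defining descent property of MM. For an identity design with `y = [3.0, 0.5]` and `lam = 1.0`, the iterates approach `[2.0, 0.0]`, the soft-thresholded response.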