On the Equivalence of the General Covariance Union (GCU) and Minimum Enclosing Ellipsoid (MEE) Problems

In this paper we describe General Covariance Union (GCU) and show that solutions to GCU and the Minimum Enclosing Ellipsoid (MEE) problems are equivalent. This is a surprising result because GCU is defined over positive semidefinite (PSD) matrices wi…

Authors: Ottmar Bochardt, Jeffrey Uhlmann

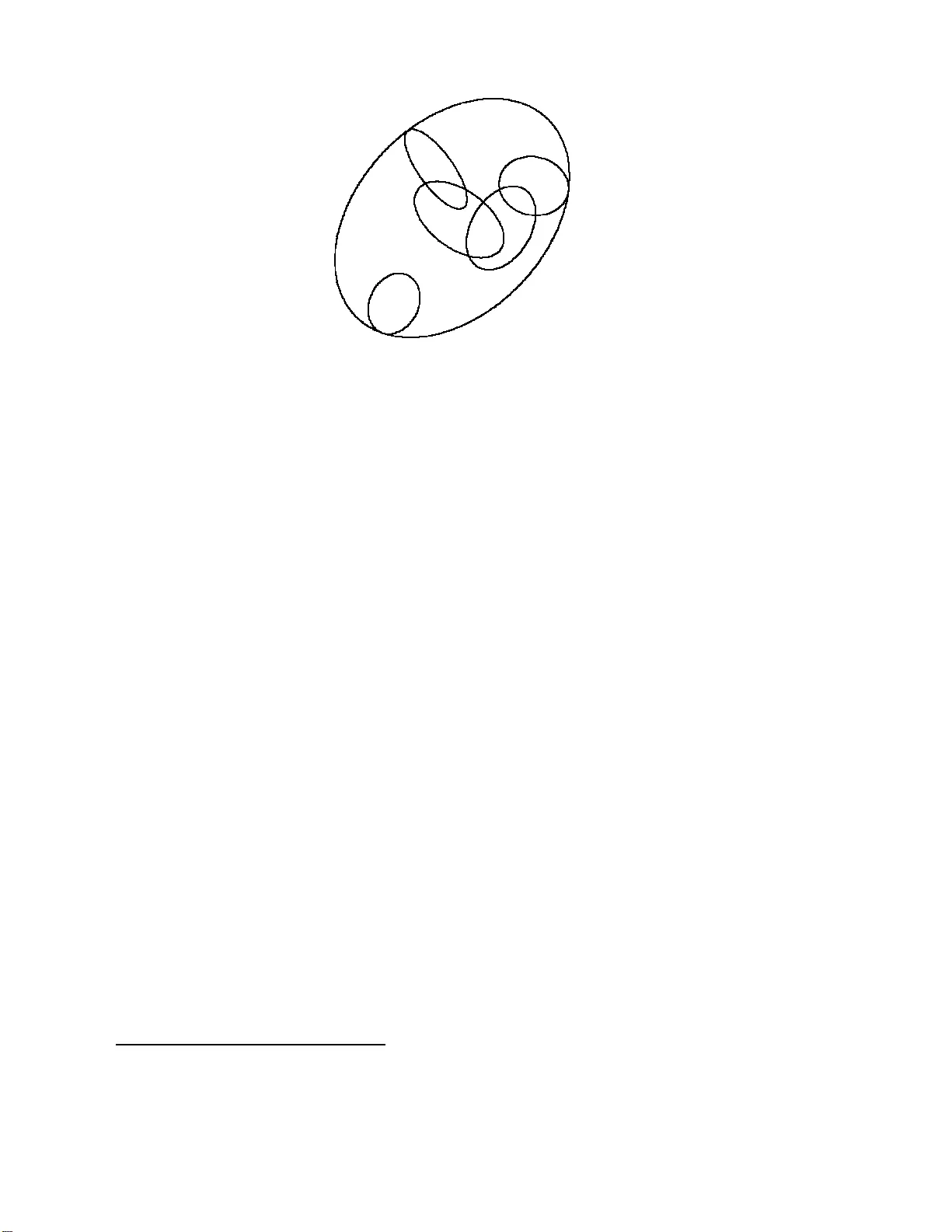

Ottmar Bochardt and Jeffrey Uhlmann
Department of Computer Science, 201 EBW, University of Missouri, Columbia, MO 65211
Phone: 573 884-2129, Fax: 573 882-8318

Abstract

In this paper we describe General Covariance Union (GCU) and show that solutions to GCU and the Minimum Enclosing Ellipsoid (MEE) problems are equivalent. This is a surprising result because GCU is defined over positive semidefinite (PSD) matrices with statistical interpretations while MEE involves PSD matrices with geometric interpretations. Their equivalence establishes an intersection between the seemingly disparate methodologies of covariance-based (e.g., Kalman) filtering and bounded region approaches to data fusion.

1 Introduction

Positive semidefinite matrices are often chosen to represent uncertainty in state estimation and control algorithms because of their special linear-algebraic properties. The Kalman filter, for example, uses PSD matrices to represent covariance upper bounds on the second central moments of unknown probability distributions relating to the state of a system of interest. Bounded region filters, by contrast, use PSD matrices to define ellipsoidal regions which bound the state of the system of interest.

The choice between representing uncertainty with covariance upper bounds versus ellipsoidal bounded regions leads to very different data fusion algorithms with very different filtering and control properties. The statistical interpretation associated with covariance matrices leads to use of the Kalman fusion equations in the case of independent estimates, or Covariance Intersection (CI) when the statistical relationships among estimates to be fused cannot be established [9].
Under their respective assumptions, Kalman and CI yield a fused estimate with the smallest possible covariance that is guaranteed to be an upper bound on the second central moment of the unknown probability distribution defining the state of the system. A bounded region filter, on the other hand, determines the minimum-size ellipsoid that bounds the intersection of the given ellipsoids, which are assumed to bound the true state of the system. In summary, one framework is statistical while the other is geometric.

Despite the use of PSD matrices and the exploitation of linearity properties, covariance and ellipsoidal frameworks employ very different mathematical techniques and have almost completely disjoint literatures. (This relationship also holds true more generally between statistics and computational geometry.) A consequence of this fact is that the sophisticated tools developed separately within each framework seem to have no applicability within the other framework. In this paper we make progress toward bridging this gap by establishing a surprising equivalence result.

The structure of this paper is as follows: The next section provides background for the Kalman filter, Covariance Intersection (CI), and Covariance Union (CU). We then present General Covariance Union (GCU) and show that its solution is equivalent to determining the Minimum Enclosing Ellipsoid (MEE) of the ellipsoids defined by its covariance matrix arguments. In other words, GCU and MEE are equivalent. We end with a discussion of the implications of this unexpected result.

2 The Covariance Upper Bound Framework for Data Fusion

An estimate of the squared error, or *covariance*, associated with measurements from a particular sensor can be modeled empirically by examining measurements taken of reference objects whose true states are known.
This permits an error covariance matrix to be associated with subsequent measurements of objects whose true states are not known. For example, the measured position of an object in two dimensions can be represented as a vector $a$ consisting of the object's estimated mean position, e.g., $a = [x, y]^T$, and an error covariance matrix $A$ that expresses the uncertainty associated with the estimated mean. If the error in the estimated mean vector is denoted as $\tilde{a}$, then the error covariance matrix is an estimate of the expected squared error, $\mathrm{E}[\tilde{a}\tilde{a}^T]$.

Ideally, $A$ would equal $\mathrm{E}[\tilde{a}\tilde{a}^T]$, but this is not generally possible to achieve in practice because measurement and process models are never perfect. To accommodate the effect of model errors, prediction and measurement covariances are typically overestimated so as to avoid underestimating the actual squared errors. In other words, a more conservative overestimate of errors is deemed preferable to underestimating the errors. One of many reasons for this preference is that it avoids the consequences of spuriously small errors causing a covariance matrix to become singular or numerically unstable.

Formally, a mean and covariance estimate is said to be consistent (or conservative) if and only if $A - \mathrm{E}[\tilde{a}\tilde{a}^T]$ is positive semidefinite (i.e., has no negative eigenvalues):

$$A \succeq \mathrm{E}[\tilde{a}\tilde{a}^T] \tag{1}$$

The statistical properties associated with a mean vector and its associated covariance upper bound are completely defined by the definition of consistency: all that can be said about a mean and covariance pair, $(a, A)$, is that the covariance matrix, $A$, is greater than or equal to (in the positive semidefinite sense) the expected squared error in its associated mean, $a$.

Having established a rigorous definition of what constitutes a *consistent* or *conservative* estimate, it is possible to certify the performance of the Kalman filter and Covariance Intersection.
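The consistency condition (1) can be checked numerically whenever the true expected squared error is available, for example in a simulation. The following is a minimal sketch, assuming NumPy; the function name `is_consistent`, the tolerance, and all numbers are illustrative assumptions and do not come from the paper.

```python
import numpy as np

def is_consistent(A, true_cov, tol=1e-9):
    """Return True if A - true_cov is positive semidefinite (within tol)."""
    diff = np.asarray(A, dtype=float) - np.asarray(true_cov, dtype=float)
    diff = 0.5 * (diff + diff.T)  # remove round-off asymmetry before eigvalsh
    return bool(np.linalg.eigvalsh(diff).min() >= -tol)

# Hypothetical "true" expected squared error, used only for illustration.
true_cov = np.array([[1.0, 0.3],
                     [0.3, 0.5]])

inflated = 1.5 * true_cov          # conservative overestimate
deflated = 0.8 * true_cov          # underestimate
lopsided = np.diag([2.0, 0.4])     # larger determinant, yet too small along one axis

print(is_consistent(inflated, true_cov))
print(is_consistent(deflated, true_cov))
print(is_consistent(lopsided, true_cov))
```

The `lopsided` case illustrates why the definition is stated as a matrix inequality: a covariance with a larger determinant than the true one can still underestimate the error in some direction.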
2.1 The Kalman Filter

Given two mean and covariance estimates $(a, A)$ and $(b, B)$, the data fusion problem of interest in this paper consists of determining a fused estimate $(c, C)$ that is guaranteed to be consistent and summarizes the information in the two estimates with error (in terms of the size of $C$) that is less than or equal to that of either estimate. If the two estimates are consistent and presumed to be statistically independent, then a joint estimate can be constructed as:

$$\left( \begin{bmatrix} a \\ b \end{bmatrix},\ \begin{bmatrix} A & 0 \\ 0 & B \end{bmatrix} \right). \tag{2}$$

Letting $\tilde{a}$ and $\tilde{b}$ denote the errors in the respective mean estimates, the key property of the joint covariance estimate is that it satisfies:

$$\begin{bmatrix} A & 0 \\ 0 & B \end{bmatrix} \succeq \begin{bmatrix} \mathrm{E}[\tilde{a}\tilde{a}^T] & 0 \\ 0 & \mathrm{E}[\tilde{b}\tilde{b}^T] \end{bmatrix}, \tag{3}$$

where the RHS matrix represents the true but unknown joint error covariance, which has zero cross covariance, $\mathrm{E}[\tilde{a}\tilde{b}^T] = 0$, due to the assumption of statistical independence. The estimated joint covariance is a conservative estimate of the true joint covariance because in practice $A \succeq \mathrm{E}[\tilde{a}\tilde{a}^T]$ and $B \succeq \mathrm{E}[\tilde{b}\tilde{b}^T]$. The latter inequalities hold by design in that intentional efforts are made to ensure that estimate error covariances do not underestimate the actual squared errors associated with sensor and kinematic models.

Given a consistent joint covariance for two given $n$-dimensional estimates, the Kalman filter defines the optimal linear projection of the $2n$-dimensional joint estimate back to the $n$-dimensional state space of interest. The result of the Kalman projection is a mean and covariance estimate $(c, C)$ that represents the optimal fusion of the two given mean and covariance estimates. In fact, if there is no additional information available (e.g., distribution information), then the Kalman fusion estimate is optimal according to virtually any error criteria [7].
In the case of statistically independent estimates $(a_1, A_1), (a_2, A_2), \ldots, (a_m, A_m)$, the Kalman fusion equations have a particularly simple form [7]:

$$\begin{aligned} C &= \left( A_1^{-1} + A_2^{-1} + \cdots + A_m^{-1} \right)^{-1} \\ c &= C \left( A_1^{-1} a_1 + A_2^{-1} a_2 + \cdots + A_m^{-1} a_m \right). \end{aligned} \tag{4}$$

If its underlying assumptions hold (i.e., consistency and independence), then the above Kalman equations ensure that the fused estimate $(c, C)$ is consistent, and $C \preceq A_i\ \forall i,\ 1 \le i \le m$. However, any presumption of statistical independence in practical data fusion contexts should be carefully considered. Specifically, virtually any sensor is subject to time-correlated errors induced by the particular conditions of its use (e.g., changes in temperature, platform vibrations, relative humidity), and errors associated with the nonlinear transformation of its measurements (e.g., from local spherical coordinates to a global coordinate frame) are deterministic and therefore non-independent.

If estimates $(a_1, A_1), (a_2, A_2), \ldots, (a_m, A_m)$ are each consistent, but not completely independent, then it is possible for the Kalman fused estimate $(c, C)$ to be *inconsistent*. In fact, if $A_i = \mathrm{E}[\tilde{a}_i\tilde{a}_i^T]\ \forall i,\ 1 \le i \le m$, then any degree of correlation guarantees inconsistency, i.e., $C \nsucceq \mathrm{E}[\tilde{c}\tilde{c}^T]$. The key point is that a Kalman fused estimate is not guaranteed to be consistent even if each of its given estimates is consistent.

The reason why the Kalman filter fails is that although two given estimates $(a, A)$ and $(b, B)$ may be individually consistent, the implicit joint covariance may not be if independence is assumed when the cross covariance between the estimates is $X = \mathrm{E}[\tilde{a}\tilde{b}^T] \neq 0$. Specifically:

$$\begin{bmatrix} \mathrm{E}[\tilde{a}\tilde{a}^T] & 0 \\ 0 & \mathrm{E}[\tilde{b}\tilde{b}^T] \end{bmatrix} \nsucceq \begin{bmatrix} \mathrm{E}[\tilde{a}\tilde{a}^T] & X \\ X^T & \mathrm{E}[\tilde{b}\tilde{b}^T] \end{bmatrix}. \tag{5}$$

In other words, the Kalman filter only fails to produce a consistent fused estimate when the implicit joint estimate is inconsistent. Although the equations are very simple and elegant for independent estimates, the Kalman filter is also defined generally for any consistent joint covariance with $X \neq 0$ [7]. Therefore, the Kalman filter is guaranteed to produce consistent estimates as long as the given estimates are consistent and their cross covariance is known [3]. Unfortunately, this poses significant challenges. The first challenge is that the cross covariance information must in principle be determined exactly. This can be seen by examining the difference between two joint covariance matrices with different cross terms:

$$\begin{bmatrix} A & X \\ X^T & B \end{bmatrix} - \begin{bmatrix} A & Y \\ Y^T & B \end{bmatrix} = \begin{bmatrix} 0 & X - Y \\ (X - Y)^T & 0 \end{bmatrix}. \tag{6}$$

The difference matrix is not PSD for any case in which $X \neq Y$. The need for absolutely perfect cross covariance information presents difficulties when estimates are the products of nonlinear operations (e.g., coordinate transformations, kinematic time projections, human-derived estimates) because the error processes are not perfectly modeled. For example, the same approximate nonlinear transformation equations may be applied to convert different radar observations of an object to a common coordinate frame, so the errors committed are clearly not independent, but it may not be possible to determine exact cross covariances.

Kalman's original derivation of his eponymous filter was based on orthogonal projection theory, and the fact that there exists a simple Bayesian interpretation of the result when error distributions are Gaussian was presented only as an interesting special case [5]. However, many subsequent references to the Kalman filter incorrectly suggest that the Kalman filter *requires* assumptions of Gaussianity.¹
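To make the role of the independence assumption concrete, the sketch below implements the independent-estimate fusion equations (4) and then fuses an estimate with an exact copy of itself, which is the extreme case of fully correlated inputs. It assumes NumPy; the function name `kalman_fuse` and all numbers are illustrative assumptions, and $A$ is assumed to equal the true error covariance exactly.

```python
import numpy as np

def kalman_fuse(means, covs):
    """Fuse estimates assumed independent, following equations (4)."""
    infos = [np.linalg.inv(np.asarray(A, dtype=float)) for A in covs]
    C = np.linalg.inv(sum(infos))
    c = C @ sum(Y @ np.asarray(a, dtype=float) for Y, a in zip(infos, means))
    return c, C

# Independent case: two hypothetical estimates with identity covariance.
c_ind, C_ind = kalman_fuse([[0.0, 0.0], [2.0, 0.0]], [np.eye(2), np.eye(2)])

# Fully correlated case: the same estimate is (wrongly) fused with itself.
a = np.array([1.0, 2.0])
A = np.eye(2)                      # assumed equal to the true error covariance
c_dup, C_dup = kalman_fuse([a, a], [A, A])

# The fused mean is unchanged, so its true error covariance is still A,
# yet the reported covariance has been halved: C_dup - A is not PSD.
min_eig = float(np.linalg.eigvalsh(C_dup - A).min())
print(c_dup, C_dup, min_eig)
```

Since the duplicated input adds no information, the halved covariance is inconsistent in the sense of (1); this is the failure mode that the independence assumption hides.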
It turns out that the assumption of estimate independence is actually the only problematic assumption because it typically cannot be guaranteed in practice. Relaxing the independence assumption leads to the more general fusion equations of the Covariance Intersection (CI) method, which has proven invaluable for a wide variety of practical applications [2, 4].

¹ In fact, one commonly-cited motivation for investigating the application of neural networks and fuzzy logic is the claim that the Kalman filter imposes restrictive Gaussianity assumptions that cannot be satisfied in many applications. The fact is that the use of covariance upper bounds was recognized as necessary when the first Kalman filters were implemented in the late 1960s, and such bounds are incompatible with PDF interpretations. It was shown by Jazwinski in his classic 1970 book that the standard practice of using covariance upper estimates does not undermine the integrity of the Kalman filter [3].

3 Covariance Intersection (CI)

The general mean and covariance data fusion problem can be formulated in terms of the joint covariance structure that implicitly exists between a given pair of estimates $(a, A)$ and $(b, B)$:

$$\begin{bmatrix} A & X \\ X^T & B \end{bmatrix} \tag{7}$$

where $X$ represents the actual, but unknown, cross covariance between the two estimates. If $X$ were known, then it would be possible to apply more general formulations of the Kalman filter equations to produce an optimal fused estimate. Unfortunately, these generalizations only guarantee consistency if the cross covariance is known exactly, i.e., it cannot be conservatively approximated in any way analogous to the way conservative covariance estimates are used.

Without knowledge of $X$, the only way to ensure consistency in the application of the Kalman filter is to identify a joint covariance that is guaranteed to be consistent based on the information available. In the present context, therefore, a joint covariance matrix $M$ must be determined such that:

$$M \succeq \begin{bmatrix} A & X \\ X^T & B \end{bmatrix} \tag{8}$$

for every possible cross covariance $X$. It can be inferred from the symmetry of the unknown cross covariance information (i.e., $M$ must be consistent for any instantiation $X = \mathcal{X}$ and for $X = -\mathcal{X}$) that the off-diagonal blocks of $M$ should be zero, and its diagonal blocks must be sufficiently larger than $A$ and $B$ to account for the effects of all possible degrees of correlation among the error components of the mean estimates $a$ and $b$. It has been shown (appendix 14 of [9]) that a consistent and tight joint covariance $M$ can be generated by selecting a scalar value $\omega$, $0 \le \omega \le 1$, as:

$$\begin{bmatrix} \frac{1}{\omega} A & 0 \\ 0 & \frac{1}{1-\omega} B \end{bmatrix} \succeq \begin{bmatrix} A & X \\ X^T & B \end{bmatrix} \tag{9}$$

where $\omega$ is chosen to minimize the size (e.g., determinant) of the covariance produced by the Kalman filter update equations for the estimates $(a, \frac{1}{\omega} A)$ and $(b, \frac{1}{1-\omega} B)$. This can be generalized for an arbitrary number of estimates:

$$\begin{bmatrix} \frac{1}{\omega_1} A_1 & 0 & \cdots & 0 \\ 0 & \frac{1}{\omega_2} A_2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \frac{1}{\omega_m} A_m \end{bmatrix} \succeq \begin{bmatrix} A_1 & X_{1,2} & \cdots & X_{1,m} \\ X_{2,1} & A_2 & \cdots & X_{2,m} \\ \vdots & \vdots & \ddots & \vdots \\ X_{m,1} & X_{m,2} & \cdots & A_m \end{bmatrix} \tag{10}$$

where $\sum_{i=1}^{m} \omega_i = 1$, and the parameters are determined to minimize the covariance resulting from the fusion of the $m$ estimates.

The above inequality provides a general (and optimal) mechanism for obtaining a consistent joint covariance when given only the block diagonals of an unknown joint covariance matrix. The use of appropriate $\omega$-parameterized covariances in the Kalman update equations yields the CI fusion equations. The general approach of determining consistent joint covariances can also be applied to solve related problems.
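The two-estimate CI construction in (9) can be sketched by applying the independent-estimate Kalman update to $(a, \frac{1}{\omega}A)$ and $(b, \frac{1}{1-\omega}B)$ and searching over $\omega$. The sketch below assumes NumPy and uses a plain grid search on the determinant; the function name, the grid resolution, and the numbers are illustrative assumptions, and a practical implementation would likely use a one-dimensional optimizer instead.

```python
import numpy as np

def covariance_intersection(a, A, b, B, n_grid=999):
    """CI fusion of (a, A) and (b, B); omega chosen by grid search on det(C)."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    Ai = np.linalg.inv(np.asarray(A, dtype=float))
    Bi = np.linalg.inv(np.asarray(B, dtype=float))
    best = None
    for w in np.linspace(0.001, 0.999, n_grid):
        # Kalman update for (a, A / w) and (b, B / (1 - w)).
        C = np.linalg.inv(w * Ai + (1.0 - w) * Bi)
        d = np.linalg.det(C)
        if best is None or d < best[0]:
            c = C @ (w * (Ai @ a) + (1.0 - w) * (Bi @ b))
            best = (d, float(w), c, C)
    return best[1], best[2], best[3]

# Hypothetical estimates that are each accurate along a different axis.
A = np.diag([1.0, 4.0])
B = np.diag([4.0, 1.0])
w, c, C = covariance_intersection([0.0, 0.0], A, [1.0, 1.0], B)
print(w, c, C)
```

For this symmetric pair the search settles on $\omega \approx 0.5$, giving a fused covariance close to $1.6\,I$, which is larger than the $0.8\,I$ that equations (4) would report under an independence assumption.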
One such related problem is the following: given estimates $(a, A)$ and $(b, B)$ that are correlated to an unknown extent, the covariance of $a + b$ can be computed as $\frac{1}{\omega} A + \frac{1}{1-\omega} B$. This is referred to as Covariance Addition (CA).

3.1 Covariance Union (CU)

Covariance Intersection addresses the general form of the data fusion problem for mean and covariance estimates, but in practice a different problem can arise before data fusion can even be performed. Specifically, what is to be done if two estimates $(a, A)$ and $(b, B)$, purportedly relating to the state of the same real-world object, are determined to be mutually inconsistent with each other, i.e., the difference between their means is much larger than what can be expected based on their respective error covariance estimates? For example, if two mean position estimates differ by more than a kilometer, but their respective covariances suggest that each mean is accurate to within a meter, then clearly something is wrong.

One mechanism for detecting statistically significant deviations between estimates is to compute Mahalanobis distances [6]. The Mahalanobis distance between estimates $(a, A)$ and $(b, B)$ is defined as:

$$(a - b)^T (A + B)^{-1} (a - b), \tag{11}$$

which is essentially just the squared distance between the means as normalized by the sum of their respective covariances. Intuitively, if the covariances are large, then a large difference between the mean vectors $a$ and $b$ is not surprising, so the Mahalanobis distance is small. However, if the covariances are very small, then even small differences between the means may yield a large Mahalanobis distance. A large Mahalanobis distance may tend to indicate that the estimates are not consistent with each other, but a user-defined threshold is required to define what constitutes an acceptable deviation.²
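The distance in (11) is straightforward to compute. The sketch below assumes NumPy; the function name `mahalanobis_sq`, the threshold value, and the numbers are illustrative assumptions, since the text leaves the threshold to the user.

```python
import numpy as np

def mahalanobis_sq(a, A, b, B):
    """Distance (11): (a - b)^T (A + B)^{-1} (a - b)."""
    d = np.asarray(a, dtype=float) - np.asarray(b, dtype=float)
    S = np.asarray(A, dtype=float) + np.asarray(B, dtype=float)
    return float(d @ np.linalg.solve(S, d))

THRESHOLD = 9.0  # hypothetical user-defined threshold

a, b = [0.0, 0.0], [4.0, 4.0]
tight = mahalanobis_sq(a, np.eye(2), b, np.eye(2))                # small covariances
loose = mahalanobis_sq(a, 16.0 * np.eye(2), b, 16.0 * np.eye(2))  # large covariances

print(tight, tight > THRESHOLD)
print(loose, loose > THRESHOLD)
```

The same separation between the means is flagged when the covariances are small and accepted when they are large, matching the intuition given above.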
When the threshold is exceeded, the estimates are regarded as being contradictory and some kind of action must be taken. Resolving such inconsistencies among estimates is sometimes referred to as *deconfliction* [8].

The Covariance Intersection method guarantees consistency as long as the estimates to be fused are each consistent. In the deconfliction problem it is only known that one of the estimates, either $(a, A)$ or $(b, B)$, is a consistent estimate of the state of the object of interest. Because it is not generally possible to know which estimate is spurious, the only way to rigorously combine the estimates is to form a unioned estimate, $(u, U)$, that is guaranteed to be consistent with respect to both of the two estimates. Such a unioned estimate can be constructed [10] by computing a mean vector $u$ and covariance matrix $U$ such that:

$$\begin{aligned} U &\succeq A + (u - a)(u - a)^T \\ U &\succeq B + (u - b)(u - b)^T \end{aligned} \tag{12}$$

where some measure of the size of $U$, e.g., determinant, is minimized. This Covariance Union (CU) of the two estimates can be subsequently fused with other consistent estimates using CI. The CU equations generalize directly for the case of $m > 2$ estimates:

$$\begin{aligned} U &\succeq A_1 + (u - a_1)(u - a_1)^T \\ U &\succeq A_2 + (u - a_2)(u - a_2)^T \\ &\ \ \vdots \\ U &\succeq A_m + (u - a_m)(u - a_m)^T \end{aligned} \tag{13}$$

² It must be emphasized that the use of a threshold on Mahalanobis distance is not the only possible mechanism for identifying potentially spurious estimates, but some user-defined mechanism is required. Otherwise there is no way to distinguish fault conditions from low probability events. In other words, models for fault conditions are inherently application-specific.

Intuitively, the CU equations simply say that if the estimate $(a, A)$ is consistent, then the translation of the vector $a$ to $u$ will require its covariance to be enlarged by the addition of a matrix at least as large as the outer product of $(u - a)$ in order to be consistent. The same reasoning applies if the estimate $(b, B)$ is consistent. Covariance Union therefore determines the mean vector $u$ having the smallest covariance $U$ that is large enough to guarantee consistency regardless of which of the two given estimates is consistent. The resulting covariance may be significantly larger than either of the given covariances, but this is an accurate reflection of the actual uncertainty that exists due to the conflict between the two estimates. The key fact is that the CU estimate satisfies the definition of consistency.

As a simple example of a CU construction, consider two estimates $(a, A)$ and $(b, B)$ of the location of an object observed from two nodes in a network. The estimate from the first node places the mean position at $a = [0, 0]^T$, and the second node places it at $b = [4, 4]^T$, and each has an error covariance equal to the identity matrix $I$. If it is determined that the two estimates are statistically inconsistent with each other, thus implying that one of the estimates is not a consistent estimate of the object's location, then deconfliction must be performed. The optimal CU deconflicted estimate is:

$$u = \begin{bmatrix} 2 \\ 2 \end{bmatrix}, \qquad U = \begin{bmatrix} 5 & 4 \\ 4 & 5 \end{bmatrix} \tag{14}$$

It is straightforward to verify that this estimate $(u, U)$ is in fact consistent with respect to either or both of the estimates $(a, A)$ and $(b, B)$. If $(a, A)$ is a consistent estimate of the target's state, then the covariance $U$ for mean $u$ must be greater than or equal to $A + (u - a)(u - a)^T$, which it is.
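The verification for the example in (14) can be carried out numerically. The sketch below assumes NumPy; the helper names `cu_slack` and `is_psd` are illustrative assumptions. It only checks the CU inequalities (12) for a given candidate and does not solve the CU optimization.

```python
import numpy as np

def cu_slack(u, U, a, A):
    """Return U - (A + (u - a)(u - a)^T); a PSD slack means (12) holds."""
    d = np.asarray(u, dtype=float) - np.asarray(a, dtype=float)
    return np.asarray(U, dtype=float) - (np.asarray(A, dtype=float) + np.outer(d, d))

def is_psd(M, tol=1e-9):
    return bool(np.linalg.eigvalsh(0.5 * (M + M.T)).min() >= -tol)

a, A = np.array([0.0, 0.0]), np.eye(2)
b, B = np.array([4.0, 4.0]), np.eye(2)
u, U = np.array([2.0, 2.0]), np.array([[5.0, 4.0], [4.0, 5.0]])

slack_a = cu_slack(u, U, a, A)
slack_b = cu_slack(u, U, b, B)
print(slack_a, slack_b)

# A slightly smaller candidate covariance violates the constraint.
U_small = np.array([[5.0, 3.0], [3.0, 5.0]])
print(is_psd(cu_slack(u, U_small, a, A)))
```

Both slacks are exactly zero for this example, so both constraints are active at the stated solution.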
It can be verified that the estimate $(u, U)$ is similarly consistent with respect to the estimate $(b, B)$. Therefore, if either of the two estimates represents a consistent estimate of the state of the object, then the CU estimate is also consistent.

4 General Covariance Union (GCU)

Consistency of the CU equations rests on an implicit assumption that the estimates to be combined are not correlated. Specifically, the CU inequalities for the estimate $(u, U)$:

$$\begin{aligned} U &\succeq A_1 + (u - a_1)(u - a_1)^T \\ U &\succeq A_2 + (u - a_2)(u - a_2)^T \\ &\ \ \vdots \\ U &\succeq A_m + (u - a_m)(u - a_m)^T \end{aligned} \tag{15}$$

technically hold only if the errors associated with $A_i$ are uncorrelated with $a_i$. This is true for most types of common process models, but not for certain kinds of recursive control and colored noise models. For example, suppose that we are given a 1D estimate $(0, 0)$, i.e., zero mean and zero variance. The underpinning assumptions of CU imply that if the mean is translated to 1, the new consistent estimate must have variance $0 + 1^2 = 1$. If instead the mean is translated to 2, the variance must be $0 + 2^2 = 4$. However, suppose a 2-step translation is performed: First the estimate is translated 1 unit to produce $(0 + 1, 0 + 1^2) = (1, 1)$, then that estimate is translated 1 more unit. According to CU the result should be $(1 + 1, 1 + 1^2) = (2, 2)$, but this is clearly incorrect because translating the estimate $(0, 0)$ by 2 units must produce $(2, 0 + 2^2) = (2, 4)$ to ensure consistency. The problem, of course, is that the translations in the sequence of steps are not independent.

More generally, given a mean and covariance estimate $(a, A)$, the covariance of $a + x$ is equal to $A + xx^T$ if and only if the estimate error, $\tilde{a}$, is independent of the translation $x$.
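The two-step translation example can be repeated with the Covariance Addition (CA) rule from Section 3, which in one dimension bounds the variance of $a + x$ by $\frac{1}{\omega}\sigma^2 + \frac{1}{1-\omega}x^2$. The sketch below, assuming NumPy, minimizes that bound over a grid of $\omega$ for the second step, where the intermediate estimate is $(1, 1)$ and the shift is 1. The function name and grid are illustrative assumptions.

```python
import numpy as np

def ca_variance(var_a, shift, n_grid=9999):
    """Smallest CA bound var_a / w + shift**2 / (1 - w) over a grid of w in (0, 1)."""
    w = np.linspace(1e-4, 1.0 - 1e-4, n_grid)
    bounds = var_a / w + shift**2 / (1.0 - w)
    k = int(np.argmin(bounds))
    return float(w[k]), float(bounds[k])

# Second step of the example: estimate (1, 1) translated by one more unit.
naive_variance = 1.0 + 1.0**2            # independence assumed, gives 2
w_opt, ca_bound = ca_variance(1.0, 1.0)  # unknown correlation allowed

print(naive_variance, w_opt, ca_bound)
```

The minimized CA bound is 4 (attained at $\omega = 0.5$), which agrees with the variance $0 + 2^2 = 4$ required for a direct two-unit translation, whereas the independence-based rule reports only 2. In one dimension the minimum of the CA bound has the closed form $(\sigma + |x|)^2$.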
If the estimate error and the translation are correlated to an unknown extent, then the covariance of $a + x$ must be formulated using Covariance Addition (CA) as $\frac{1}{\omega} A + \frac{1}{1-\omega} xx^T$. Applying CA to the CU inequalities (15) gives the generalized GCU formulation:

$$\begin{aligned} U &\succeq \tfrac{1}{\omega_1} A_1 + \tfrac{1}{1-\omega_1} (u - a_1)(u - a_1)^T \\ U &\succeq \tfrac{1}{\omega_2} A_2 + \tfrac{1}{1-\omega_2} (u - a_2)(u - a_2)^T \\ &\ \ \vdots \\ U &\succeq \tfrac{1}{\omega_m} A_m + \tfrac{1}{1-\omega_m} (u - a_m)(u - a_m)^T \end{aligned} \tag{16}$$

where the optimization problem now involves $m$ free parameters ($0 \le \omega_i \le 1$), like CI, but with the semidefinite inequalities of standard CU. This appears to represent a daunting challenge to solve efficiently. However, plots of solutions for low-dimensional problems provide a critical insight. Specifically, Figure 1 depicts the 1-sigma solution contour that minimally encloses the 1-sigma contours of the estimates that are unioned. This suggests (but of course does not prove) that the GCU solution is equivalent to the corresponding MEE solution obtained by interpreting the covariances as ellipsoids defined by their 1-sigma contours. That they are in fact equivalent is established in the following section.

Figure 1: A typical General Covariance Union (GCU) optimization problem. The five small covariance ellipses represent the five estimates to be unioned. Surprisingly, they seem to be circumscribed by the minimal-determinant GCU solution.

5 The Equivalence of GCU and MEE

This section provides a formal proof of the equivalence of MEE and GCU.³ Our treatment of ellipsoid enclosure follows the discussion in §3.7.1 of [1], so several of its equations are repeated here for easy reference. In [1], the enclosing and enclosed ellipsoids are represented via parameter triples $\{A_i, b_i, c_i\}$ where index 0 refers to the enclosing ellipsoid:

$$\{ x \in \mathbb{R}^n \mid x^T A_i x + 2 x^T b_i + c_i \le 0 \}, \quad i = 0, \ldots, m, \tag{17}$$

$$A_i = A_i^T \succ 0 \quad \text{and} \quad b_i^T A_i^{-1} b_i - c_i > 0.$$

The triples $\{A_i, b_i, c_i\}$ are homogeneous, so the authors normalize the enclosing ellipsoid's parameters via $c_0 = b_0^T A_0^{-1} b_0 - 1$ and then use the S-lemma to represent the ellipsoid-enclosure constraint as a matrix inequality:

$$\begin{bmatrix} A_0 & b_0 \\ b_0^T & b_0^T A_0^{-1} b_0 - 1 \end{bmatrix} - \tau_i \begin{bmatrix} A_i & b_i \\ b_i^T & c_i \end{bmatrix} \preceq 0, \quad \tau_i \ge 0, \quad i = 1, \ldots, m. \tag{18}$$

That inequality is nonlinear, so they use a Schur complement argument to expand it:

$$\begin{bmatrix} A_0 & b_0 & 0 \\ b_0^T & -1 & b_0^T \\ 0 & b_0 & -A_0 \end{bmatrix} - \tau_i \begin{bmatrix} A_i & b_i & 0 \\ b_i^T & c_i & 0 \\ 0 & 0 & 0 \end{bmatrix} \preceq 0, \quad \tau_i \ge 0, \quad i = 1, \ldots, m. \tag{19}$$

³ To make the exposition concise our proof assumes that the covariance matrices are nonsingular, but the equivalence holds generally.

The minimum-determinant ellipsoid enclosure can then be posed as a Maxdet [11] optimization:

$$\begin{aligned} &\text{minimize} && \log\det A_0^{-1} \\ &\text{subject to} && A_0 \succ 0, \quad \tau_i \ge 0, \quad i = 1, \ldots, m, \\ &&& \begin{bmatrix} A_0 & b_0 & 0 \\ b_0^T & -1 & b_0^T \\ 0 & b_0 & -A_0 \end{bmatrix} - \tau_i \begin{bmatrix} A_i & b_i & 0 \\ b_i^T & c_i & 0 \\ 0 & 0 & 0 \end{bmatrix} \preceq 0, \quad i = 1, \ldots, m. \end{aligned} \tag{20}$$

5.1 Covariance ellipsoid enclosure as a Maxdet

In this section, we translate the ellipse-enclosure formulation (20) from the original $\{A, b, c\}$ triples to covariance/mean estimation parameters $(u, U)$. The covariance ellipsoid $E(u, U)$ for an estimate at mean $u$ with covariance matrix $U$ is defined as:

$$\begin{aligned} E(u, U) &= \{ x \in \mathbb{R}^n \mid (x - u)^T U^{-1} (x - u) \le 1 \} \\ &= \{ x \in \mathbb{R}^n \mid x^T U^{-1} x + 2 x^T (-U^{-1} u) + (u^T U^{-1} u - 1) \le 0 \} \end{aligned} \tag{21}$$

Comparison of (17) and (21) reveals the following correspondences:

$$A \Leftrightarrow U^{-1}, \qquad b \Leftrightarrow -U^{-1} u, \qquad c \Leftrightarrow u^T U^{-1} u - 1 \tag{22}$$

Apply (22) to the nonlinear matrix inequality (18); we will use the result in §5.2. The result is a GCU equation, so $A_i$ now denotes a constraint covariance, and $a_i$ its mean:

$$\begin{bmatrix} U^{-1} & -U^{-1} u \\ (-U^{-1} u)^T & u^T U^{-1} u - 1 \end{bmatrix} - \tau_i \begin{bmatrix} A_i^{-1} & -A_i^{-1} a_i \\ (-A_i^{-1} a_i)^T & a_i^T A_i^{-1} a_i - 1 \end{bmatrix} \preceq 0, \quad \tau_i \ge 0, \quad i = 1, \ldots, m. \tag{23}$$

Returning to the covariance ellipsoid Maxdet, apply (22) to (20):

$$\begin{aligned} &\text{minimize} && \log\det U \\ &\text{subject to} && U \succ 0, \quad \tau_i \ge 0, \quad i = 1, \ldots, m, \\ &&& \begin{bmatrix} U^{-1} & -U^{-1} u & 0 \\ (-U^{-1} u)^T & -1 & (-U^{-1} u)^T \\ 0 & -U^{-1} u & -U^{-1} \end{bmatrix} - \tau_i \begin{bmatrix} A_i^{-1} & -A_i^{-1} a_i & 0 \\ (-A_i^{-1} a_i)^T & a_i^T A_i^{-1} a_i - 1 & 0 \\ 0 & 0 & 0 \end{bmatrix} \preceq 0, \quad i = 1, \ldots, m. \end{aligned} \tag{24}$$

The matrix inequalities in (24) are nonlinear, due to the (quadratic) $U^{-1} u$ terms. Linearize them with the change of variables $v = -U^{-1} u$. Also, let $W = U^{-1}$ to obtain a more standardized Maxdet formulation:

$$\begin{aligned} &\text{minimize} && \log\det W^{-1} \\ &\text{subject to} && W \succ 0, \quad \tau_i \ge 0, \quad i = 1, \ldots, m, \\ &&& \begin{bmatrix} W & v & 0 \\ v^T & -1 & v^T \\ 0 & v & -W \end{bmatrix} - \tau_i \begin{bmatrix} A_i^{-1} & -A_i^{-1} a_i & 0 \\ (-A_i^{-1} a_i)^T & a_i^T A_i^{-1} a_i - 1 & 0 \\ 0 & 0 & 0 \end{bmatrix} \preceq 0, \quad i = 1, \ldots, m. \end{aligned} \tag{25}$$

After solving (25) for $v$ and $W$, the optimal $(u, U)$ are recovered as $U = W^{-1}$ and $u = -U v$.

5.2 Enclosure of a single ellipsoid

Consider a GCU constraint from (16) for a single estimate $(a, A)$ where $A$ is full rank:

$$U \succeq \tfrac{1}{\omega} A + \tfrac{1}{1-\omega} (u - a)(u - a)^T, \quad \omega \in [0, 1] \tag{26}$$

We shall prove that (26) defines the set of all estimates $(u, U)$ whose covariance ellipsoid $E(u, U)$ encloses $E(a, A)$. It then follows that the combined GCU constraints (16) define the set of all covariance ellipsoids which enclose all the $E(a_k, A_k)$, so the solution to the Maxdet formulation (25) is a minimum-determinant solution to (16).

Note that (26) only depends on the difference between $u$ and $a$ rather than their absolute values, and must hold for any arbitrary value of $u$. Therefore we may, without loss of generality, assume that $a = 0$ (a simple coordinate shift), which simplifies (26) to:

$$U \succeq \tfrac{1}{\omega} A + \tfrac{1}{1-\omega} u u^T, \quad \omega \in [0, 1] \tag{27}$$

A similar argument can be applied to a single instance from the ellipsoid-enclosure inequalities (23): it must hold for arbitrary values of $u$, and ellipsoid enclosure is a geometric property unaffected by coordinate shifts.
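The claim that (26) implies enclosure can be sanity-checked numerically before following the proof. The sketch below, assuming NumPy, builds the tightest $U$ allowed by (26) for several values of $\omega$, samples points on the boundary of $E(a, A)$, and evaluates the quadratic form that defines $E(u, U)$ in (21). The function names, sample count, and numbers are illustrative assumptions; a sampled check of this kind supports the claim but is not a proof.

```python
import numpy as np

def gcu_bound(u, a, A, w):
    """Right-hand side of (26): A / w + (u - a)(u - a)^T / (1 - w)."""
    d = np.asarray(u, dtype=float) - np.asarray(a, dtype=float)
    return np.asarray(A, dtype=float) / w + np.outer(d, d) / (1.0 - w)

def max_ellipsoid_value(u, U, a, A, n_samples=2000, seed=0):
    """Largest (x - u)^T U^{-1} (x - u) over sampled boundary points x of E(a, A)."""
    rng = np.random.default_rng(seed)
    z = rng.normal(size=(n_samples, len(a)))
    z /= np.linalg.norm(z, axis=1, keepdims=True)       # unit vectors
    L = np.linalg.cholesky(np.asarray(A, dtype=float))  # A = L L^T
    x = np.asarray(a, dtype=float) + z @ L.T            # (x-a)^T A^{-1} (x-a) = 1
    r = x - np.asarray(u, dtype=float)
    return float(np.max(np.einsum("ij,ij->i", r @ np.linalg.inv(U), r)))

# Hypothetical single estimate (a, A) and candidate mean u.
a = np.array([0.0, 0.0])
A = np.array([[2.0, 0.5], [0.5, 1.0]])
u = np.array([3.0, -1.0])

worst = max(max_ellipsoid_value(u, gcu_bound(u, a, A, w), a, A)
            for w in (0.1, 0.3, 0.5, 0.7, 0.9))
print(worst)                             # stays at or below 1: enclosure
print(max_ellipsoid_value(u, A, a, A))   # U = A with shifted mean: exceeds 1
```

Values at or below 1 mean every sampled boundary point of $E(a, A)$ lies inside $E(u, U)$; reusing $A$ itself as the covariance at the shifted mean fails the check, as expected.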
By the same coordinate shift we may again assume that $a = 0$, which simplifies (23) to:

$$\begin{bmatrix} U^{-1} & -U^{-1} u \\ (-U^{-1} u)^T & u^T U^{-1} u - 1 \end{bmatrix} - \tau \begin{bmatrix} A^{-1} & 0 \\ 0^T & -1 \end{bmatrix} \preceq 0, \quad \tau \ge 0 \tag{28}$$

Equation (28) implies the following scalar inequality for the lower right-hand main diagonal entry: $u^T U^{-1} u - 1 + \tau \le 0$. Since $u^T U^{-1} u \ge 0$ we must have $\tau \le 1$. Make that restriction explicit, to bring $\tau$ in line with the $\omega$ parameter of equation (27):

$$\begin{bmatrix} U^{-1} & -U^{-1} u \\ (-U^{-1} u)^T & u^T U^{-1} u - 1 \end{bmatrix} - \tau \begin{bmatrix} A^{-1} & 0 \\ 0^T & -1 \end{bmatrix} \preceq 0, \quad \tau \in [0, 1] \tag{29}$$

We will prove equivalence by demonstrating that (27) and (29) are mutual inverses. But first we must consider the singular points. For (27) they are at $\omega = 0$ and $\omega = 1$. As $\omega$ approaches 0, $U$ becomes unbounded and therefore $E(u, U)$ encloses any (bounded) covariance ellipsoid $E(0, A)$. The behavior for $\omega = 1$ depends on the value of $u$: if $u = 0$ then the ellipsoids are concentric and (27) reduces to the expected enclosure requirement $U \succeq A$. If $u \neq 0$ then the right-hand side approaches the sum of $A$ and an unbounded rank-1 adjustment in the direction of $u$. The result is an elliptic cylinder, with radial axis through 0 and $u$, which tightly encloses $E(0, A)$. So both singular points lead to enclosure of $E(0, A)$.

Now that the singular points have been considered, we can simply invert (27): move the rank-1 term to the left-hand side and apply the Sherman-Morrison formula. Because matrix inversion reverses the semidefinite ordering, the result simplifies to:

$$U^{-1} + \frac{(U^{-1} u)(U^{-1} u)^T}{1 - \omega - u^T U^{-1} u} \preceq \omega A^{-1} \tag{30}$$

The rank-1 term in (30) is bounded and positive semidefinite, since it is related to the bounded and positive semidefinite rank-1 term $\frac{1}{1-\omega} u u^T$ from (27).
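The inversion step that leads from (27) to (30), and the Schur complement form that the proof derives from it, can be spot-checked numerically. The sketch below assumes NumPy and uses hypothetical values of $A$, $u$, and $\omega$ with a $U$ that satisfies (27) strictly; it checks one instance only and is not a substitute for the derivation.

```python
import numpy as np

# Hypothetical instance with a = 0 (after the coordinate shift).
A = np.array([[2.0, 0.5], [0.5, 1.0]])
u = np.array([1.0, -0.5])
w = 0.6
U = A / w + np.outer(u, u) / (1.0 - w) + 0.1 * np.eye(2)  # satisfies (27) strictly

Ui_u = np.linalg.solve(U, u)             # U^{-1} u
denom = 1.0 - w - u @ Ui_u               # scalar in the denominator of (30)

# Sherman-Morrison: inverse of U minus the rank-1 term from (27).
lhs = np.linalg.inv(U - np.outer(u, u) / (1.0 - w))
rhs = np.linalg.inv(U) + np.outer(Ui_u, Ui_u) / denom

# (30) states rhs <= w * A^{-1} in the PSD ordering.
gap = w * np.linalg.inv(A) - rhs

# Block matrix obtained by a Schur complement on `denom`.
S = np.block([[w * np.linalg.inv(A) - np.linalg.inv(U), Ui_u[:, None]],
              [Ui_u[None, :], np.array([[denom]])]])

print(denom, np.linalg.eigvalsh(gap).min(), np.linalg.eigvalsh(S).min())
```

For this instance the denominator is positive, the two sides of the Sherman-Morrison identity agree, and both `gap` and the block matrix `S` have no negative eigenvalues.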
Because the rank-1 term in (30) is bounded and positive semidefinite, $1 - \omega - u^T U^{-1} u > 0$, so we can apply a Schur complement:

$$\begin{bmatrix} \omega A^{-1} - U^{-1} & U^{-1} u \\ (U^{-1} u)^T & 1 - \omega - u^T U^{-1} u \end{bmatrix} \succeq 0 \tag{31}$$

Finally, rearrange terms:

$$\begin{bmatrix} U^{-1} & -U^{-1} u \\ (-U^{-1} u)^T & u^T U^{-1} u - 1 \end{bmatrix} - \omega \begin{bmatrix} A^{-1} & 0 \\ 0^T & -1 \end{bmatrix} \preceq 0, \quad \omega \in (0, 1) \tag{32}$$

Equation (32) has the same form as (29) (except for the singular points $\omega = 0$ and $\omega = 1$, which were considered earlier). This completes the proof.

6 Discussion

In this paper we have established a connection between covariance-based and bounded-region models of uncertainty. In particular, we have shown that GCU and MEE have equivalent solutions. Thus, techniques for solving one problem can be directly applied to solve instances of the other. This equivalence is also suggestive of a potentially more general framework that subsumes the covariance and bounded-region approaches.

Acknowledgements: This work was supported by grants from the Office of Naval Research and the Naval Research Laboratory.

References

[1] Stephen Boyd, Laurent El Ghaoui, Eric Feron, and Venkataramanan Balakrishnan. Linear Matrix Inequalities in System and Control Theory, volume 15 of SIAM Studies in Applied Mathematics. Society for Industrial and Applied Mathematics, Philadelphia, PA, USA, 1994.

[2] J. Ferreira and J. Waldmann. Covariance intersection-based sensor fusion for sounding rocket tracking and impact area prediction. Control Engineering Practice, 15(4):389–409, April 2007.

[3] A. H. Jazwinski. Stochastic Processes and Filtering Theory. Academic Press, 1970.

[4] S. J. Julier and J. K. Uhlmann. Using covariance intersection for SLAM. Robotics and Autonomous Systems, 55(1):3–20, January 2007.

[5] R. E. Kalman. A new approach to linear filtering and prediction problems. ASME J. Basic Eng., 82:34–45, 1960.

[6] P. Mahalanobis. On the generalized distance in statistics. Proc. Natl. Inst. Science, 12:49–55, 1936.

[7] P. S. Maybeck. Stochastic Models, Estimation, and Control, volume 1. Academic Press, 1979.

[8] Ranjeev Mittu and Frank Segaria. Common operational picture (COP) and common tactical picture (CTP) management via a consistent networked information stream (CNIS). Proceedings of the Command and Control Research and Technology Symposium, 2000.

[9] J. K. Uhlmann. Dynamic Map Building and Localization for Autonomous Vehicles. PhD thesis, University of Oxford, 1995.

[10] Jeffrey Uhlmann. Covariance consistency methods for fault-tolerant distributed data fusion. Information Fusion, 4(3):201–215, Sept 2003.

[11] S.-P. Wu, L. Vandenberghe, and S. Boyd. Maxdet: Software for determinant maximization problems, alpha version. Stanford University, April 1996.
