A Quasi-Newton Approach to Nonsmooth Convex Optimization Problems in Machine Learning

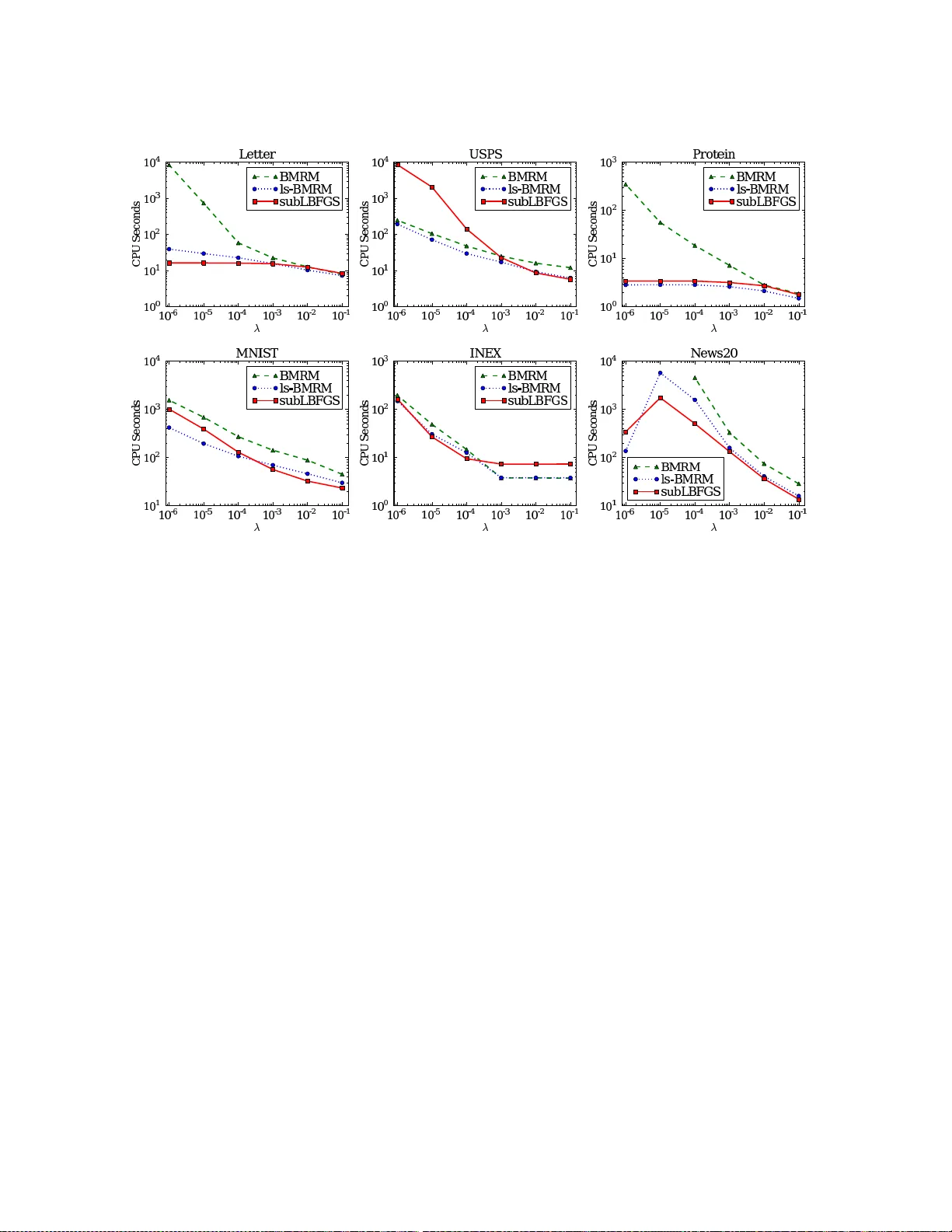

We extend the well-known BFGS quasi-Newton method and its limited-memory variant, LBFGS, to the optimization of nonsmooth convex objectives. We do so rigorously by generalizing three components of BFGS to subdifferentials: the local quad…

Authors: Jin Yu, S.V.N. Vishwanathan, Simon Guenter