Non-Sparse Regularization for Multiple Kernel Learning

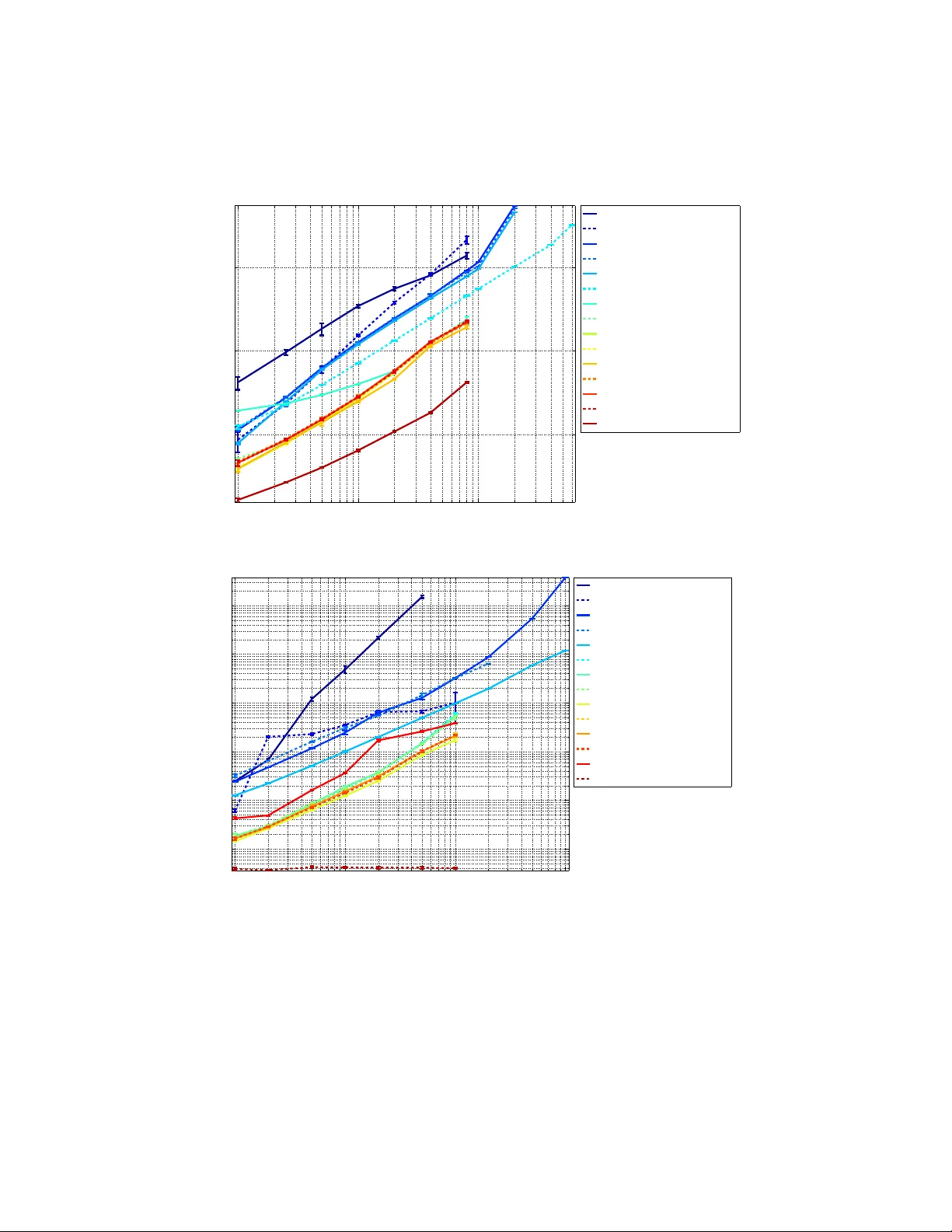

Learning linear combinations of multiple kernels is an appealing strategy when the right choice of features is unknown. Previous approaches to multiple kernel learning (MKL) promote sparse kernel combinations to support interpretability and scalabili…

Authors: Marius Kloft, Jörg Brefeld, S. Sonnenburg