BART: Bayesian additive regression trees

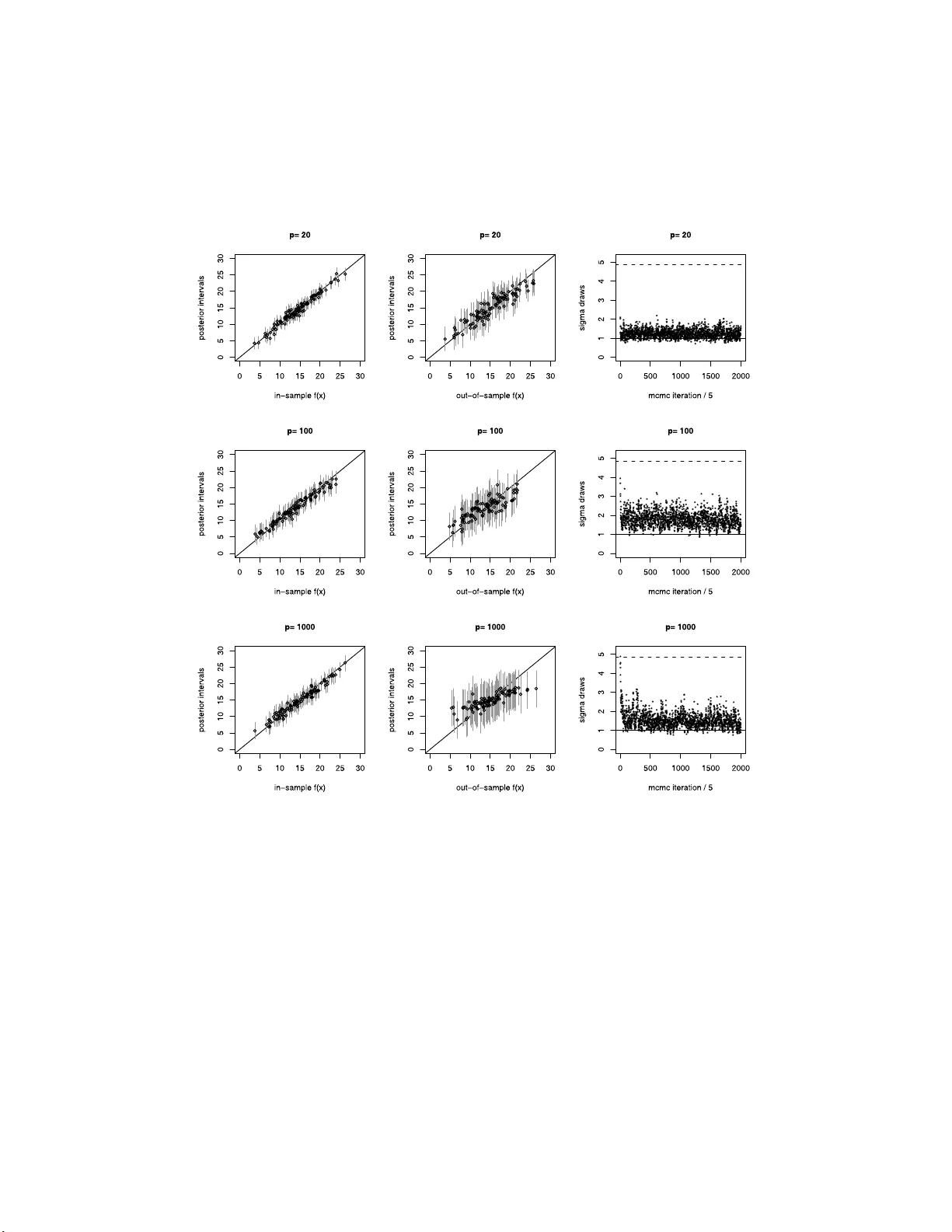

We develop a Bayesian "sum-of-trees" model where each tree is constrained by a regularization prior to be a weak learner, and fitting and inference are accomplished via an iterative Bayesian backfitting MCMC algorithm that generates samples from a po…

Authors: Hugh A. Chipman, Edward I. George, Robert E. McCulloch
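The abstract describes Bayesian backfitting only in words: each tree is refit to the partial residual left by all the other trees, and a shrinkage prior keeps every tree a weak learner. Below is a deliberately simplified Python sketch of that idea. It is not the authors' sampler. Its assumptions are labeled here and in the code. The "trees" are depth-1 stumps on a single predictor in [0, 1], with fixed, evenly spaced split points, so only leaf values and the noise variance are sampled. The full method also proposes changes to tree structure, which this sketch omits. The leaf prior N(0, σ_μ²) with σ_μ = 0.5/(2√m), the inverse-gamma noise prior with hypothetical parameters `nu` and `lam`, and the function name `fit_sum_of_stumps` are all illustrative choices.

```python
import math
import random


def fit_sum_of_stumps(xs, ys, m=20, n_iter=400, burn_in=200,
                      nu=3.0, lam=0.1, seed=0):
    """Simplified Bayesian backfitting for a sum of m depth-1 trees (stumps).

    Hypothetical simplification: split points are fixed on an even grid over
    [0, 1], so the sampler only draws leaf values and the noise variance.
    The real BART sampler also proposes changes to each tree's structure.
    Returns predict(x), the posterior-mean fit averaged over kept draws.
    """
    rng = random.Random(seed)
    n = len(xs)
    splits = [(j + 0.5) / m for j in range(m)]        # fixed split points (assumed)
    # side[j][i] is 0 if x_i falls in stump j's left leaf, 1 if in its right leaf.
    side = [[0 if x <= c else 1 for x in xs] for c in splits]
    sigma_mu = 0.5 / (2.0 * math.sqrt(m))             # leaf prior sd, shrinks as m grows
    leaves = [[0.0, 0.0] for _ in range(m)]           # [left, right] value of each stump
    fit = [0.0] * n                                    # current sum of all stumps at x_i
    sigma2 = 1.0
    kept = []

    for it in range(n_iter):
        for j in range(m):
            # Partial residual for stump j: y minus every other stump's contribution.
            sums, counts = [0.0, 0.0], [0, 0]
            for i in range(n):
                s = side[j][i]
                sums[s] += ys[i] - (fit[i] - leaves[j][s])
                counts[s] += 1
            # Conjugate normal draw for each leaf: N(0, sigma_mu^2) prior,
            # Gaussian likelihood with variance sigma2.
            new = []
            for s in (0, 1):
                prec = counts[s] / sigma2 + 1.0 / sigma_mu ** 2
                mean = (sums[s] / sigma2) / prec
                new.append(rng.gauss(mean, math.sqrt(1.0 / prec)))
            # Swap stump j's old contribution for the new one in the running fit.
            for i in range(n):
                s = side[j][i]
                fit[i] += new[s] - leaves[j][s]
            leaves[j] = new
        # Noise variance: inverse-gamma full conditional, prior IG(nu/2, nu*lam/2).
        sse = sum((y - f) ** 2 for y, f in zip(ys, fit))
        sigma2 = 1.0 / rng.gammavariate((nu + n) / 2.0, 2.0 / (nu * lam + sse))
        if it >= burn_in:
            kept.append([leaf[:] for leaf in leaves])

    def predict(x):
        # Average the sum-of-stumps prediction over all post-burn-in draws.
        total = 0.0
        for draw in kept:
            total += sum(draw[j][0] if x <= splits[j] else draw[j][1]
                         for j in range(m))
        return total / len(kept)

    return predict


if __name__ == "__main__":
    # Toy data: a step at x = 0.5 plus Gaussian noise (illustrative only).
    data_rng = random.Random(1)
    xs = [data_rng.random() for _ in range(200)]
    ys = [(1.0 if x > 0.5 else -1.0) + data_rng.gauss(0.0, 0.1) for x in xs]
    predict = fit_sum_of_stumps(xs, ys)
    print(round(predict(0.1), 2), round(predict(0.9), 2))
```

Each stump sees only what the other m − 1 stumps leave unexplained, so no single stump has to capture the whole signal. The small leaf prior keeps each individual contribution modest, which is the weak-learner role the abstract assigns to every tree.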