Large Scale Variational Inference and Experimental Design for Sparse Generalized Linear Models

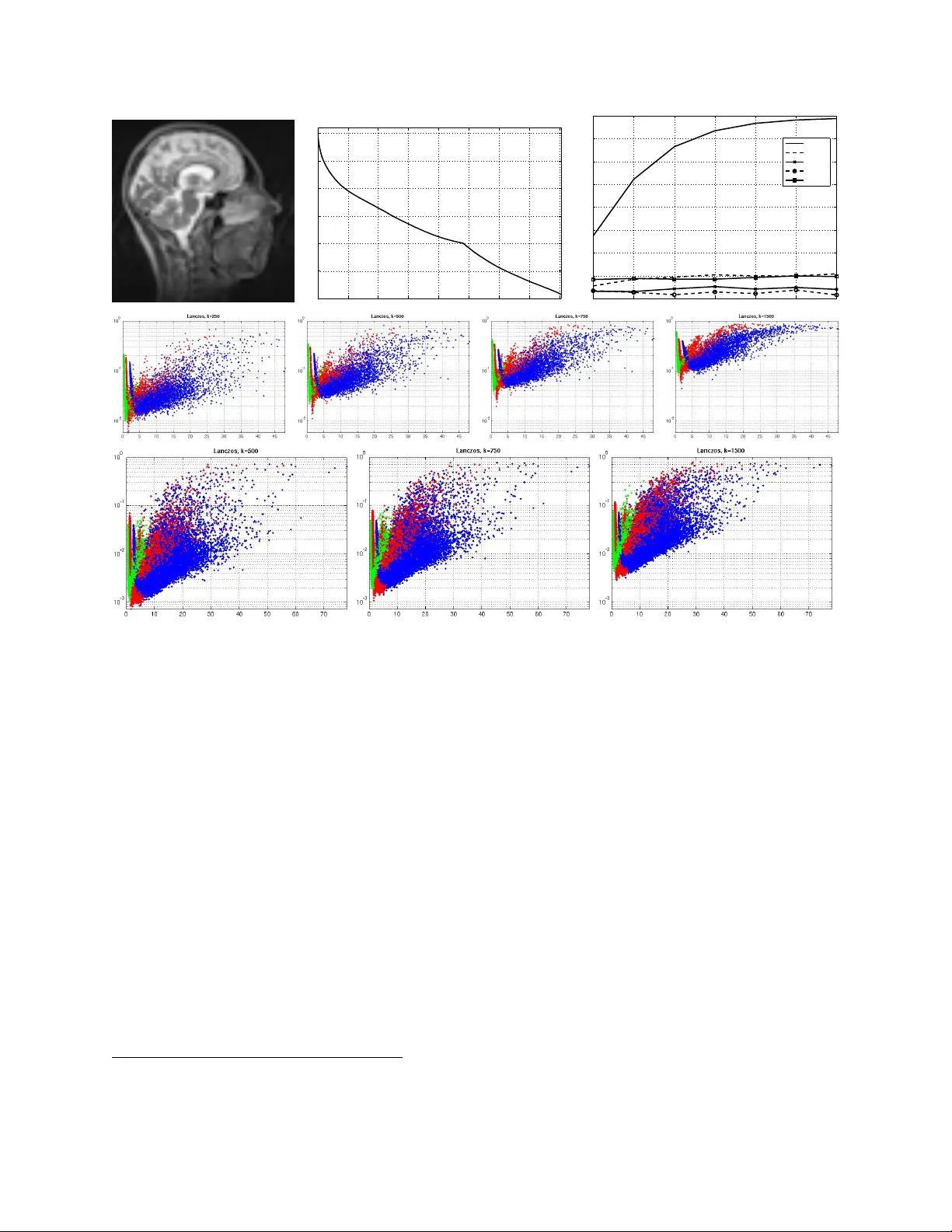

Many problems of low-level computer vision and image processing, such as denoising, deconvolution, tomographic reconstruction or super-resolution, can be addressed by maximizing the posterior distribution of a sparse linear model (SLM). We show how h…

Authors: Matthias W. Seeger, Hannes Nickisch