Penalized Likelihood Regression in Reproducing Kernel Hilbert Spaces with Randomized Covariate Data

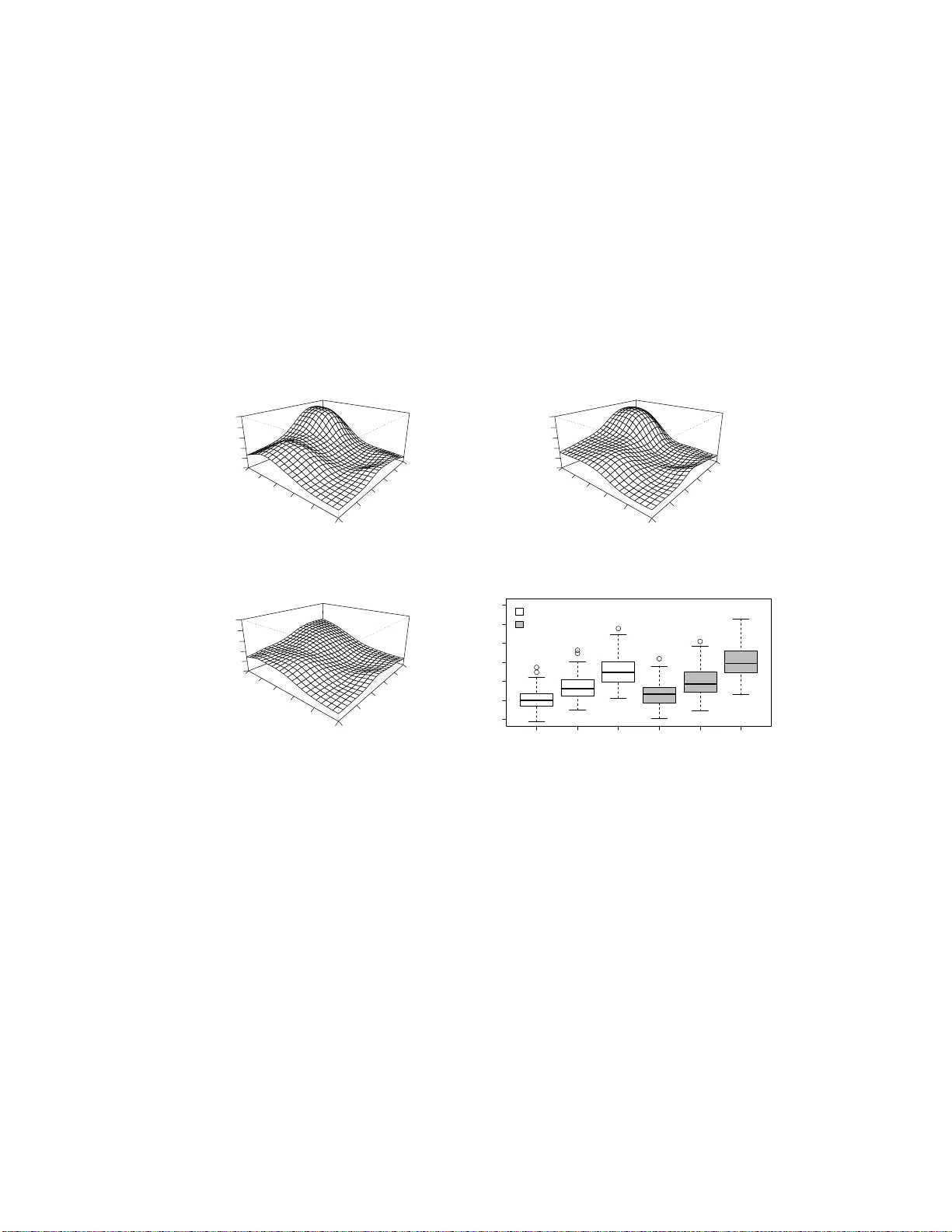

Classical penalized likelihood regression assumes that the independent variables (covariates) are observed exactly. In practice, however, data with incomplete covariate information are common. We are concerned with a fundamental…

Authors: Xiwen Ma, Bin Dai, Ronald Klein