An Indirect Genetic Algorithm for Set Covering Problems

This paper presents a new type of genetic algorithm for the set covering problem. It differs from previous evolutionary approaches first because it is an indirect algorithm, i.e. the actual solutions are found by an external decoder function. The gen…

Authors: Uwe Aickelin

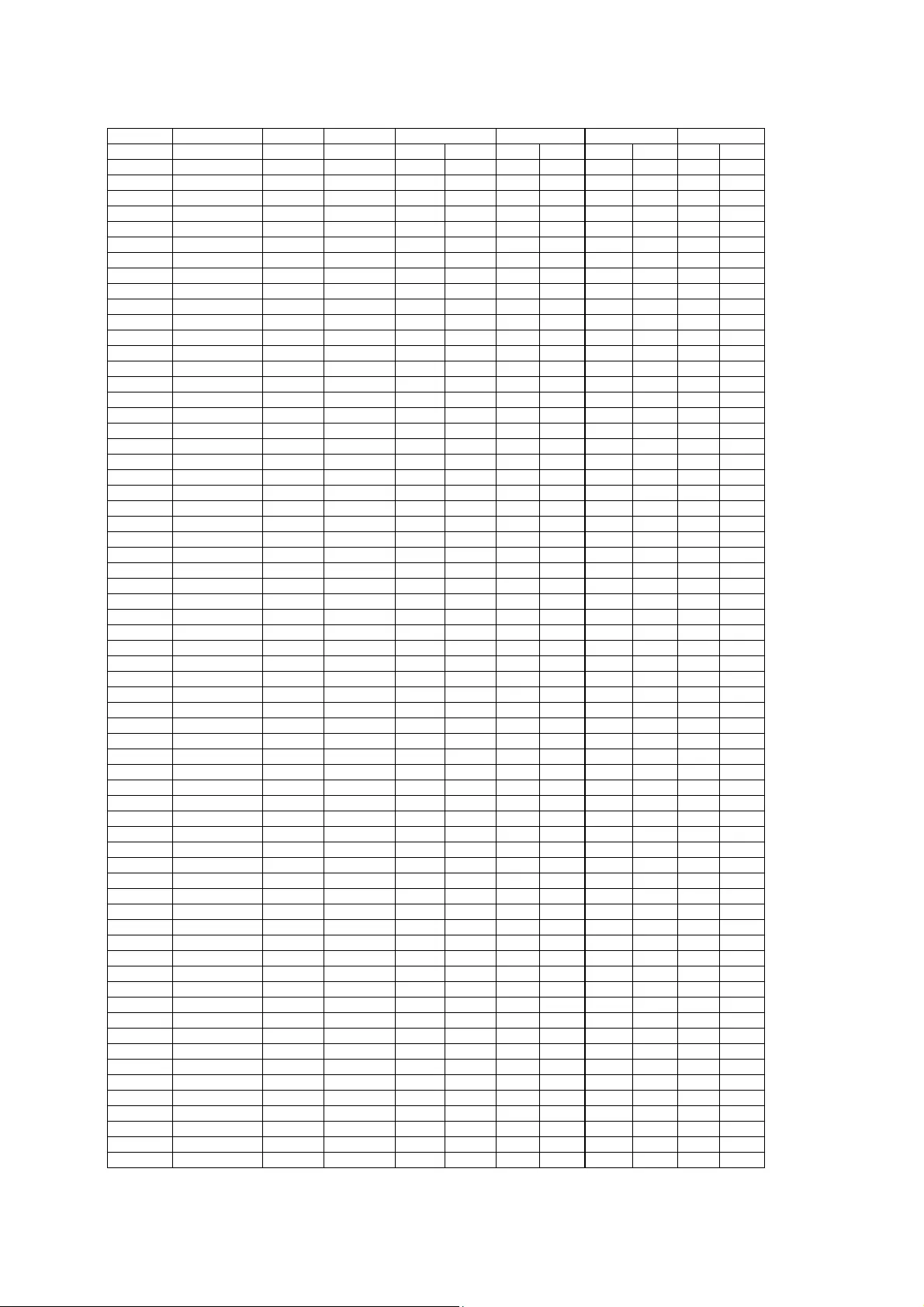

Journal of the Operational Research Society, 53(10): 1118-1126, 2002.
Uwe Aickelin, School of Computer Science, University of Nottingham, NG8 1BB, UK, uxa@cs.nott.ac.uk

Abstract

This paper presents a new type of genetic algorithm for the set covering problem. It differs from previous evolutionary approaches first because it is an indirect algorithm, i.e. the actual solutions are found by an external decoder function. The genetic algorithm itself provides this decoder with permutations of the solution variables and other parameters. Second, it will be shown that results can be further improved by adding another indirect optimisation layer. The decoder will not directly seek out low-cost solutions but instead aims for good exploitable solutions. These are then post-optimised by another hill-climbing algorithm. Although seemingly more complicated, we will show that this three-stage approach has advantages over more direct approaches in terms of solution quality, speed and adaptability to new types of problems. Extensive computational results are presented and compared to the latest evolutionary and other heuristic approaches to the same data instances.

Keywords: Heuristics, Optimisation, Scheduling.

Introduction

In recent years, genetic algorithms have become increasingly popular for solving complex optimisation problems such as those found in the areas of scheduling or timetabling. The general approach in the past was to optimise problems directly with a genetic algorithm, often coupled with a post-optimisation phase, i.e. both optimisation phases are directed towards lowering the cost of solutions. The new approach presented here differs in two respects. First, a separate decoding routine, with parameters provided by the genetic algorithm, solves the actual problem.
Second, the aim of this decoder optimisation is not to achieve the lowest-cost solutions in the first instance. Instead, good solutions with other desirable qualities are found, which are then exploited by a post-optimisation hill-climber. Although it sounds more complicated, this approach actually simplifies the algorithm into three separate components. The components themselves are relatively straightforward and self-contained, making their use for other problems possible. For instance, we are currently working on a genetic algorithm to optimise the set partitioning problem. To do this, the genetic algorithm module can be left intact. The decoder module will undergo only very slight changes, with most changes being made in the hill-climbing module. Thus, this approach is particularly suitable for people who have expert knowledge of the problem to be optimised but who do not know evolutionary computation, because only the non-evolutionary parts have to be adjusted to the new problem.

The approach has grown out of the observation that there is no general way of including constraints in genetic algorithms. This is one of their biggest drawbacks, as it makes them not readily applicable to most real-world optimisation problems. Some methods for dealing with constraints do exist, notably penalty and repair functions. However, their application and success are problem specific.¹ In the modular approach, problems involving constraints are solved by the decoder module, which frees the genetic algorithm from constraint handling.

The work described in this paper has two objectives: first, to develop a fast, flexible and modular solution approach to set covering problems and, second, to add to the body of knowledge on solving constrained problems indirectly using genetic algorithms. We use a modular three-stage approach.
First, the genetic algorithm finds the 'best' permutation of rows and good parameters for stage two. The decoder routine, a simple heuristic, then assigns good columns to rows in the given order. Finally, a hill-climber post-optimises the solutions fully.

The Set Covering Problem

In this paper, we consider the set covering problem. This is the problem of covering the rows of an $m$-row, $n$-column, zero-one matrix $(a_{ij})$ by a subset of the columns at minimal cost. Formally, define $x_j = 1$ if column $j$ (with cost $c_j$) is in the solution and $x_j = 0$ otherwise. The problem can then be stated as follows:

$$
\begin{aligned}
\text{Minimise} \quad & \sum_{j=1}^{n} c_j x_j && (1)\\
\text{subject to} \quad & \sum_{j=1}^{n} a_{ij} x_j \ge 1 \quad \forall i \in \{1,\dots,m\} && (2)\\
& x_j,\ a_{ij} \in \{0,1\} \quad \forall i \in \{1,\dots,m\},\ j \in \{1,\dots,n\} && (3)
\end{aligned}
$$

Constraint set (2) guarantees that each row $i$ is covered by at least one column. If the inequalities in these constraints are replaced by equalities, the resulting problem is called the set partitioning problem, where over-covering is no longer allowed. The set covering problem has been proven to be NP-complete² and is a model for several important applications such as crew or railway scheduling. A more detailed description of this class of problem and an overview of solution methods are given by Caprara, Fischetti and Toth.³,⁴

Genetic Algorithms

Genetic algorithms are generally attributed to Holland⁵ and his students in the 1970s, although evolutionary computation dates back further.⁶ Genetic algorithms are meta-heuristics that mimic natural evolution, in particular Darwin's idea of the survival of the fittest. Canonical genetic algorithms were not intended for function optimisation.⁷ However, slightly modified versions proved very successful.⁸ Many examples of successful implementations can be found in Bäck,⁹ Chaiyaratana and Zalzala¹⁰ and others.¹¹

In short, genetic algorithms copy the evolutionary process by processing a population of solutions simultaneously. Starting with (usually randomly created) solutions, better ones are more likely to be chosen for recombination with others to form new solutions; i.e. the fitter a solution, the more likely it is to pass on its information to future generations of solutions. Thus new solutions inherit good parts from old solutions, and by repeating this process over many generations, very good to optimal solutions should eventually be reached. In addition to recombining solutions, new solutions may be formed by mutating, or randomly changing, old solutions. Some of the best solutions of each generation are kept whilst the others are replaced by the newly formed solutions. The process is repeated until stopping criteria are met.

However, constrained optimisation with genetic algorithms remains difficult. The root of the problem is that simply following the building block hypothesis, i.e. combining good building blocks or partial solutions to form good full solutions, is no longer enough, as this does not check for constraint consistency. For instance, the recombination of two partially good solutions, which beforehand satisfied all constraints, might no longer be feasible. To solve this dilemma, many ideas have been proposed;¹ however, none so far has proved to be superior and widely applicable. In this work, we follow a new way of dealing with constraints: an indirect route. For the set covering problem, this means that the genetic algorithm no longer optimises the actual problem directly. Instead, the algorithm tries to find an optimal ordering of all rows to be covered. Once such an 'optimal' ordering is found, a secondary decoding routine assigns columns following a set of rules. The objective of this approach is to overcome the problem known as epistasis.
For a detailed explanation of this phenomenon see Reeves,¹² but briefly, it describes the inability of the genetic algorithm to assemble good full solutions from promising sub-solutions due to non-linear effects between parts of different sub-solutions. For instance, in the presence of constraints, sub-solutions might be feasible in their own right, but once combined they violate some constraint. Traditional genetic algorithms cannot cope with this well, and such algorithms can be deceived into searching in the wrong areas.

The indirect approach presented here is one possible way of alleviating these problems. By decoupling the actual solution space from the search space of the genetic algorithm, the epistasis within the evolutionary search is reduced. Our earlier work showed that this is a promising idea to pursue, with good results achieved for complex manpower scheduling and tenant selection problems.¹³,¹⁴ Here, we take these ideas one step further by introducing yet another indirect step. Rather than immediately trying to find the optimal solution through the decoder, we instead aim for good and further exploitable solutions. These are then in turn processed by a post-optimising hill-climber. This approach is based on observations made whilst designing a traditional direct genetic algorithm for a nurse scheduling problem.¹⁵ There it was found that by aiming for balanced solutions in the first instance and then subsequently optimising those, superior results could be achieved. Similar observations are made here.

The genetic algorithm component of our algorithm closely follows traditional evolutionary approaches using a permutation-based encoding. This means that a solution, as handled by the genetic algorithm, is a permuted list of all rows to be covered. For instance, if there were five rows to be covered, one possible solution might look like (2, 4, 5, 1, 3).
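To make the encoding concrete, here is a minimal Python sketch, assuming rows are numbered 1 to m as in the example above. The helper names `random_encoding` and `is_valid_encoding` are illustrative only and are not taken from the paper; the point is simply that every GA string must list each row exactly once.

```python
import random


def random_encoding(num_rows, rng):
    """Return one GA string: a random permutation of the row indices 1..num_rows."""
    rows = list(range(1, num_rows + 1))
    rng.shuffle(rows)
    return rows


def is_valid_encoding(string, num_rows):
    """A valid string contains every row index exactly once."""
    return sorted(string) == list(range(1, num_rows + 1))


rng = random.Random(42)  # fixed seed so the initial population is reproducible
population = [random_encoding(5, rng) for _ in range(4)]
print(all(is_valid_encoding(s, 5) for s in population))  # True
print(is_valid_encoding([2, 4, 5, 1, 3], 5))             # True
print(is_valid_encoding([2, 4, 4, 1, 3], 5))             # False: row 4 twice, row 5 missing
```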
To arrive at an actual solution to the set covering problem, detailing which columns will be used, this permutation is then post-processed by the decoder routine described in the next section.

We use an elitist and generational approach; i.e. most of the population is eliminated at one time, leaving the fittest individuals. An alternative would have been a steady-state approach, where one or two members of the population are replaced at a time. The inherently higher convergence pressure of this approach could possibly be offset by higher mutation rates. However, in preliminary experiments the generational approach, which had worked well in the past, seemed to be superior and was therefore adopted for this study. The steady-state approach will be investigated further in future research.

Fitness is measured as the cost given by the objective function (1). For this particular implementation, the decoder routine ensures that all solutions are feasible. For more generic approaches, this condition will not always be desirable and/or possible to uphold, as simply finding any feasible solution might be extremely difficult. In those cases, a penalty function approach, penalising solutions in proportion to their violation of the constraints,¹⁶,¹⁷ seems sensible. Such an approach led to success in our earlier work.¹³,¹⁴

To avoid bias from 'super-fit' individuals, which could potentially take over the population, and to enhance diversity of selection later in the search when solutions, and thus fitness values, begin to converge, a rank-based selection mechanism is used. After the fitness value is evaluated for each solution, all solutions are ranked according to this measure, with ties broken by taking the member with the lower index as numbered during initialisation. Subsequent roulette-wheel selection is based on these ranks.
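The ranking and roulette-wheel step can be sketched in Python as follows. This is a minimal illustration, assuming that fitness is the cost from (1), so lower cost means fitter; the function names `assign_ranks` and `roulette_select` are hypothetical. Ties are resolved in favour of the lower initialisation index, as described above.

```python
import random


def assign_ranks(costs):
    """Rank a population by cost (lower cost = fitter).

    The fittest member gets rank len(costs), the least fit rank 1.
    Among equal costs, the member with the lower index gets the higher rank.
    """
    n = len(costs)
    # Order from least fit to fittest: highest cost first; among equal costs
    # the higher index comes first, so the lower index ends up ranked higher.
    order = sorted(range(n), key=lambda i: (-costs[i], -i))
    ranks = [0] * n
    for rank, i in enumerate(order, start=1):
        ranks[i] = rank
    return ranks


def roulette_select(ranks, rng):
    """Pick one member index with probability proportional to its rank."""
    total = sum(ranks)
    spin = rng.uniform(0, total)
    running = 0.0
    for i, r in enumerate(ranks):
        running += r
        if spin <= running:
            return i
    return len(ranks) - 1  # guard against floating-point round-off


print(assign_ranks([5, 3, 3, 8]))  # [2, 4, 3, 1]
parent = roulette_select(assign_ranks([5, 3, 3, 8]), random.Random(0))

# With n members, the best has rank n and an average member rank (n + 1) / 2,
# so the best is selected 2n / (n + 1) times as often as an average member.
n = 200
print(round(2 * n / (n + 1), 2))  # 1.99
```

Because selection depends only on ranks, a single very low-cost individual cannot dominate the wheel, however large its cost advantage.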
For instance, in a population with 200 members, the fittest individual would be assigned rank 200, the second fittest rank 199, etc., down to rank 1 for the least fit member. This results in the best individual being chosen roughly twice as often as an average individual and far more often than below-average members.

Due to the nature of the permutation-based encoding, traditional crossover operators such as 1-point or uniform crossover cannot be used, as they could lead to infeasible permutations. Instead, order-based crossovers have to be employed. Unless otherwise stated, the operator used in all experiments is the permutation uniform-like crossover (PUX) with p = 0.66.¹³ This is a robust crossover proven for different problems and encodings. For the mutation operator, a simple swap operator that exchanges the positions of two genes is used. A summary of the basic indirect genetic algorithm used can be seen in Table 1.

The Decoder

At the heart of our approach lies the decoder function. It is this sub-algorithm that transforms the encoded permutations into actual solutions to the problem; i.e. it transforms the strings of row numbers into the columns used to cover them. As outlined before, the thinking behind this is that the genetic algorithm cannot cope well with the epistasis present in the original problem. However, it can find promising regions in the simplified search space that can then be exploited by the decoder. Thus, once an 'optimal' ordering has been found, a relatively simple decoding routine should be able to assign the right values, i.e. once the rows have been sorted, the decoder tries to find the corresponding columns. What qualities should such a decoder therefore possess? It seems that, at a minimum, it:
• Must be computationally efficient.
• Must be deterministic, i.e. the same permutation always yields the same solution.
• Must be able to reach all solutions in the solution space.
• Should produce good solutions.

Let us consider each of the above in turn. Firstly, the decoder has to be computationally efficient, because it will be used once for each new solution. Thus, a genetic algorithm with 200 members in the population, run for 500 generations, will involve approximately 100,000 decoder executions. In traditional evolutionary algorithms, the fitness evaluation function is the most time-critical component. This shows a parallel between the fitness evaluation and the decoder. One could say that in many respects the decoder is an extended fitness evaluation function, since it evaluates the fitness after first decoding the solution by assigning values to the variables. It follows from the frequency of its use that the decoder must be kept computationally simple. This fits with our earlier conjecture that if the ordering is right, a simple decoder will achieve good solutions.

Secondly, we expect the decoder to be deterministic in the sense that the same input, i.e. a particular permutation of rows, always produces the same output, i.e. a particular set of columns. Although restrictive at first glance, this characteristic is necessary to fit in with evolutionary algorithm theory. One of the foundations of the workings of these algorithms is the idea that fitter individuals will receive proportionally more samplings. However, in our case, fitness can only be measured after a string has been processed by the decoder. This fitness is then associated with the original string for reproduction purposes. If one string could decode into different solutions, these would most likely have different fitness values. This could potentially confuse the genetic algorithm, as the fitness of particular solution parts would then not always be the same, which is contrary to the fundamental building block hypothesis.⁵

The third criterion is that we want to be able to reach all possible solutions. This seems a sensible thing to ask at first; however, on closer inspection it might not be necessary, and possibly not even desirable. The reason is that one strength of a multi-level approach is that we can possibly cut down the size of the solution space we search. For instance, high-cost or infeasible solutions will generally not be of interest to the user. Therefore, we will construct a decoder that purposely biases the search towards promising regions by cutting out undesirable areas. However, by doing so we run the risk of excluding good, and possibly optimal, solutions from the search. We will give an example of such a case in the next section, and we will show how this possible hazard can be minimised by having intelligent weights.

Finally, we ask that the decoder is capable of producing good solutions. Clearly, this is what it is designed for, and this should come as no surprise. However, as we will point out later, it might pay not to aim for the seemingly best solution straight away, but rather to go for a solution that is further exploitable by a hill-climber. As mentioned above, the decoder has to be kept computationally fast, so overly complicated hill-climbing or other routines cannot be incorporated. It would often be a waste of time to apply these resource-intensive hill-climbers to the whole of the population. Thus, the approach outlined in the following consists of two stages. The decoder searches for promising feasible, but not yet fully optimised, solutions. These are then further exploited and optimised by a secondary decoder or hill-climber. We will show that such a two-tier algorithm is superior to a single-stage decoder.

How to choose a column?
Having established some theoretical background for the decoder, we now need to build one for the set covering problem at hand. As outlined above, the genetic algorithm feeds permutations of the row indices into the decoder, and the desired output is a low-cost list of columns used to cover all rows. For instance, an example for 5 rows and 10 columns might look as follows. The evolutionary algorithm provides the following permutation of rows: (2, 3, 4, 5, 1). This is then fed into the decoder, which assigns the following columns: (4, 6, 4, 7, 1). As can be seen, the total number of distinct columns used is less than or equal to the total number of rows to be covered, as a column may cover more than one row.

To achieve this, the following scheme is proposed, using a methodology that ensures all solutions are feasible, as every row is covered at least once. In order to build solutions, the decoder will cycle through all possible candidate columns for each row and then choose the column most suited, based on the following criteria:
(1) Cost of the column. ($C_1$)
(2) How many uncovered rows does it cover? ($C_2$)
(3) How many rows does it cover in total? ($C_3$)

For each candidate column, a (weighted) score $S$ will be calculated based on the above criteria, i.e. $S = w_2 C_2 + w_3 C_3 - w_1 C_1$. The inclusion of criteria 1 and 2 is self-explanatory. Criterion 3 is included to be fairer to late-coming columns, i.e. those that cover many rows at low cost but, because of the order of rows, are not considered at the beginning. The idea behind this is that by including such columns, others might become redundant and can then be removed. How can $C_1$, $C_2$ and $C_3$ be measured? The first and third criteria are straightforward, as the cost information and the total number of rows a column covers are parameters of the model. No calculations are required at all.
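A minimal sketch of the basic score in Python, assuming a column is described by its cost and the set of rows it covers; `column_score` is a hypothetical name. $C_1$ and $C_3$ are read directly from the data, while $C_2$ depends on the rows covered so far.

```python
def column_score(cost, column_rows, covered, weights):
    """Basic decoder score S = w2*C2 + w3*C3 - w1*C1.

    cost:        C1, the column's cost
    column_rows: set of rows the column covers (so C3 = len(column_rows))
    covered:     set of rows already covered by previously chosen columns
    weights:     (w1, w2, w3)
    """
    w1, w2, w3 = weights
    c1 = cost
    c2 = len(column_rows - covered)  # uncovered rows this column would cover
    c3 = len(column_rows)            # all rows this column covers
    return w2 * c2 + w3 * c3 - w1 * c1


# A column of cost 5 covering rows {1, 2, 3}, scored with (w1, w2, w3) = (10, 30, 15):
print(column_score(5, {1, 2, 3}, set(), (10, 30, 15)))  # 85: nothing covered yet
print(column_score(5, {1, 2, 3}, {2}, (10, 30, 15)))    # 55: row 2 already covered
```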
The second criterion needs to be calculated for each candidate by taking into account rows already covered by other columns. From all possible candidates, the column with the highest score is chosen. Possible weights for the score components are discussed in the following.

The presented decoder is computationally simple, as required, but does it meet all the other criteria discussed in the previous section? Encoded permutations should always decode to the same solution, provided that ties are broken in a deterministic way. For the remainder of this paper, all ties will be broken on a first-come, first-served basis. It is less clear whether the decoder is capable of reaching all solutions in the solution space. Clearly, all infeasible solutions have been cut out. This is in line with our discussion above about purposely biasing the search. However, one might argue that some useful information might be lost by excluding all infeasible solutions a priori, as some of them might contain good information. Based on experimental experience, it seems that on balance more is gained by excluding all infeasible solutions than by including infeasible ones that unnecessarily distract and burden the search.

However, the proposed decoder is not capable of reaching all feasible solutions either. Firstly, by choosing candidates with high scores, the search is biased towards the inclusion of low-cost columns. Thus, certain columns with very high costs might never be considered at all by the decoder, which, in most cases, should be a sensible decision. Of more concern, low-cost columns might also be excluded if a certain fixed set of weights is used. Consider a row R that can only be covered by two columns, CO1 and CO2. Further, let us assume that CO1 is cheaper than CO2 but only covers row R, whilst CO2 covers row R plus an additional row.
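The effect can be reproduced with a small hypothetical instance: CO1 costs 2 and covers only R, while CO2 costs 3 and covers R and one more row X, both still uncovered. The costs and the two weight sets below are invented for illustration. Under a given weight set, whichever column scores higher is picked every time R is decoded in this situation, so the other column never gets used for R.

```python
# Hypothetical data: CO1 covers only row R; CO2 covers R and one extra row X.
COLUMNS = {
    "CO1": (2, {"R"}),
    "CO2": (3, {"R", "X"}),
}


def score(cost, rows, covered, weights):
    """S = w2*C2 + w3*C3 - w1*C1 for one candidate column."""
    w1, w2, w3 = weights
    return w2 * len(rows - covered) + w3 * len(rows) - w1 * cost


def choose_for_r(weights, covered=frozenset()):
    """Return the candidate picked for row R (the first candidate wins ties)."""
    best_name, best_score = None, None
    for name, (cost, rows) in COLUMNS.items():
        s = score(cost, rows, covered, weights)
        if best_score is None or s > best_score:
            best_name, best_score = name, s
    return best_name


print(choose_for_r((10, 1, 1)))  # CO1: cost dominates (-18 vs -26)
print(choose_for_r((1, 10, 10)))  # CO2: cover dominates (18 vs 37)
```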
Depending on how the weights are set, either CO1 or CO2 will always be chosen to cover R. If, unfortunately, the weights have been set wrongly, a column required for the optimal solution could be excluded.

To overcome these problems of fixed weights, and to avoid having to find good weights in the first place, e.g. via complex parameter optimisation, the following extension to the algorithm is proposed. In addition to searching for the best possible permutation of row indices, the genetic algorithm will simultaneously search for good weights.¹⁸,¹⁹ As many additional genes as there are weights (criteria) are attached to the end of the string. So, for instance, in the case of five rows and three criteria, the string will now look like this: (2, 3, 4, 5, 1; $w_1$, $w_2$, $w_3$), where $w_1$, $w_2$ and $w_3$ are the weights for the criteria as set out previously. Initially, these weights are randomly initialised in a sufficiently large range to include suitable values. To avoid the problems of missing values and premature convergence, the weights also take part in a simple mutation operation: in every generation, there is a chance, equal to the mutation probability, that a weight is reset to a random value. In addition, these weight genes do not undergo normal crossover; instead, the rank-weighted average of both parents is passed on to the children. Thus, the weights found in better parents will dominate, and eventually the population will converge to one set, or a few sets, of weights suitable for the particular set of data. As a result, more important criteria will have higher weights assigned to them.

The final component of the optimisation process is a simple hill-climbing routine employed after the decoder has finished.
This is necessary because it will usually happen that rows are covered by more than one column, possibly in such a way that some columns are redundant and can be removed from the solution without affecting feasibility. The hill-climber we use is a simple improvement routine that cycles once through all used columns in descending cost order, starting with the most expensive column. If a column is found to be redundant, it is removed and the solution value is adjusted accordingly.

The following example will clarify the decoder's functioning. First, the genetic algorithm performs its work, creating new solutions via selection, crossover and mutation. For a simple example of five rows and three criteria, one string produced might be (2, 3, 4, 5, 1; 10, 30, 15). This tells the decoder to find a suitable column for row 2, then one for row 3, etc. The score for each candidate will be calculated using a weight of 10 for the first criterion, a weight of 30 for the second and a weight of 15 for the third. So, the decoder would start by looking at all columns that can cover row 2. For each column $i$ that covers row 2, a score $S_i$ is then calculated as 30 × (number of uncovered rows it covers) + 15 × (total number of rows it covers) - 10 × (cost of the column). For instance, if a column with a cost of 5 covers 3 rows, all of which are yet to be covered, its score would be 30 × 3 + 15 × 3 - 10 × 5 = 85. Once the column with the highest score has been chosen (or, in the case of a tie, the first such column), the decoder moves on to covering the next row, in this example row 3. Before moving on, the cover provided by the chosen column(s) is updated. If row 3 is already covered by the column(s) chosen so far, then the decoder proceeds to find a cover for row 4. In subsequent stages, the scores calculated for candidate columns must take into account other columns that have already been picked.
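The basic decoder and the redundancy-removing hill-climber can be sketched in Python as follows. This is a minimal illustration rather than the paper's own code; it assumes columns are stored as (cost, rows) pairs indexed by list position, and the four-row instance at the bottom is hypothetical. In that instance, column 0 is cheap and is chosen first for row 1, but it becomes redundant once column 2 (the only column covering row 4) is added, so the hill-climber removes it.

```python
def decode(permutation, columns, weights):
    """Cover rows in the order given by the GA string.

    permutation: row indices in GA order
    columns:     list of (cost, frozenset_of_rows); list position = column index
    weights:     (w1, w2, w3)
    Returns the chosen column indices; every row in the permutation is covered.
    """
    w1, w2, w3 = weights
    covered = set()
    chosen = []
    for row in permutation:
        if row in covered:  # an earlier column already covers this row
            continue
        best, best_score = None, None
        for j, (cost, rows) in enumerate(columns):
            if row not in rows:
                continue
            s = w2 * len(rows - covered) + w3 * len(rows) - w1 * cost
            if best_score is None or s > best_score:  # strict '>': first column wins ties
                best, best_score = j, s
        if best is None:
            raise ValueError(f"row {row} is not covered by any column")
        chosen.append(best)
        covered |= columns[best][1]
    return chosen


def remove_redundant(chosen, columns):
    """One pass over the used columns, most expensive first; drop a column
    if the remaining columns still cover all of its rows."""
    kept = list(chosen)
    for j in sorted(chosen, key=lambda c: -columns[c][0]):
        others = set()
        for k in kept:
            if k != j:
                others |= columns[k][1]
        if columns[j][1] <= others:
            kept.remove(j)
    return kept


def total_cost(solution, columns):
    """Objective (1): sum of the costs of the selected columns."""
    return sum(columns[j][0] for j in solution)


# Hypothetical instance: 4 rows, 3 columns given as (cost, rows covered).
example_columns = [
    (1, frozenset({1})),     # column 0
    (2, frozenset({2, 3})),  # column 1
    (7, frozenset({1, 4})),  # column 2: the only column covering row 4
]
raw = decode([1, 2, 3, 4], example_columns, (10, 30, 15))
final = remove_redundant(raw, example_columns)
print(raw, total_cost(raw, example_columns))      # [0, 1, 2] 10
print(final, total_cost(final, example_columns))  # [1, 2] 9
```

Because the decoder only ever adds columns that cover a currently uncovered row, its output is always feasible; the single hill-climbing pass then trades no feasibility for a lower cost.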
The columns already picked influence the second criterion, the number of uncovered rows a candidate covers. The decoder then proceeds in this fashion until a cover for the last row (here, row 1) has been found. Before its fitness is calculated, the solution goes through the simple hill-climber outlined above, which removes redundant columns.

Tables 2 and 3 report the results found with the above version of the decoder under the 'Basic' column label. For the genetic algorithm part, only one crossover operator (PUX) with p = 0.66 was used. PUX is a uniform-like crossover that at the same time shows properties similar to PMX regarding the number of genes retaining their absolute and relative positions.¹³ All experiments were carried out on a 450 MHz Pentium II PC using the freeware LCC compiler system. To compare solution times with those of other researchers, these were adjusted to DECstation 5000/240 CPU seconds in the manner suggested by Caprara, Fischetti and Toth.³ This leads to some unavoidable approximations, but gives sufficient accuracy to provide some insights. The tables show the best results out of 10 runs for each data instance and the average solution time over all 10 runs, with the stopping criteria as specified above. The data used are taken from Beasley's OR-Library²⁰ and are identical to those used in the papers our results are compared to. These comparisons are with a direct genetic algorithm labelled BeCh²¹ and a Lagrangean-based heuristic labelled CFT.³ In all, 65 data sets were used, ranging in size from 200 rows × 1000 columns to 1000 rows × 10 000 columns, and in density (average proportion of rows covered by a column) from 2% to 20%. Summarised results can be seen in Table 2 and Figure 1, and detailed results in Table 3. As can be seen from the tables, the results are encouraging, but weaker than those found by other researchers.
In particular, results are poor for the larger data sets, which also take very long to compute. Hence, further refinements of our strategy are required.

The experiments were also repeated with a set of fixed weights, i.e. all individuals used the same set of weights throughout the whole optimisation process rather than adjusting weights as proposed above. The values of the fixed weights were chosen as the weight set used by the overall best individual in the final generation of each run. Thus, the weights were not necessarily the same for all ten runs on a given data set. The results of these experiments, which are not reported here in detail, were of significantly poorer quality. This leads us to believe that adjusting weights is superior to finding the 'best' set of weights. Moreover, adjusting weights allows the algorithm to change the weights as the search progresses, for example by putting more weight on the covering criteria early in the search, and relatively higher weight on the cost criterion later on. This effect has been observed. Perhaps the biggest advantage of permitting weights to be adjusted is that more variety exists within the algorithm, ultimately leading to a more thorough exploration and better solutions.

Further Enhancements

Although promising, the results found so far are no match for those found elsewhere in the literature for the same data sets.³,²⁰ In this section, we suggest some further algorithm enhancements, namely a modification of the cost criterion, the introduction of a fourth criterion and the use of different crossover operators. The first two enhancements are intended mainly to improve the look-ahead capacity of the decoder, which currently is restricted to criterion 3, the total number of rows covered by a column.
By 'look-ahead' we mean the capability of making good early choices that are not too 'greedy' and that allow for equally good choices towards the end of the string. With these improvements in place, we hope our algorithm will show significantly improved results when applied to the set covering problems.

One of the most important criteria when choosing a column is its cost, as the overall aim is to arrive at a low-cost solution. When comparing columns for a particular row, comparing their costs does indeed provide a like-for-like comparison. However, in terms of looking ahead, simply comparing the cost of a column is not the best possible move. Consider the following example. Two columns, CO1 and CO2, have only the cover of row R in common. Furthermore, both cover nine rows in total, all of which are currently uncovered. CO1 has a cost of 8, whilst CO2 has a cost of 10. Therefore, it seems at first glance that CO1 is the better choice. One might agree that for the particular row R currently under investigation, CO1 dominates CO2. However, what about the remainder of the rows they cover? It might well be that CO2, with a cost of 10, is a cheap way of covering those other eight rows, if only more expensive columns would otherwise be available to do so. Equally, it could be the case that CO1 is a cheap option for R, but an expensive one for the remainder of the rows it covers, as possibly cheaper columns could cover those. Hence, it seems sensible to use rank-based cost information rather than direct cost information. To implement this, the original cost criterion $C_1$ could be split into the following two sub-criteria:
• The column's average cost rank over all uncovered rows it would cover. ($C_{1a}$)
• The column's average cost rank over all rows it would cover.
($C_{1b}$)

For example, a column that covers five rows in total, and whose costs amongst all columns covering each of the five rows are ranked 3rd, 5th, 3rd, 1st and 2nd, would have an average all-row cost rank of (3 + 5 + 3 + 1 + 2) / 5 = 2.8. The average uncovered-row cost rank is determined in a similar fashion. The score of a candidate column is now calculated as $S = w_2 C_2 + w_3 C_3 - w_1 (C_{1a} + C_{1b})$. Summarised results for this new type of decoder are reported in Table 2 under the 'New Cost' label. One can see that the quality of solutions has improved, although not yet to the same level as the best evolutionary approach by Beasley. The results also show the extent of the additional computational burden introduced by these extra calculations, demonstrating how time-critical any changes to the decoder routine are, especially for the larger data sets.

To improve our algorithm further, it seems we have to extend its look-ahead capabilities more. Currently, the decoder tries to get it right the first time, i.e. the decoder attempts to find the best possible solution straight away. That is, on the first (and only) pass over the permutation, the decoder has to identify the best possible match for each row. Towards the end of the string, when many columns have already been fixed, it seems a reasonable assumption that the decoder will make sensible choices for the remaining rows to be covered. However, early on in the search, even with its improved cost criterion, many choices will still be made arbitrarily. To overcome this limitation, the following fourth criterion for choosing candidate columns is introduced:
• Will the inclusion of the candidate make another column redundant? If so, what is the cost of that redundant column?
  ($C_4$)

Unfortunately, in this form $C_4$ is not a viable approach. The calculations required to evaluate the criterion for every candidate of each row would be so computationally expensive as to dwarf the remainder of the algorithm. In the light of this, the criterion was replaced by the following one, which should be a good indicator whilst being far less expensive to compute:
• How many rows does a column share with those already chosen? ($C_{4a}$)

This leads to the following new equation for the score of each candidate:

$S = w_2 C_2 + w_3 C_3 - w_1 (C_{1a} + C_{1b}) + w_4 C_{4a}$.

In conjunction with criterion $C_3$ (how many rows a column covers in total), this new criterion encourages overlapping cover, whilst still taking the cost and efficiency criteria into account. How can this be a good thing? Every solution subsequently passes through the hill-climber, which skims off redundant and expensive columns at negligible extra computational cost. One could look at this as having introduced a second level of 'indirectness'. The goal is not to get the best solution straight away, but a well-balanced solution that can then be exploited by another simple algorithm. The results in Table 2 under the '4 Criteria' label show that this works very well here. Results are much improved and now very similar to the best evolutionary ones, with a mean deviation of 0.25% against 0.08%. Additionally, computation times have been significantly reduced, particularly for the larger data instances: the mean is now 243 s, against 1468 s for 'New Cost'. We attribute this to faster convergence, made possible by the synergy between the new criterion and the hill-climber.

The final enhancement of our algorithm is to use a variety of crossover operators, from conservative to aggressive, with the choice between them controlled by the genetic algorithm.
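The self-adaptive choice of crossover can be illustrated with a short Python sketch. It is a minimal sketch under stated assumptions rather than the implementation used in this paper:

- permutations are Python lists of row indices;
- a lower solution cost is taken to mean a fitter parent;
- `one_point_order` and `pmx` are textbook order-based operators, assumed to behave like the 1-point equivalent crossover and PMX;
- `uniform_order` is a hypothetical stand-in for PUX, which is described in [13].

All function names are illustrative. Following the scheme used for the IGA, one extra gene stores the operator (1 = 1-point, 2 = PUX, 3 = PMX). Both children are produced with the fitter parent's operator and inherit it, and the gene mutates by random re-initialisation. Swap mutation of the permutation itself is omitted.

```python
import random
from typing import Callable, Dict, List, Tuple

Perm = List[int]
Crossover = Callable[[Perm, Perm, random.Random], Tuple[Perm, Perm]]
Individual = Tuple[Perm, int]  # (row permutation, crossover gene)


def one_point_order(p1: Perm, p2: Perm, rng: random.Random) -> Tuple[Perm, Perm]:
    """Keep a prefix of one parent, then append the missing genes in the other parent's order."""
    cut = rng.randrange(1, len(p1))

    def child(a: Perm, b: Perm) -> Perm:
        head = a[:cut]
        seen = set(head)
        return head + [g for g in b if g not in seen]

    return child(p1, p2), child(p2, p1)


def uniform_order(p1: Perm, p2: Perm, rng: random.Random) -> Tuple[Perm, Perm]:
    """Hypothetical PUX stand-in: keep masked positions, fill the rest in the other parent's order."""
    mask = [rng.random() < 0.5 for _ in p1]

    def child(a: Perm, b: Perm) -> Perm:
        kept = {g for g, m in zip(a, mask) if m}
        fill = iter([g for g in b if g not in kept])
        return [g if m else next(fill) for g, m in zip(a, mask)]

    return child(p1, p2), child(p2, p1)


def pmx(p1: Perm, p2: Perm, rng: random.Random) -> Tuple[Perm, Perm]:
    """Partially mapped crossover (Goldberg and Lingle) on permutations."""
    n = len(p1)
    i, j = sorted(rng.sample(range(n + 1), 2))

    def child(a: Perm, b: Perm) -> Perm:
        c: List = [None] * n
        c[i:j] = a[i:j]                  # copy the mapping section from a
        segment = set(a[i:j])
        for k in range(i, j):
            g = b[k]
            if g in segment:
                continue
            pos = k
            while i <= pos < j:          # follow the mapping until it leaves the section
                pos = b.index(a[pos])
            c[pos] = g
        return [b[k] if c[k] is None else c[k] for k in range(n)]

    return child(p1, p2), child(p2, p1)


# Gene values as in the text: 1 = 1-point, 2 = PUX (stand-in here), 3 = PMX.
OPERATORS: Dict[int, Crossover] = {1: one_point_order, 2: uniform_order, 3: pmx}


def reproduce(parent1: Individual, parent2: Individual, cost1: float, cost2: float,
              p_mut: float, rng: random.Random) -> List[Individual]:
    """Recombine with the cheaper (assumed fitter) parent's operator; both children inherit it."""
    (perm1, gene1), (perm2, gene2) = parent1, parent2
    gene = gene1 if cost1 <= cost2 else gene2
    children: List[Individual] = []
    for perm in OPERATORS[gene](perm1, perm2, rng):
        g = gene
        if rng.random() < p_mut:         # mutation = new random initialisation of the gene
            g = rng.choice(sorted(OPERATORS))
        children.append((perm, g))
    return children
```

For instance, `reproduce((p1, 3), (p2, 1), 10.0, 12.0, 0.015, random.Random(0))` recombines the two permutations with PMX, because the first parent is cheaper. Each child keeps gene 3 unless the 1.5% mutation probability of Table 1 fires. Because the operator choice lives inside the chromosome, no external schedule decides when to move from aggressive to conservative crossover. The intent is that selection does this.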
Three different order-based crossover operators are used: 1-point equivalent crossover [22], Partially Mapped Crossover (PMX) [23] and Permutation-based Uniform-like Crossover (PUX) [13]. When the performance of these three operators was compared individually, little difference in overall solution quality was noticed (results not shown). The final results, presented in Tables 2 and 3 under the 'IGA' (Indirect Genetic Algorithm) label, are for an algorithm in which the choice of crossover is left to the evolutionary process itself. This is achieved by adding one extra gene to the string that indicates the type of crossover used: 1 = 1-point, 2 = PUX, 3 = PMX. This gene is initialised at random. During reproduction, the children are created using the crossover operator of the fitter parent, and both children inherit this crossover type. To avoid bias and premature convergence, the crossover gene also undergoes mutation, with the same mutation probability as the remainder of the string. A mutation in this case equals a new random initialisation.

The results show that this improves solution quality further, rivalling that of the best evolutionary approach (mean deviation 0.16% against 0.08%). Moreover, the average solution time is further reduced, to well below that of the approach of Beasley and Chu (125 s against 2925 s). This can be understood by examining some optimisation runs. In the early stages of optimisation, the more aggressive crossover operators PMX and PUX dominate. Later, when smaller changes are required, the algorithm switches to 1-point crossover. This cuts down the number of generations needed for convergence and hence termination.

Conclusions and Further Work

This paper has presented a novel genetic algorithm approach to solving the set covering problem. The algorithm differs from previous evolutionary approaches by taking an indirect route.
This is achieved by splitting the search into three distinct phases. First, the genetic algorithm finds good permutations of the rows to be covered, along with suitable parameters for the second stage. Second, a decoder builds a solution from each permutation using the parameters provided. However, the best possible solutions are not sought outright; instead, good but further exploitable ones are built. Third, these are post-optimised using a hill-climber.

This approach has a number of advantages. Most importantly, the search is conducted in such a way that the genetic algorithm can concentrate on what it is best at: identifying promising regions of the solution space. Furthermore, no separate parameter tuning is required, as the algorithm itself adjusts the decoder parameters and the choice of crossover. By decoupling the decoder from the hill-climber, the former has a better chance of looking ahead and producing better solutions. The overall results rival in quality those found by the best evolutionary algorithm, whilst using significantly less computation time.

Nevertheless, more work needs to be done in this promising area. First, our results are not the best possible, as our algorithm is outperformed by a more problem-specific heuristic [3]. Our results could possibly be enhanced further by additional criteria for selecting columns, or by a more intelligent form of mutation of the strings. Also, in light of the fast run times of our algorithm, relaxing the stopping criterion might improve results further. However, we feel that the strength of our approach lies in its modularity and hence its easy adaptability to new problems. Once a suitable order-based encoding is found, only the decoding and hill-climbing criteria need to be changed, rather than having to redesign everything.
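The division of labour between the three stages can be illustrated with a small Python sketch of stages two and three. The permutation and the weights $w_1$ to $w_4$ are simply passed in, standing in for what the genetic algorithm would supply. This is a minimal sketch under stated assumptions, not the implementation used in this paper:

- cost ranks count strictly cheaper columns, so tied columns share a rank;
- $C_2$, which is not restated in this section, is assumed to be the number of still-uncovered rows a column would cover;
- the hill-climber is simplified to a single pass that drops redundant columns, most expensive first.

The function names `cost_ranks`, `decode`, `drop_redundant` and `evaluate` are hypothetical.

```python
from typing import Dict, List, Sequence, Set, Tuple

Weights = Tuple[float, float, float, float]  # (w1, w2, w3, w4)


def cost_ranks(cover: Sequence[Set[int]], cost: Sequence[float]) -> Dict[Tuple[int, int], int]:
    """rank[(row, col)] = 1 + number of strictly cheaper columns that also cover row."""
    covering: Dict[int, List[int]] = {}
    for j, rows in enumerate(cover):
        for r in rows:
            covering.setdefault(r, []).append(j)
    return {(r, j): 1 + sum(cost[k] < cost[j] for k in cols)
            for r, cols in covering.items() for j in cols}


def decode(perm: Sequence[int], weights: Weights,
           cover: Sequence[Set[int]], cost: Sequence[float]) -> List[int]:
    """Stage 2: for each uncovered row in permutation order, add the best-scoring column."""
    w1, w2, w3, w4 = weights
    rank = cost_ranks(cover, cost)
    chosen: List[int] = []
    covered: Set[int] = set()
    for r in perm:
        if r in covered:
            continue
        best, best_score = -1, float("-inf")
        for j, rows in enumerate(cover):
            if r not in rows:
                continue
            new = rows - covered                               # non-empty: contains r
            c1a = sum(rank[(i, j)] for i in new) / len(new)    # avg cost rank, uncovered rows
            c1b = sum(rank[(i, j)] for i in rows) / len(rows)  # avg cost rank, all rows
            c2 = len(new)                # ASSUMED definition of C2
            c3 = len(rows)               # rows covered in total
            c4a = len(rows & covered)    # rows shared with columns already chosen
            score = w2 * c2 + w3 * c3 - w1 * (c1a + c1b) + w4 * c4a
            if score > best_score:       # ties keep the lower column index
                best, best_score = j, score
        if best < 0:
            raise ValueError(f"row {r} is not covered by any column")
        chosen.append(best)
        covered |= cover[best]
    return chosen


def drop_redundant(chosen: List[int], cover: Sequence[Set[int]],
                   cost: Sequence[float]) -> List[int]:
    """Simplified stage 3: drop columns whose rows all stay covered, most expensive first."""
    count: Dict[int, int] = {}
    for j in chosen:
        for r in cover[j]:
            count[r] = count.get(r, 0) + 1
    kept = list(chosen)
    for j in sorted(chosen, key=lambda k: cost[k], reverse=True):  # stable for equal costs
        if all(count[r] > 1 for r in cover[j]):
            kept.remove(j)
            for r in cover[j]:
                count[r] -= 1
    return kept


def evaluate(perm: Sequence[int], weights: Weights, cover: Sequence[Set[int]],
             cost: Sequence[float]) -> Tuple[List[int], float]:
    """Decode a permutation, post-optimise it and return (columns, total cost)."""
    solution = drop_redundant(decode(perm, weights, cover, cost), cover, cost)
    return solution, sum(cost[j] for j in solution)
```

Consider a toy instance with `cover = [{0, 1}, {1, 2}, {2, 3}, {0, 3}, {0, 1, 2, 3}]`, costs `[1.0, 1.0, 1.0, 1.0, 5.0]`, the permutation `[1, 3, 0, 2]` and weights `(2.0, 1.0, 1.0, 1.5)`. Here the overlap term leads the decoder to pick columns `[0, 3, 1]`, deliberately double-covering rows 0 and 1. The redundancy pass then drops column 0, and `evaluate` returns `([3, 1], 2.0)`. Only `decode` and `drop_redundant` encode knowledge of the set covering problem, which mirrors the modularity argument above.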
We are currently investigating the use of such a slightly modified algorithm on the set partitioning problem, with encouraging results.

References

1 Michalewicz Z (1995). A Survey of Constraint Handling Techniques in Evolutionary Computation Methods. In McDonnell JR, Reynolds RG and Fogel DB (editors). Proceedings of the 4th Annual Conference on Evolutionary Programming. MIT Press, Cambridge, pp 135-155.
2 Garey M and Johnson D (1979). Computers and Intractability: A Guide to the Theory of NP-Completeness. W.H. Freeman, San Francisco.
3 Caprara A, Fischetti M and Toth P (1999). A Heuristic Method for the Set Covering Problem. Operations Research 47: 730-743.
4 Caprara A, Fischetti M and Toth P (1999). Algorithms for the Set Covering Problem. Working paper, DEIS, University of Bologna, Italy.
5 Holland J (1976). Adaptation in Natural and Artificial Systems. University of Michigan Press, Ann Arbor.
6 Fogel D (1998). Evolutionary Computation: The Fossil Record. IEEE Press, John Wiley & Sons, New York.
7 De Jong K (1993). Genetic Algorithms are NOT Function Optimisers. In Whitley D (editor). Foundations of Genetic Algorithms 2. Morgan Kaufmann Publishers, San Mateo, pp 5-17.
8 Deb K (1996). Genetic Algorithms for Function Optimisation. In Herrera F and Verdegay JL (editors). Studies in Fuzziness and Soft Computing, Volume 8, pp 4-31.
9 Bäck T (1993). Applications of Evolutionary Algorithms. 5th Edition, Dortmund, Germany.
10 Chaiyaratana N and Zalzala A (1997). Recent Developments in Evolutionary and Genetic Algorithms: Theory and Applications. In Fleming P and Zalzala S (editors). Genetic Algorithms in Engineering Systems 2: Innovations and Applications. IEEE Proceedings, Letchworth: Omega Print & Design, pp 270-277.
11 Reeves C (1997). Genetic Algorithms for the Operations Researcher. INFORMS 45: 231-250.
12 Davidor Y (1991). Epistasis Variance: A Viewpoint on GA-Hardness. In Rawlins G (editor). Foundations of Genetic Algorithms. Morgan Kaufmann Publishers, San Mateo, pp 23-35.
13 Aickelin U and Dowsland K (2001). A Comparison of Indirect Genetic Algorithm Approaches to Multiple Choice Problems. Journal of Heuristics, in print.
14 Aickelin U and Dowsland K (1999). An indirect genetic algorithm approach to a nurse scheduling problem. Submitted to Computers and Operations Research.
15 Aickelin U and Dowsland K (2000). Exploiting problem structure in a genetic algorithm approach to a nurse rostering problem. Journal of Scheduling 3: 139-153.
16 Richardson J, Palmer M, Liepins G and Hilliard M (1989). Some Guidelines for Genetic Algorithms with Penalty Functions. In Schaffer J (editor). Proceedings of the Third International Conference on Genetic Algorithms and their Applications. Morgan Kaufmann Publishers, San Mateo, pp 191-197.
17 Smith A and Tate D (1993). Genetic Optimization Using a Penalty Function. In Forrest S (editor). Proceedings of the Fifth International Conference on Genetic Algorithms. Morgan Kaufmann Publishers, San Mateo, pp 499-505.
18 Davis L (1985). Adapting Operator Probabilities in Genetic Algorithms. In Grefenstette J (editor). Proceedings of the First International Conference on Genetic Algorithms and their Applications. Lawrence Erlbaum Associates Publishers, Hillsdale, New Jersey, pp 61-67.
19 Tuson A and Ross P (1998). Adapting Operator Settings in Genetic Algorithms. Evolutionary Computation 6: 161-184.
20 Beasley J (1990). OR-library: distributing test problems by electronic mail. Journal of the Operational Research Society 41: 1069-1072.
21 Beasley J and Chu P (1996). A Genetic Algorithm for the Set Covering Problem. European Journal of Operational Research 94: 392-404.
22 Reeves C (1996). Hybrid Genetic Algorithms for Bin-Packing and Related Problems. Annals of OR 63: 371-396.
23 Goldberg D and Lingle R (1985). Alleles, Loci, and the Travelling Salesman Problem. In Grefenstette J (editor). Proceedings of the First International Conference on Genetic Algorithms and their Applications. Lawrence Erlbaum Associates Publishers, Hillsdale, New Jersey, pp 154-159.

Table 1: Parameters and strategies used for the indirect genetic algorithm.

Parameter / Strategy        Setting
Population Size             200
Population Type             Generational
Initialisation              Random
Selection                   Rank Based
Crossover                   PUX Order-Based crossover
Swap Mutation Probability   1.5%
Replacement Strategy        Keep 20% Best of each Generation
Stopping Criteria           No improvement for 50 generations

Table 2: Summarised results averaged for data sets of the same size and density. Dev. = mean deviation from the optimum; Time = mean solution time in seconds. BeCh = Beasley and Chu [21]; CFT = Caprara, Fischetti and Toth [3].

             BeCh [21]          CFT [3]            Basic              New Cost           4 Criteria         IGA
Problem Set  Dev.    Time       Dev.    Time       Dev.    Time       Dev.    Time       Dev.    Time       Dev.    Time
4            0.00%   163        0.00%   6.5        0.22%   33.6       0.00%   112.4      0.00%   76.5       0.00%   93.3
5            0.09%   540.2      0.00%   3.2        0.25%   49.5       0.16%   85.4       0.00%   78.6       0.00%   61.2
6            0.00%   57.2       0.00%   9.4        1.38%   66         0.96%   54.3       0.00%   10.2       0.00%   7.6
A            0.00%   149.4      0.00%   106.6      0.56%   146.4      0.44%   182.4      0.06%   79.2       0.00%   81
B            0.00%   155.4      0.00%   7.4        1.06%   337.2      0.94%   232.4      0.00%   104.7      0.00%   30.4
C            0.00%   199.2      0.00%   66         0.35%   277.2      0.11%   368.22     0.00%   145.3      0.00%   82.8
D            0.00%   230.4      0.00%   17.2       2.47%   721.2      2.02%   1100.2     0.48%   220.4      0.32%   69
E            0.00%   8724.2     0.00%   118.2      0.67%   1592.4     0.59%   2395.7     0.00%   120.5      0.00%   56
F            0.00%   2764.8     0.00%   109        1.54%   2125.2     0.68%   3154.3     0.21%   450.4      0.00%   142.8
G            0.13%   12851.4    0.00%   504.8      4.70%   2827.2     3.84%   4343.2     0.13%   687.4      0.13%   342.8
H            0.63%   6341.6     0.00%   858.2      5.68%   3188.4     4.55%   4123.8     1.88%   701.5      1.30%   412
Overall      0.08%   2925       0.00%   164        1.72%   1033       1.30%   1468       0.25%   243        0.16%   125

Figure 1: Graphical comparison of different heuristic approaches to the set covering problem. [Bar charts of mean deviation from optima and mean solution time [s] for BeCh, CFT, Basic, New Cost, 4 Criteria and IGA.]

Table 3: Detailed results for the set covering problem. Sol = solution value found; Time = solution time in seconds. BeCh = Beasley and Chu [21]; CFT = Caprara, Fischetti and Toth [3].

Problem  Size        Density  Optimum  BeCh Sol  BeCh Time  CFT Sol  CFT Time  Basic Sol  Basic Time  IGA Sol  IGA Time
4.1      200x1000    2%       429      429       295        429      2         429        36          429      105
4.2      200x1000    2%       512      512       9          512      1         512        42          512      57
4.3      200x1000    2%       516      516       16         516      2         520        36          516      63
4.4      200x1000    2%       494      494       142        494      10        495        27          494      90
4.5      200x1000    2%       512      512       44         512      2         512        18          512      120
4.6      200x1000    2%       560      560       16         560      19        560        33          560      39
4.7      200x1000    2%       430      430       139        430      3         433        42          430      144
4.8      200x1000    2%       492      492       819        492      22        492        21          492      93
4.9      200x1000    2%       641      641       136        641      2         644        24          641      159
4.10     200x1000    2%       514      514       14         514      2         514        57          514      63
5.1      200x2000    2%       253      253       42         253      3         253        51          253      27
5.2      200x2000    2%       302      302       1333       302      2         307        45          302      81
5.3      200x2000    2%       226      228       11         226      2         228        36          226      39
5.4      200x2000    2%       242      242       10         242      2         242        27          242      120
5.5      200x2000    2%       211      211       15         211      1         211        30          211      87
5.6      200x2000    2%       213      213       30         213      1         213        87          213      15
5.7      200x2000    2%       293      293       195        293      15        293        48          293      135
5.8      200x2000    2%       288      288       3733       288      2         288        69          288      27
5.9      200x2000    2%       279      279       14         279      3         279        78          279      48
5.10     200x2000    2%       265      265       19         265      1         265        24          265      33
6.1      200x1000    5%       138      138       46         138      23        142        60          138      9
6.2      200x1000    5%       146      146       211        146      18        147        69          146      5
6.3      200x1000    5%       145      145       12         145      2         148        60          145      3
6.4      200x1000    5%       131      131       5          131      2         131        60          131      3
6.5      200x1000    5%       161      161       12         161      2         163        81          161      18
A.1      300x3000    2%       253      253       222        253      82        255        180         253      105
A.2      300x3000    2%       252      252       328        252      116       256        162         252      96
A.3      300x3000    2%       232      232       127        232      250       233        147         232      51
A.4      300x3000    2%       234      234       46         234      5         234        87          234      108
A.5      300x3000    2%       236      236       24         236      80        236        156         236      45
B.1      300x3000    5%       69       69        20         69       4         70         519         69       30
B.2      300x3000    5%       76       76        12         76       6         77         252         76       27
B.3      300x3000    5%       80       80        710        80       18        80         351         80       13
B.4      300x3000    5%       79       79        30         79       6         81         405         79       78
B.5      300x3000    5%       72       72        5          72       3         72         159         72       4
C.1      400x4000    2%       227      227       188        227      74        227        312         227      132
C.2      400x4000    2%       219      219       41         219      64        221        240         219      33
C.3      400x4000    2%       243      243       541        243      70        245        420         243      171
C.4      400x4000    2%       219      219       145        219      62        219        213         219      45
C.5      400x4000    2%       215      215       81         215      60        215        201         215      33
D.1      400x4000    5%       60       60        14         60       23        61         633         60       177
D.2      400x4000    5%       66       66        199        66       22        66         498         66       51
D.3      400x4000    5%       72       72        785        72       23        75         840         72       30
D.4      400x4000    5%       62       62        74         62       8         63         1002        63       6
D.5      400x4000    5%       61       61        80         61       10        64         633         61       81
E.1      500x5000    10%      29       29        38         29       26        29         1161        29       17
E.2      500x5000    10%      30       30        14648      30       408       31         2346        30       63
E.3      500x5000    10%      27       27        28360      27       94        27         2163        27       60
E.4      500x5000    10%      28       28        540        28       26        28         1278        28       41
E.5      500x5000    10%      28       28        35         28       37        28         1014        28       99
F.1      500x5000    20%      14       14        76         14       33        14         3510        14       21
F.2      500x5000    20%      15       15        78         15       31        15         1059        15       44
F.3      500x5000    20%      14       14        267        14       249       14         1392        14       234
F.4      500x5000    20%      14       14        210        14       31        14         1863        14       174
F.5      500x5000    20%      13       13        13193      13       201       14         2802        13       241
G.1      1000x10000  2%       176      176       30200      176      147       182        3708        176      144
G.2      1000x10000  2%       154      155       361        154      783       161        2691        155      327
G.3      1000x10000  2%       166      166       7842       166      978       176        1182        166      408
G.4      1000x10000  2%       168      168       25305      168      379       178        2988        168      303
G.5      1000x10000  2%       168      168       549        168      237       174        3567        168      532
H.1      1000x10000  5%       63       64        1682       63       1451      66         3513        63       668
H.2      1000x10000  5%       63       64        530        63       887       67         3123        66       443
H.3      1000x10000  5%       59       59        1804       59       1560      64         2472        59       648
H.4      1000x10000  5%       58       58        27242      58       238       61         3018        59       235
H.5      1000x10000  5%       55       55        450        55       155       57         3816        55       66

Contribution Statement

The Set Covering Problem (SCP) is a central model for many important applications in the field of Operational Research.
For instance, it can be used to describe staff scheduling, railway timetabling or aircraft scheduling problems. The SCP is NP-hard, so heuristic algorithms have to be employed to solve problems of the size encountered in practice. Amongst these heuristic methods, evolutionary algorithms, and in particular genetic algorithms, have become increasingly popular in recent years. These algorithms have been used successfully to solve NP-hard problems, amongst them the SCP. However, they were often only able to achieve this by employing problem-specific strategies. They therefore lacked the flexibility and robustness to be used for different problems. The approach presented in this paper is a new type of self-tuning genetic algorithm that solves the problem indirectly. This has the advantage that the genetic algorithm component is almost independent of the problem-specific decoder component. Hence, it can be reused unchanged for other problems, and our approach could be used by practitioners unfamiliar with evolutionary computation. Furthermore, we explain that the decoder itself can remain largely intact, with only minor problem-specific modifications required for different problems. As will be seen, the success of this approach lies not in seeking 'optimal' solutions directly, but in gradually improving solutions over a number of optimisation steps. Extensive computational results are presented. They compare favourably with solutions found by the latest evolutionary and other heuristic approaches to the same data instances.
