Performance of LDPC Codes Under Faulty Iterative Decoding

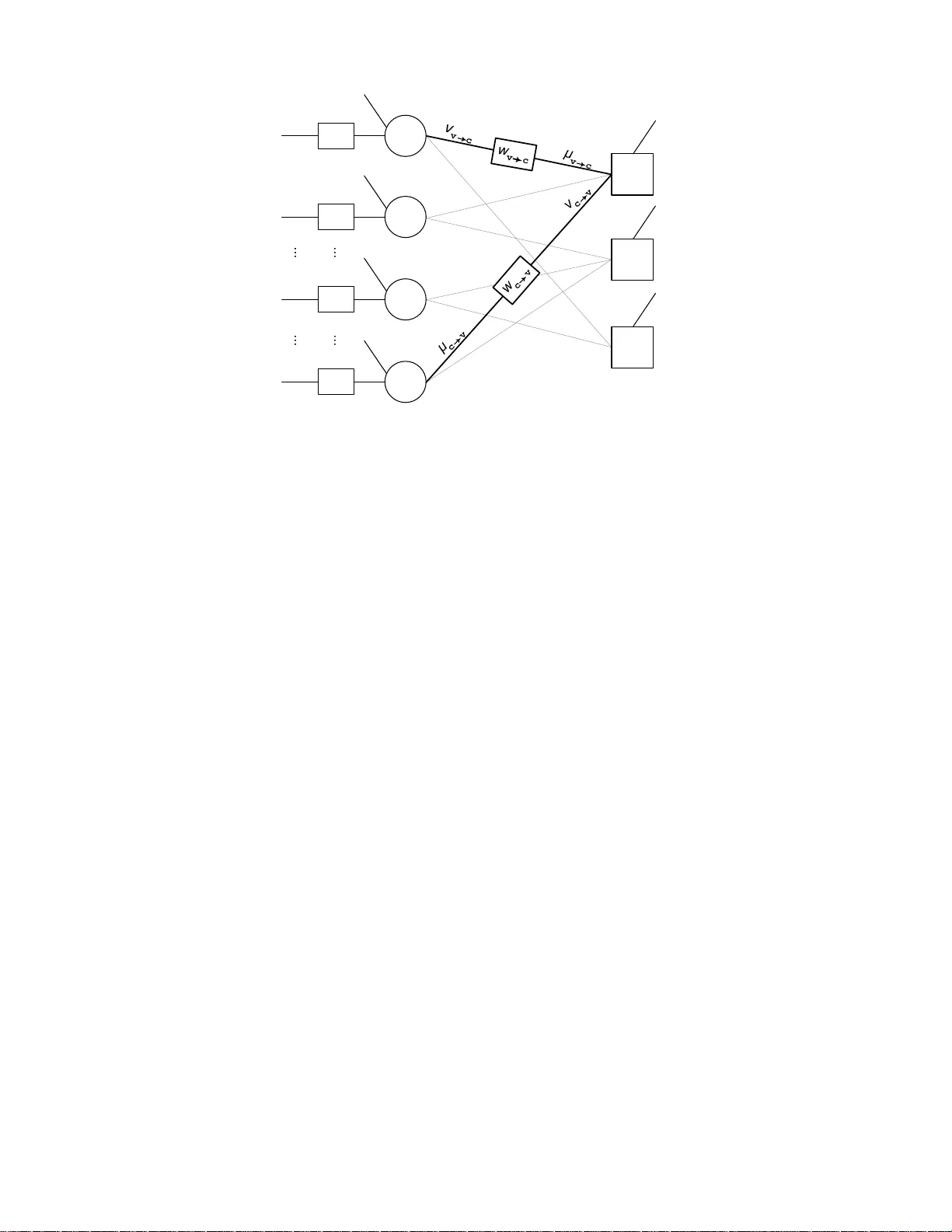

Departing from traditional communication theory, in which decoding algorithms are assumed to perform without error, this work considers a system in which noise perturbs both the computational devices and the communication channels. This paper studies limits in proce…
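To make the setting concrete, the sketch below runs density evolution for the Gallager A decoder on a (dv, dc)-regular LDPC ensemble. The channel is a binary symmetric channel with crossover probability `eps`, and the decoder itself is noisy. The noise model is an illustrative assumption and not necessarily the one analyzed in the paper: every message, in both directions, passes through an independent binary symmetric "wire" that flips it with probability `alpha`. The function name `noisy_gallager_a_de` and its parameters are hypothetical. The recursion relies on the usual cycle-free (tree-like) assumption of density evolution.

```python
def noisy_gallager_a_de(eps, alpha, dv=3, dc=6, iters=200):
    """Density evolution for Gallager A with noisy message wires (illustrative model).

    eps   -- crossover probability of the BSC communication channel
    alpha -- assumed probability that any single message is flipped in transit
    Returns the variable-to-check message error probability at each iteration.
    """

    def wire(p):
        # A message in error with probability p, sent through a BSC(alpha) wire.
        return alpha + (1 - 2 * alpha) * p

    # Iteration 0: each variable node forwards its channel value over a noisy wire.
    x = wire(eps)
    history = [x]
    for _ in range(iters):
        # Check node: outgoing bit is the XOR of dc - 1 incoming bits,
        # so it is wrong when an odd number of them are wrong.
        q = wire((1 - (1 - 2 * x) ** (dc - 1)) / 2)
        # Variable node (Gallager A): send the channel value unless all dv - 1
        # incoming check messages disagree with it.
        #   channel wrong (prob eps): output wrong unless all dv - 1 checks are correct
        #   channel right (prob 1 - eps): output wrong only if all dv - 1 checks are wrong
        x_var = eps * (1 - (1 - q) ** (dv - 1)) + (1 - eps) * q ** (dv - 1)
        x = wire(x_var)
        history.append(x)
    return history
```

With `alpha = 0` this reduces to the standard noiseless Gallager A recursion. For the (3, 6) ensemble, `noisy_gallager_a_de(0.03, 0.0)` drives the message error probability toward zero, while `eps = 0.06` does not. With `alpha > 0`, every message is flipped after it is computed, so in this simplified model the error probability can never fall below `alpha`. The relevant question then becomes how small the residual fixed point is.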

Authors: Lav R. Varshney