Comment on "Fastest learning in small-world neural networks"

This comment reexamines Simard et al.'s work in [D. Simard, L. Nadeau, H. Kröger, Phys. Lett. A 336 (2005) 8-15]. We found that Simard et al. mistakenly calculated the local connectivity lengths $D_{\mathrm{local}}$ of networks. The right results of $D_{\mathrm{local}}$ are pre…

Authors: Z.X. Guo

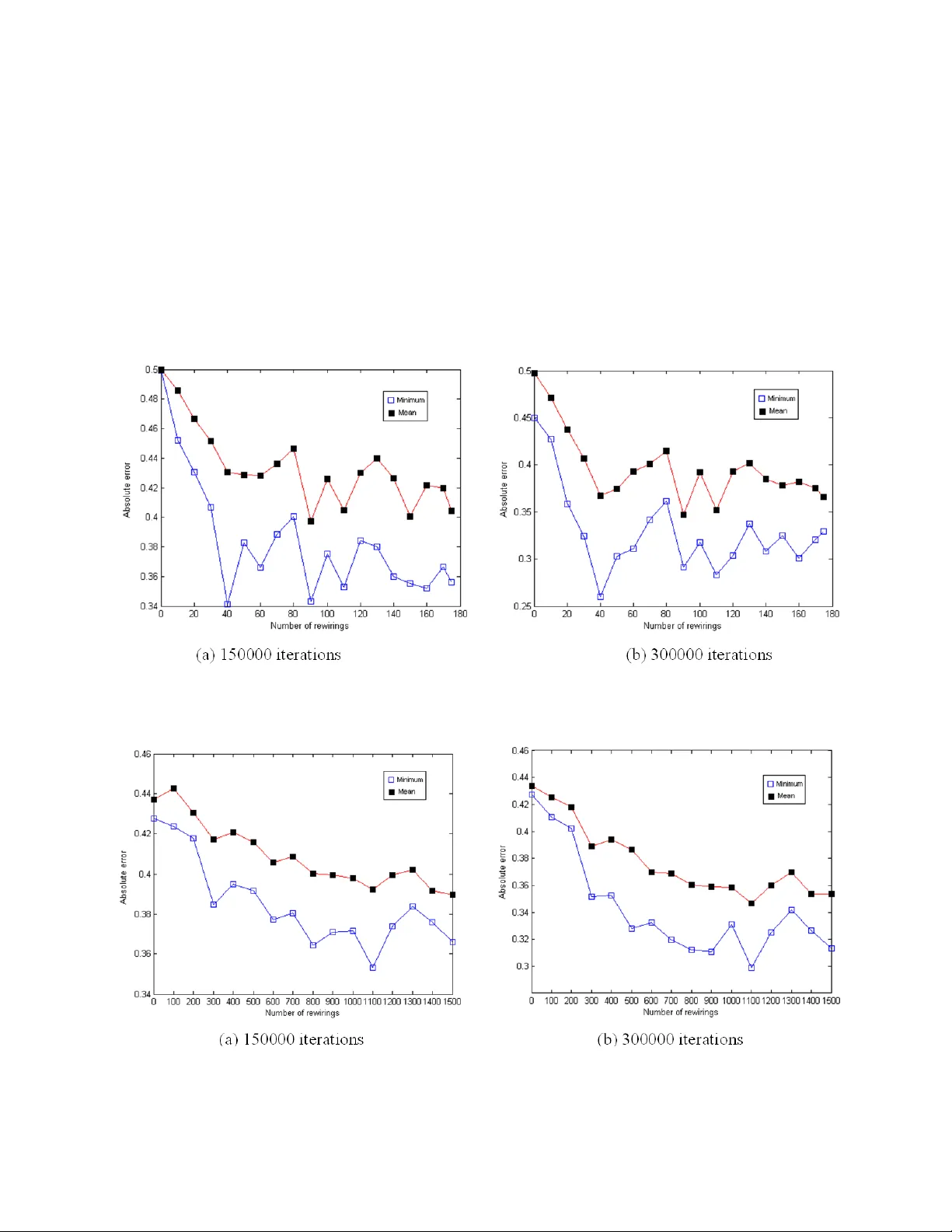

Institute of Textiles and Clothing, The Hong Kong Polytechnic University, Hong Kong, China

Corresponding author: Z.X. Guo. Tel.: +855 27664024, Fax: +855 27664024. E-mail address: tcguozx@inet.polyu.edu.hk.

Abstract
This comment reexamines Simard et al.'s work in [D. Simard, L. Nadeau, H. Kröger, Phys. Lett. A 336 (2005) 8-15]. We found that Simard et al. mistakenly calculated the local connectivity lengths $D_{\mathrm{local}}$ of networks. The correct values of $D_{\mathrm{local}}$ are presented, and the supervised learning performance of feedforward neural networks (FNNs) with different numbers of rewirings is re-investigated in this comment. This comment discredits Simard et al.'s work with two conclusions:
1) rewiring connections of FNNs cannot generate networks with small-world connectivity;
2) across different training sets, there is no particular number of rewirings whose networks yield lower learning errors than networks with other numbers of rewirings.

PACS: 84.35.+i
Keywords: Feed-forward neural network, small-world network, random network

1. Introduction
Simard et al. [1] claimed two things. First, networks with small-world connectivity can be constructed by rewiring some connections of feed-forward neural networks (FNNs). Second, these networks give lower learning errors than networks with regular or random connectivity.

In [1], small-world architecture is identified by small global and local connectivity lengths $D_{\mathrm{global}}$ and $D_{\mathrm{local}}$. These are defined via the global and local efficiencies $E_{\mathrm{global}}$ and $E_{\mathrm{local}}$ [2,3]. $E_{\mathrm{local}}$ is the average efficiency of the subgraphs $G_i$. According to the definition in [3,4], the subgraph $G_i$ of neighbors of neuron $i$ is formed by the neurons directly connected to neuron $i$. However, in [1], all neurons in the same layer as neuron $i$ are also included in $G_i$; that is, $G_i$ is defined incorrectly in [1].
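For reference, the quantities discussed above can be written as follows, following the efficiency definitions of [2,3].

- $n_G$ is the number of neurons in a graph $G$.
- $N$ is the number of neurons in the whole network.
- $d_{ij}$ is the shortest-path length between $i$ and $j$ measured inside $G$, with $1/d_{ij} = 0$ when no path exists.

The connectivity lengths are taken as inverse efficiencies (harmonic-mean path lengths). This is our reading of [1,2], and the notation is ours.

```latex
\begin{aligned}
E(G) &= \frac{1}{n_G(n_G-1)} \sum_{i \neq j \in G} \frac{1}{d_{ij}}, \\
E_{\mathrm{global}} &= E(G), \qquad E_{\mathrm{local}} = \frac{1}{N} \sum_{i=1}^{N} E(G_i), \\
D_{\mathrm{global}} &= \frac{1}{E_{\mathrm{global}}}, \qquad D_{\mathrm{local}} = \frac{1}{E_{\mathrm{local}}}.
\end{aligned}
```

Under these definitions, whether $G_i$ includes the same-layer neurons directly changes $E(G_i)$, and therefore $D_{\mathrm{local}}$. If $E_{\mathrm{local}} = 0$, $D_{\mathrm{local}}$ is infinite.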
The conclusion in [1], that small-world networks can be constructed by randomly rewiring the connections of FNNs, is therefore questionable. Moreover, in [1] the learning performance of a network with a given number of rewired connections is observed for only one training set and one random network connectivity. However, different training sets and different network connectivities can produce different learning performances, from which different conclusions may be drawn. In this comment, we reinvestigate the values of $D_{\mathrm{global}}$ and $D_{\mathrm{local}}$ of FNNs with different numbers of rewired connections, as well as the supervised learning performance of these networks.

2. Network connectivity lengths
In this comment, $G_i$ is formed by the neurons directly connected to neuron $i$, according to the definition in [3,4]. We investigate the relation between $D_{\mathrm{local}}$ and the number of rewirings for the following four FNNs:
Network A: 5 neurons per layer, 5 layers
Network B: 5 neurons per layer, 8 layers
Network C: 15 neurons per layer, 8 layers
Network D: 10 neurons per layer, 10 layers

For a specified number of rewired connections, different network connectivities can be obtained by randomly cutting and rewiring connections. We use 100 randomly generated connectivities to compute $D_{\mathrm{local}}$ and $D_{\mathrm{global}}$ for each specified number of rewirings.

Figure 1 shows $D_{\mathrm{local}}$ and $D_{\mathrm{global}}$ as functions of the number of rewired connections for the four networks. Figs. 1(a)-(d) show the results for networks A-D, respectively. It is evident that no small-world regime exists for any of the four networks. That is, rewiring some connections of FNNs cannot (at least for networks A-D) form networks with small-world connectivity. This holds even though previous studies showed that small-world networks can be obtained by randomly rewiring some connections of a regular lattice [3,4].
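The computation in this section can be sketched in plain Python as follows. This sketch makes several assumptions:

- Links are treated as undirected for path lengths.
- Distances are unweighted BFS hops.
- The rewiring rule in `rewire` is hypothetical, since the exact rule of [1] is not restated here. It cuts a random link and adds one between a random unlinked pair.
- Efficiencies are averaged over connectivities before being inverted.
- All function names are illustrative.

The switch `include_same_layer` contrasts the neighbourhood $G_i$ of [3,4] with the one used in [1].

```python
import random
from collections import deque


def layered_fnn(neurons_per_layer, layers):
    """Regular FNN as an undirected graph: each neuron is linked to every
    neuron of the next layer. Returns (adjacency sets, layer index per neuron)."""
    n = neurons_per_layer * layers
    layer_of = [v // neurons_per_layer for v in range(n)]
    adj = [set() for _ in range(n)]
    for u in range(n):
        for v in range(n):
            if layer_of[v] == layer_of[u] + 1:
                adj[u].add(v)
                adj[v].add(u)
    return adj, layer_of


def rewire(adj, n_rewire, rng):
    """HYPOTHETICAL rewiring rule: cut a random existing link, then add a link
    between a random pair of currently unlinked neurons (link count is kept)."""
    n = len(adj)
    for _ in range(n_rewire):
        edges = [(u, v) for u in range(n) for v in adj[u] if u < v]
        u, v = rng.choice(edges)
        adj[u].discard(v)
        adj[v].discard(u)
        while True:
            a, b = rng.sample(range(n), 2)
            if b not in adj[a]:
                break
        adj[a].add(b)
        adj[b].add(a)


def efficiency(nodes, adj):
    """E(G) on the subgraph induced by `nodes`: mean of 1/d_ij over ordered
    pairs i != j, using BFS distances inside the subgraph (unreachable -> 0)."""
    nodes = set(nodes)
    n = len(nodes)
    if n < 2:
        return 0.0
    total = 0.0
    for s in nodes:
        dist = {s: 0}
        queue = deque([s])
        while queue:
            u = queue.popleft()
            for w in adj[u]:
                if w in nodes and w not in dist:
                    dist[w] = dist[u] + 1
                    queue.append(w)
        total += sum(1.0 / d for d in dist.values() if d > 0)
    return total / (n * (n - 1))


def local_efficiency(adj, layer_of, include_same_layer=False):
    """E_local = mean of E(G_i). By default G_i holds the neurons directly
    linked to i, as in [3,4]; include_same_layer=True also adds every other
    neuron of i's layer, i.e. the definition criticised in this comment."""
    n = len(adj)
    total = 0.0
    for i in range(n):
        g_i = set(adj[i])
        if include_same_layer:
            g_i |= {v for v in range(n) if layer_of[v] == layer_of[i] and v != i}
        total += efficiency(g_i, adj)
    return total / n


def connectivity_length(e):
    """D = 1/E, taken as infinite when E = 0."""
    return float("inf") if e == 0 else 1.0 / e


def lengths_vs_rewiring(neurons_per_layer, layers, n_rewire, trials=100, seed=0):
    """Average E_global and E_local over `trials` random connectivities, then
    invert (averaging E rather than D is an assumption of this sketch)."""
    rng = random.Random(seed)
    e_glob = e_loc = 0.0
    for _ in range(trials):
        adj, layer_of = layered_fnn(neurons_per_layer, layers)
        rewire(adj, n_rewire, rng)
        e_glob += efficiency(range(len(adj)), adj)
        e_loc += local_efficiency(adj, layer_of)
    return connectivity_length(e_glob / trials), connectivity_length(e_loc / trials)
```

For network A, `lengths_vs_rewiring(5, 5, n_rewire)` gives one point of a curve of the kind plotted in Fig. 1(a).

In the regular FNN (`n_rewire = 0`), no two neighbours of a neuron are linked to each other, so under the [3,4] definition $E_{\mathrm{local}} = 0$ and $D_{\mathrm{local}}$ is infinite. Adding the same-layer neurons to $G_i$ makes every $E(G_i)$ positive and $D_{\mathrm{local}}$ finite.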
Simard et al. reached a wrong conclusion in [1] because they computed $D_{\mathrm{local}}$ and $D_{\mathrm{global}}$ incorrectly.

Fig. 1. $D_{\mathrm{local}}$ and $D_{\mathrm{global}}$ versus the number of rewired connections.

2. Supervised learning
In this section, we reexamine whether FNNs with some random rewirings give lower learning errors. The supervised learning performance of networks B, C and D is investigated by training them on random binary input and output patterns (the training set). Apart from the training sets and network connectivities, the networks are trained with the same settings as in [1]. The learning algorithm is back-propagation.

For the three networks, the relationships between learning error and the number of rewirings are shown in Figs. 2-4, respectively. In each figure, panels (a) and (b) show the results after 150000 and 300000 iterations, respectively. '□' and '■' represent, respectively, the minimum and the mean of the mean absolute errors (MAEs) over multiple tests. For networks B-D, the smallest mean MAEs are obtained at $N_{\mathrm{rewire}}$ = 90, 1100 and 750, respectively. These results are quite inconsistent with those in [1], where $N_{\mathrm{rewire}}$ equals 28, 830 and 400, respectively.

Fig. 2. Learning results of network B. Learning of 40 patterns, learning rate 0.01, 20 statistical tests.
Fig. 3. Learning results of network C. Learning of 40 patterns, learning rate 0.01, 17 statistical tests.
Fig. 4. Learning results of network D. Learning of 30 patterns, learning rate 0.02, 20 statistical tests.

Next, we further examine whether networks with a certain $N_{\mathrm{rewire}}$ (for example, one in the small-world regime) can generate lower learning errors than networks with other numbers of rewirings. For a specified $N_{\mathrm{rewire}}$, different network connectivities can be generated. Taking network D as an example, we investigate its learning performance further in four cases that differ in network connectivity and training set.
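The training and evaluation protocol described in this section can be sketched as follows. This is not the exact setup of [1] or of this comment. The following are all assumptions of the sketch, and every function name is hypothetical:

- sigmoid units;
- a squared-error loss;
- full-batch gradient descent;
- Gaussian initial weights;
- the forward-only rewiring rule in `rewire_mask`.

The sketch shows three things: back-propagation through a rewired feedforward network, the MAE used as the error measure, and the per-$N_{\mathrm{rewire}}$ minimum and mean of MAEs over repeated tests.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def regular_mask(neurons_per_layer, layers):
    """mask[u, v] is True when neuron u feeds neuron v; initially only
    consecutive layers are fully connected."""
    layer_of = np.arange(neurons_per_layer * layers) // neurons_per_layer
    mask = layer_of[None, :] == layer_of[:, None] + 1
    return mask, layer_of


def rewire_mask(mask, layer_of, n_rewire, rng):
    """HYPOTHETICAL rule: cut a random connection, then add a random missing
    connection from a lower layer to a higher one (the net stays feedforward)."""
    mask = mask.copy()
    allowed = layer_of[:, None] < layer_of[None, :]
    for _ in range(n_rewire):
        src, dst = np.nonzero(mask)
        k = rng.integers(len(src))
        mask[src[k], dst[k]] = False
        src, dst = np.nonzero(allowed & ~mask)
        k = rng.integers(len(src))
        mask[src[k], dst[k]] = True
    return mask


def forward(W, b, x, layer_of):
    """Evaluate the layers in order; the first layer is clamped to the input."""
    a = np.zeros((len(x), len(layer_of)))
    a[:, layer_of == 0] = x
    for l in range(1, layer_of.max() + 1):
        idx = layer_of == l
        a[:, idx] = sigmoid(a @ W[:, idx] + b[idx])
    return a


def gradients(W, b, x, y, mask, layer_of):
    """Back-propagation of the loss 0.5 * sum((out - y)**2) / n_patterns."""
    a = forward(W, b, x, layer_of)
    out = layer_of == layer_of.max()
    delta = np.zeros_like(a)
    delta[:, out] = (a[:, out] - y) * a[:, out] * (1.0 - a[:, out])
    for l in range(layer_of.max() - 1, 0, -1):
        idx = layer_of == l
        # Error reaching layer l from every later neuron it feeds (skip links included).
        delta[:, idx] = (delta @ W[idx, :].T) * a[:, idx] * (1.0 - a[:, idx])
    return (a.T @ delta) * mask / len(x), delta.sum(axis=0) / len(x)


def mae(W, b, x, y, layer_of):
    """Mean absolute error of the output layer over all patterns."""
    a = forward(W, b, x, layer_of)
    return float(np.mean(np.abs(a[:, layer_of == layer_of.max()] - y)))


def train(mask, layer_of, x, y, lr=0.5, iterations=3000, seed=0):
    """Full-batch gradient descent (an assumption; [1] may update per pattern)."""
    rng = np.random.default_rng(seed)
    W = rng.normal(0.0, 0.5, mask.shape) * mask
    b = np.zeros(len(layer_of))
    for _ in range(iterations):
        dW, db = gradients(W, b, x, y, mask, layer_of)
        W -= lr * dW
        b -= lr * db
    return W, b


def summarize(maes_by_rewire):
    """Minimum and mean MAE per N_rewire over repeated tests, plus the N_rewire
    whose mean MAE is smallest."""
    stats = {n: (min(v), sum(v) / len(v)) for n, v in maes_by_rewire.items()}
    best = min(stats, key=lambda n: stats[n][1])
    return stats, best
```

To mirror the comparison behind Figs. 2-5, repeat `train` for several training sets `x, y` and several rewired masks per $N_{\mathrm{rewire}}$, then pass the resulting MAEs to `summarize`. The point argued in this comment is that `best` can change from one training set or connectivity to another.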
The learning results after 300000 iterations are shown in Fig. 5. The best learning performance is clearly obtained at quite different numbers of rewirings in the different cases. That is, across different training sets, there is no particular $N_{\mathrm{rewire}}$ whose networks generate lower learning errors than networks with other values of $N_{\mathrm{rewire}}$. This is also inconsistent with the conclusion in [1].

In [1], Simard et al. claimed that networks with a certain $N_{\mathrm{rewire}}$ give lower learning errors, based on only one training set and one network connectivity. Our experimental results discredit this conclusion, because different conclusions are drawn when more training sets and network connectivities are considered.

Fig. 5. Learning results of network D for different training sets and network connectivities: (a) training set 1 and connectivity 1; (b) training set 1 and connectivity 2; (c) training set 2 and connectivity 2; (d) training set 2 and connectivity 3.

3. Conclusions
This comment reexamines Simard et al.'s work [1] by recalculating $D_{\mathrm{local}}$ of the networks and re-investigating their supervised learning performance. Two important conclusions can be drawn from the experimental results:
1) randomly rewiring some connections of FNNs cannot construct small-world networks;
2) for different training sets, networks with some random rewirings give better results than regular FNNs; however, there is no particular $N_{\mathrm{rewire}}$ whose networks generate lower learning errors than networks with other values of $N_{\mathrm{rewire}}$.
These conclusions discredit the conclusions of Simard et al. in [1].

References
[1] D. Simard, L. Nadeau, H. Kröger, Phys. Lett. A 336 (2005) 8-15.
[2] M. Marchiori, V. Latora, Physica A 285 (2000) 539-546.
[3] V. Latora, M. Marchiori, Phys. Rev. Lett. 87 (2001) 198701-1-4.
[4] D.J. Watts, S.H. Strogatz, Nature 393 (1998) 440-442.
