Structure-Aware Stochastic Control for Transmission Scheduling

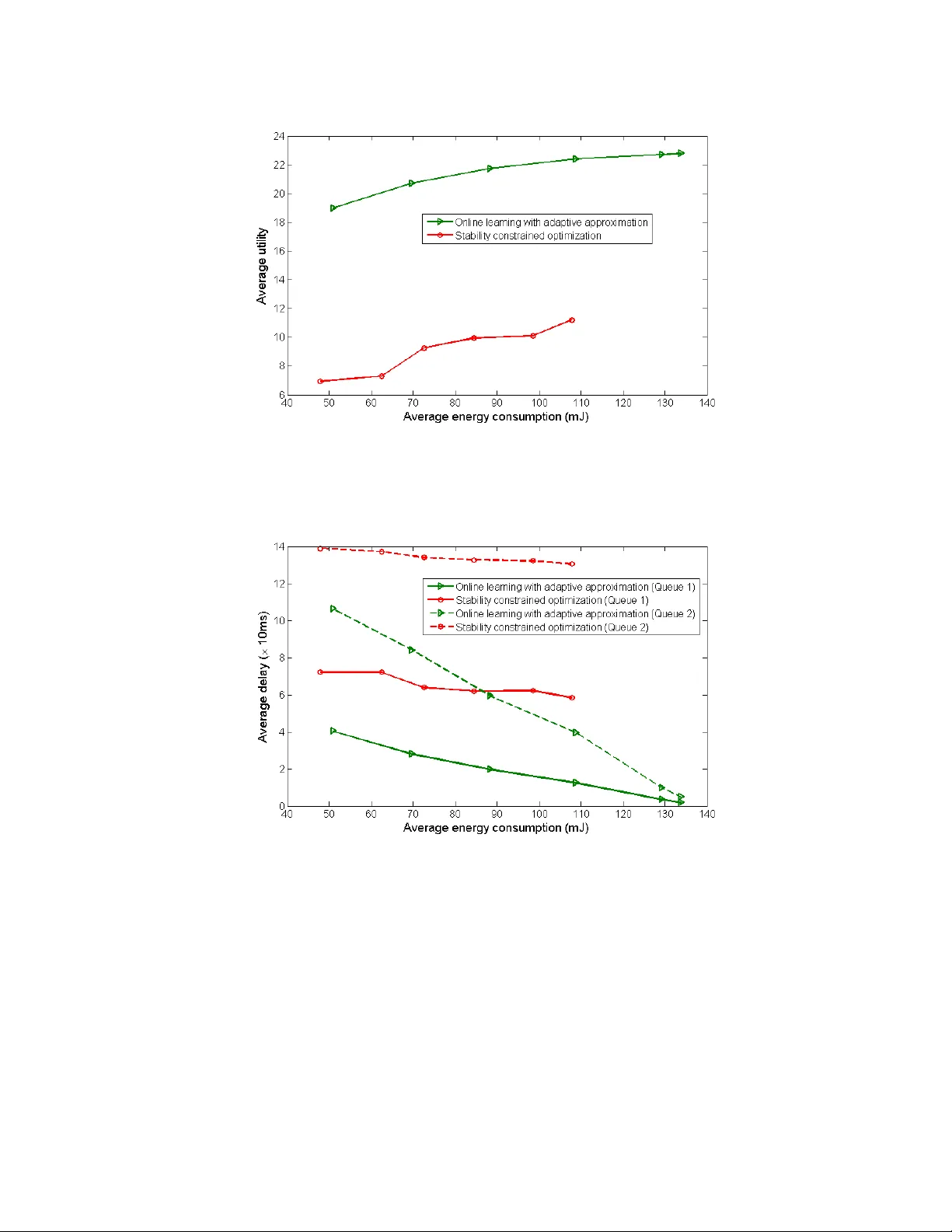

In this paper, we consider the problem of real-time transmission scheduling over time-varying channels. We first formulate the transmission scheduling problem as a Markov decision process (MDP) and systematically unravel the structural properties (e.…
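As a rough illustration of what casting transmission scheduling as an MDP can look like, the sketch below runs discounted value iteration on a toy model. Everything in it is an assumption made for illustration and is not taken from the paper: the state is a (buffer backlog, channel state) pair, the channel is a hypothetical two-state Markov chain, arrivals are Bernoulli, and the per-slot cost is a convex energy term plus a weighted holding cost. The function name `value_iteration` and all parameter values are likewise hypothetical.

```python
def value_iteration(B=5, gamma=0.9, lam=1.0, p_arrival=0.5, tol=1e-9):
    """Discounted value iteration on a toy scheduling MDP (all modeling choices assumed)."""
    # Hypothetical two-state channel: 0 = bad, 1 = good, with Markov transitions.
    P_h = [[0.7, 0.3], [0.3, 0.7]]
    # Assumed energy scale per channel state: sending is costlier on the bad channel.
    energy_scale = [2.0, 1.0]

    states = [(b, h) for b in range(B + 1) for h in (0, 1)]
    V = {s: 0.0 for s in states}
    policy = {s: 0 for s in states}

    while True:
        delta = 0.0
        new_V = {}
        for b, h in states:
            best_cost, best_a = float("inf"), 0
            # Action a: number of buffered packets to transmit in this slot.
            for a in range(b + 1):
                # Immediate cost: convex energy term plus weighted leftover backlog.
                cost = energy_scale[h] * a ** 2 + lam * (b - a)
                expected_next = 0.0
                for arrivals, p_arr in ((0, 1.0 - p_arrival), (1, p_arrival)):
                    next_b = min(b - a + arrivals, B)  # buffer overflow is truncated
                    for next_h in (0, 1):
                        expected_next += p_arr * P_h[h][next_h] * V[(next_b, next_h)]
                q = cost + gamma * expected_next
                if q < best_cost:
                    best_cost, best_a = q, a
            new_V[(b, h)] = best_cost
            policy[(b, h)] = best_a
            delta = max(delta, abs(best_cost - V[(b, h)]))
        V = new_V
        if delta < tol:
            return V, policy
```

Calling `value_iteration()` returns a value table and a policy, both keyed by `(backlog, channel)`. Printing `policy` for each channel state is one simple way to inspect how the chosen transmission amount varies with backlog in this toy model.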

Authors: **Fangwen Fu, Mihaela van der Schaar**