On computational tools for Bayesian data analysis

While Robert and Rousseau (2010) addressed the foundational aspects of Bayesian analysis, the current chapter details its practical aspects through a review of the computational methods available for approximating Bayesian procedures. Recent innovati…

Authors: Christian P. Robert, Jean-Michel Marin

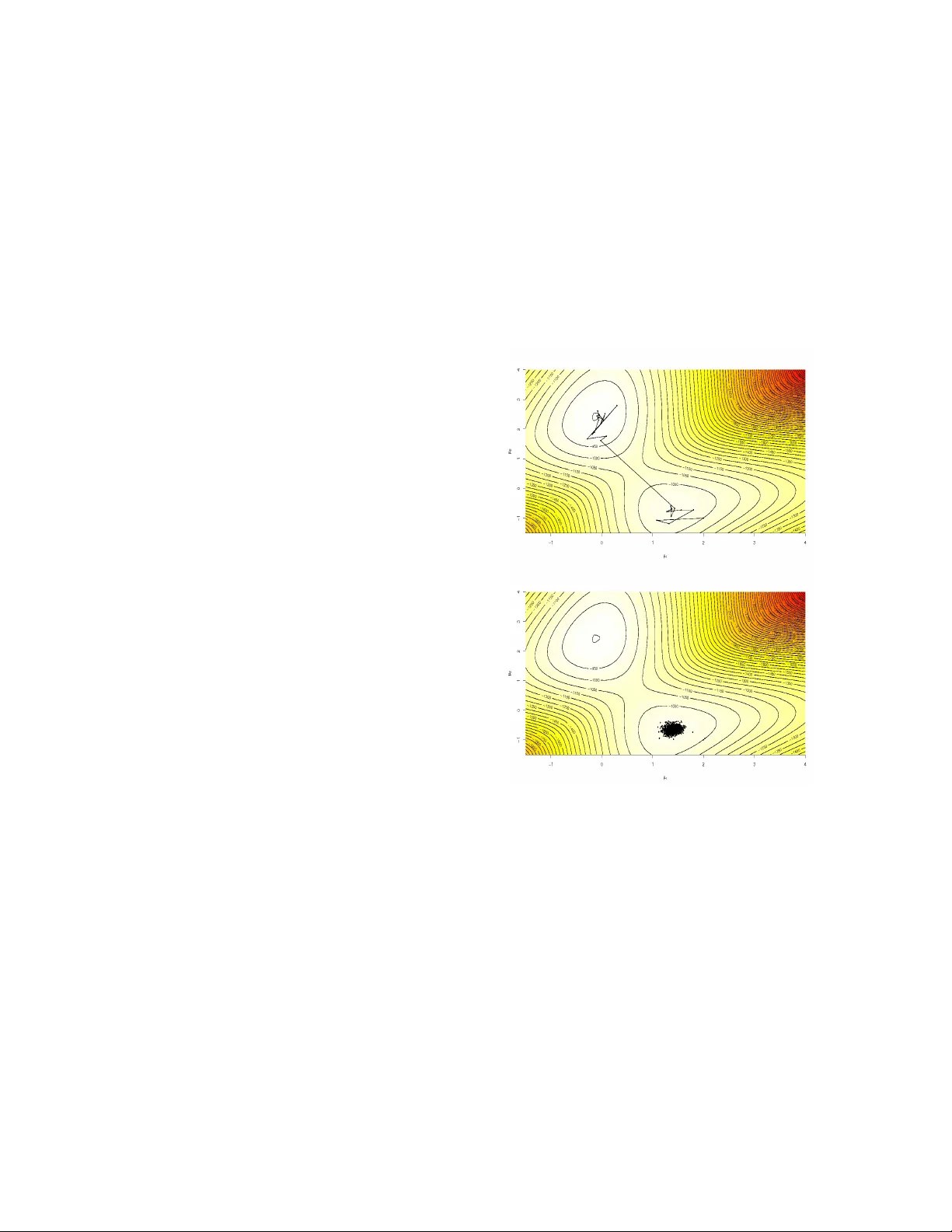

On computational to ols for Ba y esian data analysis Christian P. R ober t and Jean-Michel Marin ∗ No vem b er 17, 2018 Abstract While the previous c hapter (Robert and Rousseau, 2010) addressed the foundational aspects of Bay esian analysis, the curren t chapter details its practical as- p ects through a review of the computational meth- o ds a v ailable for appro ximating Ba yesian procedures. Recen t inno v ations like Monte Carlo Marko v c hain, sequen tial Monte Carlo metho ds and more recen tly Appro ximate Ba yesian Computation tec hniques ha v e considerably increased the p otential for Ba yesian ap- plications and they ha v e consequently op ened new a v en ues for Ba y esian inference, first and foremost Ba y esian mo del choice. Keyw ords: Ba y esian inference, Monte Carlo meth- o ds, MCMC algorithms, Appro ximate Ba y esian Computation techniques, adaptivit y , laten t v ariables mo dels, mo del choice. 1 In tro duction The previous chapter (Rob ert and Rousseau, 2010) has (hop efully) stressed the unique coherence of Ba y esian data analysis —the complete inferential sp ectrum (estimators, predictors, tests, confidence regions, etc.) is deriv ed from a unique p ersp ective, ∗ C.P . Robert is Professor of Statistics at Univer- sit´ e Paris-Dauphine, CEREMADE, 75775 Paris cedex 16, and Head of the Statistics Lab at CREST, IN- SEE, F rance. Email: xian@ceremade.dauphine.fr W ebpage: www.ceremade.dauphine.fr/ ∼ xian Blog: xianblog.wordpress.com Jean-Michel Marin is Professor of Statistics at Institut de Math´ ematiques et Mo d´ elisation de Montpellier, Universit ´ e Mon tpellier 2, Case Courrier 51 34095 Montpellier cedex 5, and associate researcher at CREST, IN- SEE, P aris, Email: jean-michel.marin@math.univ-montp2.fr once b oth a loss function and a prior distribution are constructed—, but it has not adressed the complex issues related to the practical implementation of this analysis that usually inv olves solving integration, op- timisation and implicit equation problems, most of- ten simultaneously . This computational challenge offered by Bay esian inference has led to a sp ecific branch of Ba y esian statistics concerned with these issues, from the early appro ximations of Laplace to the n umerical prob- abilit y dev elopments of the current days. In par- ticular, the past tw ent y y ears hav e witnessed a tremendous surge in computational Bay esian statis- tics, due to the in tro duction of p ow erful approxima- tion methods like Marko v chain (MCMC) and se- quen tial Monte Carlo techniques. T o some exten t, this branc h of Ba yesian statistics is now so in tricately connected with Ba yesian inference that some notions lik e Bay esian mo del choice and Ba yesian mo del com- parison hardly make sense without it. The probabilistic nature of the ob jects, inv olved in those computational c hallenges, as w ell as their p o- ten tialy high dimension, led the comm unity to opt for simulation based, rather than n umerical, solu- tions. While numerical techniques are indeed used to solve some optimisation or some appro ximation setups, even producing sp ecific approaches lik e v aria- tional Bay es (Jaakkola and Jordan 2000), the metho d of choice is sim ulation, i.e. essentially the use of com- puter generated random v ariables and the reliance on the Law of Large Num b ers. F or instance, all ma- jor softw ares that hav e b een built tow ards Bay esian data analysis like WinBUGS and JAGS , are en tirely dep ending up on sim ulation approximations. W e will therefore abstain from describing an y further the n u- 1 merical adv ances found in this area, referring the reader to Spall (2003) and Gentle (2009) for proper co v erage. In this chapter, we thus discuss simulated-based computational methods in connection with a few mo del c hoice examples (Section 2), separating non- Mark o vian (Section 3) from Marko vian (Section 4) solutions. F or detailed entries on Ba y esian com- putational statistics, we refer the reader to Chen et al. (2000), Liu (2001) or Rob ert and Casella (2004, 2009), p oin ting out that b o oks lik e Alb ert (2009) and Marin and Robert (2007) encompass both Bay esian inference and computational metho dologies in a sin- gle unifying p ersp ective. 2 Computational difficulties In this section, w e consider tw o particular types of statistical mo dels with computational c hallenges that can only b e pro cessed via simulation. 2.1 Generalised linear mo dels Generalised linear mo dels (McCullagh and Nelder 1989) are extensions of the standard linear regres- sion model. In particular, they b ypass the compul- sory selection of a single transformation of the data that must ac hiev e the possibly conflicting goals of normalit y and linearity , goals whic h are imp osed by the linear regression model but that are imp ossible to achiev e for binary or count resp onses. Generalised linear mo dels formalise the connection b et w een a resp onse v ariable y ∈ R and a v ector x ∈ R p of explanatory v ariables. They assume that the dep endence of y on x is partly linear in the sense that the conditional distribution of y given x is defined in terms of a linear com bination x T β of the components of x ( x T b eing the transp ose of x ), y | x , β ∼ f ( y | x T β ) . W e use the notation y = ( y 1 , . . . , y n ) for a sample of n resp onses and X = [ x 1 . . . x p ] = x 11 x 12 . . . x 1 p x 21 x 22 . . . x 2 p x 31 x 32 . . . x 3 p . . . . . . . . . . . . x n 1 x n 2 . . . x np = x 1 . . . x n for the n × p matrix of corresp onding explanatory v ariables, p ossibly with x 11 = . . . = x n 1 = 1 ( y and x correspond to generic notations for single-resp onse and cov ariate v ectors, resp ectiv ely). A generalized linear model is specified by t wo func- tions: (i) a conditional density f on y conditional on x that b elongs to an exp onential family and that is parameterized b y an exp ectation parameter µ = µ ( x ) = E [ y | x ] and p ossibly a disp ersion parameter φ > 0 that do es not dep end on x ; and (ii) a link function k that relates the mean µ = µ ( x ) of f and the co v ariate vector, x , through k ( µ ) = ( x T β ), β ∈ R p . F or identifiabilit y reasons, the link function k is a one-to-one function and w e hav e E [ y | x , β , φ ] = k − 1 x T β . W e can thus write the (conditional) likelihoo d as ` ( β , φ | y , X ) = n Y i =1 f y i | x i T β , φ . In practical applications lik e econometrics or ge- nomics, p can b e v ery large and even larger than the n um b er of observ ations n . Ba yesian data analysis on β and possibly φ proceeds through the p osterior dis- tribution of ( β , φ ) given ( X , y ): π ( β , φ | X , y ) ∝ n Y i =1 f y i | x i T β , φ π ( β , φ | X ) (1) whic h is never a v ailable as a standard distribution outside the normal linear mo del. Indeed, the choice of the prior distribution π ( β , φ | X ) dep ends on the prior information av ailable to the mo deller. In cases when 2 φ = 1, we will use the default solution advocated in Marin and Robe rt (2007), namely the extension of Zellner’s g -prior that w as originaly in tro duced for the linear mo del, as discussed in the previous chapter: β | X ∼ N 0 , n X T X − 1 . The motiv ation b ehind the factor n is that the infor- mation brought by the prior is scaled to the level of a single observ ation. Ev en this simple mo deling does not av oid the computational issue of exploiting the p osterior density (1). Example 1. A specific if standard case of gener- alised linear model for binary data is the pr obit mo del : Y (Ω) = { 0 , 1 } and w e ha v e P ( Y = 1 | x ) = 1 − P ( Y = 0 | x ) = Φ( x T β ) , where Φ denotes the standard normal cum ulativ e dis- tribution function. Under the g -prior π ( β | X ) pre- sen ted ab ov e, the corresp onding p osterior distribu- tion, prop ortional to π ( β | X ) n Y i =1 Φ( x i T β ) y i Φ( − x i T β ) 1 − y i , (2) is a v ailable in closed form, up to the normalising con- stan t, but is not a standard distribution and th us cannot b e easily handled! In this c hapter, w e will use as illustrative data the Pima Indian diab etes study av ailable in R (R Dev el- opmen t Core T eam 2008) as the Pima.tr dataset with 332 women registered and consider a probit mo del predicting the presence of diab etes from three pre- dictors, the glucose concen tration (glu), the diastolic blo o d (bp) pressure and the diab etes p edigree func- tion (p ed), P ( y = 1 | x ) = Φ( x 1 β 1 + x 2 β 2 + x 3 β 3 ) . A maxim um likelihoo d estimate of the regression co- efficien ts is provided by R glm function as Deviance Residuals: Min 1Q Median 3Q Max -2.1347 -0.9217 -0.6963 0.9959 2.3235 Coefficients: Estimate Std. Error z value Pr(>|z|) glu 0.012616 0.002406 5.244 1.57e-07 *** bp -0.029050 0.004094 -7.096 1.28e-12 *** ped 0.350301 0.208806 1.678 0.0934 . --- Signif. codes: ’***’ 0.001 ’**’ 0.01 ’*’ 0.05 ’.’ 0.1 Null deviance: 460.25 on 332 degrees of freedom Residual deviance: 386.73 on 329 degrees of freedom AIC: 392.73 Number of Fisher Scoring iterations: 4 the final column of stars indicating a p ossible sig- nificance of the first tw o cov ariates from a classical viewp oin t. J This type of mo del is characteristic of conditional mo dels where there exist a plethora of co v ariates x i — again, potentially more than there are observ ations— and one of the strengths of Bay esian data analysis is to be able to assess the impact of those co v ariates on the dep enden t v ariable y . This ob viously is a special case of mo del choice, where a given set of cov ariates is asso ciated with a sp ecific mo del. As discussed in the previous chapter, the standard Ba yesian solution in this setting is to compute p osterior probabilities or Ba yes factors for all mo dels in comp etition. F or instance, if a single cov ariate, x 3 (p ed) say , is under scrutin y , the Bay es factor asso ciated with the n ull h yp othesis H 0 : β 3 = 0 is B π 01 = m 0 ( y ) m 1 ( y ) (3) where m 0 and m 1 are the marginal densities under the null and the alternative h yp otheses, 1 i.e. m i ( y ) = Z f ( y | β , X i ) π i ( β | X i )d β , π 0 b eing the g -prior excluding the cov ariate x 3 . If w e denote by X 0 the 332 × 2 matrix containing the v alues of glu and bp for the 332 individuals and b y X 1 the 332 × 3 matrix con taining the v alues of the co v ariates glu , bp and ped , the Bay es factor B 01 is giv en by 1 As will become clearer in Section 3.3, Bay es factor appro x- imations are in trinsically link ed with the normalising constants of the posterior distributions of the mo dels under comp etition. W e already stressed in Rob ert and Rousseau (2010) that this is a sp ecial case when normalising constants matter! 3 (2 π ) 1 / 2 n 1 / 2 | ( X T 0 X 0 ) | − 1 / 2 | ( X T 1 X 1 ) | − 1 / 2 × Z R 2 n Y i =1 { 1 − Φ (( X 0 ) i, · β ) } 1 − y i Φ (( X 0 ) i, · β ) − y i exp n − β T ( X T 0 X 0 ) β / 2 n o d β Z R 3 n Y i =1 { 1 − Φ ( X 1 ) i, · β ) } 1 − y i Φ ( X 1 ) i, · β ) − y i exp n − θ T ( X T 1 X 1 ) β / 2 n o d β using the shortcut notation that A i, · is the i -th line of the matrix A . The appro ximation of those marginal densities, whic h are not av ailable outside the normal mo del (see, e.g., Marin and Robert 2007, Chapter 3), is th us paramoun t to decide about the inclusion of av ailable co v ariates. In this setting of selecting cov ariates in a condi- tional mo del, an additional and non-negligible com- putational difficult y is that the n umber of h yp otheses to b e tested is 2 p if each of the p cov ariates is under scrutin y . When p is large, it is simply imp ossible to en vision all p ossible subsets of cov ariates and a fur- ther level of appro ximation must b e accepted, namely that only the most lik ely subsets will b e visited by an appro ximation metho d. 2.2 Challenging lik eliho o ds A further degree of difficulty in the computational pro cessing of Bay esian mo dels is reac hed when the lik eliho o d function itself cannot b e computed in a rea- sonable amoun t of time. Examples ab ound in econo- metrics, physics, astronomy , genetics, and b eyond. The level of difficulty may b e that the computation time of one single v alue of the lik eliho o d function re- quires several seconds, as in the cosmology analysis of W raith et al. (2009) where the lik eliho o d is repre- sen ted by an in v olv ed computer program. It ma y also b e that the only p ossible represen tation of the likeli- ho o d function is as an integral o ver a p ossibly large n um b er of latent v ariables of a join t (unobserved) lik eliho o d. Example 2. (Con tin uation of Example 1) Although the lik eliho o d of a probit model is a v ailable in closed form, this probit mo del can be represented as a natu- ral laten t v ariable mo del. If we introduce an artificial sample z = ( z 1 , . . . , z n ) of n indep enden t laten t v ari- ables asso ciated with a standard regression mo del, i.e. such that z i | β ∼ N x i T β , 1 , where the x i T ’s are the p -dimensional co v ariates and β is the vec- tor of regression coeffic ien ts, then y = ( y 1 , . . . , y n ) defined by y i = I z i > 0 is a probit sample. Indeed, giv en β , the y i ’s are indep endent Bernoulli rv’s with P ( Y i = 1 | x i , β ) = Φ x i T β . J Suc h latent v ariables mo dels are quite p opular in most applied fields. F or instance, a sto c hastic v olatil- it y mo del (Jacquier et al. 1994, Chib et al. 2002) in- cludes as man y (volatilit y) latent v ariables as obser- v ations. In a time series with thousands of p erio ds, this feature means a considerable increase in the com- plexit y of the problem, as the volatilities cannot b e in tegrated analytically and thus need to b e simulated. Similarly , phylogenetic trees (REF) that reconstruct ancestral histories in p opulation genetics are random trees and a nuisance parameter for inference ab out ev olutionary mec hanisms, but, once more, they can- not b e integrated. Example 3. Capture-recapture exp eriments are used in ecology to assess the size and the patterns of a p opulation of animals b y a series of captures where captured animals are marked, i.e. individualy iden ti- fied as having b een captured once, and released. The o ccurence of recaptures is then informativ e ab out the whole p opulation. A longer description is pro vided in Marin and Rob ert (2007, Chapter 5), but we only consider here a three stage op en p opulation capture- recapture mo del, where there is a probability q for eac h individual in the p opulation to lea ve the p op- ulation betw een eac h capture episo de. Due to this p ossible emigration of animals, the asso ciated like- liho o d in volv es unobserved indicators and w e study here the case where only the individuals captured during the first capture exp eriment are marked and subsequen t recaptures are registered. This mo del is th us describ ed via the summary statistics n 1 ∼ B ( N , p ) , r 1 | n 1 ∼ B ( n 1 , q ) , r 2 | n 1 , r 1 ∼ B ( n 1 − r 1 , q ) , c 2 | n 1 , r 1 ∼ B ( n 1 − r 1 , p ) , and c 3 | n 1 , r 1 , r 2 ∼ B ( n 1 − r 1 − r 2 , p ) , 4 where only the first capture size, n 1 , the first recap- ture size, c 2 , and the second recapture size, c 3 , are ob- serv ed. The n umbers of marked individuals remo ved at stages 1 and 2, r 1 and r 2 , are not observed and are therefore latent v ariables of the mo del. If we incor- p orate those missing v ariables within the parameters, the likelihoo d ` ( N , p, q , r 1 , r 2 | n 1 , c 2 , c 3 ) is given by N n 1 p n 1 (1 − p ) N − n 1 n 1 r 1 q r 1 (1 − q ) n 1 − r 1 × n 1 − r 1 c 2 p c 2 (1 − p ) n 1 − r 1 − c 2 × n 1 − r 1 r 2 q r 2 (1 − q ) n 1 − r 1 − r 2 × n 1 − r 1 − r 2 c 3 p c 3 (1 − p ) n 1 − r 1 − r 2 − c 3 and, if we use the improp er prior π ( N , p, q ) = N − 1 I [0 , 1] ( p ) I [0 , 1] ( q ), the p osterior on the ( N , p, q , r 1 , r 2 | n 1 , c 2 , c 3 ) is av ailable up to a constant. Summing o ver all p ossible v alues of ( r 1 , r 2 ) to obtain the posterior associated with the “observ ed” lik eliho o d creates some difficulties when n 1 is large. Indeed, this summation typically in tro duces a lot of n umerical errors. The dataset asso ciated with this example is ex- tracted from Marin and Robert’s (2007, Chapter 5) eur o dip dataset and is related to a p opulation of birds called Eur op e an dipp ers . 2 F or the 1981 captures, we ha v e n 1 = 22, c 2 = 11, and c 3 = 6. J The follo wing example is a different case where the lik eliho o d is missing a term that cannot b e reconsti- tuted b y completion and th us requires a custom-built solution. Example 4. The k -ne ar est-neighb our procedure is a classification pro cedure that uses a training dataset ( y i , x i ) 1 ≤ i ≤ n for prediction purp oses. The observ- ables y i are class lab els, y i ∈ { 1 , . . . , G } , while the x i are cov ariates, p ossible of large dimension. When 2 European dippers are strongly dependent on streams, feed- ing on underwater inv ertebrates, and their nests are alwa ys close to water. The capture–recapture data contained in the eur o dip dataset cov ers 7 years of observ ations in a zone of 200 km 2 in eastern F rance. observing a new cov ariate x n +1 , the corresp onding unobserv ed lab el y n +1 is predicted as the most com- mon class lab el found in the k nearest neighbours of x n +1 in X = { x 1 , . . . , x n } , the neighbours of a co- v ariate vector b eing defined by the usual Euclidean norm. Cucala et al. (2009) ha v e proposed a proba- bilistic model for this classification mechanism. They first prop ose to mo del the distribution of y : f ( y | X , β , k ) = exp β n X i =1 X ` ∼ k i δ y i ( y ` ) k !, Z ( β , k ) (4) where δ x ( y ) denotes the Kro enec ker delta, Z ( β , k ) is the normalising constant of the density and where ` ∼ k i means that the summation is tak en ov er the observ ations x i for whic h x ` is a k -nearest neigh b our. The motiv ation for this mo delling is that the full con- ditionals corresp onding to (4) are given by f ( y i | y − i , X , β , k ) ∝ exp ( β /k X ` ∼ k i δ y i ( y ` ) + X i ∼ k ` δ y ` ( y i ) !) . (5) The normalising constan t Z ( β , k ) cannot therefore b e expressed in closed form. Indeed, the computation of this constant calls for a summation ov er G n terms. Based on (5), the predictive distribution of a new observ ation y n +1 giv en its cov ariate x n +1 and the training sample ( y , X ) is, for g = 1 , . . . , G, P ( y n +1 = g | x n +1 , y , X , β , k ) ∝ exp β /k X ` ∼ k ( n +1) δ g ( y ` ) + X ( n +1) ∼ k ` δ y ` ( g ) , where X ` ∼ k ( n +1) δ g ( y ` ) and X ( n +1) ∼ k ` δ y ` ( g ) are the num b ers of observ ations in the training dataset from class g among the k nearest neigh b ours of x n +1 and among the observ ations for whic h x n +1 is a k -nearest neighbour, resp ectively J 5 3 Mon te Carlo Metho ds The generic approach for solving computational prob- lems related with Bay esian analysis is to use sim ula- tion, i.e. to pro duce via a computer program a sam- ple from the p osterior distribution and to use the sim ulated sample to approximate the pro cedures of in terest. This approach goes under the generic name of Mon te Carlo metho ds, in reference to the casino of Monaco (Metrop olis 1987). Recall that a stan- dard Bay esian estimate is the p osterior exp ectation of functions h ( θ ) of the parameter, I = Z Θ h ( θ ) π ( θ | y ) d θ . A formal Mon te Carlo algorithm asso ciated with the target I pro ceeds as follows: Basic Mon te Carlo Algorithm F o r a computing effo rt N 1) Set i = 1 , 2) Generate indep endent θ ( i ) from the p osterior distribution π ( ·| y ) , 3) Set i = i + 1 , 4) If i ≤ N , return to 2) . The corresp onding crude Monte Carlo appro xima- tion of I is given b y: b I MC = 1 N N X i =1 h θ ( i ) . When the computing effort N grows to infinit y , the appro ximation b I MC con v erges to I and the sp eed of con v ergence is 1 / √ N if h is square-in tegrable against π ( θ | y ) (Robert and Casella 2004). The assessmen t of this conv ergence relies on the Cen tral Limit Theorem, as describ ed in Robert and Casella (2009, Chapter 4). 3.1 Imp ortance sampling and resam- pling A generalisation of the basic Mon te Carlo algorithm stems from an alternativ e representation of the ab ov e in tegral I , c hanging both the in tegrating densit y and the integrand: I = Z Θ h ( θ ) π ( θ | y ) g ( θ ) g ( θ ) d θ , (6) where the support of the posterior distribution π ( ·| y ) is included in the supp ort of g ( · ). Imp ortance Sampling Scheme F o r a computing effo rt N 1) Set i = 1 , 2) Generate indep endent θ ( i ) from the importance distribution g ( · ) , 3) Calculate the imp ortance w eight ω ( i ) = π θ ( i ) | y g θ ( i ) , 4) Set i = i + 1 , 5) If i ≤ N , return to 2) . The corresp onding imp ortance sampling approxi- mation of I is given b y b I IS g = 1 N N X i =1 ω ( i ) h θ ( i ) . (7) F rom a formal p ersp ective, the p osterior density g ( θ ) = π ( θ | y ) is a p ossible (and the most natural) c hoice for the importance function g ( θ ), leading back to the basic Monte Carlo algorithm. How ever, (6) states that a single integral ma y b e appro ximated in infinitely man y wa ys. Ma yb e surprisingly , the c hoice of the posterior g ( θ ) = π ( θ | y ) is generaly far from b e- ing the most efficient c hoice of imp ortance function. While the represen tation (6) holds in wide generalit y (the only requiremen t is that the supp ort of π ( ·| x ) should b e included in the one of g ( · )), the choice of g ( · ) is fundamental to pro vide go o d approximations of I . P o or c hoices of g ( · ) lead to unreliable approxi- mations: for instance, if Z Θ h 2 ( θ ) ω 2 ( θ ) g ( θ )d θ 6 is infinite, the v ariance of the estimator (7) is also infinite (Rob ert and Casella 2009, Chapters 3 and 4) and then (7) cannot b e used for approximation purp oses. W e stress here that, while Monte Carlo methods do not formaly suffer from the “curse of dimensionality” in the sense that, con trary to numerical metho ds, the error of the Monte Carlo estimators is alwa ys decreas- ing in 1 / √ N , notwithstanding the dimension of the parameter space Θ, the difficulty increases with the dimension p of Θ in that deriving satisfactory impor- tance sampling distributions b ecomes more difficult as p gets larger. As detailed in Section 3.2, a so- lution for deriving satisfactory imp ortance functions in large dimensions is to turn to iterative v ersions of imp ortance sampling. Example 5 (Contin uation of Example 1) . In the case of the probit model, the p osterior distribution, prop ortional to (2) cannot b e easily simulated, even though it is bounded from abov e b y the prior densit y π ( β | X ). 3 In this setting, we prop ose to use as imp ortance distribution a normal distribution with mean equal to the maximum likelihoo d (ML) estimate of β and with co v ariance matrix equal to the estimated co v ari- ance matrix of the ML estimate. While, in general, those normal distributions pro vide crude appro xima- tions to the p osterior distributions, the sp ecific case of the probit mo del sho ws this is an exceptionally go o d appro ximation to the p osterior. F or instance, if we compare the w eights resulting from using this normal distribution with the weigh ts resulting from using the prior distribution as imp ortance function, the range of the former w eigh ts is m uch more concen- trated than for the later w eights, as sho wn b y Figure 1. (Note that, due to the missing normalising con- stan t in π ( β | X , y ), the weigh ts are computed with the pro duct (2) as the target function.) J As noted in the ab o v e example, a common feature of Ba yesian in tegration settings is that the normalis- ing constant of the posterior distribution, m ( y ), can- 3 This feature means that the accept-reject algorithm (Robert and Casella 2009, Chapter 2) could formally b e used for the simulation of π ( β | X , y ), but the efficiency of this ap- proach would b e quite p o or. not be computed in closed form. In that case, ω ( i ) and b I IS g cannot be used and they are replaced b y the unormalised version ω ( i ) = m ( y ) π ( θ ( i ) | y ) /g ( θ ( i ) ) and by the self-normalized version b I SNIS g = N X i =1 ω ( i ) h θ ( i ) N X i =1 ω ( i ) , resp ectiv ely . The self-normalized b I SNIS g also con- v erges to I since P N i =1 ω ( i ) con v erges to the normal- ising constant m ( y ). The weigh ts ( i = 1 , . . . , T ) ω ( i ) = ω ( i ) N X j =1 ω ( j ) are then called normalise d weights and, since they sum up to one, they induce a probability distribution on the sample of θ ( i ) ’s. When c hosing an imp ortance function, the adequation with the p osterior distribu- tion needs to get higher as the dimension p increases. Otherwise, very few w eights ω ( i ) are different from 0 and even the largest w eight, whic h is then close to 1, ma y corresp ond to an unlikely v alue for the pos- terior distribution, its closeness to 1 b eing then an artifact of the renormalisation and not an indicator of its w orth. A related measure of p erformance of the imp ortance function is given b y the effe ctive sample size ESS N = 1 N X i =1 ω ( i ) 2 . F or a uniformly weigh ted sample, ESS N is equal to N , while, for a completely degenerated sample where all imp ortance weigh ts but one are zero, ESS N is equal to 1. The effective sample size th us ev aluates the size of the iid sample equiv alen t to the weigh ted sample and allows for a direct comparison of samplers. Example 6 (Contin uation of Example 5) . F or the t w o schemes tested in the probit mo del of Example 5, using the same num b er N = 10 , 000 of sim ulations, the effective sample sizes are T 1 = 6291 . 45 and T 2 = 9 . 77 for the ML based and prior normal imp ortance functions, resp ectively . J 7 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● Prior sampling Importance sampling −700 −600 −500 −400 −300 −200 Prior sampling −700 −500 −300 0 20 40 60 80 100 140 Importance sampling −210 −205 −200 −195 0 200 400 600 800 Figure 1: Boxplot and histograms of the logarithms of the imp ortance weigh ts corresp onding to 10 4 sim u- lations from the prior distribution (prior sampling) and from the MLE normal approximation (imp ortanc e sampling) in the setup of the Pima Indian diab etes study of Example 1. The graphs for the prior sampling are excluding the 1690 zero weigh ts from the representation. While imp ortance sampling is primarily an in tegral appro ximation metho dology , it can also b e used for sim ulation purp oses, via the sampling imp ortanc e r e- sampling (SIR) metho dology of Rubin (1988). Giv en a weigh ted sample ( θ (1) , ω (1) ) , . . . , ( θ ( N ) , ω ( N ) ) sim- ulated from g ( · ), it is possible to deriv e a sample appro ximately distributed from the target distribu- tion π ( ·| x ), ˜ θ (1) , . . . , ˜ θ ( M ) , b y resampling from the instrumen tal sample θ (1) , . . . , θ ( N ) using the imp or- tance weigh ts, that is, ˜ θ ( i ) = θ ( J i ) , 1 ≤ i ≤ M , where the random v ariables J 1 , . . . , J M are dis- tributed as P J l = i θ (1) , . . . , θ ( N ) = ω ( i ) (see, e.g., Rob ert and Casella 2009, Chapter 3). 3.2 Sequen tial imp ortance sampling In general, importance sampling tec hniques require a rather careful tuning to b e of any use, esp ecially in large dimensions. While MCMC methods (Section 4) are a ready-made solution to this problem, giv en that they can break the global distribution in to distribu- tions with smaller dimensions, the recent literature has seen an extension of importance sampling that adaptiv ely calibrates some importance functions to- w ards more similarit y with the target densit y (Capp ´ e et al. 2004, Del Moral et al. 2006, Douc et al. 2007a, Capp ´ e et al. 2008). The metho d is called sequential Monte Carlo (SMC) b ecause it evolv es along a time axis either through the target—as in regular sequential statis- tical problems—or through the importance function, and also p opulation Monte Carlo (PMC), following Iba (2000), b ecause it produces p opulations rather than p oin ts. 4 Although the idea has connections with the earlier particle filter literature (Gordon et al. 1993, Doucet et al. 2001, Capp ´ e et al. 2004), the main principle of this metho d is to build a sequence of increasingly b etter—against a p erformance criterion that may b e the en tropy divergence from the tar- get distribution or the v ariance of the corresp onding estimator of a fixed integral—proposal distributions through a sequence of sim ulated samples (whic h thus b eha v e like p opulations). Giv en that the v alidation 4 This simulated p opulation is then used to devise new and hopefully impro ved importance (or proposals) functions. 8 of the tec hnique is still based on sampling imp or- tance resampling principles, the resulting dep endence on past samples can be arbitrarily complex, while the appro ximation to the target remains v alid (un bi- ased) at e ach iter ation and while it do es not require asymptotic conv ergence as MCMC metho ds do (see Section 4). A very recent connection b etw een b oth approac hes can b e found in Andrieu et al. (2010) and the discussion therein. While the follo wing algorithm do es app ear as a re- p eated (or sequential) sampling imp ortance resam- pling algorithm (Rubin 1988), the ma jor update is the op en choice of q it in the first step, since q it can dep end on all past simulated samples as w ell as on the index of the currently sim ulated v alue. F or instance, in Capp´ e et al. (2008), mixtures of standard kernels are used with an up date of the weigh ts and of the parameters of those kernels at eac h iteration in such a wa y that the entrop y distance of the corresp ond- ing importance sampling estimator to the target are decreasing from one iteration to the other. General Population Mon te Carlo Algo- rithm F o r a computing effo rt N 1) Generate ( θ i, 0 ) 1 ≤ i ≤ N iid ∼ q 0 and compute ω i, 0 = π ( θ i, 0 | y ) /q 0 ( θ i, 0 ) , 2) Generate ( J i, 0 ) 1 ≤ i ≤ N iid ∼ M (1 , ( ω i, 0 ) 1 ≤ i ≤ N ) and set ˜ θ i, 0 = θ J i, 0 , 0 (1 ≤ i ≤ N ) , 3) Set t = 1 , 4) Conditionally on past θ i,j ’s and ˜ θ i,j ’s, generate indep endently θ i,t ∼ q i,t and compute ω i,t = π ( θ i,t | y ) /q i,t ( θ i,t ) , 4) Generate ( J i,t ) 1 ≤ i ≤ N iid ∼ M (1 , ( ω i,t ) 1 ≤ i ≤ N ) and set ˜ θ i,t = θ J i,t ,t (1 ≤ i ≤ N ) , 5) Set t = t + 1 , 6) If t ≤ N return to 4) . In this representation, while the choice of q it is completely open, a conv enient case is when the θ i,t ’s are simulated either from a non-parametric kernel- lik e prop osal of the form n X j =1 % j,t − 1 K t ( ˜ θ j,t − 1 , θ ) , where K t is a Mark ov kernel mo dified at each iter- ation (Douc et al. 2007b) or from a mixture of the form n X j =1 % j,t − 1 g j ( ˜ θ j,t − 1 | ξ j,t − 1 ) , where g j is a standard distribution from an exp o- nen tial family parameterised b y ξ , b oth parameters and w eights being up dated at each iteration (Capp´ e et al. 2008). An illustration of the p erformances of this PMC algorithm for a cosmological target is giv en in W raith et al. (2009), while an ABC extension has b een introduced by Beaumont et al. (2009). Since PMC pro duces at each iteration a v alid ap- pro ximation to the target distribution, the p opula- tions ˜ θ i,t pro duced at each of those iterations should not b e dismissed for appro ximation purp oses. Cor- n uet et al. (2009) hav e developped a nearly opti- mal strategy recycling all past simulations, based on the multiple mixture technique of Owen and Zhou (2000) and called adaptive multiple imp ortanc e sam- pling (AMIS). 3.3 Appro ximations of the Bay es fac- tor As already explained abov e, when testing for an null h yp othesis (or a mo del) H 0 : θ ∈ Θ 0 against the alternativ e hypothesis (or the alternativ e model) H 1 : θ ∈ Θ 1 , the Bay es factor is defined b y B 01 ( y ) = Z Θ 0 f 0 ( y | θ 0 ) π 0 ( θ 0 )d θ 0 Z Θ 1 f 1 ( y | θ 1 ) π 1 ( θ 1 )d θ 1 . The computation of Monte Carlo approximations of the Ba yes factor (3) has undergone rapid c hanges in the last decade as illustrated by the b o ok of Chen et al. (2000) and the recen t survey of Robert and 9 Marin (2010). W e assume here that the prior dis- tributions under b oth the null and the alternativ e h yp otheses are prop er, as, typically they should be. (In the case of common n uisance parameters, a com- mon improper prior measure can b e used on those, see Berger et al. (1998), Marin and Robert (2007). This complicates the computational asp ect, as some metho ds like crude Mon te Carlo cannot be used at all, while others are more prone to suffer from infi- nite v ariance.) In that setting, the most elementary appro ximation to B 01 ( y ) consists in using a ratio of t w o standard Monte Carlo approximations based on sim ulations from the corresponding priors. Indeed, for i = 0 , 1: Z Θ i f i ( y | θ ) π i ( θ )d θ = E π i [ f ( y | θ )] . Then, if θ 0 , 1 , . . . , θ 0 ,n 0 and θ 1 , 1 , . . . , θ 1 ,n 1 are tw o in- dep enden t samples generated from the prior distribu- tions π 0 and π 1 , resp ectively , n − 1 0 P n 0 j =1 f 0 ( y | θ 0 ,j ) n − 1 1 P n 1 j =1 f 1 ( y | θ 1 ,j ) is a strongly consisten t estimator of B 01 ( y ). Defining tw o imp ortance distributions with densi- ties g 0 and g 1 , with the same supports as π 0 and π 1 , resp ectiv ely , w e ha v e: B 01 ( y ) = E g 0 f 0 ( y | θ ) π 0 ( θ ) g 0 ( θ ) E g 1 f 1 ( y | θ ) π 1 ( θ ) g 1 ( θ ) . Therefore, giv en tw o independent samples gener- ated from distributions g 0 and g 1 , resp ectively , θ 0 , 1 , . . . , θ 0 ,n 0 and θ 1 , 1 , . . . , θ 1 ,n 1 , the corresp onding imp ortance sampling estimate of B 01 ( y ) is n − 1 0 P n 0 j =1 f 0 ( y | θ 0 ,j ) π 0 ( θ 0 ,j ) /g 0 ( θ 0 ,j ) n − 1 1 P n 1 j =1 f 1 ( y | θ 1 ,j ) π 1 ( θ 1 ,j ) /g 1 ( θ 1 ,j ) . Compared with the standard Mon te Carlo approxi- mation ab ov e, this approach offers the adv antage of op ening the choice of the representation in that it is p ossible to pick importance distributions g 0 and g 1 that lead to a significan t reduction in the v ariance of the imp ortance sampling estimate. In the sp ecial case when the parameter spaces of b oth mo dels under comparison are iden tical, i.e. Θ 0 = Θ 1 , a bridge sampling approac h (Meng and W ong 1996) is based on the general represen tation B 01 ( y ) = Z f 0 ( y | θ ) π 0 ( θ ) α ( θ ) π 1 ( θ | y )d θ Z f 1 ( y | θ ) π 1 ( θ ) α ( θ ) π 0 ( θ | y )d θ ≈ n 1 − 1 n 1 X j =1 f 0 ( y | θ 1 ,j ) π 0 ( θ 1 ,j ) α ( θ 1 ,j ) n 0 − 1 n 0 X j =1 f 1 ( y | θ 0 ,j ) π 1 ( θ 0 ,j ) α ( θ 0 ,j ) where θ 0 , 1 , . . . , θ 0 ,n 0 and θ 1 , 1 , . . . , θ 1 ,n 1 are t wo inde- p enden t samples coming from the posterior distribu- tions π 0 ( θ | y ) and π 1 ( θ | y ), respectively . That applies for any positive function α such that the upp er inte- gral exists. Some choices of α can lead to v ery p o or p erformances of the metho d in connection with the harmonic mean approach (see b elow), but there ex- ists a quasi-optimal solution, as provided b y Gelman and Meng (1998): α ? ( y ) ∝ 1 n 0 π 0 ( θ | y ) + n 1 π 1 ( θ | y ) . This optim um cannot b e used p er se , since it re- quires the normalising constants of b oth π 0 ( θ | y ) and π 1 ( θ | y ). As suggested by Gelman and Meng (1998), an appro ximate but practical version uses iterative v ersions of α ? , the curren t approximation of α ? b eing used to pro duce a new bridge sampling approxima- tion of B 01 ( y ), which in its turn is used to set a new appro ximation of α ? . Note that this solution recy- cles sim ulations from b oth p osteriors, which is quite appropriate since one mo del is selected via the Ba yes factor, instead of using an imp ortance weigh ted sam- ple common to b oth approximations. W e will see be- lo w an alternativ e representation of the bridge factor that bypasses this difficulty (if difficult y there is!). Those deriv ations are how ever restricted to the case when b oth mo dels ha ve the same complexit y and 10 th us they do not apply to embedded models, when Θ 0 ⊂ Θ 1 in such a w ay that θ 1 = ( θ , ψ ), i.e. when the submo del corresp onds to a sp ecific v alue ψ 0 of ψ : f 0 ( y | θ ) = f 1 ( y | θ , ψ 0 ). The extension of the most adv anced bridge sam- pling strategies to such cases requires the introduc- tion of a pseudo-p osterior density, ω ( ψ | θ , y ), on the parameter that do es not app ear in the embedded mo del, in order to reconstitute the equiv alence b e- t w een both parameter spaces. Indeed, if w e augmen t π 0 ( θ | y ) with ω ( ψ | θ , y ), we obtain a joint distribution with density π 0 ( θ | y ) × ω ( ψ | θ , y ) on Θ 1 . The Bay es factor B 01 ( y ) can then b e expressed as Z Θ 1 f 1 ( y | θ , ψ 0 ) π 0 ( θ ) α ( θ , ψ ) π 1 ( θ , ψ | y )d θ ω ( ψ | θ , y ) d ψ Z Θ 1 f 1 ( y | θ , ψ ) π 1 ( θ , ψ ) α ( θ , ψ ) π 0 ( θ | y ) ω ( ψ | θ , y )d θ d ψ , (8) b ecause it is clearly indep enden t from the choice of b oth α ( θ , ψ ) and ω ( ψ | θ , y ). Obviously , the p erfor- mances of the appro ximation ( n 1 ) − 1 n 1 X j =1 f 1 ( y | θ 1 ,j , ψ 0 ) π 0 ( θ 1 ,j ) ω ( ψ 1 ,j | θ 1 ,j , y ) α ( θ 1 ,j , ψ 1 ,j ) ( n 0 ) − 1 n 0 X j =1 f 1 ( y | θ 0 ,j , ψ 0 ,j ) π 1 ( θ 0 ,j , ψ 0 ,j ) α ( θ 0 ,j , ψ 0 ,j ) , where ( θ 0 , 1 , ψ 0 , 1 ) , . . . , ( θ 0 ,n 0 , ψ 0 ,n 0 ) and ( θ 1 , 1 , ψ 1 , 1 ) , . . . , ( θ 1 ,n 1 , ψ 1 ,n 1 ) are t wo inde- p enden t samples generated from distributions π 0 ( θ | y ) × ω ( ψ | θ , y ) and π 1 ( θ , ψ | y ), resp ectiv ely , do dep end on this completion b y the pseudo-p osterior as well as on the function α ( θ , ψ ). Chen et al. (2000) establish that the asymptotically optimal choice for ω ( ψ | θ , y ) is the ob vious one, namely ω ( ψ | θ , y ) = π 1 ( ψ | θ , y ) , whic h most often is una v ailable in closed form (esp e- cially when considering that the normalising constan t of ω ( ψ | θ , y ) is required in (8)). Another approach to approximating the marginal lik eliho o d is based on harmonic means. If θ i,j ∼ π i ( · ) ( i = 1 , 2 , j = 1 , . . . , N ), the prior distribution, then 1 N N X j =1 1 f i ( y | θ i,j ) is an unbiased estimator of 1 /m i ( y ) (Newton and Raftery 1994). This generic harmonic mean is to o often asso ciated with an infinite v ariance to ever be recommended (Neal 1994), but the representation (Gelfand and Dey 1994) ( i = 0 , 1) E π i ϕ i ( θ ) π i ( θ ) f i ( y | θ ) y = Z ϕ i ( θ ) π i ( θ ) f i ( y | θ ) π i ( θ ) f i ( y | θ ) m i ( y ) d θ = 1 m i ( y ) holds, no matter what the density ϕ i is, provided ϕ i ( θ i ) = 0 when π i ( θ i ) f i ( y | θ i ) = 0. This repre- sen tation is remark able in that it allows for a direct pro cessing of Monte Carlo (or MCMC) output from the p osterior distribution π i ( θ i | y ). As with imp or- tance sampling approximations, the v ariability of the corresp onding estimator of B 01 ( y ) will b e small if the distributions ϕ i ( i = 0 , 1) are close to the cor- resp onding p osterior distributions. How ever, as op- p osed to usual importance sampling constrain ts, the densit y ϕ i m ust hav e lighter—rather than fatter— tails than π i ( · ) f i ( y |· ) for the approximation of the marginal m i ( x ) N − 1 N X j =1 ϕ i ( θ i,j ) π i ( θ i,j ) f i ( y | θ i,j ) − 1 , when θ i,j ∼ π i ( θ | y ), to enjoy finite v ariance. F or in- stance, using ϕ i ’s with constrained s upports derived from a Monte Carlo sample, like the conv ex hull of the sim ulations corresp onding to the 10% or to the 25% HPD regions—that again is easily deriv ed from the sim ulations—is b oth completely appropriate and implemen table (Rob ert and W raith 2009). Example 7 (Contin uation of Example 5) . In the case of the probit mo del, if we use as distributions ϕ i the normal distributions with means equal to the ML estimates and cov ariance matrices equal to the estimated co v ariance matrices of the ML estimates, the results of Rob ert and Marin (2010), obtained o v er 100 replications with N = 20 , 000 sim ulations eac h are repro duced in Figure 2. They compare b oth approac hes—harmonic mean and imp ortance sampling—to the approximation of the Bay es factor 11 ● ● ● Harmonic mean Importance sampling 3.102 3.104 3.106 3.108 3.110 3.112 3.114 3.116 Figure 2: Mon te Carlo experiment comparing the v ariabilit y of the approximations to the Bay es fac- tor B 10 ( y ) based on harmonic mean and imp ortance sampling for the Pima Indian diab etes study of Ex- ample 1. The boxplots are obtained for 100 repli- cations of 20 , 000 simulations from the normal im- p ortance sampling distribution. (Sour c e: R ob ert and Marin 2010) . testing for the significance of the p ed cov ariate and sho w a very clear proximit y b etw een both imp ortance solutions in this sp ecial case, ev en though the imp or- tance sampling estimate is muc h faster to compute. Sim ulation from the p osterior distribution is obtained b y an MCMC algorithm describ ed in Section 4. J A final approach to the appro ximation of Bay es fac- tors that is worth exploring is Chib’s (1995) metho d. First, it is a direct application of Ba yes’ theorem: giv en y ∼ f i ( y | θ ), w e hav e that m i ( y ) = f i ( y | θ ) π i ( θ ) π i ( θ | y ) , for all θ ’s (since b oth the lhs and the rhs of this equalit y are constan t in θ ). Therefore, if an arbitrary v alue θ ∗ , is selected and if a go o d approximation to π i ( θ ∗ | y ) is av ailable, denoted ˆ π i ( θ ∗ | y ), Chib’s (1995) appro ximation to the marginal likelihoo d (and hence to the Bay es factor) is m i ( y ) = f i ( y | θ ∗ ) π i ( θ ∗ ) ˆ π i ( θ ∗ | y ) . (9) In a general setting, ˆ π i ( θ ∗ | y ) may b e the normal ap- pro ximation based on the MLE, already used in the imp ortance sampling, bridge sampling and harmonic mean solutions, but this is unlikely to b e accurate in a general framework. A second solution is to use a nonparametric appro ximation based on a prelimi- nary MCMC sample, even though the accuracy may also suffer in large dimensions. In the sp ecial set- ting of laten t v ariables mo dels introduced in Section 2.2, Chib’s (1995) approximation is particularly at- tractiv e as there exists a natural appro ximation to π k ( θ | y ), based on the Rao–Blac kwell (Gelfand and Smith 1990) estimate ˆ π k ( θ ∗ | y ) = 1 N N X j =1 π k ( θ ∗ | y , z j ) , where the z j ’s are the laten t v ariables simulated by the MCMC sampler. The estimate ˆ π k ( θ ∗ | y ) is indeed a parametric unbiased approximation of π k ( θ ∗ | y ) that conv erges with rate O(1 / √ N ). It obviously re- quires the full conditional density π k ( θ ∗ | y , z ) to b e a v ailable in closed form (constant included) but, for instance, this is the case for the probit mo del of Ex- ample 1. Example 8 (Contin uation of Example 7) . Figure 3 repro duces the results of Rob ert and Marin (2010) obtained for 100 replications of Chib’s approxima- tions of B 01 ( y ) for the same test as in Example 7 with N = 20 , 000 sim ulations for each appro xima- tion of m i ( y ) ( i = 0 , 1). While Chib’s method is usually very reliable and dominates importance sam- pling, the incredibly goo d appro ximation pro vided b y the asymptotic normal distribution implies that, in this highly sp ecial case, Chib’s metho d is dominated b y b oth the imp ortance sampling and the harmonic mean estimates. J While the metho ds presen ted abov e cannot rank ed in a fixed order for all t yp es of problems, the con- clusion of Robert and Marin 2010 is worth rep eating here. In cases when a go o d approximation g ( · ) to the true p osterior distribution of a mo del is av ail- able, it should b e used in a regular imp ortance sam- pling ev aluation of the marginal likelihoo d. Giv en 12 ● ● ● Chib Harmonic mean IS 3.06 3.08 3.10 3.12 3.14 Figure 3: Mon te Carlo experiment comparing the v ariabilit y of the appro ximations to the Bay es factor B 10 ( y ) based on Chib’s represen tation and on har- monic mean and imp ortance sampling for the Pima Indian diab etes study of Example 1. The b oxplots are obtained for 100 replications of 20 , 000 simulations from the p osterior and the imp ortance sampling dis- tributions, respectively . (Sour c e: R ob ert and Marin 2010) . that this go o d fit rarely o ccurs in complex and new settings, more generic solutions like Chib’s (1995) should b e used, whenever av ailable. (When used with a b ounded supp ort on the ϕ i ’s, the harmonic mean approximation can be considered as a generic metho d.) At last, when faced with a large num b er or ev en an infinit y of mo dels to compare, the only a v ailable solution is to use mo del jump techniques lik e reversible jump MCMC (Green 1995). 4 Mark o v c hain Mon te Carlo metho ds 4.1 Basics Giv en the difficulties inv olved in constructing an effi- cien t imp ortance function in complex setting, Mark ov c hain Monte Carlo (MCMC) metho ds (Gelfand and Smith 1990, Rob ert and Casella 2004, 2009, Marin and Rob ert 2007) try to ov ercome some of the limi- tations of regular Mon te Carlo methods (particularly dimension-wise) by simulating a Marko v chain with stationary (and limiting) distribution the target dis- tribution. 5 There exist fairly generic w ays of pro duc- ing such chains, including the Metrop olis–Hastings and Gibbs algorithms defined below. Besides the fact that stationarity of the target distribution is enough to justify a sim ulation method b y Mark ov chain gen- eration, the idea at the core of MCMC algorithms is that lo c al exploration, when prop erly w eighted, can lead to a v alid ( glob al ) representation of the distri- bution of interest. This includes for instance using only comp onent-wise (and hence small-dimensional) sim ulations—that escap e (to some extent) the curse of dimensionality—as in the Gibbs sampler. This very short introduction may give the impres- sion that MCMC simulation is only sup erficially dif- feren t from other Monte Carlo metho ds. When com- pared with alternatives such as imp ortance sampling, MCMC metho ds differ on tw o issues: 5 The theoretical foundations of MCMC algorithms are both sound and simple: as stressed b y Tierney (1994) and Mengersen and Tweedie (1996), the existence of a stationary distribution almost immediately v alidates the principle of a simulation algorithm based on a Marko v k ernel. 13 1. the output ( θ ( t ) ) of an MCMC algorithm is only asymptotically distributed from the target distri- bution. While this usually is irrelev ant, in that the θ ( t ) ’s are very quic kly distributed from that target, it may also happ en that the algorithm fails to conv erge in the prescrib ed num b er of it- erations and thus that the resulting estimation is biased; 2. the sequence ( θ ( t ) ) b eing a Marko v c hain, the θ ( t ) ’s are correlated and therefore this mo difies the ev aluation of the asymptotic v ariance as w ell as the effective sample size asso ciated with the output. W e also note here that trying to pro duce an iid se- quence out of a MCMC method is highly inefficien t and thus not recommended. 4.2 Metrop olis–Hastings algorithm The Metrop olis–Hastings algorithm truly is the generic MCMC method in that it offers a straight- forw ard and universal solution to the problem of sim ulating from an arbitrary 6 p osterior distribution π ( θ | x ) ∝ f ( y | θ ) π ( θ ): starting from an arbitrary p oin t θ 0 , the corresp onding Marko v chain explores the surface of this p osterior distribution using an in- ternal Mark ov k ernel (prop osal) q ( θ | θ ( t − 1) ) that pro- gressiv ely visits the whole range of the possible v alues of θ . This internal Marko v kernel should b e irre- ducible with resp ect to the target distribution (that is, the Marko v chain asso ciate whith the proposal q ( ·|· ) should b e able to visit the whole supp ort of the target distribution). The reason why the resulting c hain does conv erge to the target distribution despite the arbitrary choice of q ( θ | θ ( t − 1) ) is that the pro- p osed v alues are sometimes rejected b y a step that relates with the accept-reject algorithm. 6 The only restriction is that this function is known up to a normalising constant. Metrop olis–Hastings Algorithm F o r a computing effo rt N 1) Cho ose θ (0) , 2) Set t = 1 , 3) Generate θ 0 from q ( ·| θ ( t − 1) ) , 4) Generate u from U [0 , 1] , 5) If u ≤ π ( θ 0 ) f ( y | θ 0 ) q ( θ ( t − 1) | θ 0 ) π ( θ ( t − 1) f ( y | θ ( t − 1) ) q ( θ 0 | θ ( t − 1) ) , set θ ( t ) = θ 0 else θ ( t ) = θ ( t − 1) , 6) Set t = t + 1 , 7) If t ≤ N return to 3) . A generic c hoice for q ( θ | θ ( t − 1) ) is the random w alk prop osal: q ( θ | θ ( t − 1) ) = g ( θ − θ ( t − 1) ) with a symmet- ric function g , which provides a simplified acceptance probabilit y . Indeed, in that case, step 5) of the pre- vious algorithm is replaced with: if u ≤ π ( θ 0 ) f ( y | θ 0 ) π ( θ ( t − 1) ) f ( y | θ ( t − 1) ) , set θ ( t ) = θ 0 else θ ( t ) = θ ( t − 1) . This ensures that v alues θ 0 that are more likely than the curren t θ ( t − 1) are alw a ys accepted while v alues that are less lik ely are sometimes accepted. Example 9 (Con tinuation of Example 1) . If w e con- sider the Pima Indian diab etes dataset with only its first tw o co v ariates, the parameter β is of dimension 2 and the random walk prop osal can be easily im- plemen ted. W e use for g a normal distribution with co v ariance matrix the asymptotic co v ariance matrix ˆ Σ of the MLE and the proposed v alue β 0 is then sim- ulated at iteration t as β 0 ∼ N 2 ( β ( t − 1) , ˆ Σ) . The MLE ma y also b e used as starting v alue for the chain. Figure 4 illustrates the b ehaviour of the Metrop olis–Hastings algorithm for this dataset, the lhs graph describing the path of the sub chain ( β (100 t ) ) and the rhs detailing the first comp onent 14 β ( t ) 1 for 5000 ≤ t ≤ 6000. Although this is not clearly visible on the rhs graph, the acceptance rate of the algorithm is close to 50%, whic h means that half of the prop osed β ’s are rejected. 7 Using a co v ariance matrix that is five times larger leads to an accep- tance rate of 25%, while the larger 10 ˆ Σ produces an acceptance rate of 15%. J Finding the prop er scale is not alwa ys as straigh t- forw ard as in Example 8 and asymptotic normal ap- pro ximations to the p osterior distribution may b e v ery inefficient. While the Metropolis–Hastings algo- rithm recov ers b etter from facing large-dimensional problems than standard imp ortance sampling tech- niques, this still is a strong limitation to its use in large-dimensional setups. 4.3 Gibbs sampling In contrast, the alternative Gibbs sampler is an at- tractiv e algorithm for large-dimensional problems b e- cause it naturally fits the hierarchical structures of- ten present in Ba y esian mo dels and more gener- ally in graphical and latent v ariable mo dels. The fundamen tal strength of the Gibbs sampler is its abilit y to break a joint target distribution like π ( θ 1 , . . . , θ p | y ) in the corresp onding conditional dis- tributions π i ( θ i | y , θ − i ) ( i = 1 , . . . , n ) and to simulate successiv ely from these lo w-dimensional targets: 7 This rate happens to be almost optimal for small dimen- sions (Gelman et al. 1996). p -comp onen t systematic scan Gibbs sam- pler F o r a computing effo rt N 1) Cho ose θ (0) , 2) Set t = 1 , 3) Generate θ ( t ) 1 from π 1 θ 1 | y , θ ( t − 1) − 1 , 4) Generate θ ( t ) 2 from π 2 θ 2 | y , θ ( t ) 1 , θ ( t − 1) − (1:2) , 5) . . . 6) Generate θ ( t ) p ∼ π p θ p | y , θ ( t ) (1:( p − 1)) , 7) Set t = t + 1 , 8) If t ≤ N return to 3) . While this algorithm seems restricted to mostly hi- erarc hical multidimensional mo dels, the sp ecial case of the slic e sampler (Robert and Casella, 2004, Chap- ter 8) sho ws that the Gibbs sampler applies in a wide v ariet y of mo dels. Example 10. (Contin uation of Example 2) As noted in Example 2, the probit mo del allo ws for a latent v ariable representation based on the artificial normal v ariable z t connected with the observ ed v ariable y t . This representation op ens the do or to a Gibbs sam- pler (Alb ert and Chib 1993) aimed at the joint p os- terior distribution of ( β , z ) giv en y . Indeed, the con- ditional distribution of the laten t v ariable z t giv en β and y t , z t | y t , β ∼ N + x T t β , 1 , 0 if y t = 1 , N − x T t β , 1 , 0 if y t = 0 , (10) is clearly a v ailable, 8 . The corresponding full condi- tional on the parameters is given b y the standard 8 Here, N + x T t β , 1 , 0 denotes the normal distribution with mean x T t β and v ariance 1 that is left-truncated at 0, while N − x T t β , 1 , 0 denotes the symmetrical normal distribution that is right-truncated at 0. 15 0.008 0.010 0.012 0.014 0.016 0.018 0.020 −0.040 −0.035 −0.030 −0.025 −0.020 β β 1 β β 2 ● ● ● ● ● 5000 5200 5400 5600 5800 6000 0.010 0.015 0.020 iterations β β 1 Figure 4: Random-walk Metrop olis–Hastings algorithm applied to the Pima Indian diab etes dataset. The left graph describ es the path of the subchain ( β (100 t ) ). The righ t graph shows the path of the first component c hain β ( t ) 1 for 5000 ≤ t ≤ 6000. normal distribution (which do es not depend on y ) β | z ∼ N n n + 1 ( X T X ) − 1 X T z , n n + 1 ( X T X ) − 1 . (11) Therefore, given the curren t v alue of β , one cycle of the Gibbs algorithm pro duces a new v alue for z as simulated from the conditional distribution (10), whic h, when substituted into (11), pro duces a new v alue for β . Although it do es not impact the long- term prop erties of the sampler, the starting v alue of β ma y once again b e taken as the maximum lik eliho o d estimate to av oid (useless) burning steps in the Gibbs sampler. The implementation of this Gibbs sampler is straigh tforw ard. There is no parameter to cali- brate (as opp osed to the scale in the random-w alk Metrop olis–Hastings scenario). When comparing Figure 4 and 5, the raw plot of the sequence ( β ( t ) 1 ) sho ws that the mixing b ehaviour of the Gibbs sam- pling c hain is sup erior to the one for the Metropolis– Hastings chain. J 4.4 Hybrid solutions Mixing b oth Metrop olis–Hastings and Gibbs algo- rithms often result in b etter p erformances like faster con v ergence of the resulting Marko v chain, the former algorithm b eing often used for global exploration of the target and the later for lo cal improv ement. A classic h ybrid algorithm replaces a non- a v ailable Gibbs up date by a Metrop olis–Hastings step. Another hybrid solution alternates Gibbs and Metrop olis–Hastings prop osals. The corresp onding algorithms are v alid: they pro duce ergo dic Marko v c hains with the p osterior target as stationary distri- bution. Example 11 (Contin uation of Example 5) . F or p = 1, the probit mo del can b e ov er-parameterised as P ( Y i = 1 | x i ) = 1 − P ( Y i = 0 | x i ) = Φ( x i β /σ ) , while only dep ending on β /σ . Using a proper prior lik e π ( β , σ 2 | x ) = π ( β | x ) π ( σ 2 | x ) ∝ σ − 4 exp {− 1 /σ 2 } exp {− β 2 / 50) , 16 0.008 0.010 0.012 0.014 0.016 0.018 −0.035 −0.030 −0.025 −0.020 β β 1 β β 2 ● ● ● ● ● 5000 5200 5400 5600 5800 6000 0.010 0.015 0.020 Iterations β β 1 Figure 5: Gibbs sampling algorithm applied to the Pima Indian diab etes dataset. The left graph describ es the path of the sub chain ( β (100 t ) ). The right graph shows the path of the first comp onent chain β ( t ) 1 for 5000 ≤ t ≤ 6000. the corresponding Gibbs sampler sim ulates β and σ 2 alternativ ely , from π ( β | x , y , σ ) ∝ n Y i =1 Φ( x i β /σ ) y i Φ( − x i β /σ ) 1 − y i π ( β | x ) and π ( σ 2 | x , y , β ) ∝ n Y i =1 Φ( x i β /σ ) y i Φ( − x i β /σ ) 1 − y i π ( σ 2 | x ) resp ectiv ely . Since b oth of these conditional dis- tributions are non-standard, we replace the direct sim ulation by one-dimensional Metrop olis–Hastings steps, 9 using normal N ( β ( t ) , 1) and log-normal LN (log σ ( t ) , . 04) random walk prop osals, resp ec- tiv ely . (The scales w ere found b y trial-and-error.) F or a simulated dataset of 1 , 000 p oints, the con tour plot of the log-p osterior distribution is giv en in Figure 6, along with the last 1 , 000 points of a corresp onding MCMC sample after 100 , 000 iterations. This graph 9 In this Metropolis-within-Gibbs strategy , note that a sin- gle step of a Metropolis–Hastings mov e is sufficient to v alidate the algorithm, since stationarity , not convergence, is the issue. Figure 6: Contour plot of the log-p osterior distri- bution for a probit sample of 1 , 000 observ ations, along with 1 , 000 p oin ts of an MCMC sample (Sour c e: R ob ert and Casel la 2004) . sho ws a very satisfactory repartition of the sim ulated parameters o ver the likelihoo d surface, with higher concen trations near the largest p osterior regions. J Let us note as a conclusion to this short section that an alternativ e meaning for hybrid solutions is the sim ultaneous use of different Marko v k ernels (Tierney 17 1994). A mixture of MCMC kernels do es remain an MCMC kernel with the same stationary distribution and its performances are at least as goo d as the best comp onen t in the mixture. There is therefore very little to say against advocating this extension. 4.5 Scaling and adaptivit y A difficulty with Metropolis–Hastings algorithms, in- cluding random w alk versions, is the calibration of the prop osal distribution: this proposal must be suf- ficien tly related to the target distribution so that, in a reasonable n umber of steps, the whole supp ort of this distribution can b e visited. If the scale of the random w alk prop osal is to o small, this will not happ en as the algorithm stays “too local” and, if for instance there are several mo des on the target, the algorithm may remain trapped within one modal region b ecause it cannot reac h other mo dal regions with jumps of to o small a magnitude. Example 12. F or a sample y 1 , . . . , y n from the mix- ture distribution p N ( µ 1 , σ 2 ) + (1 − p ) N ( µ 2 , σ 2 ) where both p and σ 2 are kno wn, the p osterior distri- bution asso ciated with the prior N (0 , 10 σ 2 ) on b oth µ 1 and µ 2 is multimodal, with a ma jor mode close to the true v alue of µ 1 and µ 2 (when n is large enough) and a secondary and spurious mo de (that stems from the nonindentifiable case p = 0 . 5). When running a random w alk Metrop olis–Hastings algorithm on this mo del, with a normal proposal N 2 (( µ ( t ) 1 , µ ( t ) 2 ) , τ I 2 ), a small scale τ prev ents the Marko v c hain from visit- ing the ma jor mo de. Figure 7 compares tw o choices of τ for the same dataset: for τ = 1, the spurious mo de can b e escap ed but for τ = . 3 the chain re- mains trapp ed in that starting mo de. J The larger the dimension p is, the harder the de- termination of the scale is, b ecause a. the curse of dimensionality implies that there is an increasingly important part of the space with zero probability under the target; Figure 7: Ev olution of a random walk Metrop olis– Hastings c hain on a mixture log-p osterior surface for n = 500 observ ations and (top) τ = 1 and 1 , 000 iterations; (b ottom) τ = . 3 and 10 , 000 iterations. 18 b. the knowledge and in tuition ab out the modal re- gions get weak er (for complex distributions, it is imp ossible to iden tify none but a few of the mo des); c. the prop er scaling of a random w alk prop osal in- v olv es a symmetric ( p, p ) matrix. Ev en when diagonal, this matrix gets harder to scale as the dimension increases (unless one resorts to a Gibbs like implementation, where each direction is scaled separately). In addition to these difficulties, learning ab out the sp ecificities of the target distribution while running an MCMC algorithm and tuning the prop osal ac- cordingly , i.e. constructing an adaptiv e MCMC pro- cedure, is difficult b ecause this cancels the Mark ov prop ert y of the original me thod and thus jeopar- dizes conv ergence. F or instance, Figure 8 shows the discrepancy b et w een an histogram of a sim ulated Mark o v chain and the theoretical limit (solid curve) when the prop osal distribution at time T is a kernel appro ximation based on the first T − 1 simulations of the “chain”. Similarly , using an on-line scaling of the algorithm against the empirical acceptance rate in or- der to reach a golden n um b er like 0 . 234 (see Rob ert and Casella 2004, Note 7.8.4) is inherently flaw ed in that the attraction of a modal region may give a false sense of conv ergence and may thus lead to a c hoice of too small a scale, simply because other modes will fail to b e visited during the scaling exp eriment. Ho w ev er, there are algorithms that preserv e ergo d- icit y (conv ergence to the target) while implemen ting adaptivit y . See, e.g., Gilks et al. (1998) who use re- generation to create block indep endence and preserv e Mark o vianit y on the paths rather than on the v alues, Haario et al. (1999, 2001) who deriv e a prop er adap- tation sc heme by using a ridge-like correction to the empirical v ariance in v ery large dimensions for satel- lite imaging data, and Andrieu et al. (2005) who pro- p ose a general framework of v alid adaptivity based on sto c hastic optimisation and the Robbin-Monro al- gorithm. More recently , Rob erts and Rosenthal (2007) con- sider basic ergo dicity prop erties of adaptive Marko v c hain Monte Carlo algorithms under minimal as- sumptions, using coupling constructions. They Figure 8: Sample (left) pro duced by 50 , 000 iter- ations of a nonparametric adaptiv e MCMC scheme and comparison (right) of its distribution with the target distribution (solid curve) . (Sour c e: R ob ert 2004) pro v e conv ergence in distribution and a weak law of large num b ers. Moreov er, in Rob erts and Rosen- thal (2009), they inv estigate the use of adaptive MCMC algorithms to automatically tune the Marko v c hain parameters during a run. Examples include the adaptiv e Metrop olis multiv ariate algorithm of Haario et al. (2001), Metrop olis-within-Gibbs algo- rithms for nonconjugate hierarchical mo dels, region- ally adjusted Metrop olis algorithms, and logarith- mic scalings. Rob erts and Rosenthal (2009) present some computer simulation results that indicate that the algorithms p erform very well compared to non- adaptiv e algorithms, even in high dimensions. 5 Appro ximate Ba y esian com- putation techniques There exist situations where the likelihoo d function f ( y | θ ) is o verly exp ensive or even imp ossible to cal- culate, but where sim ulations from the densit y f ( y | θ ) are reasonably pro duced. A generic class of such sit- uations is made by latent v ariable mo dels where the analytic in tegration of the latent v ariables is imp ossi- ble, while handling the latent v ariables as additional parameters in a joint distribution causes any MCMC 19 to face conv ergence problems. Another illustration is given b y in v erse problems where computing the function f ( y | θ ) for a given pair ( y , θ ) inv olves solv- ing a n umerical equation. In suc h cases, it is almost imp ossible to use the computational to ols presented in the previous section to sample from the p osterior distribution π ( θ | y ). Approximate Bay esian compu- tation (ABC) is an alternative to such tec hniques that only requires being able to sample from the lik eliho o d f ( ·| θ ). It was first prop osed for p opulation genetic mo dels (Beaumont et al. 2002) but applies in muc h wider generality . Lik eliho o d free rejection sampling F o r a computing effo rt N 1) Set i = 1 , 2) Generate θ 0 from the prio r distribution π ( · ) , 3) Generate z from the lik eliho o d f ( ·| θ 0 ) , 4) If ρ ( η ( z ) , η ( y )) ≤ , set θ i = θ 0 and i = i + 1 , 5) If i ≤ N , return to 2) . This likelihoo d free algorithm samples from the marginal in z of the following joint distribution: π ( θ , z | y ) ∝ π ( θ ) f ( z | θ ) I P , y ( z ) with the tuning parameters as • ρ ( · , · ) a distance, • η ( · ) a summary statistic, • > 0 a tolerance level, • P , y = { z | ρ ( η ( z ) , η ( y )) < } . The idea behind ABC (Beaumont et al. 2002) is that the summary statistics coupled with a small tolerance should pro vide a goo d approximation of the posterior distribution: π ( θ | y ) = Z π ( θ , z | y )d z ≈ π ( θ | y ) . It has b een shown in Marjoram et al. (2003) that it is p ossible to construct a Metrop olis–Hastings algorithm that samples from π ( θ , z | y ), and the marginally from π ( θ | y ); this algorithm only requires the ability to sample from f ( ·| θ ). This is the likeli- ho o d free MCMC sampler: Lik eliho o d free MCMC sampler F o r a computing effo rt N 1) Use the lik eliho o d free rejection sampling to get a realization θ (0) from the ABC ta rget distribu- tion π ( θ | y ) , 2) Set t = 1 , 3) Generate θ 0 from the Mark ov k ernel q ·| θ ( t − 1) , 4) Generate z from the lik eliho o d f ( ·| θ 0 ) , 5) Generate u from U [0 , 1] , 6) If u ≤ π ( θ 0 ) q ( θ ( t − 1) | θ 0 ) π ( θ ( t − 1) ) q ( θ 0 | θ ( t − 1) ) I P , y ( z ) , set θ ( t ) = θ 0 else θ ( t ) = θ ( t − 1) , 7) Set t = t + 1 , 8) If t ≤ N return to 3) . Rejection sampling and MCMC methods can p er- form p o orly if the tolerance lev el is small. Con- sequen tly v arious sequential Mon te Carlo algorithms ha v e b een constructed as an alternative to b oth meth- o ds. F or instance, Beaumont et al. (2009) prop osed an ABC version of the P opulation Mon te Carlo algo- rithm presen ted ab ov e. The k ey idea is to decompose the difficult issue of sampling from π ( θ , z | y ) into a series of simpler subproblems. The algorithm b egins at time 0 sampling from π 0 ( θ , z | y ) with a large v alue 0 , then simulating from an increasing difficult se- quence of target distribution π t ( θ , z | y ), that is when t < t − 1 . Example 13 (Con tinuation of Example 8) . Figure 9 pro vides an illustration of the abov e algorithm when applied to the probit mo del with the three cov ari- ates describ ed in Example 1. In this artificial case, 20 −0.005 0.010 0.020 0.030 0 20 40 60 80 100 Density −0.05 −0.03 −0.01 0 20 40 60 80 Density −1.0 0.0 1.0 2.0 0.0 0.2 0.4 0.6 0.8 1.0 Density Figure 9: Comparison b etw een density estimates of the marginal posterior distributions of β 1 (left), β 2 (cen ter) and β 3 (righ t) obtained b y ABC (in red) and MCMC samples (in black) in the setup of the Pima Indian diab etes study . the ABC outcome can b e compared with the MCMC “exact” sim ulation describ ed ab ov e and the result is striking in that the ABC approximation is con- founded with the exact p osterior densities. The tun- ing of the ABC algorithm is to use 10 6 sim ulations o v er 10 iterations, with b ounds t set as the 1% quan- tile of the sim ulated ρ ( η ( z ) , η ( y )), ρ chosen as the Euclidean distance, and η ( z ) as the predictive dis- tribution based on the current parameter β , made of the Φ( x T i β )’s, while η ( y ) is the predictiv e distribution based on the MLE ˆ β ( y ) made of the Φ( x T i ˆ β ( y ))’s. In this sp ecial case w e are therefore av oiding the sim- ulation of the observ ations themselves as predictive functions are av ailable. This choice reduces the v ari- abilit y in the divergence betw een η ( z ) and η ( y ), and explains for the very goo d fit b etw een the densities. J 6 Final remarks This tutorial is necessarily incomplete and biased: the insistance on mo del choice and on v ariable di- mension mo dels is also a reflection of the author’s o wn interests. Others w ould hav e rather c hosen to stress the relev ance of these sim ulation metho ds for optimal design M ¨ uller 1999, M ¨ uller et al. 2004 in con- jonction with simulated annealing (e.g. Andrieu and Doucet 2000, Doucet et al. 2002), for non-parametric regression (Holmes et al. 2002) or for the analysis of contin uous time sto chastic processes (Dellap ortas et al. 2004, Beskos et al. 2006). That suc h a w ealth of choices is a v ailable indicates that the field still un- dergo es a formidable expansion that should benefit a wide range of areas and disciplines and, conv ersely , that the contin ued attraction of new areas within the orbit of Ba yesian computational metho ds backfeeds their creativity by in tro ducing new c hallenges and new paradigms. Ac kno wledgemen t This work had b een partly supp orted b y the Agence Nationale de la Rec herc he (ANR, 212, rue de Bercy 75012 Paris) through the 2009-2012 pro jects Big’MC and EMILE. References Alber t, J. (2009). Bayesian Computation with R . 2nd ed. Springer-V erlag, New Y ork. Alber t, J. and Chib, S. (1993). Bay esian analysis of binary and p olychotomous resp onse data. J. A meric an Statist. Asso c. , 88 669–679. Andrieu, C. and Doucet, A. (2000). Simu- lated annealing for Bay esian estimation of hidden Mark o v mo dels. IEEE T r ans. Information The ory , 46 994–1004. Andrieu, C. , Doucet, A. and Holenstein, R. (2010). Particle Mark ov chain Mon te Carlo (with discussion). J. R oyal Statist. So ciety Series B , 72 . (to app ear). 21 Andrieu, C. , Moulines, E. and Priouret, P. (2005). Stability of sto chastic approximation un- der verifiable conditions. SIAM J. Contr ol Optim. , 44 283–312. Beaumont, M. , Cornuet, J.-M. , Marin, J.-M. and Rober t, C. (2009). Adaptive approximate Ba y esian computation. Biometrika , 96(4) 983– 990. Beaumont, M. , Zhang, W. and Balding, D. (2002). Approximate Bay esian computation in p opulation genetics. Genetics , 162 2025–2035. Berger, J. , Pericchi, L. and V arsha vsky, J. (1998). Ba y es factors and marginal distributions in inv arian t situations. Sankhya A , 60 307–321. Beskos, A. , P ap aspiliopoulos, O. , Rober ts, G. and Fearnhead, P. (2006). Exact and compu- tationally efficien t likelihoo d-based estimation for discretely observed diffusion pro cesses (with dis- cussion). J. R oyal Statist. So ciety Series B , 68 333–382. Capp ´ e, O. , Douc, R. , Guillin, A. , Marin, J.- M. and Rober t, C. (2008). Adaptiv e imp ortance sampling in general mixture classes. Statist. Com- put. , 18 447–459. Capp ´ e, O. , Guillin, A. , Marin, J.-M. and R ober t, C. (2004). Population Monte Carlo. J. Comput. Gr aph. Statist. , 13 907–929. Capp ´ e, O. , Moulines, E. and R yd ´ en, T. (2004). Hidden Markov Mo dels . Springer-V erlag, New Y ork. Chen, M. , Shao, Q. and Ibrahim, J. (2000). Monte Carlo Metho ds in Bayesian Computation . Springer-V erlag, New Y ork. Chib, S. (1995). Marginal lik eliho o d from the Gibbs output. J. Americ an Statist. Asso c. , 90 1313–1321. Chib, S. , Nadari, F. and Shephard, N. (2002). Mark o v chain Mon te Carlo metho ds for sto chastic v olatilit y mo dels. J. Ec onometrics , 108 281–316. Cornuet, J.-M. , Marin, J.-M. , Mira, A. and R ober t, C. (2009). Adaptive multiple imp ortance sampling. T ec h. Rep. arXiv.org:0907.1254, CERE- MADE, Universit ´ e Paris Dauphine. Cucala, L. , Marin, J.-M. , Rober t, C. and Tit- terington, D. (2009). Bay esian inference in k - nearest-neigh b our classification mo dels. J. Ameri- c an Statist. Asso c. , 104 (485) 263–273. Del Moral, P. , Doucet, A. and Jasra, A. (2006). Sequential Mon te Carlo samplers. J. R oyal Statist. So ciety Series B , 68 411–436. Dellapor t as, P. , P ap aspiliopoulos, O. and R ober ts, G. (2004). Bay esian inference for non- Gaussian Ornstein-uhlen b eck stochastic v olatility pro cesses. J. R oyal Statist. So ciety Series B , 66 369–393. Douc, R. , Guillin, A. , Marin, J.-M. and R ober t, C. (2007a). Con vergence of adaptive mixtures of importance sampling schemes. Ann. Statist. , 35(1) 420–448. Douc, R. , Guillin, A. , Marin, J.-M. and R ober t, C. (2007b). Minim um v ariance im- p ortance sampling via p opulation Monte Carlo. ESAIM: Pr ob ability and Statistics , 11 427–447. Doucet, A. , de Freit as, N. and Gordon, N. (2001). Se quential Monte Carlo Metho ds in Pr ac- tic e . Springer-V erlag, New Y ork. Doucet, A. , Godsill, S. and Rober t, C. (2002). Marginal maximum a p osteriori estimation using Mark o v chain Monte Carlo. Statistics and Com- puting , 12 77–84. Gelf and, A. and Dey, D. (1994). Bay esian mo del c hoice: asymptotics and exact calculations. J. R oyal Statist. So ciety Series B , 56 501–514. Gelf and, A. and Smith, A. (1990). Sampling based approac hes to calculating marginal densities. J. A meric an Statist. Asso c. , 85 398–409. Gelman, A. , Gilks, W. and Rober ts, G. (1996). Efficien t Metrop olis jumping rules. In Bayesian 22 Statistics 5 (J. Berger, J. Bernardo, A. Dawid, D. Lindley and A. Smith, eds.). Oxford Univ ersity Press, Oxford, 599–608. Gelman, A. and Meng, X. (1998). Simulating nor- malizing constan ts: F rom imp ortance sampling to bridge sampling to path sampling. Statist. Scienc e , 13 163–185. Gentle, J. E. (2009). Computational Statistics. Springer–V erlag, New Y ork. Gilks, W. , R ober ts, G. and Sahu, S. (1998). Adaptiv e Mark ov chain Monte Carlo. J. Ameri- c an Statist. Asso c. , 93 1045–1054. Gordon, N. , Salmond, J. and Smith, A. (1993). A nov el approac h to non-linear/non-Gaussian Ba y esian state estimation. IEEE Pr o c e e dings on R adar and Signal Pr o c essing , 140 107–113. Green, P. (1995). Reversible jump MCMC computation and Bay esian mo del determination. Biometrika , 82 711–732. Haario, H. , Saksman, E. and T amminen, J. (1999). Adaptive proposal distribution for random w alk Metrop olis algorithm. Computational Statis- tics , 14(3) 375–395. Haario, H. , Saksman, E. and T amminen, J. (2001). An adaptiv e Metrop olis algorithm. Bernoul li , 7 223–242. Holmes, C. , Denison, D. , Mallick, B. and Smith, A. (2002). Bayesian metho ds for nonlin- e ar classific ation and r e gr ession . John Wiley , New Y ork. Iba, Y. (2000). P opulation-based Monte Carlo algo- rithms. T r ans. Jap anese So c. Artificial Intel l. , 16 279–286. Jaakkola, T. and Jordan, M. (2000). Bay esian parameter estimation via v ariational metho ds. Statistics and Computing , 10 25–37. Jacquier, E. , Polson, N. and Rossi, P. (1994). Ba y esian analysis of sto chastic v olatilit y mo dels (with discussion). J. Business Ec onomic Stat. , 12 371–417. Liu, J. (2001). Monte Carlo Str ate gies in Scientific Computing . Springer-V erlag, New Y ork. Marin, J.-M. and Rober t, C. (2007). Bayesian Cor e . Springer-V erlag, New Y ork. Marjoram, P. , Molitor, J. , Plagnol, V. and T a v ar ´ e, S. (2003). Marko v chain Mon te Carlo without lik eliho o ds. Pr o c. Natl. A c ad. Sci. USA , 100 15324–15328. McCullagh, P. and Nelder, J. (1989). Gener al- ize d Line ar Mo dels . Chapman and Hall, New Y ork. Meng, X. and W ong, W. (1996). Simulating ratios of normalizing constan ts via a simple iden tity: a theoretical exploration. Statist. Sinic a , 6 831–860. Mengersen, K. and Tweedie, R. (1996). Rates of con v ergence of the Hastings and Metropolis algo- rithms. A nn. Statist. , 24 101–121. Metropolis, N. (1987). The b eginning of the Monte Carlo metho d. L os Alamos Scienc e , 15 125–130. M ¨ uller, P. (1999). Sim ulation based optimal design. In Bayesian Statistics (J. Bernardo, J. Berger, A. Dawid and A. Smith, eds.), vol. 6. Springer–V erlag, New Y ork, 459–474. M ¨ uller, P. , P armigiani, G. , Rober t, C. and R ousseau, J. (2004). Optimal sample size for m ultiple testing: the case of gene expression mi- croarra ys. J. A meric an Statist. Asso c. , 99 990– 1001. Neal, R. (1994). Con tribution to the discus- sion of “Approximate Ba yesian inference with the w eigh ted likelihoo d b o otstrap” by Michael A. New- ton and Adrian E. Raftery . J. R oyal Statist. So ciety Series B , 56 (1) 41–42. Newton, M. and Rafter y, A. (1994). Approxi- mate Ba yesian inference b y the w eighted likelihoo d b o otstrap (with discussion). J. R oyal Statist. So- ciety Series B , 56 1–48. O wen, A. and Zhou, Y. (2000). Safe and effective imp ortance sampling. J. A meric an Statist. Asso c. , 95 135–143. 23 R Development Core Team (2008). R: A L an- guage and Envir onment for Statistic al Comput- ing . R F oundation for Statistical Computing, Vi- enna, Austria. ISBN 3-900051-07-0, URL http: //www.R- project.org . R ober t, C. (2004). Bay esian computational meth- o ds. In Handb o ok of Computational Statistics (J. Gentle, W. H¨ ardle and Y. Mori, eds.). Springer- V erlag, New Y ork. R ober t, C. and Casella, G. (2004). Monte Carlo Statistic al Metho ds . 2nd ed. Springer-V erlag, New Y ork. R ober t, C. and Casella, G. (2009). Intr o duc- ing Monte Carlo Metho ds with R . Springer-V erlag, New Y ork. R ober t, C. and Marin, J.-M. (2010). Imp ortance sampling metho ds for Ba yesian discrimination b e- t w een embedded mo dels. In F r ontiers of Statisti- c al De cision Making and Bayesian A nalysis (M.-H. Chen, D. K. Dey , P . Mueller, D. Sun and K. Y e, eds.). (T o app ear.). R ober t, C. and Wraith, D. (2009). Computa- tional metho ds for Ba yesian model choice. In Max- Ent 2009 pr o c e e dings (P . M. Goggans and C.-Y. Chan, eds.), vol. 1193. AIP . R ober ts, G. and Rosenthal, J. (2007). Coupling and ergo dicity of adaptive Mark o v Chain Mon te carlo algorithms. J. Applie d Pr ob a. , 44(2) 458– 475. R ober ts, G. and R osenthal, J. (2009). Examples of adaptive MCMC. J. Comp. Gr aph. Stat. , 18 349–367. R ubin, D. (1988). Using the SIR algorithm to sim u- late p osterior distributions. In Bayesian Statistics 3: Pr o c e e dings of the Thir d V alencia International Me eting, June 1-5, 1987 (J. Bernardo, M. Degro ot, D. Lindley and A. Smith, eds.). Clarendon Press. Sp all, J. C. (2003). Intr o duction to Sto chastic Se ar ch and Optimization . John Wiley , New Y ork. Tierney, L. (1994). Marko v chains for explor- ing p osterior distributions (with discussion). A nn. Statist. , 22 1701–1786. Wraith, D. , Kilbinger, M. , Benabed, K. , Capp ´ e, O. , Cardoso, J.-F. , F or t, G. , Pr unet, S. and Rober t, C. (2009). Estimation of cosmo- logical parameters using adaptive imp ortance sam- pling. Physic al R eview D , 80 023507. 24

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment