Divide & Concur and Difference-Map BP Decoders for LDPC Codes

The "Divide and Concur" (DC) algorithm, recently introduced by Gravel and Elser, can be considered a competitor to the belief propagation (BP) algorithm, in that both algorithms can be applied to a wide variety of constraint satisfaction, optimizati…

Authors: Jonathan S. Yedidia, Yige Wang, Stark C. Draper

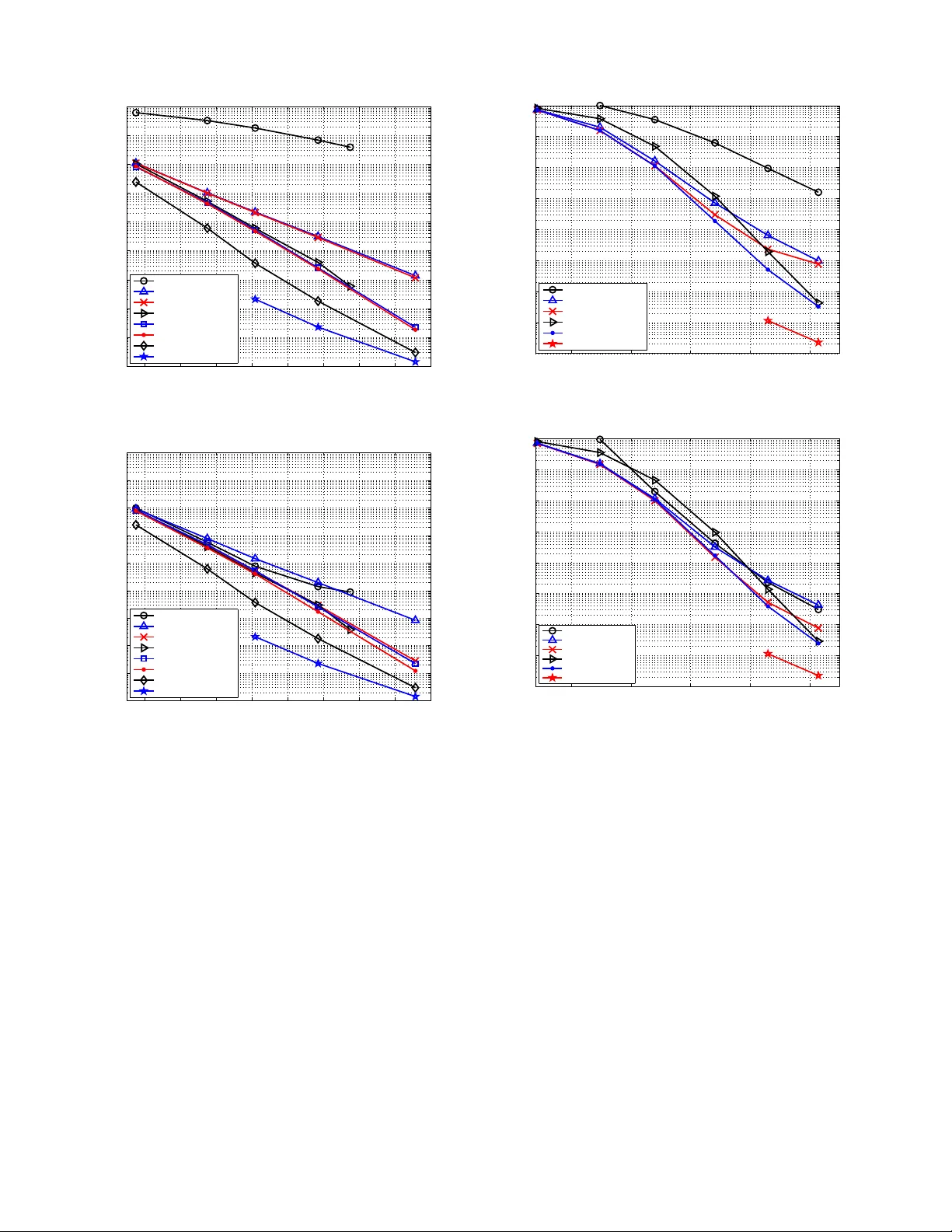

Jonathan S. Yedidia, Member, IEEE, Yige Wang, Member, IEEE, Stark C. Draper, Member, IEEE

J. S. Yedidia and Y. Wang are with Mitsubishi Electric Research Laboratories, Cambridge, MA 02139 (yedidia@merl.com; yigewang@merl.com). S. C. Draper is with the Dept. of Electrical and Computer Engineering, University of Wisconsin, Madison, WI 53706 (sdraper@ece.wisc.edu).

Abstract: The “Divide and Concur” (DC) algorithm, recently introduced by Gravel and Elser, can be considered a competitor to the belief propagation (BP) algorithm, in that both algorithms can be applied to a wide variety of constraint satisfaction, optimization, and probabilistic inference problems. We show that DC can be interpreted as a message-passing algorithm on a constraint graph, which helps make the comparison with BP clearer. The “difference-map” dynamics of the DC algorithm enables it to avoid “traps” which may be related to the “trapping sets” or “pseudo-codewords” that plague BP decoders of low-density parity-check (LDPC) codes in the error-floor regime. We investigate two decoders for LDPC codes based on these ideas. The first decoder is based directly on DC, while the second decoder borrows the important “difference-map” concept from the DC algorithm and translates it into a BP-like decoder. We show that this “difference-map belief propagation” (DMBP) decoder has dramatically improved error-floor performance compared to standard BP decoders, while maintaining a similar computational complexity. We present simulation results for LDPC codes on the additive white Gaussian noise and binary symmetric channels, comparing DC and DMBP decoders with other decoders based on BP, linear programming, and mixed-integer linear programming.

Index Terms: iterative algorithms, graphical models, LDPC decoding, projection algorithms

I. INTRODUCTION

Properly designed low-density parity-check (LDPC) codes, decoded using efficient message-passing belief propagation (BP) decoders, achieve near Shannon limit performance in the so-called “water-fall” regime where the signal-to-noise ratio (SNR) is near the code threshold [1]. Unfortunately, BP decoders of LDPC codes often suffer from “error floors” in the high SNR regime, which is a significant problem for applications that have extreme reliability requirements, including magnetic recording and fiber-optic communication systems.

There has been considerable effort in trying to find LDPC codes and decoders that have improved error floors while maintaining good water-fall behavior. In general, such work can be divided into two approaches. The first line of attack tries to construct codes or representations of codes that have improved error floors when decoded using BP. Error floors in LDPC codes using BP decoders are usually attributed to closely related phenomena that go under the names of “pseudocodewords,” “near-codewords,” “trapping sets,” “instantons,” and “absorbing sets” [2][3][4][5][6][7]. The number of these trapping sets (to choose one of these terms), and therefore the error floor performance, can be improved by removing short cycles in the code graph [8][9][10]. One can also consider special classes of LDPC codes with fewer trapping sets, such as EG-LDPC codes [11], or generalized LDPC codes [12][13].

The second approach, taken herein, is to try to improve upon the sub-optimal BP decoder.
This approach is logical because Gallager, already when he introduced regular LDPC codes, showed that they have excellent distance properties and therefore will not have error floors if decoded using optimal maximum-likelihood (ML) decoding [14].

Building on the theory of trapping sets, Han and Ryan propose a “bi-mode syndrome-erasure decoder.” This decoder can improve error floor performance given knowledge of the dominant trapping sets [15]. However, determining the dominant trapping sets of a particular code can be a challenging task. Another recently introduced improved decoder is the mixed-integer linear programming (MILP) decoder [16], which requires no information about trapping sets and approaches ML performance, but with a large decoding complexity. To deal with the complexity of the MILP decoder, a multi-stage decoder is proposed in [17], where very fast but poor-performing decoders are combined with the more powerful but much slower MILP decoder. The result is a decoder that performs as well as the MILP decoder and with a high average throughput. This multi-stage decoder nevertheless poses considerable practical difficulties for certain applications, in that it requires implementation of multiple decoders, and the worst-case throughput will be as slow as that of the MILP decoder.

Our goal in this paper is to develop decoders that perform much better in the error floor regime than BP, but with comparable complexity and no significant disadvantages. Our starting point is the iterative “Divide and Concur” (DC) algorithm recently proposed by Gravel and Elser [18] for constraint satisfaction problems. When using DC, one first describes a problem as a set of variables and local constraints on those variables. One then introduces “replicas” of the variables: one replica for each constraint a variable is involved in.
The DC algorithm then iteratively performs “divide” projections, which move the replicas to the values closest to their current values that also satisfy the local constraints, and “concur” projections, which equalize the values of the different replicas of the same variable. A key idea in the DC algorithm is to avoid local traps in the dynamics by using the so-called “Difference-Map” (DM) combination of “divide” and “concur” projections at each iteration.

Footnote 1: The use of the term “replica” in the current context should not be confused with the “replica method” for averaging over disorder in statistical physics, for a review of which we refer the reader to [19].

LDPC codes have a structure that makes them a good fit for the DC algorithm. In fact, Gravel reported on a DC decoder for LDPC codes in his Ph.D. thesis, although his simulations were very limited in scope [20]. We were curious about whether a DC decoder could be competitive with, or better than, more standard BP decoders. We were particularly motivated by the idea that the “traps” that the DC algorithm’s “Difference-Map” dynamics promises to avoid might be related to the “trapping sets” that plague BP decoders of LDPC codes.

To construct a DC decoder, we need to add an important “energy” constraint, in addition to the more obvious parity check constraints. The energy constraint enforces that the correlation between the channel observations and the desired codeword should be at least some minimum amount. The effect of this constraint is to ensure that during the decoding process the candidate solution does not wander too far from the channel observation.

We found that the DC decoder can be competitive with BP decoders, but only if many iterations are allowed. Unfortunately, DC errors are often “undetected errors” in that the decoder returns a codeword that is not the most likely one.
Failures of BP decoding, in contrast, almost always correspond to failures to converge or convergence to a non-codeword, and therefore are detectable.

We show how the DC decoder can be described as a message-passing algorithm. Using this formulation, we can see how to import the difference-map idea into a BP setting. We thus also constructed a novel decoder called the “difference-map belief propagation” (DMBP) decoder. Essentially, DMBP is a min-sum BP decoder with modified dynamics motivated by the DC decoder. Our simulations show that the DMBP decoder improves performance in the error floor regime quite significantly when compared with standard sum-product BP decoders. We present results for both the additive white Gaussian noise (AWGN) channel and the binary symmetric channel (BSC).

The rest of the paper is organized as follows. In Section II, the DC algorithm is presented, and re-formulated as a message-passing algorithm. The DC decoder for LDPC codes is described in Section III. The DMBP algorithm is introduced in Section IV. In Section V we present simulation results. Conclusions are given in Section VI.

II. DIVIDE AND CONCUR

In this section, we review Gravel and Elser’s “Divide and Concur” (DC) algorithm. Gravel and Elser did not formulate DC as a message-passing algorithm, or otherwise compare DC to BP, but the comparison is illuminating, and helped us design the DMBP decoder. Thus we present DC in a way that is consistent with Gravel and Elser’s presentation, but makes comparisons to BP easier.

We start by introducing the idea of “replicas” in Section II-A in the context of the familiar alternating projection approach to constraint satisfaction problems. In Section II-B we introduce and discuss the difference-map dynamics of DC. Then, in Section II-C we reformulate DC as a message-passing algorithm directly comparable to BP.

A. Replicas and alternating projections

Consider a system with $N$ variables and $M$ constraints on those variables. We seek a configuration of the $N$ variables such that all $M$ constraints are satisfied. For each constraint that a variable is involved in, we create one “replica” of the variable. The idea behind DC is that by constructing a dynamics of replicas rather than of variables, each constraint can be locally satisfied (the “divide” step), and then later the possibly different values of replicas of the same variable can be forced to equal each other (the “concur” step).

Denote by $\mathbf{r}_{(a)}$ the vector containing the values of all the replicas associated with the $a$th constraint, and let $\mathbf{r}_{[i]}$ be the vector of all the values of replicas associated with the $i$th variable. Let $\mathbf{r}$ be the vector containing all the values of replicas of all the variables. Now $\mathbf{r}_{(a)}$ for $a = 1, 2, \dots, M$ and $\mathbf{r}_{[i]}$ for $i = 1, 2, \dots, N$ are two different ways to partition $\mathbf{r}$ into mutually exclusive sets.

There are two projection operations, the “divide” projection and the “concur” projection, denoted by $P_D$ and $P_C$, respectively. Both projections act on $\mathbf{r}$ and output a new $\mathbf{r}$ that satisfies certain requirements. Since $\mathbf{r}$ can be partitioned into mutually exclusive sets, the projections are actually applied to each set independently.

The divide projection is a product of local divide projections $P_D^a(\mathbf{r}_{(a)})$ that operate on each $\mathbf{r}_{(a)}$ for $a = 1, 2, \dots, M$. If $\mathbf{r}_{(a)}$ satisfies the $a$th constraint, $P_D^a(\mathbf{r}_{(a)}) = \mathbf{r}_{(a)}$; otherwise, $P_D^a(\mathbf{r}_{(a)}) = \tilde{\mathbf{r}}_{(a)}$, where $\tilde{\mathbf{r}}_{(a)}$ is the closest vector to $\mathbf{r}_{(a)}$ that satisfies the $a$th constraint. The metric used is normally ordinary Euclidean distance. The divide projection forces all constraints to be satisfied, but has the effect that replicas of the same variable do not necessarily agree with one another.
The concur projection is a product of local concur projections $P_C^i(\mathbf{r}_{[i]})$ that act on $\mathbf{r}_{[i]}$ for $i = 1, 2, \dots, N$. Let $\bar{r}_{[i]}$ be the average of all the elements in $\mathbf{r}_{[i]}$ and construct a vector $\bar{\mathbf{r}}_{[i]}$ with each element equal to $\bar{r}_{[i]}$, with dimensionality the same as $\mathbf{r}_{[i]}$. Then $P_C^i(\mathbf{r}_{[i]}) = \bar{\mathbf{r}}_{[i]}$. While the concur projection equalizes the values of the replicas of the same variable, the new values of the replicas may violate some constraints.

The overall projection $P_D(\mathbf{r})$ applies $P_D^a(\cdot)$ to each $\mathbf{r}_{(a)}$ for $a = 1, 2, \dots, M$; likewise, $P_C(\mathbf{r})$ applies $P_C^i(\cdot)$ to each $\mathbf{r}_{[i]}$ for $i = 1, 2, \dots, N$. In each case the $M$ (respectively $N$) output vectors are then reassembled into the updated $\mathbf{r}$ vector through appropriate ordering.

A strategy is needed to combine these two projections to find a set of replica values such that all constraints are satisfied and all replicas of the same variable are equal. The simplest approach is to alternate the two projections, i.e., $\mathbf{r}_{t+1} = P_C(P_D(\mathbf{r}_t))$, where $\mathbf{r}_t$ is the vector of replica values at the $t$th iteration. This scheme works well for convex constraints, but for non-convex constraints it is prone to getting stuck in short cycles (“traps”) that do not correspond to solutions.

To illustrate this point, consider the situation shown in Fig. 1, where we imagine that the space of replicas of a particular variable is only two-dimensional, i.e., the variable in question participates in two constraints.

Fig. 1. A simple example of a trap in an iterated projection strategy. If one iteratively projects to the nearest point that satisfies the constraints ($A$ or $B$), and then to the nearest point where the replica values are equal (the diagonal line), one may be trapped in a short cycle ($B$ to $C$ to $B$ and so on) and never find the true solution at point $A$. (Plot of the points $A$, $B$, $C$, $D$ and the diagonal, with both axes running from −2 to 4.)
The diagonal line represents the requirement that all replicas are equal, since they are replicas of the same variable. The points $A$ and $B$ are the two pairs of replica values that satisfy the variable’s constraints. The only common value that the replicas can take that satisfies both constraints is zero, i.e., point $A$. However, if one initializes replica values near point $B$, say at $D$, and applies the divide projection, then one will move to $B$, the nearest point that satisfies the constraints. Next, the concur projection will move to point $C$, the nearest point (along the diagonal) where the replica values are equal. Continued application of divide and concur projections, in sequence, moves the system to $B$, then back to $C$, then back to $B$, and so forth. Alternating projections cause the system to be stuck in a simple trap. Of course, this is only a toy two-dimensional example, but in non-convex high-dimensional spaces it is plausible that an iterated projection strategy is prone to falling into such traps.

B. Difference Map

The difference map (DM) is a strategy that improves alternating projections by turning traps in the dynamics into repellers. It is defined by Gravel and Elser as follows:

$$\mathbf{r}_{t+1} = \mathbf{r}_t + \beta\left[P_C(f_D(\mathbf{r}_t)) - P_D(f_C(\mathbf{r}_t))\right] \tag{1}$$

where $f_s(\mathbf{r}_t) = (1 + \gamma_s) P_s(\mathbf{r}_t) - \gamma_s \mathbf{r}_t$ for $s = C$ or $D$, with $\gamma_C = -1/\beta$ and $\gamma_D = 1/\beta$. The parameter $\beta$ can be chosen to optimize performance.

We focus here exclusively on the case $\beta = 1$, which is usually an excellent choice and corresponds to what Fienup called the “hybrid input-output” algorithm, originally developed in the context of image reconstruction [21][22]. See [23] for a review of Fienup’s algorithm and other projection algorithms for image reconstruction, and their relationship with earlier convex optimization methods.
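Equation (1) translates almost line by line into code. The following is a minimal sketch rather than the authors' implementation: the function name `difference_map_step`, the use of NumPy, and the toy projections are assumptions of this example. The two allowed points are placed at $(0, 0)$ and $(3, 1)$, matching the coordinates the two-point example uses for $A$ and $B$; the divide projection snaps to the nearer of them, and the concur projection averages the two replica values.

```python
import numpy as np

def difference_map_step(r, project_concur, project_divide, beta=1.0):
    """One iteration of equation (1) for a nonzero beta."""
    gamma_c = -1.0 / beta
    gamma_d = 1.0 / beta
    # f_s(r) = (1 + gamma_s) P_s(r) - gamma_s r, for s = C and s = D
    f_c = (1.0 + gamma_c) * project_concur(r) - gamma_c * r
    f_d = (1.0 + gamma_d) * project_divide(r) - gamma_d * r
    return r + beta * (project_concur(f_d) - project_divide(f_c))

# Toy problem (coordinates are an assumption of this sketch): two replicas of
# one variable, and two replica pairs that satisfy the constraints.
ALLOWED = np.array([[0.0, 0.0], [3.0, 1.0]])

def project_divide(r):
    """Move to the nearest constraint-satisfying point (Euclidean distance)."""
    distances = np.linalg.norm(ALLOWED - r, axis=1)
    return ALLOWED[np.argmin(distances)].copy()

def project_concur(r):
    """Replace every replica value by the average of the replica values."""
    return np.full_like(r, r.mean())

r = np.array([2.0, 2.0])
r_next = difference_map_step(r, project_concur, project_divide, beta=1.0)
```

In this sketch, a point that satisfies both the constraints and the equality requirement, such as `ALLOWED[0]`, is left unchanged by `difference_map_step` for any nonzero `beta`, because both projections return the point itself.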
For $\beta = 1$, the dynamics (1) simplify to

$$\mathbf{r}_{t+1} = P_C\big(\mathbf{r}_t + 2[P_D(\mathbf{r}_t) - \mathbf{r}_t]\big) - [P_D(\mathbf{r}_t) - \mathbf{r}_t]. \tag{2}$$

It can be proved that if a fixed point $\mathbf{r}^*$ of the dynamics is reached, i.e., $\mathbf{r}_{t+1} = \mathbf{r}_t = \mathbf{r}^*$, then that fixed point must correspond to a solution of the problem. It is important to note that the fixed point itself is not necessarily a solution. The solution $\mathbf{r}^{\mathrm{sol}}$ corresponding to a fixed point $\mathbf{r}^*$ can be obtained using $\mathbf{r}^{\mathrm{sol}} = P_D(\mathbf{r}^*)$ or $\mathbf{r}^{\mathrm{sol}} = P_C(\mathbf{r}^* + 2[P_D(\mathbf{r}^*) - \mathbf{r}^*])$.

We have found it very useful to think of the difference-map dynamics for a single iteration as breaking down into a three-step process. The expression $[P_D(\mathbf{r}_t) - \mathbf{r}_t]$ represents the change to the current values of the replicas resulting from the divide projection. In the first step, the values of the replicas move twice the desired amount indicated by the divide projection. We refer to these new values of the replicas as the “overshoot” values $\mathbf{r}^{\mathrm{over}}_t = \mathbf{r}_t + 2[P_D(\mathbf{r}_t) - \mathbf{r}_t]$. Next the concur projection is applied to the overshoot values to obtain the “concurred” values of the replicas $\mathbf{r}^{\mathrm{conc}}_t = P_C(\mathbf{r}^{\mathrm{over}}_t)$. Finally the overshoot, i.e., the extra motion in the first step, is subtracted from the concur projection result to obtain the replica values for the next iteration, $\mathbf{r}_{t+1} = \mathbf{r}^{\mathrm{conc}}_t - [P_D(\mathbf{r}_t) - \mathbf{r}_t]$.

In Fig. 2 we return to our previous example and see that the DM dynamics do not get stuck in a trap. Suppose, as before, that point $A$ is at $(0, 0)$ and point $B$ is at $(3, 1)$, and that we now start initially at point $\mathbf{r}_1 = (2, 2)$. The divide projection would take us to point $B$, but the overshoot takes us twice as far, to $\mathbf{r}^{\mathrm{over}}_1 = (4, 0)$. The concur projection takes us back to $\mathbf{r}^{\mathrm{conc}}_1 = (2, 2)$. Finally, the overshoot is corrected so that $\mathbf{r}_2 = (1, 3)$. The next full iteration takes us to $\mathbf{r}_3 = (0, 4)$ (sub-steps are tabulated in Fig. 2).
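The three-step form of the $\beta = 1$ update is easy to check numerically on this two-point example. The sketch below is illustrative only: the helper names (`dm_substeps`, `run_difference_map`, `run_alternating`) and the use of NumPy are assumptions, and the iteration caps are arbitrary. It runs the difference map from $\mathbf{r}_1 = (2, 2)$ and, for contrast, plain alternating projections from the same starting point.

```python
import numpy as np

A = np.array([0.0, 0.0])  # the true solution
B = np.array([3.0, 1.0])  # satisfies the constraints, but its replicas disagree

def project_divide(r):
    """Nearest of the two constraint-satisfying points A and B."""
    return min((A, B), key=lambda p: np.linalg.norm(r - p)).copy()

def project_concur(r):
    """Set both replicas to their average (a point on the diagonal)."""
    return np.full_like(r, r.mean())

def dm_substeps(r):
    """Return (P_D(r), overshoot, concurred, next r) for beta = 1."""
    pd = project_divide(r)
    over = r + 2.0 * (pd - r)      # step 1: move twice as far as P_D asks
    conc = project_concur(over)    # step 2: concur on the overshoot values
    return pd, over, conc, conc - (pd - r)  # step 3: undo the extra motion

def run_difference_map(r, max_iter=20):
    history = [r]
    for _ in range(max_iter):
        r_next = dm_substeps(r)[3]
        if np.allclose(r_next, r):  # fixed point reached
            break
        history.append(r_next)
        r = r_next
    return history

def run_alternating(r, n_iter=6):
    """Plain alternating projections r <- P_C(P_D(r))."""
    history = [r]
    for _ in range(n_iter):
        r = project_concur(project_divide(r))
        history.append(r)
    return history

dm_path = run_difference_map(np.array([2.0, 2.0]))
alt_path = run_alternating(np.array([2.0, 2.0]))

for t, r in enumerate(dm_path, start=1):
    pd, over, conc, _ = dm_substeps(r)
    print(t, r, pd, over, conc)
print("solution read off the fixed point:", project_divide(dm_path[-1]))
print("alternating projections end at:", alt_path[-1])
```

Under these assumptions the difference map visits $(2, 2)$, $(1, 3)$, $(0, 4)$ and then stays at $(-2, 2)$, from which the divide projection returns $A$. The alternating scheme never leaves $(2, 2)$, the point on the diagonal closest to $B$.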
Now, however, we are closer to $A$ than to $B$. Therefore, the next overshoot takes us to $\mathbf{r}^{\mathrm{over}}_3 = (0, -4)$, from which we would move to $\mathbf{r}^{\mathrm{conc}}_3 = (-2, -2)$, and $\mathbf{r}_4 = \mathbf{r}^* = (-2, 2)$. Finally, at $\mathbf{r}_4$ we have reached a fixed point in the dynamics that corresponds to the solution at $A$ (which can be obtained from the final value of $P_D(\mathbf{r}_t)$ or $\mathbf{r}^{\mathrm{conc}}_t$).

We can generalize from this example to understand how the DM dynamics turns a trap into a “repeller,” where at each iteration, one moves away from the repeller by an amount equal to the distance between the constraint involved and the nearest point that satisfies the requirement that the replicas be equal. Of course, DM dynamics are not a panacea; it is possible that DC can get caught in more complicated cycles or “strange attractors” and never find an existing solution, but at least it does not get caught in simple traps.

C. DC as a message-passing algorithm

We now turn to developing an alternative interpretation of DC, as a message-passing algorithm on a graph. “Messages” and “beliefs” are similar to those in BP, but the message-update and belief-update rules are different.

To begin with, we construct a bi-partite “constraint graph” of variable nodes and constraint nodes, where each variable is connected to the constraints it is involved in. A constraint graph can be thought of as a special case of a factor graph [24], where each allowed configuration is given the same weight, and disallowed configurations are given zero weight. We identify the DC “replicas” with the edges of the graph.
We denote by $r_{[i]a}(t)$ the value of the replica on the edge joining variable $i$ to constraint $a$ at the beginning of iteration $t$, i.e., the appropriate element of $\mathbf{r}_{[i]}(t)$. We similarly denote by $r^{\mathrm{over}}_{[i]a}(t)$ and $r^{\mathrm{conc}}_{[i]a}(t)$ the “overshoot” and “concurred” values of the same replica. We note that these are all scalars.

Fig. 2. An example showing how DM dynamics avoids traps. If we start at the point $\mathbf{r}_1$, an iterated projections dynamics would be trapped between point $B$ and $\mathbf{r}_1$, and never find the solution at $A$. DM dynamics will instead be repelled from the trap and move to $\mathbf{r}_2$ (via the three sub-steps denoted with dashed lines $\mathbf{r}^{\mathrm{over}}_1$, $\mathbf{r}^{\mathrm{conc}}_1 = \mathbf{r}_1$, and $\mathbf{r}_2$), then move to $\mathbf{r}_3$, and then end at the fixed point $\mathbf{r}_4 = \mathbf{r}^*$, which corresponds to the solution at $A$. (Plot with both axes running from −2 to 4; the sub-steps are tabulated below.)

| $t$ | $\mathbf{r}_t$ | $P_D(\mathbf{r}_t)$ | $\mathbf{r}^{\mathrm{over}}_t$ | $\mathbf{r}^{\mathrm{conc}}_t$ |
|---|---|---|---|---|
| 1 | $(2, 2)$ | $(3, 1)$ | $(4, 0)$ | $(2, 2)$ |
| 2 | $(1, 3)$ | $(3, 1)$ | $(5, -1)$ | $(2, 2)$ |
| 3 | $(0, 4)$ | $(0, 0)$ | $(0, -4)$ | $(-2, -2)$ |
| 4 | $(-2, 2)$ | $(0, 0)$ | $(2, -2)$ | $(0, 0)$ |
| 5 | $(-2, 2)$ | | | |

We can alternatively think of the initial value of a replica $r_{[i]a}(t)$ as a “message” from the variable node $i$ to the constraint node $a$ that we denote as $m_{i \to a}(t)$. The set of incoming messages to constraint node $a$, $\mathbf{m}_{\to a}(t) \equiv \{m_{i \to a}(t) : i \in \mathcal{N}(a)\}$, where $\mathcal{N}(a)$ is the set of variable indexes involved in constraint $a$, can therefore be expressed as $\mathbf{m}_{\to a}(t) = \mathbf{r}_{(a)}(t)$.

In the three-step interpretation of the DM dynamics described above, these replica values are next transformed into overshoot values by moving by twice the amount indicated by the divide projection.
Because the overshoot values are computed locally at a constraint node using the messages into the constraint node, we can think of the overshoot values $r^{\mathrm{over}}_{[i]a}(t)$ as messages from the constraint node $a$ to its neighboring variable nodes $i$, denoted by $m_{a \to i}(t)$. The set of outgoing messages from constraint node $a$ is $\mathbf{m}_{a \to}(t) \equiv \{m_{a \to i}(t) : i \in \mathcal{N}(a)\}$. This set can thus be calculated as

$$\mathbf{m}_{a \to}(t) = \mathbf{r}^{\mathrm{over}}_{(a)}(t) = \mathbf{r}_{(a)}(t) + 2[P_D^a(\mathbf{r}_{(a)}(t)) - \mathbf{r}_{(a)}(t)] = \mathbf{m}_{\to a}(t) + 2[P_D^a(\mathbf{m}_{\to a}(t)) - \mathbf{m}_{\to a}(t)].$$

The next step of the DC algorithm takes the overshoot replica values $r^{\mathrm{over}}_{[i]a}(t)$ and computes concurred values $r^{\mathrm{conc}}_{[i]a}(t)$ using the concur projection. Note that the concurred values for replicas that are connected to the same variable node $i$ are all equal to each other. We can think of these concurred values as “beliefs,” denoted by $b_i(t)$. Just as in BP, the beliefs at a variable node $i$ are computed using all the messages coming into that variable node. However, while the BP belief is a sum of incoming messages, the DC belief is an average:

$$b_i(t) = P_C^i(\mathbf{r}^{\mathrm{over}}_{[i]}(t)) = \frac{1}{|\mathcal{M}(i)|} \sum_{a \in \mathcal{M}(i)} m_{a \to i}(t) \tag{3}$$

where $\mathcal{M}(i)$ is the set of constraint indexes in which variable $i$ participates.

Finally, the DC rule for computing the new replica values at the next iteration is to take the concurred values and subtract a correction for the amount we overshot when we computed the overshoot values. In terms of our belief and message formulation, we compute the outgoing messages from a variable node at the next iteration using the rule

$$m_{i \to a}(t+1) = b_i(t) - \frac{1}{2}\left[m_{a \to i}(t) - m_{i \to a}(t)\right]. \tag{4}$$

Comparing with the ordinary BP rule

$$m_{i \to a}(t+1) = b_i(t) - m_{a \to i}(t), \tag{5}$$

we note that the message out of a variable node in DC also depends on the value of the same message at the previous iteration, which is not the case in BP.

To summarize, the overall structure of BP and DC as message-passing algorithms is similar. In both, one iteratively updates beliefs at variable nodes and messages between variable nodes and constraint nodes. Furthermore, messages out of a constraint node are computed based on the messages into the constraint node, beliefs are computed based on the messages into a variable node, and the messages out of the variable node depend on the beliefs and the messages into a variable node. The differences are in the specific forms of the message-update and belief-update rules, and the fact that a message-update rule for a message out of a variable node in DC also depends on the value of the same message in the previous iteration.

III. DC DECODER FOR LDPC CODES

Decoding of LDPC codes can be described as a constraint satisfaction problem. We restrict ourselves here to binary LDPC codes, although generalizations to $q$-ary codes are straightforward. Searching for a codeword is equivalent to seeking a binary sequence which satisfies all the single-parity check (SPC) constraints simultaneously. We also add one important additional constraint, which is that the likelihood of a binary sequence must be greater than some minimum amount. Then the decoding problem can be divided into many simple sub-problems which can be solved independently using the DC approach.

Let $M$ and $N$ be the number of SPC constraints and bits of a binary LDPC code, respectively. Let $H$ be the parity check matrix which defines the code. Assume BPSK signaling with unit energy, which maps a binary codeword $\mathbf{c} = (c_1, c_2, \dots, c_N)$ into a sequence $\mathbf{x} = (x_1, x_2, \dots, x_N)$, according to $x_i = 1 - 2c_i$, for $i = 1, 2, \dots, N$. The sequence $\mathbf{x}$ is transmitted through a channel and the received channel observations are denoted $\mathbf{y} = (y_1, y_2, \dots, y_N)$. Let the log-likelihood ratios (LLRs) corresponding to the received channel observations be $\mathbf{L} = (L_1, L_2, \dots, L_N)$, where

$$L_i = \log\frac{\Pr[y_i \mid x_i = 1]}{\Pr[y_i \mid x_i = -1]}.$$

Our goal is to recover the transmitted sequence of variables $\mathbf{x}$. To do this, we will search for a sequence of $\pm 1$’s that satisfies all the SPC constraints and has the highest likelihood or, equivalently, the lowest “energy,” where the energy is defined as $E = -\sum_{i=1}^{N} L_i x_i$. Note that although our desired sequence consists only of $\pm 1$ variables, the “replica” values, or equivalently “messages” and “beliefs,” are real-valued.

In all, we have $N$ variables $x_i$, and $M + 1$ constraints, of which $M$ are SPC constraints, with one additional energy constraint. We will write the energy constraint as $-\sum_i L_i x_i \le E_{\max}$, where different choices of $E_{\max}$ result in different decoders. It is not obvious how to choose $E_{\max}$; we performed preliminary experiments to search for an $E_{\max}$ that optimizes decoding performance. Somewhat surprisingly, the best choice for $E_{\max}$ is one for which the energy constraint can never actually be satisfied: we found that $E_{\max} = -(1 + \epsilon)\sum_i |L_i|$, with $0 < \epsilon \ll 1$, was an excellent choice. The fact that the energy constraint is never satisfied is not a problem because the decoder terminates if it finds a codeword that satisfies all the SPC constraints. Until then, the effect of the energy constraint is to keep the replica values near the transmitted sequence.

We will describe the DC decoder as an iterative message-update algorithm on a constraint graph, following the formulation in Section II-C.
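The LLR and energy definitions above can be made concrete with a short numerical sketch. Everything named here is an assumption of the example rather than part of the original text: the helper names, the sample observations, the noise level `sigma`, and the value of `eps`. The two channel formulas are the standard closed forms that follow from the LLR definition for BPSK over an AWGN channel with noise standard deviation `sigma`, and for a BSC with crossover probability `p`.

```python
import numpy as np

def llr_awgn(y, sigma):
    """LLRs for BPSK (+1/-1) over AWGN with noise standard deviation sigma."""
    return 2.0 * np.asarray(y, dtype=float) / sigma**2

def llr_bsc(y, p):
    """LLRs for a BSC with crossover probability p; y holds +1/-1 values."""
    return np.asarray(y, dtype=float) * np.log((1.0 - p) / p)

def energy(x, llr):
    """E = -sum_i L_i x_i for a candidate sequence x."""
    return -float(np.dot(llr, x))

def energy_threshold(llr, eps=1e-3):
    """E_max = -(1 + eps) * sum_i |L_i|; eps is an illustrative value."""
    return -(1.0 + eps) * float(np.sum(np.abs(llr)))

y = np.array([0.9, -0.2, 1.3, 0.4])   # made-up channel observations
L = llr_awgn(y, sigma=0.8)

# The +1/-1 sequence with the lowest energy copies the sign of each LLR.
x_best = np.where(L >= 0, 1.0, -1.0)
E_min = energy(x_best, L)             # equals -sum_i |L_i|
E_max = energy_threshold(L)           # strictly below E_min when eps > 0
```

Since no $\pm 1$ sequence has energy below $-\sum_i |L_i|$, the threshold `E_max` computed here lies strictly below `E_min`, which is the sense in which no $\pm 1$ sequence can meet the energy constraint.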
We use $N$ variable indexes $i = 1, 2, \dots, N$ and $M + 1$ constraint indexes $a = 0, 1, 2, \dots, M$, where the $0$th constraint is the energy constraint. SPC constraints involve a small number of variables, but the energy constraint involves every variable. To lay the groundwork for the overall DC decoder, we now explain how to perform the divide and concur projections.

A. Divide and concur projections for LDPC decoding

The divide projection $P_D$ can be partitioned into a collection of $M + 1$ projections $P_D^a$, where each projection operates independently on a vector of messages $\mathbf{m}_{\to a}(t) \equiv \{m_{i \to a}(t) : i \in \mathcal{N}(a)\}$ and outputs a vector (of the same dimensionality) of projected messages $P_D^a(\mathbf{m}_{\to a}(t))$. The output vector is as close as possible to the original values $\mathbf{m}_{\to a}(t)$ while satisfying the $a$th constraint.

The SPC constraints require that the variables involved in a constraint are all $\pm 1$, with an even number of $-1$’s. For these constraints we efficiently perform the divide projection as follows:

- Make a hard decision $h_{ia}$ on each $m_{i \to a}(t)$ such that $h_{ia} = 1$ if $m_{i \to a}(t) > 0$, $h_{ia} = -1$ if $m_{i \to a}(t) < 0$, and $h_{ia}$ is chosen to be $1$ or $-1$ randomly if $m_{i \to a}(t) = 0$.
- Check if $\mathbf{h}_a$ contains an even number of $-1$’s. If it does, set $P_D^a(\mathbf{m}_{\to a}(t)) = \mathbf{h}_a$ and return.
- Otherwise, let $\nu = \operatorname{argmin}_i |m_{i \to a}(t)|$. Especially for the BSC, it is possible that several messages have equally minimal $|m_{i \to a}(t)|$. In this case, we randomly pick one of them and use its index as $\nu$.
- Flip $h_{\nu a}$, i.e., if $h_{\nu a} = -1$, set it to $1$, and if $h_{\nu a} = 1$, set it to $-1$. Then set $P_D^a(\mathbf{m}_{\to a}(t)) = \mathbf{h}_a$ and return.

Recall that the energy constraint is $-\sum_{i=1}^{N} x_i L_i \le E_{\max}$.
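The four-step divide projection for a single parity check listed above can be sketched as follows. This is an illustration under stated assumptions, not the authors' code: the function name `project_parity_check` and the optional `rng` argument (a NumPy random generator used for the two random tie-breaks) are hypothetical.

```python
import numpy as np

def project_parity_check(m, rng=None):
    """Nearest +1/-1 vector with an even number of -1 entries."""
    rng = np.random.default_rng() if rng is None else rng
    m = np.asarray(m, dtype=float)

    # Hard decisions; messages that are exactly zero get a random sign.
    h = np.where(m > 0, 1.0, -1.0)
    zeros = (m == 0)
    if zeros.any():
        h[zeros] = rng.choice([-1.0, 1.0], size=int(zeros.sum()))

    # Parity already satisfied: the hard decisions are the projection.
    if np.count_nonzero(h < 0) % 2 == 0:
        return h

    # Otherwise flip the least reliable position, breaking ties at random.
    smallest = np.flatnonzero(np.abs(m) == np.abs(m).min())
    nu = rng.choice(smallest)
    h[nu] = -h[nu]
    return h
```

For example, the messages `[0.9, -0.3, 0.5]` have hard decisions with a single $-1$, so the weakest message (the second) is flipped and the projection is `[1, 1, 1]`, whereas `[0.9, -0.3, -0.5]` already has even parity and projects to `[1, -1, -1]`.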
The energy constraint implies a divide projection on the vector of messages $\mathbf{m}_{\to 0}(t)$, performed as follows:

- If the energy constraint is already satisfied by the messages $\mathbf{m}_{\to 0}(t)$, return the current messages, i.e., $P_D^0(\mathbf{m}_{\to 0}(t)) = \mathbf{m}_{\to 0}(t)$. (Recall, however, that the energy constraint will never be satisfied for the choice of $E_{\max} = -(1 + \epsilon)\sum_i |L_i|$ that we use in our simulations.)
- Otherwise, find $\mathbf{h}_0$, which is the closest vector to $\mathbf{m}_{\to 0}(t)$ that satisfies the energy constraint. An easy application of vector calculus can be used to derive that the $i$th component $h_{i0}$ is given by the formula

$$h_{i0} = m_{i \to 0}(t) - \frac{L_i \left(\sum_j L_j m_{j \to 0}(t) + E_{\max}\right)}{\sum_j L_j^2}. \tag{6}$$

  Set $P_D^0(\mathbf{m}_{\to 0}(t)) = \mathbf{h}_0$ and return.

Finally, the concur projection $P_C$ can be partitioned into a set of $N$ projection operators $P_C^i$, where each $P_C^i$ operates independently on the vector of messages $\mathbf{m}_{\to i}(t) \equiv \{m_{a \to i}(t) : a \in \mathcal{M}(i)\}$ and outputs the belief $b_i(t)$, the average over the components of the vector $\mathbf{m}_{\to i}(t)$.

B. DC algorithm for LDPC decoding

The overall DC decoder proceeds as follows.

0. Initialization: Set the maximum number of iterations to $T_{\max}$ and the current iteration to $t = 1$. Initialize the messages out of variable nodes $m_{i \to a}(t = 1)$ for all $i$ and $a \in \mathcal{M}(i)$ to equal $2p_i - 1$, where $p_i$ is the a priori probability that the $i$th transmitted symbol $x_i$ was a $1$, given by $p_i \equiv \exp(L_i) / (1 + \exp(L_i))$.

1. Update messages from checks to variables: Given the messages $\mathbf{m}_{\to a}(t) \equiv \{m_{i \to a}(t) : i \in \mathcal{N}(a)\}$ into each constraint $a$, compute the messages out of each constraint $\mathbf{m}_{a \to}(t) \equiv \{m_{a \to i}(t) : i \in \mathcal{N}(a)\}$ using the overshoot formula

$$\mathbf{m}_{a \to}(t) = \mathbf{m}_{\to a}(t) + 2[P_D^a(\mathbf{m}_{\to a}(t)) - \mathbf{m}_{\to a}(t)] \tag{7}$$

   where $P_D^a(\mathbf{m}_{\to a}(t))$ is the divide projection operation for constraint $a$.

2. Update beliefs: Compute the beliefs at each variable node $i$ using the concur projections

$$b_i(t) = P_C^i(\mathbf{m}_{\to i}(t)) = \frac{1}{|\mathcal{M}(i)|} \sum_{a \in \mathcal{M}(i)} m_{a \to i}(t). \tag{8}$$

3. Check if a codeword has been found: Create $\hat{\mathbf{c}} = \{\hat{c}_i\}$ such that $\hat{c}_i = 1$ if $b_i(t) < 0$, $\hat{c}_i = 0$ if $b_i(t) > 0$, and flip a coin to decide $\hat{c}_i$ if $b_i(t) = 0$. If $H\hat{\mathbf{c}} = \mathbf{0}$, output $\hat{\mathbf{c}}$ as the decoded codeword and stop.

4. Update messages from variables to checks: Increment $t := t + 1$. If $t > T_{\max}$, stop and return FAILURE. Otherwise, update each message out of the variable nodes using the “overshoot correction” rule given in equation (4) and go back to Step 1.

As already mentioned in the introduction, the DC decoder performs reasonably well, but with some problems. We defer a detailed discussion of the DC simulation results until Section V. First we describe a second and novel decoder, the difference-map belief propagation (DMBP) decoder.

IV. DMBP DECODER

Our motivation in creating the DMBP decoder was that BP decoders generally perform well, but they seem to use something like an iterated projection strategy, and perhaps the trapping sets that plague the error-floor regime are related to the “traps” that the difference-map dynamics is supposed to ameliorate. Since we can also describe DC decoders as message-passing decoders, we could try to create a new BP decoder that was a mixture of BP and difference-map ideas.

For simplicity, we work with a min-sum BP decoder using messages and beliefs that correspond to log-likelihood ratios. Note that the min-sum message update rule is much simpler to implement in hardware than the standard sum-product rule.
Normally, sum-product (or some approximation to sum-product) BP decoders are favored over min-sum BP decoders because they perform better, but we found that the straightforward min-sum DMBP decoder will out-perform the more complicated sum-product BP decoder. Our preliminary simulations also show, somewhat surprisingly, that the min-sum DMBP decoder slightly out-performs a sum-product DMBP decoder. (We don't further discuss the sum-product DMBP decoder herein.)

We use the same notation for messages and beliefs that was used in the discussion of the DC decoder in Section III. We compare, on an intuitive level, the min-sum BP decoder with the DC decoder in terms of belief updates and message-updates at both the variable and check nodes.

Beginning with the message-updates at a check node, the standard min-sum BP update rules are to take incoming messages $m_{i\to a}(t)$ and compute outgoing messages according to the rule that

$$m_{a\to i}(t) = \left( \min_{j \in \mathcal{N}(a)\setminus i} |m_{j\to a}(t)| \right) \prod_{j \in \mathcal{N}(a)\setminus i} \mathrm{sgn}(m_{j\to a}(t)), \qquad (9)$$

where $\mathrm{sgn}(z) = z/|z|$ if $z \neq 0$, and $\mathrm{sgn}(z) = 0$ if $z = 0$. Comparing with the DC “overshoot” message-update rule, we note that the min-sum updates, in some sense, also “overshoot”. For example, at a check node that has three incoming positive messages and one incoming negative message, we obtain three outgoing negative messages and one outgoing positive message. This overshoots the “correct” solution of having an even number of negative messages (since the parity check must ultimately be connected to an even number of variables with value $-1$). Because the min-sum rule for messages outgoing towards a particular variable ignores the incoming message from that variable, all the outgoing messages move beyond what is necessary (at least in terms of sign) to satisfy the constraint.
Since we want an overshoot, we decided to leave this rule unmodified.

Turning to the belief update rule, the standard BP rule is to compute the belief as the sum of incoming messages (including the message from the observation), while the DC rule is that the belief is the average of incoming messages. We decided to use the compromise rule

$$b_i(t) = Z \left( L_i + \sum_{a \in \mathcal{M}(i)} m_{a\to i}(t) \right) \qquad (10)$$

where $Z$ is a parameter chosen by optimizing decoder performance.

Finally, for the message-update rule for messages at the variable nodes, we directly copy the “correction” rule from DC. Our intuitive idea is that perhaps standard BP is missing the correction that is important in repelling DM dynamics from traps.

To summarize, the DMBP decoder works as follows:

0. Initialization: Set the maximum number of iterations to $T_{\max}$ and the current iteration to $t = 1$. Initialize the messages out of variable nodes $m_{i\to a}(t=1)$ for all $i$ and $a \in \mathcal{M}(i)$ to equal $L_i$.
1. Update messages from checks to variables: Given the messages $m_{i\to a}(t)$ coming into the constraint node $a$, compute the outgoing messages using the min-sum message update rule given in equation (9).
2. Update beliefs: Compute the beliefs at each variable node $i$ using the belief update rule given in equation (10).
3. Check if codeword has been found: Create $\hat{c} = \{\hat{c}_i\}$ such that $\hat{c}_i = 1$ if $b_i(t) < 0$, $\hat{c}_i = 0$ if $b_i(t) > 0$, and flip a coin to decide $\hat{c}_i$ if $b_i(t) = 0$. If $H\hat{c} = 0$, output $\hat{c}$ as the decoded codeword and stop.
4. Update messages from variables to checks: Increment $t := t + 1$. If $t > T_{\max}$, stop and return FAILURE. Otherwise, update each message out of the variable nodes using the “overshoot correction” rule given in equation (4) and go back to Step 1.

V. SIMULATION RESULTS

In this section, we compare simulation results of the DC and DMBP decoders to those of a variety of other decoders.
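
Before turning to the results, the five DMBP steps listed at the end of Section IV can be sketched in Python. This is an illustration under stated assumptions, not the implementation used for the simulations. Equation (4) is not reproduced in this section, so the code assumes the correction rule has the form $m_{i\to a}(t+1) = b_i(t) - \tfrac{1}{2}[m_{a\to i}(t) - m_{i\to a}(t)]$. It also assumes that $Z$ in equation (10) multiplies the whole sum $L_i + \sum_a m_{a\to i}(t)$, and that every check has at least two neighbours. All names (`dmbp_decode`, `m_va`, `m_av`) are hypothetical.

```python
import random


def sgn(z):
    """Sign convention of equation (9)."""
    return 0.0 if z == 0 else (1.0 if z > 0 else -1.0)


def dmbp_decode(H, L, Z, T_max, rng=random):
    """Sketch of the DMBP decoder. H is a list of 0/1 rows, L the channel LLRs.

    Returns the decoded codeword as a list of bits, or None on failure.
    """
    num_checks, num_vars = len(H), len(H[0])
    N = [[i for i in range(num_vars) if H[a][i]] for a in range(num_checks)]
    M = [[a for a in range(num_checks) if H[a][i]] for i in range(num_vars)]

    # Step 0: messages out of variable nodes start at the channel LLRs.
    m_va = {(i, a): float(L[i]) for i in range(num_vars) for a in M[i]}

    for _ in range(T_max):
        # Step 1: min-sum check-to-variable update, equation (9).
        m_av = {}
        for a in range(num_checks):
            for i in N[a]:
                others = [m_va[(j, a)] for j in N[a] if j != i]
                sign = 1.0
                for x in others:
                    sign *= sgn(x)
                m_av[(a, i)] = sign * min(abs(x) for x in others)

        # Step 2: beliefs, equation (10), with Z scaling the whole sum (assumed).
        b = [Z * (L[i] + sum(m_av[(a, i)] for a in M[i]))
             for i in range(num_vars)]

        # Step 3: hard decision (coin flip on ties) and parity check.
        c_hat = [1 if bi < 0 else 0 if bi > 0 else rng.randint(0, 1) for bi in b]
        if all(sum(c_hat[i] for i in N[a]) % 2 == 0 for a in range(num_checks)):
            return c_hat

        # Step 4: assumed form of the "overshoot correction" of equation (4).
        m_va = {(i, a): b[i] - 0.5 * (m_av[(a, i)] - m_va[(i, a)])
                for (i, a) in m_va}

    return None  # FAILURE after T_max iterations


# Toy usage on a (7,4) Hamming parity-check matrix (not a code from the paper).
H = [[1, 1, 0, 1, 1, 0, 0],
     [1, 0, 1, 1, 0, 1, 0],
     [0, 1, 1, 1, 0, 0, 1]]
decoded = dmbp_decode(H, [2.0, 2.0, 2.0, 2.0, 2.0, 2.0, -0.5], Z=0.35, T_max=50)
```

In this toy run the last LLR has the wrong sign for the all-zeros codeword, and the sketch recovers the all-zeros word in the first iteration. The value `Z=0.35` is borrowed from the random-code setting reported in this section purely for illustration; nothing here indicates it is a good choice for the toy code.
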
The decoding algorithms are applied to two kinds of LDPC codes and simulated over both the BSC and the AWGN channel. One code is a random regular LDPC code with length 1057 and rate 0.77, obtained from [25]. The other code is a quasi-cyclic (QC) “array” LDPC code [26][6] with length 2209 and rate 0.916.

The first point of comparison of our proposed decoders is to sum-product BP decoding. When simulating transmission over the BSC, in order to better probe the error floor region, we implement the multistage decoder introduced in [17]. Multistage decoders prepend simpler decoders (in our case Richardson & Urbanke's Algorithm-E [27] and/or regular sum-product BP) to the more complex decoders of interest (e.g., DC). The simpler decoders either decode or fail to decode in a detectable way (e.g., by not converging in BP's case). Failures to decode trigger the use of the more complex decoders. In this way one can often achieve the WER performance of the most complex decoder at an expected complexity close to that of the simplest decoder. Our first use of the multistage approach in this paper is to calculate the performance of sum-product BP decoding for the BSC. We implement a multistage decoder that combines a first-stage Algorithm-E with a second-stage sum-product BP. We term the combination E-BP. For the sum-product BP simulations of the AWGN channel we implement a standard sum-product BP decoder (and not a multistage decoder), as we have found that Algorithm-E has very poor performance on the AWGN channel and thus does not appreciably reduce simulation time.

For DC and DMBP we provide results for standard (single-stage) implementations of both algorithms as well as for multi-stage implementations.
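
The multistage idea described above can be sketched as a simple fall-through over decoders. The Python below is an illustration only: it assumes each stage is a callable that returns a codeword on success and `None` on a detectable failure, and the name `multistage_decode` and the stand-in stages are hypothetical rather than taken from [17].

```python
def multistage_decode(stages, received):
    """Try each decoder in order, cheapest first.

    stages: list of callables, each returning a codeword or None on a
    detectable failure. Returns (codeword, stage_index), or (None, None)
    if every stage fails.
    """
    for index, decode in enumerate(stages):
        codeword = decode(received)
        if codeword is not None:
            return codeword, index
    return None, None


# Stand-in stages: the first always reports failure, the second succeeds.
def always_fails(received):
    return None


def always_zero(received):
    return [0] * len(received)


result = multistage_decode([always_fails, always_zero], [0.3, -1.2, 0.8])
print(result)  # ([0, 0, 0], 1)
```

Because later stages run only on blocks where earlier stages fail detectably, the expected cost is dominated by the first stage whenever such failures are rare.
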
As per the discussion above, we use E-BP as the initial stages for simulations over the BSC and BP by itself as a first stage for simulations of the AWGN channel. We denote the resulting multi-stage decoders by E-BP-DMBP, E-BP-DC, BP-DMBP and BP-DC.

Our final points of comparison are to linear programming (LP) decoding and mixed-integer LP (MILP) decoding. Our LP decoders were accelerated using Taghavi and Siegel's “adaptive” methods [28], and ultimately relied on the simplex algorithm as implemented in the GLPK linear programming library [29]. For the BSC, we implement the multistage decoders E-BP-LP and E-BP-MILP($l$) for $l = 10$, where $l$ is the maximum number of integer (in fact binary) constraints the MILP decoder is allowed. Further details of these decoders and results can be found in [17].

Regarding the decoding parameters of our new algorithms, for the random LDPC code, we use $Z = 0.35$ for the DMBP decoder over both the BSC and the AWGN channel. For the array code, we use $Z = 0.405$ over the BSC and $Z = 0.445$ over the AWGN channel.

Finally, we are often able to estimate a lower bound on the word error rate (WER) of ML decoding. When our decoders return a codeword that is different from the transmitted codeword, but has a higher probability, we know that an optimal ML decoder would also have made a decoding “error.” The proportion of such events provides an estimated lower bound on ML performance. (The true ML WER could be above the lower bound because an ML decoder may also make errors on blocks for which our decoder fails to converge, events that our estimate assumes ML would decode correctly.)

Figure 3 plots the word error rates of the various algorithms for the length-1057 random LDPC code when transmitted over the BSC.
We plot WER versus SNR, assuming that the BSC results from hard-decision demodulation of a BPSK $\pm 1$ sequence transmitted over an AWGN channel. The resulting relation between the crossover probability $p$ of the equivalent BSC-$p$ and the SNR of the AWGN channel is $p = Q\left(\sqrt{2R \cdot 10^{\mathrm{SNR}/10}}\right)$, where $R$ is the rate of the code and $Q(\cdot)$ is the Q-function.

In Figure 3(a) we plot results when all iterative algorithms are limited to $T_{\max} = 50$ iterations, and in Figure 3(b) to $T_{\max} = 300$ iterations. We observe that E-BP-DMBP improves the error floor performance dramatically compared with E-BP (E-BP-DC also improves significantly compared with E-BP if one allows for 300 iterations) and in the high SNR region E-BP-DMBP with 50 iterations is very close to the estimated lower bound of the maximum likelihood (ML) decoder. Note also that a pure DMBP decoder has almost the same performance as E-BP-DMBP for both 50 and 300 iterations, so the E-BP-DMBP performance in the very high SNR regime should be indicative of the pure DMBP performance.

Fig. 3. Error performance comparisons for a length-1057, rate-0.77 random LDPC code over the BSC: (a) results when $T_{\max} = 50$ iterations; (b) results when $T_{\max} = 300$ iterations. Both panels plot WER against Eb/N0 (dB) for DC, E-BP, E-BP-DC, DMBP, E-BP-DMBP, E-BP-LP, E-BP-MILP(10), and the estimated ML lower bound.

From Figure 3, we also observe that the pure DC decoder needs many more iterations to obtain good performance compared with both BP and DMBP. For 300 iterations, DC performs better than E-BP at lower SNR, but exhibits an apparent error floor as the SNR increases.
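
The relation between SNR and BSC crossover probability quoted earlier in this section can be evaluated numerically. The Python below is a minimal sketch that uses the standard identity $Q(x) = \tfrac{1}{2}\,\mathrm{erfc}(x/\sqrt{2})$; the function names are ours, and the SNR argument is assumed to be the dB value used on the horizontal axis of the plots.

```python
import math


def q_function(x):
    """Gaussian tail probability Q(x) = 0.5 * erfc(x / sqrt(2))."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def bsc_crossover(snr_db, rate):
    """Crossover probability p = Q(sqrt(2 * R * 10^(SNR/10)))."""
    return q_function(math.sqrt(2.0 * rate * 10.0 ** (snr_db / 10.0)))


# Crossover probabilities for the rate-0.77 code at two illustrative SNRs.
p_low = bsc_crossover(5.0, 0.77)
p_high = bsc_crossover(7.0, 0.77)
```

As expected, the crossover probability falls as the SNR rises, so `p_high` is smaller than `p_low`.
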
The high error floor of the DC decoder is mostly the result of the DC decoder returning a codeword with lower probability than the transmitted codeword. For example, for an SNR of 6.60 dB, 80% of DC errors are of this type, while for an SNR of 7.31 dB, the percentage rises to 98%. In contrast, the BP and DMBP decoders essentially never make this kind of error.

Notice that E-BP-LP has a very similar performance to DMBP, and also that E-BP-MILP with 10 fixed bits performs the best among all the decoders and almost approaches the estimated ML lower bound. However, DMBP decoders should be significantly more practical to construct in hardware, because they are message-passing decoders similar to existing BP decoders, while LP and MILP decoders do not currently have efficient and hardware-friendly message-passing implementations.

Fig. 4. Error performance comparisons for a length-2209, rate-0.916 array LDPC code over the BSC: (a) results when $T_{\max} = 50$ iterations; (b) results when $T_{\max} = 300$ iterations. Both panels plot WER against Eb/N0 (dB) for DC, E-BP, E-BP-DC, DMBP, E-BP-LP, E-BP-DMBP, E-BP-MILP(10), and the estimated ML lower bound.

Figure 4 depicts the WER performance comparison of the length-2209 array LDPC code over the BSC. For this QC-LDPC code, we observe broadly similar performance to the random LDPC code.

Figure 5 shows the WER performance comparison of the length-1057 random LDPC code over the AWGN channel. We observe that the BP decoder for this code exhibits an error floor. DMBP improves the error floor performance compared with BP and does not have an apparent error floor.
When 200 iterations are used, the DC decoder has a similar performance to BP. In the high SNR region, the DC decoder does not converge to an incorrect codeword as frequently as it does over the BSC. Note also that on the AWGN channel, while the DMBP decoder outperforms BP in the error-floor regime, it actually starts out worse in the low SNR regime.

Fig. 5. Error performance comparisons for a length-1057, rate-0.77 random LDPC code over the AWGN channel: (a) results when $T_{\max} = 50$ iterations; (b) results when $T_{\max} = 200$ iterations. Both panels plot WER against Eb/N0 (dB) for DC, BP, BP-DC, DMBP, BP-DMBP, and the estimated ML lower bound.

Figure 6 depicts the WER performance comparison of the length-2209 array LDPC code over the AWGN channel. For this QC-LDPC code, we observe similar performance to the random LDPC code. Note again that while all decoders benefit from additional allowed iterations, the DC decoder in particular becomes increasingly competitive as the number of allowed iterations increases.

Fig. 6. Error performance comparisons for a length-2209, rate-0.916 array LDPC code over the AWGN channel: (a) results when $T_{\max} = 50$ iterations, for DC, BP, BP-DC, DMBP, and BP-DMBP; (b) results when $T_{\max} = 200$ or 500 iterations, for DC (200 and 500 iterations) and BP, BP-DC, DMBP, and BP-DMBP (200 iterations each). Both panels plot WER against Eb/N0 (dB).

Our basic motivation for the DC and DMBP decoders was that the difference-map dynamics may help a decoder avoid dynamical “traps” that could be related to the trapping sets that are believed to cause error floors. The very good performance of the DMBP decoder in the error floor regime indicates that there may in fact be a reduction in the number of trapping sets, but on the other hand, some trapping sets clearly continue to exist, even for the DMBP decoder. In particular, we followed the approach of [6] and performed some preliminary investigations of individual “absorbing sets” in the array code that they studied, and found that although the DMBP decoder performed better on average than the BP decoder, it still would not escape if started sufficiently close to particular difficult absorbing sets.

VI. CONCLUSION

In this paper, we investigate two decoders for LDPC codes: a DC decoder that directly applies the divide and concur approach to decoding LDPC codes, and a DMBP decoder that imports the difference-map idea into a min-sum BP-type decoder. The DMBP decoder shows particularly promising improvements in error-floor performance compared with the standard sum-product BP decoder, with comparable computational complexity, and is amenable to hardware implementation.

The DMBP decoder can be criticized for lacking a solid theoretical basis: it was constructed using intuitive ideas and is mostly interesting because of its excellent performance. The fact that its performance closely parallels that of linear programming decoders suggests that it might be related to them. In fact, our work was partially motivated by our earlier results which showed that LP decoders can significantly improve upon BP performance in the error floor regime [17]; we aimed to develop a message-passing decoder that could reproduce LP performance with complexity similar to BP.
Work in the direction of creating an efficient message-passing linear programming decoder that could replace LP solvers that relied on simplex or interior point methods was begun by Vontobel and Koetter [30], and message-passing algorithms that converge to an LP solution for some problems were suggested by Globerson and Jaakkola [31]. Our DMBP update equations are quite similar to those in the GEMPLP algorithm suggested by Globerson and Jaakkola, but our limited experiments with a GEMPLP decoder show that it does not reproduce LP decoding performance. For that matter, we have been unable to devise any other message-passing decoder with complexity similar to BP that exactly reproduces linear programming decoding. Elucidating the precise relationship between DMBP and LP decoders remains an outstanding theoretical problem, but from the practical point of view, our results show that the DMBP decoder already serves as an efficient message-passing decoder that significantly improves error floor performance compared with standard BP.

REFERENCES

[1] T. Richardson and R. Urbanke, Modern Coding Theory. Cambridge University Press, 2008.
[2] B. J. Frey, R. Koetter, and A. Vardy, “Signal-space characterization of iterative decoding,” IEEE Trans. Inform. Theory, vol. 47, Feb. 2001, pp. 766–781.
[3] P. O. Vontobel and R. Koetter, “Graph-cover decoding and finite-length analysis of message-passing iterative decoding of LDPC codes,” to appear in IEEE Trans. Inform. Theory. http://www.arxiv.org/abs/cs.IT/0512078.
[4] D. MacKay and M. Postol, “Weaknesses of Margulis and Ramanujan-Margulis low-density parity-check codes,” Electronic Notes in Theoretical Computer Science, vol. 74, 2003.
[5] T. Richardson, “Error floors of LDPC codes,” Proc. 41st Allerton Conf. on Communications, Control, and Computing, Allerton House, Monticello, IL, Oct. 2003.
[6] L. Dolecek, P. Lee, Z. Zhang, V. Anantharam, B. Nikolic, and M. J. Wainwright, “Predicting error floors of structured LDPC codes: deterministic bounds and estimates,” to appear in IEEE Jour. Sel. Areas in Comm., 2009.
[7] See www.hpl.hp.com/personal/Pascal_Vontobel/pseudocodewords/papers/ for a collection of papers on pseudocodewords and related ideas.
[8] X.-Y. Hu, E. Eleftheriou, and D. M. Arnold, “Regular and irregular progressive edge-growth Tanner graphs,” IEEE Trans. Inform. Theory, vol. 51, no. 1, Jan. 2005, pp. 386–398.
[9] T. Tian, C. Jones, J. D. Villasenor, and R. D. Wesel, “Construction of irregular LDPC codes with low error floors,” IEEE International Conference on Communications, vol. 5, Anchorage, AK, May 2003, pp. 3125–3129.
[10] Y. Wang, J. S. Yedidia, S. C. Draper, “Construction of high-girth QC-LDPC codes,” Fifth International Symposium on Turbo Codes and Related Topics, 2008. Available online at http://www.merl.com/publications/TR2008-061/.
[11] J. Zhang, J. S. Yedidia, M. P. C. Fossorier, “Low-latency decoding of EG LDPC codes,” Journal of Lightwave Technology, vol. 25, Sept. 2007, pp. 2879–2886.
[12] R. M. Tanner, “A recursive approach to low complexity codes,” IEEE Trans. Inform. Theory, vol. 27, Sept. 1981, pp. 533–547.
[13] Y. Wang and M. Fossorier, “Doubly generalized LDPC codes,” IEEE Int. Symp. on Inform. Theory, Seattle, WA, Jul. 2006, pp. 669–673.
[14] R. G. Gallager, Low-Density Parity-Check Codes. Cambridge, MA: M.I.T. Press, 1963.
[15] Y. Han and W. E. Ryan, “Low-floor decoders for LDPC codes,” Forty-Fifth Annual Allerton Conference, Allerton, IL, Sept. 2007, pp. 473–479.
[16] S. C. Draper, J. S. Yedidia, and Y. Wang, “ML decoding via mixed-integer adaptive linear programming,” Proc. IEEE Int. Symp. Information Theory, Nice, France, Jun. 2007, pp. 1656–1660.
[17] Y. Wang, J. S. Yedidia, and S. C. Draper, “Multi-stage decoding of LDPC codes,” Proc. IEEE Int. Symp. Information Theory, Seoul, Korea, Jun. 2009, pp. 2151–2155.
[18] S. Gravel and V. Elser, “Divide and concur: a general approach to constraint satisfaction,” Phys. Rev. E 78, 2008, p. 036706. Available online at .
[19] M. Mézard and A. Montanari, Information, Physics, and Computation, Oxford University Press, 2009. Chapter 8.
[20] S. Gravel, Using Symmetries to Solve Asymmetric Problems, Ph.D. Thesis, Cornell University, 2009. See section 5.4.
[21] J. R. Fienup, “Phase retrieval algorithms: a comparison,” Applied Optics, vol. 21, 1982, pp. 2758–2769.
[22] V. Elser, I. Rankenburg, and P. Thibault, “Searching with iterated maps,” Proc. Nat. Acad. Sci. USA, vol. 104, 2007, pp. 418–423.
[23] H. H. Bauschke, P. L. Combettes, and D. R. Luke, “Phase retrieval, error reduction algorithm, and Fienup variants: a view from convex optimization,” J. Op. Soc. Am. A, vol. 19, July 2002, pp. 1334–1345.
[24] F. R. Kschischang, B. J. Frey, and H.-A. Loeliger, “Factor graphs and the sum-product algorithm,” IEEE Trans. on Info. Theory, vol. 47, Feb. 2001, pp. 498–519.
[25] “Encyclopedia of Sparse Graph Codes,” available online at http://www.inference.phy.cam.ac.uk/mackay/codes/data.html. Code 1057.244.3.457.
[26] J. L. Fan, “Array codes as low-density parity-check codes,” Proc. 2nd Int. Symp. Turbo Codes, Brest, France, Sept. 2000, pp. 545–546.
[27] T. J. Richardson and R. Urbanke, “The capacity of low-density parity-check codes under message-passing decoding,” IEEE Trans. on Info. Theory, vol. 47, Feb. 2001, pp. 599–618.
[28] M.-H. N. Taghavi and P. H. Siegel, “Adaptive methods for linear programming decoding,” IEEE Trans. Inform. Theory, vol. 54, Dec. 2008, pp. 5396–5410.
[29] “GNU Linear Programming Kit,” http://www.gnu.org/software/glpk.
[30] P. Vontobel and R. Koetter, “Towards low-complexity linear-programming decoding,” Proc. 4th Int. Symposium on Turbo Codes and Related Topics, Munich, Germany, 2006.
[31] A. Globerson and T. Jaakkola, “Fixing max-product: convergent message passing algorithms for MAP LP-relaxations,” Advances in Neural Information Processing Systems 20, Vancouver, Canada, 2007.
