High-dimensional additive modeling

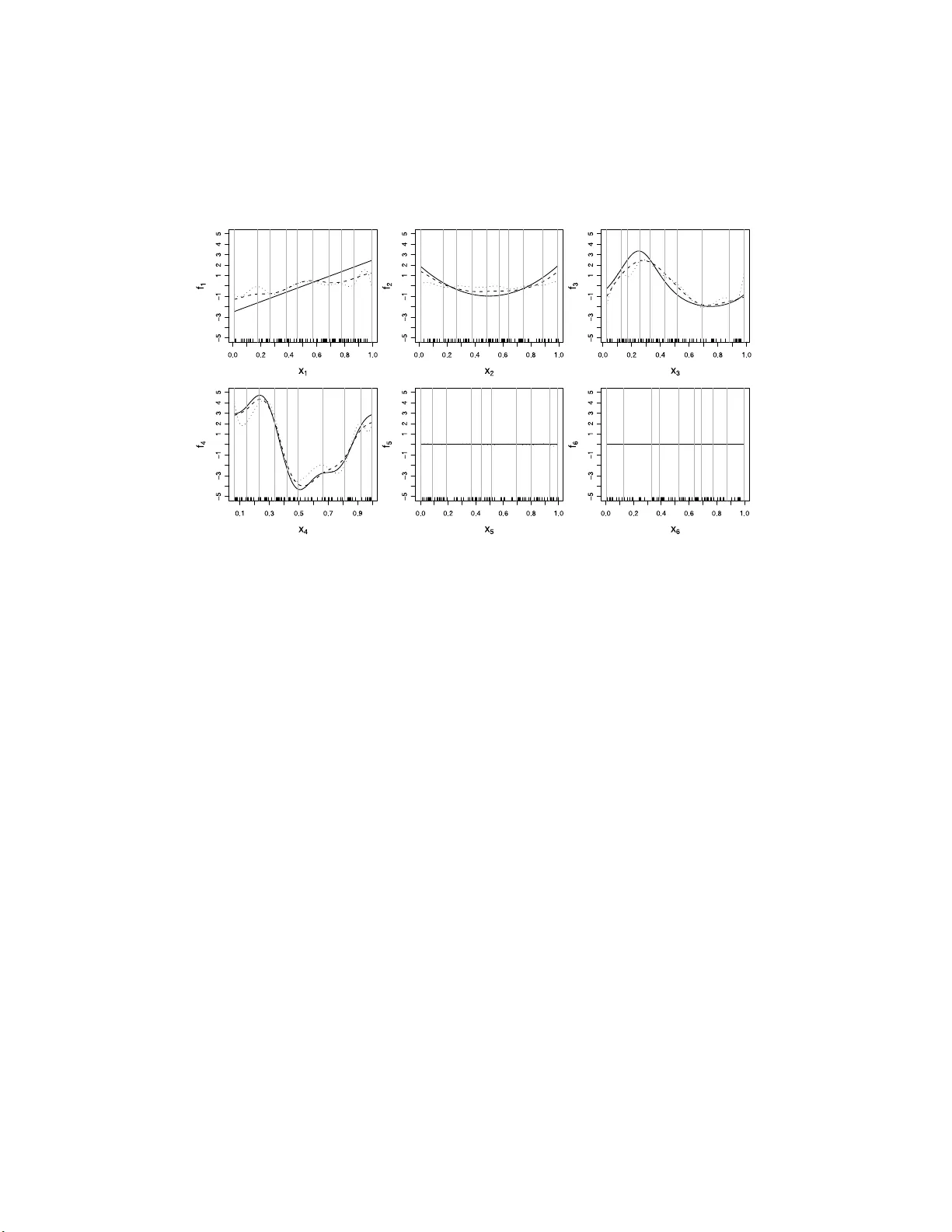

We propose a new sparsity-smoothness penalty for high-dimensional generalized additive models. The combination of sparsity and smoothness is crucial for mathematical theory as well as performance for finite-sample data. We present a computationally e…

Authors: Lukas Meier, Sara van de Geer, Peter Bühlmann