Decoding Beta-Decay Systematics: A Global Statistical Model for Beta^- Halflives

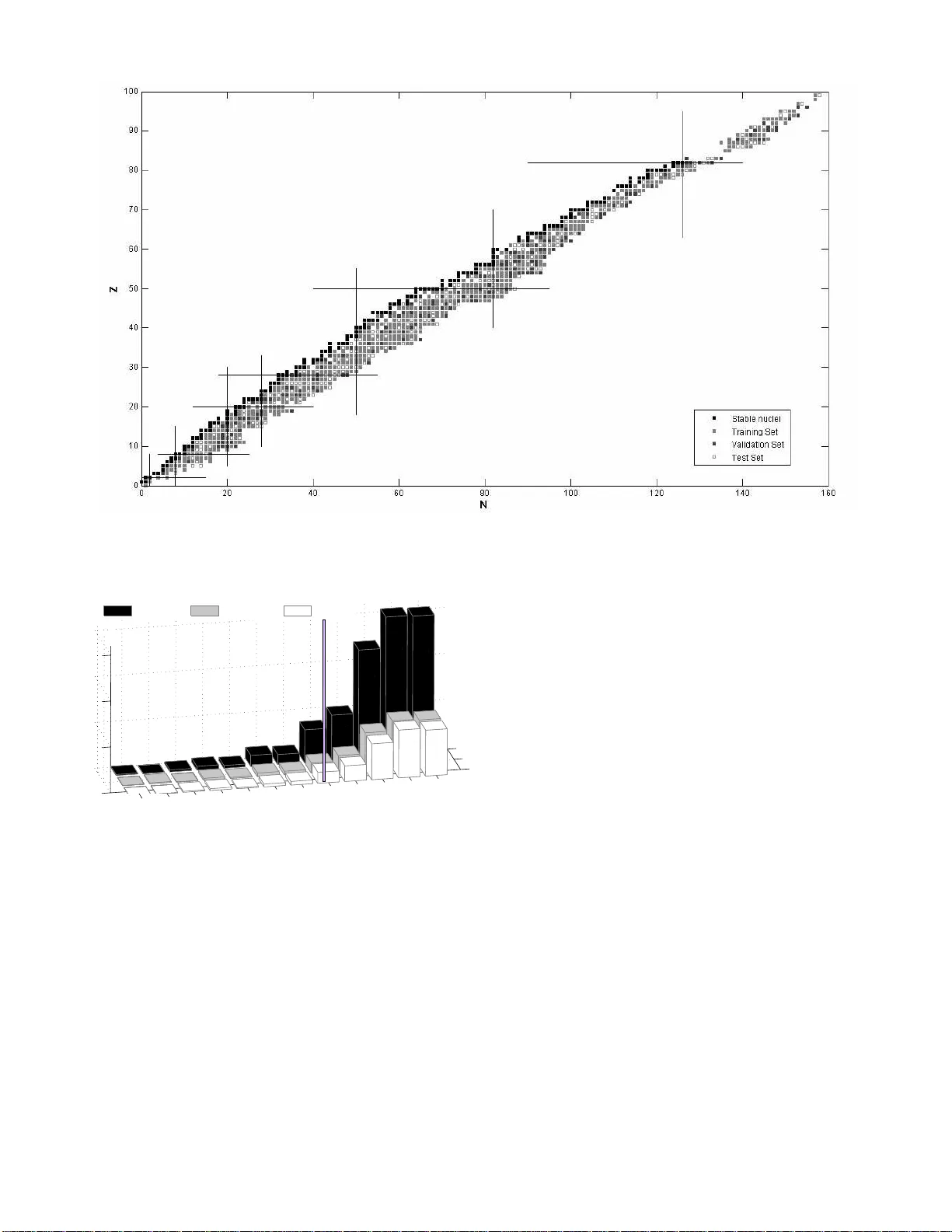

Statistical modeling of nuclear data provides a novel approach to nuclear systematics that complements established theoretical and phenomenological approaches based on quantum theory. Continuing previous studies in which global statistical modeling i…

Authors: N. J. Costiris, E. Mavrommatis, K. A. Gernoth