The nested Chinese restaurant process and Bayesian nonparametric inference of topic hierarchies

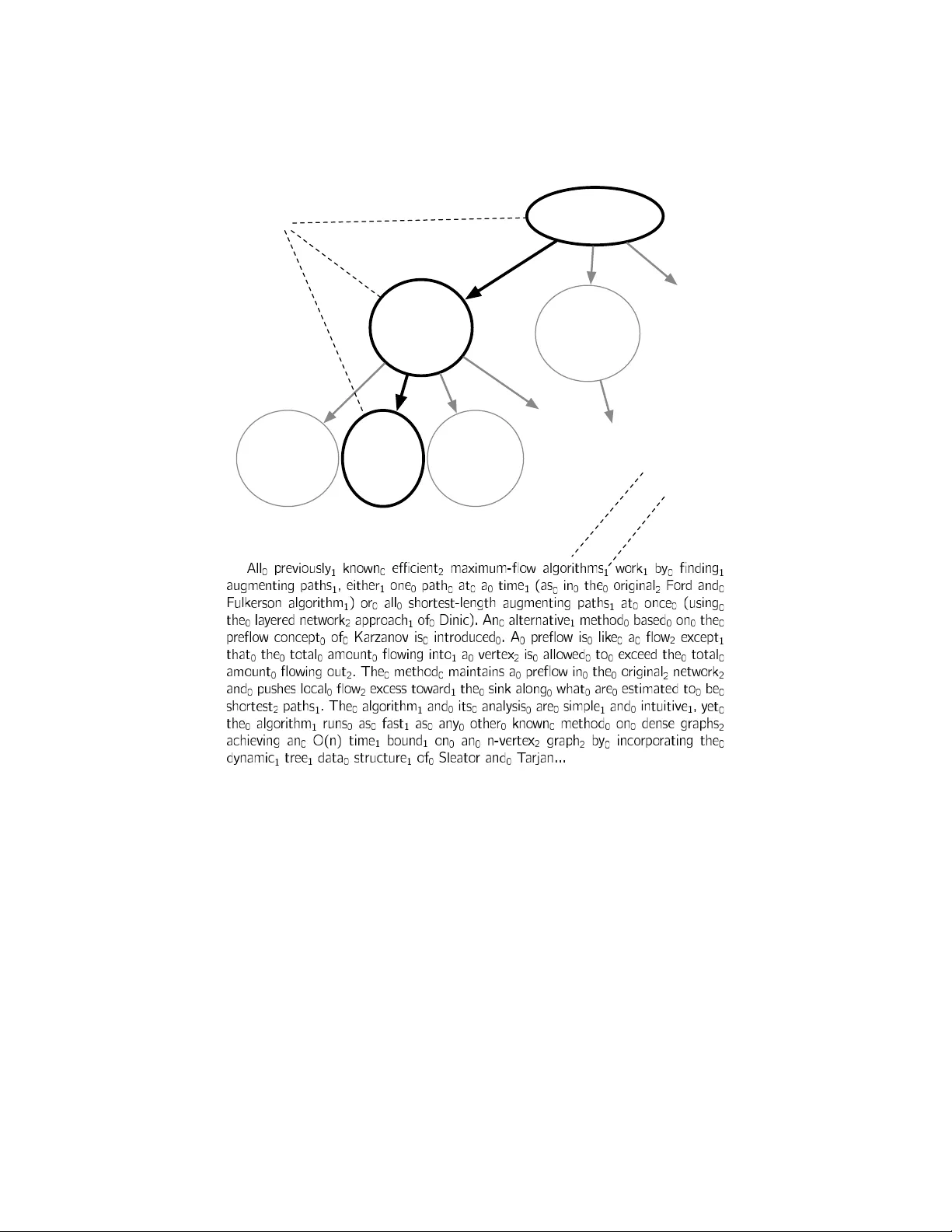

We present the nested Chinese restaurant process (nCRP), a stochastic process which assigns probability distributions to infinitely-deep, infinitely-branching trees. We show how this stochastic process can be used as a prior distribution in a Bayesia…

**Authors:**

- **David M. Blei** (Princeton University)
- **Thomas L. Griffiths** (University of California, Berkeley)
- **Michael I. Jordan** (University of California, Berkeley)
- **John Lafferty** (University of Chicago) *(listed as a co-author in some versions)*

*(The co-author list may vary slightly depending on the version of the paper.)*

---

### **