Adaptive design and analysis of supercomputer experiments

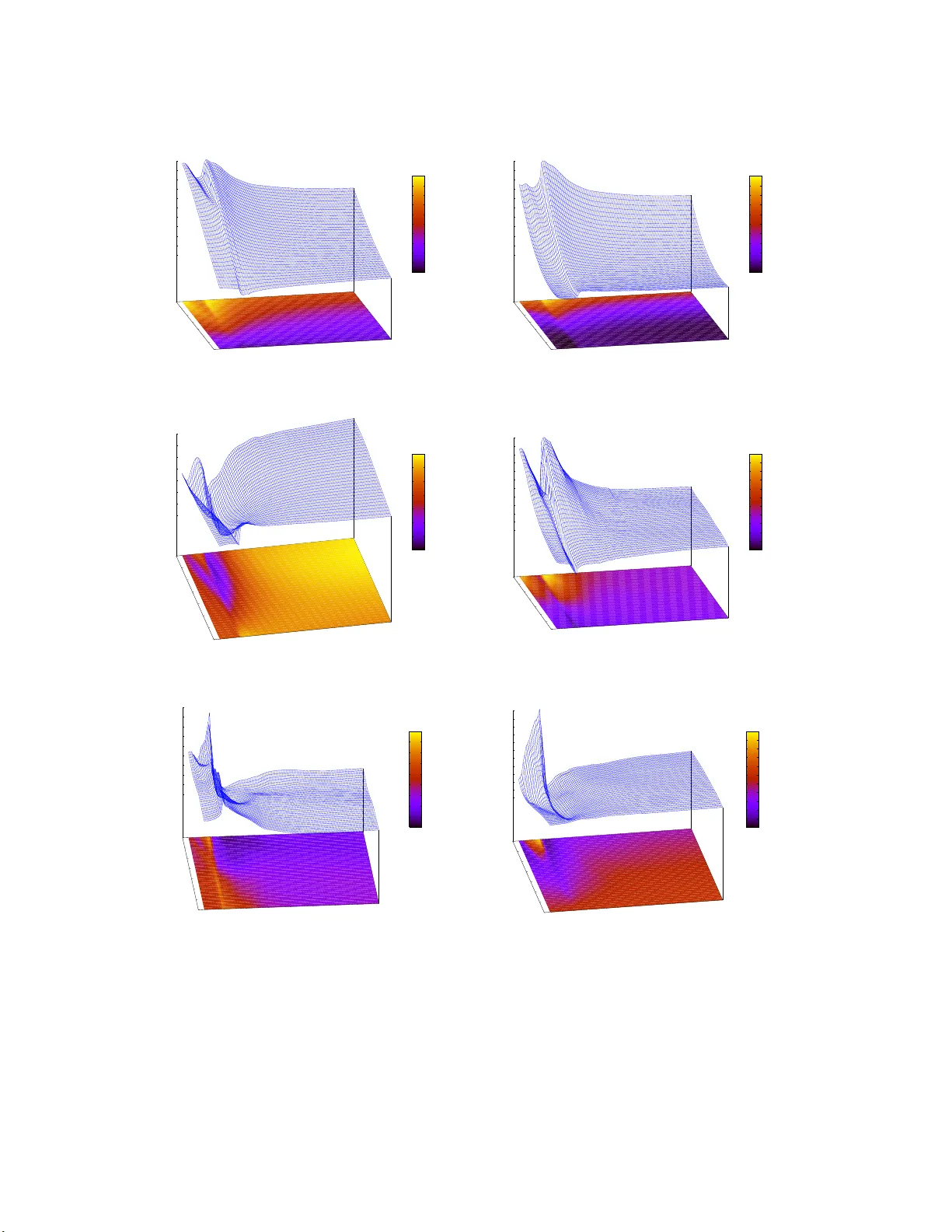

Computer experiments are often performed to allow modeling of a response surface of a physical experiment that can be too costly or difficult to run except using a simulator. Running the experiment over a dense grid can be prohibitively expensive, ye…

Authors: Robert B. Gramacy, Herbert K. H. Lee