On the Distribution of the Adaptive LASSO Estimator

We study the distribution of the adaptive LASSO estimator (Zou (2006)) in finite samples as well as in the large-sample limit. The large-sample distributions are derived both for the case where the adaptive LASSO estimator is tuned to perform conserv…

Authors: Benedikt M. Pötscher, Ulrike Schneider

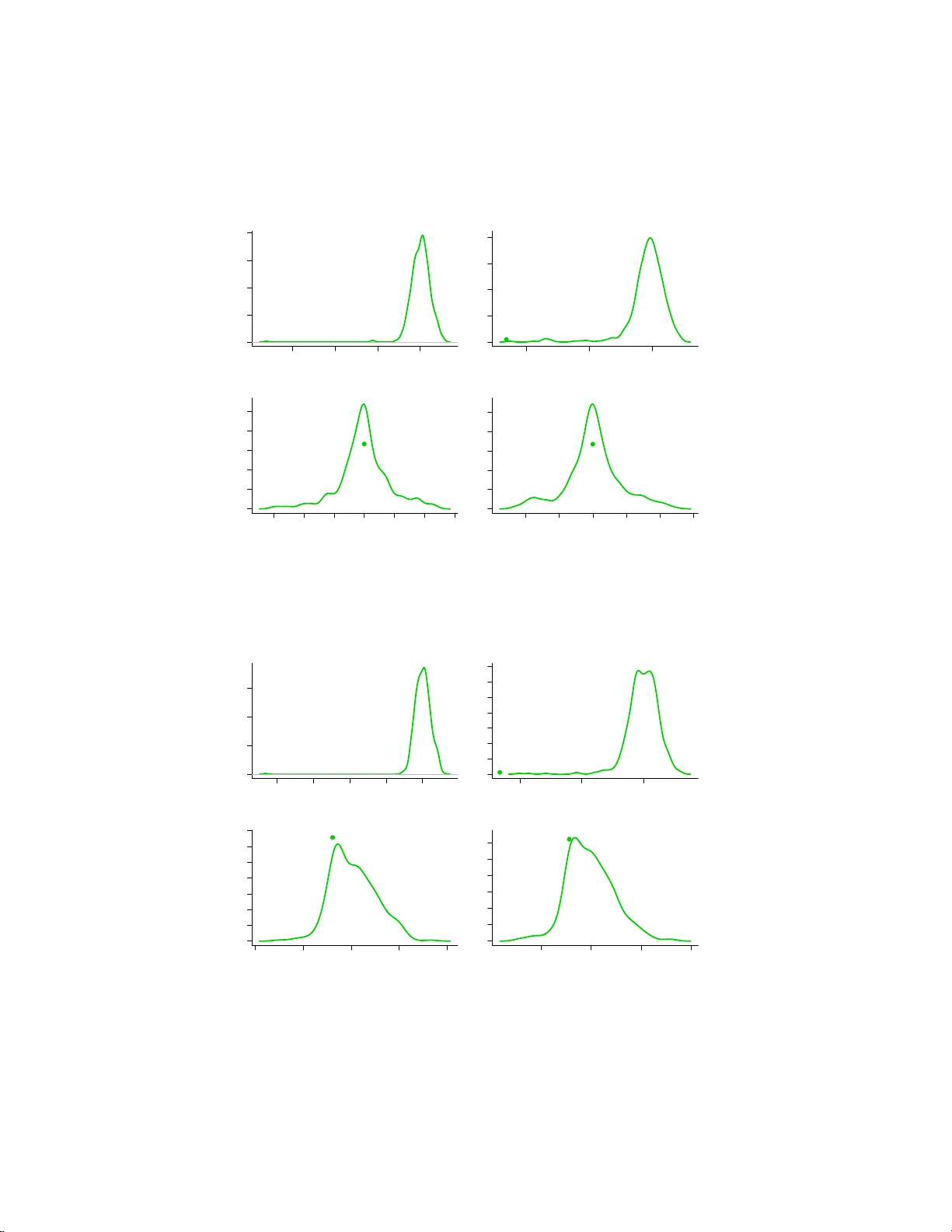

Benedikt M. Pötscher and Ulrike Schneider
Department of Statistics, University of Vienna

First version: December 2007
This version: December 2008

Abstract

We study the distribution of the adaptive LASSO estimator (Zou (2006)) in finite samples as well as in the large-sample limit. The large-sample distributions are derived both for the case where the adaptive LASSO estimator is tuned to perform conservative model selection as well as for the case where the tuning results in consistent model selection. We show that the finite-sample as well as the large-sample distributions are typically highly non-normal, regardless of the choice of the tuning parameter. The uniform convergence rate is also obtained, and is shown to be slower than $n^{-1/2}$ in case the estimator is tuned to perform consistent model selection. In particular, these results question the statistical relevance of the ‘oracle’ property of the adaptive LASSO estimator established in Zou (2006). Moreover, we also provide an impossibility result regarding the estimation of the distribution function of the adaptive LASSO estimator. The theoretical results, which are obtained for a regression model with orthogonal design, are complemented by a Monte Carlo study using non-orthogonal regressors.

MSC 2000 subject classification. Primary 62F11, 62F12, 62E15, 62J05, 62J07.

Key words and phrases. Penalized maximum likelihood, LASSO, adaptive LASSO, nonnegative garotte, finite-sample distribution, asymptotic distribution, oracle property, estimation of distribution, uniform consistency.

1 Introduction

Penalized maximum likelihood estimators have been studied intensively in the last few years. A prominent example is the least absolute selection and shrinkage (LASSO) estimator of Tibshirani (1996).
Related variants of the LASSO include the Bridge estimators studied by Frank & Friedman (1993), least angle regression (LARS) of Efron et al. (2004), or the smoothly clipped absolute deviation (SCAD) estimator of Fan & Li (2001). Other estimators that fit into this framework are hard- and soft-thresholding estimators. While many properties of penalized maximum likelihood estimators are now well understood, the understanding of their distributional properties, such as finite-sample and large-sample limit distributions, is still incomplete. Probably the most important contribution in this respect is Knight & Fu (2000), who study the asymptotic distribution of the LASSO estimator (and of Bridge estimators more generally) when the tuning parameter governing the influence of the penalty term is chosen in such a way that the LASSO acts as a conservative model selection procedure (that is, a procedure that does not select underparameterized models asymptotically, but selects overparameterized models with positive probability asymptotically). In Knight & Fu (2000), the asymptotic distribution is obtained in a fixed-parameter as well as in a standard local alternatives setup. This is complemented by a result in Zou (2006), who considers the fixed-parameter asymptotic distribution of the LASSO when tuned to act as a consistent model selection procedure. Another contribution is Fan & Li (2001), who derive the fixed-parameter asymptotic distribution of the SCAD estimator when the tuning parameter is chosen in such a way that the SCAD estimator performs consistent model selection; in particular, they establish the so-called ‘oracle’ property for this estimator. Zou (2006) introduced a variant of the LASSO, the so-called adaptive LASSO estimator, and established the ‘oracle’ property for this estimator when suitably tuned.
Since it is well known that fixed-parameter (i.e., pointwise) asymptotic results can give a wrong picture of the estimator's actual behavior, especially when the estimator performs model selection (see, e.g., Kabaila (1995), or Leeb & Pötscher (2005), Pötscher & Leeb (2007), Leeb & Pötscher (2008b)), it is important to take a closer look at the actual distributional properties of the adaptive LASSO estimator.

In the present paper we study the finite-sample as well as the large-sample distribution of the adaptive LASSO estimator in a linear regression model. In particular, we study both the case where the estimator is tuned to perform conservative model selection as well as the case where it is tuned to perform consistent model selection. We find that the finite-sample distributions are highly non-normal (e.g., are often multimodal) and that a standard fixed-parameter asymptotic analysis gives a highly misleading impression of the finite-sample behavior. In particular, the ‘oracle’ property, which is based on a fixed-parameter asymptotic analysis, is shown not to provide a reliable assessment of the estimators' actual performance. For these reasons, we also obtain the large-sample distributions of the above mentioned estimators under a general “moving parameter” asymptotic framework, which much better captures the actual behavior of the estimator. [Interestingly, it turns out that in case the estimator is tuned to perform consistent model selection a “moving parameter” asymptotic framework more general than the usual $n^{-1/2}$-local asymptotic framework is necessary to exhibit the full range of possible limiting distributions.] Furthermore, we obtain the uniform convergence rate of the adaptive LASSO estimator and show that it is slower than $n^{-1/2}$ in the case where the estimator is tuned to perform consistent model selection.
This again exposes the misleading character of the ‘oracle’ property. We also show that the finite-sample distribution of the adaptive LASSO estimator cannot be estimated in any reasonable sense, complementing results of this sort in the literature such as Leeb & Pötscher (2006a,b), Pötscher & Leeb (2007), Leeb & Pötscher (2008a) and Pötscher (2006).

Apart from the papers already mentioned, there has been a recent surge of publications establishing the ‘oracle’ property for a variety of penalized maximum likelihood or related estimators (e.g., Bunea (2004), Bunea & McKeague (2005), Fan & Li (2002, 2004), Li & Liang (2007), Wang & Leng (2007), Wang, G. Li and Jiang (2007), Wang, G. Li and Tsai (2007), Wang, R. Li and Tsai (2007), Yuan & Lin (2007), Zhang & Lu (2007), Zou & Yuan (2008), Zou & Li (2008), Johnson et al. (2008)). The ‘oracle’ property also paints a misleading picture of the behavior of the estimators considered in these papers; see the discussion in Leeb & Pötscher (2005), Yang (2005), Pötscher (2007), Pötscher & Leeb (2007), Leeb & Pötscher (2008b).

The paper is organized as follows. The model and the adaptive LASSO estimator are introduced in Section 2. In Section 3 we study the estimator theoretically in an orthogonal linear regression model. In particular, the model selection probabilities implied by the adaptive LASSO estimator are discussed in Section 3.1. Consistency, uniform consistency, and uniform convergence rates of the estimator are the subject of Section 3.2. The finite-sample distributions are derived in Section 3.3.1, whereas the asymptotic distributions are studied in Section 3.3.2. We provide an impossibility result regarding the estimation of the adaptive LASSO's distribution function in Section 3.4.
Section 4 studies the behavior of the adaptive LASSO estimator by Monte Carlo without imposing the simplifying assumption of orthogonal regressors. We finally summarize our findings in Section 5. Proofs and some technical details are deferred to an appendix.

2 The Adaptive LASSO Estimator

We consider the linear regression model

$$Y = X\theta + u \tag{1}$$

where $X$ is a nonstochastic $n \times k$ matrix of rank $k$ and $u$ is multivariate normal with mean zero and variance-covariance matrix $\sigma^2 I_n$. Let $\hat\theta_{LS} = (X'X)^{-1}X'Y$ denote the least squares (maximum likelihood) estimator. The adaptive LASSO estimator $\hat\theta_A$ is defined as the solution to the minimization problem

$$(Y - X\theta)'(Y - X\theta) + 2n\mu_n^2 \sum_{i=1}^{k} |\theta_i| / |\hat\theta_{LS,i}| \tag{2}$$

where the tuning parameter $\mu_n$ is a positive real number. As long as $\hat\theta_{LS,i} \neq 0$ for every $i$, the function given by (2) is well-defined and strictly convex and hence has a uniquely defined minimizer $\hat\theta_A$. [The event where $\hat\theta_{LS,i} = 0$ for some $i$ has probability zero under the probability measure governing $u$. Hence, it is inconsequential how we define $\hat\theta_A$ on this event; for reasons of convenience, we shall adopt the convention that $\mu_n^2 / |\hat\theta_{LS,i}| = 0$ if $\hat\theta_{LS,i} = 0$. Furthermore, $\hat\theta_A$ is a measurable function of $Y$.] Note that Zou (2006) uses $\lambda_n = 2n\mu_n^2$ as the tuning parameter. Zou (2006) also considers versions of the adaptive LASSO estimator for which $|\hat\theta_{LS,i}|$ in (2) is replaced by $|\hat\theta_{LS,i}|^{\gamma}$. However, we shall exclusively concentrate on the leading case $\gamma = 1$. As pointed out in Zou (2006), the adaptive LASSO is closely related to the nonnegative Garotte estimator of Breiman (1995).

3 Theoretical Analysis

For the theoretical analysis in this section we shall make some simplifying assumptions. First, we assume that $\sigma^2$ is known, whence we may assume without loss of generality that $\sigma^2 = 1$.
Second, we assume orthogonal regressors, i.e., $X'X$ is diagonal. The latter assumption will be removed in the Monte Carlo study in Section 4. Orthogonal regressors occur in many important settings, including wavelet regression or the analysis of variance. More specifically, we shall assume $X'X = nI_k$. In this case the minimization of (2) is equivalent to separately minimizing

$$n(\hat\theta_{LS,i} - \theta_i)^2 + 2n\mu_n^2 |\theta_i| / |\hat\theta_{LS,i}| \tag{3}$$

for $i = 1, \ldots, k$. Since the estimators $\hat\theta_{LS,i}$ are independent, so are the components of $\hat\theta_A$, provided $\mu_n$ is nonrandom, which we shall assume for the theoretical analysis throughout this section. To study the joint distribution of $\hat\theta_A$, it hence suffices to study the distribution of the individual components. Hence, we may assume without loss of generality that $\theta$ is scalar, i.e., $k = 1$, for the rest of this section. In fact, as is easily seen, there is then no loss of generality to even assume that $X$ is just a column of 1's, i.e., we may then consider a simple Gaussian location problem where $\hat\theta_{LS} = \bar y$, the arithmetic mean of the independent and identically $N(\theta, 1)$-distributed observations $y_1, \ldots, y_n$. Under these assumptions, the minimization problem defining the adaptive LASSO has an explicit solution of the form

$$\hat\theta_A = \bar y \,(1 - \mu_n^2/\bar y^2)_+ = \begin{cases} 0 & \text{if } |\bar y| \le \mu_n \\ \bar y - \mu_n^2/\bar y & \text{if } |\bar y| > \mu_n. \end{cases} \tag{4}$$

The explicit formula (4) also shows that in the location model (and, more generally, in the diagonal regression model) the adaptive LASSO and the nonnegative Garotte coincide, and thus the results in the present section also apply to the latter estimator. In view of (4) we also note that in the diagonal regression model the adaptive LASSO is nothing else than a positive-part Stein estimator applied componentwise. Of course, this is not in the spirit of Stein estimation.
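As a quick numerical check of the closed form (4), the following Python sketch implements the estimator in the location model and compares it with a brute-force grid minimization of the criterion (3) for $k = 1$. The function names (`adaptive_lasso_location`, `criterion`, `grid_minimizer`) and the grid settings are illustrative assumptions and are not taken from the paper.

```python
def adaptive_lasso_location(ybar: float, mu: float) -> float:
    """Closed form (4): ybar * (1 - mu^2 / ybar^2)_+ for a tuning parameter mu > 0."""
    if abs(ybar) <= mu:
        return 0.0  # thresholding region: the restricted model is selected
    return ybar - mu * mu / ybar  # shrinkage towards zero outside the threshold


def criterion(theta: float, ybar: float, mu: float, n: int = 1) -> float:
    """Criterion (3) for a single coordinate; requires ybar != 0."""
    return n * (ybar - theta) ** 2 + 2.0 * n * mu ** 2 * abs(theta) / abs(ybar)


def grid_minimizer(ybar: float, mu: float, lo: float = -3.0, hi: float = 3.0,
                   steps: int = 60001) -> float:
    """Brute-force minimizer of (3) on an equispaced grid (illustrative settings)."""
    grid = (lo + (hi - lo) * i / (steps - 1) for i in range(steps))
    return min(grid, key=lambda theta: criterion(theta, ybar, mu))


if __name__ == "__main__":
    # Hypothetical values of ybar and mu, chosen only for illustration.
    for ybar, mu in [(0.8, 0.3), (0.2, 0.3), (-1.5, 0.5)]:
        print(ybar, mu, adaptive_lasso_location(ybar, mu), grid_minimizer(ybar, mu))
```

Up to the grid resolution, the two columns printed for each pair should agree, including the case $|\bar y| \le \mu_n$ where the minimizer is exactly zero.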
3.1 Model selection probabilities and tuning parameter

The adaptive LASSO estimator $\hat\theta_A$ can be viewed as performing a selection between the restricted model $M_R$ consisting only of the $N(0,1)$-distribution and the unrestricted model $M_U = \{N(\theta,1) : \theta \in \mathbb{R}\}$ in an obvious way, i.e., $M_R$ is selected if $\hat\theta_A = 0$ and $M_U$ is selected otherwise. We now study the model selection probabilities, i.e., the probabilities that model $M_U$ or $M_R$, respectively, is selected. As these selection probabilities add up to one, it suffices to consider one of them. The probability of selecting the restricted model $M_R$ is given by

$$\begin{aligned} P_{n,\theta}(\hat\theta_A = 0) &= P_{n,\theta}(|\bar y| \le \mu_n) = \Pr(|Z + n^{1/2}\theta| \le n^{1/2}\mu_n) \\ &= \Phi(-n^{1/2}\theta + n^{1/2}\mu_n) - \Phi(-n^{1/2}\theta - n^{1/2}\mu_n), \end{aligned} \tag{5}$$

where $Z$ is a standard normal random variable with cumulative distribution function (cdf) $\Phi$. We use $P_{n,\theta}$ to denote the probability governing a sample of size $n$ when $\theta$ is the true parameter, and $\Pr$ to denote a generic probability measure.

In the following we shall always impose the condition that $\mu_n \to 0$ for asymptotic considerations, which guarantees that the probability of incorrectly selecting the restricted model $M_R$ (i.e., selecting $M_R$ if the true $\theta$ is non-zero) vanishes asymptotically. Conversely, if this probability vanishes asymptotically for every $\theta \neq 0$, then $\mu_n \to 0$ follows, hence the condition $\mu_n \to 0$ is a basic one and without it the estimator $\hat\theta_A$ does not seem to be of much interest. Given the condition that $\mu_n \to 0$, two cases need to be distinguished: (i) $n^{1/2}\mu_n \to m$, $0 \le m < \infty$ and (ii) $n^{1/2}\mu_n \to \infty$.
[Footnote 1: There is no loss in generality here in the sense that the general case where only $\mu_n \to 0$ holds can always be reduced to case (i) or case (ii) by passing to subsequences.] In case (i), the adaptive LASSO estimator acts as a conservative model selection procedure, meaning that the probability of selecting the larger model $M_U$ has a positive limit even when $\theta = 0$, whereas in case (ii), $\hat\theta_A$ acts as a consistent model selection procedure, i.e., this probability vanishes in the limit when $\theta = 0$. This is immediately seen by inspection of (5). In different guise, these facts have long been known, see Bauer et al. (1988). In his analysis of the adaptive LASSO estimator Zou (2006) assumes $n^{1/4}\mu_n \to 0$ and $n^{1/2}\mu_n \to \infty$, hence he considers a subcase of case (ii). We shall discuss the reason why Zou (2006) imposes the stricter condition $n^{1/4}\mu_n \to 0$ in Section 3.3.2.

The asymptotic behavior of the model selection probabilities discussed in the preceding paragraph is of a “pointwise” asymptotic nature in the sense that the value of $\theta$ is held fixed when $n \to \infty$. Since pointwise asymptotic results often miss essential aspects of the finite-sample behavior, we next present a “moving parameter” asymptotic analysis, i.e., we allow $\theta$ to vary with $n$ in the asymptotic analysis, which better reveals the features of the problem in finite samples. Note that the following proposition in particular shows that the convergence of the model selection probability to its limit in a pointwise asymptotic analysis is not uniform in $\theta \in \mathbb{R}$ (in fact, it fails to be uniform in any neighborhood of $\theta = 0$).

Proposition 1 Assume $\mu_n \to 0$ and $n^{1/2}\mu_n \to m$ with $0 \le m \le \infty$.

(i) Assume $0 \le m < \infty$ (corresponding to conservative model selection). Suppose that the true parameter $\theta_n \in \mathbb{R}$ satisfies $n^{1/2}\theta_n \to \nu \in \mathbb{R} \cup \{-\infty, \infty\}$. Then

$$\lim_{n\to\infty} P_{n,\theta_n}(\hat\theta_A = 0) = \Phi(-\nu + m) - \Phi(-\nu - m).$$
(ii) Assume $m = \infty$ (corresponding to consistent model selection). Suppose $\theta_n \in \mathbb{R}$ satisfies $\theta_n/\mu_n \to \zeta \in \mathbb{R} \cup \{-\infty, \infty\}$. Then

1. $|\zeta| < 1$ implies $\lim_{n\to\infty} P_{n,\theta_n}(\hat\theta_A = 0) = 1$,
2. $|\zeta| = 1$ and $n^{1/2}(\mu_n - \zeta\theta_n) \to r$ for some $r \in \mathbb{R} \cup \{-\infty, \infty\}$, implies $\lim_{n\to\infty} P_{n,\theta_n}(\hat\theta_A = 0) = \Phi(r)$,
3. $|\zeta| > 1$ implies $\lim_{n\to\infty} P_{n,\theta_n}(\hat\theta_A = 0) = 0$.

The proof of Proposition 1 is identical to the proof of Proposition 1 in Pötscher & Leeb (2007) and hence is omitted. The above proposition in fact completely describes the large-sample behavior of the model selection probability without any conditions on the parameter $\theta$, in the sense that all possible accumulation points of the model selection probability along arbitrary sequences of $\theta_n$ can be obtained in the following manner: Apply the result to subsequences and observe that, by compactness of $\mathbb{R} \cup \{-\infty, \infty\}$, we can select from every subsequence a further subsequence such that all relevant quantities such as $n^{1/2}\theta_n$, $\theta_n/\mu_n$, $n^{1/2}(\mu_n - \theta_n)$, or $n^{1/2}(\mu_n + \theta_n)$ converge in $\mathbb{R} \cup \{-\infty, \infty\}$ along this further subsequence.

In the case of conservative model selection, Proposition 1 shows that the usual local alternative parameter sequences describe the asymptotic behavior. In particular, if $\theta_n$ is local to $\theta = 0$ in the sense that $\theta_n = \nu/n^{1/2}$, the local alternatives parameter $\nu$ governs the limiting model selection probability. Deviations of $\theta_n$ from $\theta = 0$ of order $1/n^{1/2}$ are detected with positive probability asymptotically and deviations of larger order are detected with probability one asymptotically in this case. In the consistent model selection case, however, a different picture emerges. Here, Proposition 1 shows that local deviations of $\theta_n$ from $\theta = 0$ that are of the order $1/n^{1/2}$ are not detected by the model selection procedure at all!
[Footnote 2: For such deviations this also immediately follows from a contiguity argument.] In fact, even larger deviations from zero go asymptotically unnoticed by the model selection procedure, namely as long as $\theta_n/\mu_n \to \zeta$, $|\zeta| < 1$. [Note that these larger deviations would be picked up by a conservative procedure with probability one asymptotically.] This unpleasant consequence of model selection consistency has a number of repercussions as we shall see later on. For a more detailed discussion of these facts in the context of post-model-selection estimators see Leeb & Pötscher (2005).

The speed of convergence of the model selection probability to its limit in part (i) of the proposition is governed by the slower of the convergence speeds of $n^{1/2}\mu_n$ and $n^{1/2}\theta_n$. In part (ii), it is exponential in $n^{1/2}\mu_n$ in cases 1 and 3, and is governed by the convergence speed of $n^{1/2}\mu_n$ and $n^{1/2}(\mu_n - \zeta\theta_n)$ in case 2.

3.2 Uniform consistency and uniform convergence rate of the adaptive LASSO estimator

It is easy to see that the natural condition $\mu_n \to 0$ discussed in the preceding section is in fact equivalent to consistency of $\hat\theta_A$ for $\theta$. Moreover, under this basic condition the estimator is even uniformly consistent with a certain rate as we show next.

Theorem 2 Assume that $\mu_n \to 0$. Then $\hat\theta_A$ is uniformly consistent for $\theta$, i.e.,

$$\lim_{n\to\infty} \sup_{\theta \in \mathbb{R}} P_{n,\theta}\left(|\hat\theta_A - \theta| > \varepsilon\right) = 0 \tag{6}$$

for every $\varepsilon > 0$. Furthermore, let $a_n = \min(n^{1/2}, \mu_n^{-1})$. Then, for every $\varepsilon > 0$, there exists a (nonnegative) real number $M$ such that

$$\sup_{n \in \mathbb{N}} \sup_{\theta \in \mathbb{R}} P_{n,\theta}\left(a_n |\hat\theta_A - \theta| > M\right) < \varepsilon \tag{7}$$

holds. In particular, $\hat\theta_A$ is uniformly $a_n$-consistent.

For the case where the estimator $\hat\theta_A$ is tuned to perform conservative model selection, the preceding theorem shows that these estimators are uniformly $n^{1/2}$-consistent.
In contrast, in case the estimators are tuned to perform consistent model selection, the theorem only guarantees uniform $\mu_n^{-1}$-consistency; that the estimator does actually not converge faster than $\mu_n$ in a uniform sense will be shown in Section 3.3.2.

Remark 3 In case $n^{1/2}\mu_n \to m$ with $m = 0$, the adaptive LASSO estimator is uniformly asymptotically equivalent to the unrestricted maximum likelihood estimator $\bar y$ in the sense that $\sup_{\theta \in \mathbb{R}} P_{n,\theta}(n^{1/2}|\hat\theta_A - \bar y| > \varepsilon) \to 0$ for $n \to \infty$ and for every $\varepsilon > 0$. Using (4) this follows easily from

$$P_{n,\theta}(n^{1/2}|\hat\theta_A - \bar y| > \varepsilon) \le \mathbf{1}(n^{1/2}\mu_n > \varepsilon) + P_{n,\theta}(n^{1/2}\mu_n^2/|\bar y| > \varepsilon, \; |\bar y| > \mu_n) \le 2 \cdot \mathbf{1}(n^{1/2}\mu_n > \varepsilon) \to 0.$$

3.3 The distribution of the adaptive LASSO

3.3.1 Finite-sample distributions

We now derive the finite-sample distribution of $n^{1/2}(\hat\theta_A - \theta)$. For purpose of comparison we note the obvious fact that the distribution of the unrestricted maximum likelihood estimator $\hat\theta_U = \bar y$ (corresponding to model $M_U$) as well as the distribution of the restricted maximum likelihood estimator $\hat\theta_R \equiv 0$ (corresponding to model $M_R$) are normal. More precisely, $n^{1/2}(\hat\theta_U - \theta)$ is $N(0,1)$-distributed and $n^{1/2}(\hat\theta_R - \theta)$ is $N(-n^{1/2}\theta, 0)$-distributed, where the singular normal distribution is to be interpreted as pointmass at $-n^{1/2}\theta$. [The latter is simply an instance of the fact that in case $k > 1$ the restricted estimator has a singular normal distribution concentrated on the subspace defined by the zero restrictions.] The finite-sample distribution $F_{A,n,\theta}$ of $n^{1/2}(\hat\theta_A - \theta)$ is given by

$$\begin{aligned} P_{n,\theta}(n^{1/2}(\hat\theta_A - \theta) \le x) &= P_{n,\theta}(n^{1/2}(\hat\theta_A - \theta) \le x, \; \hat\theta_A = 0) \\ &\quad + P_{n,\theta}(n^{1/2}(\hat\theta_A - \theta) \le x, \; \hat\theta_A > 0) \\ &\quad + P_{n,\theta}(n^{1/2}(\hat\theta_A - \theta) \le x, \; \hat\theta_A < 0) \\ &= A + B + C. \end{aligned}$$
By (5) we clearly have

$$A = \mathbf{1}(-n^{1/2}\theta \le x)\left\{\Phi(-n^{1/2}\theta + n^{1/2}\mu_n) - \Phi(-n^{1/2}\theta - n^{1/2}\mu_n)\right\}.$$

Furthermore, using expression (4) we find that

$$\begin{aligned} B &= P_{n,\theta}(n^{1/2}(\bar y - \mu_n^2/\bar y - \theta) \le x, \; \bar y > \mu_n) \\ &= P_{n,\theta}(n^{1/2}(\bar y^2 - \mu_n^2 - \theta\bar y) \le \bar y x, \; \bar y > \mu_n) \\ &= \Pr(Z^2 + n^{1/2}\theta Z - n\mu_n^2 \le Zx + n^{1/2}\theta x, \; Z > -n^{1/2}\theta + n^{1/2}\mu_n) \\ &= \Pr(Z^2 + (n^{1/2}\theta - x)Z - (n\mu_n^2 + n^{1/2}\theta x) \le 0, \; Z > -n^{1/2}\theta + n^{1/2}\mu_n), \end{aligned}$$

where $Z$ follows a standard normal distribution. The quadratic form in $Z$ is convex and hence is less than or equal to zero precisely between the zeroes of the equation

$$z^2 + (n^{1/2}\theta - x)z - (n\mu_n^2 + n^{1/2}\theta x) = 0.$$

The solutions $z^{(1)}_{n,\theta}(x)$ and $z^{(2)}_{n,\theta}(x)$ of this equation with $z^{(1)}_{n,\theta}(x) \le z^{(2)}_{n,\theta}(x)$ are given by

$$-(n^{1/2}\theta - x)/2 \pm \sqrt{\left((n^{1/2}\theta + x)/2\right)^2 + n\mu_n^2}. \tag{8}$$

Note that the expression under the root in (8) is always positive, so that

$$B = P_{n,\theta}\left(z^{(1)}_{n,\theta}(x) \le Z \le z^{(2)}_{n,\theta}(x), \; Z > -n^{1/2}\theta + n^{1/2}\mu_n\right).$$

Observe that $z^{(1)}_{n,\theta}(x) \le -n^{1/2}\theta + n^{1/2}\mu_n$ always holds and that $-n^{1/2}\theta + n^{1/2}\mu_n \le z^{(2)}_{n,\theta}(x)$ is equivalent to $n^{1/2}\theta + x \ge 0$, so that we can write

$$B = \mathbf{1}(n^{1/2}\theta + x \ge 0)\left\{\Phi\left(z^{(2)}_{n,\theta}(x)\right) - \Phi(-n^{1/2}\theta + n^{1/2}\mu_n)\right\}.$$

The term $C$ can be treated in a similar fashion to arrive at

$$C = \mathbf{1}(n^{1/2}\theta + x \ge 0)\,\Phi(-n^{1/2}\theta - n^{1/2}\mu_n) + \mathbf{1}(n^{1/2}\theta + x < 0)\,\Phi\left(z^{(1)}_{n,\theta}(x)\right).$$

Adding up $A$, $B$ and $C$, we now obtain the finite-sample distribution function of $n^{1/2}(\hat\theta_A - \theta)$ as

$$F_{A,n,\theta}(x) = \mathbf{1}(n^{1/2}\theta + x \ge 0)\,\Phi\left(z^{(2)}_{n,\theta}(x)\right) + \mathbf{1}(n^{1/2}\theta + x < 0)\,\Phi\left(z^{(1)}_{n,\theta}(x)\right). \tag{9}$$

It follows that the distribution of $n^{1/2}(\hat\theta_A - \theta)$ consists of an atomic part given by

$$\left\{\Phi(n^{1/2}(-\theta + \mu_n)) - \Phi(n^{1/2}(-\theta - \mu_n))\right\}\delta_{-n^{1/2}\theta}, \tag{10}$$

where $\delta_z$ represents pointmass at the point $z$, and an absolutely continuous part that has a Lebesgue density given by

$$0.5 \times \left\{\mathbf{1}(n^{1/2}\theta + x > 0)\,\phi\left(z^{(2)}_{n,\theta}(x)\right)(1 + t_{n,\theta}(x)) + \mathbf{1}(n^{1/2}\theta + x < 0)\,\phi\left(z^{(1)}_{n,\theta}(x)\right)(1 - t_{n,\theta}(x))\right\}, \tag{11}$$

where $t_{n,\theta}(x) = 0.5\,(n^{1/2}\theta + x) / \left(((n^{1/2}\theta + x)/2)^2 + n\mu_n^2\right)^{1/2}$. Figure 1 illustrates the shape of the finite-sample distribution of $n^{1/2}(\hat\theta_A - \theta)$. Obviously, the distribution is highly non-normal.

[Figure 1: Distribution of $n^{1/2}(\hat\theta_A - \theta)$ for $n = 10$, $\theta = 0.1$, $\mu_n = 0.05$. The plot shows the density of the absolutely continuous part (11), as well as the total mass of the atomic part (10) located at $-n^{1/2}\theta = -0.32$.]

3.3.2 Asymptotic distributions

We next obtain the asymptotic distributions of $\hat\theta_A$ under general “moving parameter” asymptotics (i.e., asymptotics where the true parameter can depend on sample size), since, as already noted earlier, considering only fixed-parameter asymptotics may paint a very misleading picture of the behavior of the estimator. In fact, the results given below amount to a complete description of all possible accumulation points of the finite-sample distribution, cf. Remark 7. Not surprisingly, the results in the conservative model selection case are different from the ones in the consistent model selection case.

Conservative case

The large-sample behavior of the distribution $F_{A,n,\theta_n}$ of $n^{1/2}(\hat\theta_A - \theta_n)$ for the case when the estimator is tuned to perform conservative model selection is characterized in the following theorem.

Theorem 4 Assume $\mu_n \to 0$ and $n^{1/2}\mu_n \to m$, $0 \le m < \infty$.
Suppose the true parameter $\theta_n \in \mathbb{R}$ satisfies $n^{1/2}\theta_n \to \nu \in \mathbb{R} \cup \{-\infty, \infty\}$. Then, for $\nu \in \mathbb{R}$, $F_{A,n,\theta_n}$ converges weakly to the distribution

$$\mathbf{1}(x + \nu \ge 0)\,\Phi\left(-\frac{\nu - x}{2} + \sqrt{\left(\frac{\nu + x}{2}\right)^2 + m^2}\right) + \mathbf{1}(x + \nu < 0)\,\Phi\left(-\frac{\nu - x}{2} - \sqrt{\left(\frac{\nu + x}{2}\right)^2 + m^2}\right).$$

If $|\nu| = \infty$, then $F_{A,n,\theta_n}$ converges weakly to $\Phi$, i.e., to a standard normal distribution.

The fixed-parameter asymptotic distribution can be obtained from Theorem 4 by setting $\theta_n \equiv \theta$: For $\theta = 0$, we get

$$\mathbf{1}(x \ge 0)\,\Phi\left(x/2 + \sqrt{(x/2)^2 + m^2}\right) + \mathbf{1}(x < 0)\,\Phi\left(x/2 - \sqrt{(x/2)^2 + m^2}\right),$$

which coincides with the finite-sample distribution in (9) except for replacing $n^{1/2}\mu_n$ with its limit $m$. However, for $\theta \neq 0$, the resulting fixed-parameter asymptotic distribution is a standard normal distribution which clearly misrepresents the actual distribution (9). This disagreement is most pronounced in the statistically interesting case where $\theta$ is close to, but not equal to, zero (e.g., $\theta \sim n^{-1/2}$). In contrast, the distribution given in Theorem 4 much better captures the behavior of the finite-sample distribution also in this case because it coincides with the finite-sample distribution (9) except for the fact that $n^{1/2}\mu_n$ and $n^{1/2}\theta_n$ have settled down to their limiting values.

Consistent case

In this subsection we consider the case where the tuning parameter $\mu_n$ is chosen so that $\hat\theta_A$ performs consistent model selection, i.e., $\mu_n \to 0$ and $n^{1/2}\mu_n \to \infty$.

Theorem 5 Assume that $\mu_n \to 0$ and $n^{1/2}\mu_n \to \infty$. Suppose the true parameter $\theta_n \in \mathbb{R}$ satisfies $\theta_n/\mu_n \to \zeta$ for some $\zeta \in \mathbb{R} \cup \{-\infty, \infty\}$.

1. If $\zeta = 0$ and $n^{1/2}\theta_n \to \nu \in \mathbb{R}$, then $F_{A,n,\theta_n}$ converges weakly to the cdf $\mathbf{1}(\cdot \ge -\nu)$.
2. The total mass of $F_{A,n,\theta_n}$ escapes to either $\infty$ or $-\infty$ for the following cases: If $-\infty < \zeta < 0$, or if $\zeta = 0$ and $n^{1/2}\theta_n \to -\infty$, or if $\zeta = -\infty$ and $n^{1/2}\mu_n^2/\theta_n \to -\infty$, then $F_{A,n,\theta_n}(x) \to 0$ for every $x \in \mathbb{R}$.
If $0 < \zeta < \infty$, or if $\zeta = 0$ and $n^{1/2}\theta_n \to \infty$, or if $\zeta = \infty$ and $n^{1/2}\mu_n^2/\theta_n \to \infty$, then $F_{A,n,\theta_n}(x) \to 1$ for every $x \in \mathbb{R}$.
3. If $|\zeta| = \infty$ and $n^{1/2}\mu_n^2/\theta_n \to r \in \mathbb{R}$, then $F_{A,n,\theta_n}$ converges weakly to the cdf $\Phi(\cdot + r)$.

The fixed-parameter asymptotic behavior of the adaptive LASSO estimator is obtained from Theorem 5 by setting $\theta_n \equiv \theta$: For $\theta = 0$, the asymptotic distribution reduces to pointmass at 0, which coincides with the asymptotic distribution of the restricted maximum likelihood estimator. In the case of $\theta \neq 0$, the asymptotic distribution is $\Phi(x + \rho/\theta)$ provided $n^{1/2}\mu_n^2 \to \rho$ (with the obvious interpretation if $|\rho| = \infty$). That is, it is a shifted version of the asymptotic distribution of the unrestricted maximum likelihood estimator (the shift being infinitely large if $|\rho| = \infty$). Observe that (for $|\rho| < \infty$) the shift gets larger as $|\theta|$, $|\theta| \neq 0$, gets smaller. The ‘oracle’ property in the sense of Zou (2006) is hence satisfied if and only if $\rho = 0$, that is, if the tuning parameter additionally also satisfies $n^{1/4}\mu_n \to 0$. This is precisely the condition imposed in Theorem 2 in Zou (2006) which establishes the ‘oracle’ property. [Note that $n^{1/4}\mu_n \to 0$ translates into the assumption $\lambda_n/n^{1/2} \to 0$ in Theorem 2 in Zou (2006).] If $n^{1/2}\mu_n^2 \to \rho \neq 0$, the adaptive LASSO estimator provides an example of an estimator that performs consistent model selection, but does not satisfy the ‘oracle’ property in the sense that for $\theta \neq 0$ its asymptotic distribution does not coincide with the asymptotic distribution of the unrestricted maximum likelihood estimator.
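To make the fixed-parameter statement concrete, the following Python sketch evaluates the finite-sample cdf (9) and compares it with the shifted normal cdf $\Phi(x + n^{1/2}\mu_n^2/\theta)$ suggested by the pointwise limit for $\theta \neq 0$. The function names, the tuning sequence $\mu_n = n^{-0.3}$, the sample size and the values of $\theta$ are assumptions made only for this illustration.

```python
import math


def std_normal_cdf(x: float) -> float:
    """Standard normal cdf Phi, computed via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def finite_sample_cdf(x: float, n: int, theta: float, mu: float) -> float:
    """Finite-sample cdf (9) of n^{1/2} (theta_hat_A - theta) in the location model."""
    a = math.sqrt(n) * theta          # n^{1/2} theta
    m = math.sqrt(n) * mu             # n^{1/2} mu_n
    root = math.sqrt(((a + x) / 2.0) ** 2 + m ** 2)
    center = -(a - x) / 2.0
    # Pick the larger root z^(2) or the smaller root z^(1) from (8).
    z = center + root if a + x >= 0 else center - root
    return std_normal_cdf(z)


def shifted_normal_cdf(x: float, n: int, theta: float, mu: float) -> float:
    """Pointwise approximation Phi(x + n^{1/2} mu_n^2 / theta), for theta != 0."""
    return std_normal_cdf(x + math.sqrt(n) * mu ** 2 / theta)


if __name__ == "__main__":
    n = 10 ** 6
    mu = n ** -0.3                    # assumed tuning: mu_n -> 0, n^{1/2} mu_n -> infinity
    for theta in (1.0, 0.2):          # hypothetical fixed parameter values
        for x in (-1.0, 0.0, 1.0):
            print(theta, x, finite_sample_cdf(x, n, theta, mu),
                  shifted_normal_cdf(x, n, theta, mu))
```

For this assumed tuning sequence $n^{1/2}\mu_n^2$ tends to zero, so the ‘oracle’ condition holds in the limit; at a finite $n$, however, the shift $n^{1/2}\mu_n^2/\theta$ is still visible and becomes larger as $|\theta|$ decreases, in line with the observation above.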
In any case, the ‘oracle’ property, which is guaranteed under the additional requirement $n^{1/4}\mu_n \to 0$, carries little statistical meaning: Imposing the additional condition $n^{1/4}\mu_n \to 0$ still allows all three cases in Theorem 5 above to occur, showing that, notwithstanding the validity of the ‘oracle’ property, non-normal limiting distributions arise under a moving-parameter asymptotic framework. These latter distributions are in better agreement with the features exhibited by the finite-sample distribution (9), whereas the ‘oracle’ property always predicts a normal limiting distribution (a singular one in case $\theta = 0$), showing that it does not capture essential features of the finite-sample distribution. In particular, the preceding theorem shows that the estimator is not uniformly $n^{1/2}$-consistent as the sequence of finite-sample distributions of $n^{1/2}(\hat\theta_A - \theta_n)$ is stochastically unbounded in some cases arising in Theorem 5. All this goes to show that the ‘oracle’ property, which is based on the pointwise asymptotic distribution only, paints a highly misleading picture of the behavior of the adaptive LASSO estimator and should not be taken at face value. See also Remark 10.

It transpires from Theorem 5 that $F_{A,n,\theta_n}$ converges weakly to the singular normal distribution $N(0,0)$ if $\theta_n = 0$ for all $n$, and to the standard normal $N(0,1)$ if $\theta_n$ satisfies $|\theta_n|/(n^{1/2}\mu_n^2) \to \infty$. Hence, if one, for example, allows as the parameter space for $\theta$ only the set $\Theta_n = \{\theta \in \mathbb{R} : \theta = 0 \text{ or } |\theta| > b_n\}$ where $b_n > 0$ satisfies $b_n/(n^{1/2}\mu_n^2) \to \infty$, then the convergence of $F_{A,n,\theta_n}$ to the limiting distributions $N(0,0)$ and $N(0,1)$, respectively, is uniform over $\Theta_n$, i.e., the ‘oracle’ property holds uniformly over $\Theta_n$. Does this line of reasoning restore the credibility of the ‘oracle’ property?
We do not think so for the following reasons: The choice of $\Theta_n$ as the parameter space is highly artificial, depends on sample size as well as on the tuning parameter (and hence on the estimation procedure). Furthermore, in case $\Theta_n$ is adopted as the parameter space, the ‘forbidden’ set $\mathbb{R} \setminus \Theta_n$ will always have a diameter that is of order larger than $n^{-1/2}$; in fact, it will always contain elements $\theta_n \neq 0$ such that $\theta_n$ would be correctly classified as non-zero with probability converging to one by the adaptive LASSO procedure used, i.e., $P_{n,\theta_n}(\hat\theta_A \neq 0) \to 1$ (to see this note that $\mathbb{R} \setminus \Theta_n$ contains elements $\theta_n$ satisfying $\theta_n/\mu_n \to \zeta$ with $|\zeta| > 1$ and use Proposition 1). This shows that adopting $\Theta_n$ as the parameter space rules out values of $\theta$ that are substantially different from zero, and not only values of $\theta$ that are difficult to statistically distinguish from zero; consequently the ‘forbidden’ set is sizable. Summarizing, there appears to be little reason why $\Theta_n$ would be a natural choice of parameter space, especially in the context of model selection where interest naturally focusses on the neighborhood of zero. We therefore believe that using $\Theta_n$ as the parameter space is hardly supported by statistical reasoning but is more reflective of a search for conditions that are favorable to the ‘oracle’ property.

As mentioned above, Theorem 5 shows, in particular, that $\hat\theta_A$ is not uniformly $n^{1/2}$-consistent. This prompts the question of the behavior of the distribution of $c_n(\hat\theta_A - \theta_n)$ under a sequence of norming constants $c_n$ that are $o(n^{1/2})$. Inspection of the proof of Theorem 5 reveals that the stochastic unboundedness phenomenon persists if $c_n$ is $o(n^{1/2})$ but is of order larger than $\mu_n^{-1}$. For $c_n = O(\mu_n^{-1})$, we always have stochastic boundedness by Theorem 2.
Hence, the uniform convergence rate of $\hat\theta_A$ is seen to be $\mu_n$, which is slower than $n^{-1/2}$. The precise limit distributions of the estimator under the scaling $c_n \sim \mu_n^{-1}$ are obtained in the next theorem. [The case $c_n = o(\mu_n^{-1})$ is trivial since then these limits are always pointmass at zero in view of Theorem 2 (see footnote 3).] A consequence of the next theorem is that with such a scaling the pointwise limiting distributions always degenerate to pointmass at zero. This points to something of a dilemma with the adaptive LASSO estimator when tuned to perform consistent model selection: If we scale the estimator by $\mu_n^{-1}$, i.e., by the ‘right’ uniform rate, the pointwise limiting distributions degenerate to pointmass at zero. If we scale the estimator by $n^{1/2}$, which is the ‘right’ pointwise rate (at least if $n^{1/4}\mu_n \to 0$), then we end up with stochastically unbounded sequences of distributions under a moving-parameter asymptotic framework (for certain sequences $\theta_n$).

Footnote 3: There is no loss in generality here in the sense that the general case where $c_n = O(\mu_n^{-1})$ holds can, by passing to subsequences, always be reduced to the cases where $c_n \sim \mu_n^{-1}$ or $c_n = o(\mu_n^{-1})$ holds.

Let $G_{A,n,\theta}$ stand for the finite-sample distribution of $\mu_n^{-1}(\hat\theta_A - \theta)$ under $P_{n,\theta}$. Clearly, $G_{A,n,\theta}(x) = F_{A,n,\theta}(n^{1/2}\mu_n x)$. The limits of this distribution under ‘moving parameter’ asymptotics are given in the subsequent theorem. It turns out that the limiting distributions are always pointmasses, however, not always located at zero.

Theorem 6 Assume that $\mu_n \to 0$, $n^{1/2}\mu_n \to \infty$, and that $\theta_n/\mu_n \to \zeta$ for some $\zeta \in \mathbb{R} \cup \{-\infty, \infty\}$.

1. If $|\zeta| < 1$, then $G_{A,n,\theta_n}$ converges weakly to the cdf $\mathbf{1}(\cdot \geq -\zeta)$.

2. If $1 \leq |\zeta| < \infty$, then $G_{A,n,\theta_n}$ converges weakly to the cdf $\mathbf{1}(\cdot \geq -1/\zeta)$.

3. If $|\zeta| = \infty$, then $G_{A,n,\theta_n}$ converges weakly to the cdf $\mathbf{1}(\cdot \geq 0)$.
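The pointmass limits in Theorem 6 can be illustrated numerically. The following is a minimal Monte Carlo sketch, not part of the original analysis. It assumes that $\bar y \sim N(\theta, 1/n)$ (i.e., $\sigma^2 = 1$), uses the representation $\hat\theta_A = \bar y - \mu_n^2/\bar y$ for $|\bar y| > \mu_n$ and $\hat\theta_A = 0$ otherwise (as in the proof of Theorem 2 in the Appendix), and takes $\mu_n = n^{-1/3}$ and $\theta_n = \zeta\mu_n$. The function names are hypothetical.

```python
import math
import random
import statistics


def adaptive_lasso(y_bar, mu):
    """One-parameter adaptive LASSO: y_bar - mu^2 / y_bar if |y_bar| > mu, else 0."""
    if abs(y_bar) <= mu:
        return 0.0
    return y_bar - mu ** 2 / y_bar


def simulate_scaled_errors(n, zeta, reps=2000, seed=0):
    """Draws of (theta_hat - theta_n) / mu_n with theta_n = zeta * mu_n.

    Assumptions (not taken from the text): mu_n = n^(-1/3) and
    y_bar ~ N(theta_n, 1/n), i.e. error variance equal to one.
    """
    rng = random.Random(seed)
    mu = n ** (-1.0 / 3.0)
    theta = zeta * mu
    sd = 1.0 / math.sqrt(n)
    return [
        (adaptive_lasso(rng.gauss(theta, sd), mu) - theta) / mu
        for _ in range(reps)
    ]


def theorem6_location(zeta):
    """Location of the limiting pointmass stated in Theorem 6."""
    if math.isinf(zeta):
        return 0.0
    if abs(zeta) < 1.0:
        return -zeta
    return -1.0 / zeta


if __name__ == "__main__":
    for zeta in (0.0, 0.5, 2.0, -4.0):
        draws = simulate_scaled_errors(n=10 ** 12, zeta=zeta)
        print(zeta, theorem6_location(zeta), statistics.median(draws))
```

For a very large sample size such as $n = 10^{12}$ (so that $n^{1/2}\mu_n = 100$), the simulated values cluster tightly around $-\zeta$ when $|\zeta| < 1$ and around $-1/\zeta$ when $|\zeta| \geq 1$.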
3.3.3 Some Remarks

Remark 7 Theorems 4 and 5 actually completely describe all accumulation points of the finite-sample distribution of $n^{1/2}(\hat\theta_A - \theta_n)$ without any condition on the sequence of parameters $\theta_n$. To see this, just apply the theorems to subsequences and note that by compactness of $\mathbb{R} \cup \{-\infty, \infty\}$ we can select from every subsequence a further subsequence such that the relevant quantities like $n^{1/2}\theta_n$, $\theta_n/\mu_n$, and $n^{1/2}\mu_n^2/\theta_n$ converge in $\mathbb{R} \cup \{-\infty, \infty\}$ along this further subsequence. A similar comment also applies to Theorem 6.

Remark 8 As a point of interest we note that the full complexity of the possible limiting distributions in Theorems 4, 5, and 6 already arises if we restrict the sequences $\theta_n$ to a bounded neighborhood of zero. Hence, the phenomena described by the above theorems are of a local nature, and are not tied in any way to the unboundedness of the parameter space.

Remark 9 In case the estimator is tuned to perform consistent model selection, it is mainly the behavior of $\theta_n/\mu_n$ that governs the form of the limiting distributions in Theorems 5 and 6. Note that $\theta_n/\mu_n$ is of smaller order than $n^{1/2}\theta_n$ because $n^{1/2}\mu_n \to \infty$ in the consistent case. Hence, an analysis relying only on the classical local asymptotics based on perturbations of $\theta$ of the order of $n^{-1/2}$ does not properly reveal all possible limits of the finite-sample distributions in that case. [This is in contrast to the conservative case, where classical local asymptotics reveal all possible limit distributions.]

Remark 10 The mathematical reason for the failure of the pointwise asymptotic distributions to capture the behavior of the finite-sample distributions well is that the convergence of the latter to the former is not uniform in the underlying parameter $\theta \in \mathbb{R}$.
See Leeb & Pötscher (2003, 2005) for more discussion in the context of post-model-selection estimators.

Remark 11 The theoretical analysis has been restricted to the case of orthogonal regressors. In the case of correlated regressors we can expect to see similar phenomena (e.g., non-normality of finite-sample cdfs, non-uniformity problems, etc.), although details will be different. Evidence for this is provided by the simulation study presented in Section 4, by corresponding theoretical results for a class of post-model-selection estimators (Leeb & Pötscher (2003, 2006a, 2008b)) in the correlated regressor case, as well as by general results on estimators possessing the sparsity property (Leeb & Pötscher (2008a), Pötscher (2007)).

3.4 Impossibility results for estimating the distribution of the adaptive LASSO

Since the cdf $F_{A,n,\theta}$ of $n^{1/2}(\hat\theta_A - \theta)$ depends on the unknown parameter, as shown in Section 3.3.1, one might be interested in estimating this cdf. We show that this is an intrinsically difficult estimation problem in the sense that the cdf cannot be estimated in a uniformly consistent fashion. In the following, we provide large-sample results that cover both consistent and conservative choices of the tuning parameter, as well as finite-sample results that hold for any choice of tuning parameter. For related results in different contexts see Leeb & Pötscher (2006a,b, 2008a), Pötscher (2006), Pötscher & Leeb (2007).

It is straightforward to construct consistent estimators for the distribution $F_{A,n,\theta}$ of the (centered and scaled) estimator $\hat\theta_A$. One popular choice is to use subsampling or the $m$ out of $n$ bootstrap with $m/n \to 0$. Another possibility is to use the pointwise large-sample limit distributions derived in Section 3.3.2 together with a properly chosen pre-test of the hypothesis $\theta = 0$ versus $\theta \neq 0$.
Because the pointwise large-sample limit distribution takes only two different functional forms depending on whether $\theta = 0$ or $\theta \neq 0$, one can perform a pre-test that rejects the hypothesis $\theta = 0$ in case $|\bar y| > n^{-1/4}$, say, and estimate the finite-sample distribution by that large-sample limit formula that corresponds to the outcome of the pre-test (see footnote 4); the test's critical value $n^{-1/4}$ ensures that the correct large-sample limit formula is selected with probability approaching one as sample size increases. However, as we show next, any consistent estimator of the cdf $F_{A,n,\theta}$ is necessarily badly behaved in a worst-case sense.

Footnote 4: In the conservative case, the asymptotic distribution can also depend on $m$, which is then to be replaced by $n^{1/2}\mu_n$.

Theorem 12 Let $\mu_n$ be a sequence of tuning parameters such that $\mu_n \to 0$ and $n^{1/2}\mu_n \to m$ with $0 \leq m \leq \infty$. Let $t \in \mathbb{R}$ be arbitrary. Then every consistent estimator $\hat F_n(t)$ of $F_{A,n,\theta}(t)$ satisfies

$$\lim_{n \to \infty} \sup_{|\theta| < c/n^{1/2}} P_{n,\theta}\left( \left| \hat F_n(t) - F_{A,n,\theta}(t) \right| > \varepsilon \right) = 1$$

for each $\varepsilon < (\Phi(t+m) - \Phi(t-m))/2$ and each $c > |t|$. In particular, no uniformly consistent estimator for $F_{A,n,\theta}(t)$ exists.

We stress that the above result also applies to any kind of bootstrap- or subsampling-based estimator of the cdf $F_{A,n,\theta}$ whatsoever, since the results in Leeb & Pötscher (2006b) on which the proof of Theorem 12 rests apply to arbitrary randomized estimators, cf. Lemma 3.6 in Leeb & Pötscher (2006b). The same applies to Theorems 13 and 14 that follow.

Loosely speaking, Theorem 12 states that any consistent estimator for the cdf $F_{A,n,\theta}$ suffers from an unavoidable worst-case error of at least $\varepsilon$ with $\varepsilon < (\Phi(t+m) - \Phi(t-m))/2$. The error range, i.e., $(\Phi(t+m) - \Phi(t-m))/2$, is governed by the limit $m = \lim_n n^{1/2}\mu_n$.
In case the estimator is tuned to be consistent, i.e., in case $m = \infty$, the error range equals $1/2$, and the phenomenon is most pronounced. If the estimator is tuned to be conservative so that $m < \infty$, the error range is less than $1/2$ but can still be substantial. Only in case $m = 0$ does the error range equal zero, and the condition $\varepsilon < (\Phi(t+m) - \Phi(t-m))/2$ in Theorem 12 then leads to a trivial conclusion. This is, however, not surprising as then the resulting estimator is uniformly asymptotically equivalent to the unrestricted maximum likelihood estimator $\bar y$, cf. Remark 3.

A similar non-uniformity phenomenon as described in Theorem 12 for consistent estimators $\hat F_n(t)$ also occurs for not necessarily consistent estimators. For such arbitrary estimators we find in the following that the phenomenon can be somewhat less pronounced, in the sense that the lower bound is now $1/2$ instead of $1$, cf. (13) below. The following theorem gives a large-sample limit result that parallels Theorem 12, as well as a finite-sample result, both for arbitrary (and not necessarily consistent) estimators of the cdf.

Theorem 13 Let $0 < \mu_n < \infty$ and let $t \in \mathbb{R}$ be arbitrary. Then every estimator $\hat F_n(t)$ of $F_{A,n,\theta}(t)$ satisfies

$$\sup_{|\theta| < c/n^{1/2}} P_{n,\theta}\left( \left| \hat F_n(t) - F_{A,n,\theta}(t) \right| > \varepsilon \right) \geq \frac{1}{2} \tag{12}$$

for each $\varepsilon < (\Phi(t + n^{1/2}\mu_n) - \Phi(t - n^{1/2}\mu_n))/2$, for each $c > |t|$, and for each fixed sample size $n$. If $\mu_n$ satisfies $\mu_n \to 0$ and $n^{1/2}\mu_n \to m$ as $n \to \infty$ with $0 \leq m \leq \infty$, we thus have

$$\liminf_{n \to \infty} \inf_{\hat F_n(t)} \sup_{|\theta| < c/n^{1/2}} P_{n,\theta}\left( \left| \hat F_n(t) - F_{A,n,\theta}(t) \right| > \varepsilon \right) \geq \frac{1}{2} \tag{13}$$

for each $\varepsilon < (\Phi(t+m) - \Phi(t-m))/2$ and for each $c > |t|$, where the infimum in (13) extends over all estimators $\hat F_n(t)$.
The finite-sample statement in Theorem 13 clearly reveals how the estimability of the cdf of the estimator depends on the tuning parameter $\mu_n$: A larger value of $\mu_n$, which results in a ‘more sparse’ estimator in view of (5), directly corresponds to a larger range $(\Phi(t + n^{1/2}\mu_n) - \Phi(t - n^{1/2}\mu_n))/2$ for the error $\varepsilon$ within which any estimator $\hat F_n(t)$ performs poorly in the sense of (12). In large samples, the limit $m = \lim_{n \to \infty} n^{1/2}\mu_n$ takes the role of $n^{1/2}\mu_n$.

An impossibility result paralleling Theorem 13 for the cdf $G_{A,n,\theta}(t)$ of $\mu_n^{-1}(\hat\theta_A - \theta)$ is given next.

Theorem 14 Let $0 < \mu_n < \infty$ and let $t \in \mathbb{R}$ be arbitrary. Then every estimator $\hat G_n(t)$ of $G_{A,n,\theta}(t)$ satisfies

$$\sup_{|\theta| < c\mu_n} P_{n,\theta}\left( \left| \hat G_n(t) - G_{A,n,\theta}(t) \right| > \varepsilon \right) \geq \frac{1}{2} \tag{14}$$

for each $\varepsilon < (\Phi(n^{1/2}\mu_n(t+1)) - \Phi(n^{1/2}\mu_n(t-1)))/2$, for each $c > |t|$, and for each fixed sample size $n$. If $\mu_n$ satisfies $\mu_n \to 0$ and $n^{1/2}\mu_n \to \infty$ as $n \to \infty$, we thus have for each $c > |t|$

$$\liminf_{n \to \infty} \inf_{\hat G_n(t)} \sup_{|\theta| < c\mu_n} P_{n,\theta}\left( \left| \hat G_n(t) - G_{A,n,\theta}(t) \right| > \varepsilon \right) \geq \frac{1}{2} \tag{15}$$

for each $\varepsilon < 1/2$ if $|t| < 1$ and for each $\varepsilon < 1/4$ if $|t| = 1$, where the infimum in (15) extends over all estimators $\hat G_n(t)$.

This result shows, in particular, that no uniformly consistent estimator exists for $G_{A,n,\theta}(t)$ in case $|t| \leq 1$ (not even over compact subsets of $\mathbb{R}$ containing the origin). In view of Theorem 6, we see that for $t > 1$ we have $\sup_{\theta \in \mathbb{R}} |G_{A,n,\theta}(t) - 1| \to 0$ as $n \to \infty$, hence $\hat G_n(t) = 1$ is trivially a uniformly consistent estimator in this case. Similarly, for $t < -1$ we have $\sup_{\theta \in \mathbb{R}} |G_{A,n,\theta}(t)| \to 0$ as $n \to \infty$, hence $\hat G_n(t) = 0$ is trivially a uniformly consistent estimator in this case.
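To get a feeling for the size of the error ranges appearing in Theorems 13 and 14, they can be evaluated directly. The short sketch below is only an illustration: the function names are hypothetical, and $\Phi$ is computed from the error function in the standard library.

```python
import math


def std_normal_cdf(x):
    """Standard normal cdf Phi(x), computed via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def error_range_F(t, n, mu):
    """Bound on epsilon in (12): (Phi(t + s) - Phi(t - s)) / 2 with s = n^(1/2) * mu."""
    s = math.sqrt(n) * mu
    return (std_normal_cdf(t + s) - std_normal_cdf(t - s)) / 2.0


def error_range_G(t, n, mu):
    """Bound on epsilon in (14): (Phi(s * (t + 1)) - Phi(s * (t - 1))) / 2."""
    s = math.sqrt(n) * mu
    return (std_normal_cdf(s * (t + 1.0)) - std_normal_cdf(s * (t - 1.0))) / 2.0


if __name__ == "__main__":
    n = 100
    for mu in (0.0, 0.05, n ** (-1.0 / 3.0), 1.0):
        print(mu, error_range_F(0.0, n, mu), error_range_G(0.5, n, mu))
```

As $n^{1/2}\mu_n$ grows, `error_range_F` approaches $1/2$ for every fixed $t$, whereas `error_range_G` approaches $1/2$ for $|t| < 1$, $1/4$ for $|t| = 1$, and $0$ for $|t| > 1$, in line with the ranges stated for (13) and (15).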
4 Some Monte Carlo Results

We provide simulation results for the finite-sample distribution of the adaptive LASSO estimator in the case of non-orthogonal regressors to complement our theoretical findings for the orthogonal case. We present our results by showing the marginal distribution for each component of the centered and scaled estimator. Not surprisingly, the graphs exhibit the same highly non-normal features as the corresponding finite-sample distribution of the estimator derived in Section 3.3 for the case of orthogonal regressors.

The simulations were carried out in the following way. We consider 1000 repetitions of $n$ simulated data points from the model (1) with $\sigma^2 = 1$ and $X$ such that $X'X = n\Omega$ with $\Omega_{ij} = 0.5^{|i-j|}$ for $i, j = 1, \ldots, k$. More concretely, $X$ was partitioned into $d = n/k$ blocks of size $k \times k$ (where $d$ is assumed to be integer) and each of these blocks was set equal to $k^{1/2}L$, with $LL' = \Omega$, the Cholesky factorization of $\Omega$. We used $k = 4$ regressors and various values of the true parameter $\theta$ given by $\theta = (3, 1.5, \gamma n^{-1/2}, \gamma n^{-1/2})'$ where $\gamma = 0, 1, 2$. This model with $\theta = (3, 1.5, 0, 0)'$ (i.e., $\gamma = 0$) is a downsized version of a model considered in Monte Carlo studies in Tibshirani (1996), Fan & Li (2001), and Zou (2006). For apparent reasons it is of interest to investigate the performance of the estimator not only at a single parameter value, but also at other (neighboring) points in the parameter space. The cases with $\gamma \neq 0$ represent the statistically interesting case where some components of the true parameter value are close to but not equal to zero.

For each simulation, we computed the adaptive LASSO estimator $\hat\theta_A$ using the LARS package of Efron et al. (2004) in R. Each component of the estimator was centered and scaled, i.e., $C_{jj}^{-1/2}(\hat\theta_{A,j} - \theta_j)$ was computed, where $C = (n\Omega)^{-1}$.
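The design construction and the scaling constants $C_{jj}^{-1/2}$ just described can be sketched as follows. This is an illustrative reconstruction, not the code used for the study (which was run in R). It assumes that $L$ is the lower-triangular Cholesky factor and sets each block to $k^{1/2}L'$, a reading chosen here so that $X'X = n\Omega$ holds exactly. All function names are hypothetical, and only the standard library is used.

```python
import math


def ar1_matrix(k, rho=0.5):
    """Omega with entries rho^|i-j|."""
    return [[rho ** abs(i - j) for j in range(k)] for i in range(k)]


def cholesky_lower(a):
    """Lower-triangular L with L L' = a (a symmetric positive definite)."""
    k = len(a)
    low = [[0.0] * k for _ in range(k)]
    for i in range(k):
        for j in range(i + 1):
            s = sum(low[i][m] * low[j][m] for m in range(j))
            if i == j:
                low[i][j] = math.sqrt(a[i][i] - s)
            else:
                low[i][j] = (a[i][j] - s) / low[j][j]
    return low


def design_matrix(n, k, rho=0.5):
    """Stack d = n/k copies of the block k^(1/2) * L' (assumed reading)."""
    if n % k != 0:
        raise ValueError("n must be a multiple of k")
    low = cholesky_lower(ar1_matrix(k, rho))
    root_k = math.sqrt(k)
    block = [[root_k * low[j][i] for j in range(k)] for i in range(k)]
    return [row[:] for _ in range(n // k) for row in block]


def gram(x):
    """Compute X'X."""
    k = len(x[0])
    return [[sum(r[a] * r[b] for r in x) for b in range(k)] for a in range(k)]


def inverse(a):
    """Matrix inverse by Gauss-Jordan elimination with partial pivoting."""
    k = len(a)
    aug = [list(map(float, a[i])) + [float(i == j) for j in range(k)]
           for i in range(k)]
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(aug[r][c]))
        aug[c], aug[p] = aug[p], aug[c]
        piv = aug[c][c]
        aug[c] = [v / piv for v in aug[c]]
        for r in range(k):
            if r != c:
                f = aug[r][c]
                aug[r] = [v - f * w for v, w in zip(aug[r], aug[c])]
    return [row[k:] for row in aug]


def scaling_constants(n, k, rho=0.5):
    """C_jj^(-1/2) for C = (X'X)^(-1) = (n * Omega)^(-1)."""
    c = inverse(gram(design_matrix(n, k, rho)))
    return [c[j][j] ** -0.5 for j in range(k)]


if __name__ == "__main__":
    print(scaling_constants(100, 4))
```

For $n = 100$ and $k = 4$, multiplying the resulting constants by the corresponding components of $\theta$ gives the normalized magnitudes of the true parameter that are referred to in the discussion of the simulation results.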
The tuning parameter $\mu_n$ was chosen in two different ways. In the first case, it was set to the fixed value of $\mu_n = n^{-1/3}$, a choice that corresponds to consistent model selection and additionally satisfies the condition $n^{1/4}\mu_n \to 0$ required in Zou (2006) to obtain the ‘oracle’ property. In the second case, in each simulation the tuning parameter was selected to minimize a mean-squared prediction error obtained through $K$-fold cross-validation (which can be computed using the LARS package, in our case with $K = 10$).

The results for both choices of the tuning parameters, for $n = 100$, and $\gamma = 0, 1, 2$ are shown in Figures 2-7 below. For each component of the estimator, the discrete component of the distribution corresponding to the zero values of the $j$-th component of the estimator $\hat\theta_{A,j}$ (appearing at $-C_{jj}^{-1/2}\theta_j$ for the centered and scaled estimator) is represented by a dot drawn at the height of the corresponding relative frequency. The histogram formed from the remaining values of $C_{jj}^{-1/2}(\hat\theta_{A,j} - \theta_j)$ was then smoothed by the kernel smoother available in R, resulting in the curves representing the density of the absolutely continuous part of the finite-sample distribution of $C_{jj}^{-1/2}(\hat\theta_{A,j} - \theta_j)$. Naturally, in these plots the density was rescaled by the appropriate relative frequency of the estimator not being equal to zero.

We first discuss the case where the tuning parameter is set at the fixed value $\mu_n = n^{-1/3}$. For $\gamma = 0$, i.e., the case where the last two components of the true parameter are identically zero, Figure 2 shows that the adaptive LASSO estimator finds the zero components in $\theta = (3, 1.5, 0, 0)'$ with probability close to one (i.e., the distributions of $C_{jj}^{-1/2}(\hat\theta_{A,j} - \theta_j)$, $j = 3, 4$, practically coincide with pointmass at 0).
Furthermore, the distributions of the first two components seem to somewhat resemble normality. The outcome in this case is hence roughly in line with what the ‘oracle’ property predicts. This is due to the fact that the components of $\theta$ are either zero or large (note that $C_{jj}^{-1/2}\theta_j$ is approximately equal to 26 and 12, respectively, for $j = 1, 2$). The results are quite different for the cases $\gamma = 1$ and $\gamma = 2$ (Figures 3 and 4), which represent the case where some of the components of the parameter vector $\theta$ are large and some are different from zero but small (note that $C_{33}^{-1/2}\theta_3 \approx 0.77\gamma$ and $C_{44}^{-1/2}\theta_4 \approx 0.87\gamma$). In both cases the distributions of $C_{jj}^{-1/2}(\hat\theta_{A,j} - \theta_j)$, $j = 3, 4$, are a mixture of an atomic part and an absolutely continuous part, both shifted to the left of the origin. Furthermore, the absolutely continuous part appears to be highly non-normal. This is perfectly in line with the theoretical results obtained in Section 3.3. It once again demonstrates that the ‘oracle’ property gives a misleading impression of the actual performance of the estimator.

Figure 2: Marginal distributions of the scaled and centered adaptive LASSO estimator for $n = 100$, $\gamma = 0$, i.e., $\theta = (3, 1.5, 0, 0)'$, and $\mu_n = n^{-1/3} = 0.22$. [Four panels: theta1, theta2, theta3, theta4; plots not reproduced.]

Figure 3: Marginal distributions of the scaled and centered adaptive LASSO estimator for $n = 100$, $\gamma = 1$, i.e., $\theta = (3, 1.5, 0.1, 0.1)'$, and $\mu_n = n^{-1/3} = 0.22$. [Four panels: theta1, theta2, theta3, theta4; plots not reproduced.]

Figure 4: Marginal distributions of the scaled and centered adaptive LASSO estimator for $n = 100$, $\gamma = 2$, i.e., $\theta = (3, 1.5, 0.2, 0.2)'$, and $\mu_n = n^{-1/3} = 0.22$. [Four panels: theta1, theta2, theta3, theta4; plots not reproduced.]

In the case where the tuning parameter is chosen by cross-validation, a similar picture emerges, except for the fact that in case $\gamma = 0$ the adaptive LASSO estimator now finds the zero components less frequently, cf. Figure 5. [In fact, the probability of finding a zero value of $\hat\theta_{A,j}$ for $j = 3, 4$ is smaller in the cross-validated case regardless of the value of $\gamma$ considered.] The reason for this is that the tuning parameters obtained through cross-validation were typically found to be smaller than $n^{-1/3}$, resulting in an estimator $\hat\theta_A$ that acts more like a conservative rather than a consistent model selection procedure. [This is in line with theoretical results in Leng et al. (2006), see also Leeb & Pötscher (2008b).] In agreement with the theoretical results in Section 3.3, the absolutely continuous components of the distributions of $C_{jj}^{-1/2}(\hat\theta_{A,j} - \theta_j)$ are now typically highly non-normal, especially for $j = 3, 4$, cf. Figures 5-7. [Note that cross-validation leads to a data-dependent tuning parameter $\mu_n$, a situation that is strictly speaking not covered by the theoretical results.]

We have also experimented with other values of $\theta$ such as $\theta = (3, 1.5, \gamma n^{-1/2}, 0)'$ or $\theta = (3, 1.5, 0, \gamma n^{-1/2})'$, other values of $\gamma$ and other sample sizes such as $n = 60$ or $200$. The results were found to be qualitatively the same.

5 Conclusion

We have studied the distribution of the adaptive LASSO estimator, a penalized least squares estimator introduced in Zou (2006), in finite samples as well as in the large-sample limit. The theoretical study assumes an orthogonal regression model.
The finite-sample distribution was found to be a mixture of a singular normal distribution and an absolutely continuous distribution, which is non-normal. The large-sample limit of the distributions depends on the choice of the estimator's tuning parameter, and we can distinguish two cases:

Figure 5: Marginal distributions of the scaled and centered adaptive LASSO estimator for $n = 100$, $\gamma = 0$, i.e., $\theta = (3, 1.5, 0, 0)'$, and $\mu_n$ chosen by cross-validation. [Four panels: theta1, theta2, theta3, theta4; plots not reproduced.]

Figure 6: Marginal distributions of the scaled and centered adaptive LASSO estimator for $n = 100$, $\gamma = 1$, i.e., $\theta = (3, 1.5, 0.1, 0.1)'$, and $\mu_n$ chosen by cross-validation. [Four panels: theta1, theta2, theta3, theta4; plots not reproduced.]

Figure 7: Marginal distributions of the scaled and centered adaptive LASSO estimator for $n = 100$, $\gamma = 2$, i.e., $\theta = (3, 1.5, 0.2, 0.2)'$, and $\mu_n$ chosen by cross-validation. [Four panels: theta1, theta2, theta3, theta4; plots not reproduced.]

In the first case the tuning is such that the estimator acts as a conservative model selector. In this case, the adaptive LASSO estimator is found to be uniformly $n^{1/2}$-consistent. We also show that fixed-parameter asymptotics (where the true parameter remains fixed while sample size increases) only partially reflect the actual behavior of the distribution, whereas ‘moving-parameter’ asymptotics (where the true parameter may depend on sample size) give a more accurate picture.
The moving-parameter analysis shows that the distribution may be highly non-normal irrespective of sample size, in particular, in the statistically interesting case where the true parameter is close (in an appropriate sense) to a lower-dimensional submodel. This also implies that the finite-sample phenomena that we have observed can occur at any sample size.

In the second case, where the estimator is tuned to perform consistent model selection, again fixed-parameter asymptotics do not capture the whole range of large-sample phenomena that can occur. With ‘moving parameter’ asymptotics, we have shown that the distribution of these estimators can again be highly non-normal, even in large samples. In addition, we have found that the observed finite-sample phenomena not only can persist but actually can be more pronounced for larger sample sizes. For example, the distribution of the estimator (properly centered and scaled by $n^{1/2}$) can diverge in the sense that all its mass escapes to either $+\infty$ or $-\infty$. In fact, we have established that the uniform convergence rate of the adaptive LASSO estimator is slower than $n^{-1/2}$ in the consistent model selection case. These findings are especially important as the adaptive LASSO estimator has been shown in Zou (2006) to possess an ‘oracle’ property (under an additional assumption on the tuning parameter), which promises a convergence rate of $n^{-1/2}$ and a normal distribution in large samples. However, the ‘oracle’ property is based on a fixed-parameter asymptotic argument which, as our results show, gives highly misleading results.

The findings mentioned above are based on a theoretical analysis (Section 3) of the adaptive LASSO estimator in an orthogonal linear regression model. The orthogonality restriction is removed in the Monte Carlo analysis in Section 4.
The results from this simulation study confirm the theoretical results.

Finally, we have studied the problem of estimating the cdf of the (centered and scaled) adaptive LASSO estimator. We have shown that this cdf cannot be estimated in a uniformly consistent fashion, even though pointwise consistent estimators can be constructed with relative ease.

We would like to stress that our results should not be read as a condemnation of the adaptive LASSO estimator, but as a warning that the distributional properties of this estimator are quite intricate and complex.

A Appendix

Proof of Theorem 2: Since (7) implies (6), it suffices to prove the former. For this, it is instructive to write $\hat\theta_A$ in terms of the hard-thresholding estimator $\hat\theta_H$ as defined in Pötscher & Leeb (2007) (with $\eta_n = \mu_n$) by observing that

$$\hat\theta_A = \hat\theta_H - \operatorname{sign}(\hat\theta_H)\,\mu_n^2/|\bar y|.$$

Here $\operatorname{sign}(x) = -1, 0, 1$ depending on whether $x < 0$, $x = 0$, $x > 0$. Since $\hat\theta_H$ satisfies (7) as is shown in Theorem 2 in Pötscher & Leeb (2007), it suffices to consider

$$\sup_{\theta \in \mathbb{R}} P_{n,\theta}\left(a_n|\hat\theta_H - \hat\theta_A| > M\right) = \sup_{\theta \in \mathbb{R}} P_{n,\theta}\left(a_n\mu_n^2/|\bar y| > M,\ \hat\theta_H \neq 0\right) = \sup_{\theta \in \mathbb{R}} P_{n,\theta}\left(a_n\mu_n^2/|\bar y| > M,\ |\bar y| > \mu_n\right) \leq \mathbf{1}(a_n\mu_n > M).$$

Since $a_n\mu_n \leq 1$, the right-hand side in the above expression equals zero for any $M > 1$.

Proposition 15 Let $\theta_n \in \mathbb{R}$ and $0 < \mu_n < \infty$. If $\theta_n/\mu_n \to -\infty$ and $n^{1/2}\theta_n \to -\infty$, then $z^{(1)}_{n,\theta_n}(x) - x \sim n^{1/2}\mu_n^2/\theta_n$ as $n \to \infty$ for every $x \in \mathbb{R}$. If $\theta_n/\mu_n \to \infty$ and $n^{1/2}\theta_n \to \infty$, then $z^{(2)}_{n,\theta_n}(x) - x \sim n^{1/2}\mu_n^2/\theta_n$ for every $x \in \mathbb{R}$.

Proof. We prove the first claim. We can write

$$z^{(1)}_{n,\theta_n}(x) - x = -(n^{1/2}\theta_n + x)/2 - \sqrt{\left((n^{1/2}\theta_n + x)/2\right)^2 + n\mu_n^2} = n^{1/2}\alpha_n(x)\left\{-1 + \sqrt{1 + (\mu_n/\alpha_n(x))^2}\right\}$$

with $n^{1/2}\alpha_n(x) = (n^{1/2}\theta_n + x)/2$, where the last equality holds for large $n$ since $n^{1/2}\alpha_n(x) < 0$ eventually.
Through an expansion of $\sqrt{1+z}$ about zero, we obtain

$$z^{(1)}_{n,\theta_n}(x) - x = n^{1/2}\left(\mu_n^2/\alpha_n(x)\right)(1 + \bar z_n)^{-1/2}/2 = \left(n^{1/2}\mu_n^2/\theta_n\right)\left(1 + x/(n^{1/2}\theta_n)\right)^{-1}(1 + \bar z_n)^{-1/2},$$

with $0 \leq \bar z_n \leq (\mu_n/\alpha_n(x))^2$. Note that $\mu_n/\alpha_n(x) = 2(\mu_n/\theta_n)\left(1 + x/(n^{1/2}\theta_n)\right)^{-1} \to 0$, and hence $\bar z_n \to 0$ holds. The claim now follows. The second claim is proved analogously.

Proof of Theorem 4: We derive the corresponding asymptotic distributions by studying the limit behavior of (9) with $\theta$ replaced by $\theta_n$. If $\nu \in \mathbb{R}$ the result immediately follows, since $F_{A,n,\theta_n}(x)$ converges to the limit given above for every $x \neq -\nu$ as a consequence of (8) and $n^{1/2}\theta_n \to \nu$. For the case $\nu = \infty$, note that the indicator function of the first term in (9) goes to 1 for every $x \in \mathbb{R}$, whereas the second one goes to 0. Furthermore, we clearly have $\theta_n/\mu_n \to \infty$ since $0 \leq m < \infty$ holds. Therefore we can apply Proposition 15 to find that $z^{(2)}_{n,\theta_n}(x) \to x$ since

$$n^{1/2}\mu_n^2/\theta_n = n^{1/2}\mu_n(\mu_n/\theta_n) \to m \cdot 0 = 0.$$

This implies that $F_{A,n,\theta_n}(x) \to \Phi(x)$ for all $x \in \mathbb{R}$ in case $\nu = \infty$. A similar argument can be made to prove the claim for $\nu = -\infty$.

Proof of Theorem 5: If $|\zeta| < 1$, Proposition 1 shows that the total mass of the atomic part (10) of the distribution $F_{A,n,\theta_n}$ goes to 1; furthermore, the location of the atomic part, i.e., $-n^{1/2}\theta_n$, then converges to $-\nu \in \mathbb{R}$ or to $\pm\infty$. This proves the theorem in case $|\zeta| < 1$. We prove the remaining cases by inspecting the limit behavior of (9), again with $\theta_n$ replacing $\theta$. To derive the limits for $1 \leq |\zeta| \leq \infty$, note that $n^{1/2}\theta_n \to \operatorname{sign}(\zeta)\infty$, so that by assessing the limit of the indicator functions in (9), it can easily be seen that $F_{A,n,\theta_n}(x)$ converges to the limit of $\Phi(z^{(2)}_{n,\theta_n}(x))$ for $\zeta > 0$ and to the limit of $\Phi(z^{(1)}_{n,\theta_n}(x))$ for $\zeta < 0$.
Elementary calculations show that $z^{(2)}_{n,\theta_n}(x) \to \infty$ for $1 \leq \zeta < \infty$ and that $z^{(1)}_{n,\theta_n}(x) \to -\infty$ for $-\infty < \zeta \leq -1$. As a consequence of Proposition 15, also $z^{(2)}_{n,\theta_n}(x) \to \infty$ if $\zeta = \infty$ and $n^{1/2}\mu_n^2/\theta_n \to \infty$; similarly, $z^{(1)}_{n,\theta_n}(x) \to -\infty$ if $\zeta = -\infty$ and $n^{1/2}\mu_n^2/\theta_n \to -\infty$. This then proves the remaining cases in part 2. Under the assumptions of part 3, an application of Proposition 15 gives that $z^{(2)}_{n,\theta_n}(x) \to x + r$ if $\zeta = \infty$ and that $z^{(1)}_{n,\theta_n}(x) \to x + r$ if $\zeta = -\infty$, which then proves part 3.

Proof of Theorem 6: To prove part 1, observe that Proposition 1 implies $\lim_{n \to \infty} P_{n,\theta_n}(\hat\theta_A = 0) = 1$ for $|\zeta| < 1$. This entails

$$\lim_{n \to \infty} P_{n,\theta_n}\left(\mu_n^{-1}(\hat\theta_A - \theta_n) \leq x\right) = \lim_{n \to \infty} P_{n,\theta_n}\left(\mu_n^{-1}(\hat\theta_A - \theta_n) \leq x,\ \hat\theta_A = 0\right) = \lim_{n \to \infty} \mathbf{1}(-\theta_n/\mu_n \leq x) = \mathbf{1}(x \geq -\zeta)$$

for $x \neq -\zeta$, which establishes part 1. Next, observe that

$$G_{A,n,\theta_n}(x) = \mathbf{1}(\theta_n/\mu_n + x \geq 0)\,\Phi(w^{(2)}_{n,\theta_n}(x)) + \mathbf{1}(\theta_n/\mu_n + x < 0)\,\Phi(w^{(1)}_{n,\theta_n}(x)) \tag{16}$$

where $w^{(1)}_{n,\theta_n}(x)$ and $w^{(2)}_{n,\theta_n}(x)$ with $w^{(1)}_{n,\theta_n}(x) \leq w^{(2)}_{n,\theta_n}(x)$ are given by

$$n^{1/2}\mu_n\left\{(-\theta_n/\mu_n + x) \pm \sqrt{(\theta_n/\mu_n + x)^2 + 4}\right\}/2. \tag{17}$$

Under the conditions of part 2, the first indicator function in (16) tends to 1 for $x > -\zeta$ and to 0 for $x < -\zeta$. Consequently, $G_{A,n,\theta_n}(x)$ converges to $\lim_{n \to \infty}\Phi(w^{(2)}_{n,\theta_n}(x))$ if $x > -\zeta$, and to $\lim_{n \to \infty}\Phi(w^{(1)}_{n,\theta_n}(x))$ if $x < -\zeta$ (provided the limits exist). Elementary calculations show that for $\zeta \geq 1$ we have $w^{(1)}_{n,\theta_n}(x) \to -\infty$ for all $x \in \mathbb{R}$, $w^{(2)}_{n,\theta_n}(x) \to -\infty$ for $x < -1/\zeta$, and $w^{(2)}_{n,\theta_n}(x) \to \infty$ for $x > -1/\zeta$. For $\zeta \leq -1$ we obtain $w^{(1)}_{n,\theta_n}(x) \to -\infty$ for $x < -1/\zeta$, $w^{(1)}_{n,\theta_n}(x) \to \infty$ for $x > -1/\zeta$, and $w^{(2)}_{n,\theta_n}(x) \to \infty$ for all $x \in \mathbb{R}$. Consequently, for $x \neq -\zeta$, we find $G_{A,n,\theta_n}(x) \to 0$ for $x < -1/\zeta$ and $G_{A,n,\theta_n}(x) \to 1$ for $x > -1/\zeta$. If $|\zeta| = 1$, the result in part 2 follows.
If $|\zeta| > 1$, convergence of $G_{A,n,\theta_n}(-\zeta)$ to the proper limit follows from monotonicity of $G_{A,n,\theta_n}$ and the fact that $x = -\zeta$ is a continuity point of the limit distribution. This then completes the proof of part 2. For part 3 we consider first the case $\zeta = \infty$. Clearly, $G_{A,n,\theta_n}(x)$ converges to $\lim_n \Phi(w^{(2)}_{n,\theta_n}(x))$. Since

$$w^{(2)}_{n,\theta_n}(x) = n^{1/2}\mu_n \left\{ (-\theta_n/\mu_n + x) + \sqrt{(\theta_n/\mu_n + x)^2 + 4} \right\} / 2$$

by (17), and because $\theta_n/\mu_n \to \infty$, it is easy to see that $w^{(2)}_{n,\theta_n}(x)$ converges to $\infty$ if $x > 0$ and to $-\infty$ if $x < 0$. The case where $\zeta = -\infty$ is proved analogously.

Proof of Theorem 12: Let $\theta_n(\delta)$ be shorthand for $-(t + \delta)/n^{1/2}$. Elementary calculations show that

$$\lim_{\delta \downarrow 0} \left[ F_{A,n,\theta_n(-\delta)}(t) - F_{A,n,\theta_n(\delta)}(t) \right] = \Phi(t + n^{1/2}\mu_n) - \Phi(t - n^{1/2}\mu_n). \qquad (18)$$

In particular, this implies that the supremum of $F_{A,n,\theta_n(-\delta)}(t) - F_{A,n,\theta_n(\delta)}(t)$ over $0 \le \delta < c - |t|$ is bounded from below by $\Phi(t + n^{1/2}\mu_n) - \Phi(t - n^{1/2}\mu_n)$. The rest of the argument then proceeds similarly to the proof of Theorem 13 in Pötscher & Leeb (2007).

Proof of Theorem 13: Analogous to the proof of Theorem 14 in Pötscher & Leeb (2007) except for using (18) in place of (11) in Pötscher & Leeb (2007).

Proof of Theorem 14: Analogous to the proof of Theorem 18 in Pötscher & Leeb (2007).

References

Bauer, P., Pötscher, B. M. & Hackl, P. (1988). Model selection by multiple test procedures. Statistics 19 39–44.

Breiman, L. (1995). Better subset regression using the nonnegative garotte. Technometrics 37 373–384.

Bunea, F. (2004). Consistent covariate selection and post model selection inference in semiparametric regression. Annals of Statistics 32 898–927.

Bunea, F. & McKeague, I. W. (2005). Covariate selection for semiparametric hazard function regression models. Journal of Multivariate Analysis 92 186–204.

Efron, B., Hastie, T., Johnstone, I. & Tibshirani, R. (2004). Least angle regression. Annals of Statistics 32 407–499.

Fan, J. & Li, R. (2001). Variable selection via nonconcave penalized likelihood and its oracle properties. Journal of the American Statistical Association 96 1348–1360.

Fan, J. & Li, R. (2002). Variable selection for Cox's proportional hazards model and frailty model. Annals of Statistics 30 74–99.

Fan, J. & Li, R. (2004). New estimation and model selection procedures for semiparametric modeling in longitudinal data analysis. Journal of the American Statistical Association 99 710–723.

Frank, I. E. & Friedman, J. H. (1993). A statistical view of some chemometrics regression tools (with discussion). Technometrics 35 109–148.

Johnson, B., Lin, D. & Zeng, D. (2008). Penalized estimating functions and variable selection in semiparametric regression models. Journal of the American Statistical Association 103 672–680.

Kabaila, P. (1995). The effect of model selection on confidence regions and prediction regions. Econometric Theory 11 537–549.

Knight, K. & Fu, W. (2000). Asymptotics of lasso-type estimators. Annals of Statistics 28 1356–1378.

Leeb, H. & Pötscher, B. M. (2003). The finite-sample distribution of post-model-selection estimators and uniform versus nonuniform approximations. Econometric Theory 19 100–142.

Leeb, H. & Pötscher, B. M. (2005). Model selection and inference: Facts and fiction. Econometric Theory 21 21–59.

Leeb, H. & Pötscher, B. M. (2006a). Can one estimate the conditional distribution of post-model-selection estimators? Annals of Statistics 34 2554–2591.

Leeb, H. & Pötscher, B. M. (2006b). Performance limits for estimators of the risk or distribution of shrinkage-type estimators, and some general lower risk-bound results. Econometric Theory 22 69–97. (Corrections: ibidem, 24, 581–583).

Leeb, H. & Pötscher, B. M. (2008a). Can one estimate the unconditional distribution of post-model-selection estimators? Econometric Theory 24 338–376.

Leeb, H. & Pötscher, B. M. (2008b). Sparse estimators and the oracle property, or the return of Hodges' estimator. Journal of Econometrics 142 201–211.

Leng, C., Lin, Y. & Wahba, G. (2006). A note on the lasso and related procedures in model selection. Statistica Sinica 16 1273–1284.

Li, R. & Liang, H. (2007). Variable selection in semiparametric regression modeling. Annals of Statistics 36 261–286.

Pötscher, B. M. (2006). The distribution of model averaging estimators and an impossibility result regarding its estimation. IMS Lecture Notes - Monograph Series 52 113–129.

Pötscher, B. M. (2007). Confidence sets based on sparse estimators are necessarily large. Manuscript ArXiv:0711.1036.

Pötscher, B. M. & Leeb, H. (2007). On the distribution of penalized maximum likelihood estimators: The LASSO, SCAD, and thresholding. Manuscript ArXiv:0711.0660.

Tibshirani, R. (1996). Regression shrinkage and selection via the lasso. Journal of the Royal Statistical Society Series B 58 267–288.

Wang, H. & Leng, C. (2007). Unified lasso estimation by least squares approximation. Journal of the American Statistical Association 102 1039–1048.

Wang, H., Li, G. & Jiang, G. (2007). Robust regression shrinkage and consistent variable selection through the LAD-lasso. Journal of Business and Economic Statistics 25 347–355.

Wang, H., Li, G. & Tsai, C. L. (2007). Regression coefficient and autoregressive order shrinkage and selection via the lasso. Journal of the Royal Statistical Society Series B 69 63–78.

Wang, H., Li, R. & Tsai, C. L. (2007). Tuning parameter selectors for the smoothly clipped absolute deviation method. Biometrika 94 553–568.

Yang, Y. (2005). Can the strengths of AIC and BIC be shared? A conflict between model indentification and regression estimation. Biometrika 92 937–950.

Yuan, M. & Lin, Y. (2007). Model selection and estimation in the gaussian graphical model. Biometrika 94 19–35.

Zhang, H. H. & Lu, W. (2007). Adaptive lasso for Cox's proportional hazards model. Biometrika 94 691–703.

Zou, H. (2006). The adaptive lasso and its oracle properties. Journal of the American Statistical Association 101 1418–1429.

Zou, H. & Li, R. (2008). One-step sparse estimates in nonconcave penalized likelihood models. Annals of Statistics 36 1509–1533.

Zou, H. & Yuan, M. (2008). Composite quantile regression and the oracle model selection theory. Annals of Statistics 36 1108–1126.
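
The limit in (18), used in the proof of Theorem 12, can be checked numerically. The following Python sketch is an illustration only and is not part of the original derivations. It assumes the orthogonal-design setting with error variance equal to 1, in which the adaptive LASSO estimator is taken to have the form $\hat\theta_A = \bar y\,(1 - \mu_n^2/\bar y^2)_+$ with $\bar y \sim N(\theta, 1/n)$. The function names `Phi`, `F_A`, and `jump_18`, as well as the parameter values, are hypothetical choices made for this example.

```python
import math


def Phi(z):
    """Standard normal cdf."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def F_A(t, n, mu, theta):
    """cdf of sqrt(n) * (theta_hat - theta) evaluated at t.

    Assumption (not taken from this section): theta_hat is
    ybar * max(0, 1 - mu^2 / ybar^2) with ybar ~ N(theta, 1/n).
    """
    rn = math.sqrt(n)
    u = theta + t / rn  # the event is {theta_hat <= u}
    root = math.sqrt(u * u + 4.0 * mu * mu)
    # theta_hat <= u  iff  ybar <= (u + root) / 2  when u >= 0,
    #                 iff  ybar <= (u - root) / 2  when u < 0.
    cut = 0.5 * (u + root) if u >= 0 else 0.5 * (u - root)
    return Phi(rn * (cut - theta))


def jump_18(t, n, mu, delta):
    """Left-hand side of (18) before taking the limit delta -> 0."""
    rn = math.sqrt(n)
    theta_minus = -(t - delta) / rn  # theta_n(-delta)
    theta_plus = -(t + delta) / rn   # theta_n(delta)
    return F_A(t, n, mu, theta_minus) - F_A(t, n, mu, theta_plus)


# Hypothetical values chosen only for illustration.
t, n, mu = 0.3, 50, 0.2
limit = Phi(t + math.sqrt(n) * mu) - Phi(t - math.sqrt(n) * mu)
for delta in (1e-1, 1e-3, 1e-6):
    print(delta, jump_18(t, n, mu, delta), limit)
```

As `delta` shrinks, the printed difference should approach the right-hand side of (18). The jump arises because the two parameter sequences place the evaluation point on opposite sides of the atom of $\hat\theta_A$ at zero.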
