Two-way source coding with a helper

Consider the two-way rate-distortion problem in which a helper sends a common limited-rate message to both users based on side information at its disposal. We characterize the region of achievable rates and distortions where a Markov form (Helper)-(U…

Authors: Haim Permuter, Yossi Steinberg, Tsachy Weissman

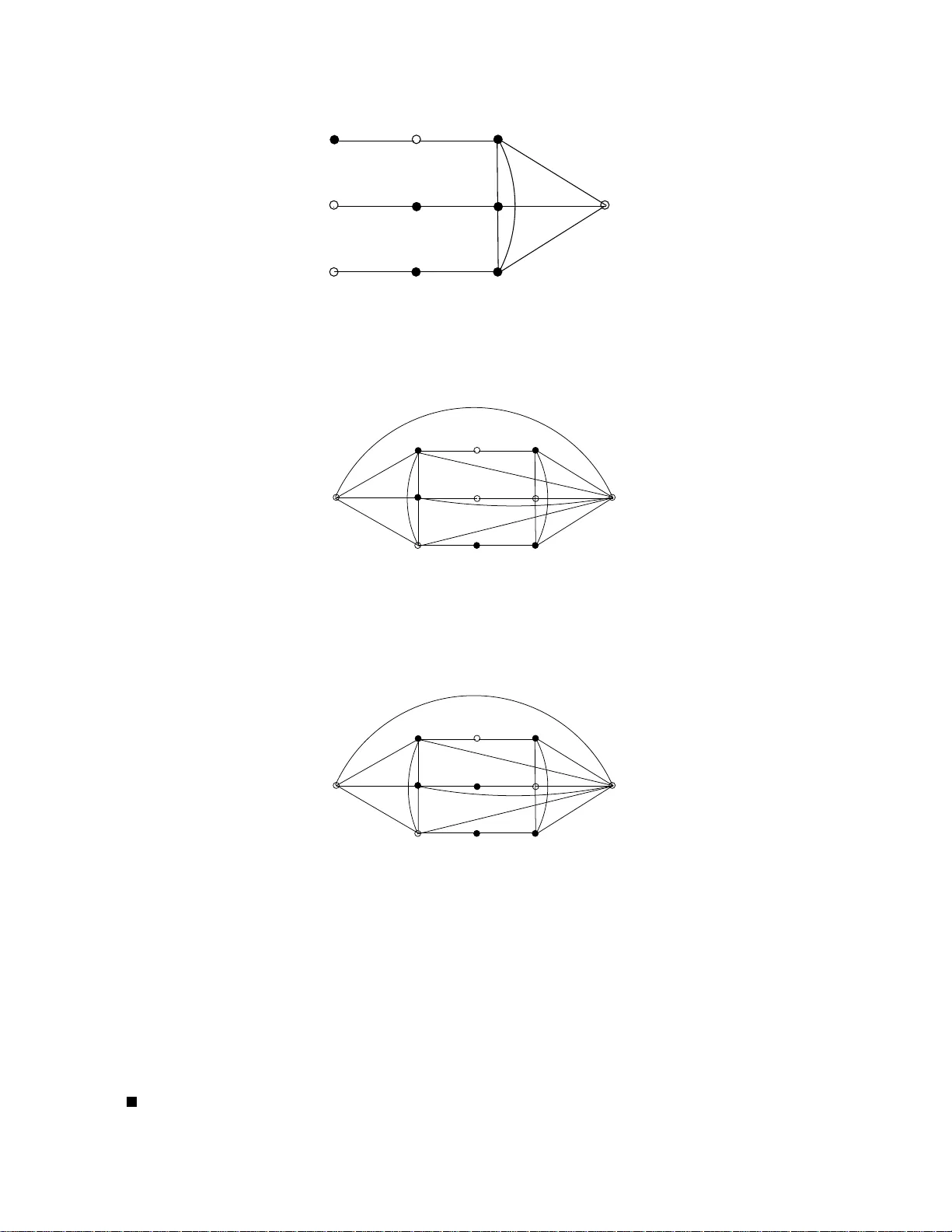

Abstract

Consider the two-way rate-distortion problem in which a helper sends a common limited-rate message to both users based on side information at its disposal. We characterize the region of achievable rates and distortions where a Markov form (Helper)-(User 1)-(User 2) holds. The main insight of the result is that in order to achieve the optimal rate, the helper may use a binning scheme, as in Wyner-Ziv, where the side information at the decoder is the "further" user, namely, User 2. We derive these regions explicitly for the Gaussian sources with square error distortion, analyze a trade-off between the rate from the helper and the rate from the source, and examine a special case where the helper has the freedom to send different messages, at different rates, to the encoder and the decoder. The converse proofs use a new technique for verifying Markov relations via undirected graphs.

Index Terms: Rate-distortion, two-way rate distortion, undirected graphs, verification of Markov relations, Wyner-Ziv source coding.

The work of H. Permuter and T. Weissman is supported by NSF grants 0729119 and 0546535. The work of Y. Steinberg is supported by the ISF (grant No. 280/07). Authors' emails: haimp@bgu.ac.il, ysteinbe@ee.technion.ac.il, and tsachy@stanford.edu

I. INTRODUCTION

In this paper, we consider the problem of two-way source encoding with a fidelity criterion in a situation where both users receive a common message from a helper. The problem is presented in Fig. 1. Note that the case in which the helper is absent was introduced and solved by Kaspi [1].

Fig. 1. The two-way rate distortion problem with a helper. First Helper Y sends a common message to User X and to User Z, then User Z sends a message to User X, and finally User X sends a message to User Z. The goal is that User X will reconstruct the sequence $Z^n$ within a fidelity criterion $E\left[\frac{1}{n}\sum_{i=1}^n d_z(Z_i,\hat Z_i)\right] \le D_z$, and User Z will reconstruct the source $X^n$ within a fidelity criterion $\frac{1}{n}E\left[\sum_{i=1}^n d_x(X_i,\hat X_i)\right] \le D_x$. We assume that the side information $Y$ and the two sources $X, Z$ are i.i.d. and form the Markov chain $Y - X - Z$.

The encoding and decoding are done in blocks of length $n$. The communication protocol is that Helper Y first sends a common message at rate $R_1$ to User X and to User Z, then User Z sends a message at rate $R_2$ to User X, and finally User X sends a message to User Z at rate $R_3$. Note that User Z sends its message after having received only one message, while User X sends its message after having received two messages. We assume that the sources and the helper sequences are i.i.d. and form the Markov chain $Y - X - Z$. User X receives two messages (one from the helper and one from User Z) and reconstructs the source $Z^n$. We assume that the fidelity (or distortion) is of the form $E\left[\frac{1}{n}\sum_{i=1}^n d_z(Z_i,\hat Z_i)\right]$ and that this term should be less than a threshold $D_z$. User Z also receives two messages (one from the helper and one from User X) and reconstructs the source $X^n$. The reconstruction $\hat X^n$ must lie within a fidelity criterion of the form $\frac{1}{n}E\left[\sum_{i=1}^n d_x(X_i,\hat X_i)\right] \le D_x$.

Our main result in this paper is that the achievable region for this problem is given by $\mathcal{R}(D_x,D_z)$, which is defined as the set of all rate triples $(R_1,R_2,R_3)$ that satisfy

$$R_1 \ge I(Y;U|Z), \tag{1}$$

$$R_2 \ge I(Z;V|U,X), \tag{2}$$

$$R_3 \ge I(X;W|U,V,Z), \tag{3}$$

for some joint distribution of the form

$$p(x,y,z,u,v,w) = p(x,y)\,p(z|x)\,p(u|y)\,p(v|u,z)\,p(w|u,v,x), \tag{4}$$

where $U$, $V$ and $W$ are auxiliary random variables with bounded cardinality.
The reconstruction variable $\hat Z$ is a deterministic function of the triple $(U,V,X)$, and the reconstruction $\hat X$ is a deterministic function of the triple $(U,W,Z)$ such that

$$E\,d_x(X,\hat X(U,W,Z)) \le D_x, \qquad E\,d_z(Z,\hat Z(U,V,X)) \le D_z. \tag{5}$$

The main insight gained from this region is that the helper may use a code based on binning that is designed for a decoder with side information, as in Wyner and Ziv [2]. User X and User Z do not have the same side information, but it is sufficient to design the helper's code assuming that the side information at the decoder is the one that is "further" in the Markov chain, namely, $Z$. Since a distribution of the form (4) implies that $I(U;Z) \le I(U;X)$, a Wyner-Ziv code at rate $R_1 \ge I(Y;U|Z)$ would be decoded successfully both by User Z and by User X. Once the helper's message has been decoded by both users, a two-way source coding is performed where both users have additional side information $U^n$.

Several papers on related problems have appeared in the literature. Wyner [3] studied a problem of network source coding with compressed side information that is provided only to the decoders. A special case of his model is the system in Fig. 1 but without the memoryless side information $Z$ and where the stream carrying the helper's message arrives only at the decoder (User Z). A full characterization of the achievable region can be concluded from the results of [3] for the special case where the source $X$ has to be reconstructed losslessly. This problem was solved independently by Ahlswede and Körner in [4], but the extension of these results to the case of lossy reconstruction of $X$ remains open. Kaspi [5] and Kaspi and Berger [6] derived an achievable region for a problem that contains the helper problem with degenerate $Z$ as a special case. However, the converse part does not match.
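Returning to the binning argument above: the claim that a helper code designed for side information $Z$ is also decodable by User X rests on $I(U;Z) \le I(U;X)$, which is the data-processing inequality applied to the chain $U - Y - X - Z$ implied by (4). The following is a minimal numerical sanity check and is not part of the original paper; the alphabet sizes, the randomly drawn pmfs, and all function names are illustrative assumptions. It also uses the identity $I(Y;U|Z) = I(U;Y) - I(U;Z)$, which holds whenever $U - Y - Z$ is a Markov chain.

```python
import itertools
import math
import random


def random_conditional(n_in, n_out, rng):
    """Random conditional pmf p(out | in), stored as rows[in][out]."""
    rows = []
    for _ in range(n_in):
        weights = [rng.random() + 1e-3 for _ in range(n_out)]
        total = sum(weights)
        rows.append([w / total for w in weights])
    return rows


def build_joint(rng, ny=3, nx=3, nz=3, nu=3):
    """Joint pmf p(x,y) p(z|x) p(u|y), keyed by (u, y, x, z).

    This factorization is the (x, y, z, u) part of form (4) and
    yields the Markov chain U - Y - X - Z.
    """
    weights = [[rng.random() + 1e-3 for _ in range(nx)] for _ in range(ny)]
    total = sum(sum(row) for row in weights)
    p_yx = [[w / total for w in row] for row in weights]
    p_z_given_x = random_conditional(nx, nz, rng)
    p_u_given_y = random_conditional(ny, nu, rng)
    joint = {}
    for u, y, x, z in itertools.product(
        range(nu), range(ny), range(nx), range(nz)
    ):
        joint[(u, y, x, z)] = p_yx[y][x] * p_z_given_x[x][z] * p_u_given_y[y][u]
    return joint


def pair_marginal(joint, i, j):
    """Marginal pmf of coordinates i and j of the joint keys."""
    out = {}
    for key, p in joint.items():
        pair = (key[i], key[j])
        out[pair] = out.get(pair, 0.0) + p
    return out


def mutual_information(pair_pmf):
    """I(A;B) in bits for a pmf given as {(a, b): probability}."""
    pa, pb = {}, {}
    for (a, b), p in pair_pmf.items():
        pa[a] = pa.get(a, 0.0) + p
        pb[b] = pb.get(b, 0.0) + p
    return sum(
        p * math.log2(p / (pa[a] * pb[b]))
        for (a, b), p in pair_pmf.items()
        if p > 0
    )


def helper_rates(joint):
    """Helper rates needed when the decoder side information is Z or X."""
    i_uy = mutual_information(pair_marginal(joint, 0, 1))
    i_ux = mutual_information(pair_marginal(joint, 0, 2))
    i_uz = mutual_information(pair_marginal(joint, 0, 3))
    return {
        "I(U;X)": i_ux,
        "I(U;Z)": i_uz,
        # Valid because U - Y - Z and U - Y - X are Markov chains.
        "I(Y;U|Z)": i_uy - i_uz,
        "I(Y;U|X)": i_uy - i_ux,
    }


if __name__ == "__main__":
    for name, value in helper_rates(build_joint(random.Random(0))).items():
        print(f"{name} = {value:.4f} bits")
```

In every draw, the rate $I(Y;U|Z)$ required by the "further" user is at least the rate $I(Y;U|X)$ required by User X, which is why a single helper code designed for $Z$ serves both users.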
In [7], Vasudevan and Perron described a general rate distortion problem with encoder breakdown and there they solved the case where in Fig. 1 one of the sources is a constant.¹ Berger and Yeung [9] solved the multi-terminal source coding problem where one of the two sources needs to be reconstructed perfectly and the other source needs to be reconstructed with a fidelity criterion. Oohama solved the multi-terminal source coding case for the two [10] and $L+1$ [11] Gaussian sources, in which only one source needs to be reconstructed with a mean square error, that is, the other $L$ sources are helpers. More recently, Wagner, Tavildar, and Viswanath characterized the region where both sources [12] or $L+1$ sources [13] need to be reconstructed at the decoder with a mean square error criterion.

In [1], Kaspi introduced a multistage communication between two users, where each user may transmit up to $K$ messages to the other user that depend on the source and previously received messages. In this paper we also consider the multi-stage source coding with a common helper. The case where a helper is absent and the communication between the users is via memoryless channels was recently solved by Maor and Merhav [14], where they showed that a source-channel separation theorem holds.

The remainder of the paper is organized as follows. In Section II we present a new technique for verifying Markov relations between random variables based on undirected graphs. The technique is used throughout the converse proofs. The problem definition and the achievable region for the two-way rate distortion problem with a common helper are presented in Section III. Then we consider two special cases: first, in Section IV, we consider the case of $R_2 = 0$ and $D_z = \infty$, and in Section V we consider $R_3 = 0$ and $D_x = \infty$.
The proofs of these two special cases provide the insight and the tricks that are used in the proof of the general two-way rate distortion problem with a helper. The proof of the achievable region for the two-way rate distortion problem with a helper is given in Section VI and it is extended to a multi-stage two-way rate distortion with a helper in Section VII. In Section VIII we consider the Gaussian instance of the problem and derive the region explicitly. In Section IX we return to the special case where $R_2 = 0$ and $D_z = \infty$, analyze the trade-off between the bits from the helper and bits from the source, and gain insight for the case where the helper sends different messages to each user, which is an open problem.

¹ The case where one of the sources is constant was also considered independently in [8].

II. PRELIMINARY: A TECHNIQUE FOR CHECKING MARKOV RELATIONS

Here we present a new technique, based on undirected graphs, that provides a sufficient condition for establishing a Markov chain from a joint distribution. We use this technique throughout the paper to verify Markov relations. A different technique using directed graphs was introduced by Pearl [15, Ch 1.2], [16].

Assume we have a set of random variables $(X_1, X_2, \ldots, X_N)$, where $N$ is the size of the set. Without loss of generality, we assume that the joint distribution has the form

$$p(x^N) = f(x_{\mathcal{S}_1})\, f(x_{\mathcal{S}_2}) \cdots f(x_{\mathcal{S}_K}), \tag{6}$$

where $X_{\mathcal{S}_i} = \{X_j\}_{j \in \mathcal{S}_i}$ and $\mathcal{S}_i$ is a subset of $\{1, 2, \ldots, N\}$. The following graphical technique provides a sufficient condition for the Markov relation $X_{\mathcal{G}_1} - X_{\mathcal{G}_2} - X_{\mathcal{G}_3}$, where $X_{\mathcal{G}_i}$, $i = 1, 2, 3$, denote three disjoint subsets of $X^N$.
The technique comprises two steps:
1) draw an undirected graph where all the random variables $X^N$ are nodes in the graph and for all $i = 1, 2, \ldots, K$ draw edges between all the nodes $X_{\mathcal{S}_i}$,
2) if all paths in the graph from a node in $X_{\mathcal{G}_1}$ to a node in $X_{\mathcal{G}_3}$ pass through a node in $X_{\mathcal{G}_2}$, then the Markov chain $X_{\mathcal{G}_1} - X_{\mathcal{G}_2} - X_{\mathcal{G}_3}$ holds.

Fig. 2. The undirected graph that corresponds to the joint distribution given in (7). The Markov form $X_1 - X_2 - Z_2$ holds since all paths from $X_1$ to $Z_2$ pass through $X_2$. The node with the open circle, i.e., ◦, is the middle term in the Markov chain and all the other nodes are with solid circles, i.e., •.

Example 1: Consider the joint distribution

$$p(x^2, y^2, z^2) = p(x_1, y_2)\, p(y_1, x_2)\, p(z_1 | x_1, x_2)\, p(z_2 | y_1). \tag{7}$$

Fig. 2 illustrates the above technique for verifying the Markov relation $X_1 - X_2 - Z_2$. We conclude that since all the paths from $X_1$ to $Z_2$ pass through $X_2$, the Markov chain $X_1 - X_2 - Z_2$ holds.

The proof of the technique is based on the observation that if three random variables $X, Y, Z$ have a joint distribution of the form $p(x,y,z) = f(x,y) f(y,z)$, then the Markov chain $X - Y - Z$ holds. The proof appears in Appendix A.

III. PROBLEM DEFINITIONS AND MAIN RESULTS

Here we formally define the two-way rate-distortion problem with a helper and present a single-letter characterization of the achievable region. We use the regular definitions of rate distortion and we follow the notation of [17]. The source sequences $\{X_i \in \mathcal{X}, i = 1, 2, \ldots\}$, $\{Z_i \in \mathcal{Z}, i = 1, 2, \ldots\}$ and the side information sequence $\{Y_i \in \mathcal{Y}, i = 1, 2, \ldots\}$ are discrete random variables drawn from finite alphabets $\mathcal{X}$, $\mathcal{Z}$ and $\mathcal{Y}$, respectively. The random variables $(X_i, Y_i, Z_i)$ are i.i.d. $\sim p(x,y,z)$.
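The two-step graphical test of Section II lends itself to a direct implementation. The following is a minimal sketch, not taken from the paper: step 1 builds the undirected graph from the scopes of the factors in (6), and step 2 runs a breadth-first search from $X_{\mathcal{G}_1}$ that is not allowed to enter $X_{\mathcal{G}_2}$. The function names `build_graph` and `is_separated` and the string labels for the variables are assumptions made for illustration. Because the technique gives only a sufficient condition, a `False` result does not show that the Markov chain fails.

```python
from collections import deque
from itertools import combinations


def build_graph(factors):
    """Step 1: connect every pair of variables that share a factor."""
    adjacency = {}
    for scope in factors:
        for var in scope:
            adjacency.setdefault(var, set())
        for a, b in combinations(scope, 2):
            adjacency[a].add(b)
            adjacency[b].add(a)
    return adjacency


def is_separated(factors, g1, g2, g3):
    """Step 2: True if every path from g1 to g3 passes through g2.

    g1, g2, g3 are assumed to be disjoint sets of variable labels.
    True is sufficient for the Markov chain g1 - g2 - g3.
    """
    adjacency = build_graph(factors)
    blocked, targets = set(g2), set(g3)
    queue = deque(g1)
    seen = set(g1)
    while queue:
        node = queue.popleft()
        if node in targets:
            return False  # found a path that avoids g2
        for neighbour in adjacency.get(node, ()):
            if neighbour not in seen and neighbour not in blocked:
                seen.add(neighbour)
                queue.append(neighbour)
    return True


# Scopes of the four factors in (7) of Example 1.
EXAMPLE_1_FACTORS = [
    ("x1", "y2"),
    ("y1", "x2"),
    ("z1", "x1", "x2"),
    ("z2", "y1"),
]

if __name__ == "__main__":
    print(is_separated(EXAMPLE_1_FACTORS, {"x1"}, {"x2"}, {"z2"}))
```

For Example 1 the search from $X_1$ reaches only $Y_2$ and $Z_1$ once $X_2$ is blocked, so the test confirms $X_1 - X_2 - Z_2$, in agreement with Fig. 2.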
Let $\hat{\mathcal{X}}$ and $\hat{\mathcal{Z}}$ be the reconstruction alphabets, and $d_x : \mathcal{X} \times \hat{\mathcal{X}} \to [0,\infty)$, $d_z : \mathcal{Z} \times \hat{\mathcal{Z}} \to [0,\infty)$ be single-letter distortion measures. Distortion between sequences is defined in the usual way

$$d(x^n, \hat x^n) = \frac{1}{n}\sum_{i=1}^n d(x_i, \hat x_i), \qquad d(z^n, \hat z^n) = \frac{1}{n}\sum_{i=1}^n d(z_i, \hat z_i). \tag{8}$$

Let $\mathcal{M}_i$ denote the set of positive integers $\{1, 2, \ldots, M_i\}$ for $i = 1, 2, 3$.

Definition 1: An $(n, M_1, M_2, M_3, D_x, D_z)$ code for two sources $X$ and $Z$ with helper $Y$ consists of three encoders

$$f_1 : \mathcal{Y}^n \to \mathcal{M}_1, \qquad f_2 : \mathcal{Z}^n \times \mathcal{M}_1 \to \mathcal{M}_2, \qquad f_3 : \mathcal{X}^n \times \mathcal{M}_1 \times \mathcal{M}_2 \to \mathcal{M}_3 \tag{9}$$

and two decoders

$$g_2 : \mathcal{X}^n \times \mathcal{M}_1 \times \mathcal{M}_2 \to \hat{\mathcal{Z}}^n, \qquad g_3 : \mathcal{Z}^n \times \mathcal{M}_1 \times \mathcal{M}_3 \to \hat{\mathcal{X}}^n \tag{10}$$

such that

$$E\left[\frac{1}{n}\sum_{i=1}^n d_x(X_i, \hat X_i)\right] \le D_x, \qquad E\left[\frac{1}{n}\sum_{i=1}^n d_z(Z_i, \hat Z_i)\right] \le D_z. \tag{11}$$

The rate triple $(R_1, R_2, R_3)$ of the $(n, M_1, M_2, M_3, D_x, D_z)$ code is defined by

$$R_i = \frac{1}{n}\log M_i; \quad i = 1, 2, 3. \tag{12}$$

Definition 2: Given a distortion pair $(D_x, D_z)$, a rate triple $(R_1, R_2, R_3)$ is said to be achievable if, for any $\epsilon > 0$ and sufficiently large $n$, there exists an $(n, 2^{nR_1}, 2^{nR_2}, 2^{nR_3}, D_x + \epsilon, D_z + \epsilon)$ code for the sources $X, Z$ with side information $Y$.

Definition 3: The (operational) achievable region $\mathcal{R}^O(D_x, D_z)$ of rate distortion with a helper known at the encoder and decoder is the closure of the set of all achievable rate triples.

The next theorem is the main result of this work.

Theorem 1: In the two-way rate distortion problem with a helper, as depicted in Fig. 1, where $Y - X - Z$,

$$\mathcal{R}^O(D_x, D_z) = \mathcal{R}(D_x, D_z), \tag{13}$$

where the region $\mathcal{R}(D_x, D_z)$ is specified in (1)-(5).

Furthermore, the region $\mathcal{R}(D_x, D_z)$ satisfies the following properties, which are proved in Appendix B.

Lemma 2:
1) The region $\mathcal{R}(D_x, D_z)$ is convex.
2) To exhaust $\mathcal{R}(D_x, D_z)$, it is enough to restrict the alphabets of $U$, $V$, and $W$ to satisfy

$$|\mathcal{U}| \le |\mathcal{Y}| + 4, \qquad |\mathcal{V}| \le |\mathcal{Z}||\mathcal{U}| + 3, \qquad |\mathcal{W}| \le |\mathcal{U}||\mathcal{V}||\mathcal{X}| + 1. \tag{14}$$

Before proving the main result (Theorem 1), we would like to consider two special cases, first where $R_2 = 0$ and $D_z = \infty$ and second where $R_3 = 0$ and $D_x = \infty$. The main techniques and insight are gained through those special cases. Both cases are depicted in Fig. 3, where in the first case we assume the Markov form $Y - X - Z$ and in the second case we assume the Markov form $Y - Z - X$.

The proofs of these two cases are quite different. In the achievability of the first case, we use a Wyner-Ziv code that is designed only for the decoder, and in the achievability of the second case we use a Wyner-Ziv code that is designed only for the encoder. In the converse for the first case, the main idea is to observe that the achievable region does not increase by letting the encoder know $Y$, and in the converse of the second case the main idea is to use the chain rule in two opposite directions, conditioning once on the past and once on the future.

Fig. 3. Wyner-Ziv problem with a helper. We consider two cases: in the first, the source X, Helper Y and the side information Z form the Markov chain $Y - X - Z$, and in the second they form the Markov chain $Y - Z - X$.

IV. WYNER-ZIV WITH A HELPER WHERE $Y - X - Z$

In this section, we consider the rate distortion problem with a helper and additional side information $Z$, known only to the decoder, as shown in Fig. 3. We also assume that the source $X$, the helper $Y$, and the side information $Z$ form the Markov chain $Y - X - Z$. This setting corresponds to the case where $R_2 = 0$ and $D_z = \infty$. Let us denote by $\mathcal{R}^O_{Y-X-Z}(D)$ the (operational) achievable region $\mathcal{R}^O(D_x = D, D_z = \infty)$.

We now present our main result of this section.
Let $\mathcal{R}_{Y-X-Z}(D)$ be the set of all rate pairs $(R, R_1)$ that satisfy

$$R_1 \ge I(U;Y|Z), \tag{15}$$

$$R \ge I(X;W|U,Z), \tag{16}$$

for some joint distribution of the form

$$p(x,y,z,u,w) = p(x,y)\,p(z|x)\,p(u|y)\,p(w|x,u), \tag{17}$$

$$E\,d_x(X, \hat X(U,W,Z)) \le D, \tag{18}$$

where $U$ and $W$ are auxiliary random variables, and the reconstruction variable $\hat X$ is a deterministic function of the triple $(U,W,Z)$. The next lemma states properties of $\mathcal{R}_{Y-X-Z}(D)$. It is the analog of Lemma 2 and the proof is omitted.

Lemma 3:
1) The region $\mathcal{R}_{Y-X-Z}(D)$ is convex.
2) To exhaust $\mathcal{R}_{Y-X-Z}(D)$, it is enough to restrict the alphabets of $U$ and $W$ to satisfy

$$|\mathcal{U}| \le |\mathcal{Y}| + 2, \qquad |\mathcal{W}| \le |\mathcal{X}|(|\mathcal{Y}| + 2) + 1. \tag{19}$$

Theorem 4: The achievable rate region for the setting illustrated in Fig. 3, where $X, Y, Z$ are i.i.d. random variables forming the Markov chain $Y - X - Z$, is

$$\mathcal{R}^O_{Y-X-Z}(D) = \mathcal{R}_{Y-X-Z}(D). \tag{20}$$

Let us define an additional region $\overline{\mathcal{R}}_{Y-X-Z}(D)$ the same as $\mathcal{R}_{Y-X-Z}(D)$ but with the term $p(w|x,u)$ in (17) replaced by $p(w|x,u,y)$, i.e.,

$$p(x,y,z,u,w) = p(x,y)\,p(z|x)\,p(u|y)\,p(w|x,u,y). \tag{21}$$

In the proof of Theorem 4, we show that $\mathcal{R}_{Y-X-Z}(D)$ is achievable and that $\overline{\mathcal{R}}_{Y-X-Z}(D)$ is an outer bound, and we conclude the proof by applying the following lemma, which states that the two regions are equal.

Lemma 5: $\overline{\mathcal{R}}_{Y-X-Z}(D) = \mathcal{R}_{Y-X-Z}(D)$.

Proof: Trivially we have $\overline{\mathcal{R}}_{Y-X-Z}(D) \supseteq \mathcal{R}_{Y-X-Z}(D)$. Now we prove that $\overline{\mathcal{R}}_{Y-X-Z}(D) \subseteq \mathcal{R}_{Y-X-Z}(D)$. Let $(R, R_1) \in \overline{\mathcal{R}}_{Y-X-Z}(D)$, and let

$$\bar p(x,y,z,u,w) = p(x,y)\,p(z|x)\,p(u|y)\,\bar p(w|x,u,y) \tag{22}$$

be a distribution that satisfies (15), (16) and (18). Now we show that there exists a distribution of the form (17) such that (15), (16) and (18) hold.
Let

$$p(x,y,z,u,w) = p(x,y,z)\,p(u|y)\,\bar p(w|x,u), \tag{23}$$

where $\bar p(w|x,u)$ is induced by the distribution $\bar p(x,y,z,u,w)$ of (22). We now show that the terms $I(U;Y|Z)$, $I(X;W|Z,U)$ and $E\,d(X, \hat X(U,W,Z))$ are the same whether we evaluate them by the joint distribution $p(x,y,z,u,w)$ of (23) or by $\bar p(x,y,z,u,w)$ of (22); hence $(R, R_1) \in \mathcal{R}_{Y-X-Z}(D)$. In order to show that the terms above are the same, it is enough to show that the marginal distributions $p(y,z,u)$ and $p(x,z,u,w)$ induced by $p(x,y,z,u,w)$ are equal to the marginal distributions $\bar p(y,z,u)$ and $\bar p(x,z,u,w)$ induced by $\bar p(x,y,z,u,w)$. Clearly $p(y,u,z) = \bar p(y,u,z)$. In the rest of the proof we show $p(x,z,u,w) = \bar p(x,z,u,w)$.

A distribution of the form $\bar p(x,y,z,u,w)$ as given in (22) implies that the Markov chain $W - (X,U) - Z$ holds, as shown in Fig. 4. Therefore $\bar p(w|x,u,z) = \bar p(w|x,u)$. Now consider $\bar p(x,z,u,w) = \bar p(x,z,u)\,\bar p(w|x,u)$; since $p(x,z,u) = \bar p(x,z,u)$ and $p(w|x,u) = \bar p(w|x,u)$, we conclude that $p(x,z,u,w) = \bar p(x,z,u,w)$.

Fig. 4. A graphical proof of the Markov chain $W - (X,U) - Z$. The undirected graph corresponds to the joint distribution given in (22), i.e., $p(x,y,z,u,w) = p(x,y)\,p(z|x)\,p(u|y)\,p(w|u,x,y)$. The Markov chain holds since there is no path from $Z$ to $W$ that does not pass through $(X,U)$.

Proof of Theorem 4:

Achievability: The proof follows classical arguments, and therefore the technical details will be omitted. We describe only the coding structure and the associated Markov conditions. Note that the condition (17) in the definition of $\mathcal{R}_{Y-X-Z}(D)$ implies the Markov chain $U - Y - X - Z$.
The helper (encoder of $Y$) employs Wyner-Ziv coding with decoder side information $Z$ and external random variable $U$, as seen from (15). The Markov conditions required for such coding, $U - Y - Z$, are satisfied, hence the source decoder, at the destination, can recover the codewords constructed from $U$. Moreover, since (17) implies $U - Y - X - Z$, the encoder of $X$ can also reconstruct $U$ (this is the point where the Markov assumption $Y - X - Z$ is needed). Therefore, in the coding/decoding scheme of $X$, $U$ serves as side information available at both sides. The source ($X$) encoder now employs Wyner-Ziv coding for $X$, with decoder side information $Z$, coding random variable $W$, and $U$ available at both sides. The Markov conditions needed for this scheme are $W - (X,U) - Z$, which again are satisfied by (17). The rate needed for this coding is $I(X;W|U,Z)$, reflected in the bound on $R$ in (16). Once the two codes (helper and source code) are decoded, the destination can use all the available random variables, $U$, $W$, and the side information $Z$, to construct $\hat X$.

Converse: Assume that we have an $(n, M_1 = 2^{nR_1}, M_2 = 1, M_3 = 2^{nR}, D_x = D, D_z = \infty)$ code as in Definition 1. We will show the existence of a triple $(U, W, \hat X)$ that satisfies (15)-(18). Denote $T_1 = f_1(Y^n) \in \{1, \ldots, 2^{nR_1}\}$ and $T = f_3(X^n, T_1) \in \{1, \ldots, 2^{nR}\}$. Then,

$$\begin{aligned}
nR_1 &\ge H(T_1) \ge H(T_1|Z^n) \ge I(Y^n;T_1|Z^n) \\
&= \sum_{i=1}^n \left[ H(Y_i|Z_i) - H(Y_i|Y^{i-1},T_1,Z^n) \right] \\
&\stackrel{(a)}{=} \sum_{i=1}^n \left[ H(Y_i|Z_i) - H(Y_i|X^{i-1},Y^{i-1},T_1,Z^n) \right] \\
&\ge \sum_{i=1}^n \left[ H(Y_i|Z_i) - H(Y_i|X^{i-1},T_1,Z^n) \right],
\end{aligned} \tag{24}$$

where equality (a) is due to the Markov form $Y_i - (Y^{i-1}, f_1(Y^n), Z^n) - X^{i-1}$.
Furthermore,

$$\begin{aligned}
nR &\ge H(T) \ge H(T|T_1,Z^n) \ge I(X^n;T|T_1,Z^n) \\
&= \sum_{i=1}^n \left[ H(X_i|T_1,Z^n,X^{i-1}) - H(X_i|T,T_1,Z^n,X^{i-1}) \right].
\end{aligned} \tag{25}$$

Now, let $W_i \triangleq T$ and $U_i \triangleq (X^{i-1}, Z^{n\setminus i}, T_1)$, where $Z^{n\setminus i}$ denotes the vector $Z^n$ without the $i$th element, i.e., $(Z^{i-1}, Z^n_{i+1})$. Then (24) and (25) become

$$R_1 \ge \frac{1}{n}\sum_{i=1}^n I(Y_i;U_i|Z_i), \qquad R \ge \frac{1}{n}\sum_{i=1}^n I(X_i;W_i|U_i,Z_i). \tag{26}$$

Now we observe that the Markov chain $U_i - Y_i - (X_i, Z_i)$ holds since we have $(X^{i-1}, Z^{n\setminus i}, T_1(Y^n)) - Y_i - (X_i, Z_i)$. Also the Markov chain $W_i - (U_i, X_i, Y_i) - Z_i$ holds since $T(T_1, X^n) - (X^i, Y_i, T_1(Y^n), Z^{n\setminus i}) - Z_i$. The reconstruction at time $i$, i.e., $\hat X_i$, is a deterministic function of $(Z^n, T, T_1)$, and in particular it is a deterministic function of $(U_i, W_i, Z_i)$.

Finally, let $Q$ be a random variable independent of $X^n, Y^n, Z^n$ and uniformly distributed over the set $\{1, 2, 3, \ldots, n\}$. Define the random variables $U \triangleq (Q, U_Q)$, $W \triangleq (Q, W_Q)$, and $\hat X \triangleq \hat X_Q$ ($\hat X_Q$ is a short notation for time sharing over the estimators). The Markov relations $U - Y - (X,Z)$ and $W - (X,U,Y) - Z$, the inequality $E\,d(X, \hat X) = \sum_{i=1}^n \frac{1}{n} E\,d(X_i, \hat X_i) \le D$, the fact that $\hat X$ is a deterministic function of $(U,W,Z)$, and the inequalities $R_1 \ge I(Y;U|Z)$ and $R \ge I(X;W|U,Z)$ (implied by (26)), imply that $(R, R_1) \in \overline{\mathcal{R}}_{Y-X-Z}(D)$, completing the proof by Lemma 5.

V. WYNER-ZIV WITH A HELPER WHERE $Y - Z - X$

Consider the rate-distortion problem with side information and helper as illustrated in Fig. 3, where the random variables $X, Y, Z$ form the Markov chain $Y - Z - X$. This setting corresponds to the case where $R_3 = 0$, with the roles of $X$ and $Z$ exchanged. Let us denote by $\mathcal{R}^O_{Y-Z-X}(D)$ the (operational) achievable region.
Let $\mathcal{R}_{Y-Z-X}(D)$ be the set of all rate pairs $(R, R_1)$ that satisfy

$$R_1 \ge I(U;Y|X), \tag{27}$$

$$R \ge I(X;V|U,Z), \tag{28}$$

for some joint distribution of the form

$$p(x,y,z,u,v) = p(z,y)\,p(x|z)\,p(u|y)\,p(v|x,u), \tag{29}$$

$$E\,d(X, \hat X(U,V,Z)) \le D, \tag{30}$$

where $U$ and $V$ are auxiliary random variables, and the reconstruction variable $\hat X$ is a deterministic function of the triple $(U,V,Z)$. The next lemma states properties of $\mathcal{R}_{Y-Z-X}(D)$. It is the analog of Lemma 2 and its proof is therefore omitted.

Lemma 6:
1) The region $\mathcal{R}_{Y-Z-X}(D)$ is convex.
2) To exhaust $\mathcal{R}_{Y-Z-X}(D)$, it is enough to restrict the alphabets of $V$ and $U$ to satisfy

$$|\mathcal{U}| \le |\mathcal{Y}| + 2, \qquad |\mathcal{V}| \le |\mathcal{X}|(|\mathcal{Y}| + 2) + 1. \tag{31}$$

Theorem 7: The achievable rate region for the setting illustrated in Fig. 3, where $X_i, Y_i, Z_i$ are i.i.d. triplets distributed according to the random variables $X, Y, Z$ forming the Markov chain $Y - Z - X$, is

$$\mathcal{R}^O_{Y-Z-X}(D) = \mathcal{R}_{Y-Z-X}(D). \tag{32}$$

Proof:

Achievability: The proof follows classical arguments, and therefore the technical details will be omitted. We describe only the coding structure and the associated Markov conditions. The helper (encoder of $Y$) employs Wyner-Ziv coding with decoder side information $X$ and external random variable $U$, as seen from (27). The Markov conditions required for such coding, $U - Y - X$, are satisfied, hence the source encoder can recover the codewords constructed from $U$. Moreover, since (29) implies $U - Y - Z - X$, the decoder, at the destination, can also reconstruct $U$. Therefore, in the coding/decoding scheme of $X$, $U$ serves as side information available at both sides. The source $X$ encoder now employs Wyner-Ziv coding for $X$, with decoder side information $Z$, coding random variable $V$, and $U$ available at both sides.
The Markov conditions needed for this scheme are $V - (X,U) - Z$, which again are satisfied by (29). The rate needed for this coding is $I(X;V|U,Z)$, reflected in the bound on $R$ in (28). Once the two codes (helper and source code) are decoded, the destination can use all the available random variables, $U$, $V$, and the side information $Z$, to construct $\hat X$.

Converse: Assume that we have a code for a source $X$ with helper $Y$ and side information $Z$ at rate $(R_1, R)$. We will show the existence of a triple $(U, V, \hat X)$ that satisfies (27)-(30). Denote $T_1(Y^n) \in \{1, \ldots, 2^{nR_1}\}$ and $T(X^n, T_1) \in \{1, \ldots, 2^{nR}\}$. Then,

$$\begin{aligned}
nR_1 &\ge H(T_1) \ge H(T_1|X^n) \ge I(Y^n;T_1|X^n) \\
&= \sum_{i=1}^n \left[ H(Y_i|X_i) - H(Y_i|Y^{i-1},T_1,X^n) \right] \\
&\stackrel{(a)}{=} \sum_{i=1}^n \left[ H(Y_i|X_i) - H(Y_i|Y^{i-1},T_1,X^n_{i+1},X_i) \right] \\
&\stackrel{(b)}{=} \sum_{i=1}^n \left[ H(Y_i|X_i) - H(Y_i|Y^{i-1},T_1,X^n_{i+1},X_i,Z^{i-1}) \right] \\
&\stackrel{(c)}{\ge} \sum_{i=1}^n \left[ H(Y_i|X_i) - H(Y_i|T_1,X^n_{i+1},X_i,Z^{i-1}) \right],
\end{aligned} \tag{33}$$

where (a) and (b) follow from the Markov chain $Y_i - (Y^{i-1}, T_1(Y^n), X^n_i) - (X^{i-1}, Z^{i-1})$ (see Fig. 5 for the proof), and (c) follows from the fact that conditioning reduces entropy.

Fig. 5. A graphical proof of the Markov chain $Y_i - (Y^{i-1}, T_1(Y^n), X^n_i) - (X^{i-1}, Z^{i-1})$. The undirected graph corresponds to the joint distribution $p(x^{i-1}, z^{i-1})\,p(y^{i-1}|z^{i-1})\,p(x_i, z_i)\,p(y_i|z_i)\,p(x^n_{i+1}, z^n_{i+1})\,p(y^n_{i+1}|z^n_{i+1})\,p(t_1|y^n)$. The Markov chain holds since all paths from $Y_i$ to $(X^{i-1}, Z^{i-1})$ pass through $(Y^{i-1}, T_1(Y^n), X^n_i)$. The nodes with the open circle, i.e., ◦, constitute the middle term in the Markov chain, i.e., $(Y^{i-1}, T_1(Y^n), X^n_i)$, and all the other nodes are with solid circles, i.e., •. The nodes $Y^{i-1}$, $Y_i$, $Y^n_{i+1}$ and $T_1$ are connected due to the term $p(t_1|y^n)$.

Consider,

$$\begin{aligned}
nR &\ge H(T) \ge H(T|T_1,Z^n) \ge I(X^n;T|T_1,Z^n) \\
&= \sum_{i=1}^n \left[ H(X_i|X^n_{i+1},T_1,Z^n) - H(X_i|X^n_{i+1},T_1,Z^n,T) \right] \\
&\stackrel{(a)}{=} \sum_{i=1}^n \left[ H(X_i|X^n_{i+1},T_1,Z^{i-1},Z_i) - H(X_i|X^n_{i+1},T_1,Z^n,T) \right] \\
&\stackrel{(b)}{\ge} \sum_{i=1}^n \left[ H(X_i|X^n_{i+1},T_1,Z^{i-1},Z_i) - H(X_i|X^n_{i+1},T_1,Z^{i-1},Z_i,T) \right],
\end{aligned} \tag{34}$$

where (a) is due to the Markov chain $X_i - (X^n_{i+1}, T_1(Y^n), Z^i) - Z^n_{i+1}$ (this can be seen from Fig. 5 since all paths from $X_i$ to $Z^n_{i+1}$ go through $Z_i$), and (b) is due to the fact that conditioning reduces entropy.

Now let us denote $U_i \triangleq (Z^{i-1}, T_1(Y^n), X^n_{i+1})$ and $V_i \triangleq T(X^n, T_1)$. The Markov chains $U_i - Y_i - (X_i, Z_i)$ and $V_i - (X_i, U_i) - (Z_i, Y_i)$ hold (see Fig. 6 for the proof of the last Markov relation). Next, we need to show that there exists a sequence of functions $\hat X_i(U_i, V_i, Z_i)$ such that

$$\frac{1}{n}\sum_{i=1}^n E\left[d(X_i, \hat X_i(U_i, V_i, Z_i))\right] \le D. \tag{35}$$

Fig. 6. A graphical proof of the Markov chain $X^{i-1} - (Z^{i-1}, T_1(Y^n), X^n_i) - (Z_i, Y_i)$, which implies $V_i - (X_i, U_i) - (Z_i, Y_i)$. The undirected graph corresponds to the joint distribution $p(x^{i-1}, z^{i-1})\,p(y^{i-1}|z^{i-1})\,p(x_i, z_i)\,p(y_i|z_i)\,p(x^n_{i+1}, z^n_{i+1})\,p(y^n_{i+1}|z^n_{i+1})\,p(t_1|y^n)$. The Markov chain holds since all paths from $X^{i-1}$ to $(Z_i, Y_i)$ pass through $(Z^{i-1}, T_1(Y^n), X^n_i)$.
By assumption we know that there exists a sequence of functions $\hat X_i(T, T_1, Z^n)$ such that $\sum_{i=1}^n E[d(X_i, \hat X_i(T, T_1, Z^n))] \le nD$, and trivially this implies that there exists a sequence of functions $\hat X_i(X^n_{i+1}, T, T_1, Z^n)$ such that

$$\sum_{i=1}^n E\left[d(X_i, \hat X_i(X^n_{i+1}, T, T_1, Z^i, Z^n_{i+1}))\right] \le nD. \tag{36}$$

Note that the Markov chain $X_i - (X^n_{i+1}, T_1, Z^i, T) - Z^n_{i+1}$ holds (see Fig. 7 for the proof). Therefore, replacing $Z^n_{i+1}$ by a suitable function $\tilde f$ of the form $\tilde f(X^n_{i+1}, T_1, Z^i, T)$ does not increase the distortion, i.e.,

$$\sum_{i=1}^n E\left[d(X_i, \hat X_i(X^n_{i+1}, T, T_1, Z^i, Z^n_{i+1}))\right] \ge \min_{\tilde f} \sum_{i=1}^n E\left[d(X_i, \hat X_i(X^n_{i+1}, T, T_1, Z^i, \tilde f(X^n_{i+1}, T_1, Z^i, T)))\right], \tag{37}$$

and since each summand on the RHS of (37) includes only the random variables $(X^n_{i+1}, T, T_1, Z^i)$, we conclude that there exists a sequence of functions $\{\hat X_i(X^n_{i+1}, T, T_1, Z^i)\}$ for which (35) holds.

Fig. 7. A graphical proof of the Markov chain $X_i - (X^n_{i+1}, T_1, Z^i, T) - Z^n_{i+1}$. The undirected graph corresponds to the joint distribution $p(x^{i-1}, z^{i-1})\,p(y^{i-1}|z^{i-1})\,p(x_i, z_i)\,p(y_i|z_i)\,p(x^n_{i+1}, z^n_{i+1})\,p(y^n_{i+1}|z^n_{i+1})\,p(t_1|y^n)\,p(t|x^n, t_1)$. The Markov chain holds since all paths from $X_i$ to $Z^n_{i+1}$ pass through $(X^n_{i+1}, T_1, Z^i, T)$.

Finally, let $Q$ be a random variable independent of $X^n, Y^n, Z^n$ and uniformly distributed over the set $\{1, 2, 3, \ldots, n\}$. Define the random variables $U \triangleq (Q, U_Q)$, $V \triangleq (Q, V_Q)$, and $\hat X \triangleq \hat X_Q$ ($\hat X_Q$ is a short notation for time sharing over the estimators). Then (33)-(35) imply that (27)-(30) hold.

VI. PROOF OF THEOREM 1

In this section we prove Theorem 1, which states that the (operational) achievable region $\mathcal{R}^O(D_x, D_z)$ of the two-way source coding with helper problem as in Fig. 1 equals $\mathcal{R}(D_x, D_z)$. In the converse proof we use the ideas used in proving the converses of Theorems 4 and 7. Namely, we will use the chain rule based on the past and future, and will show that $\mathcal{R}^O(D_x, D_z) \subseteq \overline{\mathcal{R}}(D_x, D_z)$, where $\overline{\mathcal{R}}(D_x, D_z)$ is defined as $\mathcal{R}(D_x, D_z)$ in (1)-(5) but with one difference: the term $p(w|u,v,x)$ in (4) should be replaced by $p(w|u,v,x,y)$, i.e.,

$$p(x,y,z,u,v,w) = p(x,y)\,p(z|x)\,p(u|y)\,p(v|u,z)\,p(w|u,v,x,y). \tag{38}$$

The following lemma states that the two regions $\mathcal{R}(D_x, D_z)$ and $\overline{\mathcal{R}}(D_x, D_z)$ are equal.

Lemma 8: $\overline{\mathcal{R}}(D_x, D_z) = \mathcal{R}(D_x, D_z)$.

Proof: Trivially we have $\overline{\mathcal{R}}(D_x, D_z) \supseteq \mathcal{R}(D_x, D_z)$. Now we prove that $\overline{\mathcal{R}}(D_x, D_z) \subseteq \mathcal{R}(D_x, D_z)$. Let $(R_1, R_2, R_3) \in \overline{\mathcal{R}}(D_x, D_z)$, and let

$$\bar p(x,y,z,u,v,w) = p(x,y)\,p(z|x)\,p(u|y)\,p(v|u,z)\,\bar p(w|u,v,x,y) \tag{39}$$

be a distribution that satisfies (1)-(3) and (5). Next we show that there exists a distribution of the form of (4) (which is explicitly given in (40)) such that (1)-(3) and (5) hold. Let

$$p(x,y,z,u,v,w) = p(x,y)\,p(z|x)\,p(u|y)\,p(v|u,z)\,\bar p(w|u,v,x), \tag{40}$$

where $\bar p(w|u,v,x)$ is induced by $\bar p(x,y,z,u,v,w)$. We show that all the terms in (1)-(3) and (5), i.e., $I(Y;U|Z)$, $I(Z;V|U,X)$, $E\,d_z(Z, \hat Z(U,V,X))$, $I(X;W|U,V,Z)$, and $E\,d_x(X, \hat X(U,W,Z))$, are the same whether we evaluate them by the joint distribution $p(x,y,z,u,v,w)$ of (40) or by $\bar p(x,y,z,u,v,w)$ of (39); hence $(R_1, R_2, R_3) \in \mathcal{R}(D_x, D_z)$.
In order to show that the terms above are the same, it is enough to show that the marginal distributions $p(x, y, z, u, v)$ and $p(x, z, u, v, w)$ induced by $p(x, y, z, u, v, w)$ are equal to the marginal distributions $\bar{p}(x, y, z, u, v)$ and $\bar{p}(x, z, u, v, w)$ induced by $\bar{p}(x, y, z, u, v, w)$. Clearly $p(x, y, z, u, v) = \bar{p}(x, y, z, u, v)$. In the rest of the proof we show that $p(x, z, u, v, w) = \bar{p}(x, z, u, v, w)$.

Fig. 8. A graphical proof of the Markov chain $W - (X, U, V) - Z$. The undirected graph corresponds to the joint distribution given in (39), i.e., $\bar{p}(x, y, z, u, v, w) = p(x, y)\,p(z|x)\,p(u|y)\,p(v|u, z)\,p(w|u, v, x, y)$. The Markov chain holds since there is no path from $Z$ to $W$ that does not pass through $(X, U, V)$.

A distribution of the form $\bar{p}(x, y, z, u, v, w)$ as given in (39) implies that the Markov chain $W - (X, U, V) - Z$ holds (see Fig. 8 for the proof). Therefore $\bar{p}(w|u, x, v, z) = \bar{p}(w|u, x, v)$. Since $p(x, z, u, v, w) = p(x, z, v, u)\,p(w|x, u, v)$, and since $p(x, z, v, u) = \bar{p}(x, z, v, u)$ and $p(w|x, u, v) = \bar{p}(w|x, u, v) = \bar{p}(w|x, u, v, z)$, we conclude that $p(x, z, u, v, w) = \bar{p}(x, z, u, v, w)$.

Proof of Theorem 1:

Achievability: The achievability scheme is based on the fact that for the two special cases considered above, namely $R_2 = 0$ and $R_3 = 0$, the coding scheme for the helper was based on a Wyner-Ziv scheme, where the side information at the decoder is the random variable that is "further" in the Markov chain $Y - X - Z$, namely $Z$. The helper (encoder of $Y$) employs Wyner-Ziv coding with decoder side information $Z$ and external random variable $U$, as seen from (1), i.e., $R_1 \ge I(Y; U|Z)$.
The Markov condition required for such coding, $U - Y - Z$, is satisfied, hence the source decoder, at the destination, can recover the codewords constructed from $U$. Moreover, since (4) implies $U - Y - X - Z$, the encoder of $X$ can also reconstruct $U$. Therefore in the coding/decoding scheme of $X$, $U$ serves as side information available at both sides. The source $Z$ encoder now employs Wyner-Ziv coding for $Z$, with decoder side information $X$, coding random variable $V$, and $U$ available at both sides. The Markov condition needed for this scheme is $V - (Z, U) - X$, which again is satisfied by (4). The rate needed for this coding is $I(Z; V|U, X)$, reflected in the bound on $R_2$ in (2). Finally, the source $X$ encoder employs Wyner-Ziv coding for $X$, with decoder side information $Z$, coding random variable $W$, and $U, V$ available at both sides. The Markov condition needed for this scheme is $W - (X, U, V) - Z$, which again is satisfied by (4). The rate needed for this coding is $I(X; W|U, V, Z)$, reflected in the bound on $R_3$ in (3). Once the codes are decoded, each destination can use all the available random variables, $(U, V, X)$ at User X and $(U, W, Z)$ at User Z, to construct $\hat{Z}$ and $\hat{X}$, respectively.

Converse: Assume that we have an $(n, M_1, M_2, M_3, D_x, D_z)$ code. We now show the existence of a tuple $(U, V, W, \hat{X}, \hat{Z})$ that satisfies (1)-(5). Denote $T_1 = f_1(Y^n)$, $T_2 = f_2(Z^n, T_1)$, and $T_3 = f_3(X^n, T_2, T_1)$. Then, using the same arguments as in (33) and (34) (with the roles of $X$ and $Z$ exchanged), we obtain

$$nR_1 \ge \sum_{i=1}^{n} H(Y_i|Z_i) - H(Y_i|X^{i-1}, T_1, Z_i^n), \tag{41}$$

$$nR_2 \ge \sum_{i=1}^{n} H(Z_i|Z_{i+1}^n, T_1, X^{i-1}, X_i) - H(Z_i|Z_{i+1}^n, T_1, X^{i-1}, X_i, T_2), \tag{42}$$

respectively.
For lower-bounding $R_3$, consider

$$\begin{aligned}
nR_3 &\ge H(T_3) \\
&\ge H(T_3|T_1, T_2, Z^n) \\
&\ge I(X^n; T_3|T_1, T_2, Z^n) \\
&= \sum_{i=1}^{n} H(X_i|X^{i-1}, Z^n, T_1, T_2) - H(X_i|X^{i-1}, Z^n, T_1, T_2, T_3) \\
&\overset{(a)}{=} \sum_{i=1}^{n} H(X_i|X^{i-1}, Z_i^n, T_1, T_2) - H(X_i|X^{i-1}, Z^n, T_1, T_2, T_3) \\
&\ge \sum_{i=1}^{n} H(X_i|X^{i-1}, Z_i^n, T_1, T_2) - H(X_i|X^{i-1}, Z_i^n, T_1, T_2, T_3),
\end{aligned} \tag{43}$$

where equality (a) is due to the Markov chain $X_i - (X^{i-1}, Z_i^n, T_1, T_2) - Z^{i-1}$ (see Fig. 9).

Fig. 9. A graphical proof of the Markov chain $X_i - (X^{i-1}, Z_i^n, T_1, T_2) - Z^{i-1}$. The undirected graph corresponds to the joint distribution $p(x^{i-1}, z^{i-1})\,p(y^{i-1}|x^{i-1})\,p(x_i, z_i)\,p(y_i|x_i)\,p(x_{i+1}^n, z_{i+1}^n)\,p(y_{i+1}^n|x_{i+1}^n)\,p(t_1|y^n)\,p(t_2|z^n, t_1)$. The Markov chain holds since all paths from $Z^{i-1}$ to $X_i$ pass through $(X^{i-1}, Z_i^n, T_1, T_2)$.

Now let us denote $U_i \triangleq (X^{i-1}, T_1, Z_{i+1}^n)$, $V_i \triangleq T_2$, and $W_i \triangleq T_3$. We obtain from (41)-(43)

$$R_1 \ge \frac{1}{n}\sum_{i=1}^{n} I(Y_i; U_i|Z_i), \qquad R_2 \ge \frac{1}{n}\sum_{i=1}^{n} I(Z_i; V_i|U_i, X_i), \qquad R_3 \ge \frac{1}{n}\sum_{i=1}^{n} I(X_i; W_i|U_i, V_i, Z_i). \tag{44}$$

Now we verify that the joint distribution of $(X_i, Y_i, Z_i, U_i, V_i, W_i)$ is of the form (38), i.e., that $U_i - Y_i - (Z_i, X_i)$, $V_i - (U_i, Z_i) - (Y_i, X_i)$, and $W_i - (U_i, V_i, X_i, Y_i) - Z_i$ hold. The Markov chain $(T_1(Y^n), X^{i-1}, Z_{i+1}^n) - Y_i - (Z_i, X_i)$ trivially holds, and the Markov chains

$$Z^{i-1} - (T_1(Y^n), X^{i-1}, Z_i^n) - (Y_i, X_i), \tag{45}$$

$$X_{i+1}^n - (T_1(Y^n), T_2(T_1, Z^n), X^i, Z_{i+1}^n, Y_i) - Z_i \tag{46}$$

are proven in Figs. 10 and 11, respectively.
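The graphical arguments of Figs. 9 and 10 can also be checked mechanically. The following is a minimal sketch, assuming the construction in which all variables that appear in the same factor of the joint distribution are pairwise connected; the node names (`Xp` for $X^{i-1}$, `Xi` for $X_i$, `Xf` for $X_{i+1}^n$, and likewise for $Y$ and $Z$) and the helper functions are illustrative and not part of the paper. Separation in the graph is a sufficient condition for the Markov chain, so a `False` answer only means that this particular graph does not certify the chain.

```python
from collections import deque
from itertools import combinations


def graph_from_factors(factors):
    """Build the undirected graph of a factorization.

    Each factor is a tuple of variable names; all variables that appear
    in the same factor are pairwise connected (they form a clique).
    """
    adjacency = {}
    for scope in factors:
        for name in scope:
            adjacency.setdefault(name, set())
        for a, b in combinations(scope, 2):
            adjacency[a].add(b)
            adjacency[b].add(a)
    return adjacency


def is_separated(adjacency, left, middle, right):
    """Return True if every path from `left` to `right` hits `middle`."""
    middle, right = set(middle), set(right)
    frontier = deque(node for node in left if node not in middle)
    visited = set(frontier)
    while frontier:
        node = frontier.popleft()
        if node in right:
            return False  # found a path that avoids `middle`
        for neighbor in adjacency[node]:
            if neighbor not in middle and neighbor not in visited:
                visited.add(neighbor)
                frontier.append(neighbor)
    return True


# Factors taken from the caption of Fig. 9 (hypothetical node names).
FIG10_FACTORS = [
    ("Xp", "Zp"), ("Yp", "Xp"),
    ("Xi", "Zi"), ("Yi", "Xi"),
    ("Xf", "Zf"), ("Yf", "Xf"),
    ("T1", "Yp", "Yi", "Yf"),          # p(t1 | y^n)
]
FIG9_FACTORS = FIG10_FACTORS + [
    ("T2", "Zp", "Zi", "Zf", "T1"),    # p(t2 | z^n, t1)
]
FIG9_GRAPH = graph_from_factors(FIG9_FACTORS)
FIG10_GRAPH = graph_from_factors(FIG10_FACTORS)

# Step (a) of (43): X_i - (X^{i-1}, Z_i^n, T1, T2) - Z^{i-1}.
print(is_separated(FIG9_GRAPH, ["Xi"], ["Xp", "Zi", "Zf", "T1", "T2"], ["Zp"]))
# Chain (45): Z^{i-1} - (T1, X^{i-1}, Z_i^n) - (Y_i, X_i).
print(is_separated(FIG10_GRAPH, ["Zp"], ["T1", "Xp", "Zi", "Zf"], ["Yi", "Xi"]))
# Dropping T1 from the middle set opens the path Zp - T1 - Yi - Xi.
print(is_separated(FIG9_GRAPH, ["Xi"], ["Xp", "Zi", "Zf", "T2"], ["Zp"]))
```

The first two checks succeed and the third fails, which shows why $T_1$ must be part of the conditioning set in step (a) of (43).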
Next, we show that there exist sequences of functions $\{\hat{X}_i(U_i, V_i, Z_i)\}$ and $\{\hat{Z}_i(U_i, W_i, X_i)\}$ such that

$$\frac{1}{n}\sum_{i=1}^{n} E\left[d_x(X_i, \hat{X}_i(U_i, V_i, Z_i))\right] \le D_x, \qquad \frac{1}{n}\sum_{i=1}^{n} E\left[d_z(Z_i, \hat{Z}_i(U_i, W_i, X_i))\right] \le D_z. \tag{47}$$

The only difficulty here is that the terms in $(U_i, V_i, Z_i)$ do not include $Z^{i-1}$ and the terms in $(U_i, W_i, X_i)$ do not include $X_{i+1}^n$. However, this is solved by the same argument as for the Wyner-Ziv problem with a helper at the end of Section V, by showing the Markov forms $X_i - (U_i, V_i, Z_i) - Z^{i-1}$ and $Z_i - (U_i, W_i, X_i) - X_{i+1}^n$, for which the proofs are given in Figs. 12 and 13, respectively.

Fig. 10. A graphical proof of the Markov chain $Z^{i-1} - (T_1(Y^n), X^{i-1}, Z_i^n) - (Y_i, X_i)$. The undirected graph corresponds to the joint distribution $p(x^{i-1}, z^{i-1})\,p(y^{i-1}|x^{i-1})\,p(x_i, z_i)\,p(y_i|x_i)\,p(x_{i+1}^n, z_{i+1}^n)\,p(y_{i+1}^n|x_{i+1}^n)\,p(t_1|y^n)$. The Markov chain holds since all paths from $Z^{i-1}$ to $(X_i, Y_i)$ pass through $(X^{i-1}, Z_i^n, T_1)$.

Fig. 11. A graphical proof of the Markov chain $X_{i+1}^n - (T_1(Y^n), T_2(T_1, Z^n), X^i, Z_{i+1}^n, Y_i) - Z_i$. The undirected graph corresponds to the joint distribution $p(x^{i-1}, y^{i-1})\,p(z^{i-1}|y^{i-1})\,p(x_i, y_i)\,p(z_i|y_i)\,p(x_{i+1}^n, y_{i+1}^n)\,p(z_{i+1}^n|y_{i+1}^n)\,p(t_1|y^n)\,p(t_2|z^n, t_1)$. The Markov chain holds since all paths from $Z_i$ to $X_{i+1}^n$ pass through $(T_1(Y^n), T_2(T_1, Z^n), X^i, Z_{i+1}^n, Y_i)$.
Fig. 12. A graphical proof of the Markov chain $Z^{i-1} - (T_1(Y^n), T_2(T_1, Z^n), X^{i-1}, Z_i^n) - X_i$. The undirected graph corresponds to the joint distribution $p(x^{i-1}, z^{i-1})\,p(y^{i-1}|x^{i-1})\,p(x_i, z_i)\,p(y_i|x_i)\,p(x_{i+1}^n, z_{i+1}^n)\,p(y_{i+1}^n|x_{i+1}^n)\,p(t_1|y^n)\,p(t_2|z^n, t_1)$. The Markov chain holds since all paths from $Z^{i-1}$ to $X_i$ pass through $(T_1(Y^n), T_2(T_1, Z^n), X^{i-1}, Z_i^n)$.

Finally, let $Q$ be a random variable independent of $X^n, Y^n, Z^n$ and uniformly distributed over the set $\{1, 2, 3, \ldots, n\}$. Define the random variables $U \triangleq (Q, U_Q)$, $V \triangleq (Q, V_Q)$, $W \triangleq (Q, W_Q)$, $\hat{X} \triangleq \hat{X}_Q$, and $\hat{Z} \triangleq \hat{Z}_Q$. Then (44)-(47) imply that the equations that define $\overline{\mathcal{R}}(D_x, D_z)$, i.e., (1)-(5) with (38) in place of (4), hold.

Fig. 13. A graphical proof of the Markov chain $Z_i - (U_i, W_i, X_i) - X_{i+1}^n$. The undirected graph corresponds to the joint distribution $p(x^{i-1}, z^{i-1})\,p(y^{i-1}|x^{i-1})\,p(x_i, z_i)\,p(y_i|x_i)\,p(x_{i+1}^n, z_{i+1}^n)\,p(y_{i+1}^n|x_{i+1}^n)\,p(t_1|y^n)\,p(t_3|x^n, t_1)$. The Markov chain holds since all paths from $Z_i$ to $X_{i+1}^n$ pass through $(T_1(Y^n), T_3(T_1, X^n), X^i, Z_{i+1}^n)$.

VII. TWO-WAY MULTISTAGE

Here we consider the two-way multi-stage rate-distortion problem with a helper. First, the helper sends a common message to both users, and then Users X and Z send to each other, over $K$ rounds, messages at total rates $R_x$ and $R_z$, respectively.
We use the definition of two-way source coding as given in [1], where each user may transmit up to $K$ messages to the other user, each of which depends on the source and the previously received messages. Let $\mathcal{M}$ denote a set of positive integers $\{1, 2, \ldots, M\}$ and let $\mathcal{M}^K$ denote the collection of $K$ sets $\{\mathcal{M}_1, \mathcal{M}_2, \ldots, \mathcal{M}_K\}$.

Fig. 14. The two-way multi-stage problem with a helper. First, Helper Y sends a common message to User X and to User Z at rate $R_y$, and then there are $K$ rounds where in each round $k \in \{1, \ldots, K\}$ User Z sends a message to User X at rate $R_{z,k}$, and User X sends a message to User Z at rate $R_{x,k}$. The limitation is on the rate $R_y$ and on the sum rates $R_x = \sum_{k=1}^{K} R_{x,k}$ and $R_z = \sum_{k=1}^{K} R_{z,k}$. We assume that the side information $Y$ and the two sources $X, Z$ are i.i.d. and form the Markov chain $Y - X - Z$.

Definition 4: An $(n, M_y, M_x^K, M_z^K, D_x, D_z)$ code for two sources $X$ and $Z$ with helper $Y$ consists of the encoders

$$\begin{aligned}
f_y &: \mathcal{Y}^n \to \mathcal{M}_y, \\
f_{z,k} &: \mathcal{Z}^n \times \mathcal{M}_x^{k-1} \times \mathcal{M}_y \to \mathcal{M}_{z,k}, \quad k = 1, 2, \ldots, K, \\
f_{x,k} &: \mathcal{X}^n \times \mathcal{M}_z^{k} \times \mathcal{M}_y \to \mathcal{M}_{x,k}, \quad k = 1, 2, \ldots, K,
\end{aligned} \tag{48}$$

and two decoders

$$\begin{aligned}
g_x &: \mathcal{X}^n \times \mathcal{M}_y \times \mathcal{M}_z^K \to \hat{\mathcal{Z}}^n, \\
g_z &: \mathcal{Z}^n \times \mathcal{M}_y \times \mathcal{M}_x^K \to \hat{\mathcal{X}}^n,
\end{aligned} \tag{49}$$

such that

$$E\left[\frac{1}{n}\sum_{i=1}^{n} d_x(X_i, \hat{X}_i)\right] \le D_x, \qquad E\left[\frac{1}{n}\sum_{i=1}^{n} d_z(Z_i, \hat{Z}_i)\right] \le D_z. \tag{50}$$

The rate triple $(R_x, R_y, R_z)$ of the code is defined by

$$R_y = \frac{1}{n}\log M_y, \qquad R_x = \frac{1}{n}\sum_{i=1}^{K}\log M_{x,i}, \qquad R_z = \frac{1}{n}\sum_{i=1}^{K}\log M_{z,i}. \tag{51}$$

Let us denote by $\mathcal{R}^O_K(D_x, D_z)$ the (operational) achievable region of the multi-stage rate distortion problem with a helper, i.e., the closure of the set of all rate triples $(R_x, R_y, R_z)$ that are achievable with a distortion pair $(D_x, D_z)$.
Let $\mathcal{R}_K(D_x, D_z)$ be the set of all rate triples $(R_x, R_y, R_z)$ that satisfy

$$R_y \ge I(U; Y|Z), \tag{52}$$

$$R_z \ge \sum_{k=1}^{K} I(Z; V_k|X, U, V^{k-1}, W^{k-1}), \tag{53}$$

$$R_x \ge \sum_{k=1}^{K} I(X; W_k|Z, U, V^{k}, W^{k-1}), \tag{54}$$

for some auxiliary random variables $(U, V^K, W^K)$ that satisfy

$$U - Y - (X, Z), \tag{55}$$

$$V_k - (Z, U, V^{k-1}, W^{k-1}) - (X, Y), \quad k = 1, 2, \ldots, K, \tag{56}$$

$$W_k - (X, U, V^{k}, W^{k-1}) - (Z, Y), \quad k = 1, 2, \ldots, K, \tag{57}$$

$$E\,d_x(X, \hat{X}(U, W^K, Z)) \le D_x, \qquad E\,d_z(Z, \hat{Z}(U, V^K, X)) \le D_z. \tag{58}$$

The Markov chain $Y - X - Z$ and the Markov chains given in (55)-(57) imply that the joint distribution of $X, Y, Z, U, V^K, W^K$ is of the form $p(x, y)\,p(z|x)\,p(u|y)\prod_{k=1}^{K} p(v_k|z, u, v^{k-1}, w^{k-1})\,p(w_k|x, u, v^{k}, w^{k-1})$. Furthermore, (53) and (54) can be written as

$$R_z \ge I(Z; V^K, W^K|X, U), \tag{59}$$

$$R_x \ge I(X; V^K, W^K|Z, U), \tag{60}$$

due to the Markov chains $Z - (X, U, V^{k}, W^{k-1}) - W_k$ and $X - (Z, U, V^{k-1}, W^{k-1}) - V_k$.

Lemma 9:
1) The region $\mathcal{R}_K(D_x, D_z)$ is convex.
2) To exhaust $\mathcal{R}_K(D_x, D_z)$, it is enough to restrict the alphabets of $U$, $V$, and $W$ to satisfy

$$\begin{aligned}
|\mathcal{U}| &\le |\mathcal{Y}| + 2K + 1, \\
|\mathcal{V}_k| &\le |\mathcal{Z}||\mathcal{U}||\mathcal{V}^{k-1}||\mathcal{W}^{k-1}| + 2(K + 1 - k) + 1, \quad k = 1, \ldots, K, \\
|\mathcal{W}_k| &\le |\mathcal{X}||\mathcal{U}||\mathcal{V}^{k}||\mathcal{W}^{k-1}| + 2(K + 1 - k), \quad k = 1, \ldots, K.
\end{aligned} \tag{61}$$

The proof of the lemma is analogous to the proof of Lemma 2 and is therefore omitted.

Theorem 10: In the two-way problem with $K$ stages of communication and a helper, as depicted in Fig. 14, where $Y - X - Z$,

$$\mathcal{R}^O_K(D_x, D_z) = \mathcal{R}_K(D_x, D_z). \tag{62}$$

Theorem 10 is a generalization of Theorem 1 (equations (52)-(58) with $K = 1$ are equivalent to (1)-(5)), and its proof is a straightforward extension. Here we explain only the extensions.
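The step from the sums (53)-(54) to the joint expressions (59)-(60) is a chain-rule argument: the cross terms vanish because of the Markov chains (56)-(57). The sketch below checks this numerically for $K = 1$ on a randomly drawn binary distribution of the stated product form. The binary alphabets, the random seed, and all function names are assumptions made for illustration only.

```python
import itertools
import math
import random

X, Y, Z, U, V, W = range(6)  # positions of the variables in a joint key


def random_pmf(rng, size):
    """Random strictly positive pmf on {0, ..., size - 1}."""
    weights = [rng.random() + 0.1 for _ in range(size)]
    total = sum(weights)
    return [w / total for w in weights]


def build_joint(rng):
    """Binary joint p(x,y) p(z|x) p(u|y) p(v|z,u) p(w|x,u,v), i.e. K = 1."""
    pxy = random_pmf(rng, 4)
    pz_x = {x: random_pmf(rng, 2) for x in range(2)}
    pu_y = {y: random_pmf(rng, 2) for y in range(2)}
    pv_zu = {k: random_pmf(rng, 2) for k in itertools.product(range(2), repeat=2)}
    pw_xuv = {k: random_pmf(rng, 2) for k in itertools.product(range(2), repeat=3)}
    joint = {}
    for x, y, z, u, v, w in itertools.product(range(2), repeat=6):
        joint[(x, y, z, u, v, w)] = (
            pxy[2 * x + y] * pz_x[x][z] * pu_y[y][u]
            * pv_zu[(z, u)][v] * pw_xuv[(x, u, v)][w]
        )
    return joint


def marginal(joint, positions):
    """Marginal pmf of the variables at the given positions."""
    out = {}
    for key, prob in joint.items():
        sub = tuple(key[i] for i in positions)
        out[sub] = out.get(sub, 0.0) + prob
    return out


def cond_mutual_info(joint, a, b, c):
    """I(A;B|C) in bits; a, b, c are tuples of variable positions."""
    p_abc = marginal(joint, a + b + c)
    p_ac = marginal(joint, a + c)
    p_bc = marginal(joint, b + c)
    p_c = marginal(joint, c)
    total = 0.0
    for key, prob in p_abc.items():
        ka = key[:len(a)]
        kb = key[len(a):len(a) + len(b)]
        kc = key[len(a) + len(b):]
        total += prob * math.log2(prob * p_c[kc] / (p_ac[ka + kc] * p_bc[kb + kc]))
    return total


joint = build_joint(random.Random(0))
rz_sum = cond_mutual_info(joint, (Z,), (V,), (X, U))       # (53) with K = 1
rz_joint = cond_mutual_info(joint, (Z,), (V, W), (X, U))   # (59)
rx_sum = cond_mutual_info(joint, (X,), (W,), (Z, U, V))    # (54) with K = 1
rx_joint = cond_mutual_info(joint, (X,), (V, W), (Z, U))   # (60)
print(abs(rz_sum - rz_joint) < 1e-9, abs(rx_sum - rx_joint) < 1e-9)
```

Both differences are zero up to floating-point error, because $I(Z; W_1|X, U, V_1) = 0$ and $I(X; V_1|Z, U) = 0$ under the assumed factorization.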
Sketch of achievability: In the achievability proof of Theorem 1, we generated the sequences $(U^n, V_1^n, W_1^n)$ that are jointly typical with $X^n, Y^n, Z^n$. Using the same idea of Wyner-Ziv coding, we continue and generate at every stage $k = 1, 2, \ldots, K$ the sequence $V_k^n$ that is jointly typical with the other sequences by transmitting a message at rate $I(Z; V_k|X, U, V^{k-1}, W^{k-1})$ from User Z to User X, and similarly the sequence $W_k^n$ that is jointly typical with the other sequences by transmitting a message at rate $I(X; W_k|Z, U, V^{k}, W^{k-1})$ from User X to User Z. In the final stage, User X uses the sequences $(X^n, U^n, V_1^n, \ldots, V_K^n)$ to construct $\hat{Z}^n$ and, similarly, User Z uses the sequences $(Z^n, U^n, W_1^n, \ldots, W_K^n)$ to construct $\hat{X}^n$.

Sketch of converse: Assume that we have an $(n, M_y, M_x^K, M_z^K, D_x, D_z)$ code. We show the existence of a vector $(U, V^K, W^K, \hat{X}, \hat{Z})$ that satisfies (52)-(58). Denote $T_y = f_y(Y^n)$, $T_{z,k} = f_{z,k}(Z^n, T_y, T_x^{k-1})$, and $T_{x,k} = f_{x,k}(X^n, T_y, T_z^{k})$. Then, by the same arguments as in (41), we obtain

$$nR_y \ge \sum_{i=1}^{n} I(Y_i; X^{i-1}, T_y, Z_{i+1}^n|Z_i). \tag{63}$$

Then we have

$$nR_z \ge H(T_z^K) = \sum_{k=1}^{K} H(T_{z,k}|T_z^{k-1}) \ge \sum_{k=1}^{K} H(T_{z,k}|T_z^{k-1}, T_x^{k-1}), \tag{64}$$

$$nR_x \ge H(T_x^K) = \sum_{k=1}^{K} H(T_{x,k}|T_x^{k-1}) \ge \sum_{k=1}^{K} H(T_{x,k}|T_x^{k-1}, T_z^{k}). \tag{65}$$

Applying the same arguments as in (42) and (43) to the terms in (64) and (65), respectively, we obtain

$$\begin{aligned}
H(T_{z,k}|T_z^{k-1}, T_x^{k-1}) &\ge \sum_{i=1}^{n} I(Z_i; T_{z,k}|Z_{i+1}^n, X^{i}, T_y, T_z^{k-1}, T_x^{k-1}), \\
H(T_{x,k}|T_x^{k-1}, T_z^{k}) &\ge \sum_{i=1}^{n} I(X_i; T_{x,k}|Z_i^n, X^{i-1}, T_y, T_z^{k}, T_x^{k-1}).
\end{aligned} \tag{66}$$

We define the auxiliary random variables as $U \triangleq (X^{Q-1}, T_y, Z_{Q+1}^n)$, $V_k = T_{z,k}$, and $W_k = T_{x,k}$, where $Q$ is distributed uniformly on the integers $\{1, 2, \ldots, n\}$.
VIII. GAUSSIAN CASE

In this section we consider the Gaussian instance of the two-way setting with a helper as defined in Section III, and explicitly express the region for a mean square error distortion (we also note that the multi-stage option does not increase the rate region for this case).

Fig. 15. The Gaussian two-way problem with a helper. The side information $Y$ and the two sources $X, Z$ are i.i.d., jointly Gaussian, and form the Markov chain $Y - X - Z$, with $X = Z + A$, $Y = Z + A + B$, $A \sim N(0, \sigma_A^2)$, $B \sim N(0, \sigma_B^2)$, $Z \sim N(0, \sigma_Z^2)$, and $A \perp B \perp Z$. The distortion is the square error, i.e., $d_x(X^n, \hat{X}^n) = \frac{1}{n}\sum_{i=1}^{n}(X_i - \hat{X}_i)^2$ and $d_z(Z^n, \hat{Z}^n) = \frac{1}{n}\sum_{i=1}^{n}(Z_i - \hat{Z}_i)^2$.

Since $X, Y, Z$ form the Markov chain $Y - X - Z$, we assume, without loss of generality, that $X = Z + A$ and $Y = Z + A + B$, where the random variables $(A, B, Z)$ are zero-mean Gaussian and independent of each other, with $E[A^2] = \sigma_A^2$, $E[B^2] = \sigma_B^2$, and $E[Z^2] = \sigma_Z^2$.

Corollary 11: The achievable rate region of the problem illustrated in Fig. 15 is

$$R_z \ge \frac{1}{2}\log\frac{\sigma_A^2\sigma_Z^2}{D_z(\sigma_A^2 + \sigma_Z^2)}, \tag{67}$$

$$R_x \ge \frac{1}{2}\log\frac{\sigma_A^2\left(\sigma_B^2 + \sigma_A^2 2^{-2R_y}\right)}{D_x(\sigma_A^2 + \sigma_B^2)}. \tag{68}$$

Proof: The converse and achievability of (67) follow from the Gaussian Wyner-Ziv coding result [18], which states that the achievable rate for the Gaussian Wyner-Ziv setting is the same as in the case where the side information is known to both the encoder and the decoder. Furthermore, because of the Markov chain $Z - X - Y$, the rate $R_y$ does not have any influence on $R_z$, since this rate is the achievable rate even if $Y$ is known to both users. The achievability and the converse for $R_x$ are given in the following corollary.
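To get a feel for the bounds (67) and (68), the following sketch evaluates their right-hand sides numerically. It assumes base-2 logarithms (rates in bits per source symbol), which matches the factor $2^{-2R_y}$ in (68); the function names and the sample variances are illustrative only, and a negative value simply means that the corresponding constraint is inactive.

```python
import math


def rate_z(var_a, var_z, d_z):
    """Right-hand side of (67), in bits per source symbol."""
    return 0.5 * math.log2(var_a * var_z / (d_z * (var_a + var_z)))


def rate_x(var_a, var_b, rate_y, d_x):
    """Right-hand side of (68), in bits per source symbol."""
    numerator = var_a * (var_b + var_a * 2.0 ** (-2.0 * rate_y))
    return 0.5 * math.log2(numerator / (d_x * (var_a + var_b)))


# Illustrative values: unit variances and distortion 1/4.
for rate_y in (0.0, 0.5, 1.0, 2.0, 50.0):
    print(rate_y, rate_x(1.0, 1.0, rate_y, 0.25))
```

With no help ($R_y = 0$) the bound reduces to $\frac{1}{2}\log(\sigma_A^2 / D_x)$, the Wyner-Ziv rate with side information $Z$ alone; as $R_y$ grows it approaches $\frac{1}{2}\log\frac{\sigma_A^2\sigma_B^2}{D_x(\sigma_A^2 + \sigma_B^2)}$, the rate when $Y$ is fully known. The slope of the bound at $R_y = 0$ is $-\sigma_A^2/(\sigma_A^2 + \sigma_B^2)$, so each extra helper bit saves less than one source bit.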
Fig. 16. Gaussian case: the zero-mean Gaussian random variables $A, B, Z$ are i.i.d. and independent of each other. Their variances are $\sigma_A^2$, $\sigma_B^2$, and $\sigma_Z^2$, respectively. The source $X$ and the helper $Y$ satisfy $X = A + Z$ and $Y = Z + A + B$. The distortion is the square error, i.e., $d(X^n, \hat{X}^n) = \frac{1}{n}\sum_{i=1}^{n}(X_i - \hat{X}_i)^2$.

Corollary 12: The achievable rate region of the problem illustrated in Fig. 16 is

$$R \ge \frac{1}{2}\log\frac{\sigma_A^2\left(1 - \frac{\sigma_A^2}{\sigma_A^2 + \sigma_B^2}\left(1 - 2^{-2R_y}\right)\right)}{D}. \tag{69}$$

It is interesting to note that the rate region does not depend on $\sigma_Z^2$. Furthermore, we show in the proof that for the Gaussian case the rate region is the same as when $Z$ is known to the source $X$ and the helper $Y$.

Proof of Corollary 12:

Converse: Assume that both encoders observe $Z^n$. Without loss of generality, the encoders can subtract $Z$ from $X$ and $Y$; hence the problem is equivalent to a new rate distortion problem with a helper, where the source is $A$ and the helper is $A + B$. Now, using the result for the Gaussian case from [7], adapted to our notation, we obtain (69).

Achievability: Before proving the direct part of Corollary 12, we establish the following lemma, which is proved in Appendix C.

Lemma 13 (Gaussian Wyner-Ziv rate-distortion problem with additional side information known to the encoder and decoder): Let $(X, W, Z)$ be jointly Gaussian. Consider the Wyner-Ziv rate distortion problem where the source $X$ is to be compressed with quadratic distortion measure, $W$ is available at the encoder and decoder, and $Z$ is available only at the decoder.
The rate-distortion region for this problem is given by

$$R(D) = \frac{1}{2}\log\frac{\sigma^2_{X|W,Z}}{D}, \tag{70}$$

where $\sigma^2_{X|W,Z} = E[(X - E[X|W, Z])^2]$, i.e., the minimum square error of estimating $X$ from $(W, Z)$.

Let $V = A + B + Z + D$, where $D \sim N(0, \sigma_D^2)$ is independent of $(A, B, Z)$. Clearly, we have $V - Y - X - Z$. Now, let us generate $V$ at the source encoder and at the decoder using the achievability scheme of Wyner [18]. Since $I(V; Z) \le I(V; X)$, a rate $R' = I(V; Y) - I(V; Z)$ suffices, and it may be expressed as follows:

$$R' = I(V; Y|Z) = h(V|Z) - h(V|Y) = \frac{1}{2}\log\frac{\sigma_A^2 + \sigma_B^2 + \sigma_D^2}{\sigma_D^2}, \tag{71}$$

and this implies that

$$\sigma_D^2 = \frac{\sigma_A^2 + \sigma_B^2}{2^{2R'} - 1}. \tag{72}$$

Now we invoke Lemma 13, where $V$ is the side information known to both the encoder and the decoder; hence a rate that satisfies the following inequality achieves a distortion $D$:

$$R \ge \frac{1}{2}\log\frac{\sigma^2_{X|V,Z}}{D} = \frac{1}{2}\log\left[\frac{\sigma_A^2}{D}\left(1 - \frac{\sigma_A^2}{\sigma_A^2 + \sigma_B^2 + \sigma_D^2}\right)\right]. \tag{73}$$

Finally, by replacing $\sigma_D^2$ with the identity in (72), we obtain (69).

IX. FURTHER RESULTS ON WYNER-ZIV WITH A HELPER WHERE $Y - X - Z$

In this section we investigate two properties of the rate region of the Wyner-Ziv setting (Fig. 17) with a Markov form $Y - X - Z$. First, we investigate the tradeoff between the rate sent by the helper and the rate sent by the source, and, roughly speaking, we conclude that a bit from the source is more "valuable" than a bit from the helper. Second, we examine the case where the helper has the freedom to send different messages, at different rates, to the encoder and the decoder. We show that "more help" to the encoder than to the decoder does not yield any performance gain, and that in such cases the freedom to send different messages to the encoder and the decoder yields no gain over the case of a common message.
Further, in this setting of different messages, the rate to the encoder can be strictly less than that to the decoder with no performance loss.

A. A bit from the source-encoder vs. a bit from the helper

Assume that we have a sequence of $(n, 2^{nR}, 2^{nR_1})$ codes that achieves a distortion $D$, such that the triple $(R, R_1, D)$ is on the border of the region $\mathcal{R}_{Y-X-Z}(D)$ (recall the definition of $\mathcal{R}_{Y-X-Z}(D)$ in (15)-(17)). Now, suppose that the helper is allowed to increase its rate by an amount $\Delta' > 0$ to $R_1 + \Delta'$; to what rate $R - \Delta$ can the source encoder reduce its rate and achieve the same distortion $D$? Despite the fact that the additional rate $\Delta'$ is transmitted to both the decoder and the encoder, we show that always $\Delta \le \Delta'$. Let us denote by $R(R_1)$ the boundary of the region $\mathcal{R}_{Y-X-Z}(D)$ for a fixed $D$. We formally show that $\Delta \le \Delta'$ by proving that the slope of the curve $R(R_1)$ is always less than 1 in absolute value. The proof uses a technique similar to that in [19].

Fig. 17. The Wyner-Ziv problem with a helper where the Markov chain $Y - X - Z$ holds.

Lemma 14: For any $Y - X - Z$, $D$, and $R_1$, the subgradients of the curve $R(R_1)$ are less than 1 in absolute value.

Proof: Since $\mathcal{R}_{Y-X-Z}(D)$ is a convex set, $R(R_1)$ is a convex function. Furthermore, $R(R_1)$ is nonincreasing in $R_1$. Now, let us define $J^*(\lambda)$ as

$$J^*(\lambda) = \min_{p(x,y,z,u,w) \in \mathcal{P}} I(X; W|U, Z) + \lambda I(Y; U|Z), \tag{74}$$

where $\mathcal{P}$ is the set of distributions satisfying $p(x, y, z, u, w, \hat{x}) = p(x, y)\,p(z|x)\,p(u|y)\,p(w|u, x)\,p(\hat{x}|u, w, z)$ and $E\,d(X, \hat{X}) \le D$. The line $J^*(\lambda) = R + \lambda R_1$ is a support line of $R(R_1)$, and therefore $-\lambda$ is a subgradient. The value $J^*(\lambda)$ is the intersection between the support line with slope $-\lambda$ and the $R$ axis, as shown in Fig. 18.
Because of the convexity and the monotonicity of $R(R_1)$, $J^*(\lambda)$ is upper-bounded by $R(0)$, i.e.,

$$J^*(\lambda) \le R(0) = \min_{p(\hat{x},x,y,z,w) \in \mathcal{P}_{WZ}} I(X; W|Z), \tag{75}$$

where $\mathcal{P}_{WZ}$ is the set of distributions that satisfy $p(\hat{x}, x, z, w) = p(x)\,p(z|x)\,p(w|x)\,p(\hat{x}|w, z)$ and $E\,d(X, \hat{X}) \le D$.

Fig. 18. A support line of $R(R_1)$ with slope $-\lambda$. $J^*(\lambda)$ is the intersection of the support line with the $R$ axis.

In addition, we observe that

$$\begin{aligned}
J^*(1) &= \min_{p(x,y,z,u,w,\hat{x}) \in \mathcal{P}} I(X; W|U, Z) + I(Y; U|Z) \\
&\overset{(a)}{=} \min_{p(x,y,z,u,w,\hat{x}) \in \mathcal{P}} I(X, Y; W, U|Z) \\
&\ge \min_{p(x,y,z,u,w,\hat{x}) \in \mathcal{P}} I(X; W|Z) \\
&= \min_{p(\hat{x},x,y,z,w) \in \mathcal{P}_{WZ}} I(X; W|Z),
\end{aligned} \tag{76}$$

where step (a) is due to the Markov chains $U - Y - (Z, X)$ and $W - (U, X) - (Y, Z)$. Combining (75) and (76), we conclude that for any subgradient $-\lambda$, $J^*(\lambda) \le J^*(1)$. Since $J^*(\lambda)$ is increasing in $\lambda$, we conclude that $\lambda \le 1$.

Fig. 19. The rate distortion problem with decoder side information and independent helper rates. We assume the Markov relation $Y - X - Z$.

An alternative and equivalent proof would be to claim that, since $R(R_1)$ is a convex and nonincreasing function, $\frac{\Delta}{\Delta'} \le \left.\left|\frac{dR}{dR_1}\right|\,\right|_{R_1 = 0}$, and then to claim that the largest slope at $R_1 = 0$ occurs when $Y = X$, in which case it is 1. For the Gaussian case, the derivative may be calculated explicitly from (69), in particular for $R_1 = 0$, and we obtain

$$\Delta \le \frac{\sigma_A^2}{\sigma_A^2 + \sigma_B^2}\,\Delta'. \tag{77}$$

B.
The case of independent rates

In this subsection we treat the rate distortion scenario where side information from the helper is encoded using two different messages, possibly at different rates, one to the encoder and one to the decoder, as shown in Fig. 19. The complete characterization of achievable rates for this scenario is still an open problem. However, the solution given in previous sections, where there is one message known to both the encoder and the decoder, provides insight that allows us to solve several cases of the problem shown here. We start with the definition of the general case.

Definition 5: An $(n, M, M_e, M_d, D)$ code for source $X$ with side information $Y$ and different helper messages to the encoder and decoder consists of three encoders

$$\begin{aligned}
f_e &: \mathcal{Y}^n \to \{1, 2, \ldots, M_e\}, \\
f_d &: \mathcal{Y}^n \to \{1, 2, \ldots, M_d\}, \\
f &: \mathcal{X}^n \times \{1, 2, \ldots, M_e\} \to \{1, 2, \ldots, M\},
\end{aligned} \tag{78}$$

and a decoder

$$g : \{1, 2, \ldots, M\} \times \{1, 2, \ldots, M_d\} \times \mathcal{Z}^n \to \hat{\mathcal{X}}^n \tag{79}$$

such that

$$E\,d(X^n, \hat{X}^n) \le D. \tag{80}$$

To avoid cumbersome statements, we will not repeat in the sequel the words "... different helper messages to the encoder and decoder," as this is the topic of this section and should be clear from the context. The rate triple $(R, R_e, R_d)$ of the $(n, M, M_e, M_d, D)$ code is

$$R = \frac{1}{n}\log M, \qquad R_e = \frac{1}{n}\log M_e, \qquad R_d = \frac{1}{n}\log M_d. \tag{81}$$

Definition 6: Given a distortion $D$, a rate triple $(R, R_e, R_d)$ is said to be achievable if for any $\delta > 0$ and sufficiently large $n$, there exists an $(n, 2^{n(R+\delta)}, 2^{n(R_e+\delta)}, 2^{n(R_d+\delta)}, D + \delta)$ code for the source $X$ with side information $Y$.

Definition 7: The (operational) achievable region $\mathcal{R}^O_g(D)$ of rate distortion with a helper known at the encoder and decoder is the closure of the set of all achievable rate triples at distortion $D$.

Denote by $\mathcal{R}^O_g(R_e, R_d, D)$ the section of $\mathcal{R}^O_g(D)$ at helper rates $(R_e, R_d)$.
That is,

$$\mathcal{R}^O_g(R_e, R_d, D) = \{R : (R, R_e, R_d) \text{ are achievable with distortion } D\}, \tag{82}$$

and similarly, denote by $\mathcal{R}(R_1, D)$ the section of the region $\mathcal{R}_{Y-X-Z}(D)$, defined in (15)-(18), at helper rate $R_1$. Recall that, according to Theorem 4, $\mathcal{R}(R_1, D)$ consists of all achievable source coding rates when the helper sends common messages to the source encoder and destination at rate $R_1$. The main result of this section is the following.

Theorem 15: For any $R_e \ge R_d$,

$$\mathcal{R}^O_g(R_e, R_d, D) = \mathcal{R}(R_d, D). \tag{83}$$

Theorem 15 has interesting implications for the coding strategy taken by the helper. It says that no gain in performance can be achieved if the source encoder gets "more help" than the decoder at the destination (i.e., if $R_e > R_d$), and thus we may restrict $R_e$ to be no higher than $R_d$. Moreover, in those cases where $R_e = R_d$, optimal performance is achieved when the helper sends to the encoder and decoder exactly the same message. The proof of this statement uses operational arguments.

Proof of Theorem 15: Clearly, the claim is proved once we show the statement for $R_e = H(Y)$. In this situation, we can equally well assume that the encoder has full access to $Y$. Thus, fix a general scheme as in Definition 5 with $R_e = H(Y)$. The encoder is a function of the form $f(X^n, Y^n)$. Define $T_2 = f_d(Y^n)$. The Markov chain $Z - X - Y$ implies that $Z^n - (X^n, T_2) - Y^n$ also forms a Markov chain. This implies, in turn, that there exist a function $\phi$ and a random variable $W$, uniformly distributed in $[0, 1]$ and independent of $(X^n, T_2, Z^n)$, such that $Y^n = \phi(X^n, T_2, W)$.
(84)

Thus the source encoder operation can be written as

$$f(X^n, Y^n) = f(X^n, \phi(X^n, T_2, W)) \triangleq \tilde{f}(X^n, T_2, W), \tag{85}$$

implying, in turn, that the distortion of this scheme can be expressed as

$$\begin{aligned}
E\,d(X^n, \hat{X}^n) &= E\left[d\left(X^n, \hat{X}^n(\tilde{f}(X^n, T_2, W), T_2, Z^n)\right)\right] \\
&\overset{(a)}{=} \int_0^1 E\left[d\left(X^n, \hat{X}^n(\tilde{f}(X^n, T_2, w), T_2, Z^n)\right)\right] dw \\
&\overset{(b)}{=} \int_0^1 E\left[d\left(X^n, \hat{X}^n(f_w(X^n, T_2), T_2, Z^n)\right)\right] dw,
\end{aligned} \tag{86}$$

where (a) holds since $W$ is independent of $(X^n, T_2, Z^n)$, and (b) holds by defining

$$f_w(X^n, T_2) = \tilde{f}(X^n, T_2, w). \tag{87}$$

Note that for a given $w$, the function $f_w$ is of the form of encoding functions where the helper sends one message to the encoder and decoder. Therefore we conclude that anything achievable with a scheme from Definition 5 is achievable by time-sharing where the helper sends one message to the encoder and decoder.

The statement of Theorem 15 can be extended to rates $R_e$ slightly lower than $R_d$. This extension is based on the simple observation that the source encoder knows $X$, which can serve as side information in decoding the message sent by the helper. Therefore, any message $T_2$ sent to the source decoder can undergo a stage of binning with respect to $X$. As an extreme example, consider the case where $R_e \ge H(Y|X)$. The source encoder can fully recover $Y$, hence there is no advantage in transmitting to the encoder at rates higher than $H(Y|X)$; the decoder, on the other hand, can benefit from rates in the region $H(Y|X) < R_d < H(Y|Z)$. This rate interval is not empty due to the Markov chain $Y - X - Z$. These observations are summarized in the next theorem.

Theorem 16:
1) Let $(U, V)$ achieve a point $(R, R')$ in $\mathcal{R}_{Y-X-Z}(D)$, i.e.,

$$R = I(X; U|V, Z), \qquad R' = I(Y; V|Z) = I(V; Y) - I(V; Z), \tag{88}$$

$$D \ge E\,d(X, \hat{X}(U, V, Z)), \tag{89}$$

$V - Y - X - Z$.
(90)

Then $(R, R_e, R') \in \mathcal{R}^O_g(D)$ for every $R_e$ satisfying

$$R_e \ge I(V; Y|Z) - I(V; X|Z) = I(V; Y) - I(V; X). \tag{91}$$

2) Let $(R, R')$ be an outer point of $\mathcal{R}_{Y-X-Z}(D)$. That is,

$$(R, R') \notin \mathcal{R}_{Y-X-Z}(D). \tag{92}$$

Then $(R, R_e, R')$ is an outer point of $\mathcal{R}^O_g(D)$ for any $R_e$, i.e.,

$$(R, R_e, R') \notin \mathcal{R}^O_g(D) \quad \forall R_e. \tag{93}$$

The proof of Part 1 is based on binning, as described above. In particular, observe that $R_e$ given in (91) is lower than $R'$ of (88) due to the Markov chain $V - Y - X - Z$. Part 2 is a partial converse, and is a direct consequence of Theorem 15. The details, being straightforward, are omitted.

APPENDIX A
PROOF OF THE TECHNIQUE FOR VERIFYING MARKOV RELATIONS

Proof: First let us prove that three random variables $X, Y, Z$ with a joint distribution of the form

$$p(x, y, z) = f(x, y) f(y, z) \tag{94}$$

satisfy the Markov chain $X - Y - Z$. Consider

$$p(z|y, x) = \frac{f(x, y) f(y, z)}{f(x, y)\left(\sum_{z} f(y, z)\right)} = \frac{f(y, z)}{\sum_{z} f(y, z)}, \tag{95}$$

and since the expression does not include the argument $x$, we conclude that $p(z|y, x) = p(z|y)$.

For the more general case, we first extend the sets $X_{G_1}$ and $X_{G_3}$. We start by defining $\bar{G}_1 = G_1$ and $\bar{G}_3 = G_3$, and then we add to $X_{\bar{G}_1}$ and to $X_{\bar{G}_3}$ all their neighbors that are not in $X_{G_2}$ (a neighbor of a group is a node that is connected by one edge to an element in the group). We repeat this procedure until there are no more nodes to add to $X_{\bar{G}_1}$ or $X_{\bar{G}_3}$. Note that since there are no paths from $X_{G_1}$ to $X_{G_3}$ that do not pass through $X_{G_2}$, a node cannot be added to both sets $X_{\bar{G}_1}$ and $X_{\bar{G}_3}$. The set of nodes that are not in $(X_{\bar{G}_1}, X_{G_2}, X_{\bar{G}_3})$ is denoted by $X_{G_0}$.
The sets $X_{G_0}$, $X_{\overline{G}_1}$, and $X_{\overline{G}_3}$, where $X_{\overline{G}_1}$ and $X_{\overline{G}_3}$ are the extended sets, are connected only to $X_{G_2}$ and not to each other, hence the joint distribution of $(X_{G_0}, X_{\overline{G}_1}, X_{G_2}, X_{\overline{G}_3})$ is of the following form:

$$p(X_{G_0}, X_{\overline{G}_1}, X_{G_2}, X_{\overline{G}_3}) = f(X_{G_0}, X_{G_2})\, f(X_{\overline{G}_1}, X_{G_2})\, f(X_{\overline{G}_3}, X_{G_2}). \tag{96}$$

By marginalizing over $X_{G_0}$ and using the claim proved at the start of the proof, we obtain the Markov chain $X_{\overline{G}_1} - X_{G_2} - X_{\overline{G}_3}$, which implies $X_{G_1} - X_{G_2} - X_{G_3}$ because the extended sets contain the original ones.

APPENDIX B
PROOF OF LEMMA 2

Proof: To prove Part 1, let $Q$ be a time-sharing random variable, independent of the source triple $(X, Y, Z)$. Note that

$$I(Y; U | Z, Q) \overset{(a)}{=} I(Y; U, Q | Z) = I(Y; \tilde{U} | Z),$$

$$I(Z; V | U, X, Q) = I(Z; V | \tilde{U}, X),$$

$$I(X; W | U, V, Z, Q) = I(X; W | \tilde{U}, V, Z),$$

where $\tilde{U} = (U, Q)$, and step (a) uses the fact that $Q$ is independent of $(Y, Z)$, so that $I(Y; Q | Z) = 0$. This proves the convexity.

To prove Part 2, we invoke the support lemma [20, p. 310] three times, once for each of the auxiliary random variables $U, V, W$. The external random variable $U$ must have $|\mathcal{Y}| - 1$ letters to preserve $p(y)$ plus five more to preserve the expressions $I(Y; U | Z)$, $I(Z; V | U, X)$, $I(X; W | U, V, Z)$ and the distortions $E\, d_x(X, \hat{X}(U, V, Z))$ and $E\, d_z(Z, \hat{Z}(U, W, X))$. Note that the joint $p(x, y, z)$ is preserved because of the Markov form $U - Y - X - Z$, and the structure of the joint distribution given in (4) does not change.

We fix $U$, which now has a bounded cardinality, and apply the support lemma to bound $V$. The external random variable $V$ must have $|\mathcal{U}||\mathcal{Z}| - 1$ letters to preserve $p(u, z)$ plus four more to preserve the expressions $I(Z; V | U, X)$, $I(X; W | U, V, Z)$ and the distortions $E\, d_x(X, \hat{X}(U, V, Z))$, $E\, d_z(Z, \hat{Z}(U, W, X))$.
Note that because of the Markov structure $V - (U, Z) - (X, Y)$ the joint distribution $p(u, z, x, y)$ does not change.

Finally, we fix $U, V$, which now have bounded cardinalities, and apply the support lemma to bound $W$. The external random variable $W$ must have $|\mathcal{U}||\mathcal{V}||\mathcal{X}| - 1$ letters to preserve $p(u, v, x)$ plus two more to preserve the expression $I(X; W | U, V, Z)$ and the distortion $E\, d_z(Z, \hat{Z}(U, W, X))$. Note that because of the Markov structure $W - (U, V, X) - (Z, Y)$ the joint distribution $p(u, v, x, y, z)$ does not change.

APPENDIX C
PROOF OF LEMMA 13

Since $W, X, Z$ are jointly Gaussian, we have $E[X | W, Z] = \alpha W + \beta Z$ for some scalars $\alpha, \beta$. Furthermore, we have

$$X = \alpha W + \beta Z + N, \tag{97}$$

where $N$ is a Gaussian random variable independent of $(W, Z)$ with zero mean and variance $\sigma^2_{X|W,Z}$. Since $W$ is known to the encoder and decoder, we can subtract $\alpha W$ from $X$, and then using Wyner-Ziv coding for the Gaussian case [18] we obtain

$$R(D) = \frac{1}{2} \log \frac{\sigma^2_{X|W,Z}}{D}. \tag{98}$$

Obviously, one cannot achieve a rate smaller than this even if $Z$ is known to both the encoder and decoder, and therefore this is the achievable region.

REFERENCES

[1] A. H. Kaspi. Two-way source coding with a fidelity criterion. IEEE Trans. Inf. Theory, 31(6):735–740, 1985.
[2] A. D. Wyner and J. Ziv. The rate-distortion function for source coding with side information at the decoder. IEEE Trans. Inf. Theory, 22(1):1–10, 1976.
[3] A. D. Wyner. On source coding with side-information at the decoder. IEEE Trans. Inf. Theory, 21:294–300, 1975.
[4] R. Ahlswede and J. Körner. Source coding with side information and a converse for degraded broadcast channels. IEEE Trans. Inf. Theory, 21(6):629–637, 1975.
[5] A. Kaspi. Rate-distortion for correlated sources with partially separated encoders. Ph.D. dissertation, 1979.
[6] A. Kaspi and T. Berger. Rate-distortion for correlated sources with partially separated encoders. IEEE Trans. Inf. Theory, 28:828–840, 1982.
[7] D. Vasudevan and E. Perron. Cooperative source coding with encoder breakdown. In Proc. International Symposium on Information Theory (ISIT), Nice, France, June 2007.
[8] H. Permuter, Y. Steinberg, and T. Weissman. Rate-distortion with a limited-rate helper to the encoder and decoder. Available at http://arxiv.org/abs/0811.4773v1, Nov. 2008.
[9] T. Berger and R. W. Yeung. Multiterminal source encoding with one distortion criterion. IEEE Trans. Inf. Theory, 35:228–236, 1989.
[10] Y. Oohama. Gaussian multiterminal source coding. IEEE Trans. Inf. Theory, 43:1912–1923, 1997.
[11] Y. Oohama. Rate-distortion theory for Gaussian multiterminal source coding systems with several side informations at the decoder. IEEE Trans. Inf. Theory, 51:2577–2593, 2005.
[12] A. B. Wagner, S. Tavildar, and P. Viswanath. Rate region of the quadratic Gaussian two-encoder source-coding problem. IEEE Trans. Inf. Theory, 54:1938–1961, 2008.
[13] S. Tavildar, P. Viswanath, and A. B. Wagner. The Gaussian many-help-one distributed source coding problem. Submitted to IEEE Trans. Inf. Theory. Available at http://arxiv.org/abs/0805.1857, 2008.
[14] A. Maor and N. Merhav. Two-way successively refined joint source-channel coding. IEEE Trans. Inf. Theory, 52(4):1483–1494, 2006.
[15] J. Pearl. Causality: Models, Reasoning and Inference. Cambridge Univ. Press, 2000.
[16] G. Kramer. Capacity results for the discrete memoryless network. IEEE Trans. Inf. Theory, IT-49:4–21, 2003.
[17] T. M. Cover and J. A. Thomas. Elements of Information Theory. Wiley, New York, 2nd edition, 2006.
[18] A. D. Wyner. The rate-distortion function for source coding with side information at the decoder-II: General sources. Information and Control, 38:60–80, 1978.
[19] Y. Steinberg. Coding for channels with rate-limited side information at the decoder, with applications. IEEE Trans. Inf. Theory, 54:4283–4295, 2008.
[20] I. Csiszár and J. Körner. Information Theory: Coding Theorems for Discrete Memoryless Systems. Academic, New York, 1981.
