Generalization of Jeffreys divergence based priors for Bayesian hypothesis testing

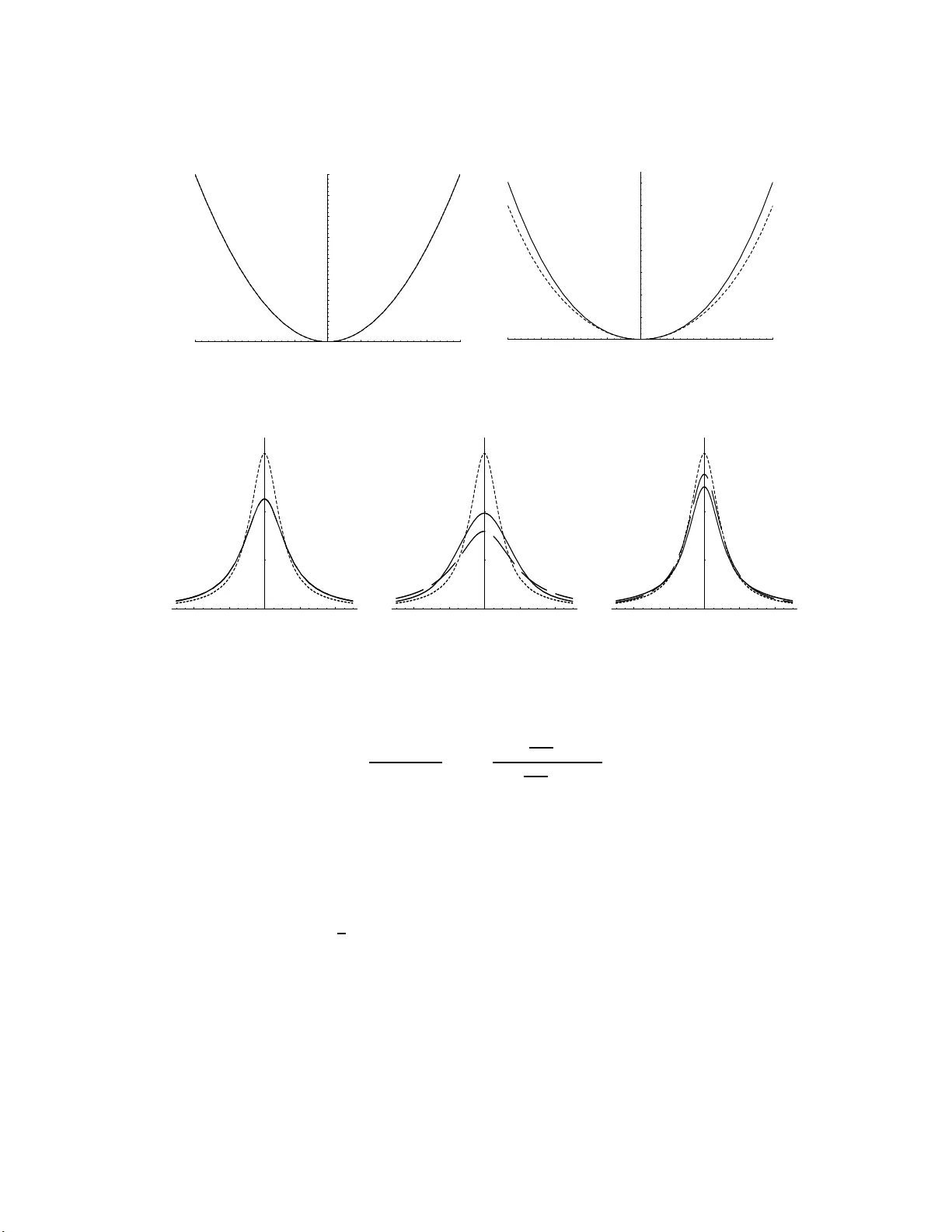

In this paper, we introduce objective proper prior distributions for hypothesis testing and model selection based on measures of divergence between the competing models; we call them divergence-based (DB) priors. DB priors have simple forms and desira…

Authors: M.J. Bayarri, G. Garcia-Donato