Gaussian Interference Networks: Sum Capacity in the Low Interference Regime and New Outer Bounds on the Capacity Region

Establishing the capacity region of a Gaussian interference network is an open problem in information theory. Recent progress on this problem has led to the characterization of the capacity region of a general two user Gaussian interference channel w…

Authors: V. Sreekanth Annapureddy, Venugopal V. Veeravalli

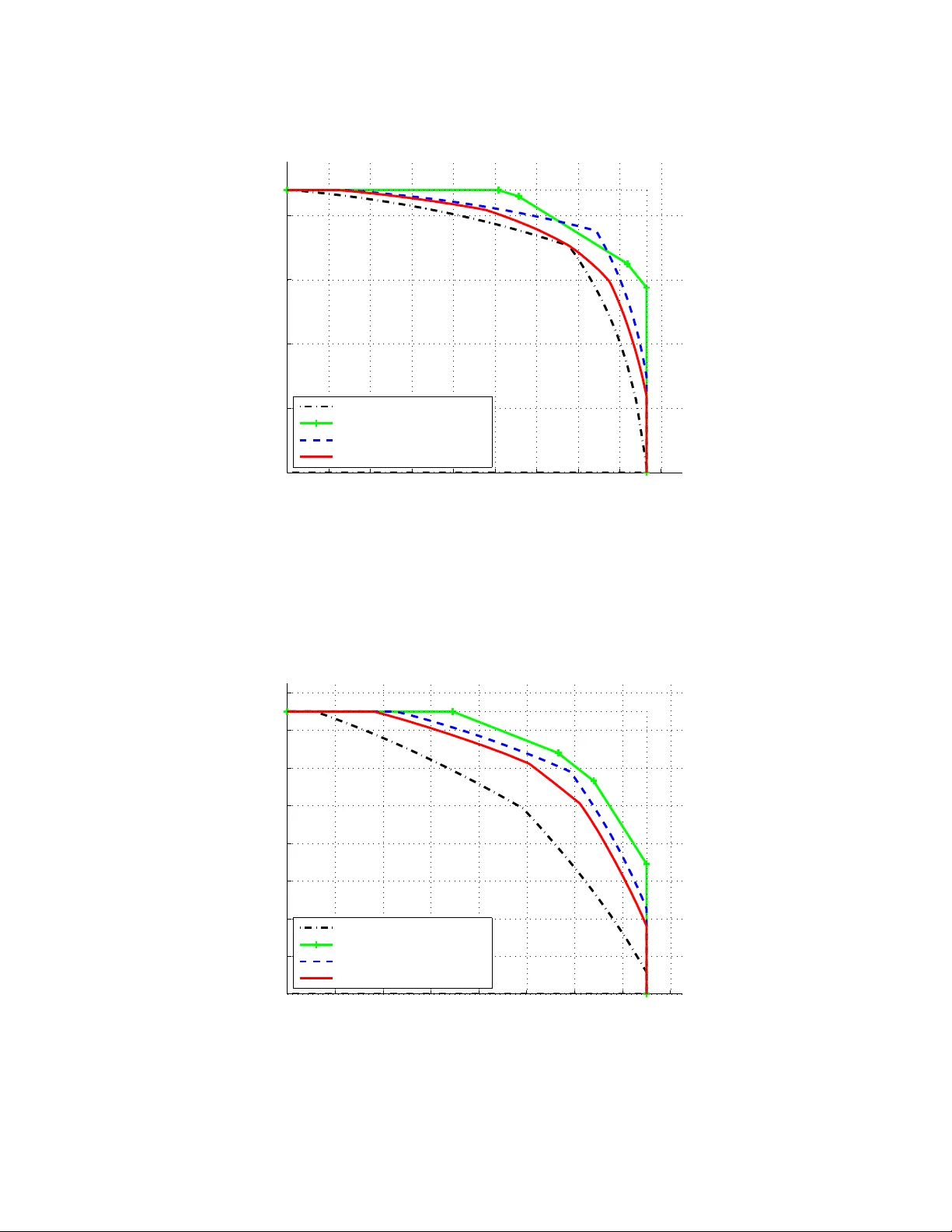

Submitted to IEEE Transactions on Information Theory, February 2008. Revised Nov 2008.

V. Sreekanth Annapureddy and Venugopal V. Veeravalli* ({vannapu2,vvv}@uiuc.edu)

Abstract

Establishing the capacity region of a Gaussian interference network is an open problem in information theory. Recent progress on this problem has led to the characterization of the capacity region of a general two-user Gaussian interference channel within one bit. In this paper, we develop new, improved outer bounds on the capacity region. Using these bounds, we show that treating interference as noise achieves the sum capacity of the two-user Gaussian interference channel in a low interference regime, where the interference parameters are below certain thresholds. We then generalize our techniques and results to Gaussian interference networks with more than two users. In particular, we demonstrate that the total interference threshold, below which treating interference as noise achieves the sum capacity, increases with the number of users.

Index Terms

Weak interference channel, genie-aided bound, treating interference as noise.

*The authors are with the Coordinated Science Laboratory and the Department of Electrical and Computer Engineering, University of Illinois at Urbana-Champaign, Urbana, IL 61801 USA. This research was supported in part by the NSF award CCF 0431088, through the University of Illinois, by a Vodafone Foundation Graduate Fellowship, and a grant from Texas Instruments. This paper was presented in part at the Information Theory and Applications (ITA) workshop, UCSD, San Diego CA, January 2008 [1] and at the International Symposium on Information Theory (ISIT), Toronto, Canada, July 2008 [2].

I. INTRODUCTION

In his celebrated paper [3], Shannon established the capacity of the additive white Gaussian noise (AWGN) channel, where the performance is limited by thermal noise. In multiuser wireless networks, the performance is also limited by the interference from other users sharing the same spectrum. Unlike thermal noise, interference has a definite structure since it is generated by other users. Can this structure be exploited to decrease the uncertainty and thus improve the performance of the communication network? If so, what are the optimal signaling strategies? In this paper, we establish the somewhat counter-intuitive result that exploiting the structure of the interference in Gaussian interference channels does not improve the overall system throughput in a low interference regime. In other words, it is possible to treat interference as noise and still achieve the maximum possible throughput, if the interference levels are below certain thresholds.

Fig. 1. Two-user symmetric Gaussian interference channel (inputs $X_1$, $X_2$; outputs $Y_1$, $Y_2$; noise terms $Z_1$, $Z_2$; cross gain $h$).

Interference management is of vital importance in wireless communication systems, with several users contending for the same limited spectrum. As a first step towards an information-theoretic study of interference management, consider two users sharing a wireless channel as shown in Figure 1, where each user's receiver is interested in only the information transmitted by the corresponding transmitter. Each user's rate of communication is limited by the Gaussian noise at the receiver and the interference caused by the other user. Carleial [4] showed that interference does not reduce the capacity of such a two-user Gaussian interference channel in the very strong interference setting, where each receiver can completely cancel the interference by exploiting its structure.
Subsequently, the capacity region was determined in the strong interference setting [5], [6], where it was shown that each user can decode the information transmitted to the other user. Establishing the capacity region in the other regimes remains an open problem.

The best known achievable region for the two-user Gaussian interference channel is based on the Han-Kobayashi (HK) scheme [5], [7]. Here the users split their messages into private and common messages, and each user jointly decodes its own messages and the common message of the interfering user. This is in general a sophisticated scheme, requiring multi-user encoders and decoders and coordination between the users. What we establish in this paper is that if the interference levels are low enough, then the receivers can treat interference as noise, and single-user encoders and decoders can be employed without any loss in sum capacity.

In order to establish the sum capacity in the low interference regime, we need to prove a converse, i.e., derive an outer bound on the sum capacity that matches the sum rate achieved by treating interference as noise. The concept of a genie giving side information to the receivers was used in [8], [9] to derive outer bounds on the capacity region. Since the receivers can choose not to use the side information, the capacity region of the genie-aided channel is an obvious outer bound to the capacity region of the interference channel. In [9], a specific set of genie-aided outer bounds is shown to be within one bit of the capacity region. We show that the bounding technique developed in [9] is applicable to a wider class of genie signals. We further show that if the channel parameters satisfy a condition for low interference, the genie can be selected so that the resulting genie-aided outer bound matches the sum rate achievable by treating interference as noise.
With this wider class of genie signals and using the entropy power inequality [10], we also derive outer bounds on the entire capacity region that are tighter than existing outer bounds. Similar results have also been established independently by Shang et al. in [11] and Motahari et al. in [12].

We then generalize the results to Gaussian interference networks with more than two users. Using a genie similar to that used for the two-user channel, we derive low interference regime conditions for the many-to-one interference channel, where the interference is experienced by only one user, and the one-to-many interference channel, where the interference is generated by only one user. We also propose a new genie construction, where each receiver is provided with multiple genie signals, for any arbitrary Gaussian interference network. This genie is a generalization of the genie used in [9], and the purpose of this generalization is to develop results analogous to [9] for arbitrary Gaussian interference networks. We show that treating interference as noise with Gaussian inputs achieves the sum capacity of the vector genie-aided channel. As done for the two-user channel, this outer bound can be tightened to establish the sum capacity in a low interference regime. We tighten the bound for a three-user symmetric Gaussian interference channel, and demonstrate the existence of channels for which treating interference as noise is optimal, but the total interference to noise ratio (INR) is greater than the INR threshold of the two-user interference channel.

A. Notation and Organization

We use the following notation. For deterministic objects, we use lowercase letters for scalars and uppercase letters in blackboard bold font for matrices. For example, we use $h$ to denote a deterministic scalar and $\mathbb{H}$ to denote a deterministic matrix. For random objects, we use uppercase letters for scalars, and underlined uppercase letters for vectors.
Random objects with superscripts denote sequences of the random objects in time. For example, we use $X$ to denote a random scalar, $\underline{X}$ to denote a random vector, and $X^n$ and $\underline{X}^n$ to denote the sequences of length $n$ of the random scalars and vectors, respectively. We use $\mathrm{Cov}(X)$ to denote the variance of a random variable $X$, and $\mathrm{Cov}(X \mid Y)$ to denote the minimum mean square error in estimating the random variable $X$ from the random variable $Y$, with similar notation for random vectors. We use $\mathcal{N}(\mu, \sigma^2)$ to denote the Gaussian distribution with mean $\mu$ and variance $\sigma^2$, and $\mathcal{N}(\underline{\mu}, \Sigma)$ to denote the Gaussian vector distribution with mean $\underline{\mu}$ and covariance matrix $\Sigma$. We use $h(\cdot)$ to denote the differential entropy of a continuous random variable or vector and $I(\cdot\,;\cdot)$ to denote the mutual information.

The rest of the paper is organized as follows. In Section II, we introduce the model for the Gaussian interference network that we study. In Section III, we summarize mathematical results such as the entropy power inequality and prove some new results that are required in establishing our new outer bounds. In Section IV, we review the existing bounds on the capacity region of the two-user Gaussian interference channel. In Section V, we establish the sum capacity of the two-user Gaussian interference channel in a low interference regime. In Section VI, we present new outer bounds on the capacity region of the two-user channel. In Section VII, we present extensions of our results on the sum capacity in the low interference regime to Gaussian interference networks with more than two users. In Section VIII, we provide some concluding remarks.

II. INTERFERENCE NETWORK MODEL

Consider a Gaussian interference network with $M$ users, i.e., $M$ pairs of transmitters and receivers, where no user is interested in the information transmitted to the other users.
Over one symbol period, the channel is described by

$$Y_r = \sum_{t=1}^{M} h_{rt} X_t + Z_r, \qquad 1 \le r \le M \tag{1}$$

where $X_t$ is the signal transmitted by transmitter $t$, $h_{rt}$ is the fixed channel gain from transmitter $t$ to receiver $r$, and the receiver noise terms $\{Z_r\}_{r=1}^{M}$ are assumed to be zero mean, unit variance, independent Gaussian random variables. Furthermore, the noise is assumed to be independent and identically distributed (i.i.d.) in time. Transmitter $t$ has an average power constraint $P_t$. In vector notation, (1) is equivalent to

$$\underline{Y} = \mathbb{H}\,\underline{X} + \underline{Z} \tag{2}$$

where $\mathbb{H}$ is a deterministic $M \times M$ matrix with elements $\{h_{rt}\}$. The interference network is said to be in standard form [13] if $h_{rt} = 1$ for all $r = t$. Any interference network (2) can be expressed in an equivalent standard form for the purposes of an information-theoretic analysis.

For each user $i$, let the message index $m_i$ be uniformly distributed over $\{1, 2, \ldots, 2^{nR_i}\}$, and let $\mathcal{C}_i(n)$ be a code consisting of an encoding function

$$X_i^n : \{1, 2, \ldots, 2^{nR_i}\} \to \mathbb{R}^n$$

satisfying the power constraint

$$\|X_i^n(m_i)\|^2 \le nP_i, \qquad \forall\, m_i \in \{1, 2, \ldots, 2^{nR_i}\}$$

and a decoding function

$$g_i : \mathbb{R}^n \to \{1, 2, \ldots, 2^{nR_i}\}.$$

The corresponding probability of decoding error $\lambda_i(n)$ is defined as $P\{m_i \ne g_i(Y_i^n)\}$. A rate tuple $(R_1, R_2, \ldots, R_M)$ is said to be achievable if there exists a sequence of codes $\{\mathcal{C}_1(n), \mathcal{C}_2(n), \ldots, \mathcal{C}_M(n)\}_{n=1}^{\infty}$ such that the error probabilities $\lambda_1(n), \lambda_2(n), \ldots, \lambda_M(n)$ all go to zero as $n$ goes to infinity. The capacity region is the closure of the set of all achievable rate tuples.

III. MATHEMATICAL PRELIMINARIES

In this section, we review the information inequalities that are useful in establishing our new outer bounds. The first result is a generalization of the maximum entropy theorem.
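Consider a sequence of random variables $\{X_j\}_{j=1}^{n}$ with average power constraint $\sum_{j=1}^{n} E[X_j^2] \le nP$. It is well known that

$$h(X^n) \le \frac{n}{2}\log(2\pi e P),$$

and equality is achieved if and only if (iff) $\{X_j\}_{j=1}^{n}$ are i.i.d. $\mathcal{N}(0, P)$ [10, Theorem 8.6.5]. The following lemma is a generalization of this result.

Lemma 1: Let $\underline{X}$ be a random vector, and let $\underline{Y}$ and $\underline{S}$ be noisy observations of $\underline{X}$:

$$\underline{Y} = A\underline{X} + \underline{Z}, \qquad \underline{S} = B\underline{X} + \underline{W}$$

where $\underline{Z}$ and $\underline{W}$ are correlated, zero-mean, Gaussian random vectors, and $A$ and $B$ are real valued matrices. Consider the random vector sequence $\underline{X}^n = (\underline{X}_1, \ldots, \underline{X}_n)$ with the covariance constraint $\frac{1}{n}\sum_{j=1}^{n} \Sigma_{x_j} \preceq \Sigma_x$, where $\Sigma_{x_j}$ is the covariance matrix of $\underline{X}_j$. Furthermore, let $\underline{Y}^n$ and $\underline{S}^n$ be the corresponding observations when the noise vector sequences $\underline{Z}^n$ and $\underline{W}^n$ each have components that are i.i.d. in time. Then, we have

$$h(\underline{Y}^n \mid \underline{S}^n) \le n\,h(\underline{Y}_G \mid \underline{S}_G)$$

where $\underline{Y}_G$ and $\underline{S}_G$ are $\underline{Y}$ and $\underline{S}$ when $\underline{X} = \underline{X}_G \sim \mathcal{N}(0, \Sigma_x)$.

Proof: Let $Q$ be a time sharing random variable taking values from $1$ to $n$ with equal probability. Let $\tilde{\underline{X}}_G \sim \mathcal{N}\big(0, \frac{1}{n}\sum_{i=1}^{n}\Sigma_{x_i}\big)$, and let $\tilde{\underline{Y}}_G$ and $\tilde{\underline{S}}_G$ be the corresponding $\underline{Y}$ and $\underline{S}$. Then

$$\begin{aligned}
h(\underline{Y}^n \mid \underline{S}^n) &= \sum_{i=1}^{n} h(\underline{Y}_i \mid \underline{Y}^{i-1}, \underline{S}^n) \\
&\overset{(a)}{\le} \sum_{i=1}^{n} h(\underline{Y}_i \mid \underline{S}_i) \\
&= n\,h(\underline{Y}_Q \mid \underline{S}_Q, Q) \\
&\overset{(b)}{\le} n\,h(\underline{Y}_Q \mid \underline{S}_Q) \\
&\overset{(c)}{\le} n\,h(\tilde{\underline{Y}}_G \mid \tilde{\underline{S}}_G)
\end{aligned}$$

where steps (a) and (b) follow from the fact that conditioning reduces entropy, and step (c) follows because the Gaussian distribution maximizes the conditional differential entropy for a given covariance constraint [14, Lemma 1]. Now letting $\hat{\underline{X}}_G \sim \mathcal{N}\big(0, \Sigma_x - \frac{1}{n}\sum_{i=1}^{n}\Sigma_{x_i}\big)$, and further assuming that $\hat{\underline{X}}_G$ is independent of $\tilde{\underline{X}}_G$, $\underline{Z}$ and $\underline{W}$, we have

$$\begin{aligned}
h(\tilde{\underline{Y}}_G \mid \tilde{\underline{S}}_G) &= h(A\tilde{\underline{X}}_G + \underline{Z} \mid B\tilde{\underline{X}}_G + \underline{W}) \\
&= h\big(A(\tilde{\underline{X}}_G + \hat{\underline{X}}_G) + \underline{Z} \mid B(\tilde{\underline{X}}_G + \hat{\underline{X}}_G) + \underline{W}, \hat{\underline{X}}_G\big) \\
&\overset{(d)}{\le} h\big(A(\tilde{\underline{X}}_G + \hat{\underline{X}}_G) + \underline{Z} \mid B(\tilde{\underline{X}}_G + \hat{\underline{X}}_G) + \underline{W}\big) \\
&= h(\underline{Y}_G \mid \underline{S}_G)
\end{aligned}$$

where step (d) follows from the fact that conditioning reduces entropy.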
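The Gaussian quantity $h(\underline{Y}_G \mid \underline{S}_G)$ on the right-hand side of Lemma 1 has a closed form, which the following minimal Python sketch evaluates. It is an illustration rather than part of the paper: the function name, the argument names, and the choice of nats as the unit are assumptions, and the noise cross-covariance $E[\underline{Z}\,\underline{W}^T]$ is passed explicitly as `sigma_zw`. The sketch uses the standard Schur complement formula for the conditional covariance of jointly Gaussian vectors.

```python
import numpy as np


def gaussian_conditional_entropy(A, B, sigma_x, sigma_z, sigma_w, sigma_zw):
    """Return h(Y_G | S_G) in nats for Y = A X + Z and S = B X + W.

    X ~ N(0, sigma_x) is independent of the jointly Gaussian noise (Z, W).
    sigma_z and sigma_w are the noise covariances and sigma_zw = E[Z W^T].
    All names here are hypothetical and chosen for this illustration.
    """
    A = np.atleast_2d(A).astype(float)
    B = np.atleast_2d(B).astype(float)
    sigma_x = np.atleast_2d(sigma_x).astype(float)
    sigma_z = np.atleast_2d(sigma_z).astype(float)
    sigma_w = np.atleast_2d(sigma_w).astype(float)
    sigma_zw = np.atleast_2d(sigma_zw).astype(float)

    # Second-order statistics of the Gaussian observations.
    cov_y = A @ sigma_x @ A.T + sigma_z
    cov_s = B @ sigma_x @ B.T + sigma_w
    cov_ys = A @ sigma_x @ B.T + sigma_zw

    # Conditional covariance Cov(Y | S) via the Schur complement.
    cov_y_given_s = cov_y - cov_ys @ np.linalg.solve(cov_s, cov_ys.T)

    # Differential entropy of a k-dimensional Gaussian with this covariance.
    k = cov_y_given_s.shape[0]
    _, logdet = np.linalg.slogdet(cov_y_given_s)
    return 0.5 * (k * np.log(2.0 * np.pi * np.e) + logdet)
```

In the scalar case with $A = B = 1$ and independent unit-variance noises, the conditional variance is $(2P+1)/(P+1)$, which grows with $P$. This matches the monotonicity used in step (d) of the proof, where enlarging the input covariance cannot decrease the Gaussian conditional entropy.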
The following is the celebrated entropy power inequality (EPI) [10, Theorem 17.7.3] originally proposed by Shannon.

Lemma 2 (EPI): For any independent random sequences $X^n$ and $Z^n$,

$$2^{\frac{2}{n}h(X^n + Z^n)} \ge 2^{\frac{2}{n}h(X^n)} + 2^{\frac{2}{n}h(Z^n)}.$$

Often, we are interested in the case where the sequence $Z^n$ is i.i.d. Gaussian, in which case we have the following corollary.

Corollary 1: Let $X^n$ be a random sequence and $Z^n$ be an independent random sequence with components that are i.i.d. $\mathcal{N}(0, \sigma^2)$. Then

$$h(X^n + Z^n) \ge \frac{n}{2}\log\left(2^{\frac{2}{n}h(X^n)} + 2\pi e\sigma^2\right).$$

Equivalently,

$$h(X^n) \le \frac{n}{2}\log\left(2^{\frac{2}{n}h(X^n + Z^n)} - 2\pi e\sigma^2\right).$$

As a corollary of the EPI, we have the worst case noise result, which says that if the input distribution is i.i.d. Gaussian, then the noise that minimizes the mutual information under an average power constraint is also i.i.d. Gaussian. (See the mutual information game problem: 9.21 in [10].) With a little abuse of notation, the worst case noise results in the scalar and vector cases are as follows.

Lemma 3 (Worst Case Noise: Scalar Case): Let $X^n$ be a random sequence with average power constraint $P$, i.e., $\sum_{j=1}^{n} E[X_j^2] \le nP$, and let $Z^n$ be an independent random sequence with components that are i.i.d. $\mathcal{N}(0, \sigma^2)$. Then

$$h(X^n) - h(X^n + Z^n) \le n\,h(X_G) - n\,h(X_G + Z)$$

where $X_G \sim \mathcal{N}(0, P)$, and equality is achieved if $X^n = X_G^n$, where $X_G^n$ denotes the random sequence with components that are i.i.d. $\mathcal{N}(0, P)$.

Proof: The result follows from the EPI (see the proof of Lemma 5 below); a different proof is given in [15]. Interestingly, the result can also be established as a direct consequence of Lemma 1, as seen below in the proof of Lemma 4.

Lemma 4 (Worst Case Noise: Vector Case): Let $\underline{X}^n$ be a random vector sequence with an average covariance constraint, i.e., $\sum_{j=1}^{n} \Sigma_{x_j} \preceq n\Sigma_x$, and let $\underline{Z}^n$ be an independent random vector sequence, with components that are i.i.d. $\mathcal{N}(0, \Sigma_z)$.
Then

$$h(\underline{X}^n) - h(\underline{X}^n + \underline{Z}^n) \le n\,h(\underline{X}_G) - n\,h(\underline{X}_G + \underline{Z})$$

where $\underline{X}_G \sim \mathcal{N}(0, \Sigma_x)$, and equality is achieved if $\underline{X}^n = \underline{X}_G^n$, where $\underline{X}_G^n$ denotes the random sequence with components that are i.i.d. $\mathcal{N}(0, \Sigma_x)$.

Proof: Although the proof follows from results given in [15], we provide a different, simple proof based on Lemma 1:

$$\begin{aligned}
h(\underline{X}^n) - h(\underline{X}^n + \underline{Z}^n) &= -I(\underline{Z}^n; \underline{X}^n + \underline{Z}^n) \\
&= -h(\underline{Z}^n) + h(\underline{Z}^n \mid \underline{X}^n + \underline{Z}^n) \\
&= -n\,h(\underline{Z}) + h(\underline{Z}^n \mid \underline{X}^n + \underline{Z}^n) \\
&\overset{(a)}{\le} -n\,h(\underline{Z}) + n\,h(\underline{Z} \mid \underline{X}_G + \underline{Z}) \\
&= n\,h(\underline{X}_G) - n\,h(\underline{X}_G + \underline{Z})
\end{aligned}$$

where step (a) follows from Lemma 1.

Remark 1: As we have noted in Lemma 3, the scalar case of the worst case noise result is a corollary of the EPI. However, in the vector case, Lemma 4 does not follow from the EPI, unless $\Sigma_x$ is a scaled version of $\Sigma_z$.

We now provide an extension of the scalar version of the worst case noise result, which is useful in deriving outer bounds on the sum capacity of interference networks with more than two users. This result might also be useful in other multiuser information theory problems.

Lemma 5: For $i = 1, 2, \ldots, M$, let $X_i^n$ be a random sequence with average power constraint $P_i$, i.e., $\sum_{j=1}^{n} E[X_{ij}^2] \le nP_i$. Further, let $Z^n$ be a sequence with components that are i.i.d. $\mathcal{N}(0, \sigma^2)$. Assume that the sequences $X_i^n$ are independent of each other and also independent of $Z^n$, and let $X_{iG} \sim \mathcal{N}(0, P_i)$. Then

$$\sum_{i=1}^{M} \lambda_i h(X_i^n) - h\left(\sum_{i=1}^{M} X_i^n + Z^n\right) \le n\sum_{i=1}^{M} \lambda_i h(X_{iG}) - n\,h\left(\sum_{i=1}^{M} X_{iG} + Z\right) \tag{3}$$

for all $\lambda_i \ge \dfrac{P_i}{\sum_{k=1}^{M} P_k + \sigma^2}$, and equality is achieved in (3) if $X_i^n = X_{iG}^n$ for $i = 1, \ldots, M$, where $X_{iG}^n$ denotes the random sequence with components that are i.i.d. $\mathcal{N}(0, P_i)$.

Proof: We will prove the lemma for

$$\lambda_i = \frac{P_i}{\sum_{k=1}^{M} P_k + \sigma^2}.$$

The result with $\lambda_i > \dfrac{P_i}{\sum_{k=1}^{M} P_k + \sigma^2}$ follows because the additional entropy terms have positive coefficients and, by the maximum entropy theorem, are maximized by $X_{iG}^n$.
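Denote $\frac{h(X_i^n)}{n}$ by $t_i$ and $2\pi e\sigma^2$ by $c$. Using the EPI (Lemma 2), we have

$$\sum_{i=1}^{M} \lambda_i h(X_i^n) - h\left(\sum_{i=1}^{M} X_i^n + Z^n\right) \le n\sum_{i=1}^{M} \lambda_i t_i - n\,\frac{1}{2}\log\left(\sum_{i=1}^{M} 2^{2t_i} + c\right).$$

Let $\mathbf{t} = (t_1, \ldots, t_M)$ and

$$f(\mathbf{t}) = \sum_{i=1}^{M} \lambda_i t_i - \frac{1}{2}\log\left(\sum_{i=1}^{M} 2^{2t_i} + c\right).$$

The concavity of $f$ in $\mathbf{t}$ follows from the convexity of the log-sum-exp function [16]. Now, using

$$\frac{\partial f}{\partial t_i} = \lambda_i - \frac{2^{2t_i}}{\sum_{k=1}^{M} 2^{2t_k} + c}$$

it can be easily checked that $\{t_j = \frac{1}{2}\log(2\pi e P_j)\}_{j=1}^{M}$ satisfy $\frac{\partial f}{\partial t_i} = 0$ for all $i$. Thus, $\{t_j = \frac{1}{2}\log(2\pi e P_j)\}_{j=1}^{M}$ maximizes the function $f(\mathbf{t})$, and hence

$$\begin{aligned}
\sum_{i=1}^{M} \lambda_i h(X_i^n) - h\left(\sum_{i=1}^{M} X_i^n + Z^n\right) &\le n f(\mathbf{t}) \\
&\le n\sum_{i=1}^{M} \lambda_i \frac{1}{2}\log(2\pi e P_i) - n\,\frac{1}{2}\log\left(2\pi e\sum_{i=1}^{M} P_i + 2\pi e\sigma^2\right) \\
&= n\sum_{i=1}^{M} \lambda_i h(X_{iG}) - n\,h\left(\sum_{i=1}^{M} X_{iG} + Z\right).
\end{aligned}$$

We now prove the following straightforward lemma, which is nevertheless useful in handling the side information provided by the genie in our genie-aided outer bounds.

Lemma 6: Let $\underline{X}^n$ be a random vector sequence, and let $\underline{Z}^n$ and $\underline{W}^n$ be (possibly correlated) zero-mean Gaussian random vector sequences, independent of $\underline{X}^n$ and i.i.d. in time. Then

$$h(\underline{X}^n + \underline{Z}^n \mid \underline{W}^n) = h(\underline{X}^n + \underline{V}^n)$$

where $\underline{V}^n$ is i.i.d. $\mathcal{N}(0, \mathrm{Cov}(\underline{Z} \mid \underline{W}))$.

Proof: Let $\hat{\underline{Z}}^n$ be the MMSE estimate of $\underline{Z}^n$ given $\underline{W}^n$. Then we have $\underline{Z}^n = \hat{\underline{Z}}^n + \underline{V}^n$. Now

$$\begin{aligned}
h(\underline{X}^n + \underline{Z}^n \mid \underline{W}^n) &= h(\underline{X}^n + \hat{\underline{Z}}^n + \underline{V}^n \mid \underline{W}^n) \\
&\overset{(a)}{=} h(\underline{X}^n + \underline{V}^n \mid \underline{W}^n) \\
&\overset{(b)}{=} h(\underline{X}^n + \underline{V}^n)
\end{aligned}$$

where step (a) follows because the MMSE estimate $\hat{\underline{Z}}^n$ is a function of $\underline{W}^n$, and step (b) follows because the observation $\underline{W}^n$ is independent of the MMSE error $\underline{V}^n$ and of $\underline{X}^n$.

Lemma 7: For any random vectors $\underline{X}$, $\underline{Y}$ and $\underline{S}$,

1) $I(\underline{X}; \underline{S} \mid \underline{Y}) = 0$ iff $\underline{X} - \underline{Y} - \underline{S}$ form a Markov chain.

2) $\underline{X} - \underline{Y} - \underline{S}$ form a Markov chain iff $\hat{\underline{S}}(\underline{X}, \underline{Y})$, the MMSE estimate of $\underline{S}$ given $(\underline{X}, \underline{Y})$, is equal to $\hat{\underline{S}}(\underline{Y})$, the MMSE estimate of $\underline{S}$ given $\underline{Y}$.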
3) Furthermore, if $X$, $Y$ and $S$ are Gaussian random variables such that

$$Y = X + Z, \qquad S = X + N$$

where the zero mean Gaussian random variables $Z$ and $N$ are independent of $X$, then $X - Y - S$ form a Markov chain iff $E[NZ] = E[Z^2]$.

Proof:

1) Claim 1 follows from Theorem 2.8 in [17].

2) If $X - Y - S$ form a Markov chain, then

$$\hat{S}(X, Y) = E[S \mid X, Y] = E[S \mid Y] = \hat{S}(Y).$$

To prove the converse, suppose $\hat{S}(X, Y) = \hat{S}(Y)$. Now let $E$ be the error in the estimation of $S$ given $(X, Y)$, which is independent of $X$ and $Y$. Then,

$$P_{S \mid X, Y}(s \mid X = x, Y = y) = P_E(s - \hat{S}(x, y)) = P_E(s - \hat{S}(y)) = P_{S \mid Y}(s \mid Y = y).$$

3) Observe that

$$\begin{aligned}
\hat{S}(X, Y) &= E[S \mid X, Y] = E[S \mid X, Z] \\
&= X + E[N \mid Z] \\
&= X + \frac{E[NZ]}{E[Z^2]}\,Z \\
&= \frac{E[NZ]}{E[Z^2]}\,Y + \left(1 - \frac{E[NZ]}{E[Z^2]}\right)X.
\end{aligned}$$

From Claim 2, it follows that $X - Y - S$ form a Markov chain iff $E[NZ] = E[Z^2]$.

Lemma 8: For any Gaussian random variables $X$, $Y$, $S_1$ and $S_2$, with $\underline{S} = (S_1, S_2)$, $I(X; \underline{S} \mid Y) = 0$ iff $I(X; S_1 \mid Y) = 0$ and $I(X; S_2 \mid Y) = 0$.

Proof: Since $I(X; S_i \mid Y) \le I(X; \underline{S} \mid Y)$ for $i = 1, 2$, the 'only if' part of the lemma is clear. It remains to prove the 'if' part of the lemma, and using Lemma 7, it is enough to show that $X - Y - \underline{S}$ form a Markov chain if $X - Y - S_1$ and $X - Y - S_2$ form Markov chains. Let $\hat{X}(Y)$ be the MMSE estimate of $X$ given $Y$ and $E$ be the error in the estimate. For $i = 1, 2$, since $X - Y - S_i$ form a Markov chain, it follows from Lemma 7 that $\hat{X}(Y, S_i) = \hat{X}(Y)$. Hence $E$ is independent of both $S_1$ and $S_2$. Since $E$, $S_1$ and $S_2$ are all Gaussian, $E$ is also independent of $\underline{S}$. Therefore $\hat{X}(Y, \underline{S}) = \hat{X}(Y)$, and hence $X - Y - \underline{S}$ form a Markov chain.

IV. TWO USER INTERFERENCE CHANNEL: EXISTING BOUNDS

The information-theoretic study of interference channels has mainly been limited to the two-user case, with the hope that the insights obtained from studying the two-user case can be generalized to an interference network with more than two users. With $M = 2$ in (2), we get the two-user Gaussian interference channel parameterized by $\{P_1, P_2, h_{12}, h_{21}\}$:

$$\begin{aligned}
Y_1 &= X_1 + h_{12}X_2 + Z_1 \\
Y_2 &= h_{21}X_1 + X_2 + Z_2
\end{aligned} \tag{4}$$

with average transmit power constraints $P_1$ and $P_2$ on users 1 and 2, respectively. The capacity region of this channel is known only in the very strong interference [4] and strong interference [5], [6] settings, where it can be established that both the users can decode all the transmitted messages, and thus the capacity region is the same as that of the compound multiple access channel. In the rest of this section, we summarize the existing bounds on the capacity region of the weak interference channel, where $h_{12} < 1$ and $h_{21} < 1$.

A. Inner Bounds

Simple schemes: In the interference free scenario, where $h_{12} = h_{21} = 0$, single-user Gaussian codebooks at the transmitters are obviously capacity-achieving. Thus, if the interference is low, a reasonable strategy is to treat interference as noise at the receivers, and employ single-user Gaussian codebooks at the transmitters to achieve the following sum rate.

Proposition 1 (Treating interference as noise): The sum capacity ($C_{\mathrm{sum}}$) of the two-user Gaussian interference channel (4) is lower bounded by

$$C_{\mathrm{sum}} \ge \frac{1}{2}\log\left(1 + \frac{P_1}{1 + h_{12}^2 P_2}\right) + \frac{1}{2}\log\left(1 + \frac{P_2}{1 + h_{21}^2 P_1}\right).$$

Clearly such a strategy will not work if the interference is moderate, in which case another simple alternative is to orthogonalize the users in time or frequency.

Sophisticated schemes: Interference, unlike noise, is generated by other users and hence has a definite structure.
Sophisticated schemes that exploit the interference structure could potentially perform better than the simple schemes described above. Han and Kobayashi introduced such a sophisticated scheme in [5], which results in the best known achievable region for the two-user channel. And while Chong, Motani and Garg have recently simplified the Han-Kobayashi region [7], it still remains formidable to compute.

B. Outer Bounds

The best known outer bounds to the capacity region of the two-user Gaussian interference channel are the one due to Sato, Costa and Kramer [18], [19], [8], which we refer to as the broadcast channel outer bound; and the one due to Etkin, Tse, and Wang [9], which we refer to as the ETW outer bound. In the rest of this section, we review these outer bounds. We also give a simple and more direct proof of the broadcast channel outer bound, and illustrate that it is a tightened version of the Z-channel sum rate outer bound [8], [20]. We make use of this connection to tighten the ETW outer bound in Section VI.

A salient feature of these outer bounds is that they are based on a genie providing side information to the receivers. Since the receivers can choose not to use the side information, the capacity region of the genie-aided channel is an obvious outer bound on the capacity region of the interference channel. Throughout this paper, we will assume that the side information is linear in the inputs with additive Gaussian noise that is i.i.d. in time. Thus, the side information will be Gaussian if all the inputs are Gaussian.

Some notation is required before proceeding further. The variable $S_r$ denotes the side information given to receiver $r$, $r = 1, 2$. The variable $X_{tG}$ denotes the zero-mean Gaussian random variable with variance $P_t$, $t = 1, 2$.
The variables $Y_{rG}$ and $S_{rG}$ denote the Gaussian outputs and side information at receiver $r$, respectively, that result when all the channel inputs are Gaussian, i.e., when $X_t = X_{tG}$, for $t = 1, 2$. The quantities $X_{tG}^n$, $Y_{rG}^n$ and $S_{rG}^n$ denote i.i.d. sequences of the corresponding Gaussian random variables.

C. Bounding Techniques

Consider the following possible ways of bounding the rate ($R_1$) of user 1. In each case $\epsilon_n \to 0$ as $n \to \infty$.

• No Side Information: If the receivers do not receive any side information, then $R_1$ can be bounded using Fano's inequality as follows:

$$\begin{aligned}
n(R_1 - \epsilon_n) &\le I(X_1^n; Y_1^n) \\
&= h(Y_1^n) - h(Y_1^n \mid X_1^n) \\
&\le n\,h(Y_{1G}) - h(h_{12}X_2^n + Z_1^n).
\end{aligned} \tag{5}$$

• Interference Free: Providing receiver 1 with the knowledge of the interfering signal $X_2$ can only increase the achievable rate $R_1$, hence

$$\begin{aligned}
n(R_1 - \epsilon_n) &\le I(X_1^n; Y_1^n, X_2^n) \\
&= I(X_1^n; Y_1^n \mid X_2^n) \\
&= h(Y_1^n \mid X_2^n) - h(Y_1^n \mid X_1^n, X_2^n) \\
&\overset{(b)}{=} h(X_1^n + Z_1^n) - n\,h(Y_{1G} \mid X_{1G}, X_{2G}) \\
&\overset{(c)}{=} h(h_{21}X_1^n + h_{21}Z_1^n) - n\,h(h_{21}Y_{1G} \mid X_{1G}, X_{2G})
\end{aligned} \tag{6}$$

where step (b) follows because $Y_1$ given $(X_1, X_2)$ is the Gaussian noise at the receiver, which is not a function of the input distributions. The scaling in step (c) is done for convenience.

• Genie-aided: Here a genie provides side information $S_1$ to receiver 1. As we stated earlier, we assume that the side information is linear in the inputs with additive Gaussian noise that is i.i.d. in time. For the two-user case, we further restrict our attention to genie signals such that, conditioned on the input sequence $X_i^n$, the sequence $S_i^n$ is i.i.d. Gaussian (this holds, for example, if $S_i^n = X_i^n + W_i^n$, where $W_i^n$ is i.i.d. Gaussian).
Then we can write

$$\begin{aligned}
n(R_1 - \epsilon_n) &\le I(X_1^n; Y_1^n, S_1^n) \\
&= I(X_1^n; S_1^n) + I(X_1^n; Y_1^n \mid S_1^n) \\
&= h(S_1^n) - h(S_1^n \mid X_1^n) + h(Y_1^n \mid S_1^n) - h(Y_1^n \mid S_1^n, X_1^n) \\
&\overset{(d)}{=} h(S_1^n) - n\,h(S_{1G} \mid X_{1G}) + h(Y_1^n \mid S_1^n) - h(Y_1^n \mid S_1^n, X_1^n) \\
&\overset{(e)}{\le} h(S_1^n) - n\,h(S_{1G} \mid X_{1G}) + n\,h(Y_{1G} \mid S_{1G}) - h(Y_1^n \mid S_1^n, X_1^n)
\end{aligned} \tag{7}$$

where step (d) holds because of the assumption on the genie signal, and step (e) follows from Lemma 1.

The term $nR_2$ can be bounded in similar ways:

• No Side Information:

$$n(R_2 - \epsilon_n) \le I(X_2^n; Y_2^n) \le n\,h(Y_{2G}) - h(h_{21}X_1^n + Z_2^n). \tag{8}$$

• Interference Free:

$$n(R_2 - \epsilon_n) \le I(X_2^n; Y_2^n \mid X_1^n) \le h(h_{12}X_2^n + h_{12}Z_2^n) - n\,h(h_{12}Y_{2G} \mid X_{1G}, X_{2G}). \tag{9}$$

• Genie-aided:

$$n(R_2 - \epsilon_n) \le I(X_2^n; Y_2^n, S_2^n) \le h(S_2^n) - n\,h(S_{2G} \mid X_{2G}) + n\,h(Y_{2G} \mid S_{2G}) - h(Y_2^n \mid S_2^n, X_2^n). \tag{10}$$

D. Etkin, Tse and Wang (ETW) Outer Bound [9]

If the genie signals are defined as

$$\begin{aligned}
S_1 &= h_{21}X_1 + Z_2 \\
S_2 &= h_{12}X_2 + Z_1
\end{aligned} \tag{11}$$

then the following relations hold true:

$$\begin{aligned}
h(Y_2^n \mid S_2^n, X_2^n) &= h(S_1^n) \\
h(Y_1^n \mid S_1^n, X_1^n) &= h(S_2^n).
\end{aligned} \tag{12}$$

Since $h_{12} \le 1$ and $h_{21} \le 1$, we can use the worst case noise result (Lemma 3) to obtain the following inequalities:

$$\begin{aligned}
h(h_{21}X_1^n + h_{21}Z_1^n) - h(h_{21}X_1^n + Z_2^n) &\le n\,h(h_{21}X_{1G} + h_{21}Z_1) - n\,h(h_{21}X_{1G} + Z_2) \\
h(h_{12}X_2^n + h_{12}Z_2^n) - h(h_{12}X_2^n + Z_1^n) &\le n\,h(h_{12}X_{2G} + h_{12}Z_2) - n\,h(h_{12}X_{2G} + Z_1).
\end{aligned} \tag{13}$$

The relations (12) and (13), together with the bounding techniques described in the previous subsection, lead succinctly to the outer bound on the capacity region given by Etkin, Tse and Wang [9]:

Lemma 9 (Etkin, Tse and Wang [9]): The capacity region of a two-user Gaussian interference channel with $h_{12} \le 1$ and $h_{21} \le 1$ is contained in the region:

$$R_1 \le I(X_{1G}; Y_{1G} \mid X_{2G}) \tag{14}$$

$$R_2 \le I(X_{2G}; Y_{2G} \mid X_{1G}) \tag{15}$$

$$R_1 + R_2 \le I(X_{1G}; Y_{1G} \mid X_{2G}) + I(X_{2G}; Y_{2G}) \tag{16}$$

$$R_1 + R_2 \le I(X_{1G}; Y_{1G}) + I(X_{2G}; Y_{2G} \mid X_{1G}) \tag{17}$$

$$R_1 + R_2 \le I(X_{1G}; Y_{1G}, S_{1G}) + I(X_{2G}; Y_{2G}, S_{2G}) \tag{18}$$

$$2R_1 + R_2 \le I(X_{1G}; Y_{1G} \mid X_{2G}) + I(X_{1G}; Y_{1G}) + I(X_{2G}; Y_{2G}, S_{2G}) \tag{19}$$

$$R_1 + 2R_2 \le I(X_{1G}; Y_{1G}, S_{1G}) + I(X_{2G}; Y_{2G} \mid X_{1G}) + I(X_{2G}; Y_{2G}) \tag{20}$$

where the genie signals $\{S_1, S_2\}$ are defined in (11).

Proof: The outer bounds immediately follow by choosing the appropriate bounding technique from Section IV-C, and using the relations (12) and (13), where necessary. For example, to derive the bound (17), use (5) and (9), and use the worst case noise result (13) to show that $h(h_{12}X_2^n + h_{12}Z_2^n) - h(h_{12}X_2^n + Z_1^n)$ is maximized by $X_{2G}^n$. To derive the bound (18), use (7) and (10), and use (12) to show that the right hand side (RHS) of (7) plus the RHS of (10) is maximized by $X_{1G}^n$ and $X_{2G}^n$.

Remark 2: The RHS terms in the outer bounds can easily be shown to be equivalent to those in Theorem 3 of [9] by making the following substitutions:

$$\begin{aligned}
I(X_{1G}; Y_{1G} \mid X_{2G}) &= \frac{1}{2}\log(1 + P_1) \\
I(X_{1G}; Y_{1G}) &= \frac{1}{2}\log\left(1 + \frac{P_1}{1 + h_{12}^2 P_2}\right) \\
I(X_{1G}; Y_{1G}, S_{1G}) &= \frac{1}{2}\log\left(1 + h_{21}^2 P_1 + \frac{P_1}{1 + h_{12}^2 P_2}\right)
\end{aligned}$$

and similar substitutions for the terms corresponding to user 2.
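The substitutions in Remark 2 make the sum rate bounds (16)-(18) easy to evaluate numerically. The Python sketch below is an illustration under stated assumptions: the function and dictionary key names are hypothetical, logarithms are taken to base 2 so that rates are in bits, the user-2 terms are obtained from the user-1 expressions by swapping indices, and the channel is assumed to satisfy $h_{12} \le 1$ and $h_{21} \le 1$ as required by Lemma 9. It also evaluates the lower bound of Proposition 1 so the two can be compared.

```python
import math


def half_log2(x):
    """Return 0.5 * log2(1 + x), a rate in bits for signal-to-noise ratio x."""
    return 0.5 * math.log2(1.0 + x)


def etw_sum_rate_terms(p1, p2, h12, h21):
    """Evaluate the sum rate bounds (16)-(18) and the Proposition 1 lower bound.

    Assumes the weak interference setting h12 <= 1 and h21 <= 1.
    """
    i1_free = half_log2(p1)                        # I(X1G; Y1G | X2G)
    i2_free = half_log2(p2)                        # I(X2G; Y2G | X1G)
    i1_tin = half_log2(p1 / (1.0 + h12**2 * p2))   # I(X1G; Y1G)
    i2_tin = half_log2(p2 / (1.0 + h21**2 * p1))   # I(X2G; Y2G)
    # Genie-aided terms with the ETW genie (11).
    i1_genie = half_log2(h21**2 * p1 + p1 / (1.0 + h12**2 * p2))
    i2_genie = half_log2(h12**2 * p2 + p2 / (1.0 + h21**2 * p1))
    return {
        "tin_lower_bound": i1_tin + i2_tin,  # Proposition 1
        "bound_16": i1_free + i2_tin,
        "bound_17": i1_tin + i2_free,
        "bound_18": i1_genie + i2_genie,
    }


def etw_sum_rate_outer_bound(p1, p2, h12, h21):
    """Return the tightest of the three sum rate bounds (16), (17) and (18)."""
    terms = etw_sum_rate_terms(p1, p2, h12, h21)
    return min(terms["bound_16"], terms["bound_17"], terms["bound_18"])
```

For example, `etw_sum_rate_terms(10.0, 10.0, 0.2, 0.2)` returns all four quantities for a symmetric channel. With $h_{12} = h_{21} = 0$ the outer bound and the lower bound coincide, and the difference between them for small cross gains indicates how much room the ETW genie leaves on the sum rate.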
The form of the outer bound gi ven in Lemma 9 is strikingly similar to the simplified HK region [7], and in fact a special case of the HK region is sho wn to be within one bit of the outer bound [9],[21]. E. Outer Bounds to One-Sided Interference Channels In deriving the bounds (16) and (17), one of the receivers is made interference free. Thus these outer bounds are deri ved for the one-sided interference channel, where only one user experiences the interference. Such a channel is also called the Z-channel, and we therefore refer to the outer bounds (16) and (17) as the Z-channel sum rate outer bounds. 16 In [19], Costa showed the equiv alence between the Z-channel and the degraded interference channel, and in [18], Sato sho wed that the capacity region of the degraded interference channel is contained in the capacity region of a broadcast channel. Using these ideas, Kramer established an outer bound to the capacity region of the Z-channel [8]. W e refer to this outer bound as the broadcast channel outer bound. W e sho w that broadcast channel outer bound is a tightened version of Z-channel sum rate outer bound, and thus pro vide a simple and direct proof of the broadcast channel outer bound. In deri ving the Z-channel sum rate outer bound (17), we have used the worst case noise result to relate the terms h ( h 12 X n 2 + h 12 Z n 2 ) and h ( h 12 X n 2 + Z n 1 ) . Instead, the EPI can be used to obtain a tighter relation, which results in the broadcast channel outer bound. Lemma 10 (Br oadcast channel outer bound [18], [19], [8]): The capacity region of a two-user Gaus- sian interference channel with h 12 ≤ 1 and h 21 ≤ 1 is contained in the region R 1 ≤ 1 2 log 1 + P 1 + h 2 12 P 2 1 + h 2 12 (2 2 R 2 − 1) . (21) By changing the order of the users, we also ha ve R 2 ≤ 1 2 log 1 + P 2 + h 2 21 P 1 1 + h 2 21 (2 2 R 1 − 1) . 
(22)

Proof: Using (5) and (9), we have

$$nR_1 \le \frac{n}{2} \log\big(2\pi e (1 + P_1 + h_{12}^2 P_2)\big) - h(h_{12} X_2^n + Z_1^n)$$
$$nR_2 \le h(h_{12} X_2^n + h_{12} Z_2^n) - \frac{n}{2} \log(2\pi e h_{12}^2).$$

From the EPI (Corollary 1), it follows that

$$h(h_{12} X_2^n + Z_1^n) \ge \frac{n}{2} \log\left( 2\pi e (1 - h_{12}^2) + 2^{\frac{2}{n} h(h_{12} X_2^n + h_{12} Z_2^n)} \right) \ge \frac{n}{2} \log\left( 2\pi e (1 - h_{12}^2) + 2\pi e h_{12}^2 2^{2R_2} \right).$$

Therefore,

$$nR_1 \le \frac{n}{2} \log\big(2\pi e (1 + P_1 + h_{12}^2 P_2)\big) - \frac{n}{2} \log\left( 2\pi e (1 - h_{12}^2) + 2\pi e h_{12}^2 2^{2R_2} \right) = \frac{n}{2} \log \left( \frac{1 + P_1 + h_{12}^2 P_2}{1 - h_{12}^2 + h_{12}^2 2^{2R_2}} \right).$$

Remark 3: The outer bound in Lemma 10 can be shown to be identical to that presented in Theorem 2 of [8].

F. Tightening the Outer Bounds

The outer bounds presented above can be tightened by using the following observations:

• Generalized Genie: In [9], the genie (11) is selected to satisfy (12). However, the techniques developed in [9] can be generalized to a larger class of genie signals, by using the worst case noise result to relate the terms in (12) instead of canceling the terms. In fact, one of the main results of this paper, the sum capacity of the two-user Gaussian interference channel in the low interference regime, is a direct consequence of this observation.

• EPI-based bounds: We have shown that the broadcast channel outer bound is a tightened version of the sum rate bounds (16) and (17), obtained by using the EPI instead of the worst case noise result. We can similarly apply the EPI to the other outer bounds in Lemma 9.

We now proceed to use these observations to tighten the existing outer bounds.

V. TWO USER INTERFERENCE CHANNEL: SUM CAPACITY IN LOW INTERFERENCE REGIME

Consider the limiting scenario where the interference parameters $\{h_{rt}\}_{r \ne t}$ go to zero uniformly. In the limit, when there is no interference, single user Gaussian codes are optimal.
Given this fact, a natural question to ask is the following: In terms of the optimality of single user Gaussian codes, is the transition from "no interference" to "interference" continuous? If any other strategy performs better than treating interference as noise, then this implies that the receivers are able to exploit the structure in the interference. On the other hand, for low enough interference levels the receivers may not be able to exploit such structure. Thus it is reasonable to expect the transition to be continuous. In this section, we establish this notion mathematically by showing that treating interference as noise indeed achieves the sum capacity in a low (but nonzero) interference regime.

A. Symmetric Interference Channel

The essential ideas and results on the sum capacity of the two-user interference channel are captured in the symmetric interference channel, for which $P_1 = P_2 = P$ and $h_{12} = h_{21} = h$. For this channel we shall establish the following result.

Theorem 1: For the symmetric interference channel, if the interference parameter $h$ satisfies the condition

$$|h + h^3 P| \le 0.5 \tag{23}$$

then treating interference as noise achieves the sum capacity, which is given by

$$C_{\mathrm{sum}} = \log \left( 1 + \frac{P}{1 + h^2 P} \right). \tag{24}$$

Since the achievability part of the theorem is obvious, we only need to establish an upper bound on $C_{\mathrm{sum}}$ that matches the expression given on the RHS of (24). We use the concept of the genie-aided outer bound (see Section IV-C), but with a class of genie signals that is more general than that used for the ETW bound of Section IV-D. In particular, we wish to choose the genie to produce the tightest possible upper bound. To this end, we introduce the following two qualities of a good genie.

• Useful Genie: The ETW genie (11) is useful in deriving an outer bound on the sum capacity of the interference channel.
The reason behind its usefulness is the property (12), which facilitates the derivation of the sum capacity of the genie-aided channel. Using (12), it can be shown that Gaussian inputs, which are i.i.d. in time and satisfy the power constraint with equality, are capacity achieving for the genie-aided channel. Hence the sum capacity of the genie-aided channel equals

$$I(X_{1G}; Y_{1G}, S_{1G}) + I(X_{2G}; Y_{2G}, S_{2G}). \tag{25}$$

Interestingly, there exists a larger class of genie signals for which the optimality of Gaussian inputs holds. We therefore define a genie to be useful if it results in a genie-aided channel whose sum capacity (is achieved by Gaussian inputs and) is given by (25). A second example of a useful genie signal is the interference removal genie, i.e., the genie that provides side information $S_1 = X_2$ to receiver 1 and side information $S_2 = X_1$ to receiver 2. Such a genie is clearly useful because the resulting genie-aided channel is the parallel Gaussian channel, whose sum capacity is easily seen to be given by (25). However, being too generous, such a genie does not result in a tight upper bound. This leads us to the notion of a smart genie.

• Smart Genie: A smart genie results in a tight upper bound on the sum capacity. More precisely, if Gaussian inputs are used, then the presence of the genie does not improve the sum rate, i.e.,

$$I(X_{1G}; Y_{1G}, S_{1G}) = I(X_{1G}; Y_{1G})$$
$$I(X_{2G}; Y_{2G}, S_{2G}) = I(X_{2G}; Y_{2G}).$$

An example of a smart genie is one that does not interact with the receivers at all; however, it is obviously not useful.

If the genie is useful and smart, then the sum capacity is upper bounded by $I(X_{1G}; Y_{1G}, S_{1G}) + I(X_{2G}; Y_{2G}, S_{2G}) = I(X_{1G}; Y_{1G}) + I(X_{2G}; Y_{2G})$, which is the sum rate achieved by treating interference as noise. Thus it is enough to show the existence of a genie that is both useful and smart to prove Theorem 1.
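The statement of Theorem 1 can be explored numerically. The sketch below is illustrative only: it assumes base-2 logarithms and nonnegative $h$ and $P$, and the helper names (`tin_sum_rate`, `in_low_interference_regime`, `threshold_h`) are hypothetical. It evaluates condition (23), the sum rate (24), and the largest $h$ satisfying (23) for a given $P$ by bisection.

```python
import math


def tin_sum_rate(P, h):
    """Sum rate (24) of treating interference as noise, in bits."""
    return math.log2(1 + P / (1 + h * h * P))


def in_low_interference_regime(P, h):
    """Condition (23) of Theorem 1."""
    return abs(h + h ** 3 * P) <= 0.5


def threshold_h(P, tol=1e-12):
    """Largest h >= 0 satisfying (23), assuming P >= 0.

    h + h^3 P is increasing in h and is at least 0.5 at h = 0.5,
    so the threshold lies in [0, 0.5] and bisection applies.
    """
    lo, hi = 0.0, 0.5
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if mid + mid ** 3 * P <= 0.5:
            lo = mid
        else:
            hi = mid
    return lo
```

The corresponding INR threshold is `threshold_h(P) ** 2 * P`, which is the quantity plotted against SNR in Figure 4.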
So the essential question is: Is there a "divine" genie that is both useful and smart?

The quest for the divine genie can be simplified by imposing a structure on the side information it provides. Following (11), we set:

$$S_1 = hX_1 + h\eta W_1$$
$$S_2 = hX_2 + h\eta W_2 \tag{26}$$

where $W_1, W_2 \sim \mathcal{N}(0, 1)$ and $\eta$ is a positive real number. However, unlike in (11), we allow $W_1$ to be correlated with $Z_1$ (and $W_2$ with $Z_2$), with correlation coefficient $\rho$.

Lemma 11 (Useful Genie): The sum capacity of the genie-aided channel with side information given in (26) is achieved by using Gaussian inputs and by treating interference as noise at the receiver if the following condition holds:

$$|h\eta| \le \sqrt{1 - \rho^2}. \tag{27}$$

Hence the sum capacity of the symmetric interference channel is bounded as

$$C_{\mathrm{sum}} \le I(X_{1G}; Y_{1G}, S_{1G}) + I(X_{2G}; Y_{2G}, S_{2G}). \tag{28}$$

Proof: Add (7) and (10) to get the following outer bound on $n(R_1 + R_2 - 2\epsilon_n)$:

$$h(S_1^n) - n h(S_{1G} \mid X_{1G}) + n h(Y_{1G} \mid S_{1G}) - h(Y_1^n \mid S_1^n, X_1^n) + h(S_2^n) - n h(S_{2G} \mid X_{2G}) + n h(Y_{2G} \mid S_{2G}) - h(Y_2^n \mid S_2^n, X_2^n).$$

Thus it only remains to show that $h(S_1^n) - h(Y_2^n \mid S_2^n, X_2^n) + h(S_2^n) - h(Y_1^n \mid S_1^n, X_1^n)$ is maximized by $X_{1G}^n$ and $X_{2G}^n$. Now consider

$$\begin{aligned} h(S_1^n) - h(Y_2^n \mid S_2^n, X_2^n) &= h(hX_1^n + h\eta W_1^n) - h(hX_1^n + Z_2^n \mid W_2^n) \\ &\overset{(a)}{=} h(hX_1^n + h\eta W_1^n) - h(hX_1^n + V^n) \\ &\overset{(b)}{\le} n h(hX_{1G} + h\eta W_1) - n h(hX_{1G} + V) \end{aligned}$$

where $V \sim \mathcal{N}(0, 1 - \rho^2)$ is independent of $X_1$. Step (a) follows from Lemma 6 and step (b) follows from condition (27) and the worst case noise Lemma 3. Thus $h(S_1^n) - h(Y_2^n \mid S_2^n, X_2^n)$ is maximized by $X_{1G}^n$, and similarly $h(S_2^n) - h(Y_1^n \mid S_1^n, X_1^n)$ is maximized by $X_{2G}^n$.
Lemma 12 (Smart Genie): If Gaussian inputs are used, the interference is treated as noise, and the following condition holds

$$\eta \rho = 1 + h^2 P \tag{29}$$

then the genie does not increase the achievable sum rate, i.e.,

$$I(X_{1G}; Y_{1G}, S_{1G}) = I(X_{1G}; Y_{1G})$$
$$I(X_{2G}; Y_{2G}, S_{2G}) = I(X_{2G}; Y_{2G}). \tag{30}$$

The converse is also true, i.e., (30) implies (29).

Proof: Since $I(X_{iG}; Y_{iG}, S_{iG}) = I(X_{iG}; Y_{iG}) + I(X_{iG}; S_{iG} \mid Y_{iG})$, (30) is equivalent to

$$\begin{aligned} I(X_{iG}; S_{iG} \mid Y_{iG}) = 0 &\iff I(X_{iG}; X_{iG} + \eta W_i \mid X_{iG} + hX_{jG} + Z_i) = 0 \\ &\overset{(a)}{\iff} E[\eta W_i (hX_{jG} + Z_i)] = E\big[(hX_{jG} + Z_i)^2\big] \\ &\iff \eta \rho = 1 + h^2 P \end{aligned}$$

where step (a) follows from Lemma 7, and the index $j = 2$ if $i = 1$ and vice versa.

In Figure 2, we plot the usefulness and smartness constraints (27) and (29) in the Hilbert space $L^2$ of random variables. Figure 2 only shows the plane containing the transmitted signal $X_{1G}$, the received signal $Y_{1G} = X_{1G} + hX_{2G} + Z_1$ and the genie signal $S_{1G}/h = X_{1G} + \eta W_1$, with the origin shifted to $X_{1G}$. We can view the usefulness and smartness constraints (27) and (29) on the genie as regions in the $L^2$ space:

• Useful Genie: The genie is useful if it lies inside the dashed curve in Fig. 2. The boundary of the curve is obtained using the usefulness condition (27).

• Smart Genie: The genie is smart if it lies on the solid line in Fig. 2. This is expected because $X_{1G} - (X_{1G} + hX_{2G} + Z_1) - (X_{1G} + \eta W_1)$ form a Markov chain iff $X_{1G} + \eta W_1$ is a degraded version of $X_{1G} + hX_{2G} + Z_1$.

There exists a genie that is both useful and smart if the usefulness region intersects the smartness line in Fig. 2, i.e., if there exist $\eta$ and $\rho$ satisfying the conditions of both Lemma 11 and Lemma 12. Eliminating $\eta$ from (27) and (29) we get

$$|h + h^3 P| \le |\rho| \sqrt{1 - \rho^2}$$

Fig. 2.
The figure is a Hilbert space representation of the channel input, channel output and genie signal. The genie is a) useful if it lies inside the dashed curve, and b) smart if it lies on the solid line. If the dashed curve and solid line intersect, treating interference as noise achieves sum capacity.

which is possible iff $|h + h^3 P| \le 0.5$, since $|\rho| \sqrt{1 - \rho^2}$ attains its maximum value $0.5$ at $\rho^2 = 1/2$. This completes the proof of Theorem 1.

Remark 4: Lemma 11 is valid even if the interference channel is not in the low interference regime. Therefore, minimizing the expression (28) over all possible genie signals satisfying the usefulness constraint (27) results in a valid outer bound. The notion of the smart genie, therefore, can be thought of as an intuitive way of identifying the genie that minimizes (28). In [1], we use the geometric interpretation of Figure 2 to identify the useful genie that minimizes (28) when the channel is not in the low interference regime.

In Figure 3, we plot the new outer bound along with the Z-channel sum rate outer bound (17) and the ETW outer bound (18). Observe that the new outer bound matches the inner bound obtained by treating interference as noise when the interference is below a threshold. Figure 4 shows the interference to noise ratio (INR) threshold, below which treating interference as noise achieves the sum capacity, as a function of the signal to noise ratio (SNR) in dB scale. It can be easily shown that, in the high SNR asymptotic regime, the INR threshold in dB is equal to one third of the SNR in dB, up to an additive constant.

Fig. 3. Sum capacity of the two-user symmetric Gaussian interference channel in the low interference regime. [Plot: sum rate versus $h^2$; curves: Lower Bounds, Z Channel Upper Bound, One Bit Upper Bound, New Upper Bound.]

[Plot: INR threshold versus SNR.] Fig. 4.
Two user symmetric Gaussian interference channel: INR threshold, below which treating interference as noise achieves the sum capacity, as a function of the SNR.

B. Asymmetric Interference Channel

For the asymmetric interference channel, we consider the asymmetric genie:

$$S_1 = h_{21} (X_1 + \eta_1 W_1)$$
$$S_2 = h_{12} (X_2 + \eta_2 W_2). \tag{31}$$

Let $\rho_1$ be the correlation between $Z_1$ and $W_1$ (and $\rho_2$ the correlation between $Z_2$ and $W_2$).

Theorem 2: Consider the asymmetric interference channel with interference parameters $h_{12}$ and $h_{21}$ satisfying

$$|h_{12} (1 + h_{21}^2 P_1)| + |h_{21} (1 + h_{12}^2 P_2)| \le 1. \tag{32}$$

Then treating interference as noise achieves the sum capacity, which is given by

$$C_{\mathrm{sum}} = \frac{1}{2} \log \left( 1 + \frac{P_1}{1 + h_{12}^2 P_2} \right) + \frac{1}{2} \log \left( 1 + \frac{P_2}{1 + h_{21}^2 P_1} \right).$$

Proof: The proof is similar to that for the symmetric interference channel. Using the same arguments as in Lemma 11, the genie is useful if

$$|h_{21} \eta_1| \le \sqrt{1 - \rho_2^2}$$
$$|h_{12} \eta_2| \le \sqrt{1 - \rho_1^2}.$$

Also, as in Lemma 12, the genie is smart iff

$$\eta_1 \rho_1 = 1 + h_{12}^2 P_2$$
$$\eta_2 \rho_2 = 1 + h_{21}^2 P_1.$$

Thus there exists a useful and smart genie if there exist $\rho_1 \in [0, 1]$ and $\rho_2 \in [0, 1]$ such that

$$|h_{12} (1 + h_{21}^2 P_1)| \le \rho_2 \sqrt{1 - \rho_1^2}$$
$$|h_{21} (1 + h_{12}^2 P_2)| \le \rho_1 \sqrt{1 - \rho_2^2}. \tag{33}$$

By setting $\rho_1 = \cos \phi_1$ and $\rho_2 = \cos \phi_2$, (33) implies (32). It is also true that (32) implies (33). This can be seen by choosing $\phi$ such that

$$|h_{12} (1 + h_{21}^2 P_1)| \le \cos^2 \phi \le 1 - |h_{21} (1 + h_{12}^2 P_2)|,$$

i.e.,

$$|h_{12} (1 + h_{21}^2 P_1)| \le \cos^2 \phi$$
$$|h_{21} (1 + h_{12}^2 P_2)| \le \sin^2 \phi.$$

Setting $\rho_1 = \sin \phi$ and $\rho_2 = \cos \phi$, we have (33).

Remark 5: Theorem 2 is established independently in [11] and [12].

VI. TWO-USER INTERFERENCE CHANNEL: OUTER BOUNDS TO THE CAPACITY REGION

In Section IV-F, we observed that the ETW outer bound in Lemma 9 can be tightened by considering a general class of genie signals and using the EPI instead of the worst case noise result.
In this section, we use these observations to improve the outer bounds.

Theorem 3 (EPI-Based ETW Outer Bound): The capacity region of a two-user Gaussian interference channel with $h_{12} \le 1$ and $h_{21} \le 1$ is outer bounded by the regions given below in Lemmas 13 and 14, along with Lemma 10.

Lemma 13 (Tightened version of the outer bound on $R_1 + R_2$ (18)): The capacity region of a two-user Gaussian interference channel with $h_{12} \le 1$ and $h_{21} \le 1$ is contained in the region

$$R_2 \le \frac{1}{2} \log \left( \frac{\mathrm{Cov}(Y_{2G} \mid S_{2G})}{\mathrm{Cov}(S_{2G} \mid X_{2G})} \right) + \frac{1}{2} \log \left( \frac{\mathrm{Cov}(S_{1G}) \frac{\mathrm{Cov}(Y_{1G} \mid S_{1G})}{\mathrm{Cov}(S_{1G} \mid X_{1G})} 2^{-2R_1} - \sigma_1^2}{\mathrm{Cov}(S_{1G}) + \sigma_2^2} \right)$$

for all $\{\eta_1, \eta_2, \rho_1, \rho_2\}$, the parameters of the genie defined in (31), such that

$$\sigma_1^2 = 1 - \rho_2^2 - (h_{21} \eta_1)^2 > 0$$
$$\sigma_2^2 = 1 - \rho_1^2 - (h_{12} \eta_2)^2 > 0.$$

Interchanging the user indices, we get another such bound.

Proof: Using (7) and (10), we have

$$nR_1 \le h(S_1^n) - n h(S_{1G} \mid X_{1G}) + n h(Y_{1G} \mid S_{1G}) - h(Y_1^n \mid S_1^n, X_1^n)$$
$$nR_2 \le h(S_2^n) - n h(S_{2G} \mid X_{2G}) + n h(Y_{2G} \mid S_{2G}) - h(Y_2^n \mid S_2^n, X_2^n). \tag{34}$$

Denote

$$r_1 = R_1 + h(S_{1G} \mid X_{1G}) - h(Y_{1G} \mid S_{1G}) = R_1 - \frac{1}{2} \log \left( \frac{\mathrm{Cov}(Y_{1G} \mid S_{1G})}{\mathrm{Cov}(S_{1G} \mid X_{1G})} \right)$$
$$r_2 = R_2 + h(S_{2G} \mid X_{2G}) - h(Y_{2G} \mid S_{2G}) = R_2 - \frac{1}{2} \log \left( \frac{\mathrm{Cov}(Y_{2G} \mid S_{2G})}{\mathrm{Cov}(S_{2G} \mid X_{2G})} \right) \tag{35}$$

to obtain

$$n r_1 \le h(S_1^n) - h(Y_1^n \mid S_1^n, X_1^n)$$
$$n r_2 \le h(S_2^n) - h(Y_2^n \mid S_2^n, X_2^n). \tag{36}$$

To apply the EPI, $\{\eta_1, \eta_2, \rho_1, \rho_2\}$ should satisfy

$$(h_{21} \eta_1)^2 \le 1 - \rho_2^2$$
$$(h_{12} \eta_2)^2 \le 1 - \rho_1^2.$$

Define the slack variables

$$\sigma_1^2 = 1 - \rho_2^2 - (h_{21} \eta_1)^2$$
$$\sigma_2^2 = 1 - \rho_1^2 - (h_{12} \eta_2)^2.$$
Using the EPI (Corollary 1), we have

$$\begin{aligned} n r_2 &\le h(S_2^n) - h(Y_2^n \mid S_2^n, X_2^n) \\ &\le \frac{n}{2} \log \left( 2^{\frac{2}{n} h(Y_1^n \mid S_1^n, X_1^n)} - 2\pi e \sigma_1^2 \right) - \frac{n}{2} \log \left( 2^{\frac{2}{n} h(S_1^n)} + 2\pi e \sigma_2^2 \right) \end{aligned}$$

so that

$$\begin{aligned} r_2 &\le \frac{1}{2} \log \left( 2^{\frac{2}{n} h(S_1^n)} 2^{-2 r_1} - 2\pi e \sigma_1^2 \right) - \frac{1}{2} \log \left( 2^{\frac{2}{n} h(S_1^n)} + 2\pi e \sigma_2^2 \right) \\ &\le \frac{1}{2} \log \left( 2^{2 h(S_{1G})} 2^{-2 r_1} - 2\pi e \sigma_1^2 \right) - \frac{1}{2} \log \left( 2^{2 h(S_{1G})} + 2\pi e \sigma_2^2 \right) \\ &\le \frac{1}{2} \log \left( \frac{\mathrm{Cov}(S_{1G}) 2^{-2 r_1} - \sigma_1^2}{\mathrm{Cov}(S_{1G}) + \sigma_2^2} \right). \end{aligned}$$

By eliminating $r_1$ and $r_2$, we get

$$R_2 \le \frac{1}{2} \log \left( \frac{\mathrm{Cov}(Y_{2G} \mid S_{2G})}{\mathrm{Cov}(S_{2G} \mid X_{2G})} \right) + \frac{1}{2} \log \left( \frac{\mathrm{Cov}(S_{1G}) \frac{\mathrm{Cov}(Y_{1G} \mid S_{1G})}{\mathrm{Cov}(S_{1G} \mid X_{1G})} 2^{-2R_1} - \sigma_1^2}{\mathrm{Cov}(S_{1G}) + \sigma_2^2} \right).$$

Remark 6: Lemma 13, being a tightened version of the ETW sum rate outer bound (18), includes the new sum rate outer bounds presented in Section V.

Lemma 14 (Tightened versions of the outer bounds on $2R_1 + R_2$ (19) and $R_1 + 2R_2$ (20)): The capacity region of a two-user Gaussian interference channel with $h_{12} \le 1$ and $h_{21} \le 1$ is contained in the region

$$R_2 \le \frac{1}{2} \log \left( \frac{\mathrm{Cov}(Y_{2G} \mid S_{2G})}{\mathrm{Cov}(S_{2G} \mid X_{2G})} \right) + \frac{1}{2} \log \left( \frac{\mathrm{Cov}(Y_{1G}) 2^{-2R_1} - \sigma_1^2}{h_{21}^2 2^{2R_1} + \sigma_2^2} \right)$$

for all $\{\eta_1, \eta_2, \rho_1, \rho_2\}$, the parameters of the genie defined in (31), such that

$$\sigma_1^2 = 1 - (h_{12} \eta_2)^2 > 0$$
$$\sigma_2^2 = 1 - \rho_2^2 - h_{21}^2 > 0.$$

Interchanging the user indices, we get another such bound.

Proof: Use (5), (6) and (10) to obtain:

$$nR_1 \le n h(Y_{1G}) - h(h_{12} X_2^n + Z_1^n)$$
$$nR_1 \le h(h_{21} X_1^n + h_{21} Z_1^n) - n h(h_{21} Y_{1G} \mid X_{1G}, X_{2G})$$
$$nR_2 \le h(S_2^n) - n h(S_{2G} \mid X_{2G}) + n h(Y_{2G} \mid S_{2G}) - h(Y_2^n \mid S_2^n, X_2^n) \tag{37}$$

To apply the EPI, $\{\eta_1, \eta_2, \rho_1, \rho_2\}$ should satisfy

$$h_{12} \eta_2 \le 1$$
$$h_{21} \le \sqrt{1 - \rho_2^2}.$$

Define the slack variables

$$\sigma_1^2 = 1 - (h_{12} \eta_2)^2$$
$$\sigma_2^2 = 1 - \rho_2^2 - h_{21}^2.$$
Using the EPI (Corollary 1) and the bounds on $nR_1$ in (37), we obtain

$$h(S_2^n) \overset{(a)}{\le} \frac{n}{2} \log \left( 2^{\frac{2}{n} h(h_{12} X_2^n + Z_1^n)} - 2\pi e \sigma_1^2 \right) \overset{(b)}{\le} \frac{n}{2} \log \left( 2^{2 h(Y_{1G})} 2^{-2R_1} - 2\pi e \sigma_1^2 \right)$$

and

$$h(Y_2^n \mid S_2^n, X_2^n) \overset{(c)}{\ge} \frac{n}{2} \log \left( 2^{\frac{2}{n} h(h_{21} X_1^n + h_{21} Z_1^n)} + 2\pi e \sigma_2^2 \right) \overset{(d)}{\ge} \frac{n}{2} \log \left( 2\pi e 2^{2R_1} h_{21}^2 + 2\pi e \sigma_2^2 \right)$$

where steps (a) and (c) follow from the EPI (Corollary 1) and steps (b) and (d) use the bounds on $nR_1$ in (37). Using the above relations with the bound on $nR_2$ in (37), we obtain

$$\begin{aligned} R_2 &\le \frac{1}{2} \log \left( \mathrm{Cov}(Y_{1G}) 2^{-2R_1} - \sigma_1^2 \right) - \frac{1}{2} \log \big( \mathrm{Cov}(S_{2G} \mid X_{2G}) \big) + \frac{1}{2} \log \big( \mathrm{Cov}(Y_{2G} \mid S_{2G}) \big) - \frac{1}{2} \log \left( h_{21}^2 2^{2R_1} + \sigma_2^2 \right) \\ &= \frac{1}{2} \log \left( \frac{\mathrm{Cov}(Y_{2G} \mid S_{2G})}{\mathrm{Cov}(S_{2G} \mid X_{2G})} \right) + \frac{1}{2} \log \left( \frac{\mathrm{Cov}(Y_{1G}) 2^{-2R_1} - \sigma_1^2}{h_{21}^2 2^{2R_1} + \sigma_2^2} \right). \end{aligned} \tag{38}$$

A. Numerical Results

In Figures 5 and 6, we plot the new outer bound, i.e., the EPI-based ETW outer bound, along with the original ETW outer bound and the broadcast channel outer bound. To compare the outer bounds, we also plot a special case of the Han-Kobayashi inner bound that does not include time sharing and is limited to Gaussian distributions for the private and common messages. Since the EPI-based ETW outer bound contains the original ETW outer bound and the broadcast channel outer bound as special cases, it is at least as tight as either of them.

Fig. 5. Two user Gaussian interference channel ($P_1 = 10$, $P_2 = 20$, $h_{12}^2 = 0.04$, $h_{21}^2 = 0.09$) in low interference regime: Bounds on the capacity region. [Plot: $R_2$ versus $R_1$; curves: HK Inner Bound, ETW Outer Bound, Broadcast Outer Bound, New Outer Bound.]

Figure 5 corresponds to $P_1 = 10$, $P_2 = 20$, $h_{12}^2 = 0.04$, $h_{21}^2 = 0.09$, which satisfy the condition (32) for low interference, and hence the inner and outer bounds meet at one point to give the
sum capacity. Figure 6 corresponds to $P_1 = P_2 = 7$, $h_{12}^2 = h_{21}^2 = 0.2$, which do not satisfy the condition (32) for low interference, and hence the inner and outer bounds do not meet.

Fig. 6. Two user symmetric Gaussian interference channel ($P = 7$, $h^2 = 0.2$): Bounds on the capacity region. [Plot: $R_2$ versus $R_1$; curves: HK Inner Bound, ETW Outer Bound, Broadcast Outer Bound, New Outer Bound.]

As discussed in Section IV-F, the outer bounds presented in this paper are tightened versions of the ETW outer bounds, obtained by considering a general class of genie signals and using the EPI instead of the worst case noise result. A similar approach has been taken independently by two other groups - Shang, Kramer and Chen [11] and Motahari and Khandani [12]. The main difference in the approaches is that [11] and [12] use the extremal inequality [22] instead of the EPI. Although the extremal inequalities proposed in [22] are more general than the EPI, both are equivalent for the purpose of this paper. Hence we believe that both approaches should yield the same bounds. Shang et al. tightened only the sum rate outer bound (18), and hence their outer bound, equivalent to Lemma 13, is weaker than Theorem 3, which includes Lemma 14 as well. Motahari et al. tightened all the ETW outer bounds, and hence their outer bound is equivalent to Theorem 3. We may compare Figure 6 with Figure 3 in [12] and Figure 5 with Figure 4 in [11].

VII. GAUSSIAN INTERFERENCE NETWORK: SUM CAPACITY IN LOW INTERFERENCE REGIME

In Section V, we established the sum capacity of the two-user Gaussian interference channel in a low interference regime. The intuition is that if the interference is low enough, the receiver will not be able to exploit the structure in the interference, and hence treating interference as noise achieves the sum capacity.
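As a numerical cross-check of the two-user result recalled above, the low interference condition (32) can be evaluated for the parameter sets of Figures 5 and 6. This is a minimal sketch assuming base-2 logarithms; the function names and the dictionaries `FIG5` and `FIG6` are hypothetical labels introduced only for this illustration.

```python
import math


def low_interference_lhs(P1, P2, h12, h21):
    """Left-hand side of condition (32); the regime holds when it is at most 1."""
    return abs(h12 * (1 + h21 ** 2 * P1)) + abs(h21 * (1 + h12 ** 2 * P2))


def tin_sum_rate_asymmetric(P1, P2, h12, h21):
    """Sum rate of treating interference as noise (Theorem 2), in bits."""
    r1 = 0.5 * math.log2(1 + P1 / (1 + h12 ** 2 * P2))
    r2 = 0.5 * math.log2(1 + P2 / (1 + h21 ** 2 * P1))
    return r1 + r2


# Channel parameters quoted for Figures 5 and 6.
FIG5 = dict(P1=10, P2=20, h12=math.sqrt(0.04), h21=math.sqrt(0.09))
FIG6 = dict(P1=7, P2=7, h12=math.sqrt(0.2), h21=math.sqrt(0.2))

for name, params in (("Figure 5", FIG5), ("Figure 6", FIG6)):
    lhs = low_interference_lhs(**params)
    print(name, "LHS of (32):", round(lhs, 4), "low interference:", lhs <= 1)
```

For the Figure 5 parameters the left-hand side is $0.38 + 0.54 = 0.92$, so (32) holds; for the Figure 6 parameters it exceeds 2, so (32) fails, in line with the discussion of the two figures.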
It is natural to ask whether the result can be extended to an arbitrary interference network and, if it can, to see how the interference threshold scales with the number of users.

In this section, we first consider two special cases of the general interference network: the many-to-one interference channel, where only one user experiences interference, and the one-to-many interference channel, where the interference is generated by only one user. For these two special cases, we use a genie similar to that used for the two-user interference channel, which we now call a scalar genie, to propose conditions under which treating interference as noise achieves the sum capacity.

Using the scalar genie, Shang et al. derived conditions for the optimality of treating interference as noise for an arbitrary Gaussian interference network [23]. For symmetric interference channels, this results in an $\mathrm{INR}_{\mathrm{total}}$ threshold, below which treating interference as noise achieves sum capacity, that is independent of the number of users. Here we use the notation $\mathrm{INR}_{\mathrm{total}}$ for a symmetric interference channel to denote the total interference-to-noise ratio. We show that there exists an alternative construction of the genie, where each receiver is provided with multiple genie signals, resulting in an $\mathrm{INR}_{\mathrm{total}}$ threshold for the symmetric three-user interference channel that is higher than the INR threshold for the symmetric two-user interference channel.

A. Many-to-one and One-to-many interference channels

The many-to-one and one-to-many interference channels are studied in [24], [25], where the capacity region is characterized to within a constant number of bits.

Many-to-one: In a many-to-one Gaussian interference channel only one user experiences interference, i.e.,

$$h_{rt} = 0, \quad \forall t \ne r, \ \forall r \ne 1$$

where we assume, without any loss of generality, that user 1 is the unlucky user.
Thus the many-to-one Gaussian interference channel is parameterized by $\{P_1, P_2, \cdots, P_M, h_{12}, h_{13}, \cdots, h_{1M}\}$:

$$Y_1 = X_1 + \sum_{t=2}^{M} h_{1t} X_t + Z_1$$
$$Y_r = X_r + Z_r, \quad \text{for } r = 2, 3, \cdots, M. \tag{39}$$

One-to-many: In a one-to-many Gaussian interference channel only one user causes interference, i.e.,

$$h_{rt} = 0, \quad \forall r \ne t, \ \forall t \ne 1$$

where we assume that user 1 is the interfering user. Thus the one-to-many Gaussian interference channel is parameterized by $\{P_1, P_2, \cdots, P_M, h_{21}, h_{31}, \cdots, h_{M1}\}$:

$$Y_1 = X_1 + Z_1$$
$$Y_r = h_{r1} X_1 + X_r + Z_r, \quad \text{for } r = 2, 3, \cdots, M. \tag{40}$$

Theorem 4: For a many-to-one interference channel (39) satisfying

$$\sum_{i=2}^{M} h_{1i}^2 \le 1 \tag{41}$$

treating interference as noise achieves the sum capacity, which is given by

$$C_{\mathrm{sum}} = \frac{1}{2} \log \left( 1 + \frac{P_1}{1 + \sum_{i=2}^{M} h_{1i}^2 P_i} \right) + \frac{1}{2} \sum_{i=2}^{M} \log (1 + P_i). \tag{42}$$

Proof: Allowing the interfering users to cooperate can only increase the sum capacity. Let $Y_I$ denote the collective received signal vector of the interfering users, let $X_I$ and $Z_I$ denote the corresponding transmit and noise vectors, and let $\mathbf{h} = [h_{12} \ h_{13} \ \cdots \ h_{1M}]^T$, so that

$$Y_1 = X_1 + \mathbf{h}^T X_I + Z_1$$
$$Y_I = X_I + Z_I.$$

Let $S_I = \mathbf{h}^T X_I + W_I$ be the side information given to the (collective) receivers of the interfering users. Here $W_I$ is a zero mean, unit variance, Gaussian random variable. Using Fano's inequality, we have

$$\begin{aligned} n \sum_{i=1}^{M} (R_i - \epsilon_n) &\le I(X_1^n; Y_1^n) + I(X_I^n; Y_I^n, S_I^n) \\ &= I(X_1^n; Y_1^n) + I(X_I^n; S_I^n) + I(X_I^n; Y_I^n \mid S_I^n) \\ &= h(Y_1^n) - h(S_I^n) + h(S_I^n) - h(W_I^n) + h(Y_I^n \mid S_I^n) - h(Z_I^n \mid W_I^n) \\ &= h(Y_1^n) - n h(W_{IG}) + h(Y_I^n \mid S_I^n) - n h(Z_{IG} \mid W_{IG}) \\ &\overset{(a)}{\le} n h(Y_{1G}) - n h(W_{IG}) + n h(Y_{IG} \mid S_{IG}) - n h(Z_{IG} \mid W_{IG}) \\ &= n I(X_{1G}; Y_{1G}) + n I(X_{IG}; Y_{IG}, S_{IG}) \end{aligned}$$

where step (a) follows from Lemma 1. Thus the genie is useful.
If (41) is true, then the random variable $W_I$ can be chosen such that $W_I = \mathbf{h}^T Z_I + V$, where the Gaussian random variable $V$ is independent of $Z_I$. Therefore $S_I = \mathbf{h}^T Y_I + V$, and hence

$$I(X_{IG}; Y_{IG}, S_{IG}) = I(X_{IG}; Y_{IG})$$

making the genie smart. Hence the theorem follows.

Theorem 5: For a one-to-many interference channel (40) satisfying

$$\sum_{i=2}^{M} \frac{h_{i1}^2 P_1 + h_{i1}^2}{h_{i1}^2 P_1 + 1} \le 1 \tag{43}$$

treating interference as noise achieves the sum capacity, which is given by

$$C_{\mathrm{sum}} = \frac{1}{2} \log (1 + P_1) + \frac{1}{2} \sum_{i=2}^{M} \log \left( 1 + \frac{P_i}{h_{i1}^2 P_1 + 1} \right). \tag{44}$$

Proof: We prove this theorem directly without the aid of a genie.

$$\begin{aligned} n (C_{\mathrm{sum}} - M \epsilon_n) &\le I(X_1^n; Y_1^n) + \sum_{i=2}^{M} I(X_i^n; Y_i^n) \\ &= h(Y_1^n) - h(Y_1^n \mid X_1^n) + \sum_{i=2}^{M} \big[ h(Y_i^n) - h(Y_i^n \mid X_i^n) \big] \\ &= h(Y_1^n) - n h(Y_{1G} \mid X_{1G}) + \sum_{i=2}^{M} \big[ h(Y_i^n) - h(Y_i^n \mid X_i^n) \big] \\ &\le h(Y_1^n) - n h(Y_{1G} \mid X_{1G}) + \sum_{i=2}^{M} \big[ n h(Y_{iG}) - h(Y_i^n \mid X_i^n) \big] \end{aligned}$$

To finish the proof, we further need to show that

$$h(Y_1^n) - \sum_{i=2}^{M} h(Y_i^n \mid X_i^n) = h(X_1^n + Z_1^n) - \sum_{i=2}^{M} h(h_{i1} X_1^n + Z_i^n) = \sum_{i=2}^{M} \big[ \lambda_i h(X_1^n + Z_1^n) - h(h_{i1} X_1^n + Z_i^n) \big]$$

is maximized by $X_1^n = X_{1G}^n$, for some $\{\lambda_i\}_{i=2}^{M}$ such that $\sum_{i=2}^{M} \lambda_i = 1$. If (43) holds, it is possible to choose $\{\lambda_i\}_{i=2}^{M}$ satisfying

$$\lambda_i \ge \frac{h_{i1}^2 P_1 + h_{i1}^2}{h_{i1}^2 P_1 + 1}.$$

For this choice of $\lambda_i$, from Lemma 5, it follows that $\lambda_i h(h_{i1} X_1^n + h_{i1} Z_1^n) - h(h_{i1} X_1^n + Z_i^n)$, and therefore $\lambda_i h(X_1^n + Z_1^n) - h(h_{i1} X_1^n + Z_i^n)$, is maximized when $X_1^n = X_{1G}^n$. Hence the result follows.

Remark 7: Theorems 4 and 5 can be shown to be special cases of Theorem 4 in [23].

B. Vector genie

We now propose a systematic construction of a useful genie for an arbitrary interference network. We call this a vector genie because it involves giving multiple side information signals to each receiver.
This vector genie can be thought of as a generalization of the ETW genie (11) developed for the two-user interference channel. We need to define an ordering function before constructing the vector genie signal.

Definition 1 (Ordering function): We call a function $\pi : \{1, 2, \cdots, M\} \to \{1, 2, \cdots, M\}$ an ordering function if it satisfies the following properties

$$\{1, \pi(1), \pi^{(2)}(1), \cdots, \pi^{(M-1)}(1)\} = \{1, 2, \cdots, M\}$$
$$\pi^{(M)}(r) = r, \quad \forall r \tag{45}$$

where $\pi^{(j)}(\cdot)$ denotes the function $\pi(\cdot)$ applied $j$ times.

Definition 2: Suppose $Y_r$ is a random variable that is an affine combination of the variables $\{X_t\}_{t=1}^{M}$. For any $\mathcal{A} \subseteq \{1, 2, \cdots, M\}$, $Y_r \backslash \{X_t, t \in \mathcal{A}\}$ denotes the random variable obtained after removing the contributions of $\{X_t, t \in \mathcal{A}\}$ from $Y_r$.

For any fixed ordering function $\pi$, let

$$S_r = [S_{r,1} \ S_{r,2} \ \cdots \ S_{r,M-1}]^\top$$

be the side information given to receiver $r$, defined as

$$S_{r,k} = Y_{\pi^{(k)}(r)} \backslash \{X_{\pi^{(j)}(r)}\}_{j=1}^{k}, \quad \text{for } k = 1, 2, \cdots, M-1. \tag{46}$$

For example, consider the three user interference network. With the ordering function

$$\pi(1) = 2, \quad \pi(2) = 3, \quad \pi(3) = 1$$

we see that the genie signals defined by (46) are:

$$\begin{array}{l|lll} & r = 1 & r = 2 & r = 3 \\ \hline Y_r & X_1 + h_{12} X_2 + h_{13} X_3 + Z_1 & X_2 + h_{21} X_1 + h_{23} X_3 + Z_2 & X_3 + h_{31} X_1 + h_{32} X_2 + Z_3 \\ S_{r,1} & h_{21} X_1 + h_{23} X_3 + Z_2 & h_{32} X_2 + h_{31} X_1 + Z_3 & h_{13} X_3 + h_{12} X_2 + Z_1 \\ S_{r,2} & h_{31} X_1 + Z_3 & h_{12} X_2 + Z_1 & h_{23} X_3 + Z_2 \end{array}$$

The following properties of the genie (46) are useful in deriving the outer bounds.

Proposition 2: For each $r$, the genie signal $S_{r,M-1}$ is interference free, i.e., $S_{r,M-1} \backslash X_r$ is Gaussian.
Proof: From the construction of the genie (46), we have

$$S_{r,M-1} = Y_{\pi^{(M-1)}(r)} \backslash \{X_{\pi^{(j)}(r)}\}_{j=1}^{M-1}$$

which implies that

$$S_{r,M-1} \backslash X_r \overset{(a)}{=} Y_{\pi^{(M-1)}(r)} \backslash \{X_{\pi^{(j)}(r)}\}_{j=1}^{M} \overset{(b)}{=} Y_{\pi^{(M-1)}(r)} \backslash \{X_j\}_{j=1}^{M} = Z_{\pi^{(M-1)}(r)}$$

where steps (a) and (b) follow from the property (45) of the ordering function $\pi$.

Proposition 3: For each receiver $r$, define

$$\tilde{Y}_r = [Y_r \ S_{r,1} \ S_{r,2} \ \cdots \ S_{r,M-2}] \tag{47}$$

then

$$S_r = \tilde{Y}_{\pi(r)} \backslash X_{\pi(r)}.$$

Proof: The result follows because

$$S_{r,1} = Y_{\pi(r)} \backslash X_{\pi(r)}$$

and for $k = 2, 3, \cdots, M-1$,

$$\begin{aligned} S_{r,k} &= Y_{\pi^{(k)}(r)} \backslash \{X_{\pi^{(j)}(r)}\}_{j=1}^{k} \\ &= Y_{\pi^{(k-1)}(\pi(r))} \backslash \{X_{\pi^{(j)}(r)}\}_{j=1}^{k} \\ &= Y_{\pi^{(k-1)}(\pi(r))} \backslash \left\{ \{X_{\pi^{(j)}(r)}\}_{j=2}^{k}, X_{\pi(r)} \right\} \\ &= Y_{\pi^{(k-1)}(\pi(r))} \backslash \left\{ \{X_{\pi^{(j)}(\pi(r))}\}_{j=1}^{k-1}, X_{\pi(r)} \right\} \\ &= S_{\pi(r),k-1} \backslash X_{\pi(r)}. \end{aligned}$$

We now proceed to show that the vector genie (46) is useful and derive an outer bound on the sum capacity.

Theorem 6: For any ordering function $\pi$, the genie defined in (46) is useful, i.e., the sum capacity of the interference network (2) is upper bounded by

$$C_{\mathrm{sum}} \le \sum_{i=1}^{M} I(X_{iG}; Y_{iG}, S_{iG})$$

where the genie signals $\{S_i\}$ are defined in (46).
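The construction (46) and Propositions 2 and 3 can be checked mechanically for small $M$. The sketch below is only an illustration under an assumed representation: each signal is encoded as a pair (receiver index whose noise it carries, tuple of transmitter indices still present), ignoring the channel gains. All function names are hypothetical.

```python
def is_ordering_function(pi):
    """Check property (45): pi, given as a dict on {1..M}, is a single M-cycle."""
    M = len(pi)
    orbit, r = [], 1
    for _ in range(M):
        orbit.append(r)
        r = pi[r]
    # After M applications we must be back at 1, having visited every index.
    return r == 1 and sorted(orbit) == list(range(1, M + 1))


def vector_genie(pi, r):
    """Genie signals S_{r,1}, ..., S_{r,M-1} of (46) for receiver r.

    Each signal is (q, remaining): the output Y_q with only the
    transmitters listed in `remaining` still contributing.
    """
    M = len(pi)
    signals, removed, q = [], set(), r
    for _ in range(1, M):
        q = pi[q]          # q = pi^(k)(r)
        removed.add(q)     # remove X_{pi^(j)(r)} for j = 1..k
        remaining = tuple(t for t in range(1, M + 1) if t not in removed)
        signals.append((q, remaining))
    return signals


def check_proposition_2(pi, r):
    """The last genie signal contains only X_r (plus Gaussian noise)."""
    return vector_genie(pi, r)[-1][1] == (r,)


def check_proposition_3(pi, r):
    """S_r equals [Y_s, S_{s,1}, ..., S_{s,M-2}] with X_s removed, s = pi(r)."""
    M, s = len(pi), pi[r]
    y_tilde = [(s, tuple(range(1, M + 1)))] + vector_genie(pi, s)[: M - 2]
    stripped = [(q, tuple(t for t in xs if t != s)) for q, xs in y_tilde]
    return stripped == vector_genie(pi, r)


PI3 = {1: 2, 2: 3, 3: 1}  # the three-user ordering function used in the text
```

With `PI3`, `vector_genie(PI3, 1)` returns `[(2, (1, 3)), (3, (1,))]`, which corresponds to the $r = 1$ column of the three-user table: $h_{21} X_1 + h_{23} X_3 + Z_2$ and $h_{31} X_1 + Z_3$.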
Proof:

$$\begin{aligned} n (C_{\mathrm{sum}} - M \epsilon_n) &\le \sum_{i=1}^{M} I(X_i^n; Y_i^n, S_i^n) \\ &= \sum_{i=1}^{M} \big[ h(Y_i^n, S_i^n) - h(Y_i^n, S_i^n \mid X_i^n) \big] \\ &\overset{(a)}{=} \sum_{i=1}^{M} \big[ h(Y_i^n, S_i^n) - h(\tilde{Y}_i^n, S_{i,M-1}^n \mid X_i^n) \big] \\ &= \sum_{i=1}^{M} \big[ h(S_i^n) + h(Y_i^n \mid S_i^n) - h(S_{i,M-1}^n \mid X_i^n) - h(\tilde{Y}_i^n \mid S_{i,M-1}^n, X_i^n) \big] \\ &= \sum_{i=1}^{M} \big[ h(Y_i^n \mid S_i^n) - h(S_{i,M-1}^n \mid X_i^n) \big] + \sum_{i=1}^{M} \big[ h(S_i^n) - h(\tilde{Y}_i^n \mid S_{i,M-1}^n, X_i^n) \big] \\ &\overset{(b)}{=} \sum_{i=1}^{M} \big[ h(Y_i^n \mid S_i^n) - h(S_{i,M-1}^n \mid X_i^n) \big] + \sum_{i=1}^{M} \big[ h(S_i^n) - h(S^n_{\pi^{(M-1)}(i)}) \big] \\ &\overset{(c)}{=} \sum_{i=1}^{M} \big[ h(Y_i^n \mid S_i^n) - h(S_{i,M-1}^n \mid X_i^n) \big] \\ &\overset{(d)}{=} \sum_{i=1}^{M} \big[ h(Y_i^n \mid S_i^n) - n h(S_{iG,M-1} \mid X_{iG}) \big] \\ &\overset{(e)}{\le} \sum_{i=1}^{M} \big[ n h(Y_{iG} \mid S_{iG}) - n h(S_{iG,M-1} \mid X_{iG}) \big] \end{aligned}$$

where step (a) follows from the definition of $\tilde{Y}_r$ (47), step (b) follows from Propositions 2 and 3, step (c) follows because $\{\pi^{(M-1)}(i)\}_{i=1}^{M} = \{1, 2, \cdots, M\}$, step (d) follows from Proposition 2, and finally step (e) follows from Lemma 1. We have shown that $\{X_{iG}^n\}_{i=1}^{M}$ maximizes $\sum_{i=1}^{M} I(X_i^n; Y_i^n, S_i^n)$, and clearly the maximum is given by $n \sum_{i=1}^{M} I(X_{iG}; Y_{iG}, S_{iG})$, and hence we have the result.

Remark 8: The vector genie is a generalization of the ETW genie, and hence Theorem 6 simplifies to the ETW bound (18) for the two-user interference channel. For the two-user interference channel, the ETW genie is also used to derive outer bounds (14)-(20) on the entire capacity region. In a similar fashion, the vector genie can also be used to derive outer bounds on the entire capacity region of an arbitrary Gaussian interference network.

Similar to the two-user case, we proceed to tighten the outer bound by correlating the noise terms in the genie signals with the receiver noise. In particular, we explore whether there exists a genie that is not just useful, but also smart, to establish the sum capacity in the low interference regime.

C.
Three user symmetric interference channel

To simplify the presentation, we will restrict our attention to the symmetric three user channel, i.e., $P_t = P, \ \forall t$ and $h_{rt} = h, \ \forall r \ne t$. To make the genie smart, we let the noise terms in the genie signals be correlated with the noise at the receiver.

$$\begin{array}{l|lll} & r = 1 & r = 2 & r = 3 \\ \hline Y_r & X_1 + hX_2 + hX_3 + Z_1 & X_2 + hX_1 + hX_3 + Z_2 & X_3 + hX_1 + hX_2 + Z_3 \\ S_{r,1} & hX_1 + hX_3 + h\eta_1 W_{11} & hX_2 + hX_1 + h\eta_1 W_{21} & hX_3 + hX_2 + h\eta_1 W_{31} \\ S_{r,2} & hX_1 + h\eta_2 W_{12} & hX_2 + h\eta_2 W_{22} & hX_3 + h\eta_2 W_{32} \end{array}$$

Here $\{W_{rk}\}_{r=1,k=1}^{3,2}$ are zero mean, unit variance, Gaussian random variables, and $\eta_1, \eta_2$ are real variables. Let $\Sigma$ denote the covariance matrix of the random vector $[Z_r \ W_{r1} \ W_{r2}]^\top$ (which is independent of $r$):

$$\Sigma = \begin{bmatrix} 1 & \rho_1 & \rho_2 \\ \rho_1 & 1 & \rho_{12} \\ \rho_2 & \rho_{12} & 1 \end{bmatrix} \tag{48}$$

Thus the genie is parameterized by $\{\Sigma, \eta_1, \eta_2\}$.

Lemma 15 (Useful Genie): The genie is useful, i.e.,

$$C_{\mathrm{sum}} \le \sum_{i=1}^{M} I(X_{iG}; Y_{iG}, S_{iG})$$

when

$$\mathrm{Cov}\big( [Z_1 \ \ h\eta_1 W_{11}]^\top \mid W_{12} \big) - \mathrm{Cov}\big( [h\eta_1 W_{11} \ \ h\eta_2 W_{12}]^\top \big) \succeq 0. \tag{49}$$

Proof: Following the proof of Theorem 6, we only need to show that

$$\sum_{i=1}^{M} \big[ h(S_i^n) - h(\tilde{Y}_i^n \mid S_{i,M-1}^n, X_i^n) \big] = \sum_{i=1}^{M} \big[ h(S_i^n) - h(\tilde{Y}_{\pi(i)}^n \mid S_{\pi(i),M-1}^n, X_{\pi(i)}^n) \big]$$

is maximized by $\{X_{iG}^n\}$. For $i = 1$,

$$\begin{aligned} h(S_i^n) - h(\tilde{Y}_{\pi(i)}^n \mid S_{\pi(i),M-1}^n, X_{\pi(i)}^n) &= h(S_1^n) - h(\tilde{Y}_2^n \mid S_{2,2}^n, X_2^n) \\ &= h \begin{pmatrix} hX_1^n + hX_3^n + h\eta_1 W_{11}^n \\ hX_1^n + h\eta_2 W_{12}^n \end{pmatrix} - h \left( \begin{matrix} hX_1^n + hX_3^n + Z_2^n \\ hX_1^n + h\eta_1 W_{21}^n \end{matrix} \ \middle| \ W_{22}^n \right). \end{aligned} \tag{50}$$

Using Lemmas 6 and 4, it follows that (50) is maximized by $\{X_{iG}^n\}$ if the condition (49) holds.

We next give the conditions for the genie to be smart in the following lemma, which is an extension of Lemma 12.

Lemma 16 (Smart Genie): The genie is smart, i.e.,

$$I(X_{iG}; Y_{iG}, S_{iG}) = I(X_{iG}; Y_{iG}) \tag{51}$$

iff the following conditions hold

$$\eta_1 \rho_1 = 1 + 2h^2 P - hP$$
$$\eta_2 \rho_2 = 1 + 2h^2 P.$$
(52) Pr oof: Since I ( X iG ; Y iG , S iG ) = I ( X iG ; Y iG ) + I ( X iG ; S iG | Y iG ) (51) is equi valent to I ( X iG ; S iG | Y iG ) = 0 . (53) From Lemma 8, it follo ws that (53) is true if f I ( X iG ; S i, 1 G | Y iG ) = 0 I ( X iG ; S i, 2 G | Y iG ) = 0 . (54) 36 Using Lemma 7, we ha ve I ( X 1 G ; S 1 , 1 G | Y 1 G ) = 0 ⇐ ⇒ I ( X 1 G ; X 1 G + X 3 G + η 1 W 11 | X 1 G + hX 2 G + hX 3 G + Z 1 ) = 0 ⇐ ⇒ E [( X 3 G + η 1 W 11 )( hX 2 G + hX 3 G + Z 1 )] = E ( hX 2 G + hX 3 G + Z 1 ) 2 ⇐ ⇒ hP + η 1 ρ 1 = 1 + 2 h 2 P . and I ( X 1 G ; S 1 , 2 G | Y 1 G ) = 0 ⇐ ⇒ I ( X 1 G ; X 1 G + η 2 W 12 | X 1 G + hX 2 G + hX 3 G + Z 1 ) = 0 ⇐ ⇒ E [ η 2 W 12 ( hX 2 G + hX 3 G + Z 1 )] = E ( hX 2 G + hX 3 G + Z 1 ) 2 ⇐ ⇒ η 2 ρ 2 = 1 + 2 h 2 P . Theor em 7: For the symmetric three user Gaussian interference channel, suppose there exist { Σ < 0 , η 1 , η 2 } satisfying (49) and (52), then treating interference as noise achiev es the sum capacity , which is gi ven by C sum = 3 2 log 1 + P 1 + 2 h 2 P . Unlike in the two-user case, we hav e not been able to provide an explicit equation for the threshold on h (as a function of P ) belo w which treating interference as noise achie ves the sum capacity . Ne vertheless, for e very P , admissible v alues of h can be found numerically by searching for the parameters { Σ < 0 , η 1 , η 2 } that satisfy the conditions in Theorem 7. Using a scalar genie similar to that used for the two-user interference channel, Shang et. al. obtained a threshold on INR total that is independent of the number of users [23, Theorem 4]. In Figure 7, we plot a few admissible points that are computed numerically along with the INR total obtained using the scalar genie. An increase of more than 1 dB in the INR total threshold is seen by using the vector genie instead of the scalar genie. 
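The numerical search mentioned above can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure used to produce Figure 7: the function names (`tin_sum_rate`, `genie_is_useful_and_smart`, `find_genie`) and the coarse grid search are hypothetical choices, the logarithm is assumed to be base 2, and the conditional covariance in (49) is evaluated with the standard formula for jointly Gaussian variables. Because (52) determines η1 and η2 once ρ1 and ρ2 are fixed, the search only ranges over the three correlations in Σ.

```python
import itertools
import math

import numpy as np


def tin_sum_rate(P, h):
    """Sum rate of Theorem 7 for treating interference as noise.

    Assumption: log base 2, so the result is in bits per channel use.
    """
    return 1.5 * math.log2(1.0 + P / (1.0 + 2.0 * h * h * P))


def is_psd(A, tol=1e-9):
    """True if the symmetric matrix A is positive semidefinite up to tol."""
    return bool(np.linalg.eigvalsh(A).min() >= -tol)


def genie_is_useful_and_smart(P, h, rho1, rho2, rho12):
    """Check Sigma >= 0, the smart conditions (52) and the useful condition (49)."""
    if abs(rho1) < 1e-9 or abs(rho2) < 1e-9:
        # Simplification of this sketch: (52) is not solved for eta when rho = 0.
        return False
    sigma = np.array([[1.0, rho1, rho2],
                      [rho1, 1.0, rho12],
                      [rho2, rho12, 1.0]])
    if not is_psd(sigma):
        return False
    # (52) fixes eta1 and eta2 once rho1 and rho2 are chosen.
    eta1 = (1.0 + 2.0 * h * h * P - h * P) / rho1
    eta2 = (1.0 + 2.0 * h * h * P) / rho2
    a, b = h * eta1, h * eta2
    # Cov([Z_1, a*W_11]^T | W_12) for jointly Gaussian variables.
    off = a * (rho1 - rho2 * rho12)
    cond = np.array([[1.0 - rho2 ** 2, off],
                     [off, a * a * (1.0 - rho12 ** 2)]])
    # Cov([a*W_11, b*W_12]^T).
    genie = np.array([[a * a, a * b * rho12],
                      [a * b * rho12, b * b]])
    return is_psd(cond - genie)  # condition (49)


def find_genie(P, h, grid):
    """Return the first (rho1, rho2, rho12) on the grid that passes, else None."""
    for rho1, rho2, rho12 in itertools.product(grid, repeat=3):
        if genie_is_useful_and_smart(P, h, rho1, rho2, rho12):
            return float(rho1), float(rho2), float(rho12)
    return None


if __name__ == "__main__":
    grid = np.linspace(-0.9, 0.9, 19)
    for h in (0.1, 1.0):
        print(h, tin_sum_rate(1.0, h), find_genie(1.0, h, grid))
```

A returned triple certifies that the given $h$ is admissible for that $P$. A `None` result only means that no point on the chosen grid passed, so a finer grid or a proper semidefinite feasibility solver would be needed before concluding that $h$ lies above the threshold.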
Note that the $\mathrm{INR}_{\mathrm{total}}$ threshold obtained using the vector genie for the three-user interference channel is greater than the INR threshold for the two-user interference channel (which is the same as the $\mathrm{INR}_{\mathrm{total}}$ threshold obtained using the scalar genie). Although the thresholds we obtain in this paper are only lower bounds on the optimal threshold, we believe that the trend shown by the vector genie holds true, i.e., the optimal interference threshold, below which treating interference as noise achieves sum capacity, increases with the number of users. The optimality of treating interference as noise in the low interference regime implies that the receivers are not able to exploit the structure in the interference. With more users in the network, the ability of the receiver to exploit the structure in each of the interfering users' signals can only decrease, because the interfering users' signals interfere with each other.

[Figure 7: plot of the $\mathrm{INR}_{\mathrm{total}}$ threshold versus SNR, with one curve for the scalar genie and one for the vector genie.]

Fig. 7. Three user symmetric Gaussian interference channel: $\mathrm{INR}_{\mathrm{total}}$ threshold, below which treating interference as noise achieves the sum capacity, as a function of SNR.

VIII. CONCLUSIONS

We provided new, improved genie-aided outer bounds on the capacity region of a two-user Gaussian interference channel. Using these outer bounds, we showed that treating interference as noise achieves the sum capacity in a low interference regime. Similar results were established in parallel by Shang, Kramer and Chen [11], and Motahari and Khandani [12]. Although the interference threshold, below which treating interference as noise achieves sum capacity, is identical in the three works, the mathematical approach is considerably different.
It should also be noted that what has been obtained in all three works is only a lower bound on the interference threshold, and the question still remains as to what the optimal interference threshold is.

A natural extension of the two-user results is the generalization of the optimality of treating interference as noise in the low interference regime to Gaussian interference networks with more than two users. We provided closed form expressions that characterize the low interference regime for the many-to-one and one-to-many interference channels. Furthermore, we generalized the ETW genie [9] to an arbitrary Gaussian interference network, i.e., proposed a systematic construction of a genie such that treating interference as noise with Gaussian inputs achieves the sum capacity of the genie-aided network. We called this genie a vector genie, because it involves giving multiple side information signals to each receiver. Similar to [9], [21], this vector genie can be used to derive outer bounds on the entire capacity region. By correlating the noise terms in the vector genie, we showed that the outer bound can be further tightened to establish the sum capacity in a low interference regime. For reasons of computational complexity, we only considered a three user symmetric interference channel, for which we demonstrated that the total interference threshold can be higher than that for the two-user case. The interesting question that remains to be answered is: how does the optimal interference threshold scale as a function of the number of interferers in the network?

REFERENCES

[1] V. S. Annapureddy and V. V. Veeravalli, “Sum capacity of the Gaussian interference channel in the low interference regime,” in Proceedings of ITA Workshop, UCSD, CA, Jan 2008. Available for download at http://arxiv.org/abs/0801.0452.
[2] V. S. Annapureddy and V. V. Veeravalli, “Gaussian interference channels: Sum capacity in the low interference regime,” in Proceedings of ISIT, Toronto, Canada, July 2008.
[3] C. E. Shannon, “A mathematical theory of communication,” Bell Syst. Tech. J., vol. 27, pp. 379–423, 623–656, 1948.
[4] A. B. Carleial, “A case where interference does not reduce capacity,” IEEE Trans. on Inform. Theory, vol. IT-21, no. 1, pp. 569–570, Sept. 1975.
[5] T. S. Han and K. Kobayashi, “A new achievable rate region for the interference channel,” IEEE Trans. on Inform. Theory, vol. IT-27, no. 1, pp. 49–60, Jan. 1981.
[6] H. Sato, “The capacity of the Gaussian interference channel under strong interference,” IEEE Trans. on Inform. Theory, vol. IT-27, no. 6, pp. 786–788, Nov. 1981.
[7] H. F. Chong, M. Motani, H. K. Garg, and H. E. Gamal, “On the Han-Kobayashi region for the interference channel,” submitted to IEEE Trans. on Inform. Theory, Aug. 2006.
[8] G. Kramer, “Outer bounds on the capacity region of Gaussian interference channels,” IEEE Trans. on Inform. Theory, vol. IT-50, no. 3, pp. 581–586, March 2004.
[9] R. H. Etkin, D. N. C. Tse, and H. Wang, “Gaussian interference channel capacity to within one bit,” submitted to IEEE Trans. on Inform. Theory, Feb. 2007.
[10] T. Cover and J. Thomas, Elements of Information Theory. Wiley, 2006.
[11] X. Shang, G. Kramer, and B. Chen, “A new outer bound and noisy-interference sum-rate capacity for the Gaussian interference channels,” submitted to IEEE Trans. on Inform. Theory, Dec. 2007.
[12] A. S. Motahari and A. K. Khandani, “Capacity bounds for the Gaussian interference channel,” submitted to IEEE Trans. on Inform. Theory, Jan. 2008.
[13] A. B. Carleial, “Interference channels,” IEEE Trans. on Inform. Theory, vol. IT-24, no. 1, pp. 60–70, Sept. 1978.
[14] J. A. Thomas, “Feedback can at most double Gaussian multiple access channel capacity,” submitted to IEEE Trans. on Inform. Theory, Sept. 1987.
[15] S. Diggavi and T. M. Cover, “Worst additive noise under covariance constraints,” IEEE Trans. on Inform. Theory, vol. IT-47, no. 7, pp. 3072–3081, Nov. 2001.
[16] S. Boyd and L. Vandenberghe, Convex Optimization. Cambridge University Press, 2006.
[17] T. S. Han and K. Kobayashi, Mathematics of Information and Coding. AMS Bookstore, 2002.
[18] H. Sato, “On degraded Gaussian two-user channels,” IEEE Trans. on Inform. Theory, vol. IT-24, no. 5, pp. 638–640, Sept. 1978.
[19] M. H. M. Costa, “On the Gaussian interference channel,” IEEE Trans. on Inform. Theory, vol. IT-31, no. 5, pp. 607–615, Sept. 1985.
[20] I. Sason, “On the achievable rate regions for the Gaussian interference channel,” IEEE Trans. on Inform. Theory, vol. IT-50, no. 6, pp. 1345–1356, June 2004.
[21] E. Telatar and D. Tse, “Bounds on the capacity region of a class of interference channels,” in Proceedings of 2007 International Symposium on Information Theory, Nice, France, June 2007.
[22] T. Liu and P. Viswanath, “An extremal inequality motivated by multiterminal information theoretic problems,” IEEE Trans. on Inform. Theory, vol. IT-53, no. 5, pp. 1839–1851, May 2007.
[23] X. Shang, G. Kramer, and B. Chen, “New outer bounds on the capacity region of Gaussian interference channels,” in Proceedings of ISIT, Toronto, Canada, July 2008.
[24] A. Jovicic, H. Wang, and P. Viswanath, “On network interference management,” in Proceedings of IEEE Inform. Theory Workshop, Lake Tahoe, CA, Sept. 2007.
[25] G. Bresler, A. Parekh, and D. N. C. Tse, “The approximate capacity of an one-sided Gaussian interference channel,” in Proceedings of 45th Annual Allerton Conf. Commun. Cont. and Comp., University of Illinois, IL, Sept. 2007.
