Bayesian Generalized Probability Calculus for Density Matrices

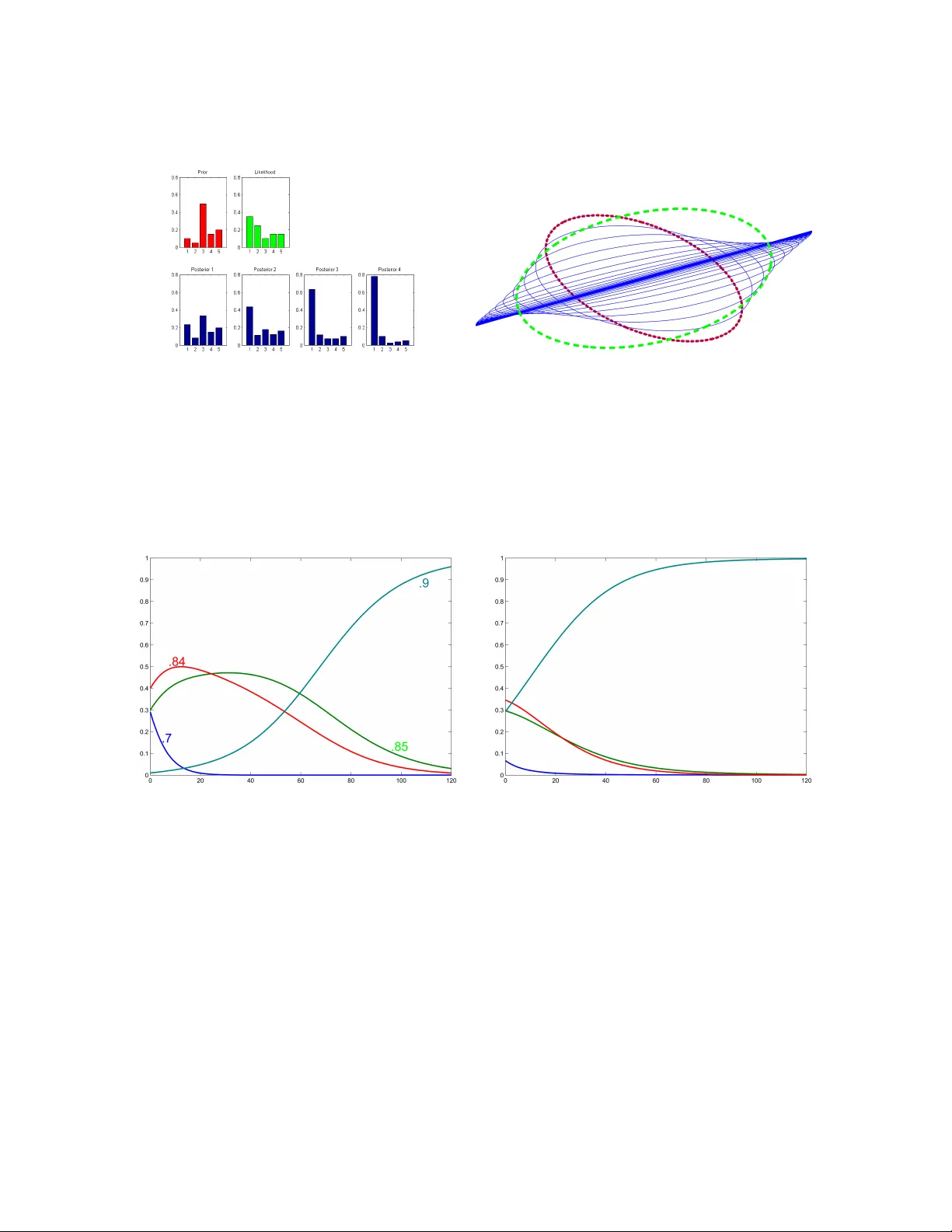

One of the main concepts in quantum physics is a density matrix, which is a symmetric positive semidefinite matrix of trace one. Finite probability distributions can be seen as the special case in which the density matrix is restricted to be diagonal. We dev…
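To make the definition concrete, here is a minimal NumPy sketch of the diagonal special case. It is an illustration under stated assumptions, not code from the paper: the helper name `is_density_matrix`, the tolerance `tol`, and the example vectors are all hypothetical, the matrices are taken to be real symmetric, and zero eigenvalues are accepted so that distributions with zero-probability outcomes still qualify.

```python
import numpy as np


def is_density_matrix(rho, tol=1e-9):
    """Return True if rho is real symmetric, has no negative eigenvalues
    (up to tol), and has trace one (up to tol).

    Hypothetical helper for illustration only.
    """
    rho = np.asarray(rho, dtype=float)
    if rho.ndim != 2 or rho.shape[0] != rho.shape[1]:
        return False
    symmetric = np.allclose(rho, rho.T, atol=tol)
    # eigvalsh assumes a symmetric input and returns eigenvalues in ascending order.
    eigenvalues = np.linalg.eigvalsh(rho)
    nonnegative = bool(np.all(eigenvalues >= -tol))
    unit_trace = bool(abs(np.trace(rho) - 1.0) <= tol)
    return bool(symmetric and nonnegative and unit_trace)


# A finite probability distribution placed on the diagonal is a density matrix,
# and its eigenvalues are exactly the probabilities.
p = np.array([0.5, 0.3, 0.2])
rho_diag = np.diag(p)

# A non-diagonal example: the outer product of a unit vector with itself.
u = np.array([1.0, 1.0]) / np.sqrt(2.0)
rho_pure = np.outer(u, u)

print(is_density_matrix(rho_diag))
print(is_density_matrix(rho_pure))
print(np.linalg.eigvalsh(rho_diag))
```

The eigenvalues of any matrix that passes this check are nonnegative and sum to one, so they form a probability distribution even when the matrix is not diagonal. In the diagonal case that distribution is simply the diagonal itself.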

Authors: Manfred K Warmuth, Dima Kuzmin