Boosting Algorithms: Regularization, Prediction and Model Fitting

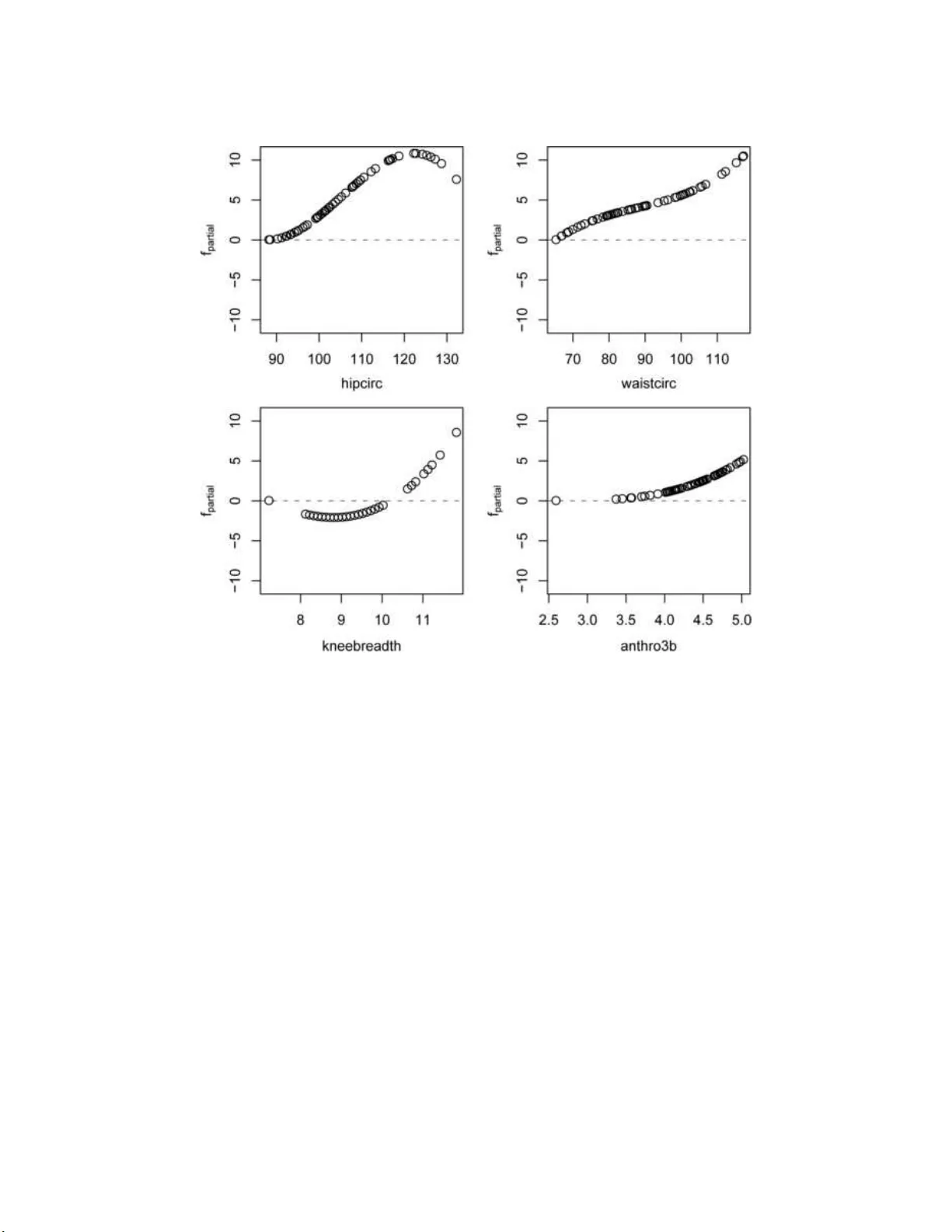

We present a statistical perspective on boosting. Special emphasis is given to estimating potentially complex parametric or nonparametric models, including generalized linear and additive models as well as regression models for survival analysis. Con…

Authors: Peter Bühlmann, Torsten Hothorn