Variable selection for the multicategory SVM via adaptive sup-norm regularization

The Support Vector Machine (SVM) is a popular classification paradigm in machine learning and has achieved great success in real applications. However, the standard SVM can not select variables automatically and therefore its solution typically utili…

Authors: Hao Helen Zhang, Yufeng Liu, Yichao Wu

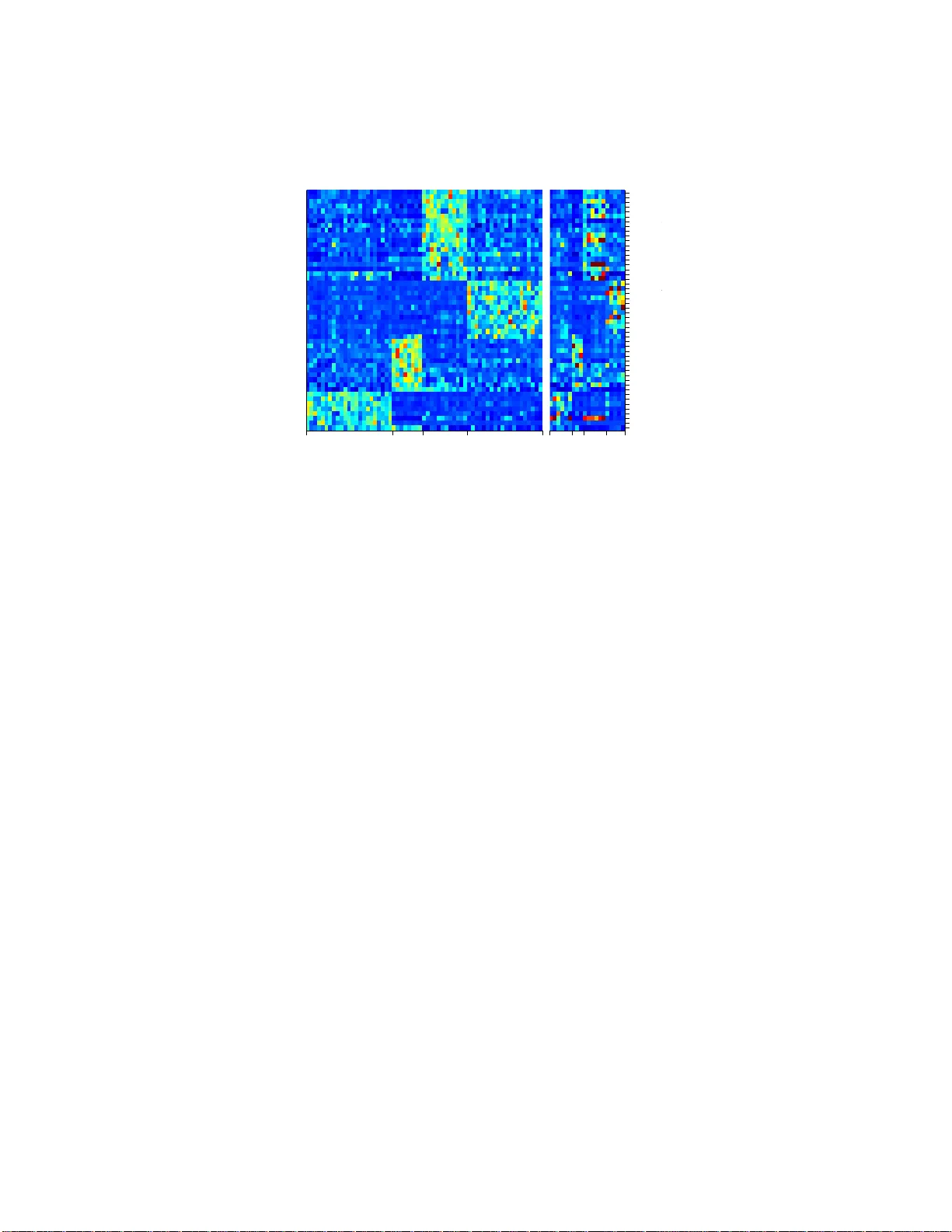

Electronic Journal of Stati stics V ol. 2 (2008) 149–167 ISSN: 1935-7524 DOI: 10.1214/ 08-EJS12 2 V ariable selecti on for the m ulticategory SVM via adapt iv e su p-norm regularizatio n Hao Helen Zhang Dep artment of Statistics North Car olina State University R aleigh, NC 27695 e-mail: hzhang2@ stat.ncs u.edu Y ufeng Liu ∗ Dep artment of Statistics and Op er ations R ese ar c h Car olina Center for Genome Scienc es University of North Car olina Chap el Hil l, NC 27599 e-mail: yfliu@em ail.unc. edu Yic hao W u Dep artment of Op er ations R ese ar ch and Financial Engine ering Princ e t on U ni versity Princ e t on, NJ 08544 e-mail: yichaowu @princet on.edu Ji Zh u Dep artment of Statistics University of Michigan Ann Arb or, MI 48109 e-mail: jizhu@um ich.edu Abstract: The Support V ector Mac hine (SVM) is a p opular classi fication paradigm in mac hine l earning and has ac hiev ed great success in real appli- cations. Ho we v er, the standard SVM can not select v ariables automatically and therefore its solution t ypically utilizes all the input v ariables without discrimination. This make s it di fficult to iden tify important predictor v ari- ables, which is often one of the primary goals in data analysis. In this paper, we propose t w o nov el types of regularization i n the context of the multi- category SVM (MSVM) for sim ultaneous classification and v ariable selec- tion. The MSVM generally r equires estimation of multiple discriminating functions and applies the argmax rule for prediction. F or each individual ∗ Corresp onding author. The authors thank the editor Professor Larry W asserman, the associate editor, and t w o review ers f or their constructiv e commen ts and suggestions. Liu’ s researc h w as supp orted in part by the National Science F oundation D M S-0606577 and DM S- 0747575. W u’s researc h was supported by the National Institute of Health NIH R01-GM07261. Zhang’s r esearc h was supp orted i n part by the National Science F oundation DMS-0645293 and the National Institute of Health NIH/NCI R01 CA- 085848. Zhu’s research was supported in part b y the National Science F oundation DMS-0505432 and DMS-0705532. 149 H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 150 v ari able, w e propose to c haracte rize its imp ortance b y the supnorm of its coefficient ve ctor asso ciated with different functions, and then minimize the MSVM hinge loss function sub ject to a p enalt y on the sum of supnorms. T o further improv e the supnorm penalty , we prop ose the adaptiv e regulariza- tion, whic h all o ws different weigh ts imp osed on different v ariables according to their relative i m portance. Both types of r egularization automate v ari- able selection in the pro cess of building classifiers, and lead to sparse mu lti- classifiers with enhanced interpretabilit y and im prov ed accuracy , esp ecially for high di m ensional l o w sample size data. One big adv antage of the sup- norm p enalty is its easy implement ation via standard linear programm ing. Sev eral simulated examples and one r eal gene data analysis demonstrate the outstanding p erformance of the adaptive s upnorm p enalt y in v ar ious data settings. AMS 2000 sub ject classificatio ns: Primary 62H30. Keywords and phrases: Classi fication, L 1 -norm p enalt y, multicategory, sup-norm, SVM. Receiv ed Septem ber 2007. Con ten ts 1 Int ro duction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 150 2 Metho dology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 151 3 Computational Algor ithms . . . . . . . . . . . . . . . . . . . . . . . . 155 4 Adaptive P enalty . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 156 5 Sim ulation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 157 5.1 Five-Class Example . . . . . . . . . . . . . . . . . . . . . . . . . 157 5.2 F our- Class Linear Exa mple . . . . . . . . . . . . . . . . . . . . . 158 5.3 Nonlinear Exa mple . . . . . . . . . . . . . . . . . . . . . . . . . . 160 6 Real Ex ample . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 162 7 Discussion . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 164 Appendix . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 165 Literature Cited . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 165 1. In tro duction In sup ervis ed lea r ning problems, w e are giv en a training set of n e x amples from K ≥ 2 different p opulations. F or each ex a mple in the training set, we obs erve its cov ariate x i ∈ R d and the corres po nding lab el y i indicating its membership. Our ultimate goal is to learn a cla ssification rule whic h can accur ately predict the cla ss lab el of a future example based on its cov ariate. Among many clas- sification metho ds, the Supp ort V ector Machine (SVM) has gained muc h p op- ularity in b oth machine learning and statistics . The seminal work by V apnik ( 1995 , 1998 ) has laid the foundation for the g e neral statistical learning the- ory and the SVM, which furthermore ins pired v ar ious extensions o n the SVM. F or other r eferences o n the binary SVM, see Christia nini and Shaw e-T aylor ( 2000 ), Sch¨ olkopf and Smola ( 2002 ), a nd reference s therein. Recently a few at- tempts ha v e been made to g eneralize the SVM to multiclass pr oblems, suc h H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 151 as V apnik ( 199 8 ), W esto n and W a tkins ( 1999 ), Crammer and Singer ( 2 001 ), Lee et al. ( 20 04 ), Liu and Shen ( 200 6 ), and W u and Liu ( 20 07a ). While the SVM outpe r forms many other metho ds in terms of classificatio n accuracy in n umerous real problems, the implicit nature of its solution makes it les s attractiv e in providing insight in to the predictive ability o f individual v aria bles. Often times, selecting relev a n t v aria bles is the pr imary goal of data mining. F or the bina ry SVM, Bradley and Manga sarian ( 1998 ) demonstrated the utility of the L 1 pena lt y , which can effectiv ely selec t v ariables by shrinking small or r e dundan t co efficients to zero. Zhu et al. ( 2003 ) provides an efficient al- gorithm to compute the entire solutio n path for the L 1 -norm SVM. O ther forms of pena lty hav e a lso been studied in the context of bina ry SVMs, such as the L 0 pena lt y ( W eston et al. , 2 0 03 ), the SCAD pena lt y ( Zhang et a l. , 200 6 ), the L q pena lt y ( Liu et a l. , 2007 ), the co m bination of L 0 and L 1 pena lt y ( Liu and W u , 2007 ), the com bination of L 1 and L 2 pena lt y ( W ang et al. , 2 006 ), the F ∞ norm ( Zou and Y ua n , 200 6 ), and others ( Zhao et al. , 2 006 ; Zou , 200 6 ). F or multiclass problems, v aria ble selection beco mes more complex than the binary cas e, since the MSVM re quires estimation of multiple disc r iminating functions, among which each function has its own subset of imp ortant predic to rs. One natural idea is to extend the L 1 SVM to the L 1 MSVM, as do ne in the recent work of Lee et al. ( 2006 ) a nd W ang a nd Shen ( 200 7b ). Howev er, the L 1 pena lt y do es not distinguish the sour ce of co efficients. It treats all the co efficients equally , no matter whether they cor resp ond to the s a me v ariable or different v a riables, or they are more likely to be rele v an t or irrelev a nt . In this pape r, w e prop ose a new regula rized MSVM for more effective v aria ble selection. In contrast to the L 1 MSVM, whic h imp oses a p enalty o n the sum of absolute v alues of a ll co efficients, w e p enalize the sup-norm of the co efficients a sso ciated with each v aria ble. The prop os ed metho d is shown to be able to achiev e a higher degree of mo del parsimony than the L 1 MSVM without compromising class ification accuracy . This pap er is organized as follows. Section 2 fo rmulates the sup-norm r egu- larization for the MSVM. Section 3 prop oses a n efficient alg o rithm to implemen t the MSVM. Section 4 discuss es an ada ptiv e appro ach to improv e p er formance of the sup-norm MSVM b y a llowing differe n t penalties fo r differen t co v ar ia tes according to their relative imp ortance. Numerica l re s ults on sim ulated and gene expression data a r e given in Sections 5 and 6 , followed by a summary . 2. Metho dology In K -c a tegory cla ssification pro blems, we co de y as { 1 , . . . , K } and define f = ( f 1 , . . . , f K ) a s a decision function vector. Ea ch f k , a mapping from the input domain R d to R , repr esents the str ength of the evidence that an example with input x b elongs to the c lass k ; k = 1 , . . . , K . A classifier induced by f , φ ( x ) = arg max k =1 ,...,K f k ( x ) , H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 152 assigns a n example with x to the cla ss with the largest f k ( x ). W e a ssume the n training pair s { ( x i , y i ) , i = 1 , . . . , n } are independently a nd ident ically dis- tributed accor ding to an unknown pr obability distribution P ( x , y ). Given a classifier f , its perfo rmance is measured by the generaliz ation error, GE( f ) = P ( Y 6 = a rg max k f k ( X )) = E ( X ,Y ) [ I ( Y 6 = arg max k f k ( X ))]. Let p k ( x ) = Pr( Y = k | X = x ) b e the conditional pr obability of class k g iven X = x . The Bay es r ule which minimizes the GE is then g iven by φ B ( x ) = arg min k =1 ,..., K [1 − p k ( x )] = a rg max k =1 ,...,K p k ( x ) . (2.1) F or nonlinear pro blems, we assume f k ( x ) = b k + P q j =1 w kj h j ( x ) using a set of basis functions { h j ( x ) } . This linear representation of a nonlinear cla ssifier through basis functions will g reatly facilitate the for m ulation of the pr op osed metho d. Alternatively nonlinear cla s sifiers can also be achiev ed by applying the kernel trick ( Boser et al. , 1 992 ). How ev er, the kernel classifier is often given as a bla ck box function, where the co nt ribution o f eac h individual cov ar iate to the decision rule is too implicit to b e characterized. Ther efore we will use the basis expansion to co nstruct nonlinear classifier s in the pap er. The standar d m ulticategory SVM (MSVM; Lee et al. , 200 4 ) so lves min f 1 n n X i =1 K X k =1 I ( y i 6 = k )[ f k ( x i ) + 1] + + λ K X k =1 d X j =1 w 2 kj , (2.2) under the sum-to-zero c onstraint P K k =1 f k = 0. The sum-to-zer o constraint used here is to follow Lee et al. (2004) in their fra mew ork for the MSVM. It is imp os ed to elimina te redundancy in f k ’s and to as s ure ident ifiability o f the solution. This constraint is also a nece s sary condition for the Fis her consistency of the MSVM prop osed by Lee et al. ( 200 4 ). T o achiev e v ariable selection, W ang and Shen ( 2007b ) pro po sed to impose the L 1 pena lt y on the co efficient s and the corre- sp onding L 1 MSVM then solves min b , w 1 n n X i =1 K X k =1 I ( y i 6 = k )[ b k + w T k x i + 1] + + λ K X k =1 d X j =1 | w kj | (2.3) under the sum-to-z ero constraint. F or linear cla ssification rules, we start with f k ( x ) = b k + P d j =1 w kj x j , k = 1 , . . . , K . The sum-to-ze r o constraint then b e- comes K X k =1 b k = 0 , K X k =1 w kj = 0 , j = 1 , . . . , d. (2.4) The L 1 MSVM trea ts a ll w kj ’s equally without distinction. As opp osed to this, we ta ke into acco unt the fact that some of the co efficients are asso ciated with the same c ov a r iate, therefo re it is mor e natural to treat them as a group rather than sepa rately . Define the weigh t ma trix W of size K × d such that its ( k , j ) entry is w kj . The structure of W is shown as follows: H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 153 x 1 · · · x j · · · x d Class 1 w 11 · · · w 1 j · · · w 1 d · · · · · · · · · · · · · · · Class k w k 1 · · · w kj · · · w kd · · · · · · · · · · · · · · · Class K w K 1 · · · w K j · · · w K d . Throughout the paper, w e use w k = ( w k 1 , . . . , w kd ) T to repr esent the k th row v ector of W , and w ( j ) = ( w 1 j , . . . , w K j ) T for the j th column vector of W . According to Crammer and Sing e r ( 20 01 ), the v alue b k + w T k x defines the similarity scor e of the cla ss k , and the predicted label is the index of the row attaining the highest similarity score with x . W e define the sup- norm for the co efficient vector w ( j ) as k w ( j ) k ∞ = max k =1 , ··· ,K | w kj | . (2.5) In this wa y , the importance of each cov aria te x j is directly controlled b y its largest absolute co efficie n t. W e prop ose the sup- norm regularizatio n for MSVM: min b , w 1 n n X i =1 K X k =1 I ( y i 6 = k )[ b k + w T k x i + 1] + + λ d X j =1 k w ( j ) k ∞ , sub ject to 1 T b = 0 , 1 T w ( j ) = 0 , for j = 1 , . . . , d, (2.6) where b = ( b 1 , . . . , b K ) T . The sup-no rm MSVM enc o urages more s parse solutions than the L 1 MSVM, and identifi es imp o rtant v a riables more precise ly . In the following, we des crib e the main motiv ation o f the sup- no rm MSVM, which ma kes it more a ttractive for v aria ble selection tha n the L 1 MSVM. Fir stly , with a sup-norm p enalty , a noise v aria ble is removed if and only if all cor r esp onding K estimated co efficients ar e 0. On the other hand, if a v ar iable is imp orta nt with a p ositive sup-nor m, the sup-norm p enalty , unlike the L 1 pena lt y , do es not put any additional pe na lties on the o ther K − 1 co efficients. This is desira ble since a v ariable will b e kept in the mo del a s lo ng as the sup-nor m o f the K co efficients is p ositive. No further shrink a ge is needed for the remaining co efficients in ter ms of v ar iable s election. F or illustra tion, we plo t the region 0 ≤ t 1 + t 2 ≤ C in Figur e 1 , whe r e t 1 = max( w 11 , w 21 , w 31 , w 41 ) a nd t 2 = ma x( w 12 , w 22 , w 32 , w 42 ). Clea rly , the sup-nor m pena lt y shrinks sum o f tw o maximums cor resp onding to t wo v a riables. This helps to lead to more par simonious mo dels. In short, in co nt rast to the L 1 pena lt y , the sup-norm utilizes the group information of the decision function v ector and consequently the sup-norm MSVM can deliver better v a riable selection. F or three-class pro blems, we show that the L 1 MSVM a nd the new prop ose d sup-norm MSVM give iden tical solutions after adjusting the tuning par ameters, which is due to the sum-to- zero co nstraints on w ( j ) ’s. This equiv a lence, how e ver, do es not hold fo r the ada ptiv e pro cedures introduced in Section 4 . H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 154 0 C C t 1 =max{|w 11 |, |w 21 |, |w 31 |, |w 41 |} t 2 =max{|w 12 |, |w 22 |, |w 32 |, |w 42 |} t 1 +t 2 =C Fig 1 . Il lustr ative plot of the shrinkage pr op erty of the sup-norm. Proposition 2.1 . Whe n K = 3 , the L 1 MSVM ( 2.3 ) and the sup-norm MSVM ( 2.6 ) ar e e quivalent. When K > 3 , our empirical exp erience shows tha t the sup-norm MSVM generally p erforms well in terms o f classifica tion accuracy . Here we would like to point out t w o fundamental differe nc e s b etw een the sup- norm penalty and the F ∞ pena lt y used for g r oup v a riable selection ( Zhao et al. , 2006 ; Zou and Y uan , 2006 ) consider ing their similar express ions. The purpo se of group selection is to select several prediction v ar iables altogether if these predictors work a s a group. Ther efore, ea ch F ∞ term in Zou a nd Y uan ( 2006 ) is based o n the re g ression co efficie nts o f several v ariables whic h b elong to one group, whereas ea ch supnorm p enalty in ( 2.6 ) is a sso ciated w ith only one pre - diction v ar iable. Secondly , in the implementation of the F ∞ , o ne has to decide in adv a nce the num ber o f gro ups and which v ariables b elong to a certain group, whereas in the supnorm SVM eac h v ar ia ble is naturally a sso ciated with its own group and the num b er of gr oups is same as the num ber of cov ar iates. As a rema r k, we p oint o ut that Argyriou et a l. ( 2007 , 20 06 ) pr op osed a similar pena lt y for the purp os e of multi-task feature lea rning. Sp ecifically , they used a mixture of L 1 and L 2 pena lties. They first applied the L 2 pena lt y for each feature acr oss differen t tasks a nd then used the L 1 pena lt y for fea ture selection. In contrast, our p enalty is a combination of the L 1 and supnorm pena lties for m ulticategory class ification. The tuning parameter λ in ( 2.6 ) balances the tradeoff b et ween the data fit and the mo del parsimo ny . A prop er choice o f λ is imp ortant to assure go o d p er- formance of the resulting cla ssifier. If the chosen λ is to o small, the pro c edure tends to overfit the training data and gives a less sparse solution; on the other hand, if λ is to o large , the solution can beco me very s pa rse but pos sibly with H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 155 a low pr ediction power. The choice of the tuning para meter is typically done by minimizing either a n estimate of generalization err or or other related p erfor- mance mea sures. In simulations, we generate an extra independent tuning se t to choose the b est λ . F or r eal da ta ana ly sis, we use le ave-one-out cross v alidatio n of the misclass ification rate to s e lect λ . 3. Computational Algo rithms In this section we show that the optimization pr oblem ( 2.6 ) can b e converted to a linea r programming (LP) problem, and c a n therefo re b e solved using standard LP techniques in p olynomial time. This g reat computationa l adv antage is very impo rtant in real a pplications, esp ecially for lar ge data sets. Let A b e an n × K matching matr ix with its en try a ik = I ( y i 6 = k ) for i = 1 , . . . , n and k = 1 , . . . , K . Firs t we in tro duce sla ck v a riables ξ ik such that ξ ik = b k + w T k x i + 1 + for i = 1 , . . . , n ; k = 1 , . . . , K . (3.1 ) The optimization pr oblem ( 2.6 ) can b e ex pressed as min b , w , ξ 1 n n X i =1 K X k =1 a ik ξ ik + λ d X j =1 k w ( j ) k ∞ , sub ject to 1 T b = 0 , 1 T w ( j ) = 0 , j = 1 , . . . , d, ξ ik ≥ b k + w T k x i + 1 , ξ ik ≥ 0 , i = 1 , . . . , n ; k = 1 , . . . , K . (3.2) T o further simplify ( 3.2 ), we in troduce a seco nd set of slack v ariables η j = k w ( j ) k ∞ = max k =1 ,...,K | w kj | , which add some new co nstraints to the problem: | w kj | ≤ η j , for k = 1 , . . . , K ; j = 1 , . . . , d. Finally write w kj = w + kj − w − kj , where w + kj and w − kj denote the p ositive and negative parts of w kj , resp ectively . Similarly , w + j and w − j resp ectively consist of the positive and negative parts o f comp o nent s in w j . Denote η = ( η 1 , . . . , η d ) T ; then ( 3.2 ) b ecomes min b , w , ξ , η 1 n n X i =1 K X k =1 a ik ξ ik + λ d X j =1 η j , sub ject to 1 T b = 0 , 1 T [ w + ( j ) − w − ( j ) ] = 0 , j = 1 , . . . , d, ξ ik ≥ b k + [ w + k − w − k ] T x i + 1 , ξ ik ≥ 0 , i = 1 , . . . , n ; k = 1 , . . . , K , w + ( j ) + w − ( j ) ≤ η , w + ( j ) ≥ 0 , w − ( j ) ≥ 0 , j = 1 , . . . , d. (3.3) H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 156 4. Adaptiv e P enalt y In ( 2.3 ) a nd ( 2.6 ) , the same weigh ts are used for different v ar iables in the p enalty terms, which may b e to o restrictive, since a smaller p enalty may b e more desire d for those v ariables which are s o impor tant that we wan t to retain them in the mo del. In this section, we suggest that different v ariables should be p enalize d differently accor ding to their relative imp ortance. Ideally , large p enalties should be impose d on redundan t v ariables in o rder to eliminate them from mo dels mo re easily; a nd s ma ll p enalties should b e used on impor tant v aria bles in order to retain them in the final classifier. Mo tiv ated by this, we conside r the follo wing adaptive L 1 MSVM: min b , w 1 n n X i =1 K X k =1 I ( y i 6 = k )[ b k + w T k x i + 1] + + λ K X k =1 d X j =1 τ kj | w kj | , sub ject to 1 T b = 0 , 1 T w ( j ) = 0 , for j = 1 , . . . , d, (4.1) where τ kj > 0 repr esents the weight for co efficient w kj . Adaptive shrink age for each v ar iable has b een prop osed and studied in v a r- ious cont exts of re g ression pr o blems, including the adaptive LASSO for linear regres s ion ( Zou , 2 0 06 ), prop ortiona l hazar d models ( Zhang and Lu , 200 7 ), and quantile regr ession ( W ang et al. , 2 0 07 ; W u and Liu , 2 007b ). In particula r, Zo u ( 2006 ) has established the oracle proper t y of the adaptive LASSO and justified the use of differen t amo un ts of shrink ag e for differen t v ariables. Due to the sp e- cial for m o f the sup-no r m SVM, we consider the following tw o wa ys to employ the adaptive penalties: [I] min b , w 1 n n X i =1 K X k =1 I ( y i 6 = k )[ b k + w T k x i + 1] + + λ d X j =1 τ j k w ( j ) k ∞ , sub ject to 1 T b = 0 , 1 T w ( j ) = 0 , for j = 1 , . . . , d, (4.2) [II] min b , w 1 n n X i =1 K X k =1 I ( y i 6 = k )[ b k + w T k x i + 1] + + λ d X j =1 k ( τ w ) ( j ) k ∞ , sub ject to 1 T b = 0 , 1 T w ( j ) = 0 , for j = 1 , . . . , d, (4.3) where the vector ( τ w ) ( j ) = ( τ 1 j w 1 j , . . . , τ K j w K j ) T for j = 1 , ..., d . In ( 4.1 ), ( 4 .2 ), and ( 4.3 ), the weight s can b e regar de d as lev erage factors, which are adaptiv ely chosen suc h that large p enalties are imposed on coe ffi- cients of unimp ortant cov aria tes a nd small p enalties on co efficients of impor tant ones. Let ˜ w b e the so lution to standard MSVM ( 2.2 ) with the L 2 pena lt y . Our empirical exp erience s uggests that τ kj = 1 | ˜ w kj | H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 157 is a go o d choice for ( 4.1 ) and ( 4.3 ), and τ j = 1 k ˜ w ( j ) k ∞ is a goo d choice fo r ( 4.2 ). If ˜ w kj = 0 , whic h implies the infinite penalty on w kj , we set the co rresp onding co efficient solution ˆ w kj to b e zero . In terms of co mputational iss ue s , a ll thre e pro blems ( 4.1 ), ( 4.2 ), and ( 4.3 ) can b e solved as LP pro blems. Their entire so lutio n paths may be obta ined by some mo difications o f the algor ithms in W ang and Shen ( 2 0 07b ). 5. Simulation In this section, w e demonstrate the perfo r mance o f six MSVM metho ds: the standard L 2 MSVM, L 1 MSVM, sup-norm MSVM, ada ptive L 1 MSVM, and the tw o adaptive s up-norm MSVMs. Three simulation mo dels ar e c onsidered: (1) a linea r example with five classes ; (2) a linear ex a mple with four cla sses; (3) a nonlinear ex ample with thr ee cla s ses. In ea ch simulation setting, n observ ations are s im ulated a s the training data, and another n obse r v ations a re g enerated for tuning the regulariza tion parameter λ for each pro cedure. Therefor e the total sa mple size is 2 n for obtaining the final classifie r s. T o test the accuracy of the cla ssification rules, we a lso independently generate n ′ observ ations as a test set. The tuning parameter λ is selected via a grid sear ch o ver the grid: log 2 ( λ ) = − 14 , − 13 , . . . , 15. When a tie occur s, w e c hoos e the larg er v alue of λ . As we suggest in Section 4 , we use the L 2 MSVM s olution to derive the w eights in the adaptive MSVMs. The L 2 MSVM so lutio n is the final tuned s olution using the separ ate tuning set. Once the weights are ch osen, w e tune the pa rameter λ in the a daptive pro cedure via the tuning set. W e conduct 100 simulations for each classification method under all s e ttings. Each fitted classifier is then ev aluated in terms of its classification accura cy a nd v aria ble selection p erforma nc e . F or each metho d, we rep ort its av e r age testing error , the num ber of correct and inco rrect zero co e fficien ts among K d coeffi- cients, the mo del size as the num ber o f imp or tant ones amo ng the d v a riables, and the num ber of times that the tr ue mo del is c o rrectly identified. The nu m- ber s given in the pa rentheses in the tables a re the standa rd erro rs of the testing error s. W e also summarize the frequency of each v ariable b eing selected o ver 100 runs. All simulations a re done using the optimization so ft w are CPLEX with the AMPL interface ( F oure r et al. , 2 003 ). Mo re information ab out CP LEX can b e found on the ILOG w ebsite http ://www .ilog. com/products/optimization/ . 5.1. Five-Class Ex ample Consider a five-class example, with the input vector x in a 10-dimensiona l space. The first tw o co mpone nts of the input vector a re generated from a mixture H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 158 Gaussian in the following wa y: for each class k , ge nerate ( x 1 , x 2 ) independently from N ( µ k , σ 2 1 I 2 ), with µ i = 2 ( cos([2 k − 1] π / 5) , sin([2 k − 1] π / 5)) , k = 1 , 2 , 3 , 4 , 5 , and the r emaining eight comp onents are i.i.d. generated from N (0 , σ 2 2 ). W e generate the sa me num ber of observ a tions in each class. Here σ 1 = √ 2 , σ 2 = 1 , n = 250, and n ′ = 5 0 , 000. T a ble 1 Classific ation and variable sele ction r esults for the five-class example. TE, CZ, IZ, MS, and CM r efer to the t esting err or, the numb e r of c orr e ct zer os, the numb er of inc orr e ct zer os, the mo del size, and t he numb er of times that the t rue mo del is c orr e ctly identifie d, r e sp e ctively. Method TE CZ IZ MS CM L2 0.454 (0.034) 0.00 0. 00 10.00 0 L1 0.558 (0.022) 24.88 2.81 6.60 21 Adapt-L1 0.553 (0.020) 30.23 2.84 5.14 40 Supnorm 0.453 (0.020) 33.90 0.01 3.39 68 Adapt-supI 0.455 (0.024) 39.92 0.01 2.08 98 Adapt-supII 0.457 (0.046) 39.40 0.09 2.17 97 Ba y es 0.387 (—) 41 0 2 100 T able 1 shows that, in terms of classification a c curacy , the L 2 MSVM, the supnorm MSVM, and the tw o ada ptive supnorm MSVMs ar e among the bes t and their testing errors ar e clo se to each other. In terms of other measurements such as the num ber of correc t/ incorrect zeros, the model size, and the num ber of times that the tr ue mo del is cor rectly identified, the supno rm MSVM pr o cedures work muc h better than o ther MSVM metho ds. T able 2 shows the frequency of each v aria ble b eing selected by each pro cedure in 100 runs. The t ype I sup- no rm MSVM per forms the b est among all. Over- all the ada ptiv e MSVMs show significant improv emen t ov er the non-adaptive classifiers in ter ms of b oth class ification accura cy and v aria ble selection. T a ble 2 V ariable sele ct ion f re quency r esults for t he five-c lass example. Selection F requency Method x 1 x 2 x 3 x 4 x 5 x 6 x 7 x 8 x 9 x 10 L2 100 100 100 100 100 100 100 100 100 100 L1 100 100 59 55 60 58 56 61 57 54 Adapt-L1 100 100 44 40 43 37 39 41 35 35 Supnorm 100 100 15 17 20 1 7 14 20 17 19 Adapt-supI 100 100 1 1 0 2 1 1 1 1 Adapt-supII 100 100 2 2 2 2 2 2 3 2 5.2. F our-Class Line ar Example In the simulation example in Sectio n 5.1 , the informa tive v aria bles ar e impo rtant for all classes . In this section, we co ns ider an example wher e the infor mative v ari- H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 159 ables are imp ortant for some class es but not imp orta n t for other cla sses. Sp e cif- ically , we g enerate four i.i.d impor ta n t v ariables x 1 , x 2 , x 3 , x 4 from Unif[ − 1 , 1] as w ell as six indep endent i.i.d noise v ariables x 5 , . . . , x 10 from N (0 , 8 2 ). Define the functions f 1 = − 5 x 1 + 5 x 4 , f 2 = 5 x 1 + 5 x 2 , f 3 = − 5 x 2 + 5 x 3 , f 4 = − 5 x 3 − 5 x 4 , and set p k ( x ) = P ( Y = k | X = x ) ∝ exp( f k ( x )) , k = 1 , 2 , 3 , 4 . In this exa mple, we set n = 200 and n ′ = 4 0 , 000. Note that x 1 is not imp ortant for distinguishing class 3 a nd class 4 . Similarly , x 2 is noninfor mative for class 1 a nd class 4, x 3 is noninformative fo r cla s s 1 and class 2, a nd x 4 is noninfor mative for class 2 and class 3. T a ble 3 Classific ation and variable sele ction r esults for the four-class line ar example. Method TE CZ IZ MS CM L2 0.336 (0.063) 0.0000 0.0000 10.00 0 L1 0.340 (0.069) 2.5100 0.1600 9.99 0 Adapt-L1 0.320 (0.079) 18.2300 0.2600 7.21 21 Supnorm 0.332 (0.070) 0.8500 0.1400 9.98 0 Adapt-supI 0.327 (0.076) 9.3300 0.1400 7.83 15 Adapt-supII 0.326 (0.071) 9.9000 0.1400 7.69 9 Ba y es 0.1366 (—) 32 0 4 100 T able 3 summarizes the p erformanc e o f v a r ious pr o cedures, and T able 4 shows the frequency of each v a riable b eing selected b y each pro cedure in 100 runs. Due to the increased difficulty of this problem, the p erforma nces of all metho ds are not a s go o d a s that of the five-class example. F rom these r esults, we can see that the adaptive procedur es w ork better than the no n-adaptive pr o cedures bo th in terms of b oth cla ssification a ccuracy and v ariable selection. F ur thermore, the adaptive L 1 MSVM p erfor ms the b est overall. This is due to the difference b e- t ween the L 1 and the supnorm penalties . Our prop osed s upnorm p enalty trea ts all co efficients of one v ariable corr esp onding to different classes as a gro up a nd remov es the v ar iable if it is non- informative acros s all class lab els. By design of this exa mple, imp ortant v ariables hav e zero co e fficien ts for certain cla sses. As a result, our s upnorm pena lt y do e s no t deliver the b est p erfo r mance. Nevertheless, the adaptive supnorm pro cedures still p erform rea sonably . H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 160 T a ble 4 V ariable se le ct ion f r e quency r esults for t he four-class example. Selection F requency Method x 1 x 2 x 3 x 4 x 5 x 6 x 7 x 8 x 9 x 10 L2 100 100 100 100 100 100 100 100 100 100 L1 100 100 100 100 100 99 100 100 100 100 Adapt-L1 100 100 100 100 55 53 59 56 49 4 9 Supnorm 100 100 100 100 100 99 100 100 100 99 Adapt-supI 100 100 100 100 67 64 71 60 58 63 Adapt-supII 100 100 100 100 65 66 65 58 56 59 −3 −2 −1 0 1 2 3 −6 −4 −2 0 2 4 6 3 3 3 1 2 2 2 3 2 2 2 1 2 3 3 2 3 3 2 1 2 2 2 3 1 2 3 1 2 1 2 2 2 1 3 1 1 3 2 1 3 1 2 1 1 1 3 2 2 1 3 2 1 1 1 1 1 3 1 3 3 1 3 1 2 3 2 1 1 2 1 2 3 2 3 3 3 1 1 2 2 1 3 3 3 1 1 2 2 2 2 3 2 3 2 2 1 2 2 3 3 2 3 1 2 1 3 2 2 3 1 3 3 1 1 1 2 3 2 2 3 1 1 3 1 1 2 2 2 1 2 3 1 1 1 2 2 1 2 1 3 1 2 3 2 2 3 3 1 1 1 3 1 3 2 2 2 3 3 1 3 1 3 2 1 2 1 3 2 2 3 2 1 2 3 2 1 1 1 1 2 3 1 1 2 3 2 2 1 3 3 1 1 1 1 1 1 2 1 1 x 1 x 2 Fig 2 . The Bayes b oundary for the nonline ar thr ee-class example. 5.3. Nonline ar Example In this nonlinear 3-class example, we fir s t g e ne r ate x 1 ∼ Unif[ − 3 , 3] and x 2 ∼ Unif[ − 6 , 6]. Define the functions f 1 = − 2 x 1 + 0 . 2 x 2 1 − 0 . 1 x 2 2 + 0 . 2 , f 2 = − 0 . 4 x 2 1 + 0 . 2 x 2 2 − 0 . 4 , f 3 = 2 x 1 + 0 . 2 x 2 1 − 0 . 1 x 2 2 + 0 . 2 , and set p k ( x ) = P ( Y = k | X = x ) ∝ exp( f k ( x )) , k = 1 , 2 , 3 . The Bay es bo undary is plotted in Figure 2 . W e also genera te three no ise v ariables x i ∼ N (0 , σ 2 ), i = 3 , 4 , 5 . In this example, we set σ = 2 and n ′ = 4 0 , 000. T o achieve nonlinear classificatio n, we fit the nonlinea r MSVM by including the five ma in effects, their s quare ter ms, and their cross pro ducts as the basis functions. The results with n = 20 0 are summarized in T ables 5 and 6 . Clearly , H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 161 T a ble 5 Classific ation and variable sele ction r esults using se c ond or der p olynomial b asis functions for the nonline ar example in Se ction 5.3 with n = 200 . Method TE CZ IZ MS CM L2 0.167 (0.013) 0.00 0. 00 20.00 0 L1 0.151 (0.012) 21.42 0.03 14.91 0 Adapt-L1 0.140 (0.010) 43.13 0.00 6.92 31 Supnorm 0.150 (0.012) 22.70 0.01 14.43 0 Adapt-supI 0.140 (0.010) 40.84 0.00 7.21 31 Adapt-supII 0.140 (0.011) 41.50 0.00 6.21 36 Ba y es 0.120 (—) 52 0 3 100 T a ble 6 V ariable se le ct ion fr e quency r esults for the nonline ar e xample using se c ond or der p olynomial b asis functions with n = 200 . Selection F requency Method x 1 x 2 1 x 2 2 x 2 x 3 x 4 x 5 x 2 3 x 2 4 x 2 5 L2 100 100 100 100 100 100 100 100 100 100 L1 100 100 100 69 44 50 43 80 84 89 Adapt-L1 100 100 100 33 21 21 20 24 18 22 Supnorm 100 100 100 67 37 42 34 84 80 75 Adapt-supI 100 100 100 31 21 21 26 21 25 24 Adapt-supII 100 100 100 22 18 12 19 18 16 18 x 1 x 2 x 1 x 3 x 1 x 4 x 1 x 5 x 2 x 3 x 2 x 4 x 2 x 5 x 3 x 4 x 3 x 5 x 4 x 5 L2 100 100 100 100 100 100 100 100 100 100 L1 80 55 57 65 86 88 90 69 72 70 Adapt-L1 31 20 18 20 28 26 31 20 17 22 Supnorm 79 62 58 55 87 89 91 62 68 73 Adapt-supI 31 22 17 28 30 29 30 24 16 25 Adapt-supII 25 15 14 19 30 23 22 16 17 17 the adaptive L 1 SVM and the tw o a daptive sup-no r m SVMs deliver more a c- curate and sparse classifier s than the other methods. In this example, there are c orrelatio ns a mong cov ar iates and co nsequently the v ariable selection task bec omes mor e challenging. This difficult y is reflected in the v aria ble selection frequency r epo rted in T a ble 6 . Despite the difficulty , the adaptive pro cedures are able to r emov e nois e v aria bles reasonably well. T o examine the perfo rmance of v arious metho ds using a richer set of ba sis functions, we also fit nonlinear MSVMs via p olynomial basis of degree 3 with 55 basis functions. Results of classificatio n and v a riable selection with n = 200 and 4 00 are rep orted in T ables 7 a nd 8 resp ectively . Compared with the case o f the second order p olynomial basis, cla ssification testing erro r s using the third order p olyno mial ba sis ar e muc h la rger for the L 2 , L 1 , a nd supnorm MSVMs, but similar for the adaptive proc edures. Due to the lar ge basis set, none of the metho ds can identif y the cor r ect mo del. How ever, the a daptive pro cedures can eliminate more noise v ariables tha n the non- adaptive pro cedure s. This further demonstrates the effectiveness of adaptive w eigh ting. The results of v ar iable selection fre q uency (not r ep orted due to lack of space) s how a similar pattern as that of the second o rder p oly no mial. When n increase s from 2 00 a nd 400, H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 162 T a ble 7 Classific ation and variable sele ction r esults using thir d or der p olyno mial b asis functions for the nonline ar example in Se c tion 5.3 with n = 200 . Method TE CZ IZ MS CM L2 0.213 (0.018) 0.00 0.00 55.00 0 L1 0.170 (0.015) 59.22 0.57 40.44 0 Adapt-L1 0.138 (0.015) 120.71 0.17 19.28 0 Supnorm 0.171 (0.015) 60.08 0.61 40.06 0 Adapt-supI 0.141 (0.016) 114.29 0.17 20.22 0 Adapt-supII 0.142 (0.015) 106.78 0.22 19.75 0 Ba y es 0.120 (—) 157 0 3 100 T a ble 8 Classific ation and variable sele ction r esults using thir d or der p olyno mial b asis functions for the nonline ar example in Se c tion 5.3 with n = 400 . Method TE CZ IZ MS CM L2 0.162 (0.008) 0.00 0.00 55.00 0 L1 0.143 (0.008) 60.13 0.34 40.50 0 Adapt-L1 0.124 (0.004) 139.71 0.00 11.01 0 Supnorm 0.144 (0.010) 60.51 0.32 40.24 0 Adapt-supI 0.125 (0.005) 139.41 0.00 10.37 0 Adapt-supII 0.125 (0.004) 132.96 0.00 10.96 0 Ba y es 0.120 (—) 157 0 3 100 we can see that classifica tion accur acy for all metho ds increa ses as expec ted. Int erestingly , compared to the case of n = 200 , the p erfor ma nce of v ariable selection with n = 400 for non-adaptive pro cedures stays r elatively the same, while improves dramatically fo r the ada ptiv e proc e dures. 6. Real Example DNA micr o array technology has made it p ossible to monitor mRNA expr essions of thousands of genes sim ultaneously . In this section, we apply o ur six differen t MSVMs on the children c ancer data set in Khan et a l. ( 2001 ). K han et al. ( 20 01 ) classified the s mall round blue cell tumors (SRBCTs) of childhoo d into 4 clas s es; namely neuro blastoma (NB), r ha bdo m yosarcoma (RMS), non-Ho dgk in lym- phoma (NHL), and the Ewing family of tumor s (E WS) using cDNA gene expres- sion profiles. After filtering, 2308 gene profiles out of 6567 genes a re given in the data set, av ailable at http:/ /rese arch.nhgri.nih.gov/microarray/Supplement/ . The data set includes a training set o f size 6 3 a nd a test set of size 20. The dis- tributions of the four distinct tumor categories in the training and test s e ts are given in T able 9 . Note that Bur kitt lymphoma (BL) is a s ubset of NHL. T o analyze the data, we first standardize the data sets by a pplying a simple linear transforma tion based on the tra ining data . Sp ecifically , we standa r dize the expression ˜ x gi of the g -th gene of sub ject i to obtain x gi by the following formula: x gi = ˜ x gi − 1 n P n j =1 ˜ x gj sd ( ˜ x g 1 , · · · , ˜ x gn ) . H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 163 T a ble 9 Class distribution of the micr o arr ay example. Data set NB RMS BL EWS T otal T raining 12 20 8 23 63 T est 6 5 3 6 20 T a ble 10 Classific ation r esults of the mic ro arr ay data using 200 genes. Selected genes Pe nalt y T esting Error T op 100 Bottom 100 L2 0 100 100 L1 1/20 62 1 Adp-L1 0 53 1 Supnorm 1/20 53 0 Adp-supI 1/20 50 0 Adp-supII 1/20 47 0 Then we rank all gene s using their marginal relev ance in class separ ation b y adopting a simple criter ion used in Dudoit et a l. ( 2002 ). Sp ecifically , the rele- v ance meas ure for gene g is defined to b e the r atio of b etw een classes sum o f squares to within class sum o f squares as follows: R ( g ) = P n i =1 P K k =1 I ( y i = k )( ¯ x ( k ) · g − ¯ x · g ) 2 P n i =1 P K k =1 I ( y i = k )( x ig − ¯ x ( k ) · g ) 2 , (6.1) where n is the size of the training set, ¯ x ( k ) · g denotes the av erage express io n level of gene g for clas s k observ ations, and ¯ x · g is the overall mea n expr ession level of gene g in the training set. T o examine the per formance of v ariable selection o f all different metho ds, we s elect the top 1 0 0 and bo tto m 100 genes as cov ar iates a c cording the r elev ance measure R . O ur main go al here is to get a set of “imp or tant” genes and also a set of “unimpor tant” ge nes, a nd to see whether our metho ds can effectively remov e the “unimp o rtant” genes. All six MSVMs with different p enalties are applied to the training set. W e use leave-one-out cross v alidation on the sta ndardized tr a ining data with 2 00 genes for the pur po se of tuning para meter selection and then apply the resulting classifiers on the testing data. The results are tabulated in T able 10 . All metho ds hav e either 0 or 1 misclas sification on the testing set. In terms of gene selection, three sup-norm MSVMs a re able to eliminate a ll b ottom 100 genes and they use around 50 genes out of the top 100 genes to achiev e compar able cla ssification per formance to o ther metho ds. In Figure 3 , we plot heat maps of both training and testing sets on the left and right pa nels resp ectively . In these heat maps, r ows r epresent 5 0 genes se- lected by the Typ e I sup-norm MSVM a nd columns re pr esent patients. The g ene expression v a lues are reflec ted b y colors on the plo t, with red r epresenting the highest expressio n level and blue the low est expre ssion level. F or visua liz a tion, we group columns within eac h cla ss together and use hiera rchical cluster ing with correla tion distance on the training s e t to order the g enes so that g enes close to each other hav e simila r expressions. F r o m the left panel on Figure 3 , we can H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 164 Training data Test data EWS BL NB RMS EWS BL NB RMS Homo sapien s incomplete cDNA for a mutated allele of a myosin class I, myh−1c Homo sapien s incomplete cDNA for a mutated allele of a myosin class I, myh−1c transmembra ne protein apelin; peptid e ligand for APJ receptor recoverin glycine cleav age system protein H (aminomethyl carrier) Homo sapien s mRNA full length insert cDNA clone EUROIMAGE 45620 thioredoxin re ductase 1 cadherin 2, N −cadherin (neuronal) microtubule− associated protein 1B postmeiotic s egregation increased 2−like 12 glucose−6−p hosphate dehydrogenase protein tyrosi ne phosphatase, non−receptor type 12 transcriptiona l intermediary factor 1 growth assoc iated protein 43 ESTs dihydropyrimi dinase−like 2 kinesin family member 3C fibroblast gro wth factor receptor 4 presenilin 2 ( Alzheimer disease 4) sarcoglycan, alpha (50kD dystrophin−associated glycoprotein) nuclear recep tor coactivator 1 ESTs glycine amidi notransferase (L−arginine:glycine amidinotransferase) mesoderm sp ecific transcript (mouse) homolog lymphocyte−s pecific protein 1 Human DNA for insulin−like growth factor II (IGF−2); exon 7 and additional ORF neurofibromin 2 (bilateral acoustic neuroma) plasminogen activator, tissue interleukin 4 r eceptor Wiskott−Aldri ch syndrome (ecezema−thrombocytopenia) proteasome ( prosome, macropain) subunit, beta type, 8 (large multifunctional protease 7) major histoco mpatibility complex, class II, DM alpha pim−2 oncog ene ESTs proteasome ( prosome, macropain) subunit, beta type, 10 protein kinase , cAMP−dependent, regulatory, type II, beta postmeiotic s egregation increased 2−like 3 Rho−associa ted, coiled−coil containing protein kinase 1 EST translocation protein 1 Fc fragment o f IgG, receptor, transporter, alpha follicular lymp homa variant translocation 1 antigen identi fied by monoclonal antibodies 12E7, F21 and O13 caveolin 1, ca veolae protein, 22kD ATPase, Na+ /K+ transporting, alpha 1 polypeptide cyclin D1 (PR AD1: parathyroid adenomatosis 1) protein tyrosi ne phosphatase, non−receptor type 13 (APO−1/CD95 (Fas)−associated phosphatase) v−myc avian myelocytomatosis viral oncogene homolog Fig 3 . He at maps of the micr o arr ay data. The left and right p anels r epr esent the tr aining and testing set s r esp ectively. observe four blo ck structure s as so ciated with four c lasses. This implies that the 50 genes s elected ar e highly informative in predicting the tumor types. F or the testing set shown on the right pane l, we can still see the four blo cks although the str ucture and pattern a re not as clea n as the training set. It is interesting to note that sev eral genes in the testing set hav e higher expression lev els, i.e., more red, than the training set. In summar y , we conclude that the pr op osed sup-norm MSVMs a re indeed effectiv e in p erfor ming simultaneous classification and v ar iable selection. 7. Discuss ion As po int ed out in Lafferty and W as serman ( 2006 ), sparse learning is an imp o r- tant but challenging iss ue for high dimensional da ta. In this pap er, we propo se a new re gularizatio n metho d whic h applies the sup-norm p enalty to the MSVM to a ch ieve v ariable selec tio n. Thro ugh the new pe nalty , the natural group effect of the coe fficie n ts ass o ciated with the same v ar iable is em bedded in the reg- ularization framework. As a result, the sup-norm MSVMs can per form b etter v aria ble s election and deliver mor e parsimo nious clas sifiers than the L 1 MSVMs. Moreov er, our results show that the adaptive pro cedures work very well a nd im- prov e the corresp onding nonadaptive pro cedur es. The a daptive L 1 pro cedure can in some settings be as g o o d as and sometimes b etter than the adaptiv e supnorm pro cedur e s. As a future res earch dire c tion, we will further inv estigate the theoretica l proper ties of pro po sed methods. In some problems, it is possible to form gro ups among cov ar iates. As ar g ued in Y uan and Lin ( 2006 ) and Zou and Y uan ( 2006 ), it is advis able to use such group information in the model building pro cess to improve accura cy of the H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 165 prediction. The no tion of “gro up lasso” has als o b een studied in the context of learning a kernel ( Micchelli and Pon til , 2007 ). If such kind of group information is av aila ble for m ultica tegory classification, there will be tw o kinds of gro up information av aila ble for mo del building, one type of gro up formed b y the same cov a riate c orresp onding to differen t class es as considered in the pap er and the other kind formed among cov ar iates. A future research direction is to combine bo th group informatio n to construct a new multicategory clas s ification method. W e be lieve that such p otential classifiers can o utper form those without using the additional infor mation. This paper fo cuses on the v ar iable selection issue for supervis ed le a rning. In pr actice, semi-sup ervised le a rning is o ften enco un tered, and many metho ds hav e b een developed including Zhu et al. ( 2003 ) and W ang and Shen ( 2 0 07a ). Another future topic is to generalize the sup-no rm p enalty to the c o nt ext of semi-sup ervised learning . App endix Pro of of Prop o sition 2.1 : Without loss of g enerality , assume that { w 1 j , w 2 j , w 3 j } are all nonzero. Because of the sum-to-zer o constraint w 1 j + w 2 j + w 3 j = 0 , there must be one comp onent out of { w 1 j , w 2 j , w 3 j } has a differen t sign from the other t w o. Suppose the sign of w 1 j differs from the other t w o and then | w 1 j | = | w 2 j | + | w 3 j | b y the sum-to-zero constra in t. Conseq uen tly , we hav e | w 1 j | = max {| w 1 j | , | w 2 j | , | w 3 j |} . Therefor e, P 3 k =1 | w kj | = 2 k w ( j ) k ∞ . The equiv- alence of problem ( 2.2 ) with the tuning parameter λ and problem ( 2.6 ) with the tuning para meter 2 λ can b e then established. This completes the pr o of. Literature Cited Argyriou, A. , Evge niou, T. a nd M., P. (2006). Multi-task feature lear ning. Neur al Information Pr o c essing Systems , 19 . Argyriou, A. , Evgeniou, T. and M., P. (200 7). Co nvex m ulti-task feature learning. Machine L e arning . T o app ear. Boser, B. E. , G u y o n, I . M. and V apnik, V. (1992). A tr a ining a lgorithm for optimal margin classifiers. In Fifth Annual ACM Workshop on Computational L e arning The ory . ACM Press, Pittsburgh, P A, 144– 1 52. Bradley, P . S. and Mangasarian, O. L. (19 98). F ea ture selection via c o n- cav e minimization and s uppor t vector machines. In Pr o c. 15th International Conf. on Machine L e arning . Mo rgan Kaufmann, San F ra ncisco, CA, 82– 90. Christianini, N. and Sha we-T a ylor, J. (20 0 0). An intr o duction t o supp ort ve ctor machines and other kernel-b ase d le arning metho ds . Cambridge Univ er- sity P ress. Crammer, K. and Singer, Y. (2001). On the algorithmic implemen tation of m ulticlass kernel-based v ector machines. Journal of Machine L e arning R ese ar ch , 2 265–2 92. H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 166 Dudoit, S. , Fridl y and, J. and Speed, T. (200 2). Comparis on of discrimi- nation methods for the classificatio n of tumors using gene express ion data. Journal of Americ an St atistic al Ass o ciation , 97 77–8 7. MR19633 89 F ourer, R. , Ga y, D. and Kernighan, B. (2003 ). AMPL: A Mo de ling L an- guage for Mathematic al Pr o gr amming . Duxbury Pr ess. Khan, J. , Wei, J. S. , Ringn ´ er, M. , , Saal, L. H . , L a danyi, M. , Wester- mann, F. , Ber tho ld, F. , Schw ab, M. , Antonescu, C. R. , Peterson, C. and Mel tzer, P . S. (2001). Clas sification and dia gnostic prediction of cancers using g ene expression profiling a nd a rtificial neural net w orks. Natu r e Me dicine , 7 673 –679 . Laffer ty, J. and W asserman, L . (200 6). Challenges in s tatistical machine learning. St atistic a S inic a , 16 307 –323. MR22672 37 Lee, Y. , Kim, Y. , Lee, S. a nd Koo, J.-Y. (2 006). Structure d multicategory suppo rt vector machine with a nov a decomp osition. Biometrika , 93 55 5–571 . MR22614 42 Lee, Y. , Lin, Y. and W ahba, G. (2004). Multicateg o ry supp or t vector ma- chines, theor y , and application to the classification of microarr ay data and satellite radiance da ta. Journal of Americ an Statistic al Asso cia tion , 99 67– 81. MR20542 87 Liu, Y. and Shen, X. (20 06). Multicateg ory ψ -learning. Journ al of the Amer- ic an S tatistic al A sso ciation , 101 50 0–509 . MR22561 70 Liu, Y . a nd Wu, Y . (20 07). V a r iable selection v ia a combination of the l 0 and l 1 pena lties. Journal of Computation and Gr ap hic al Statistics , 16 7 82–79 8. Liu, Y. , Zhang, H. H. , P ark, C. a nd Ahn, J. (20 07). Supp ort vector machines with adaptive l q pena lties. Computational Statistics and Data Analysis , 51 6380– 6394. Micchelli, C. and P ontil, M. (2007). F eature space p er spe c tiv es for le arning the kernel. Machine L e arning , 66 2 97–3 1 9. Sch ¨ olkopf, B. and Smola, A . J. (2002). L e arning with Kernels . MIT Press . MR19499 72 V apnik, V. (1995). The Natu r e of St atistic al L e arning The ory . Springer -V erla g, New Y o rk. MR13679 65 V apnik, V. (199 8). St atistic al le arning t he ory . Wiley . MR16412 50 W ang, H. , Li, G. and Jiang, G. (2 007). Robust regr ession shr ink age and con- sistent v ar ia ble selection via the la d-lasso. Journ al of Business and Ec onomi cs Statistics , 25 3 47–35 5. W ang, J. and Shen, X. (2007a). Large margin semi-s uper vised le arning. Jour- nal of Machine L e arning R ese ar ch , 8 18 67–18 91. W ang, L. and Sh en, X. (20 07b). On l 1 -norm multi-class supp o rt vector ma- chines: metho dolo gy and theory . Journal of the A meric an St atistic al Asso ci- ation , 102 58 3–594 . W ang, L. , Z h u , J. and Zo u, H . (200 6). The doubly regularize d suppor t vector machine. Statistic a Sinic a , 16 5 89–61 5. MR22672 51 Weston, J. , El isseeff, A. , Sch ¨ olkopf, B. and Tipping, M. (2 003). Use of the zero-norm with linear mo dels and kernel methods. Journ al of Machi ne L e arning R ese ar ch , 3 1439 –1461 . MR20207 66 H.H. Z hang et al./V ariable sele ction for multic ate gory SVM 167 Weston, J. and W a tkins, C. (199 9). Multiclass suppor t v ector ma chines. In Pr o c e e dings of ESA NN99 (M. V er le ysen, ed.). D. F acto Pr ess. Wu, Y . and Liu, Y. (2007a). Robust truncated-hing e-loss supp ort vector ma- chines. Journal of the Americ an S tatistic al A sso ciation , 102 974 –983. Wu, Y. and Liu, Y. (200 7b). V ariable selection in qua n tile regr ession. Statistic a Sinic a . T o a ppea r. Yuan, M. and Lin, Y. (200 6). Mo del sele ction and estimation in r egressio n with group e d v ar iables. Journal of t he R oya l S tatistic al S o ciety, Series B , 68 49–67 . MR22125 74 Zhang, H . H. , Ahn, J. , Lin, X. and P ark, C. (2006). Gene selection using suppo rt vector mac hines with nonconv ex penalty . Bio informatics , 22 88 –95. Zhang, H. H . and L u, W. (200 7). Adaptive-lasso for cox’s prop ortiona l hazar d mo del. B iometrika , 94 691–7 03. Zhao, P. , Rocha, G. and Y u, B. (2006). Group ed and hierarchical mo del selection throug h comp osite absolute p enalties. T ec hnical Rep ort 703 , De- partment o f Statistics Universit y of Califor nia at Berkeley . Zhu, J. , Hastie, T. , R osset, S . and Tibshirani, R. (2003). 1-no rm suppo rt vector ma chines. Neu r al In formation Pr o c essing Systems , 16 . Zou, H. (2006). The a da ptive lass o and its or acle prop erties. Journal of t he Americ an Statistic al Asso ciation , 101 1 418–1 429. MR22794 69 Zou, H. and Yuan, M. (2006). The f ∞ -norm supp ort v ector mac hine. Statis- tic a Sinic a . T o app ear .

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment