An efficient simulation algorithm based on abstract interpretation

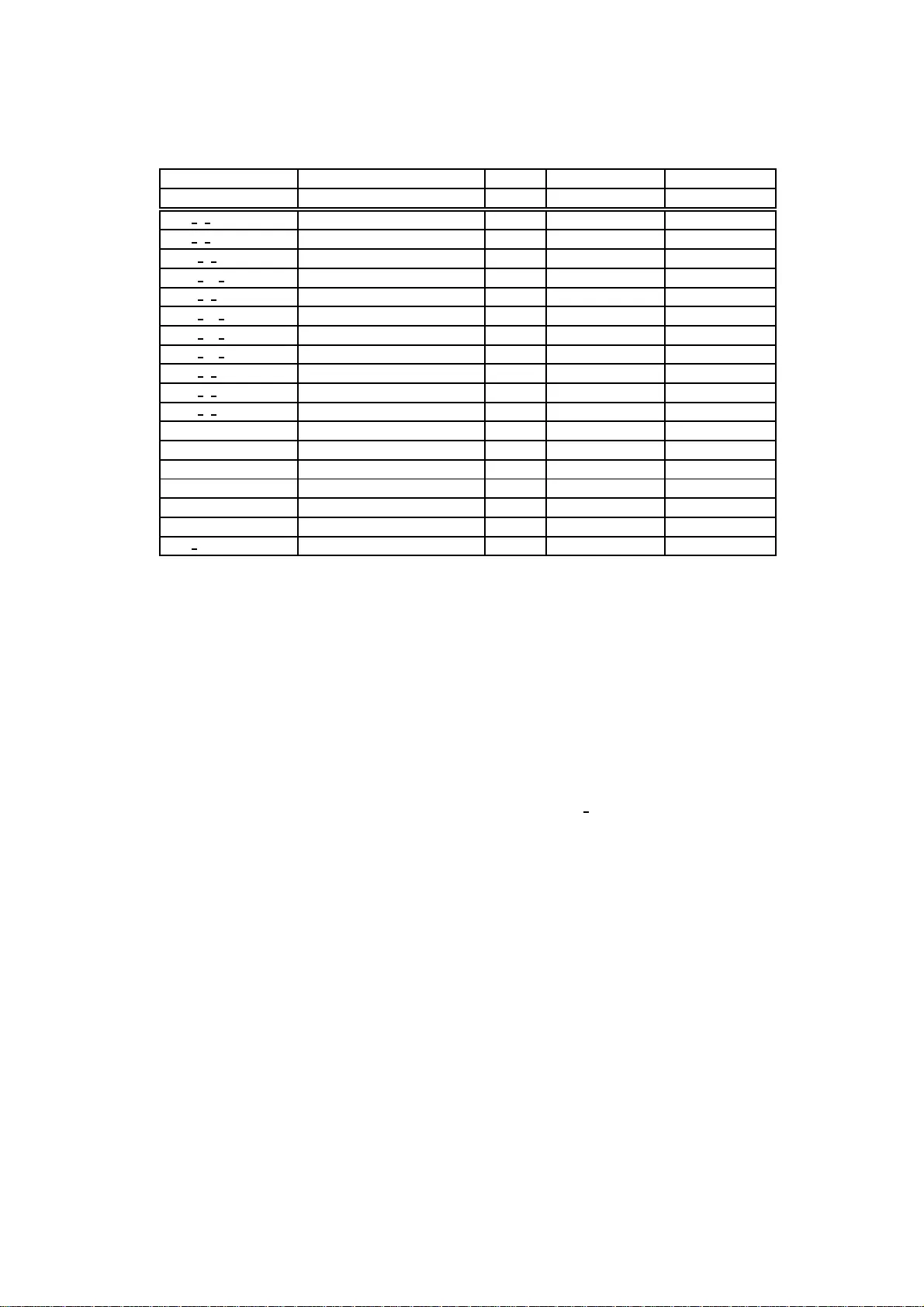

A number of algorithms for computing the simulation preorder are available. Let $\Sigma$ denote the state space, $\rightarrow$ the transition relation, and $P_{\mathrm{sim}}$ the partition of $\Sigma$ induced by simulation equivalence. The algorithms by Henzinger, Henzinger, Kopke …
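To make these objects concrete, here is a minimal sketch of a naive fixpoint computation of the simulation preorder and of the partition induced by simulation equivalence. It is not the algorithm proposed in this paper, nor any of the algorithms cited above; it is only the textbook refinement loop. The state labeling `label`, the function names, and the small example system are assumptions introduced for illustration.

```python
from itertools import product

def simulation_preorder(states, trans, label):
    """Return the set of pairs (s, t) such that t simulates s (naive fixpoint)."""
    succ = {s: set() for s in states}
    for a, b in trans:
        succ[a].add(b)
    # Start from all pairs of equally labeled states (assumed labeling).
    rel = {(s, t) for s, t in product(states, repeat=2) if label[s] == label[t]}
    changed = True
    while changed:
        changed = False
        for s, t in list(rel):
            # Drop (s, t) if some move s -> s2 cannot be matched by
            # any move t -> t2 with (s2, t2) still in the relation.
            if any(all((s2, t2) not in rel for t2 in succ[t]) for s2 in succ[s]):
                rel.discard((s, t))
                changed = True
    return rel

def simulation_partition(states, rel):
    """Group states that simulate each other (simulation equivalence)."""
    return {
        frozenset(t for t in states if (s, t) in rel and (t, s) in rel)
        for s in states
    }

# Hypothetical example: states 0 and 2 behave alike; state 4 is a dead end.
states = {0, 1, 2, 3, 4}
trans = {(0, 1), (2, 3)}
label = {0: "a", 1: "b", 2: "a", 3: "b", 4: "a"}

rel = simulation_preorder(states, trans, label)
blocks = simulation_partition(states, rel)
```

In this example state 0 simulates state 4, because 4 has no moves to match, but 4 does not simulate 0, so 4 ends up in its own block. The loop rechecks every remaining pair on each pass, which is exactly the cost that more refined algorithms are designed to avoid.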

Authors: Francesco Ranzato, Francesco Tapparo (Dipartimento di Matematica Pura ed Applicata, University of Padova)