Distributed Source Coding for Interactive Function Computation

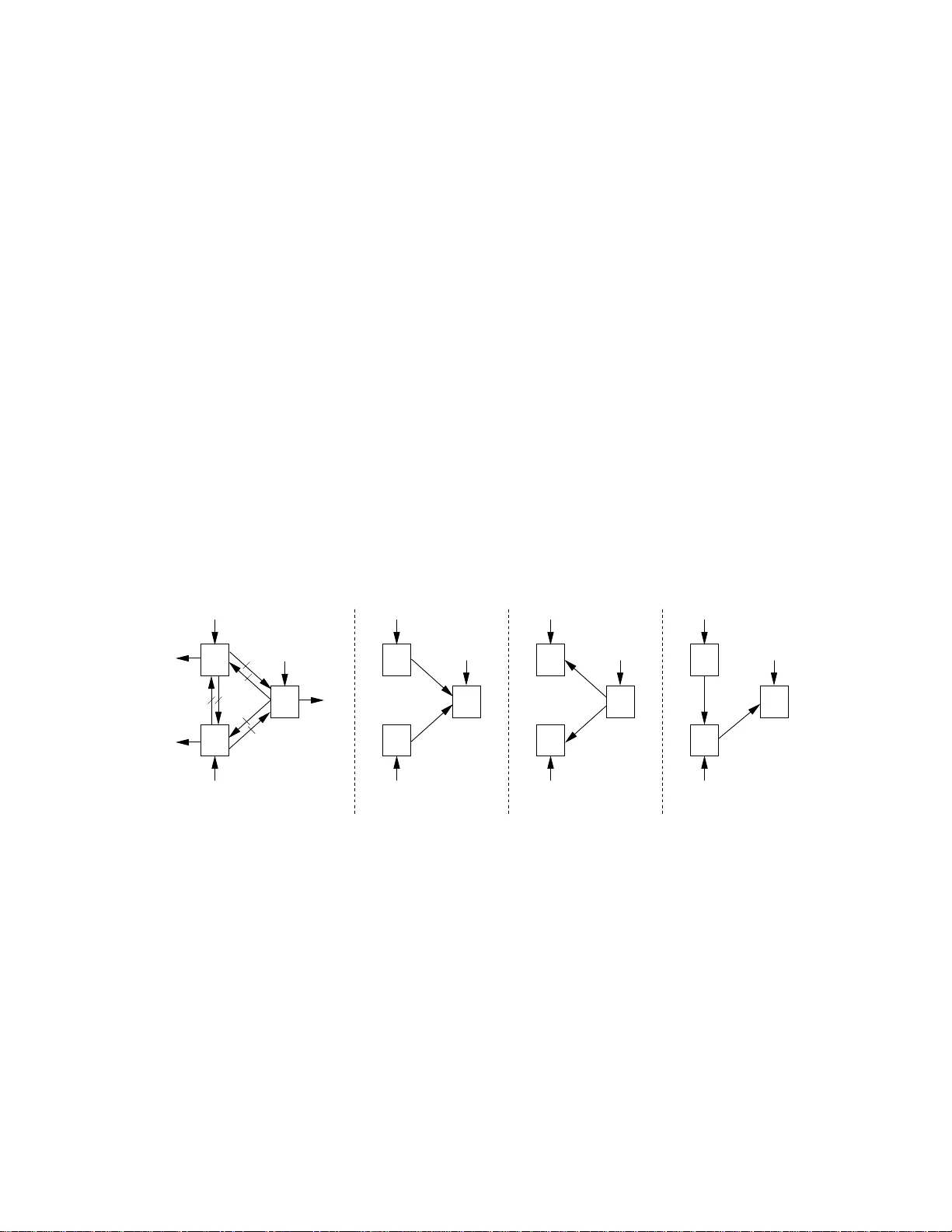

A two-terminal interactive distributed source coding problem with alternating messages for function computation at both locations is studied. For any number of messages, a computable characterization of the rate region is provided in terms of single-…

Authors: Nan Ma, Prakash Ishwar