Distributed Algorithms for Computing Alternate Paths Avoiding Failed Nodes and Links

A recent study characterizing failures in computer networks shows that transient single element (node/link) failures are the dominant failures in large communication networks like the Internet. Thus, having the routing paths globally recomputed on a …

Authors: Amit M. Bhosle, Teofilo F. Gonzalez

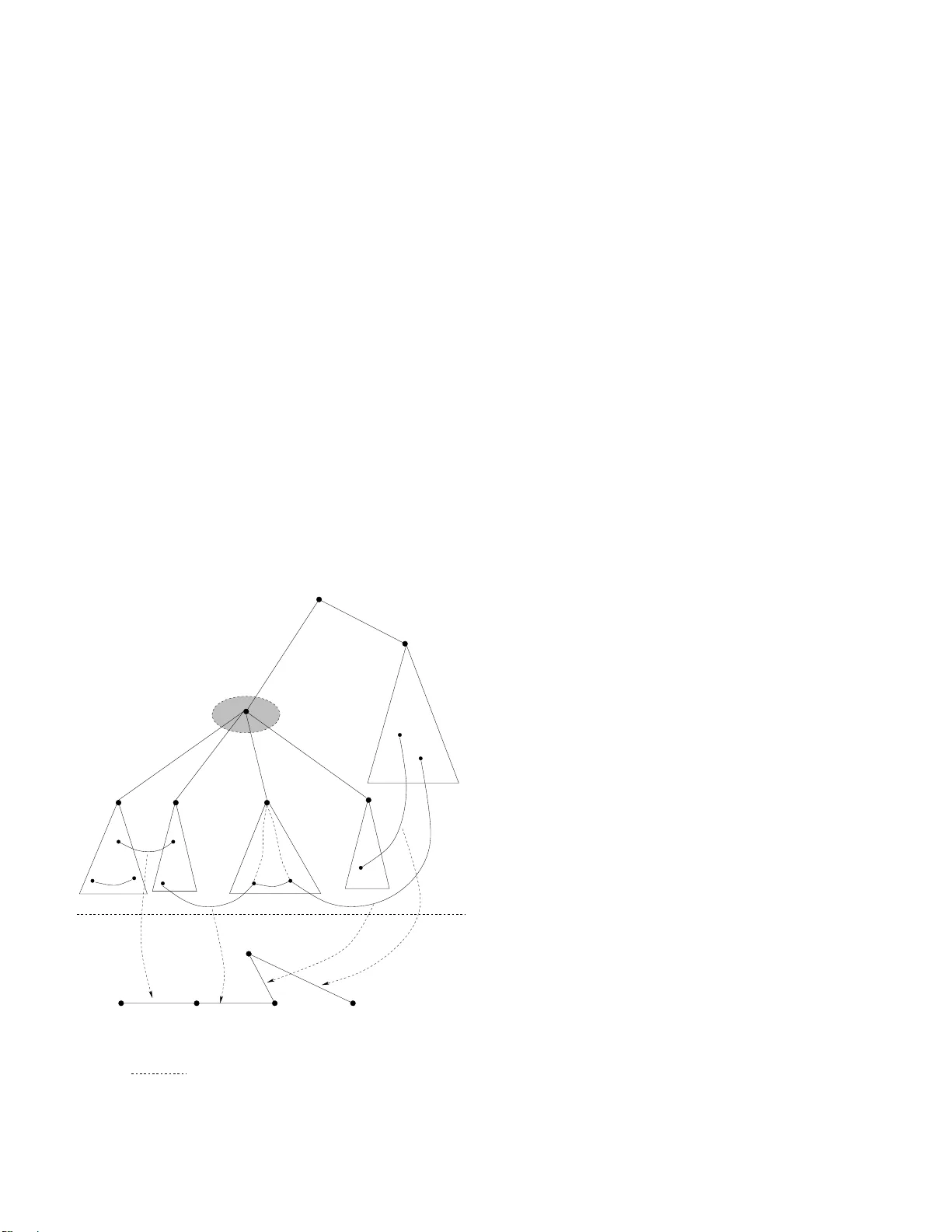

Distrib uted Algorithms for Computing Alternate P aths A v oiding Fa iled Nod es and Links Amit M. Bhosle, T eofilo F . Gonzalez Department of Compu ter Science Univ e rsity of California Santa Barbara, CA 93106 { bhosle,teo } @cs. ucsb .edu Abstract —A recent study characterizing failure s in computer networks shows that transient single element (node/lin k) failures are the dominant failures i n lar ge communication networks like the Inter n et. Thus, hav ing the routing paths globally reco mputed on a failu re does not pay off since the failed element reco vers fairly quickly , and the recomputed routing paths need to be discarded. In this paper , we present the first distributed algorithm that computes the alter n ate paths required by some proactive recov ery scheme s for h andling transi ent fail ures. Our algorithm computes paths that a void a failed node , and prov ides an a lternate path to a particular destination fro m an upstream neighbor of the failed node. With minor modifications, we can have the algorithm compute alternate paths that a void a failed link as well. T o the b est of our knowledge all pre vious algorithms proposed for computing alter nate paths a re centralized, and need complete informa tion of the n etwork graph as input to the algorithm. Index T erms —Distributed Algorithms, Computer Network Management, Network Reliability , Routin g Pro tocols I . I N T R O D U C T I O N Computer networks are norm ally r epresented by edge weighted graphs. The vertices rep resent co mputers (routers), the edges repre sent the commun ication lin ks b etween p airs of computer s, an d the weig ht of a n edg e represents the cost (e.g . time) req uired to transmit a message (of some given length) throug h the link. The links are bi-directional. Given a computer network rep resented by an ed ge weigh ted gr aph G = ( V , E ) , the p roblem is to fin d the best rou te (und er norm al opera tion load) to tra nsmit a m essage between every pair of vertices. The number of vertices ( | V | ) is n an d the numb er o f edges ( | E | ) is m . The shortest paths tree of a node s , T s , specifies the fastest way of tran smitting a m essage to node s originating at any given node in the g raph. Of course, this ho lds as lon g as messages ca n b e transmitted at the sp ecified costs. When the system ca rries heavy traffic on some links these r outes might not be th e best ro utes, but under no rmal operation th e ro utes are the fastest. It is well known that the all pair s shor test path problem , findin g a shortest path between every pa ir of nodes, can be computed in polyn omial time. In this paper we consider the case when th e n odes 1 in the network may b e suscep tible to transient faults. These are spo radic faults of at most one node at a tim e that last for a relatively sh ort period of time. This type of situa tion has bee n studied in the p ast [ 2], [3], [10], 1 The nodes are single- or multi- proce ssor compute rs [14], [16], [1 7] because it represents most of th e nod e failures occurrin g in networks. Single n ode failures repr esent more than 85% of a ll node failures [11]. Also, the se nod e failures are usually tr ansient , with 46% lasting less than a minute, and 86% lasting less than 10 minutes [11]. Because no des fail for relativ e short perio ds of time, p ropag ating inf ormation about the failure throu ghout the network is not re commen ded. T he reason for t his is that it takes time for the information about the failure to be comm unicated to all nodes an d it takes time for the nodes to r ecompu te the shortest paths in or der to re -adapt to the ne w network environment. Then, when the failing n ode recovers, a new messages disseminating this information needs to be sent to inform the nodes to roll back to the previous s tate. This pro cess also consumes re sources. Ther efore, prop agation of failures is best suited for the c ase wh en no des fail for lo ng periods of time. Th is is not the scen ario which ch aracterizes current network s, and is not conside red in this paper . In th is p aper we consider the case whe re the n etwork is biconne cted ( 2-node- connec ted ), m eaning that th e deletion o f a single no de does n ot disconn ect th e network. Biconne ctivity ensures th at th ere is at least one path be tween every pair of nodes even in the event that a nod e fails (p rovided the failed node is not th e or igin or destination o f a path). A ring network is an example of a b iconnec ted network, b ut it is no t necessary for a network to have a ring fo rmed by all of its no des in order to be bicon nected. T esting whether or no t a network is biconne cted can be perfor med in linear time with respect to the number of nodes and links in a ne twork. The algorithm is based on depth-first search [1 5]. Based o n ou r pr evious assumptions abo ut failures, a mes- sage origin ating at node x with destination s will be sent alo ng the path sp ecified by T s until it reaches no de s or a no de adjacent to a node that has failed. In the latter case, we need to use a recovery path to s from that p oint. Since we assume single no de faults a nd th e grap h is b iconnec ted, such a path always exists. W e call th is pro blem of fin ding the recovery paths the Sing le Node F ailure Recovery (SNFR) p roblem. I n this paper, we p resent an efficient d istributed algorithm to compute such paths. Also, our algorithm can be generalized to so lve some other problems r elated to finding alternate paths or edg es. A distributed a lgorithm for computin g the altern ate paths is particularly useful if the routing tables themselves are computed by a distributed alg orithm since it takes away the need to have a cen tralized view of the entire network graph. Centralized algor ithms inheren tly suf fer from th e overhead on the network a dministrator to p ut together (or source and verify) a co nsistent snapsho t of the system, in order to feed it to th e algorithm . This is followed by the need to deploy the ou tput generated by the algo rithm (e.g. alternate path ro uting tables) on the rele vant computers ( routers) in the system. Furthermore, centralized algorithm s ar e typically resourc e in tensiv e since a single comp uter needs to hav e enou gh memory and pr ocessing power to pr ocess a p otentially huge network g raph. Some other advantages of a distributed algorith m are reliability (no single points of failure), scalability and improved speed (com putation time). A. Related W ork A popular appro ach of tackling the issues related to transient failures of network elements is that of using pr oactive reco very schemes . These schemes typically work by precom puting alter- nate paths at the n etwork setup time for the failure scenarios, and then using th ese alternate paths to re- route the traffic when the failure actually o ccurs. Also, the in forma tion of the failure is suppressed in the hope that the failur e is tr ansient and the failed eleme nt will recover shor tly . The lo cal r erouting b ased solutions p ropo sed in [3], [10], [14], [1 6], [17] fall in to this category . Zhang, e t. al. [17] p resent p rotoco ls based on local r e- routing fo r dealing with tra nsient single node failures. Th ey demonstra te via simu lations that the recovery paths computed by their alg orithm are u sually within 1 5% of the theo retically optimal alter nate path s. W an g and Gao’ s Backup Route A ware Protocol ( BRAP) [16] also uses some preco mputed backu p routes in order to handle tra nsient sing le link failur es. One pro blem centr al to their solu tion asks for th e av ailability of r everse pa ths at each node. Ho wever , they do not d iscuss the compu tation of these reverse p aths. As we discu ss later, the alternate paths that our algorithm compu tes q ualify as the reverse paths required by the BRAP protocol of [ 16]. Slosiar and Latin [ 14] studied the single link failure recovery problem and presen ted an O ( n 3 ) time for com puting the link- av oidin g a lternate paths. A faster alg orithm, with a run ning time of O ( m + n log n ) for this problem was presented in [2]. The loca l-rerou ting based fast r ecovery protocol of [3] can use these paths to rec over from sin gle link failures as well. Both these algorithms, [ 2], [14 ], are centralized algorithms that w o rk using the information of the entire com municatio n graph. B. Pr eliminaries Our commun ication network is modele d b y an edge- weighted bic onnected un directed graph G = ( V , E ) , with n = | V | an d m = | E | . Each edg e e ∈ E has an associated cost (weight) , denoted by cost ( e ) , wh ich is a n on-negative real number . W e use p G ( s, t ) to den ote a shortest path between s and t in gra ph G an d d G ( s, t ) to denote its cost. A sho rtest path tree T s for a node s is a collectio n of n − 1 edges { e 1 , e 2 , . . . , e n − 1 } of G which for m a span ning tree of G such that the p ath from no de v to s in T s is a shortest path from v to s in G . W e say that T s is ro oted at nod e s . W ith respect to this root we define the set of nod es that are the childr en of a node x as follows. In T s we say that e very no de y that is adjacent to x such that x is o n the path in T s from y to s , is a child of x . For each node x in the shortest paths tree, k x denotes the number of children of x in the tree, an d C x = { x 1 , x 2 , . . . x k x } denotes this set of childr en of the node x . Also , x is said to be the p ar e nt o f each x i ∈ C x in the tree T s . The parent node , p , of a no de c is sometimes refer red to as a primary neig hbor or primary r ou ter of c , while c is referred to as an upstr eam neighbor o r u pstr ea m r o uter of p . The c hildren of a particu lar n ode are said to be siblings of each oth er . V x ( T ) deno tes the set of n odes in th e subtree o f x in the tree T and E x ⊆ E d enotes the set of all edges inciden t on the no de x in the graph G . nextH op ( x, y ) denotes the next node fro m x on th e shortest path from x to y . Note th at b y definition, nextH op ( x, y ) is the parent of x in T y . C. Pr oblem Definition The Single Nod e Failure Recovery prob lem is f ormally defined in [3] as follo ws: SNFR : Gi ven a biconne cted undirected edge weighted graph G = ( V , E ) , and the shor test paths tree T s ( G ) o f a no de s in G whe re C x = { x 1 , x 2 , . . . x k x } den otes the set of childr en of x in T s , f or each node x ∈ V and x 6 = s , find a path fro m x i ∈ C x to s in the grap h G = ( V \ { x } , E \ E x ) , whe re E x is the set of ed ges adjace nt to x . In o ther words, fo r each node x in the g raph, we are interested in finding alterna te paths from each of its children in T s to the no de s when the node x fails . No te that th e problem is not well defined when node s fails. The ab ove d efinition of alternate paths match es that in [ 16] for r everse paths : for e ach node x ∈ G ( V ) , find a path from x to the node s that do es not use the p rimary neighbor (parent node) y of x in T s . D. Main R esults Our main result is an efficient distributed alg orithm for the SNFR pro blem. Our algor ithm requ ires O ( m + n ) messages to b e tr ansmitted am ong th e n odes (r outers), and has a space complexity o f O ( m + n ) across all nod es in th e network (this, being asympto tically equal to the size of th e entire n etwork graph, is asympto tically op timal ). The space requir ement at any single node is linearly pr oportio nal to the nu mber o f children (th e node’ s degre e) and the num ber of siblings that the node has in the sho rtest paths tr ee of the d estination s . When used for multiple sink nodes in the network, the space complexity at each node is bou nded b y its total nu mber of children and siblings across the shortest paths tr ees of all the sink n odes. Note that even thou gh this is on ly bou nded by O ( n 2 ) in th eory (sin ce each nod e in the network can b e a sink, an d a node c an theo retically have O ( n ) childre n), it is much smaller in p ractice ( O ( n ) : fo r n sink nod es, as av e rage node degree in sho rtest paths trees is usually within 20 -40 ev en for n as high as a f ew 10 00 s). Finally , we d iscuss the scalability issues that may o ccur in large networks. Our a lgorithm is b ased o n a requ est-response mod el, and does no t requir e any globa l coordination amo ng the nodes. T o th e best o f our k nowledge, this is the first co mpletely decentralized and d istributed a lgorithm for co mputing alternate paths. All p revious algor ithms, in cluding those presen ted in [2], [3] , [1 0], [14], [16], [1 7] are centralized algorithms that work using the in formation of the entire n etwork graph a s input to the algor ithms. Furthermo re, our algorithm can be gen eralized to solve other similar pro blems. In particu lar , we can der iv e distributed algorithm s for: the s ingle link failure recovery problem studied in [2], [14], minimum spann ing trees sensiti v ity proble m [6] and the deto ur-critical edg e pro blem [12]. The cited pap ers present cen tralized algor ithms for the respective pro blems. I I . K E Y P RO P E RT I E S O F T H E A LT E R N AT E P AT H S W e n ow describe th e key properties of the alternate paths to a particular destinatio n that can be u sed by a no de in th e ev ent of its parent node’ s failure. These same principles hav e been used in the design of the centralized algorith m in [3]. Howe ver, for co mpleteness, we discuss them b riefly h ere. x 1 x x i k x x j x b b b b g u q p v p s g k y y y y 1 i j k x s x Edge translations from G G to R x (a) (b) x R r b r a g q Fig. 1. Reco vering from the fail ure of x : Construc ting the recov ery graph R x Figure 1(a) illustrates a scenario of a sing le nod e failure. In this case, the no de x h as failed, and we need to find alternate paths to s f rom e ach x i ∈ C x . Whe n a node fails, the shortest paths tree of s , T s , gets split into k x + 1 compon ents - one containing the source nod e s and each o f the remaining one s containing the subtree of a child x i ∈ C x . Notice that the edge { g p , g q } , which has o ne end point in th e subtree of x j , and the other outsid e th e subtree of x provides a ca ndidate recovery path for the node x j . T he complete pa th is of th e form p G ( x j , g p ) ❀ { g p , g q } ❀ p G ( g q , s ) . Sinc e g q is o utside the sub tree of x , the path p G ( g q , s ) is not af fe cted by the failure of x . Edges of this ty pe ( from a n ode in the subtree of x j ∈ C x to a node outside the subtre e o f x ) can be used by x j ∈ C x to esca pe the failure of node x . Such e dges are called green edges. For example, the edg e { g p , g q } is a green edg e. Next, co nsider the edg e { b u , b v } between a n ode in the subtree of x i and a node in the subtree of x j . Althoug h the re is no green edge with an end poin t in the subtree o f x i , the edges { b u , b v } and { g p , g q } tog ether of f er a candidate recovery path that can be used b y x i to recover from th e failure of x . Part o f this p ath connects x i to x j ( p G ( x i , b u ) ❀ { b u , b v } ❀ p G ( b v , x j ) ), after which it u ses the recovery path of x j (via x j ’ s gr een edge, { g p , g q } ). Ed ges of th is typ e (fr om a n ode in the subtree o f x i to a node in the subtree of a siblin g x j for some i 6 = j ) are called blu e ed ges. { b p , b q } is ano ther blu e edge and can be used b y the nod e x 1 to recover from the failure of x . Note that e dges like { r a , r b } and { b v , g p } with b oth end points within the subtree o f the same child of x do not help any of th e no des in C x to find a recovery p ath fr om the failure of nod e x . W e do no t consider such r ed edg es in the computatio n o f recovery paths, even th ough they may provide a sho rter r ecovery path fo r some no des (e. g. { b v , g p } may offer a shorter recovery path to x i ). The r eason for this is that routing protoco ls would need to be quite comp lex in o rder to u se th is inf ormation . As we describ e later in th e p aper, we carefu lly organize th e gr een and b lue edges in a way that allows us to retain o nly these ed ges and elimin ate useless (r ed) ones efficiently . W e now describe the c onstruction of a n ew graph R x , called the reco very g raph of x , which will be used to compute recovery paths for the elements of C x when the node x fails. A single so urce sho rtest pa ths co mputatio n on th is gr aph suffices to comp ute the recovery paths f or all x i ∈ C x . The gr aph R x has k x + 1 nodes, where k x = |C x | . A special node, s x , r epresents in R x , th e no de s in the original grap h G = ( V , E ) . Apart from s x , we have one node, denote d by y i , for each x i ∈ C x . W e add all th e g r een and blue edg es defined earlier to the g raph R x as fo llows. A green ed ge with an end po int in the subtree of x i (by definitio n, green edges have the o ther end point outside the sub tree of x ) translates to an edge b etween y i and s x . A blue edge with an end point in the subtre e of x i and the oth er in the subtree of x j translates to an edge between n odes y i and y j . Note th at the weight of the edg es add ed to R x need not be the same as the weight of the correspo nding gr een o r blue edges in G = ( V , E ) . The weig hts assign ed to the edg es in R x should take into accoun t th e weight of the actu al subpath in G co rrespond ing to th e edge in R x . As lon g as the weigh ts of e dges in R x don’t chan ge with x , or can be deter mined locally by th e node , they can be directly u sed in ou r algor ithm. The cand idate recovery path of x j that uses the green edge e = { u, v } ha s total c ost given by: g r eenW eig ht ( e ) = d G ( x j , u ) + cost ( u, v ) + d G ( v , s ) (1) This weight captures the weight of the actual subpath in G correspo nding to the ed ge add ed to R x . Howe ver, since the weight giv en by equation (1) for an edge depen ds o n the node x j whose rec overy path is bein g co mputed , it will typ ically b e different in each R x in which e ap pears as a green edge. The following weight fu nction is more efficient since it remains constant acr oss all R x graphs that e is part of. g r eenW eig ht ( e ) = d G ( s, x j ) + d G ( x j , u ) + cost ( u, v ) + d G ( v , s ) = d G ( s, u ) + cost ( u, v ) + d G ( v , s ) (2) Note that the correct weight ( as d efined by eq uation (1)) to be used fo r an R x can be derived by th e node x from the weight fu nction de fined ab ove b y subtracting d G ( s, x j ) = d G ( s, x ) + cost ( x, x j ) . Also, th e g reen ed ge with an end point in th e subtree o f x j with th e m inimum g r eenW eig ht remains the same, imm aterial of the gr eenW eight fun ction (equations (1) or ( 2)) used since equation (2) basically add s th e value d G ( s, x j ) to all such edges. As d iscussed ea rlier , a b lue ed ge provid es a pa th co nnecting two siblings o f x , say x i and x j . Onc e the path reach es x j , the remaining p art o f the recovery path of x i coincides with that of x j . If b = { p, q } is the blue edge connec ting the sub trees of x i and x j the length of the subpath from x i to x j is: bl ueW eig ht ( b ) = d G ( x i , p ) + cost ( p, q ) + d G ( q , x j ) (3) W e assign this weigh t to th e ed ge correspon ding to the blue edge { p, q } that is added in R x between y i and y j . Note that if w is the n earest com mon ancestor of the two end po ints u and v of and edge e = ( u, v ) , e is a green edg e in th e R gra phs for all nodes on path b etween w and u , an d w and v (excluding u , v a nd w : it is a b lue edge in R w , and is unu sable in R u and R v since a node z is deem ed to have failed while con structing R z ). Assuming that a node can determine wheth er an edge is blue or green in its recovery graph (we discuss this in detail in the next section), it is easy to see that it can derive the edge’ s blue weight fr om its g reen weight: bl ueW eig ht ( e ) = g re enW e ig h t ( e ) − (2 · d G ( s, w ) + cost ( w , w u ) + cost ( w , w v )) (4) where w u and w v are respectively the child nodes of w whose subtrees contain the node s u an d v . In formation about all terms being subtracted is av ailable locally at w , and consequ ently , the green W eig ht an d blueW eigh t values for an edge can be computed /derived u sing inf ormation local to the n ode w . If there ar e multiple gr een edges with an e nd po int in V x j , the subtree of x j , we choo se the one wh ich offers the shortest recovery path for y j (with ties being broken arbitrarily) a nd ignore the rest. Similarly , if th ere are multiple edg es between the subtrees of two siblings x i and x j , we r etain the o ne which offers the cheap est alternate p ath. The construction of our graph R x is now comp lete. Com- puting th e shortest path s tr ee of s x in R x provides enoug h informa tion to compute the recovery path s for all no des x i ∈ C x when x fails. Note tha t any e dge e = ( u, v ) acts as a blu e edge in at most one R x : that of the n earest-comm on-an cestor of u and v . Also, any no de c ∈ G ( V ) belongs to exactly one R x : that of its paren t in T s . As we discuss later, the space req uiremen t at any no de is linea rly proportio nal to the number of ch ildren and the number of sib lings th at it h as. Figure 1 illustrates the consturction of R x used to compute the recovery paths fr om th e n ode x i ∈ C x to the node s when the node x has failed. In this simple example, the path from y i to s x is y i ❀ y j ❀ s x . The co rrespon ding recovery path for x i is p G ( x i , b u ) ❀ { b u , b v } ❀ p G ( b v , x j ) , followed by the recovery path of x j : p G ( x j , g p ) ❀ { g p , g q } ❀ p G ( g q , s ) . I I I . A D I S T R I B U T E D A L G O R I T H M F O R C O M P U T I N G T H E A LT E R NAT E P AT H S In this section, we use the basic principals of the alternate paths describ ed earlier to design an efficient distributed alg o- rithm fo r com puting the altern ate paths. A. Computin g the DFS Labels Our distributed alg orithm requ ires that each nod e in the shortest paths tree T s maintain its d f sS tar t ( · ) and d f sE nd ( · ) labels in acco rdance with how a de pth-first-search (DFS) trav ersal of T s starts or ends at the n ode. Ref . [7] reports efficient distributed algorithms for this par ticular prob lem (of assigning lab les to the nodes in a tree as dictacted by a DFS trav ersal o f the tree). The basic algorithm reported in Ref. [7], named Wa ke & Label A , assigns DFS labels to the nodes in the ran ge [1 , n ] in asy mptotically optimal time and requires 3 n message s to be exchan ged between the nodes. They also discuss other variations of th is algorithm which vary with respect to the time req uired to assign the labels, th e rang e of labels, and the number of messages exchanged between th e nodes in th e network. An appr opriate alg orithm can be chosen to assign the d f sS tar t ( · ) an d d f sE n d ( · ) labels requ ired for our d istributed algorithm. W e sketch below th e basic algo rithm, W ake & Label A below . The Wake & Label A algorithm r uns in thre e p hases: wakeup , count , an d allo cation . In th e first ( wakeup ) phase, which is a top-d own phase, the r oot no de sends a message to all of its child nodes asking th em to report the nu mber of no des in their subtree (including them selves). Th e child nodes recursively pass on the m essage to their c hildren. I n the second ( coun t ) ph ase, wh ich is a botto m-up p hase, each node repor ts th e size o f its sub tree to its parent node. Th e variants of the Wake & Label algorithms d iffer in the last phase ( allocation ) which deals with assigning the lab els to th e nodes o f the tr ee. In the simplest version, on ce the root node knows the value of n (the total n umber of nodes in the tree), knowing the size of th e subtr ees of each child nod e, it can split the range [1 , n ] disjointly amo ng its ch ildren, and each child nod e recur si vely assigns a sub- range to its childr en (a child with c nodes in its su btree is assigned a range containing c values). The read er is referred to Ref. [7] for th e deta iled descriptio n and an alysis of the Wake & Label A algorithm an d its variants. For computing the d f sS tar t ( · ) an d d f sE nd ( · ) labels required by our algor ithm, the total range of these labe ls across all the nod es in T s is [1 , 2 n ] , and a ch ild with c children is assigned a ran ge of 2 c values. All other aspects of any of the DFS labe l assign ment algor ithms re ported in Ref. [ 7] can be u sed as appr opriate. Note that even though it is not explicitly mentio ned in Ref. [7], the Wake & Label A algorithm (inc luding our mo difications) can be imple mented on a request-respo nse mod el, without the nee d of any glo bal clock fo r coo rdination across th e n odes. B. Collecting the Gr ee n and Blue Edges Our algorithm requires that each node in the ne twork maintain th e following data-structu res: 1. ParentBlue Edges List : T he list of edg es in the network gr aph which have one end point within the subtree of the no de, and th e other end p oint in th e subtr ee of a sibling node. I.e. all edges from the no de’ s subtree that are blu e in the r ecovery gra ph R of the node’ s parent. 2. Childre nGreenEdges Map : A map that stor es for each child node, the cheape st green edge with an end po int in the child no de’ s subtr ee. Recollect that a green ed ge of a node has the other end point o utside the subtree of the node’ s parent. W e now discuss the details of this part o f the algorithm for b u ilding the ParentBlueEdges and ChildrenGree nEdges data-structur es. A pro cedure, CollectNonTr eeEdges , triggers a p rotoco l where each node recursively asks each of its ch ildren to fo rward it the non -tree edge s that have an end p oint in the child’ s subtree. Each node processes all its own non-tree edges, and those f orwarded b y a ch ild nod e. For pr ocessing a non-tr ee edge, a node u ses the d f sS tart ( · ) and d f sE nd ( · ) labels of the edge ’ s two end points to de cide wh ether the edge should be add ed to its P arentBlueEdg es list o r the ChildrenGree nEdges map. For an edge to be adde d to the Par entBlueEdge s list, th e edg e should have exactly one end p oint in th e node’ s subtre e, while the o ther end point still be within the par ent’ s subtree (but ou tside this node’ s subtree). For each edge that is forwarded b y a ch ild, the no de updates the corresp onding entry fo r the child in the Ch ildrenGreen Edges map if th e newly forwarded edge is ch eaper than the edge currently store d fo r the child. Finally , if a t least o ne of th e two end p oints of th e edge lies outside this n ode’ s subtree, it forwards the inf ormation of the e dge to the parent a fter updating its local data-stru ctures. Otherwise, it simply discards the edge an d does not fo rward it to its p arent. The reason for this is th at edges whose bo th end points belong to a node’ s subtree cannot serve as a blue or gre en edg e in the re covery grap h of the n ode’ s pare nt, and info rming the p arent ab out such an edg e does n ot serve any pur pose (if this no de is the nearest-co mmon -ancestor of the ed ge’ s two end poin ts, the edg e would be stored in the ParentBlue Edges lists at the two child nodes whose subtrees con tain the edge’ s end points). A child node in vokes the proce udre RecordNonTre eEdge defined below on its parent, with a me ssage M contain ing the following inform ation associated with a non -tree edge e : • e = ( p 1 , p 2 ) : The no n-tree ed ge, with p 1 and p 2 as the end po ints. • we ig ht ( e ) : W eight of the ed ge e . • sender I d : Id of this child node sending the message to the pare nt nod e. These in dividual pieces, e , p 1 , p 2 , and sender I d , can re- spectiv ely be accessed via M using the methods M .edge , M . p 1 , M . p 2 and M . senderId . Procedure RecordNonT reeEdge( M ) if (isMyDescendan t( M . p 1 ) AND isMyDescenda nt( M . p 2 )) do: // both end points in my // subtree: ignore return; fi // retrieve the current green // edge for this sender from // the ChildrenGr eenEdges map Edge existing = CGE.get( M .s enderId); Edge edge = M .edge; if (existing == null OR edge.weight < existing.weigh t), do: // if new or cheaper edge, // update our data-structure CGE.put( M .s enderId, edge); fi if (edgeIsBlueFor Parent(edge )), do: ParentBlueEd ges.add(edg e); fi // Reset the senderId, // and forward edge to parent M .senderId = self.id; parent.Recor dNonTreeEdg e( M ); End RecordNonT reeEdge The edge IsBlueForPar ent meth od used above deter- mines whe ther or no t an edg e is blu e for this node’ s par ent. This can be determined easily if the n ode knows its pare nt’ s d f sS tar t ( · ) and d f sE nd ( · ) labels. For efficiency , after the DFS labels h ave b een com putated, e ach nod e can query its parent for its labels, and store these locally . In some cases, these values can just be qu eried f rom th e parent nod e as and when n eeded. C. Compu ting the Alternate P ath s to Recover fr om a Nod e’ s F ailure Once the edge prop agation ph ase is over, par t of the informa tion required to constru ct R x , th e rec overy graph of x , is a vailable at the node x , and the remaining is av ailable at the children of x . In particu lar , x has the infor mation abo ut the nodes o f R x and th e gree n edges o f R x , while the c hildren of x hav e the inf ormation of the blue e dges of R x . Conceptually , x can construct the entire graph R x locally , and comp ute the shortest paths tree of s x . This p rocess would result in a sp ace complexity o f O ( m x + n x ) at nod e x , wh ere m x and n x denote the number of edges and n odes in R x re- spectiv ely . Note that m x can be as large as O ( n 2 x ) = O ( |C x | 2 ) . In order to keep the space requ irement low , the shortest path s tree, T s x , o f s x is b u ilt incrementally , by looking a t the ed ges of R x only w hen they are n eeded. Essentially , we use th e edges exactly in the order dicta ted by th e Dijkstra’ s shortest paths a lgorithm[ 5 ]. x initially builds R x using th e inf ormation it locally has: the k x + 1 no des, and the gr een edge from y i to s x for 1 ≤ i ≤ k x (if the ChildrenGreenEd ges map has an entry fo r x i ). x maintain s a priority que ue data structure, candidates , which initially has an entry f or each y i , with a priority 2 equal to the weight of the edge between s x and y i 3 . The remaining steps of the algo rithm are as follows. 1) While there are more entries in candid ates , execute steps 2 - 4. 2) Delete e ntry from candidates with highest priority . 3) Assign the priority value as th e final distance (from s x ) for the node y p associated with the queue entry . 4) Fetch the blu e e dges from ch ild node x p . For each blue edge thus retrieved, if it pr ovides a shorter p ath to its other end p oint, say x q , upd ate the pr iority of the q ueue entry co rrespon ding to y q with this v alu e. Note that the blue edges stored at a child no de x p are retrieved only when they are n eeded b y the a lgorithm, an d that each n ode x needs space linearly prop ortional to its nu mber of children , and the number of its siblin gs. For each sibling , a node needs to store at most one edge (which has the smallest blue w eight) with an e nd poin t in its own subtree, and th e other in the sibling’ s subtree. These edges are the blue edg es that ar e ad ded to the p arent no de’ s recovery grap h. Using Fibonacci heaps[8] for the priority queue, T s x can be comp uted in O ( m x + n x log n x ) time. 2 lo wer value implies higher priority 3 if no edge is present, a priority of ∞ is assigned I V . S C A L A B I L I T Y I S S U E S In large com municatio n networks, the n odes at h igher levels in the sho rtest paths tree (i.e. closer to the d estination) may face scalability issues. This h appen s primarily b ecause such nodes have large subtrees, and conseque ntly a large numbe r of edges may have an end poin t in th eir subtrees. Receiving informa tion about all these edges may potentially overwhelm the no des. In th is section , we d iscuss a few ap proach es to deal with such issues. The ap plicability o f the appr oaches varies with the pa rticular network topology , and the resou rces (mainly , the amou nt of tempo rary storag e) av ailable at the routers. Pr oduc er Consumer Pr o blem The problem of a n ode recei ving the information o f edges from its ch ild no des, and pr ocessing this in formatio n ca n be considered to b e a pr od ucer-consumer p roblem, where the child nodes pr oduce the edges, and a paren t nod e con sumes the ed ge by p rocessing it. The scalability issues occur in a case where all the child nodes together attempt to deli ver the edges to th eir parent at a r ate h igher than the rate at which the parent node can pro cess the edges. Recollect that processing an edge by a n ode includes u pdating its local data struc tures (if applicab le), and deli vering the information of the edge to the pare nt nod e. Our approache s of dealing with these scalability issues can be categorized in two br oad categories: (a) Th e consumer tries to minim ize the processing time (and thus, increase the consump tion rate), and (b) the pro ducers co- ordinate among themselves to limit th e rate at which the consum er receives the info rmation to be consumed. Consumer Driven Solutions The k ey p rincipals of this approach are the f ollowing. (a) If a parent n ode is too busy to process a new edge, it can reject the delivery attem pt o f the edge by th e c hild no de. For the par ent node, a rejected deliv e ry is eq uiv alen t to no delivery attempt at all. (b ) For a child node whose attempt to deliv er an edge was rejected by its parent, the pr ocessing of the edge is still incomplete. T o complete the pr ocessing, it mu st successfully deliver the edge to the parent. For a rejected d eliv e ry , the node must r etry the deliv er some tim e in future. The fact th at a no de may need to retry the delivery of an edge to its parent essentially translates to the requiremen t that the node have acce ss to a tempo rary stor age space wher e it can store the ed ges whose deliveries were rejecte d by its pa rent. Other wise, the d eliv e ry o f th e edg e will need to be transitively rejected b y all nodes d own to the nod e that initiated the edge’ s deliv e ry the very first time. Su ch options are usua lly pro hibitively expensive, since blips in the n etwork could also result in an edge no t bein g successfully de li vered to a paren t n ode. After the edge has been successfully delivered to the parent, its corr espondin g entry can be deleted from the temporar y storage. The tem porary storage space can be eithe r local o r re- mote storage, d ependin g on the size of th e network , and the hardware config uration o f the routers. Using the tempor ary storage, we split the r eceip t , and pr ocessing o f an edge into two indep endent parts. As par t of receiving an edg e, the p arent node just n eeds to store th e edge into the tempor ary stora ge. Once it has successfully stored the ed ge, it acknowledges the delivery attem pt of the child nod e. Next, eac h node runs a processing da emon , wh ich reads the informa tion persisted in the temp orary stor age and pr ocesses the edg es. The last step of this processing includes succ essfully delivering the informa tion of the edge to the node’ s parent. After suc cessful delivery , the inform ation about the e dge f rom the tem porary storage is deleted. In case the deliv e ry is re jected, the edge is kept in the stor age, an d its deliv e ry is retried af ter some time. Remote storage solutions could also be u sed as the temporar y storage sp ace. In particular, the Simple Queu e Service (SQS), o ffered by Amazon W eb Ser vices [13] is very well suited for this use c ase. T he SQS is a highly av ailable an d scalable web service, which exposes a qu eue interface via web ser vice APIs. The API s of our in- terest ar e enq ueue(Messag e) , read Message() and dequeue(Mess ageId) . Note that althoug h SQS is not a free service, its pay-as-yo u-go usag e-based pr icing mod el makes it a cheaper alternative to the traditional o ption of having large hard d isks on the route rs (and esp ecially m ore attractive for this use case since th e tempora ry storag e space is req uired only d uring the network set-up time). Also , it essentially provides an unlimited storage space since there’ s no restriction on the num ber o f messages that can be stored in an SQS instance, and c an thus be used immaterial of th e network size. When used in ou r protoc ol, eac h node instan tiates an SQS instance f or itself, and u ses it as its temp orary stora ge space. Pr oduc er Driven So lutions The second approac h th at we discuss here is based on the produ cers co -ordin ating among st th emselves to limit the rate at which the c onsumer receives the information to be consumed. For simplicity , we assume that the numb er of ed ges with an end poin t in the subtr ee of a no de x i (and which need to be forwarded to its pa rent x ) is p ropor tional to the size of the subtree V x i . If all the nodes x i for 1 ≤ i ≤ |C x | can coordinate am ongst th emselves abo ut the ir ed ge deli veries to x , they can, to a c ertain extent, ensure th at node x d oes not receive in formatio n ab out all the edges in a very short window of time . Essentially , a node x k is assign ed a total tim e propo rtional to | V x i | / | V x | fo r d eliv ering its ed ges to the parent x , in or der to ensure that a child node is assigned eno ugh tim e to d eliv er all of its edge s to x . Note that this appro ach relies on the ease of achieving coordin ation amon g all the child n odes of a nod e about delivering the edges. V . O T H E R R O U T I N G P A T H M E T R I C S Thoug h the sh ortest p aths m etric is a p opular metr ic used in the selection o f paths, several netw orks use so me other metr ics to select a pr eferred p ath. Examp les include metr ics ba sed on link band width, network d elay , hop count, lo ad, re liability , and comm unication cost. Ref. [1] presen ts a survey on the popular routing path me trics used. It is in teresting to note th at some o f th ese metrics (e.g. commu nication cost, hop-co unt) can be tra nslated to sho rtest path me trics. Optimizing ho p- count is same as com puting sho rtest paths whe re all edges have the same ( 1 unit) w eight, while co mmun ication cost can be direc tly used a s edg e weights. F o r optim izing metrics like path r elia bility and b andwidth , the shortest path algorithm s can be used with easy modification (e.g. the reliability of an entire path is the product of the reliabilities of the in dividual edges; the band width of a path is the min imum ban dwidth across the in dividual edg es on the p ath). For these metrics, algorithm s based o n shortest paths can be dir ectly used with the app ropriate modification s. A min imum span ning tree, which constru cts a spa nning tree with m inimum tota l weig ht is also used in some n etworks when the primary goal is to achieve r eachability . Note that alth ough we discuss our algo rithm in co ntext of sho rtest path s, the tech niques can be generalized to find alternate p aths in accordan ce with oth er metr ics, an d o ur algorithm can be used with appropr iate modifications. The modification s required would be in the weight functions (Equation s 1, 3) u sed for assigning weights to the edges added to R x , the recovery graph that is constru cted to find alternate p aths whe n the node x fails. Furth ermore , paths in R x should be com puted as dicta ted by the metr ic. E.g. constructing a m inimum spannin g tre e of R x , or find ing a maximum b andwidth p ath, etc. It is importan t to n ote that the pro cess of constructin g R x can be m odified so that it contains inf ormation about a wide variety of altern ate paths that av oid the failed node x and are rele vant f or the particula r metric being o ptimized. An appro priate alternate path c an be constructed de pendin g on the me tric o f in terest, an d othe r factors that affect path selection. In large n etworks, nod es typically denote auton omou s sys- tems (AS), which ar e network s owned an d opera ted by a single administrative e ntity . I t is c ommon for th e paths to be selected based on inter-AS p olicies. See Ref. [4] for a d etailed discussion on the ro uting p olicies in ISP networks. Policies are usually tra nslated to a set of ru les in a particular o rder of prece dence, and are u sed to determine th e pr eference of one r oute over the other . Such p olicies can be incorp orated in defining the weights of th e edges of R x , and/o r in the process of computing the paths in R x . In the extreme case (when an AS do es n ot wish to shar e its policy-b ased route selection rules with its neig hbor s), in formatio n about the graph R x can be re triev ed b y each node x i from x , in order to con struct R x locally , in o rder to com pute its own alternate path to s . Note that sinc e th e average degree of a nod e is usually small (within 2 0-40) , th e size of R x would typically be reasonab ly small. V I . C O N C L U D I N G R E M A R K S In this paper we h av e presen ted an efficient distributed algorithm for the c omputin g alter nate paths that av oid a failed node. T o the b est of our k nowledge, this is the first completely decentralized algorithm that computes such alternate paths. All previous algorithms, includin g those presented in [2], [3], [10], [14], [16], [17] are centralized algorithm s that work using the inf ormation of the entire network graph as input to the algorithm s. The p aths c omputed by our algorithm ar e r equired b y the single no de failure recovery pr otocol of [ 3]. They also qualif y as the re verse paths requir ed by the BRAP proto col of [16], which deals with single link failure recovery . Our distrib uted algorithm co mputes the exact same p aths as those ge nerated by th e ce ntralized algo rithm of [3], and ev en thoug h not optimal alternate paths, they are usually good - within 15 % of the optimal f or random ly generated graphs with 100 to 100 0 nodes, and with an average node degree of upto 3 5 . The read er is refer red to [3] for further details abou t the simu lations. Our algorithm can be gener alized to solve other similar problem s. In pa rticular, we can der iv e d istributed algorithm s for the single link failure recovery pr oblem [2], [1 4], the min- imum span ning tree sensitivity pro blem [6], and th e d etour- critical edge problem [ 12]. The cited p apers present centralized algorithm s for the problem s stud ied. All th ese are link failure recovery p roblem s that deal with the failure o f one link at a time. In th ese problems, f or each tree edge (m inimum s panning tree, o r sho rtest paths tree, d epend ing on the p roblem) , one needs to find an e dge across th e cu t in duced b y the deletion of the edge. W e essentially n eed to find edg es similar to the gr een edges for the SNFR prob lem, except for one minor chan ge: these green edges have one end poin t in the n ode’ s subtree, and the other outside its subtree (for the SNFR pro blem, the other end p oint nee ds to be outside th e subtree o f th e n ode’ s parent). Our DFS lab eling scheme can be used for d etermining whether an edge is gr een or not accord ing to this d efinition. Using the DFS label c omputatio n a lgorithm s of [7], and our protoco ls for ed ge p ropag ation ( RecordNonTree Edge ), we can find the requ ired alternate paths that avoid a failed ed ge. W e believ e that o ur techniqu es can be g eneralized to solve some oth er pro blems as well. In their recen t work, Kvalbein, et. al. [9] addre ss the issue of load balan cing wh en a proactive recovery scheme is used. While some previous papers have also in vestigated th e issue, as me ntioned in [9], they usually ha d to compro mise on th e perfor mance in the failure-f ree case. T o a somewhat limited extent, our alg orithm can be modified to take this aspect into consideratio n. For instan ce, instead of co mputing the shortest paths tree T s x in R x , one is free to compute other types of paths from each no de y i to s x in order to en sure that the sam e set of edges do n’t ge t used in many recovery paths. R E F E R E N C E S [1] Rainer Baumann, Simon Heimlicher , Mario Strasser , and Andreas W eibel . A surve y on routing metrics, T IK Report 262, ETH Z ¨ urich. 2006. [2] A. M. Bhosle and T . F . Gonz alez. Algorithms for single link fa ilure reco very and relate d problems. J. Graph Algorithms Appl. , 8(2):275– 294, 2004. [3] A. M. Bhosle and T . F . Gonzalez. Effic ient algorithms and routing protocol s for handling transie nt single node fail ures. In 20 th IASTED PDCS (to appear) , 2008. [4] Matthe w Caesar and Jennifer Rexford. Bgp routing policies in isp netw orks. IEEE Network , 19(6):5–11, 2005. [5] E. W . Dijkstra . A note on two proble ms in conne ction with graphs. In Numerisc he Mathematik , pages 1:269-271, 1959. [6] B. Dixon, M. Rauch, and R. E. T arjan. V erification and sensiti vity analysi s of minimum spanning trees in linear time. SIAM J . Comput. , 21(6):1184 –1192, 1992. [7] Pierre Fraigni aud, Andrzej Pelc, Da vid Peleg, and Stepha ne Perennes. Assigning labe ls in unkno wn anonymous netw orks (exte nded abstract). In PODC , pages 101–111, 2000. [8] M. L. Fredman and R. E. T arjan. Fibonacci heaps and their uses in improv ed network optimiz ation algorithms. J ACM , 34:596-615, 1987. [9] A. Kvalbe in, T . Cicic, and S. Gjessing. Post-fail ure routing performance with multiple routing configuration s. In INFOCOM , pages 98–106, 2007. [10] S. Lee, Y . Y u, S. Nelakudi ti, Z.-L. Zhang, and C.-N. Chuah. P roacti ve vs react i ve approaches to failu re resili ent routing. In Pr oc. of IEEE INFOCOM , 2004. [11] A. Markopul u, G. Iannacc one, S. Bhatta charya, C. Chuah, and C. Diot. Charac teriza tion of failures in an ip backbone . In P r oc. of IEEE INFOCOM , 2004. [12] E. Nardelli, G. Proietti , and P . Widma yer . Finding the detour -critical edge of a s hortest path between two nodes. Inf . Proce ss. L ett. , 67(1):51– 54, 1998. [13] Amazon W eb Services. http: //a ws.amazon.com/. [14] R. Slosi ar and D. Latin. A poly nomial-ti m e algorithm for the est ab- lishment of primary and alterna te paths in atm network s. In IEEE INFOCOM , pages 509-518, 2000. [15] R. Riv est T . Cormen, C Leiserson and C. Stein. Intr oduction to Algorithms . McGra w Hill , 2001. [16] F . W ang and L. Gao. A backup route a ware routing prot ocol - fast reco very from transient routing failures. In INFOCOM , 2008. [17] Z. Zhong, S. Nelaku diti, Y . Y u, S. Lee, J. W ang, and C.-N. Chuah. Fail ure inferencing based fa st rerouting for handling transient link and node failu res. In Pr oc. of IEEE INFOCOM , pages 4: 2859-2863, 2005.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment